Short and Wide Network Paths

Network flow is a powerful mathematical framework to systematically explore the relationship between structure and function in biological, social, and technological networks. We introduce a new pipelining model of flow through networks where commodit…

Authors: Lavanya Marla, Lav R. Varshney, Devavrat Shah

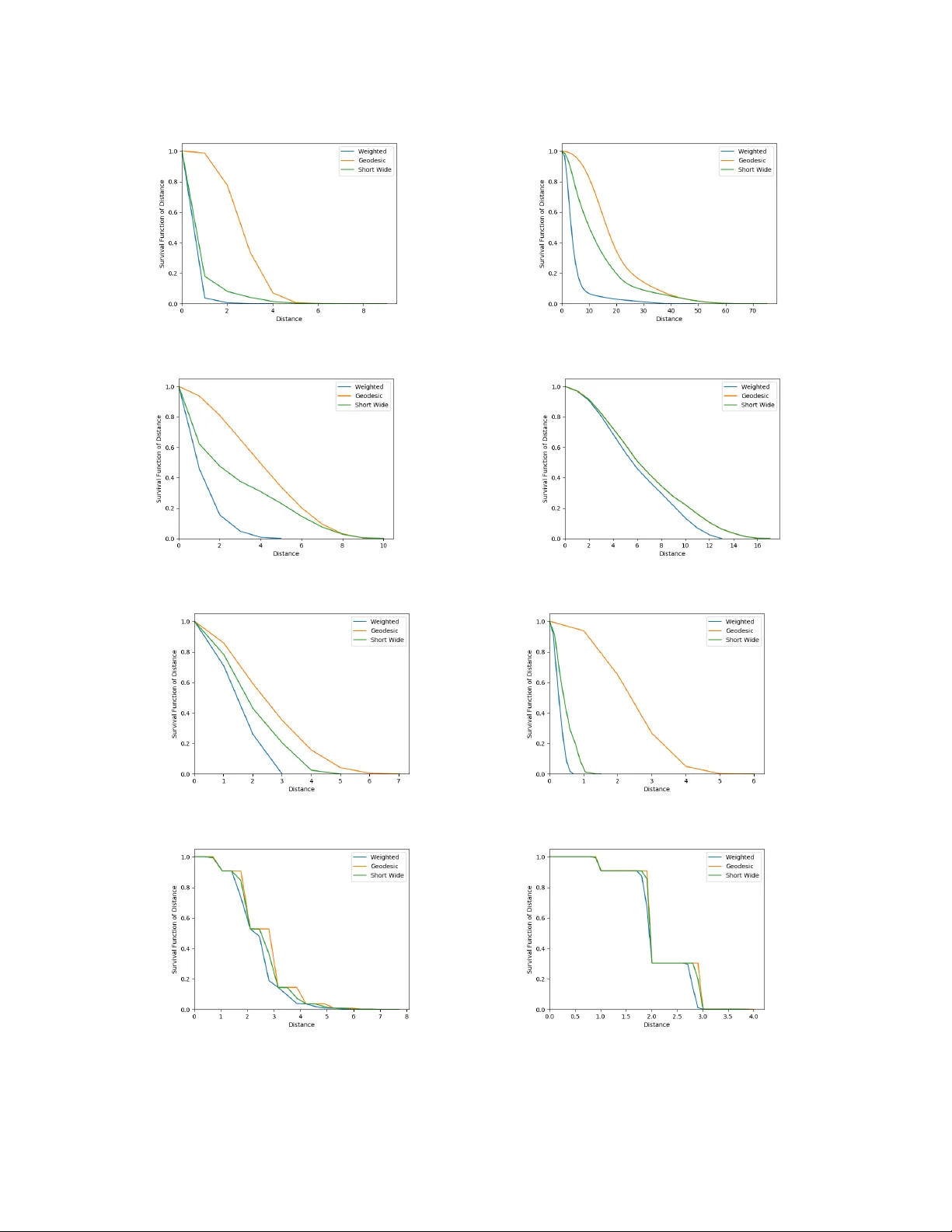

Lavanya Marla¹, Lav R. Varshney¹, Devavrat Shah², Nirmal A. Prakash¹, and Michael E. Gale¹

Abstract

Network flow is a powerful mathematical framework to systematically explore the relationship between structure and function in biological, social, and technological networks. We introduce a new pipelining model of flow through networks where commodities must be transported over single paths rather than split over several paths and recombined. We show this notion of pipelined network flow is optimized using network paths that are both short and wide, and develop efficient algorithms to compute such paths for given pairs of nodes and for all-pairs. Short and wide paths are characterized for many real-world networks. To further demonstrate the utility of this network characterization, we develop novel information-theoretic lower bounds on computation speed in nervous systems due to limitations from anatomical connectivity and physical noise. For the nematode Caenorhabditis elegans, we find these bounds are predictive of biological timescales of behavior. Further, we find the particular C. elegans connectome is globally less efficient for information flow than random networks, but the hub-and-spoke architecture of functional subcircuits is optimal under a constraint on the number of synapses. This suggests functional subcircuits are a primary organizational principle of this small invertebrate nervous system.

I. INTRODUCTION

In studying complex systems via the interconnection of their elements, network science has emerged in the last two decades as an insightful approach for understanding collective behavior in brains, societies, and physical infrastructures. Common network science analysis techniques draw on dynamical systems theory [1], [2], and many universal properties of disparate networks have been found [3]. Other prevalent analysis techniques are based on network flow [4].
¹ University of Illinois at Urbana-Champaign
² Massachusetts Institute of Technology

November 4, 2019 DRAFT

We take the flow perspective and introduce a novel notion of network flow that arises in many biological, social, and technological networks, yet has not previously been studied. Consider a network where a commodity to be transmitted from a source node to a destination node can be split into pieces in time and sent in a pipelined fashion over (possibly) several hops using as many time slots as needed. The commodity, however, must go over a single route rather than being split over several routes to be recombined by the destination [5], [6]. This is in contrast to the maximum capacity problem [7], [8], where flow between two nodes may use as many different routes as needed. This "circuit-switched" model with a single route is prevalent in systems without the ability to split and recombine, e.g., signal flow in simple neuronal networks, message flow in social networks, and the flow of train cars in railroad networks with few engines.

We will see that the optimal paths for the pipelined network flow problem must not only be short in terms of number of hops but also wide in terms of the bottleneck edge in the route. That is, to maximize flow requires finding the single best route between the two nodes: the route that minimizes the weight of the maximum-weight edge in the route and yet is short in path length.

Finding all-pairs shortest paths in weighted networks can be accomplished in polynomial time using Floyd's dynamic programming algorithm [9]. This is an optimization problem in a metric space. Finding widest paths in weighted networks can be accomplished by taking paths in a maximum spanning tree [5], [6], also in polynomial time. This is an optimization problem in an ultrametric (non-Archimedean) space [10].
As far as we can tell, our problem of finding paths that minimize the width-length product between two nodes has remained unstudied in the literature. We develop efficient algorithms for finding short and wide paths between two given nodes, as well as for all pairs. As part of the development, we prove correctness and also characterize the computational complexity as polynomial time. By contrast, depth-first search strategies that enumerate all simple paths between two nodes [11] would check a factorial number of paths in the worst case.

Efficient algorithms enable us to characterize the all-pairs distribution of short and wide paths for many complex networks. Note that traditional notions of network diameter and average path length are studied extensively in network science [2], but the all-pairs geodesic distance distribution of unweighted graphs of fairly arbitrary topology is also starting to be of interest [12], [13], essentially building on results for Erdős-Rényi random graphs [14], [15]. As far as we know, this distance distribution for weighted graphs remains unstudied, as does the distribution of our short-and-wide path lengths. In studying the short-and-wide path length distribution, we do not see universality across networks.

To demonstrate the detailed structure-function insights that pipelined flow gives, we consider neuronal networks. Indeed, with advances in experimental connectomics producing wiring diagrams of many neuronal networks, there is growing interest in informational systems theories to provide insight [16]–[18]. For concreteness, we focus on the hermaphrodite nematode Caenorhabditis elegans, which is a standard model organism in biology [19]–[21] and has exactly 302 neurons [22]. We consider three scientific questions in asking whether information transmission through the nervous system is a bottleneck that limits behavior.
(Neural efficiency hypotheses of intelligence also argue information flows better in the nervous systems of bright individuals [23], [24].)

Question I.1. Do neuronal circuits allow behaviors to happen as quickly as possible under information flow limitations imposed by synaptic noise properties and neuronal connectivity patterns?

Question I.2. Are information flow properties of neuronal networks significantly different from random graphs drawn from ensembles that match other network functionals? That is, are networks non-random [25] in allowing information flow that is faster or slower than other networks?

Question I.3. Does the synaptic microarchitecture of functional subcircuits optimize information flow under a constraint on the number of synapses?

Since the exact computations performed by the nervous system are unclear, we use general information-theoretic methods to lower bound optimal computational performance of a given neural circuit in terms of its physical noise and connectivity structure. This approach is inspired by information-theoretic limits in distributed computing and control [26], [27]. If the performance of a neural circuit is close to the lower bound, then it is operating close to optimally.

We specifically consider gap junctions in C. elegans, where neurons are directly electrically connected to each other through pores in their membranes. There can be more than one gap junction connecting two given neurons. We model and compute, for the first time, the Shannon channel capacity of gap junctions. Channel capacity, used together with the network topology of the system in the short-and-wide path computation and with an estimate of the informational requirements to perform computations, yields a bound on the minimum time to perform biologically plausible computations. Remarkably, when this lower bound is applied to C. elegans, the result is rather close to behaviorally-observed timescales.
This suggests nematodes may be operating close to the behavioral limits imposed by physical properties of their nervous system.

In asking whether the network is non-random [25] in allowing behavior that is faster or slower than other networks, we surprisingly find that the complete C. elegans connectome has greater distances and is therefore slower in supporting global information flow than random networks. In contrast, we prove that the hub-and-spoke architecture of C. elegans functional subcircuits [28], [29] optimizes computation speed under a constraint on the number of synapses. As such, global information flow may not be a relevant criterion for neurobiology, at least for a small invertebrate system like C. elegans. Rather, functional subcircuits may be a primary organizational principle.

II. PIPELINING MODEL OF INFORMATION FLOW

Consider a network where a commodity is to be sent from a source to a destination in a manner that can be split into pieces in time and sent in a pipelined fashion over (possibly) several hops using as many time slots as needed. The commodity must go over a single route rather than being split over several routes to be recombined by the destination. As an example, in C. elegans, each neuron is identified by name and is different from any other neuron [22]; computational specialization may arise from neuronal specialization, which in turn may require specific paths for specific information. In this model, maximizing flow requires finding the single best route between the two nodes: the route that minimizes the weight of the maximum-weight edge in the route and yet is short in path length.

In the context of network behavior, note that since we adopt the short-and-wide path view of information flow rather than the maximum capacity view [7], [8], bounds on computation speed will be governed by an appropriate notion of graph diameter rather than by notions of graph conductance [27].
Since diameter provides weaker bounds than graph conductance, this is without loss of generality. Our notion of graph diameter is defined in the next section.

A. Distance and Effective Diameter

Consider the following standard definitions of graph distance for undirected, weighted graphs.

Definition II.1. Let $G = (V, E)$ be a weighted graph. Then the geodesic distance between nodes $s, t \in V$ is denoted $d_G(s, t)$ and is the number of edges connecting $s$ and $t$ in the path with the smallest number of hops between them. If there is no path connecting the two nodes, then $d_G(s, t) = \infty$.

Definition II.2. Let $G = (V, E)$ be a weighted graph. Then the weighted distance between nodes $s, t \in V$ is denoted $d_W(s, t)$ and is the total weight of edges connecting $s$ and $t$ in the path with the smallest total weight between them. If there is no path connecting the two nodes, then $d_W(s, t) = \infty$.

Another notion of distance arises from the pipelining model of flow. We want a path between two nodes that has a small number of hops but is also such that the weight of the maximum-weight edge is small; we measure path length weighted by this bottleneck weight.

Definition II.3. Let $G = (V, E)$ be a weighted graph. Then the bottleneck distance between nodes $s, t \in V$ is denoted $d_B(s, t)$ and is the number of edges connecting $s$ and $t$, scaled by the weight of the maximum-weight edge, in the path with the smallest total scaled weight between them. If there is no path connecting the two nodes, then $d_B(s, t) = \infty$.

Proposition II.1. If the weights of all actual edges are 1 or less, geodesic distance upper bounds the bottleneck distance:

$$d_B(s, t) \le d_G(s, t). \tag{1}$$

Proof: Consider the path between $s$ and $t$ that governs $d_G(s, t)$. Since the weights of all actual edges are 1 or less, the weight of its maximum-weight edge is 1 or less.
Hence the bottleneck weight of this path (its number of edges scaled by its maximum edge weight) must be less than or equal to the number of edges connecting $s$ and $t$ in that path. Since by definition $d_B(s, t)$ minimizes the bottleneck weight among paths connecting $s$ and $t$, $d_B(s, t) \le d_G(s, t)$.

Proposition II.2. Weighted distance lower bounds the bottleneck distance:

$$d_B(s, t) \ge d_W(s, t). \tag{2}$$

Proof: Consider the path between $s$ and $t$ that governs $d_B(s, t)$. Let $w_0$ be the weight of its maximum-weight edge and $h$ the number of edges in it. Since no other weight in the path exceeds $w_0$, the total weight of this path is at most $h w_0 = d_B(s, t)$, and by definition $d_W(s, t)$ is less than or equal to the total weight of this path. Hence $d_B(s, t) \ge d_W(s, t)$.

It is convenient for the sequel to write these distances as constrained optimization problems. We first define a set of constraints on the decision variables $x_{ij}$ that indicate how different edges are used in optimal paths, and $N$ indicates neighborhood.

$$\sum_{j:(s,j) \in E} x_{sj} = 1 \tag{3}$$

$$\sum_{j:(i,j) \in E} x_{ij} - \sum_{j:(j,i) \in E} x_{ji} = 0 \quad \text{for all } i \in N \setminus \{s, t\} \tag{4}$$

$$\sum_{i:(i,t) \in E} x_{it} = 1 \tag{5}$$

$$x_{ij} \in \{0, 1\} \quad \forall (i, j) \in E \tag{6}$$

These constraints simply enforce the movement of one unit of flow from node $s$ to node $t$ and maintain flow balance at all other nodes. Integrality constraints ensure that exactly one path is chosen. These constraints are the same as for standard shortest path problems [4], and therefore the constraint matrix is totally unimodular.

The distance expressions use the notation $w_{ij}$ for the edge weight between nodes $i$ and $j$.

$$d_G(s, t) = \min \sum_{(i,j) \in E} x_{ij} \quad \text{such that (3)–(6) hold.} \tag{7}$$

$$d_W(s, t) = \min \sum_{(i,j) \in E} w_{ij} x_{ij} \quad \text{such that (3)–(6) hold.} \tag{8}$$

$$d_B(s, t) = \min \left[ \max_{(i,j) \in E} \{ w_{ij} x_{ij} \} \sum_{(i,j) \in E} x_{ij} \right] \quad \text{such that (3)–(6) hold.} \tag{9}$$

Notice that the objective function in (9) is nonlinear; yet we develop efficient algorithms in Section III.
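To make the three definitions concrete, the following Python sketch evaluates $d_G$, $d_W$, and $d_B$ by exhaustively enumerating simple paths on a tiny undirected graph. It is only an illustration under stated assumptions: the helper names `simple_paths` and `distances` and the four-edge toy graph `TOY_EDGES` are hypothetical and do not come from the paper, and brute-force enumeration is used purely for clarity, not as one of the efficient algorithms developed later.

```python
import math

def simple_paths(adj, s, t, path=None):
    """Yield every simple s-t path as a list of nodes (depth-first enumeration)."""
    path = [s] if path is None else path
    if s == t:
        yield list(path)
        return
    for nxt in adj[s]:
        if nxt not in path:
            path.append(nxt)
            yield from simple_paths(adj, nxt, t, path)
            path.pop()

def distances(edges, s, t):
    """Return (d_G, d_W, d_B) for an undirected weighted graph by brute force."""
    if s == t:
        return 0, 0.0, 0.0
    adj, w = {}, {}
    for u, v, weight in edges:
        adj.setdefault(u, []).append(v)
        adj.setdefault(v, []).append(u)
        w[(u, v)] = w[(v, u)] = weight
    d_g = d_w = d_b = math.inf
    for p in simple_paths(adj, s, t):
        ws = [w[(a, b)] for a, b in zip(p, p[1:])]
        hops = len(ws)
        d_g = min(d_g, hops)            # objective (7): number of edges
        d_w = min(d_w, sum(ws))         # objective (8): total weight
        d_b = min(d_b, hops * max(ws))  # objective (9): hops times maximum weight
    return d_g, d_w, d_b

# Hypothetical toy graph: one heavy direct edge and one light three-hop detour.
TOY_EDGES = [("s", "a", 0.125), ("a", "b", 0.25), ("b", "t", 0.125), ("s", "t", 1.0)]

d_g, d_w, d_b = distances(TOY_EDGES, "s", "t")
print(d_g, d_w, d_b)  # 1 0.5 0.75
```

On this toy graph the geodesic path is the direct edge, while both the weighted and the bottleneck distance are attained on the detour, and the values satisfy $d_W \le d_B \le d_G$ as Propositions II.1 and II.2 require when all weights are at most 1.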
Any of these distance functions can be used to define the all-pairs distance distribution of a network, which is just the empirical distribution of distances among all $\binom{n}{2}$ pairs of vertices, for a graph of size $|V| = n$. These distance distributions have various moments and order statistics, such as the average path length and the diameter.

Definition II.4. The graph diameter is

$$D = \max_{s,t \in V} d(s, t). \tag{10}$$

We also define a notion of effective diameter where node pairs that are outliers in the all-pairs distance distribution do not enter into the calculation. Recall that the quantile function corresponding to the cumulative distribution function (cdf) $F(\cdot)$ is $Q(p) = \inf \{ x \in \mathbb{R} \mid p \le F(x) \}$ for a probability value $0 < p < 1$.

Definition II.5. For a network of size $n$, let $F(x)$ be the empirical cdf of the distances of all $\binom{n}{2}$ distinct node pairs. Then the effective diameter is:

$$D_e = Q(0.95). \tag{11}$$

This definition is more stringent than others in the literature [30]. Of course, $D_e \le D$. Moving forward, we use effective diameter rather than diameter since it characterizes when most of a commodity would have reached its destination. Thresholds other than 0.95 can be easily defined.

B. Unique Property of Bottleneck Paths

In this subsection, we discuss a property of short-and-wide paths that is distinct from geodesic and weighted paths and that has algorithmic importance. A key attribute of shortest paths exploited by many shortest path algorithms is the optimal substructure property, that all subpaths of shortest paths are shortest paths [4]. This follows from metric structure, but for the ultrametric space induced by short-and-wide paths, the property does not hold. We prove this through a counterexample.

Consider the network in Figure 1, with the network weights $w_{ij}$ shown on the edges.
For source node A and destination node H, the geodesic, weighted, and short-and-wide paths are, respectively: A-E-G-H (3 units), A-B-D-F-H (1.367 units), and A-B-D-F-H (or A-B-C-F-H, 2 units). Instead, if we compute the same paths with A as the source and K as the destination, path A-E-G-H-I-J-K is the geodesic path (6 units), A-B-D-F-H-I-J-K is the weighted path (3.367 units), and path A-E-G-H-I-J-K is the short-and-wide path.

Fig. 1: Network that demonstrates the optimal substructure violation for short and wide paths. (Figure not reproduced; it shows nodes A through K with edge weights between 0.167 and 1.0.)

Notice that if we compute the short-and-wide path from A to K, this does not guarantee that its sub-path up to node H is optimal for source A and destination H. This implies short-and-wide paths do not form trees, unlike geodesic or weighted paths. Hence, classic tree-based shortest path algorithms [4] must be modified significantly for this setting, as we now show.

III. EFFICIENT ALGORITHMS FOR COMPUTING SHORT AND WIDE PATHS

In this section, we present algorithms for computing short-and-wide paths.

A. One-to-All Bottleneck Distance Algorithm

We present Algorithm 1 to compute the bottleneck distance.
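To make the Figure 1 counterexample concrete, and to illustrate why a single label per node does not suffice, the following Python sketch keeps every non-dominated (hops, maximum weight) pair at each node and reports the smallest product. It is a simplified label-correcting variant written for illustration, not a transcription of Algorithm 1. The function name `bottleneck_distances` is hypothetical, and the edge weights in `FIG1_EDGES` are an assumed assignment chosen only so that the path totals quoted in the text (1.367, 2, and 3.367 units) are reproduced; the true placement of weights in Figure 1 may differ.

```python
import heapq
import math

def bottleneck_distances(edges, source):
    """Bottleneck distance from `source` to every node of an undirected graph.

    Each node keeps all non-dominated (hops, max_weight) labels, because a
    label with a larger product can still become the best one downstream.
    """
    adj = {}
    for u, v, w in edges:
        adj.setdefault(u, []).append((v, w))
        adj.setdefault(v, []).append((u, w))
    labels = {node: [] for node in adj}
    labels[source] = [(0, 0.0)]
    heap = [(0.0, 0, 0.0, source)]  # (product, hops, max_weight, node)
    while heap:
        _, hops, width, node = heapq.heappop(heap)
        if (hops, width) not in labels[node]:
            continue  # this label was dominated after it was queued
        for nxt, w in adj[node]:
            cand = (hops + 1, max(width, w))
            if any(h <= cand[0] and m <= cand[1] for h, m in labels[nxt]):
                continue  # candidate is dominated by (or equal to) a kept label
            # Drop labels the candidate dominates, then keep the candidate.
            labels[nxt] = [(h, m) for h, m in labels[nxt]
                           if not (cand[0] <= h and cand[1] <= m)] + [cand]
            heapq.heappush(heap, (cand[0] * cand[1], cand[0], cand[1], nxt))
    return {node: min((h * m for h, m in ls), default=math.inf)
            for node, ls in labels.items()}

# ASSUMED weight assignment for the Figure 1 network (see lead-in).
FIG1_EDGES = [
    ("A", "B", 0.5), ("B", "D", 0.167), ("D", "F", 0.2), ("F", "H", 0.5),
    ("B", "C", 0.5), ("C", "F", 0.167),
    ("A", "E", 1.0), ("E", "G", 1.0), ("G", "H", 1.0),
    ("H", "I", 1.0), ("I", "J", 0.5), ("J", "K", 0.5),
]

d = bottleneck_distances(FIG1_EDGES, "A")
print(d["H"], d["K"])  # 2.0 6.0
```

Under this assumed assignment, node H ends up holding two non-dominated labels, (4 hops, 0.5) with product 2 and (3 hops, 1.0) with product 3. The second label is worse at H, yet it is the one that extends to the optimal value 6 at K, which is the optimal substructure violation described above.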
The algorithm maintains label sets at each node $j \in V$, denoted by $L_p(j) = [p, l^1_p(j), l^2_p(j), l^3_p(j), \mathrm{pred}_p(j), \mathrm{pp}_p(j)]$, where the label sets include:
(i) $p$, the index of the label set;
(ii) $l^k_p(j)$, the value of label $k$ in label set $p$ at node $j$, $k = 1, 2, 3$, where:
(a) $l^1_p(j)$ tracks the number of edges traversed from $s$ until the current node,
(b) $l^2_p(j)$ tracks the maximum width along a path from the origin until the current node,
(c) $l^3_p(j) = l^1_p(j) \times l^2_p(j)$ tracks the product of the maximum width and the number of edges traversed from $s$ until the current node $j$;
(iii) $\mathrm{pred}_p(j)$, the predecessor node for label set $p$ at node $j$;
(iv) $\mathrm{pp}_p(j)$, the index of the predecessor's label set for label $p$ at node $j$.

We also let $\mathrm{np}(j)$ denote the number of non-dominated label sets for node $j$.

Definition III.1. A label set $L_p(j)$ is strictly dominated by label set $L_q(j)$ at node $j$ if $l^1_p(j) > l^1_q(j)$ and $l^2_p(j) > l^2_q(j)$ (and consequently, $l^3_p(j) > l^3_q(j)$). Label set $p$ is dominated by label set $q$ at node $j$ if either: (a) $l^1_p(j) > l^1_q(j)$ and $l^2_p(j) = l^2_q(j)$, or (b) $l^1_p(j) = l^1_q(j)$ and $l^2_p(j) > l^2_q(j)$ (and again consequently, $l^3_p(j) > l^3_q(j)$). A label set that is neither strictly dominated nor dominated is non-dominated.

Remark III.1. Observe that each node $j$ can have at most $W$ non-dominated labels, where $W$ is the maximum number of discrete values of weights $w_{ij}$, over all edges $(i, j)$ in the network. This is a consequence of the fact that $l^1_p(j)$ and $l^2_p(j)$ are the only two quantities being tracked (and $l^3_p(j)$ is the product of $l^1_p(j)$ and $l^2_p(j)$); and the possibilities for non-dominated labels are if $l^1_p(j) < l^1_q(j)$ and $l^2_p(j) > l^2_q(j)$ (or vice versa) in labels $p$ and $q$ of node $j$.
Also note that all non-dominated labels must be maintained since the optimal substructure property does not hold, and each such label must be propagated to the downstream nodes because it may dominate after propagation. Since $l^1_p$ is always an integer, the number of discrete weights upper bounds the possible number of non-dominated labels that can exist at each node.

Lemma III.1. Along a given $a$-$b$ path, the value of the labels on the path monotonically (but not necessarily strictly monotonically) increases.

Proof: Note that the values in labels $l^1_p$ and $l^2_p$ are always non-negative because the labels measure the number of edges and the maximum width thus far, respectively. The UpdateLabels operation defined below can only increase label values, and thus, on a given $a$-$b$ path (including if $a$ is the same as $b$), the label values only increase.

Lemma III.2. The $s$-$t$ path corresponding to bottleneck distance will not contain any cycles.

Proof: The proof is by contradiction. Suppose the $s$-$t$ path with the bottleneck distance contains a cycle, that is, the path is $s - \ldots - u - u_1 \ldots u_k - u - \ldots - t$, with cycle $u - u_1 \ldots u_k - u$. However, by Lemma III.1, we know that as we travel along $u - u_1 \ldots u_k - u$, the value of $d_B(s, t)$ only increases. Therefore the path $s - \ldots - u - \ldots - t$ that excludes the cycle will have a smaller value of $d_B(s, t)$, contradicting that $s - \ldots - u - u_1 \ldots u_k - u - \ldots - t$ is the short-and-wide path.

Theorem III.1. When Algorithm 1 terminates, all nodes $i$ have the bottleneck distance from $s$ to $i$ as labels.

Proof: The correctness of the algorithm follows because the label sets keep track of $l^1_p(i)$, $l^2_p(i)$, and $l^3_p(i)$ for each node $i$ and each label $p$ corresponding to that node $i$. At each iteration, each non-dominated label is explored, in increasing order of $l^3_p(i)$.
That is, each label set from each edge out of the selected node $i$ is propagated downstream during the UpdateLabels step. Due to the ConsolidateLabels step at each node in each iteration, a maximum of $W$ labels can be present at each node (Remark III.1). All non-dominated labels are explored before termination, and due to Lemmas III.1 and III.2, all possible paths are explored, resulting in the short-and-wide path.

Having established correctness, we also analyze the computational complexity in terms of the number of nodes $n$, the number of edges $m$, and the number of possible discrete edge weights $W$.

Lemma III.3. The running time of Algorithm 1 is $O(W^2 m \log n)$, which is pseudopolynomial.

Proof: The algorithm is structured like Dijkstra's algorithm, which has complexity $O(m \log n)$ [4], but during each iteration, since the UpdateLabels step propagates from each label out of each edge and at each node, a consolidation step must be performed. With a discrete number of weights $W$ over all edges in the network, the number of possible labels at each node is upper bounded by $W$. Hence, the order of the algorithm is $O(W^2 m \log n)$.

B. All-Pairs Bottleneck Distance Algorithm

Now we consider the computation for all pairs, rather than separately computing for each source-destination pair. This is Algorithm 2.

Theorem III.2. When Algorithm 2 terminates, all edges $(i, j)$ have the bottleneck distance from $i$ to $j$ as labels.

Proof: Note that the short-and-wide path between any nodes $i$ and $j$ will not contain any cycles, and will therefore have at most $n - 1$ edges. In the $k$th iteration, we consider adding node $k$ to each edge along the path connecting $i$ and $j$, from each label set at $i$ to each label set at $j$. This algorithm is equivalent to enumerating all possible combinations of the label sets $[i, k]$ and $[k, j]$.
If the short-and-wide path between $i$ and $j$ contains node $k$, then the condition $d[i, j] > \max\{l^2[i, k], l^2[k, j]\} \times (l^1[i, k] + l^1[k, j])$ will be violated in iteration $k$, and $k$ is added to one of the edges on the path. If there is an improving or non-dominated label that can be added to a node, it is added even if $k$ is not on the bottleneck path between $i$ and $j$. If the bottleneck path does not contain node $k$, then the condition will not be violated and the current best path and distance are retained, leading to correctness.

After establishing correctness, we characterize computational complexity in terms of the number of nodes $n$, the number of edges $m$, and the number of discrete edge weights $W$.

Theorem III.3. The worst-case runtime for Algorithm 2 is pseudopolynomial, $O(n^3 W^2)$.

Proof: Note that the structure of the algorithm is similar to the Floyd-Warshall algorithm for shortest paths, which is $O(n^3)$ [4]. Rather than one comparison inside of this, the bottleneck distance algorithm has two sub-algorithms, Algorithm 3 and Algorithm 4, to compare all of the label sets. Each sub-algorithm at worst iterates through two label sets in parallel with size $W$ if there are $W$ levels of weights based on Remark III.1 (or maximum size $m$). Therefore their runtimes are $O(W^2)$ (or $O(m^2)$ if all edges have different weights). Combining this with the whole algorithm gives the total runtime as $O(n^3 W^2)$ (or worst-case of $O(n^3 m^2)$).

Algorithm 1 One-to-All Bottleneck Distance
Initialize: $S = \emptyset$, $S' = \emptyset$; $l^k_1(s) = 0$ for all $k = 1, 2, 3$; $S = S \cup \{s\}$, $S' = S' \cup \{l^k_p(s)\}$ for all $k = 1, 2, 3$, $p = 1, 2$; $l^k_p(j) = \infty$ for all $k = 1, 2, 3$, $p = 1, 2$; $\mathrm{np}(j) = 0$, $\mathrm{pred}_p(j) = -1$, and $\mathrm{pp}_p(j) = -1$ for all $j \in N \setminus \{s\}$
$V' = V' \cup \{l^k_p(j)\}$ for all $j \in V$, $k = 1, 2, 3$
while $S \ne V$ and $S' \ne V'$ do
  for each node $j \in V \setminus S$ do
    for each non-dominated label set $L_p(j)$ (see Definition III.1) do
      $T := T \cup \{L_p(j)\}$
    end for
  end for
  Order label sets in $T$ in increasing order of $l^3_p(j)$, for all $p$ and $j$, breaking ties arbitrarily. Find label set $L_p(i) \in T$ such that $l^3_p(i) = \min_{L \in V' \setminus S'} l^3_q(j)$
  $T = T \setminus \{L_p(i)\}$, $S' = S' \cup \{L_p(i)\}$, $S := S \cup \{i\}$
  UpdateLabels($L_p(i)$):
  for each edge $(i, j) \in E$ do
    for each label set $q = 1, \ldots, \mathrm{np}(j)$ at node $j$ do
      if $l^1_q(j) > l^1_p(i) + 1$ and $l^2_q(j) > \max\{l^2_p(i), w_{ij}\}$ then
        $\mathrm{pred}_q(j) = i$, $\mathrm{pp}_q(j) = p$
        $l^1_q(j) = l^1_p(i) + 1$
        $l^2_q(j) = \max\{l^2_p(i), w_{ij}\}$
        $l^3_q(j) = l^1_q(j) \cdot l^2_q(j)$
      else if ($l^1_q(j) \le l^1_p(i) + 1$ and $l^2_q(j) > \max\{l^2_p(i), w_{ij}\}$) or ($l^1_q(j) > l^1_p(i) + 1$ and $l^2_q(j) \le \max\{l^2_p(i), w_{ij}\}$) then
        Create new temporary label $L_{q'}(j)$ at $j$ with $\mathrm{pred}_{q'}(j) = i$, $\mathrm{pp}_{q'}(j) = p$, $l^1_{q'}(j) = l^1_p(i) + 1$, $l^2_{q'}(j) = \max\{l^2_p(i), w_{ij}\}$, $l^3_{q'}(j) = l^1_{q'}(j) \cdot l^2_{q'}(j)$
      end if
    end for
    ConsolidateLabels($j$):
    for all (including temporary) labels $p$ at $j$ do
      Delete all dominated labels (Definition III.1). Also combine labels $p$ and $q$ with $l^1_q(j) = l^1_p(j)$ and $l^2_q(j) = l^2_p(j)$. A temporary label is made permanent if non-dominated. Update $\mathrm{np}(j)$.
    end for
  end for
end while

Algorithm 2 All-to-All Bottleneck Distances
Initialize: $l^1[i, j] = \emptyset$ for all $i, j \in N$; $l^2[i, j] = \emptyset$ for all $i, j \in N$; let $l^1_1[i, j] = 1$ if nodes $i$ and $j$ are adjacent; let $l^2_1[i, j]$ be the weight between nodes $i$ and $j$ if they are connected; $\mathrm{np}[i, j] = l^1_1[i, j]$ for all $i, j \in N$
for all nodes $k \in N$ do
  for all nodes $i \in N$ do
    for all nodes $j \in N$ do
      Node Insertion on label sets $L[i, k]$ and $L[k, j]$
      Maximize Labels on label sets $L[i, j]$ and $L'[i, j]$
    end for
  end for
end for

Algorithm 3 Node Insertion
Initialize: $p = \mathrm{np}[i, k]$; $q = \mathrm{np}[k, j]$
while $L_p[i, k]$ and $L_q[k, j]$ exist do
  append to front $l^1_p[i, k] + l^1_q[k, j]$ to ${l'}^{1}[i, j]$
  append to front $\max\{l^2_p[i, k], l^2_q[k, j]\}$ to ${l'}^{2}[i, j]$
  if $l^2_p[i, k] = l^2_q[k, j]$ then $p = p - 1$; $q = q - 1$
  end if
  if $l^2_p[i, k] > l^2_q[k, j]$ then $p = p - 1$
  end if
  if $l^2_p[i, k] < l^2_q[k, j]$ then $q = q - 1$
  end if
end while

Algorithm 4 Maximize Labels
Initialize: $\mathrm{bestL1} = \infty$; $p, q = 1$; $\mathrm{np}[i, j] = 0$. Note $L[i, j]$ and $L'[i, j]$ are sorted ascending by $l^2$
while $L_p[i, j]$ and $L'_q[i, j]$ exist do
  if $l^2_p[i, j] < {l'}^{2}_q[i, j]$ then
    if $l^1_p[i, j] < \mathrm{bestL1}$ then
      append $L_p[i, j]$ to $L''[i, j]$
      $\mathrm{bestL1} = l^1_p[i, j]$
    end if
    $p = p + 1$
  else if $l^2_p[i, j] > {l'}^{2}_q[i, j]$ then
    if ${l'}^{1}_q[i, j] < \mathrm{bestL1}$ then
      append $L'_q[i, j]$ to $L''[i, j]$
      $\mathrm{bestL1} = {l'}^{1}_q[i, j]$
    end if
    $q = q + 1$
  else if $l^2_p[i, j] = {l'}^{2}_q[i, j]$ then
    if $l^1_p[i, j] \le {l'}^{1}_q[i, j]$ and $l^1_p[i, j] < \mathrm{bestL1}$ then
      append $L_p[i, j]$ to $L''[i, j]$
      $\mathrm{bestL1} = l^1_p[i, j]$
    else if $l^1_p[i, j] > {l'}^{1}_q[i, j]$ and ${l'}^{1}_q[i, j] < \mathrm{bestL1}$ then
      append $L'_q[i, j]$ to $L''[i, j]$
      $\mathrm{bestL1} = {l'}^{1}_q[i, j]$
    end if
    $p = p + 1$
    $q = q + 1$
  end if
end while
for each remaining node in $L[i, j]$ or $L'[i, j]$ do
  if $l^1[i, j] < \mathrm{bestL1}$ then append to $L''[i, j]$
  end if
end for
$L[i, j] = L''[i, j]$
Delete $L''[i, j]$ and $L'[i, j]$
update $\mathrm{np}[i, j]$

IV. ALL-PAIRS BOTTLENECK DISTANCE DISTRIBUTION IN COMPLEX NETWORKS

With efficient algorithms in place, we can compute the all-pairs bottleneck distance distribution for several undirected, weighted, real-world networks drawn from the Index of Complex Networks [31] database, which is publicly available. We choose networks that span a variety of systems, including transportation networks, biological networks, and social networks that may naturally support pipelined flow. Table I details the size, type, and sources of each network.

TABLE I: Characteristics of Real-World Networks

| Name | Nodes | Edges | Type | Source |
|---|---|---|---|---|
| US airports | 1574 | 28236 | Transportation | US airport networks (2010) [31] |
| Mumbai bus routes | 2266 | 3042 | Transportation | India bus routes (2016) [31] |
| Chennai bus routes | 1009 | 1610 | Transportation | India bus routes (2016) [31] |
| Author collaborations | 475 | 625 | Social | Social Networks authors (2008) [31] |
| Free-ranging dogs | 108 | 1296 | Social | Wilson-Aggarwal dogs [31] |
| Game of Thrones | 107 | 353 | Social | Game of Thrones coappearances [31] |
| Resting state fMRI network | 638 | 18625 | Biological | Human brain functional coactivations [31] |
| Human brain coactivation | 638 | 18625 | Biological | Human brain functional coactivations [31] |

In Figure 2 we plot the survival functions for the all-to-all geodesic, weighted, and bottleneck distances $d_G$, $d_W$, and $d_B$. For each network, we find that the inequalities $d_W \le d_B \le d_G$ hold, as required by Propositions II.1 and II.2. If all edge weights were 1, all distance metrics would be equivalent, i.e., $d_W = d_B = d_G$. For example, note that in the Authors' collaboration network (Figure 2d), the values of $d_B$ and $d_G$ very nearly coincide because the maximum weight along nearly every path is 1. However, because a significant number of weights are far from 1, the values of $d_W$ and $d_B$ do not coincide.
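As a small illustration of how the survival functions plotted in Figure 2 relate to the effective diameter of Definition II.5, the following Python sketch computes both from a list of all-pairs distances. The function names `survival_function` and `effective_diameter` and the 20-value sample `SAMPLE` are hypothetical and chosen only for illustration; they are not data from any network in Table I.

```python
import math

def survival_function(distances, x):
    """Empirical P(distance > x) over the supplied node-pair distances."""
    return sum(d > x for d in distances) / len(distances)

def effective_diameter(distances, p=0.95):
    """Quantile Q(p) = inf{x : p <= F(x)} of the empirical cdf F."""
    ordered = sorted(distances)
    # Smallest count k with k/n >= p; the tolerance guards against round-off.
    k = max(1, math.ceil(p * len(ordered) - 1e-9))
    return ordered[k - 1]

# Hypothetical sample: 19 ordinary pair distances and one outlier pair.
SAMPLE = [1] * 4 + [2] * 6 + [3] * 5 + [4] * 3 + [6] + [30]

print(max(SAMPLE))                    # diameter D: 30
print(effective_diameter(SAMPLE))     # effective diameter D_e: 6
print(survival_function(SAMPLE, 6))   # 0.05
```

In this made-up sample the single outlier pair sets the diameter, while the effective diameter is the smallest distance at which the survival function has dropped to 0.05 or below.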
Additionally, we observe that the geodesic, bottleneck, and weighted distances diverge as the weights are distributed away from 1.

Previous investigations of the geodesic distance distribution of random graphs had suggested good fits by Weibull, gamma, lognormal, and generalized three-parameter gamma distributions [12], as well as basic generative models to explain these distributions. We consider the same parametric families to understand whether short-and-wide path length distributions are well described by such parametric forms. For each network in Table I, we fit the survival functions from Figure 2 and assess goodness-of-fit for several different parametric families using the Kolmogorov-Smirnov test. No single parametric family was best for all networks, but the gamma distribution provided reasonable fits for several networks, as shown in Table II, which provides the fitted distribution parameters along with the $\chi^2$ and $p$-values from the test. As far as we can tell, there is no universality in the bottleneck distance distribution across networks.

Fig. 2: Survival function for the empirical all-pairs lengths. (a) US airports 2010. (b) Mumbai bus network. (c) Chennai bus network. (d) Authors' collaboration network. (e) Free-ranging dogs social network. (f) Game of Thrones coappearances. (g) Resting state fMRI network. (h) Human brain coactivation network.

TABLE II: Gamma Distribution Fits of Bottleneck Distance

| Name | Shape | Location | Scale | $\chi^2$ | $p$-value |
|---|---|---|---|---|---|
| US airports | 0.27 | 0 | 0.64 | 5.80 | 0.63 |
| Chennai bus routes | 0.54 | 0 | 0.22 | 1.03 | 0.06 |
| Mumbai bus routes | 0.41 | 0 | 0.23 | 21.25 | 0.095 |
| Author collaborations | 0.83 | 0 | 0.32 | 8.19 | 0.22 |
| Free-ranging dogs | 0.67 | 0 | 0.40 | 3.56 | 0.61 |
| Game of Thrones | 0.55 | 0 | 0.18 | 4.71 | 0.008 |
| Resting state fMRI network | 0.61 | 0 | 0.19 | 4.91 | 0.23 |
| Human brain coactivation | 368.63 | -7.47 | 0.02 | 8.756 | 0.85 |

V. C. elegans NEURONAL NETWORK: GLOBAL AND LOCAL FLOW

We turn attention specifically to the C. elegans gap junction network, to investigate how information flow limits behavior. A bound on the Shannon capacity [bits/sec] of a single gap junction is developed in the Appendix. Although there is no reason to believe capacity-achieving codes are used in neural signaling, Shannon capacity provides bounds on the information rate for any signaling scheme.

The topology of the gap junction network has been characterized in some detail in our prior work [22]. The somatic network consists of a giant component comprising 248 neurons, two small connected components, and several isolated neurons. Within the giant component, the average geodesic distance between two neurons is 4.52. Since this characteristic path length is similar to that of a random graph and since the clustering coefficient is large with respect to a random graph, the network is said to be a small-world network. Moreover, the C. elegans network overall has good expander properties [32, App. C].

Here we compute the effective diameter of the giant component of the gap junction network with respect to the bottleneck distance. We also find upper and lower bounds. Figure 3 shows the survival function of the empirical all-pairs geodesic, weighted, and bottleneck distances. As can be observed, the effective diameter for bottleneck distance is between 6 and 7. The upper and lower bounds are close to one another since, as shown in Figure 4, the minimax width of paths in the C. elegans network when ignoring path length is almost always one inverse gap junction rather than smaller. Taking path lengths into account only increases the bottleneck width.

Fig. 3: Survival function for the empirical all-pairs distance distributions of the C. elegans gap junction neuronal network giant component. The weighted and bottleneck distances are listed in terms of inverse gap junctions.

Fig. 4: Survival function for the empirical all-pairs bottleneck width of the C. elegans gap junction neuronal network giant component, without considering path length. The width is listed in terms of inverse gap junctions.

A. Limits in Computation Speed

Having characterized the channel capacity of links and the topology of neuronal connectivity, we now develop an information-theoretic model of computation, which in turn yields a limit on computation speed derived from information flow bounds.

Consider the chemosensing problem faced by an organism like C. elegans. It has 700 different types of chemoreceptors [33] and must take behavioral actions based on the chemical properties of its environment [28], [34]. Suppose that differentiating between 700 chemicals requires 10 bits of information, which we call the message volume $\log M$. To perform an action, the neurons must reach consensus among themselves based on the sensory neuron signals [28]. This may, in principle, require transport of information between all parts of the neuronal network. We make the following natural assumption: the consensus time is bounded below by the time it takes to transport 10 bits of information across the network (maximized over all pairs of neurons).

The intuition behind this assumption follows from the sparsity of sensory response in C. elegans [35]. Suppose one part of the neuronal network has "strong" sensing information about an event in the environment, e.g., the worm is near a chemical, but the other part has little or no such sensing information. Then effectively all information is communicated from one part of the network to the other. Accounting for such instances naturally justifies the above assumption.

Note that the actual computational procedures used by C. elegans may require several sweeps of signals through the organism, but for bounding purposes, we assume that one sweep is enough to spread the requisite information. In effect, the time for transportation of information across the network, and hence to reach consensus, is bounded below by

$$t = \frac{D_e \log M}{C} = \frac{7 \cdot 10}{1700} = 0.041\ \text{s}.$$

This bound uses the geodesic effective diameter and assumes bandwidth 1700 Hz or, equivalently, a refractory period of $1/1.7$ ms (see Appendix). There is evidence suggesting that the C. elegans refractory period is likely to be near 1 ms instead. In that case, the above bound of 41 ms would become 70 ms. The bound of 41 ms or 70 ms applies to the whole giant component.

There may be smaller subcircuits within the giant component of the neuronal network that are responsible for specific functional reactions. If consensus is to be reached only in those functional subcircuits, we should use their diameter in place of the effective diameter 7. As explained in Section V-B, they may have extremal diameters of 2. Then the information propagation time would be bounded below by 12 ms (or 20 ms under a 1 ms refractory period).

Using circuit-theoretic techniques, the predicted timescale of operation of functional circuits was between 20 ms and 83 ms [22]. This is a close match to the range predicted by our bounds: 12 ms to 41 ms (or 20 ms to 70 ms under the 1 ms refractory period). This is rather surprising given that our technique only attempts to derive fundamental lower bounds using anatomical information.

Now we compare our lower-bound predictions to experimentally observed behavioral times. This is a true test of our methods in bounding the propagation time for decision-making information. Behavioral switch times in response to chemical gradients as fast as 200 ms have been observed in the literature [36, Supp. Fig. 1].
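The lower bounds quoted in this subsection all come from the single formula $t = D_e \log M / C$, so they can be reproduced with a few lines of arithmetic. The following is a minimal sketch using only numbers stated in the text; the function name and the rounding of $\log_2 700$ up to 10 bits are choices made here for illustration.

```python
import math


def consensus_time_lower_bound(diameter, message_bits, capacity_bits_per_s):
    """Lower bound t = D * log M / C, in seconds."""
    return diameter * message_bits / capacity_bits_per_s


# The text takes 10 bits for 700 chemoreceptor types; log2(700) is about 9.45.
MESSAGE_BITS = math.ceil(math.log2(700))

# Diameters from the text: 7 for the giant component, 2 for a hub-and-spoke subcircuit.
# Capacities: 1700 bit/s (1/1.7 ms refractory period) and 1000 bit/s (1 ms).
for label, diameter in (("giant component", 7), ("hub-and-spoke subcircuit", 2)):
    for capacity in (1700.0, 1000.0):
        t = consensus_time_lower_bound(diameter, MESSAGE_BITS, capacity)
        print(f"{label}, C = {capacity:.0f} bit/s: {1e3 * t:.1f} ms")
```

The four printed values correspond to the 41 ms, 70 ms, 12 ms, and 20 ms figures above, up to rounding.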
Since the time required for motor action like turning around must also be taken into account (the worm can straighten itself in viscous fluids within 6 to 20 ms [37, Fig. 4B]), the lower-bound results are in agreement.

Collectively, these agreements with the model calculation bounds suggest that information propagation is likely to be a primary bottleneck in the behavioral decision making of C. elegans.

B. Hub-and-Spoke Architecture

As mentioned earlier, there are smaller subcircuits of the neuronal network that are responsible for certain functional reactions. For such subcircuits, the hub-and-spoke architecture has optimality properties. The basic premise is that the diameter of such a subcircuit should be small for computational speed; a hub-and-spoke network structure provides the smallest possible diameter of 2 under a constraint on the number of edges together with a connectivity requirement. Formally, we state the following easy fact.

Proposition V.1. Given a connected graph $G$ of $n \ge 3$ nodes and $n - 1$ edges, the smallest possible diameter is 2 and is achieved by the hub-and-spoke structure.

Proof: A connected network with $n \ge 3$ nodes and $n - 1$ edges is a tree (it has no cycles). Further, with $n \ge 3$ nodes and only $n - 1$ edges, there must be a pair of nodes not connected to each other through an edge. Since the graph is connected, they must be at least 2 hops apart. That is, the diameter of such a graph must be at least 2. A hub-and-spoke network, by construction, has diameter 2.

Indeed, as shown in Figure 5, certain known functional subcircuits in the C. elegans neuronal network follow the hub-and-spoke architecture (or nearly so) [28], [29]. Note that other arguments also suggest the benefits to neuronal networks of small diameter [38], but not the optimality of hub-and-spoke architectures.

Fig. 5: Gap junction connectivity of a known chemosensory subcircuit [28]. The worm is almost left-right symmetric; the circuits on both sides are shown. The circuit on the left side of the worm (a) is a hub-and-spoke circuit whereas the circuit on the right side (b) is nearly so.

C. Comparison to Random Networks

Now we wish to study whether the bottleneck diameter of the C. elegans gap junction network is more than, less than, or similar to the bottleneck diameter of random graphs that have certain other network functionals fixed. To evaluate the nonrandomness of the bottleneck diameter of the C. elegans network giant component, we compare it with the same quantity expected in random networks.

We start with a weighted version of the Erdős-Rényi random network ensemble because it is a basic ensemble. Constructing the topology requires a single parameter, the probability of a connection between two neurons. There are 514 gap junction connections over 279 somatic neurons in C. elegans, and so we choose the probability of connection as $2 \times 514 / (279 \times 278) \approx 0.0133$. After fixing the topology, we choose the multiplicity of the connections by sampling randomly according to the C. elegans multiplicity distribution [22, Fig. 3(B)], which is well modeled as a power law with parameter 2.76. Note that in general the giant component for such a construction will be much larger than that of C. elegans.

Figure 6 shows the survival function of the empirical all-pairs geodesic, weighted, and bottleneck distances of one hundred random networks. A random example is highlighted. As can be observed, the effective diameter for bottleneck distance is roughly 6, significantly less than that for the C. elegans network.

Fig. 6: Survival function for the empirical all-pairs distance distributions of 100 Erdős-Rényi random network giant components; a random example is highlighted in colored lines. The weighted and bottleneck distances are in terms of inverse gap junctions.

Now we consider a degree-matched weighted ensemble of random networks. In such a random network, the degree distribution matches the degree distribution of the gap junction network; the degree of a neuron is the number of neurons with which it makes a gap junction. Such a random ensemble is created using a numerical rewiring procedure to generate samples [39], [40]. Upon fixing the topology, the multiplicity of connections is sampled as for the Erdős-Rényi ensemble. Note that in general the giant component for such a construction will be much larger than that of C. elegans.

Figure 7 shows the survival function of the empirical all-pairs geodesic, weighted, and bottleneck distances of one hundred random networks. A random example is highlighted. As can be observed, the effective diameter for bottleneck distance is just below 5, quite significantly less than that for the C. elegans network.

Fig. 7: Survival function for the empirical all-pairs distance distributions of 100 degree-matched random network giant components; a random example is highlighted in colored lines. The weighted and bottleneck distances are in terms of inverse gap junctions.

Comparing Figures 6 and 7 to Figure 3, note that the results hold for many defining thresholds for effective diameter, not just 0.95.

These results reveal a key nonrandom feature in the synaptic connectivity of the C. elegans gap junction network, though perhaps one contrary to expectation. The network has a nonrandomly worse bottleneck diameter compared to basic random graph ensembles. It enables globally slower behavioral speed than similar random networks. In contrast, Section V-B found that at the micro-level of small functional subcircuits, the C. elegans gap junction network has several hub-and-spoke structures [28], [29], which are actually optimal from an information flow perspective. Thus, these results lend greater nuance to efficient flow hypotheses in neuroscience.

VI. CONCLUSION

We have modeled pipelined network flow, as arises in several biological, social, and technological networks, and developed algorithms to efficiently find short-and-wide paths that optimize this novel network flow. This new model of flow also specifically provides a new approach to understand the limits of information propagation and behavioral speed supported by neuronal networks.

Within the field of network information theory itself, it is of interest to study the novel notion of bottleneck distance in detail from a theoretical perspective. Since we did not find universal scaling laws across several real-world networks, it is also of interest to analytically characterize its distribution in random ensembles such as Watts-Strogatz small worlds, Barabási-Albert scale-free networks, Kronecker random graphs, or random geometric graphs.

Beyond our general study, we also specifically considered circuit neuroscience and connectomics, where the overarching goal is to understand how an animal's behavior arises from the operations of its nervous system. The nervous system must transport information from one part to another, whether engaged in communication, computation, control, or maintenance. This paper proposes a way to characterize information flow through the nervous system from detailed properties of anatomical connectivity data and to use this characterization to make lower bound statements on the behavioral timescales of animals.

The efficacy of these techniques was demonstrated by explaining the communication bottlenecks in the gap junction network of the nematode C. elegans. Remarkably, the timescale lower bounds are predictive of behavioral timescales.
In considering the possibility of changing the network topology itself, we discovered that the network has much worse bottleneck distance than similar random graphs (whether Erdős-Rényi or degree-matched). The network does not seem to be optimized for global information flow.

On the other hand, we noted the prominence of hub-and-spoke functional subcircuits in the C. elegans gap junction network and proved their optimality for information flow under a constraint on the number of gap junctions. In terms of neural organization, this suggests that smaller subcircuits within the larger neuronal network are responsible for specific functions, and these should have fast information flow (to quickly achieve the computational objective of that circuit, such as chemotaxis). Behavioral speed of the global network may not be biologically relevant.

As more and more connectomes are uncovered and more details of the biophysical properties of synapses are determined, these information flow techniques may provide a general methodology to understand how physical constraints lead to informational and thereby behavioral limits in nervous systems.

Authors' Contributions: LM developed and implemented efficient algorithms for computing bottleneck distance, proved their correctness and characterized their complexity, participated in the design of the study, and carried out analysis; LRV conceived of the study, designed the study, coordinated the study, proved certain results, participated in data analysis, and drafted the manuscript; DS conceived of the pipelining model of network flow and proved certain theoretical results; NAP and MEG implemented algorithms and participated in data analysis. All authors gave final approval for publication and agree to be held accountable for the work performed therein.

REFERENCES

[1] D. J. Watts and S. H. Strogatz, “Collective dynamics of ‘small-world’ networks,” Nature, vol. 393, no. 6684, pp. 440–442, Jun. 1998.
[2] M. E. J. Newman, Networks: An Introduction. Oxford University Press, 2010.
[3] A.-L. Barabási and R. Albert, “Emergence of scaling in random networks,” Science, vol. 286, no. 5439, pp. 509–512, Oct. 1999.
[4] R. K. Ahuja, T. L. Magnanti, and J. B. Orlin, Network Flows: Theory, Algorithms, and Applications. Pearson, 1993.
[5] M. Pollack, “The maximum capacity through a network,” Oper. Res., vol. 8, no. 5, pp. 733–736, Sept.-Oct. 1960.
[6] T. C. Hu, “The maximum capacity route problem,” Oper. Res., vol. 9, no. 6, pp. 898–900, Nov.-Dec. 1961.
[7] L. R. Ford, Jr. and D. R. Fulkerson, “Maximal flow through a network,” Can. J. Math., vol. 8, pp. 399–404, 1956.
[8] P. Elias, A. Feinstein, and C. E. Shannon, “A note on the maximum flow through a network,” IRE Trans. Inf. Theory, vol. IT-2, no. 4, pp. 117–119, Dec. 1956.
[9] R. W. Floyd, “Algorithm 97: Shortest path,” Commun. ACM, vol. 5, no. 6, p. 345, Jun. 1962.
[10] R. Rammal, G. Toulouse, and M. A. Virasoro, “Ultrametricity for physicists,” Rev. Mod. Phys., vol. 58, no. 3, pp. 765–788, July-Sept. 1986.
[11] P. E. Black, “All simple paths,” in Dictionary of Algorithms and Data Structures, V. Pieterse and P. E. Black, Eds. National Institute of Standards and Technology, 2008.
[12] C. Bauckhage, K. Kersting, and F. Hadiji, “Parameterizing the distance distribution of undirected networks,” in Proc. 31st Annu. Conf. Uncertainty in Artificial Intelligence (UAI'15), Jul. 2015.
[13] S. Melnik and J. P. Gleeson, “Simple and accurate analytical calculation of shortest path lengths,” [physics.soc-ph], Apr. 2016.
[14] V. D. Blondel, J.-L. Guillaume, J. M. Hendrickx, and R. M. Jungers, “Distance distribution in random graphs and application to network exploration,” Phys. Rev. E, vol. 76, no. 6, p. 066101, Dec. 2007.
[15] E. Katzav, M. Nitzan, D. ben-Avraham, P. L. Krapivsky, R. Kühn, N. Ross, and O. Biham, “Analytical results for the distribution of shortest path lengths in random networks,” Europhys. Lett., vol. 111, no. 2, p. 26006, Jul. 2015.
[16] O. Sporns, G. Tononi, and R. Kötter, “The human connectome: A structural description of the human brain,” PLoS Comput. Biol., vol. 1, no. 4, pp. 0245–0251, Sep. 2005.
[17] H. S. Seung, “Neuroscience: Towards functional connectomics,” Nature, vol. 471, no. 7337, pp. 170–172, Mar. 2011.
[18] S. Seung, Connectome: How the Brain's Wiring Makes Us Who We Are. Boston: Houghton Mifflin Harcourt, 2012.
[19] C. Rockland, “The nematode as a model complex system: A program of research,” M.I.T. Laboratory for Information and Decision Systems, Working Paper WP-1865, Apr. 1989.
[20] M. de Bono and A. V. Maricq, “Neuronal substrates of complex behaviors in C. elegans,” Annu. Rev. Neurosci., vol. 28, pp. 451–501, Jul. 2005.
[21] P. Sengupta and A. D. T. Samuel, “Caenorhabditis elegans: A model system for systems neuroscience,” Curr. Opin. Neurobiol., vol. 19, no. 6, pp. 637–643, Dec. 2009.
[22] L. R. Varshney, B. L. Chen, E. Paniagua, D. H. Hall, and D. B. Chklovskii, “Structural properties of the Caenorhabditis elegans neuronal network,” PLoS Comput. Biol., vol. 7, no. 2, p. e1001066, Feb. 2011.
[23] I. J. Deary, L. Penke, and W. Johnson, “The neuroscience of human intelligence differences,” Nat. Rev. Neurosci., vol. 11, no. 3, pp. 201–211, Mar. 2010.
[24] T.-W. Lee, Y.-T. Wu, Y. W.-Y. Yu, H.-C. Wu, and T.-J. Chen, “A smarter brain is associated with stronger neural interaction in healthy young females: A resting EEG coherence study,” Intelligence, vol. 40, no. 1, pp. 38–48, Jan.-Feb. 2012.
[25] S. Song, P. J. Sjöström, M. Reigl, S. Nelson, and D. B. Chklovskii, “Highly nonrandom features of synaptic connectivity in local cortical circuits,” PLoS Biol., vol. 3, no. 3, pp. 0507–0519, Mar. 2005.
[26] N. C. Martins and M. A. Dahleh, “Feedback control in the presence of noisy channels: ‘Bode-like’ fundamental limitations of performance,” IEEE Trans. Autom. Control, vol. 53, no. 7, pp. 1604–1615, Aug. 2008.
[27] O. Ayaso, D. Shah, and M. A. Dahleh, “Information theoretic bounds for distributed computation over networks of point-to-point channels,” IEEE Trans. Inf. Theory, vol. 56, no. 12, pp. 6020–6039, Dec. 2010.
[28] E. Z. Macosko, N. Pokala, E. H. Feinberg, S. H. Chalasani, R. A. Butcher, J. Clardy, and C. I. Bargmann, “A hub-and-spoke circuit drives pheromone attraction and social behaviour in C. elegans,” Nature, vol. 458, no. 7242, pp. 1171–1176, Apr. 2009.
[29] I. Rabinowitch, M. Chatzigeorgiou, and W. R. Schafer, “A gap junction circuit enhances processing of coincident mechanosensory inputs,” Curr. Biol., vol. 23, no. 11, pp. 963–967, Jun. 2013.
[30] J. Leskovec, J. Kleinberg, and C. Faloutsos, “Graph evolution: Densification and shrinking diameters,” ACM Trans. Knowl. Discovery Data, vol. 1, no. 1, p. 2, Mar. 2007.
[31] A. Clauset, E. Tucker, and M. Sainz, “The Colorado index of complex networks,” https://icon.colorado.edu, 2016.
[32] L. R. Varshney, B. L. Chen, E. Paniagua, D. H. Hall, and D. B. Chklovskii, “Structural properties of the Caenorhabditis elegans neuronal network,” arXiv:0907.2373v4 [q-bio], Jun. 2010.
[33] A. J. Whittaker and P. W. Sternberg, “Sensory processing by neural circuits in Caenorhabditis elegans,” Curr. Opin. Neurobiol., vol. 14, no. 4, pp. 450–456, Aug. 2004.
[34] S. H. Chalasani, N. Chronis, M. Tsunozaki, J. M. Gray, D. Ramot, M. B. Goodman, and C. I. Bargmann, “Dissecting a circuit for olfactory behaviour in Caenorhabditis elegans,” Nature, vol. 450, no. 7166, pp. 63–70, Nov. 2007.
[35] A. Zaslaver, I. Liani, O. Shtangel, S. Ginzburg, L. Yee, and P. W. Sternberg, “Hierarchical sparse coding in the sensory system of Caenorhabditis elegans,” Proc. Natl. Acad. Sci. U.S.A., vol. 112, no. 4, pp. 1185–1189, Jan. 2015.
[36] D. R. Albrecht and C. I. Bargmann, “High-content behavioral analysis of Caenorhabditis elegans in precise spatiotemporal chemical environments,” Nat. Methods, vol. 8, no. 7, pp. 599–605, Jul. 2011.
[37] C. Fang-Yen, M. Wyart, J. Xie, R. Kawai, T. Kodger, S. Chen, Q. Wen, and A. D. T. Samuel, “Biomechanical analysis of gait adaptation in the nematode Caenorhabditis elegans,” Proc. Natl. Acad. Sci. U.S.A., vol. 107, no. 47, pp. 20323–20328, Nov. 2010.
[38] M. Kaiser and C. C. Hilgetag, “Nonoptimal component placement, but short processing paths, due to long-distance projections in neural systems,” PLoS Comput. Biol., vol. 2, no. 7, p. e95, Jul. 2006.
[39] S. Maslov and K. Sneppen, “Specificity and stability in topology of protein networks,” Science, vol. 296, no. 5569, pp. 910–913, May 2002.
[40] M. Reigl, U. Alon, and D. B. Chklovskii, “Search for computational modules in the C. elegans brain,” BMC Biol., vol. 2, p. 25, Dec. 2004.
[41] F. Rieke, D. Warland, R. de Ruyter van Steveninck, and W. Bialek, Spikes: Exploring the Neural Code. Cambridge, MA: MIT Press, 1997.
[42] A. Borst and F. E. Theunissen, “Information theory and neural coding,” Nat. Neurosci., vol. 2, no. 11, pp. 947–957, Nov. 1999.
[43] B. W. Connors and M. A. Long, “Electrical synapses in the mammalian brain,” Annu. Rev. Neurosci., vol. 27, pp. 393–418, Jul. 2004.
[44] T. C. Ferrée and S. R. Lockery, “Computational rules for chemotaxis in the nematode C. elegans,” J. Comput. Neurosci., vol. 6, no. 3, pp. 263–277, May 1999.
[45] A. Manwani and C. Koch, “Detecting and estimating signals in noisy cable structures, I: Neuronal noise sources,” Neural Comput., vol. 11, no. 8, pp. 1797–1829, Nov. 1999.
[46] J. B. Johnson, “Thermal agitation of electricity in conductors,” Phys. Rev., vol. 32, no. 1, pp. 97–109, Jul. 1928.
[47] H. Nyquist, “Thermal agitation of electric charge in conductors,” Phys. Rev., vol. 32, no. 1, pp. 110–113, Jul. 1928.
[48] S. R. Lockery and M. B. Goodman, “The quest for action potentials in C. elegans neurons hits a plateau,” Nat. Neurosci., vol. 12, no. 4, pp. 377–378, Apr. 2009.
[49] T. B. Achacoso and W. S. Yamamoto, AY's Neuroanatomy of C. elegans for Computation. CRC Press, 1992.
[50] N. Chayat and S. Shamai (Shitz), “Bounds on the information rate of intertransition-time-restricted binary signaling over an AWGN channel,” IEEE Trans. Inf. Theory, vol. 45, no. 6, pp. 1992–2006, Sep. 1999.
[51] L. R. Varshney, P. J. Sjöström, and D. B. Chklovskii, “Optimal information storage in noisy synapses under resource constraints,” Neuron, vol. 52, no. 3, pp. 409–423, Nov. 2006.

APPENDIX: GAP JUNCTION LINKS

Although nearly all information-theoretic investigation of synaptic transmission has focused on electrochemical synapses [41], [42], purely electrical gap junctions are also rather ubiquitous, not only in C. elegans but also in the mammalian brain [43]. Among the 282 somatic neurons in the C. elegans connectome, there are 890 gap junctions [22]. As such, it is important to provide a mathematical model of signal flow through gap junctions, along with the noise that perturbs signals.

A. Thermal Noise

A gap junction is a hollow protein that allows electrical current to flow between cells, and thereby allows signaling in both directions. In previous modeling efforts, it has been determined that gap junctions can essentially be modeled as resistors with thermal noise that is additive white Gaussian (AWGN) [44]. Noise due to stochastic chemical effects or due to random background synaptic activity [45] need not be considered.

The electrical conductance of a C. elegans gap junction is 200 pS [22]; a resistor with conductance 200 pS corresponds to a resistance of 5000 MΩ.
In order to compute the root mean square (RMS) thermal noise voltage $v_n$, we use the Johnson-Nyquist formula [46], [47]:

$$v_n = \sqrt{4 k_B T R \, \Delta f}, \tag{12}$$

where $k_B$ is Boltzmann's constant $1.38 \times 10^{-23}$ J/K, $T$ is the absolute temperature in K, $R$ is the resistance in $\Omega$, and $\Delta f$ is the bandwidth in Hz over which the noise is measured. We assume room temperature (298 K) and, for reasons that will become evident in the sequel, we take the bandwidth to be 1700 Hz. Then

$$v_n = \sqrt{4 \cdot 1.38 \times 10^{-23} \cdot 298 \cdot 5 \times 10^{9} \cdot 1700} = 3.74 \times 10^{-4}\ \text{V}. \tag{13}$$

We consider the thermal noise RMS voltage of a C. elegans gap junction to be this 0.374 mV value.

B. Plateau Potentials

Although one might hope that the signaling scheme that can be used over a gap junction is unrestricted, there are biophysical constraints that impose limits. We describe these signaling limitations for C. elegans.

Signaling arises from regenerative events in neurons. Electrophysiologists have described four main types of regenerative events: action potentials, graded potentials, intrinsic oscillations, and plateau potentials. Plateau potentials are prolonged all-or-none depolarizations that can be triggered and terminated by brief positive- and negative-current pulses, respectively [48]. Plateau potentials are the biological equivalent of Schmitt triggers [48]. It is thought that plateau potentials may be used by many neurons in C. elegans, and that they may arise through synaptic interaction [48].

RMD neurons in C. elegans have two stable resting potentials, one near $-70$ mV and one near $-35$ mV [48], and these values are thought to hold across the nervous system. These two levels can be thought of as the two possible input levels to a gap junction channel:

$$v_0 = -70 \times 10^{-3}\ \text{V}, \tag{14}$$

$$v_1 = -35 \times 10^{-3}\ \text{V}, \tag{15}$$

as part of a random telegraph signal.
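As a numerical check, the thermal noise voltage in (13) can be recomputed from (12) and set against the plateau levels (14) and (15). The sketch below uses only constants quoted in the text; the function and variable names are illustrative choices.

```python
import math

K_B = 1.38e-23  # Boltzmann's constant in J/K, value as quoted in the text


def johnson_nyquist_rms(resistance_ohm, temperature_k, bandwidth_hz):
    """RMS thermal noise voltage v_n = sqrt(4 k_B T R df), in volts."""
    return math.sqrt(4.0 * K_B * temperature_k * resistance_ohm * bandwidth_hz)


conductance_s = 200e-12                # 200 pS gap junction conductance
resistance_ohm = 1.0 / conductance_s   # about 5e9 ohm, i.e. 5000 megaohm
v_n = johnson_nyquist_rms(resistance_ohm, temperature_k=298.0, bandwidth_hz=1700.0)

# Half the separation between the two plateau levels, in volts.
v0, v1 = -70e-3, -35e-3
half_gap = abs(v1 - v0) / 2.0

print(f"v_n = {v_n:.3e} V")                 # close to 3.74e-4 V
print(f"half-gap / v_n = {half_gap / v_n:.1f}")
```

The noise RMS is roughly fifty times smaller than half the spacing between the two input levels, so the two levels should be easy to tell apart at the receiving neuron.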
Switching between the two levels cannot happen arbitrarily quickly, as there is an absolute refractory period between regenerative events due to biochemical constraints. Unfortunately, the absolute refractory period for C. elegans is not known due to the difficulty in performing the requisite electrophysiology experiments [email communication with S. R. Lockery (14 Dec. 2010) and later confirmation]. The fastest impulse potentials observed in neurons in common reference are, perhaps, in the Renshaw cells of the mammalian spinal motor system, and have been reported as high as 1700 per second [49, p. 47]. Moving forward, we use this as a bound; however, the typical value for the absolute refractory period across the animal kingdom is 1 ms, and C. elegans may be even slower. This is also where the noise bandwidth value of 1700 Hz comes from.

C. Capacity

Having determined the noise distribution and the signaling constraints, we aim to find the capacity of this continuous-time, intertransition-time-restricted, binary-input AWGN channel. This capacity computation problem has been studied by Chayat and Shamai [50], assuming no timing jitter. Rather than using those precise results, we take a simplified approach through the discretization of time. We assume slotted pulse amplitude modulation, which is robust to any timing jitter that may be present in C. elegans signal propagation.

In particular, we consider a discrete-time channel with 1700 channel usages per second, two binary input levels of $-35$ mV and $-70$ mV, and AWGN with standard deviation 0.374 mV. As can be noted, the signal-to-noise ratio is rather high, $17.5^2 / 0.374^2 = 2.2 \times 10^{3}$, and so the capacity will be approximately one bit per channel usage. Just to be sure, we compute this more precisely.

The capacity is

$$C = h(Y) - \tfrac{1}{2} \log 2 \pi e, \tag{16}$$

where $h(Y)$ is the differential entropy of the distribution

$$p(y) = \frac{1}{2} \left[ \frac{1}{\sqrt{2\pi}} \exp\left( -\frac{(y - \sqrt{\mathrm{SNR}})^2}{2} \right) \right] + \frac{1}{2} \left[ \frac{1}{\sqrt{2\pi}} \exp\left( -\frac{(y + \sqrt{\mathrm{SNR}})^2}{2} \right) \right], \tag{17}$$

with $\mathrm{SNR} = 2.2 \times 10^{3}$; see, e.g., [51]. Performing the calculation demonstrates that the rate loss below 1 bit per channel usage due to noise is negligible. Thus we assume that the capacity of a C. elegans gap junction is 1700 bits per second, or equivalently $5.9 \times 10^{-4}$ seconds per bit.

A synaptic connection between two neurons may contain more than one gap junction.¹ Although it is difficult to maintain electrical separation between individual gap junctions, for the purposes of this paper we assume that each gap junction can act independently. Hence the channel capacity of parallel gap junction links is simply taken to be the number of gap junctions between the two neurons multiplied by the capacity of an individual gap junction.

¹ The mean number of gap junctions between two connected neurons is 1.73; see [22, Fig. 3(b)] for the distribution, which is well modeled by a power law with exponent 2.76.
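The claim that the rate loss in (16) is negligible can be checked by evaluating $h(Y)$ for the mixture density (17) numerically. The following is a minimal sketch, assuming logarithms base 2 (so that capacity is in bits) and a plain Riemann sum on a uniform grid; the grid step, the truncation at ten standard deviations beyond each mode, and the function name are choices made here, not part of the original computation.

```python
import math


def binary_awgn_capacity(snr, half_width=10.0, step=0.01):
    """Capacity in bits per channel use of a unit-variance AWGN channel
    with equiprobable inputs at +sqrt(snr) and -sqrt(snr)."""
    a = math.sqrt(snr)
    norm = 1.0 / math.sqrt(2.0 * math.pi)
    lo = -(a + half_width)
    n = int(round(2.0 * (a + half_width) / step))
    h_y = 0.0
    for i in range(n + 1):
        y = lo + i * step
        # Mixture density (17); far-away terms underflow harmlessly to 0.0.
        p = 0.5 * norm * (math.exp(-0.5 * (y - a) ** 2) + math.exp(-0.5 * (y + a) ** 2))
        if p > 0.0:  # treat 0 * log 0 as 0
            h_y -= p * math.log2(p) * step
    # Equation (16): subtract the differential entropy of the unit-variance noise.
    return h_y - 0.5 * math.log2(2.0 * math.pi * math.e)


snr = 17.5 ** 2 / 0.374 ** 2          # about 2.2e3, as in the text
c_per_use = binary_awgn_capacity(snr)
rate = 1700.0 * c_per_use             # bits per second at 1700 channel uses per second
print(f"SNR = {snr:.0f}, C = {c_per_use:.6f} bit/use, rate = {rate:.1f} bit/s")
print(f"seconds per bit = {1.0 / rate:.2e}")
```

At this signal-to-noise ratio the two Gaussian components do not overlap to any numerically visible degree, which is why the result is indistinguishable from one bit per channel usage.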
