Learning a Representation for Cover Song Identification Using Convolutional Neural Network

Cover song identification represents a challenging task in the field of Music Information Retrieval (MIR) due to complex musical variations between query tracks and cover versions. Previous works typically utilize hand-crafted features and alignment …

Authors: Zhesong Yu, Xiaoshuo Xu, Xiaoou Chen

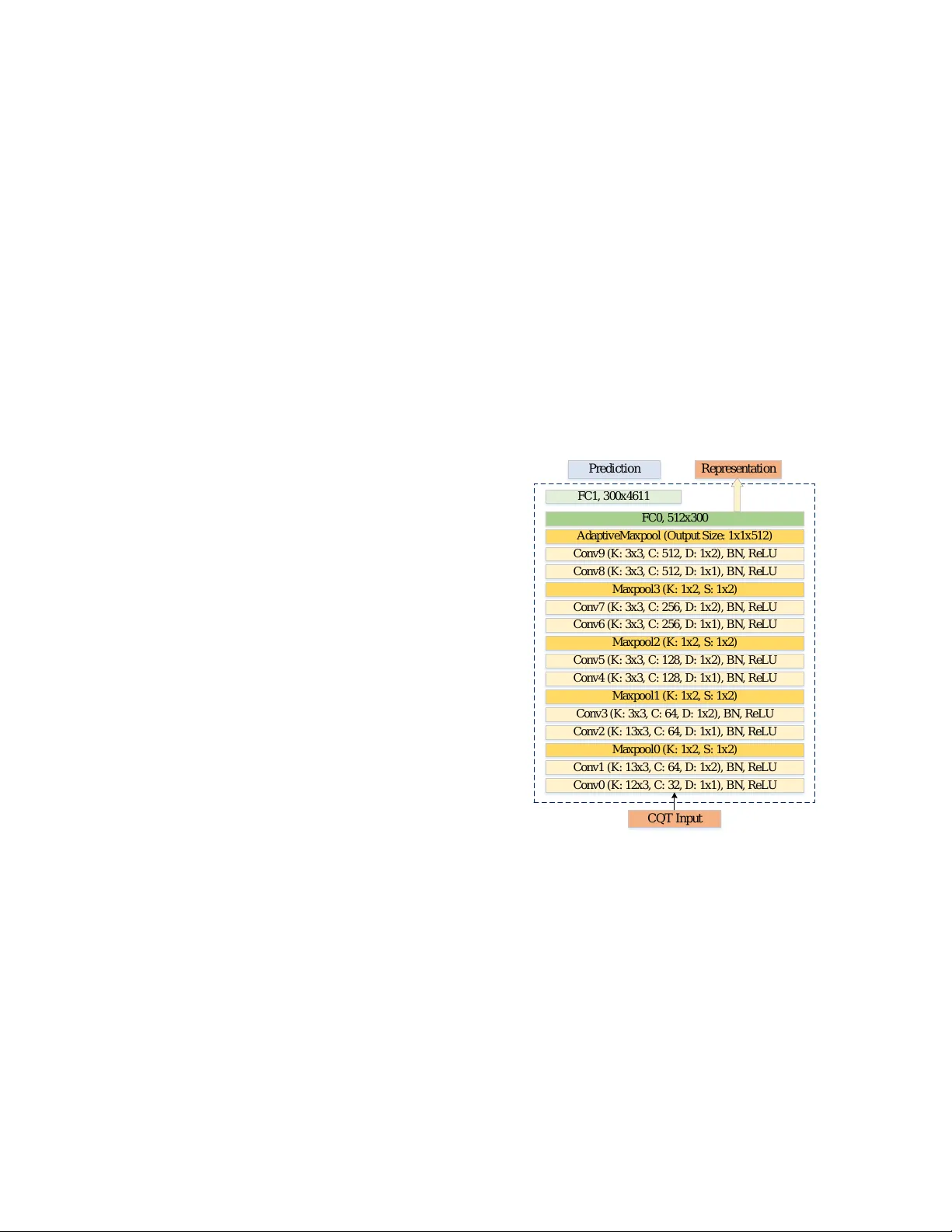

Zhesong Yu, Xiaoshuo Xu, Xiaoou Chen, Deshun Yang
Wangxuan Institute of Computer Technology, Peking University

ABSTRACT

Cover song identification represents a challenging task in the field of Music Information Retrieval (MIR) due to complex musical variations between query tracks and cover versions. Previous works typically utilize hand-crafted features and alignment algorithms for the task. More recently, further breakthroughs have been achieved with neural network approaches. In this paper, we propose a novel Convolutional Neural Network (CNN) architecture based on the characteristics of the cover song task. We first train the network through classification strategies; the network is then used to extract music representations for cover song identification. A scheme is designed to train models that are robust against tempo changes. Experimental results show that our approach outperforms state-of-the-art methods on all public datasets, improving the performance especially on the large dataset.

Index Terms: Music Information Retrieval, Cover Song Identification

1. INTRODUCTION

Cover song identification has long been a popular task in the music information retrieval community, with potential applications in areas such as music license management, music retrieval, and music recommendation. Cover song identification can also be seen as measuring the similarity between music melodies. Given that cover songs may differ from the original song in key transposition, speed change and structural variations, identifying cover songs is a rather challenging task. Over the past ten years, researchers initially attempted to address the problem with Dynamic Programming (DP) approaches.
Typically, chroma sequences representing the intensity of twelve pitch classes are used to describe recordings, and then a DP method is utilized to find an optimal alignment between two given recordings, resolving the discrepancy caused by tempo changes and structural variations [1, 2, 3]. Such approaches work well when facing structural variations and tempo changes in the music; however, the element-to-element distance computation has quadratic time complexity, which makes them unsuitable for large-scale datasets.

[Fig. 1. Training procedure and retrieval procedure. Training: training audio $\{x_n\}$ → CQTs $\{X_n\}$ → network → predictions $\{y_n\}$. Retrieval: query audio $q$ → CQT $Q$ → network → representation $f_\theta(Q)$; reference audio $\{r_n\}$ → CQTs $\{R_n\}$ → network → representations $\{f_\theta(R_n)\}$; distance measurement between the representations → ranking list.]

Alternatively, some researchers attempted to identify cover songs by modeling the music. For instance, Serrà et al. studied time series modeling for cover song identification [3]. [4, 5] represent the music with fixed-dimensional vectors, which enables a direct measure of music similarity. These approaches greatly improve efficiency compared to alignment methods, but the loss of the temporal information of the music may yield lower precision.

Moreover, deep learning approaches have been introduced to cover song identification. For instance, CNNs are utilized to measure the similarity matrix [6] or to learn features [7, 8, 9, 10]. While these methods have achieved promising results, there is still room for improvement. In this paper, a specially designed CNN architecture is proposed to overcome the challenges of key transposition, speed change and structural variations in cover song identification.
Notably, the specialized kernel size is used here for the first time in the field of music information retrieval. Dilated convolution and a data augmentation method are also introduced. Our approach outperforms state-of-the-art methods on all public datasets, with better accuracy and lower time complexity. Furthermore, to the best of our knowledge, our method is currently the best method for identifying cover songs in huge real-life corpora.

2. APPROACH

2.1. Problem Formulation

As shown in Figure 1, we have a training dataset $D = \{(x_n, t_n)\}$, where $x_n$ is a recording and $t_n$ is a one-hot vector denoting to which song (or class) the recording belongs. Different versions of the same song are viewed as samples from the same class, and different songs are regarded as different classes. We aim to train a classification network parameterized by $\{\theta, \lambda\}$ from $D$. As shown in Figure 2, $\theta$ denotes the parameters of all convolutions and FC0; $\lambda$ denotes the parameters of FC1; $f_\theta$ is the output of the FC0 layer. This model can then be used for cover song retrieval. More specifically, after training, given a query $Q$ and references $R_n$ in the dataset, we extract the latent features $f_\theta(Q)$ and $f_\theta(R_n)$, which we call music representations, and then use a metric $s$ to measure the similarity between them. In the following sections, we discuss the low-level representation used, the design of the network structure, and how to train a model that is robust against key transposition and tempo change. We use lowercase for audio and uppercase for the CQT to distinguish them.

2.2. Low-level Representation

The constant-Q transform (CQT), which maps frequency energy onto musical notes, is extracted with Librosa [11] for our experiment. The audio is resampled to 22050 Hz, the number of bins per octave is set to 12, and a Hann window is used for extraction with a hop size of 512.
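The frame-rate arithmetic behind this subsection's settings can be sketched in plain Python. This is only an illustrative sketch, not the authors' code: the function names are hypothetical, the CQT itself is not computed (the paper uses Librosa for that), and dropping an incomplete trailing group during down-sampling is an assumption, since the paper does not say how the remainder is handled.

```python
from typing import List

SAMPLE_RATE = 22050  # Hz, resampling rate stated in the text
HOP_LENGTH = 512     # samples between consecutive CQT frames, stated in the text


def frame_rate(sample_rate: int = SAMPLE_RATE, hop_length: int = HOP_LENGTH) -> float:
    """Number of CQT frames per second before any down-sampling."""
    return sample_rate / hop_length


def mean_downsample(frames: List[List[float]], factor: int) -> List[List[float]]:
    """Average consecutive groups of `factor` frames (frames are time-major).

    Assumption: a trailing group with fewer than `factor` frames is dropped.
    """
    out = []
    for start in range(0, len(frames) - factor + 1, factor):
        group = frames[start:start + factor]
        n_bins = len(group[0])
        out.append([sum(f[b] for f in group) / factor for b in range(n_bins)])
    return out
```

With these settings `frame_rate()` is about 43.07 frames per second, so averaging every 20 frames, as this subsection describes, gives about 2.15 frames per second, in line with the stated feature rate of about 2 Hz.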
Finally, a 20-point mean down-sampling in the time direction is applied to the CQT, and the resulting feature is a sequence with a feature rate of about 2 Hz. It can also be viewed as an $84 \times T$ matrix, where $T$ depends on the duration of the input audio.

2.3. Network Structure

Inspired by successful network architectures such as [12, 13], we design a novel network architecture for the cover song task. We stack small filters followed by max-pooling operations, except that in the initial layers we set the filter height to 12 or 13 (see Figure 2), because the number of bins per octave is set to 12 in the CQT. With this setting, the units of the third layer have a receptive field with a height of 36; it spans three octaves, or thirty-six semitones.

We also introduce dilated convolution into the model to enlarge the receptive field, because cover song identification focuses on the long-term melody of the music. This design is consistent with the ideas of existing works [2, 4], which extract features or measure similarity over a long range.

More importantly, our model does not involve any down-sampling pooling operation in the frequency dimension; in other words, the vertical stride is always set to 1, unlike prevalent network structures such as VGG and ResNet [12, 13]. The motivation behind this design is that a key transposition may be only one or two semitones, which corresponds to shifting the CQT matrix vertically by merely one or two elements. Without down-sampling the feature map, the network retains a higher resolution and deals with key transposition better. We experimentally validate that this design helps improve the precision (see Section 4.1).

Furthermore, after several convolutional and pooling layers, we apply an adaptive pooling layer to the feature map, whose length varies depending on the input audio.
This global pooling has the advantage of converting variable-length feature maps into fixed-dimensional vectors, which are then fed into two fully-connected layers to exploit more information. Without the global pooling, the fully-connected layers would accept only fixed-dimensional inputs, which does not suit music, as different compositions may have different durations. Compared with [9], which utilized Temporal Pyramid Pooling (TPP), we utilize global pooling because it performs the same as TPP in our model. One explanation is that our convolutional network is much deeper.

Given the network input $X$, the output of FC0 is $f_\theta(X) \in \mathbb{R}^{300}$, and the prediction of the network is $y = \mathrm{softmax}(\lambda f_\theta(X))$. The cross-entropy loss $L$ is used for training. In our case, different versions of a song are considered the same category, and different songs are viewed as different classes.

| Layer (in order, from CQT input to prediction) | Settings |
|---|---|
| Conv0, BN, ReLU | K: 12×3, C: 32, D: 1×1 |
| Conv1, BN, ReLU | K: 13×3, C: 64, D: 1×2 |
| Maxpool0 | K: 1×2, S: 1×2 |
| Conv2, BN, ReLU | K: 13×3, C: 64, D: 1×1 |
| Conv3, BN, ReLU | K: 3×3, C: 64, D: 1×2 |
| Maxpool1 | K: 1×2, S: 1×2 |
| Conv4, BN, ReLU | K: 3×3, C: 128, D: 1×1 |
| Conv5, BN, ReLU | K: 3×3, C: 128, D: 1×2 |
| Maxpool2 | K: 1×2, S: 1×2 |
| Conv6, BN, ReLU | K: 3×3, C: 256, D: 1×1 |
| Conv7, BN, ReLU | K: 3×3, C: 256, D: 1×2 |
| Maxpool3 | K: 1×2, S: 1×2 |
| Conv8, BN, ReLU | K: 3×3, C: 512, D: 1×1 |
| Conv9, BN, ReLU | K: 3×3, C: 512, D: 1×2 |
| AdaptiveMaxpool | Output size: 1×1×512 |
| FC0 (output: representation) | 512×300 |
| FC1 (output: prediction) | 300×4611 |

Fig. 2. Network structure. K: kernel size, C: channel number, D: dilation and S: stride.
The stride is set to $1 \times 1$ for the convolutional layers, and the pooling layers have a dilation of $1 \times 1$. The output dimension is 4611, the number of classes in the training set.

2.4. Training Scheme

For each batch, we sample some recordings from the training set and extract CQTs from them. For each CQT, we randomly crop three subsequences with lengths of 200, 300 and 400 for training, corresponding to roughly 100 s, 150 s and 200 s, respectively.

    Algorithm 1 Data augmentation and training strategy
    Input: Training set D, batch size n, changing range (a, b)
    Output: Optimized parameters θ, λ
     1: repeat
     2:   for L ∈ {200, 300, 400} do
     3:     sample a batch of recordings B from the training set D
     4:     S ← ∅
     5:     for x ∈ B do
     6:       r ← sample from U(a, b)
     7:       x ← simulate tempo changes on x with a changing factor r
     8:       X ← extract the CQT from x
     9:       X ← crop a subsequence from X with a length L
    10:       S ← S ∪ X
    11:     end for
    12:     Feed-forward with S
    13:     Backpropagation to update θ and λ
    14:   end for
    15: until Network converges

Although the training set contains covers performed at different speeds, each song has only several covers on average for training. Moreover, as our model does not explicitly handle tempo changes in cover songs, it may be difficult for the model to learn a representation robust against tempo changes automatically. Therefore, we perform data augmentation during model training. As shown in Algorithm 1, we sample a changing factor from $(0.7, 1.3)$ for each recording in the batch following a uniform distribution and simulate tempo changes on the recording using Librosa [11] before cropping subsequences.

2.5. Retrieval

After training, the network is used to extract music representations.
As shown in Figure 1, given a query $q$ and a reference $r$, we first extract their CQT descriptors $Q$ and $R$, respectively, which are fed into the network to obtain the music representations $f_\theta(Q)$ and $f_\theta(R)$; then the similarity $s$, defined as their cosine similarity, is measured. After computing the pair-wise similarity between the query and the references in the dataset, a ranking list is returned for evaluation.

3. EXPERIMENTAL SETTINGS

3.1. Dataset

Second Hand Songs 100K (SHS100K), collected from the Second Hand Songs website by [8], consists of 8858 songs with various covers and 108523 recordings. This dataset is split into three subsets, SHS100K-TRAIN, SHS100K-VAL and SHS100K-TEST, with a ratio of 8 : 1 : 1.

Youtube is collected from the YouTube website and contains 50 compositions of multiple genres [14]. Each song in Youtube has 7 versions, with 2 original versions and 5 different versions, resulting in 350 recordings in total. In our experiment, we use the 100 original versions as references and the others as queries, following [15, 9, 8].

Covers80 is a widely used benchmark dataset in the literature. It has 80 songs, with 2 covers for each song, and 160 recordings in total. To compare with existing methods, we compute the similarity of any pair of recordings.

Mazurkas is a classical music collection consisting of 2914 recordings of 49 of Chopin's Mazurkas, originating from the Mazurka Project (see footnote 1). The number of covers for each piece varies between 41 and 95. For this dataset, we follow the experimental setting of [15].

3.2. Evaluation

For evaluation, we calculate the common evaluation metrics: mean average precision (MAP), precision at 10 (P@10) and the mean rank of the first correctly identified cover (MR1). These metrics are the ones used in the MIREX Audio Cover Song Identification contest (see footnote 2). Additionally, query time is recorded for efficiency evaluation.
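The retrieval step and the three metrics described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' code: the function names are hypothetical, representations are plain lists of floats rather than network outputs, and the metric definitions are the common textbook ones, which may differ in detail from the MIREX implementation.

```python
import math
from typing import Dict, List, Optional, Sequence, Set


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two representation vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0 else 0.0


def rank_references(query: Sequence[float],
                    references: Dict[str, Sequence[float]]) -> List[str]:
    """Reference ids sorted from most to least similar to the query."""
    return sorted(references,
                  key=lambda rid: cosine_similarity(query, references[rid]),
                  reverse=True)


def average_precision(ranking: List[str], relevant: Set[str]) -> float:
    """Mean of the precision values at the rank of each true cover."""
    hits, total = 0, 0.0
    for rank, rid in enumerate(ranking, start=1):
        if rid in relevant:
            hits += 1
            total += hits / rank
    return total / len(relevant) if relevant else 0.0


def precision_at_k(ranking: List[str], relevant: Set[str], k: int = 10) -> float:
    """Fraction of the top-k candidates that are true covers."""
    return sum(1 for rid in ranking[:k] if rid in relevant) / k


def rank_of_first_hit(ranking: List[str], relevant: Set[str]) -> Optional[int]:
    """1-based rank of the first true cover, or None if there is none."""
    for rank, rid in enumerate(ranking, start=1):
        if rid in relevant:
            return rank
    return None
```

Under these definitions, MAP is the mean of `average_precision` over all queries and MR1 is the mean of `rank_of_first_hit` over all queries.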
All experiments are run on a Linux server with two TITAN X (Pascal) GPUs.

4. EXPERIMENTAL RESULTS AND ANALYSIS

4.1. Exploration of Network Structure

First, we explore the kernel size of the CNN by replacing the kernel size of the initial three layers with $3 \times 3$, $7 \times 3$, $15 \times 3$, $7 \times 7$ and $12 \times 12$. The results show that a filter height of 12 or 13 performs best.

Additionally, we change the vertical strides of the max-pooling layers and conduct several experiments to explore their influence on accuracy. The original model is denoted as CQT-Net, and the modified networks are denoted as CQT-Net{4}, CQT-Net{3, 4} and CQT-Net{2, 3, 4}, respectively, where the numbers indicate which pooling layers have their shape changed to $(2, 2)$. For instance, CQT-Net{2} means that Pool 2 is replaced with a pooling operation with a stride and size of $(2, 2)$. That is, the total vertical strides for CQT-Net{4}, CQT-Net{3, 4} and CQT-Net{2, 3, 4} are 2, 4 and 8, respectively. When the vertical stride increases, MAP degrades consistently on the four datasets, as do MR1 and P@10. We suppose this is because a key transposition may shift the music by only one or two semitones; a network with a higher resolution along the feature dimension (that is, with the vertical stride set to 1) can capture these changes, which helps improve the precision.
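The two quantities discussed in this subsection, the receptive-field height of the first layers and the total vertical stride of the modified networks, can be checked with a few lines of Python. This is a sketch under assumptions: it uses the standard receptive-field recurrence for stacked layers, reads the sizes in Figure 2 as height × width (frequency × time), and the names `FIRST_LAYERS`, `receptive_field_heights` and `total_vertical_stride` are hypothetical.

```python
from typing import Iterable, List, Tuple

# (name, kernel height, vertical dilation, vertical stride) of the first
# layers, read from Figure 2 with sizes interpreted as height x width
# (an assumption about the ordering used in the figure).
FIRST_LAYERS: List[Tuple[str, int, int, int]] = [
    ("Conv0", 12, 1, 1),
    ("Conv1", 13, 1, 1),
    ("Maxpool0", 1, 1, 1),
    ("Conv2", 13, 1, 1),
]


def receptive_field_heights(layers: Iterable[Tuple[str, int, int, int]]) -> List[Tuple[str, int]]:
    """Receptive-field height (in CQT bins) after each layer."""
    rf, jump = 1, 1  # jump = distance in input bins between adjacent units
    heights = []
    for name, kernel, dilation, stride in layers:
        rf += (kernel - 1) * dilation * jump
        jump *= stride
        heights.append((name, rf))
    return heights


def total_vertical_stride(pool_strides: Iterable[int]) -> int:
    """Product of the vertical strides of all pooling layers."""
    total = 1
    for stride in pool_strides:
        total *= stride
    return total
```

Calling `receptive_field_heights(FIRST_LAYERS)` gives heights 12, 24, 24 and 36, matching the three-octave receptive field stated in Section 2.3, and changing one, two or three of the four pooling layers to a vertical stride of 2 gives total strides of 2, 4 and 8.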
Footnote 1: www.mazurka.org.uk
Footnote 2: https://www.music-ir.org/mirex/wiki/2019:Audio_Cover_Song_Identification

Results on Youtube

| Method | MAP | P@10 | MR1 | Time |
|---|---|---|---|---|
| DPLA [2] | 0.525 | 0.132 | 9.43 | 2420 s |
| SiMPle [15] | 0.591 | 0.140 | 7.91 | 18.7 s |
| Fingerprinting [16] | 0.648 | 0.145 | 8.27 | - |
| SuCo-DTW [17] | 0.800 | 0.180 | 3.42 | 4.59 s |
| Ki-CNN [8] | 0.656 | 0.155 | 6.26 | 0.35 ms |
| TPPNet [9] | 0.859 | 0.188 | 2.85 | 0.04 ms |
| CQT-Net | 0.917 | 0.192 | 2.50 | 0.04 ms |

Results on Covers80

| Method | MAP | P@10 | MR1 | Time |
|---|---|---|---|---|
| NCP-WIDI [18] | 0.645 | - | - | - |
| CRP [3] | 0.544 | 0.061 | - | - |
| Fusing [19] | 0.625 | 0.071 | - | - |
| Ki-CNN [8] | 0.506 | 0.068 | 16.4 | 0.55 ms |
| TPPNet [9] | 0.744 | 0.086 | 6.88 | 0.06 ms |
| CQT-Net | 0.840 | 0.091 | 3.85 | 0.06 ms |

Results on Mazurkas

| Method | MAP | P@10 | MR1 | Time |
|---|---|---|---|---|
| DTW [15] | 0.882 | 0.949 | 4.05 | - |
| NCD [20] | 0.767 | - | - | - |
| Compression [21] | 0.795 | - | - | - |
| Fingerprinting [22] | 0.819 | - | - | - |
| SiMPle [15] | 0.880 | 0.952 | 2.33 | - |
| SuCo-repeat [17] | 0.850 | 0.940 | 2.77 | - |
| 2DFM [4] | 0.363 | 0.578 | 15.6 | 4.76 ms |
| Ki-CNN [8] | 0.707 | 0.892 | 4.01 | 5.34 ms |
| CQT-Net | 0.933 | 0.956 | 2.87 | 0.50 ms |

Results on SHS100K-TEST

| Method | MAP | P@10 | MR1 | Time |
|---|---|---|---|---|
| 2DFM [4] | 0.104 | 0.113 | 415 | 13.9 ms |
| Ki-CNN [8] | 0.219 | 0.204 | 174 | 21.0 ms |
| TPPNet [9] | 0.465 | 0.357 | 72.2 | 3.68 ms |
| CQT-Net | 0.655 | 0.456 | 54.9 | 3.68 ms |

Table 1. Performance on different datasets (- indicates the results are not shown in original works).

4.2. Comparison

We compare with other state-of-the-art methods on different datasets. Table 1 shows that our approach outperforms state-of-the-art methods on all datasets, achieving the highest MAP on each of them. The advantage of our approach is that, without relying on complicated hand-crafted features or elaborately designed alignment algorithms, it exploits massive data and feature learning to obtain high precision. By collecting a larger dataset, our approach may obtain higher precision. It is worth noting that our training set SHS100K-TRAIN mainly consists of pop music, while Mazurkas contains classical music. Our approach outperforms state-of-the-art methods on this dataset, which indicates good generalization ability. We do not show the result of [22] in Mazurka Project because our test sets are different.
As for the large dataset SHS100K-TEST, our method performs much better than state-of-the-art methods.

Moreover, the query time shown in the table does not include the time of feature extraction. Therefore, our method consumes the same time as [9]. It extracts a fixed-dimensional feature whatever the duration of the input audio is. Theoretically, it has linear time complexity, which is faster than sequence alignment methods with quadratic time complexity. The query time of our approach is shorter than that of approaches such as DPLA and SiMPle by several orders of magnitude. Ki-CNN and TPPNet also model music with a fixed-dimensional vector and have similar time complexity. In our implementation, our approach learns a 300-dimensional representation, the same as TPPNet, which explains why the time consumption of our approach is the same as that of TPPNet.

4.3. Result Demonstration and Error Analysis

We listen to the Top10 retrieval results on SHS100K-TEST and attempt some analysis. Our approach can identify versions performed by singers of different genders, accompanied by different instruments, sung in different languages, etc. In particular, as our training goal is to classify songs, and different versions of the same song often have similar styles and similar melodic and chord structures, we find that even though some candidates in the Top10 may not be covers of the query, they share properties such as accompaniment and genre with the query. For instance, Everybody Knows This Is Nowhere by the Bluebeaters has an accompaniment similar to that of Waiting in Vain by Bob Marley & The Wailers. In this sense, our approach may also be used to retrieve music similar to the query and be extended to content-based music recommendation.

Furthermore, we find that the Top1 precision of our model is 0.81, suggesting that it can find a cover as the Top1 candidate for 81% of queries. However, it works worse in some cases.
This may explain why our approach obtains a MAP of 0.655 but an MR1 of only 54.9 on this dataset. Most importantly, the high Top1 precision and the fast retrieval speed make it possible for our method to handle the real-life cover song task rather than remaining at the lab stage.

5. CONCLUSION

Different from conventional techniques, we propose CNNs for feature learning for cover song identification. Utilizing specific kernels and dilated convolutions to extend the receptive field, we show that the network can capture melodic structures underlying the music and learn key-invariant representations. By casting the problem as a classification task, we train a model that is used for music version identification. Additionally, we design a training strategy to enhance the model's robustness against tempo changes and to deal with inputs of different lengths. Combining these techniques, our approach outperforms state-of-the-art methods on all public datasets with low time complexity. Furthermore, we show that this model can retrieve various music versions and discover similar music. Finally, we believe our method is capable of solving the real-life cover song problem.

6. REFERENCES

[1] Daniel PW Ellis and Graham E Poliner, “Identifying cover songs with chroma features and dynamic programming beat tracking,” in IEEE International Conference on Acoustics, Speech and Signal Processing, 2007.

[2] Joan Serrà, Emilia Gómez, Perfecto Herrera, and Xavier Serrà, “Chroma binary similarity and local alignment applied to cover song identification,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 16, no. 6, pp. 1138–1151, 2008.

[3] Joan Serrà, Xavier Serrà, and Ralph G Andrzejak, “Cross recurrence quantification for cover song identification,” New Journal of Physics, vol. 11, no. 9, pp. 093017, 2009.
[4] Thierry Bertin-Mahieux and Daniel PW Ellis, “Large-scale cover song recognition using the 2d fourier transform magnitude,” in International Society for Music Information Retrieval Conference, 2012.

[5] Julien Osmalsky, Marc Van Droogenbroeck, and Jean-Jacques Embrechts, “Enhancing cover song identification with hierarchical rank aggregation,” in International Society for Music Information Retrieval Conference, 2016, pp. 136–142.

[6] Sungkyun Chang, Juheon Lee, Sang Keun Choe, and Kyogu Lee, “Audio cover song identification using convolutional neural network,” in Workshop Machine Learning for Audio Signal Processing at NIPS, 2017.

[7] Xiaoyu Qi, Deshun Yang, and Xiaoou Chen, “Audio feature learning with triplet-based embedding network,” in AAAI, 2017, pp. 4979–4980.

[8] Xiaoshuo Xu, Xiaoou Chen, and Deshun Yang, “Key-invariant convolutional neural network toward efficient cover song identification,” in 2018 IEEE International Conference on Multimedia and Expo. IEEE, 2018, pp. 1–6.

[9] Zhesong Yu, Xiaoshuo Xu, Xiaoou Chen, and Deshun Yang, “Temporal pyramid pooling convolutional neural network for cover song identification,” in Proceedings of the 28th International Joint Conference on Artificial Intelligence, 2019, pp. 4846–4852.

[10] Guillaume Doras and Geoffroy Peeters, “Cover detection using dominant melody embeddings,” CoRR, vol. abs/1907.01824, 2019.

[11] Brian McFee, Colin Raffel, Dawen Liang, Daniel PW Ellis, Matt McVicar, Eric Battenberg, and Oriol Nieto, “librosa: Audio and music signal analysis in python,” in Proceedings of the 14th Python in Science Conference, 2015, pp. 18–25.

[12] Karen Simonyan and Andrew Zisserman, “Very deep convolutional networks for large-scale image recognition,” in International Conference on Learning Representations, 2015.
[13] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun, “Deep residual learning for image recognition,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp. 770–778.

[14] Diego Furtado Silva, Vinícius MA de Souza, and Gustavo EAPA Batista, “Music shapelets for fast cover song recognition,” in International Society for Music Information Retrieval Conference, 2015, pp. 441–447.

[15] Diego F Silva, Chin-Chin M Yeh, Gustavo Enrique de Almeida Prado Alves Batista, Eamonn Keogh, et al., “Simple: assessing music similarity using subsequences joins,” in International Society for Music Information Retrieval Conference, 2016.

[16] Prem Seetharaman and Zafar Rafii, “Cover song identification with 2d fourier transform sequences,” in IEEE International Conference on Acoustics, Speech and Signal Processing, 2017, pp. 616–620.

[17] Diego Furtado Silva, Felipe Vieira Falcao, and Nazareno Andrade, “Summarizing and comparing music data and its application on cover song identification,” in International Society for Music Information Retrieval Conference, 2018.

[18] Yao Cheng, Xiaoou Chen, Deshun Yang, and Xiaoshuo Xu, “Effective music feature ncp: Enhancing cover song recognition with music transcription,” in Proceedings of the 40th International ACM SIGIR Conference on Research and Development in Information Retrieval. ACM, 2017, pp. 925–928.

[19] Ning Chen, Wei Li, and Haidong Xiao, “Fusing similarity functions for cover song identification,” Multimedia Tools and Applications, vol. 77, no. 2, pp. 2629–2652, 2018.

[20] Juan P Bello, “Measuring structural similarity in music,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 19, no. 7, pp. 2013–2025, 2011.
[21] Diego Silva, Hélene Papadopoulos, Gustavo EAPA Batista, and Daniel PW Ellis, “A video compression-based approach to measure music structural similarity,” in 14th International Society for Music Information Retrieval Conference, 2013, pp. 95–100.

[22] Peter Grosche, Joan Serrà, Meinard Müller, and Josep Lluis Arcos, “Structure-based audio fingerprinting for music retrieval,” in International Society for Music Information Retrieval Conference, 2012, pp. 55–60.
