A Dynamically Controlled Recurrent Neural Network for Modeling Dynamical Systems

This work proposes a novel neural network architecture, called the Dynamically Controlled Recurrent Neural Network (DCRNN), specifically designed to model dynamical systems that are governed by ordinary differential equations (ODEs). The current state vectors of these types of dynamical systems only depend on their state-space models, along with the respective inputs and initial conditions. Long Short-Term Memory (LSTM) networks, which have proven to be very effective for memory-based tasks, may fail to model physical processes as they tend to memorize, rather than learn how to capture the information on the underlying dynamics. The proposed DCRNN includes learnable skip-connections across previously hidden states, and introduces a regularization term in the loss function by relying on Lyapunov stability theory. The regularizer enables the placement of eigenvalues of the transfer function induced by the DCRNN to desired values, thereby acting as an internal controller for the hidden state trajectory. The results show that, for forecasting a chaotic dynamical system, the DCRNN outperforms the LSTM in $100$ out of $100$ randomized experiments by reducing the mean squared error of the LSTM’s forecasting by $80.0% \pm 3.0%$.

💡 Research Summary

The paper introduces a novel recurrent neural network architecture called the Dynamically Controlled Recurrent Neural Network (DCRNN) specifically designed for modeling dynamical systems that are governed by ordinary differential equations (ODEs). Traditional recurrent models such as Long Short‑Term Memory (LSTM) networks excel at tasks that require long‑term memory, but they often fail to capture the underlying physics of systems whose evolution depends only on the current state, input, and initial conditions. LSTMs tend to “memorize” rather than learn the governing dynamics, which can lead to poor generalization for physical processes.

DCRNN addresses this limitation by incorporating two key innovations. First, it adds learnable skip‑connections that link the hidden state at time t to a set of previous hidden states hₜ₋ᵢ for i = 1,…,k. Each skip‑connection is weighted by a diagonal matrix αᵢ whose entries are trainable parameters. The update rule can be written as

hₜ = ∑_{i=1}^{k} αᵢ hₜ₋ᵢ + φ_h(hₜ₋₁, xₜ),

where φ_h is a standard activation function (tanh in the experiments). When k = 0 the model reduces to a vanilla RNN; when k = 1 and α₁ = 1 it reproduces the constant‑error‑carousel structure of the original LSTM; and when α₁ is set to the forget‑gate value it recovers the standard LSTM formulation. By learning the α‑coefficients, the network can adaptively combine information from multiple past time steps, effectively representing higher‑order differential equations.

Second, the authors treat the hidden states as the state variables of a dynamical system and apply Lyapunov linearization to obtain a linearized state‑space model A around equilibrium points. The eigenvalues λ(A) determine the stability of the hidden‑state dynamics: if all eigenvalues lie strictly inside the unit circle (|λ| < 1), the hidden trajectories are guaranteed to converge. To enforce this property during training, a regularization term is added to the loss function that penalizes the Euclidean distance between the current eigenvalues of A (which depend on the learnable α‑coefficients) and a set of desired eigenvalues λ* chosen a priori (typically close to the origin for fast convergence). The total loss becomes

C_DCRNN = C_NN + β · C_λ,

where C_NN is the standard prediction loss (e.g., mean‑squared error) and C_λ = ∑_{i}‖λ*_i − λ_i(α)‖². In the experiments β = 1, and a small learning rate is used so that the eigenvalues evolve smoothly across iterations.

The inclusion of skip‑connections complicates back‑propagation through time (BPTT) because gradients must be propagated through multiple past states. The authors acknowledge the resulting combinatorial expansion and provide an alternative derivation in the supplementary material to keep the computational cost manageable.

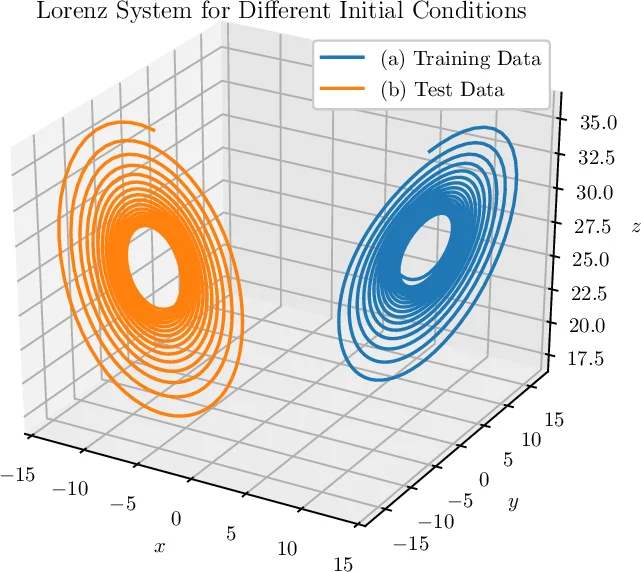

Experimental validation focuses on forecasting the chaotic Lorenz system. The authors conduct 100 randomized trials with different initial conditions and random seeds. In every trial DCRNN outperforms a baseline LSTM, achieving an average reduction in mean‑squared error of 80 % ± 3 % relative to the LSTM. This substantial improvement is attributed to the eigenvalue regularizer, which stabilizes hidden‑state trajectories and prevents the saturation or divergence that often hampers LSTM training on physical dynamics.

The paper situates DCRNN within related work on neural ODEs, adaptive skip intervals, batch normalization for RNNs, dilated RNNs, and hierarchical RNNs. While prior approaches either add memory cells, use fixed skip patterns, or apply external ODE solvers, DCRNN uniquely embeds a control‑theoretic mechanism directly into the network’s architecture and loss.

Limitations discussed include the O(m²) computational cost of eigenvalue computation (where m = n·k), the increased BPTT complexity for large k, and sensitivity to the choice of target eigenvalues. The authors suggest future directions such as efficient eigenvalue approximations, adaptive selection of k, and integration with more advanced nonlinear control techniques.

In conclusion, DCRNN demonstrates that incorporating control‑theoretic stability constraints into recurrent networks can dramatically improve the modeling of ODE‑driven dynamical systems. By learning skip‑connections and explicitly regularizing the hidden‑state eigenstructure, the architecture offers both interpretability and numerical stability, opening a promising pathway for physics‑informed deep learning in scientific and engineering time‑series prediction.

Comments & Academic Discussion

Loading comments...

Leave a Comment