Interactive Image Restoration

Machine learning and many of its applications are considered hard to approach due to their complexity and lack of transparency. One mission of human-centric machine learning is to improve algorithm transparency and user satisfaction while ensuring an…

Authors: Zhiwei Han, Thomas Weber, Stefan Matthes

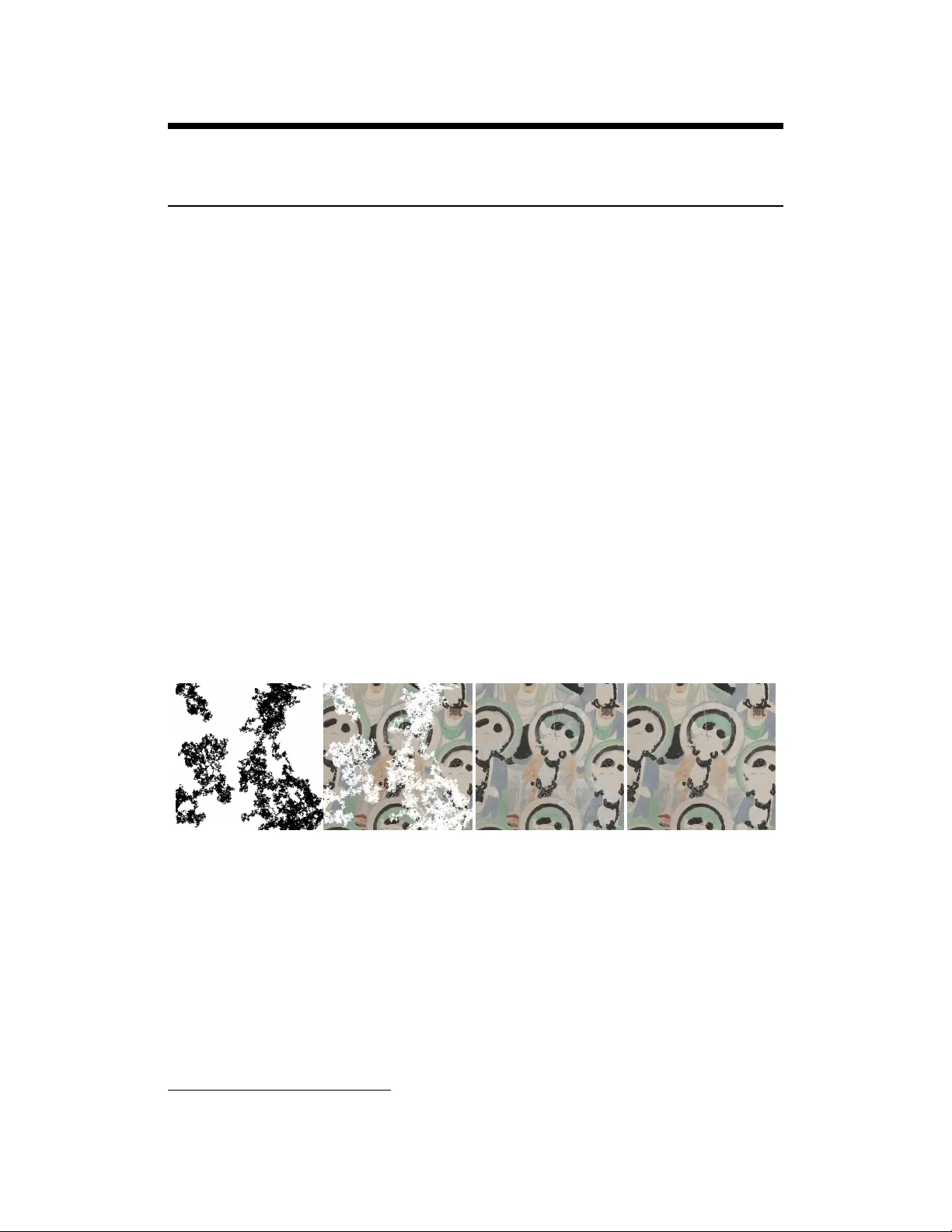

Zhiwei Han, Thomas Weber, Stefan Matthes∗, fortiss, Guerickestraße 25, 80805 München, {han, weber, matthes}@fortiss.org

Yuanting Liu, Hao Shen, fortiss, Guerickestraße 25, 80805 München, {liu, shen}@fortiss.org

Abstract

Machine learning and many of its applications are considered hard to approach due to their complexity and lack of transparency. One mission of human-centric machine learning is to improve algorithm transparency and user satisfaction while ensuring an acceptable task accuracy. In this work, we present an interactive image restoration framework, which exploits both image prior and human painting knowledge in an iterative manner such that they can boost each other. Additionally, in this system users repeatedly get feedback on their interactions from the restoration progress. This informs the users about their impact on the restoration results, which leads to a better sense of control, which in turn can lead to greater trust and approachability. The positive results of both objective and subjective evaluation indicate that our interactive approach positively contributes to the approachability of restoration algorithms in terms of algorithm performance and user experience.

1 Introduction

Figure 1: Left to right: an artificially generated damage mask, the image damaged within the masked area, the image restored by Deep Image Prior Ulyanov et al. [2018], and the image restored using our interactive approach.

Image inpainting is a process for restoring damaged or missing sections of images such that the results are visually plausible. Naturally, the performance of restoration algorithms degrades when the corrupted sections become dense or large, since more semantic information is missing. Due to this lack of semantic information, restored images can contain artifacts such as areas with inconsistent texture or monotone color, as shown in the third image of Fig. 1.
Despite this, given those pre-restored images, human beings can easily deduce the semantics in the corrupted regions. Therefore, this awareness can be used to accomplish restoration tasks. Based on this intuition, we extend Deep Image Prior (DIP) Ulyanov et al. [2018] with Human-Computer Interaction (HCI) and present Interactive Deep Image Prior (iDIP), a collaborative, interactive image restoration framework (Sect. 3). This framework enables humans and algorithms to collaboratively restore images in an iterative manner. With the proposed framework, even people with little painting knowledge can generate plausible images and manage restoration tasks. Furthermore, frequent feedback promises a higher sense of control and better user satisfaction than non-interactive methods.

We then evaluate the iDIP-based image restoration system with respect to two research questions:

1. Does the interactive approach produce higher quality images?
2. How do users view such a system regarding user experience and satisfaction?

We answer the first question in Sect. 4 in terms of objective and subjective measurement. To judge user experience and satisfaction, we conducted a user study as described in Sect. 5.

∗ These authors contributed equally to this research. 33rd Conference on Neural Information Processing Systems (NeurIPS 2019), Vancouver, Canada.

2 Related Work

Previous research attempted to fully automate the image restoration process. As one of the state-of-the-art approaches, DIP restores images by exploiting an image prior modelled by a Convolutional Neural Network (CNN) Krizhevsky et al. [2012]. DIP minimizes the following loss function for image inpainting:

$$L = \min_{\theta} \left\| \left( f_{\theta}(z) - x_0 \right) \odot m_0 \right\|^2, \tag{1}$$

where $f_\theta$ is a CNN parameterized with $\theta$, $z$ is a fixed input, $x_0$ is a corrupted image, $\odot$ is the Hadamard product and $m_0$ is the mask for the damaged area. DIP overcomes the drawbacks of exemplar-based methods Barnes et al. [2009], Hays and Efros [2007], Kwatra et al. [2005], He and Sun [2012] and learning-based methods Yu et al. [2018], Iizuka et al. [2017], Yeh et al. [2017], Yan et al. [2018], such as difficulties in recovering sophisticated texture and the requirement of a large training set, respectively. As with classic machine learning models, training of DIP is non-interactive and performed only once. However, DIP cannot use human understanding of textural semantics and leads to poor user satisfaction due to its low transparency. In contrast, interactive Machine Learning (iML) Fails and Olsen Jr [2003] increases the sense of control by introducing human intervention into learning loops Amershi et al. [2014]. The increased sense of control can improve trust and user experience in many scenarios Amershi et al. [2014], Cohn et al. [2003], Holzinger [2016], Johnson and Johnson [2008].

3 Approach

To the best of our knowledge, there is no previous work combining DIP with iML. In this work, we extend DIP with interactivity Fails and Olsen Jr [2003] and bring humans into the training loop of iDIP. The updates of iDIP are iterative, focused and rapid. These properties make the restoration process more transparent and contribute to a satisfying user experience (Sect. 5).

iDIP restores images by iteratively exploiting image prior and human knowledge via human-in-the-loop intervention. The underlying human involvement could be either creating a new mask (correction) or painting on the corrupted regions (guidance).

Training iteration: One training iteration is visualized in Fig. 2 and consists of three stages.

1. The user is presented with the image $x_n$ restored by iDIP in the last iteration, where $n$ is the current timestamp.
2. The user paints on the image $x_n$ to obtain a refined image $x'_n$.
3. iDIP restores the image $x'_n$ by minimizing the loss function in Eq. 1 and outputs the image $x^*_n$.
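The three stages above, together with the masked loss of Eq. 1, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: `restore` and `paint` are hypothetical callables standing in for the CNN optimisation and the user's painting step, `weight` is assumed to be 1 on pixels that contribute to the loss and 0 elsewhere, and the initial call to `restore` reflects the pre-restoration that happens before the first painting round. Images are plain nested lists so the sketch runs without third-party libraries.

```python
from typing import Callable, List, Tuple

Image = List[List[float]]  # grayscale image stored as rows of intensities


def masked_squared_error(output: Image, target: Image, weight: Image) -> float:
    """Squared L2 norm of (output - target) ⊙ weight, the quantity minimised in Eq. 1."""
    return sum(
        ((o - t) * w) ** 2
        for row_o, row_t, row_w in zip(output, target, weight)
        for o, t, w in zip(row_o, row_t, row_w)
    )


def run_idip(
    x0: Image,
    weight: Image,
    restore: Callable[[Image, Image], Image],
    paint: Callable[[Image, Image], Tuple[Image, Image]],
    rounds: int,
) -> Image:
    """Alternate user painting and restoration for a fixed number of rounds.

    restore(image, weight) is a hypothetical stand-in for fitting the CNN to
    `image` under Eq. 1; paint(image, weight) is a hypothetical stand-in for
    the user, returning the refined image and a possibly updated weight map.
    """
    x_n = restore(x0, weight)  # pre-restoration shown to the user first
    for _ in range(rounds):
        # Stages 1 and 2: the user sees x_n and paints to obtain x'_n.
        x_refined, weight = paint(x_n, weight)
        # Stage 3: restoring x'_n yields x*_n, which becomes x_{n+1}.
        x_n = restore(x_refined, weight)
    return x_n
```

In a real system `restore` would run a fixed number of gradient steps on the network parameters, and `rounds` would be replaced by the user deciding when to stop.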

Note that the output image $x^*_n$ of the $n$-th iteration is equivalent to the input image $x_{n+1}$ of the $(n+1)$-th iteration. Given the pre-restored image $x_n$, users can come up with textural semantics in the damaged region more easily than when given only $x_0$. Furthermore, iDIP improves its restoration performance by distilling the reconstructed textural information in the refined image $x'_n$. In this way, iDIP and human knowledge can jointly boost each other.

Besides, this iterative approach endows users with better control of their impact through trial and error. Therefore, users can better determine how intensely to get involved in the next iterations. Frequent interaction contributes to better user satisfaction and system transparency. What's more, early stopping can be applied in time, since users continuously observe the textural consistency and can terminate the process in any iteration to avoid overfitting.

Figure 2: iDIP Training Iteration

Figure 3: User Interface

User interface (UI): Fig. 3 shows the UI for iDIP. The image in the center is the pre-restored one with the mask as a red overlay. Users were supposed to pick an appropriate color and paint in the masked region.

4 Experiment

We conducted experiments to answer the first research question in this section: Does the interactive approach produce higher quality images?

Dataset: We used the Dunhuang Grottoes Painting Dataset Yu et al. [2019] for the experiments. The dataset contains 500 full-frame paintings with artificially generated masks for the damaged regions, of which we randomly picked ten.

Metrics: As performance measurement, we computed the Dissimilarity Structural Similarity Index Measure (DSSIM) Wang et al. [2004] and the Local Mean Squared Error (LMSE) Grosse et al. [2009] between restored and ground truth images. Mean Squared Error (MSE) and Structural Similarity Index Measure (SSIM) are common and easy-to-compute measures of the perceived quality of digital images or videos in computer vision.
In this paper, we compute MSE, which we equate with LMSE by setting $k = 1$. By using

$$\mathrm{DSSIM} = \frac{1 - \mathrm{SSIM}}{2}$$

we let DSSIM, like LMSE, be inversely related to restoration quality.

Baselines: To show the effectiveness, we compared our approach with five state-of-the-art baselines. For learning-based methods, we used their models pre-trained on Places2 Zhou et al. [2017], because it is one of the most widely used scene recognition datasets.

- EdgeConnect: EdgeConnect Nazeri et al. [2019] proposed a two-stage adversarial model and can deal with irregular masks.
- PartialConv: PartialConv Liu et al. [2018] used partial convolutions with an automatic mask update step.
- PatchMatch: PatchMatch Barnes et al. [2009] can quickly find approximate nearest-neighbor matches between image patches and was adopted by Photoshop.
- PatchOffset: PatchOffset He and Sun [2012] minimizes an energy function to find patches with dominant offsets.
- Deep Image Prior: DIP Ulyanov et al. [2018] exploits the image prior by minimizing Mean Squared Error (MSE) in the unmasked region.

For objective evaluation, we compared images restored by all six algorithms on ten randomly picked corrupted images using the two metrics. The images generated by iDIP for the objective evaluation were recovered by a domain expert. Each image was completed within 1200 iterations (600 iterations before painting and 600 after), and the domain expert painted only once per image.

| Method | DSSIM | LMSE |
|---|---|---|
| EdgeConnect | 0.2803 | 629.65 |
| PartialConv | 0.2816 | 2550.02 |
| PatchMatch | 0.2423 | 185.68∗ |
| PatchOffset | 0.2246 | 558.05 |
| DIP | 0.2228 | 214.23 |
| iDIP | 0.2227∗ | 207.37 |

Table 1: Comparison on restoration metrics (∗ marks the lowest value in each column).

Figure 4: Comparison of subjective opinions

However, pixel-wise measures alone cannot account for human criteria used to judge quality, such as semantic correctness and consistency.
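The two objective metrics can be sketched as follows. This is a simplified illustration, not the evaluation code behind Table 1: SSIM is computed here from whole-image statistics in a single window, whereas Wang et al. [2004] average it over local windows, and the 8-bit dynamic range of 255 is an assumption. The function names are hypothetical. DSSIM follows the definition (1 − SSIM)/2 given above, and plain MSE stands in for LMSE with k = 1 as the text describes.

```python
from typing import List

Image = List[List[float]]  # grayscale image, intensities assumed in [0, 255]


def _flatten(img: Image) -> List[float]:
    """Return all pixels of the image as one flat list."""
    return [p for row in img for p in row]


def mse(a: Image, b: Image) -> float:
    """Mean squared error over all pixels."""
    xs, ys = _flatten(a), _flatten(b)
    return sum((x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def global_ssim(a: Image, b: Image, dynamic_range: float = 255.0) -> float:
    """SSIM from whole-image statistics (one window instead of a sliding one)."""
    xs, ys = _flatten(a), _flatten(b)
    n = len(xs)
    mu_x, mu_y = sum(xs) / n, sum(ys) / n
    var_x = sum((x - mu_x) ** 2 for x in xs) / n
    var_y = sum((y - mu_y) ** 2 for y in ys) / n
    cov = sum((x - mu_x) * (y - mu_y) for x, y in zip(xs, ys)) / n
    c1 = (0.01 * dynamic_range) ** 2  # stabilising constants from the SSIM definition
    c2 = (0.03 * dynamic_range) ** 2
    return ((2 * mu_x * mu_y + c1) * (2 * cov + c2)) / (
        (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    )


def dssim(a: Image, b: Image, dynamic_range: float = 255.0) -> float:
    """Structural dissimilarity: 0 for identical images, larger is worse."""
    return (1.0 - global_ssim(a, b, dynamic_range)) / 2.0
```

Both values shrink as the restored image approaches the ground truth, which is why lower is better in both columns of Table 1.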
To complement the pixel-wise metrics, we asked each of the 19 participants from our user study (described in the following section) to subjectively select the two best restored images out of the six produced by the six algorithms.

4.1 Results

Objective evaluation: In Tab. 1 we can see that, although we initialized the networks with pre-trained weights, the two learning-based methods still have the worst performance. Style transfer failed because the image style of the Dunhuang dataset differed too much from the training set. PatchMatch has the best LMSE score by a large margin. However, our approach slightly outperformed all non-interactive methods on DSSIM and has the second smallest LMSE score. This suggests that interactivity positively contributes to output quality.

Subjective evaluation: Fig. 4 shows the probability of an algorithm being picked as one of the top two in the subjective evaluation. We left out the learning-based methods, since they were never picked. The two DIP variants significantly outperformed the other methods, even though PatchMatch demonstrated the best result on LMSE. Compared to DIP, iDIP still showed a considerable improvement, which indicates that the interactivity introduced in iDIP added to the output quality.

The difference between the two evaluations is also noteworthy: while PatchMatch has the lowest LMSE score, subjectively it appears far inferior to the DIP-based methods. This may be an indicator that simple similarity measures are insufficient to account for human perception.

To summarize, introducing interactivity in iDIP positively affected the restoration performance. Therefore, we confidently give a positive answer to the first research question.

5 User Study

With iDIP outperforming the other baselines in subjective perception and being not far off with respect to objective measures, the question remains whether an iML approach is attractive from a usage point of view. We evaluated this in a user study with a questionnaire.
Participants in this study (n = 19; 9 male, 9 female, 1 other; 20-29 years old: 10, 30-39 years old: 7, 40-49 years old: 2) were people with medium expertise in image manipulation (mean: 2.68/5, std: 1.25) and low expertise in image reconstruction (mean: 1.74/5, std: 1.19). We presented the UI to them and asked them to reconstruct two images. For practical reasons, we limited their working time to seven minutes per image. We then asked the participants to fill out a questionnaire regarding general satisfaction with the process using the System Usability Scale (SUS) and workload using NASA TLX, as well as questions regarding the benefits of our interactive approach.

Results from the SUS and TLX were very positive (average score SUS: 86/100, TLX: 3.4/10). Measured on a 5-point Likert scale, the opinions of the participants regarding iML being suitable for image reconstruction (4.5/5) and in general (4.0/5) were also very positive. Participants also did not believe that a non-interactive ML process (0.9/5) or a manual approach (1.8/5) would perform better.

The fact that all participants stated that they liked the combination of interactivity and machine learning, as well as other feedback, led us to conclude that iML can make machine learning more approachable. Whether it is an actual boost to expert productivity remains to be seen in future work.

6 Conclusion and Future Work

In this paper we have outlined our framework for interactive image restoration. This framework allows users to interactively contribute to a DIP-based image restoration process so that both image prior and human knowledge can be leveraged in an iML fashion. Our experiments show that the designed interactions positively affected the output quality, as iDIP outperformed all five state-of-the-art baselines. Meanwhile, good user satisfaction has been achieved according to the user study, as participants stated their appreciation of and confidence in the proposed method.
In summary, the positive answers to the two research questions indicate that our goal of human-centric machine learning has been fulfilled for image restoration tasks. As the human-in-the-loop approach demonstrated its effectiveness in terms of algorithm performance and user satisfaction, we leave the interpretation of richer interaction forms in image restoration as future work.

References

Dmitry Ulyanov, Andrea Vedaldi, and Victor Lempitsky. Deep image prior. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 9446–9454, 2018.

Alex Krizhevsky, Ilya Sutskever, and Geoffrey E Hinton. Imagenet classification with deep convolutional neural networks. In Advances in neural information processing systems, pages 1097–1105, 2012.

Connelly Barnes, Eli Shechtman, Adam Finkelstein, and Dan B Goldman. Patchmatch: A randomized correspondence algorithm for structural image editing. In ACM Transactions on Graphics (ToG), volume 28, page 24. ACM, 2009.

James Hays and Alexei A Efros. Scene completion using millions of photographs. ACM Transactions on Graphics (TOG), 26(3):4, 2007.

Vivek Kwatra, Irfan Essa, Aaron Bobick, and Nipun Kwatra. Texture optimization for example-based synthesis. In ACM Transactions on Graphics (ToG), volume 24, pages 795–802. ACM, 2005.

Kaiming He and Jian Sun. Statistics of patch offsets for image completion. In European Conference on Computer Vision, pages 16–29. Springer, 2012.

Jiahui Yu, Zhe Lin, Jimei Yang, Xiaohui Shen, Xin Lu, and Thomas S Huang. Generative image inpainting with contextual attention. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 5505–5514, 2018.

Satoshi Iizuka, Edgar Simo-Serra, and Hiroshi Ishikawa. Globally and locally consistent image completion. ACM Transactions on Graphics (ToG), 36(4):107, 2017.

Raymond A Yeh, Chen Chen, Teck Yian Lim, Alexander G Schwing, Mark Hasegawa-Johnson, and Minh N Do. Semantic image inpainting with deep generative models. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 5485–5493, 2017.

Zhaoyi Yan, Xiaoming Li, Mu Li, Wangmeng Zuo, and Shiguang Shan. Shift-net: Image inpainting via deep feature rearrangement. In Proceedings of the European Conference on Computer Vision (ECCV), pages 1–17, 2018.

Jerry Alan Fails and Dan R Olsen Jr. Interactive machine learning. In Proceedings of the 8th international conference on Intelligent user interfaces, pages 39–45. ACM, 2003.

Saleema Amershi, Maya Cakmak, William Bradley Knox, and Todd Kulesza. Power to the people: The role of humans in interactive machine learning. AI Magazine, 35(4):105–120, 2014.

David Cohn, Rich Caruana, and Andrew McCallum. Semi-supervised clustering with user feedback. Constrained Clustering: Advances in Algorithms, Theory, and Applications, 4(1):17–32, 2003.

Andreas Holzinger. Interactive machine learning for health informatics: when do we need the human-in-the-loop? Brain Informatics, 3(2):119–131, 2016.

Roger T Johnson and David W Johnson. Active learning: Cooperation in the classroom. The annual report of educational psychology in Japan, 47:29–30, 2008.

Tianxiu Yu, Shijie Zhang, Cong Lin, and Shaodi You. Dunhuang grotto painting dataset and benchmark. arXiv preprint, 2019.

Zhou Wang, Alan C Bovik, Hamid R Sheikh, Eero P Simoncelli, et al. Image quality assessment: from error visibility to structural similarity. IEEE transactions on image processing, 13(4):600–612, 2004.

Roger Grosse, Micah K Johnson, Edward H Adelson, and William T Freeman. Ground truth dataset and baseline evaluations for intrinsic image algorithms. In 2009 IEEE 12th International Conference on Computer Vision, pages 2335–2342. IEEE, 2009.

Bolei Zhou, Agata Lapedriza, Aditya Khosla, Aude Oliva, and Antonio Torralba. Places: A 10 million image database for scene recognition. IEEE transactions on pattern analysis and machine intelligence, 40(6):1452–1464, 2017. http://places2.csail.mit.edu/.

Kamyar Nazeri, Eric Ng, Tony Joseph, Faisal Qureshi, and Mehran Ebrahimi. Edgeconnect: Generative image inpainting with adversarial edge learning. arXiv preprint, 2019.

Guilin Liu, Fitsum A Reda, Kevin J Shih, Ting-Chun Wang, Andrew Tao, and Bryan Catanzaro. Image inpainting for irregular holes using partial convolutions. In Proceedings of the European Conference on Computer Vision (ECCV), pages 85–100, 2018.

7 Supplementary Material

As supplementary materials, we provide the subjective evaluation record of restoration performance, the questionnaire used in the user study and the statistical summary of the user study.

Subjective evaluation record (rows: testers, columns: images; each cell lists the two algorithms picked as best)

| Tester / Image | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |
|---|---|---|---|---|---|---|---|---|---|---|
| 1 | PM, IDIP | DIP, IDIP | PM, IDIP | DIP, IDIP | DIP, IDIP | PM, IDIP | DIP, IDIP | PO, IDIP | PM, IDIP | DIP, IDIP |
| 2 | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | PO, IDIP | DIP, IDIP | DIP, IDIP |
| 3 | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | PO, IDIP | DIP, IDIP | DIP, IDIP |
| 4 | PM, IDIP | DIP, IDIP | PM, IDIP | DIP, IDIP | PM, IDIP | DIP, IDIP | DIP, IDIP | PO, IDIP | DIP, IDIP | DIP, IDIP |
| 5 | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | PO, IDIP | DIP, IDIP | DIP, IDIP |
| 6 | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | PO, IDIP | DIP, IDIP | DIP, IDIP |
| 7 | DIP, IDIP | DIP, IDIP | PM, IDIP | DIP, IDIP | DIP, IDIP | PM, IDIP | PO, DIP | PO, IDIP | DIP, IDIP | DIP, IDIP |
| 8 | DIP, IDIP | PM, IDIP | DIP, IDIP | DIP, IDIP | PM, IDIP | DIP, IDIP | DIP, IDIP | PO, IDIP | DIP, IDIP | DIP, IDIP |
| 9 | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | PO, IDIP | DIP, IDIP | DIP, IDIP |
| 10 | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | PM, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP |
| 11 | DIP, IDIP | DIP, IDIP | DIP, IDIP | PM, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | PO, IDIP | DIP, IDIP | DIP, IDIP |
| 12 | PM, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | PO, IDIP | DIP, IDIP | DIP, IDIP |
| 13 | DIP, IDIP | DIP, IDIP | PM, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | PO, IDIP | DIP, IDIP | DIP, IDIP |
| 14 | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | PO, IDIP | DIP, IDIP | DIP, IDIP |
| 15 | DIP, IDIP | PM, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | PM, IDIP | PO, IDIP | DIP, IDIP | DIP, IDIP |
| 16 | PM, IDIP | DIP, IDIP | DIP, IDIP | PM, IDIP | DIP, IDIP | PM, IDIP | DIP, IDIP | PO, IDIP | DIP, IDIP | PO, IDIP |
| 17 | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | PO, IDIP | PM, IDIP | DIP, IDIP |
| 18 | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | PM, IDIP | PM, IDIP | PM, IDIP | DIP, IDIP | DIP, IDIP |
| 19 | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP |
| 20 | PM, IDIP | DIP, IDIP | DIP, IDIP | PM, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP | DIP, IDIP |
| 21 | DIP, IDIP | DIP, IDIP | DIP, IDIP | PO, IDIP | DIP, IDIP | DIP, IDIP | PM, IDIP | PO, IDIP | DIP, IDIP | DIP, IDIP |

PM: PatchMatch, PO: PatchOffset, DIP: Deep Image Prior, IDIP: Interactive Deep Image Prior

Questionnaire

Survey - Image Reconstruction (Google Forms printout, 14/09/2019, https://docs.google.com/forms/d/1UhKNyMahf2s5XpY924lqa7Au0MAwrjIJ2pNidaELYus/edit)

Questions 1, 2, 3 and 6 are answered per statement on a five-point scale (Fully disagree, Somewhat disagree, Neutral, Somewhat agree, Fully agree), marking only one oval per row.

1. Please answer how you agree with the following statements
   - I can draw
   - I have experience with machine learning
   - I consider myself skilled in image manipulation
   - I have previous experience with image manipulation software
   - I consider myself skilled with technology
   - I am open towards new technology
   - I have experience with image reconstruction

Machine Learning Support

In the following section you will answer some questions regarding the task you have performed and the tool you have used.

2. Please answer how you agree with the following statements
   - A human can perform image reconstruction better than a machine
   - Tools using machine learning are more efficient
   - Machine learning should only be used where necessary
   - Tools using machine learning are more effective
   - Machine learning is a good support mechanism in tools
   - I want more machine learning support in tools
   - Machine learning is helpful for image reconstruction
   - Machine learning should be used more frequently
   - Tools using machine learning help me complete my work faster
   - Machine learning takes a long time
3. Please answer how you agree with the following statements
   - I like the combination of interactive and automated elements for image reconstruction
   - I am satisfied with the result
   - I was in control of how the output turned out
   - Image reconstruction should be more automated
   - The interactive part of the image reconstruction worked well
   - The image turned out the way I expected it to
   - The automated part of the image reconstruction worked well
   - Machine learning without interactivity would create a better image
   - Manual image reconstruction would create a better image
   - Image reconstruction should be done manually
4. Did anything during the task not work the way you would have wanted it to?
5. What would you change in the interactive image reconstruction process?

General System Usability

In the following section you will answer some questions regarding the usability of the system we have presented to you.

6. Please answer how you agree with the following statements
   - I need to learn a lot of things before I could get going with the system
   - I found the system very cumbersome to use
   - I felt very confident using the system
   - I thought there was too much inconsistency in this system
   - I think I would like to use this system frequently
   - I found the system unnecessarily complex
   - I found the various functions in the system well integrated
   - I would imagine that most people would learn to use this system very quickly
   - I thought the system was easy to use
   - I think that I would need support of a technical person to be able to use the system

Workload

In the following section you will answer some questions on the perceived workload for the task. You can give a score from 1 to 10 where 1 means "low" or "not much" while 10 means "high" or "a lot".

7. How mentally demanding was the task?
8. How physically demanding was the task?
9. How hurried or rushed was the pace of the task?
10. How successful were you in accomplishing what you were asked to do?
11. How hard did you have to work to accomplish your level of performance?
12. How insecure, discouraged, irritated, stressed and annoyed were you?

Demographics

Lastly we ask you for some general information about yourself.

13. How old are you? (Mark only one oval.) Younger than 20; 20-29; 30-39; 40-49; 50-59; 60 or older
14. What is your gender? (Mark only one oval.) Female; Male; Prefer not to say; Other

Statistical summary of the user study

Survey - Image Reconstruction, 21 responses (Google Forms response summary, 15/09/2019). Sections: Data Protection, Expertise, Machine Learning Support, General System Usability, Workload, Demographics.

[Bar charts of the Likert responses for the Expertise, Machine Learning Support, interactive reconstruction and General System Usability statements; per-statement counts are not recoverable from the extracted text.]

Did anything during the task not work the way you would have wanted it to? (8 responses)

- No
- Sometimes the pen tool gets automatically deselected when switching between pipette and pen.
- I thought that the algorithm could also reconstruct sharp edges but it didn't.
- No, everything worked well
- when you moved the mouse outside the image while painting on the edges it continued drawing
- I would like to use the pen firstly draw a boundary, and fulfill it with the coulor.
- The ColorPicker is sometime not sensitive enough to pick up the small area color.

What would you change in the interactive image reconstruction process? (11 responses)

- Let the automated process run in parallel and in background, so you have to wait less.
- comparing some images with similar ones (pattern recognition, duplicated elements,...)
- maybe more professional drawing brushes that I can give more details.
- size of the tool could be symbolized
- Shorter processing time.
- for now nothing
- Nothing
- Introduce instructions by voice
- Adding zoom functions.
- The mouse for a finer pencil-like item
- Copy patch instead of only one color

[Histograms of the 1 to 10 workload ratings for questions 7 to 12, 21 responses each; the assignment of bar values to scores is not reliably recoverable from the extracted text.]

[Pie chart "How old are you?", 21 responses; shares shown: 9.5%, 38.1%, 52.4%.]

[Pie chart "What is your gender?", 21 responses; shares shown: 42.9%, 52.4%.]
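The ten usability statements in question 6 are the System Usability Scale items, but they appear in a different order than on the standard SUS form, so scoring them by polarity rather than by position avoids mistakes. The sketch below applies the standard SUS rule (positively worded items contribute response − 1, negatively worded items contribute 5 − response, and the sum is multiplied by 2.5). How the study actually computed its SUS average is not stated; the item labels and the mapping of "Fully disagree" to 1 through "Fully agree" to 5 are assumptions made for illustration.

```python
from typing import Dict

# Polarity of the ten SUS statements, keyed by short hypothetical labels.
# True = positively worded, False = negatively worded.
SUS_POLARITY: Dict[str, bool] = {
    "learn_a_lot_first": False,
    "cumbersome": False,
    "confident": True,
    "inconsistent": False,
    "use_frequently": True,
    "unnecessarily_complex": False,
    "well_integrated": True,
    "quick_to_learn": True,
    "easy_to_use": True,
    "need_technical_support": False,
}


def sus_score(responses: Dict[str, int]) -> float:
    """Return the 0-100 SUS score for one participant (responses are 1..5)."""
    if set(responses) != set(SUS_POLARITY):
        raise ValueError("expected exactly the ten SUS items")
    total = 0
    for item, value in responses.items():
        if not 1 <= value <= 5:
            raise ValueError(f"response for {item!r} must be in 1..5")
        # Positive items reward agreement, negative items reward disagreement.
        total += (value - 1) if SUS_POLARITY[item] else (5 - value)
    return total * 2.5
```

A study-level score would then be the mean of `sus_score` over all participants.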
