Neural Logic Networks

Recent years have witnessed the great success of deep neural networks in many research areas. The fundamental idea behind the design of most neural networks is to learn similarity patterns from data for prediction and inference, which lacks the abili…

Authors: Shaoyun Shi, Hanxiong Chen, Min Zhang

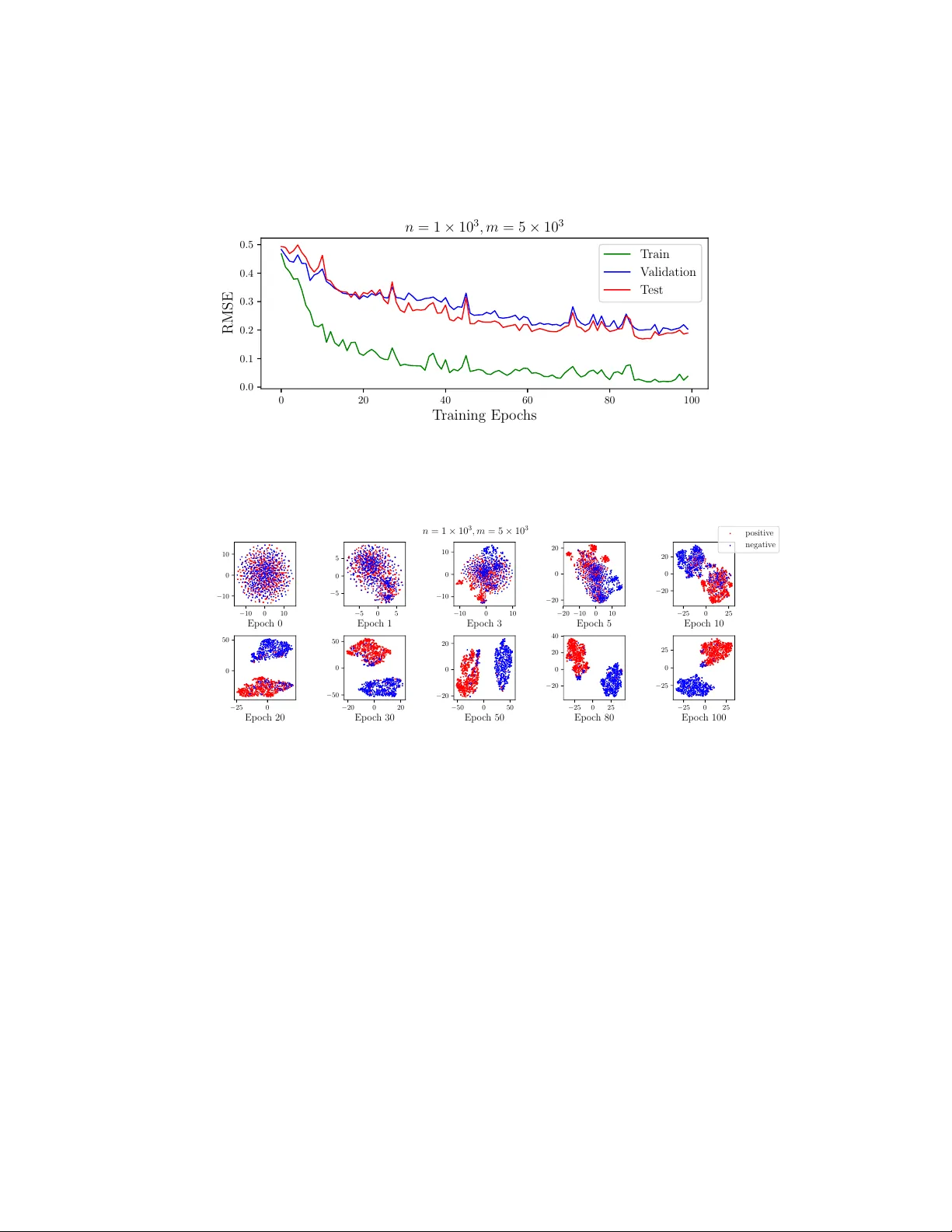

Neural Logic Networks Shaoyun Shi 1 ∗ , Hanxiong Chen 2 , Min Zhang 1 , Y ongfeng Zhang 2 † 1 Tsinghua Univ ersity , Beijing, China 2 Rutgers Univ ersity , NJ, USA {shisy17,z-m}@tsinghua.edu.cn, {hc691,yongfeng.zhang}@rutgers.edu Abstract Recent years hav e witnessed the great success of deep neural networks in many research areas. The fundamental idea behind the design of most neural netw orks is to learn similarity patterns from data for prediction and inference, which lacks the ability of logical reasoning. Howe ver , the concrete ability of logical reasoning is critical to many theoretical and practical problems. In this paper , we propose Neural Logic Network (NLN), which is a dynamic neural architecture that builds the computational graph according to input logical expressions. It learns basic logical operations as neural modules, and conducts propositional logical reasoning through the network for inference. Experiments on simulated data show that NLN achie ves significant performance on solving logical equations. Further experiments on real-w orld data sho w that NLN significantly outperforms state-of-the-art models on collaborativ e filtering and personalized recommendation tasks. 1 Introduction Deep neural networks hav e sho wn remarkable success in many fields such as computer vision, natural language processing, information retrie val, and data mining. The design philosophy of most neural network architectures is learning statistical similarity patterns from large scale training data. For example, representation learning approaches learn vector representations from image or text for prediction, while metric learning approaches learn similarity functions for matching and inference. Though they usually ha ve good generalization ability on similarly distrib uted new data, the design philosophy of these approaches makes it difficult for neural networks to conduct logical reasoning in many theoretical or practical tasks. Howe ver , logical reasoning is an important ability of human intelligence, and it is critical to many theoretical problems such as solving logical equations, as well as practical tasks such as medical decision support systems, legal assistants, and collaborati ve reasoning in personalized recommender systems. In fact, logical inference based on symbolic reasoning was the dominant approach to AI before the emer ging of machine learning approaches, and it served as the underpinning of many expert systems in Good Old Fashioned AI (GOF AI). Howe ver , traditional symbolic reasoning methods for logical inference are mostly hard rule-based reasoning, which may require significant manual efforts in rule dev elopment, and may only hav e very limited generalization ability to unseen data. T o integrate the adv antages of deep neural networks and logical reasoning, we propose Neural Logic Network (NLN), a neural architecture to conduct logical inference based on neural netw orks. NLN adopts vectors to represent logic v ariables, and each basic logic operation (AND/OR/NO T) is learned as a neural module based on logic regularization. Since logic expressions that consist of the same set of variables may ha ve completely dif ferent logical structures, capturing the structure information of logical e xpressions is critical to logical reasoning. T o solve the problem, NLN dynamically constructs its neural architecture according to the input logical e xpression, which is different from man y other ∗ This work was done when the first author w as visiting at Rutgers University . † Corresponding author . neural networks. By encoding logical structure information in neural architecture, NLN can flexibly process an exponential amount of logical e xpressions. Extensiv e experiments on both theoretical problems such as solving logical equations and practical problems such as personalized recommendation verified the superior performance of NLN compared with state-of-the-art methods. 2 Neural Logic Networks Most neural networks are de veloped based on fixed neural architectures, either manually designed or learned through neural architecture search. Differently , the computational graph in our Neural Logic Network (NLN) is b uilt dynamically according to the input logical expression. In NLN, variables in the logic expressions are represented as vectors, and each basic logic operation is learned as a neural module during the training process. W e further leverage logic regularizers o ver the neural modules to guarantee that each module conducts the expected logical operation. 2.1 Logic Operations as Neural Modules An expression of propositional logic consists of logic constants (T/F), logic v ariables ( v ), and basic logic operations (negation ¬ , conjunction ∧ , and disjunction ∨ ). In NLN, negation, conjunction, and disjunction are learned as three neural modules. Leshno u. a. [16] prov ed that multilayer feedforward networks with non-polynomial acti v ation can approximate any function. Thus it is possible to lev erage neural modules to approximate the negation, conjunction, and disjunction operations. Similar to most neural models in which input v ariables are learned as v ector representations, in our framew ork, T , F and all logic variables are represented as v ectors of the same dimension. Formally , suppose we hav e a set of logic expressions E = { e i } and their values Y = { y i } (either T or F), and they are constructed by a set of v ariables V = { v i } , where | V | = n is the number of v ariables. An example logic e xpression is ( v i ∧ v j ) ∨ ¬ v k = T . W e use bold font to represent the vectors, e.g. v i is the vector representation of v ariable v i , and T is the vector representation of logic constant T , where the vector dimension is d . AND ( · , · ) , OR ( · , · ) , and NO T ( · ) are three neural modules. For example, AND ( · , · ) takes two vectors v i , v j as inputs, and the output v = AND ( v i , v j ) is the representation of v i ∧ v j , a vector of the same dimension d as v i and v j . The three modules can be implemented by various neural structures, as long as they hav e the ability to approximate the logical operations. Figure 1 is an example of the neural logic network corresponding to the e xpression ( v i ∧ v j ) ∨ ¬ v k . The red left box sho ws how the frame work AND 𝐯 " 𝐯 # NOT 𝐯 $ 𝐯 " ⋀𝐯 # OR ¬𝐯 $ (𝐯 " ⋀𝐯 # )⋁¬𝐯 $ Sim 𝐓 0 < 𝑝 < 1 Logic Expr ession True/ F al se Evaluati on Figure 1: An example of the neural logic network. constructs a logic expression. Each intermediate vector represents part of the logic expression, and finally , we have the vector representation of the whole logic expression e = ( v i ∧ v j ) ∨ ¬ v k . T o ev aluate the T/F v alue of the e xpression, we calculate the similarity between the e xpression vector and the T vector , as sho wn in the right blue box, where T , F are short for logic constants T rue and False respecti vely , and T , F are their vector representations. Here S im ( · , · ) is also a neural module to calculate the similarity between tw o vectors and output a similarity value between 0 and 1. The output p = S im ( e , T ) e v aluates how lik ely NLN considers the expression to be true. T raining NLN on a set of expressions and predicting T/F values of other expressions can be considered as a classification problem, and we adopt cross-entropy loss for this task: L c = − X e i ∈ E y i log( p i ) + (1 − y i ) log(1 − p i ) (1) 2 2.2 Logical Regularization over Neural Modules So far , we only learned the logic operations AND , OR , NO T as neural modules, but did not explicitly guarantee that these modules implement the expected logic operations. For example, an y variable or expression w conjuncted with false should result in false w ∧ F = F , and a double negation should result in itself ¬ ( ¬ w ) = w . Here we use w instead of v in the pre vious section, because w could either be a single variable (e.g., v i ) or an expression (e.g., v i ∧ v j ). A neural logic network that aims to implement logic operations should satisfy the basic logic rules. As a result, we define logic regularizers to regularize the beha vior of the modules, so that they implement certain logical operations. A complete set of the logical regularizers are sho wn in T able 1. T able 1: Logical regularizers and the corresponding logical rules Logical Rule Equation Logic Regularizer r i NO T Negation ¬ T = F r 1 = P w ∈ W ∪{ T } S im ( NO T ( w ) , w ) Double Negation ¬ ( ¬ w ) = w r 2 = P w ∈ W 1 − S im ( NO T ( NO T ( w )) , w ) AND Identity w ∧ T = w r 3 = P w ∈ W 1 − S im ( AND ( w , T ) , w ) Annihilator w ∧ F = F r 4 = P w ∈ W 1 − S im ( AND ( w , F ) , F ) Idempotence w ∧ w = w r 5 = P w ∈ W 1 − S im ( AND ( w , w ) , w ) Complementation w ∧ ¬ w = F r 6 = P w ∈ W 1 − S im ( AND ( w , NOT ( w )) , F ) OR Identity w ∨ F = w r 7 = P w ∈ W 1 − S im ( OR ( w , F ) , w ) Annihilator w ∨ T = T r 8 = P w ∈ W 1 − S im ( OR ( w , T ) , T ) Idempotence w ∨ w = w r 9 = P w ∈ W 1 − S im ( OR ( w , w ) , w ) Complementation w ∨ ¬ w = T r 10 = P w ∈ W 1 − S im ( OR ( w , NOT ( w )) , T ) The regularizers are cate gorized by the three operations. The equations of laws are translated into the modules and variables in our neural logic network as logical regularizers. It should be noted that these logical rules are not considered in the whole v ector space R d , but in the vector space defined by NLN. Suppose the set of all v ariables as well as intermediate and final e xpressions observed in the training data is W = { w } , then only { w | w ∈ W } are taken into account when constructing the logical regularizers. T ake Figure 1 as an example, the corresponding w in T able 1 include v i , v j , v k , v i ∧ v j , ¬ v k and ( v i ∧ v j ) ∨ ¬ v k . Logical regularizers encourage NLN to learn the neural module parameters to satisfy these laws ov er the variable/e xpression vectors in v olved in the model, which is much smaller than the whole vector space R d . Note that in NLN the constant true v ector T is randomly initialed and fixed during the training and testing process, which w orks as an indication v ector in the frame work that defines the true orientation. The false vector F is thus calculated with NO T ( T ) . Finally , logical regularizers R l are added to the cross-entropy loss function (Eq. (1) ) with weight λ l : L 1 = L c + λ l R l = L c + λ l X i r i (2) where r i are the logic regularizers in T able 1. It should be noted that except for the logical regularizers listed above, a propositional logical system should also satisfy other logical rules such as the associativity , commutativity and distrib utivity of AND/OR/NO T operations. T o consider associati vity and commutati vity , the order of the variables joined by multiple conjunctions or disjunctions is randomized when training the network. For example, the netw ork structure of w i ∧ w j could be AND ( w i , w j ) or AND ( w j , w i ) , and the network structure of w i ∨ w j ∨ w k could be OR ( OR ( w i , w j ) , w k ) , OR ( OR ( w i , w k ) , w j ) , OR ( w j , OR ( w k , w i )) and so on during training. In this way , the model is encouraged to output the same vector representation when inputs are different forms of the same e xpression in terms of associativity and commutati vity . There is no explicit way to regularize the modules for other logical rules that correspond to more complex e xpression variants, such as distributi vity and De Mor gan laws. T o solve the problem, we make sure that the input expressions hav e the same normal form – e.g., disjunctiv e normal form – because any propositional logical expression can be transformed into a Disjunctive Normal Form (DNF) or Canonical Normal Form (CNF). In this way , we can a void the necessity to re gularize the neural modules for distributi vity and De Morg an laws. 3 2.3 Length Regularization over Logic V ariables W e found that the vector length of logic variables as well as intermediate or final logic e xpressions may explode during the training process, because simply increasing the v ector length results in a tri vial solution for optimizing Eq. (2) . Constraining the vector length provides more stable performance, and thus a ` 2 -length regularizer R ` is added to the loss function with weight λ ` : L 2 = L c + λ l R l + λ ` R ` = L c + λ l X i r i + λ ` X w ∈ W k w k 2 F (3) Similar to the logical regularizers, W here includes input variable v ectors as well as all intermediate and final expression v ectors. Finally , we apply ` 2 -regularizer with weight λ Θ to prev ent the parameters from overfitting. Suppose Θ are all the model parameters, then the final loss function is: L = L c + λ l R l + λ ` R ` + λ Θ R Θ = L c + λ l X i r i + λ ` X w ∈ W k w k 2 F + λ Θ k Θ k 2 F (4) 3 Implementation Details Our prototype task is defined in this way: giv en a number of training logical e xpressions and their T/F values, we train a neural logic network, and test if the model can solve the T/F value of the logic variables, and predict the value of new expressions constructed by the observed logic variables in training. W e first conduct experiments on manually generated data to show that our neural logic networks have the ability to make propositional logical inference. NLN is further applied to the personalized recommendation problem to verify its performance in practical tasks. W e did not design fanc y structures for different modules. Instead, some simple structures are ef fectiv e enough to show the superiority of NLN. In our experiments, the AND module is implemented by multi-layer perceptron (MLP) with one hidden layer: AND ( w i , w j ) = H a 2 f ( H a 1 ( w i | w j ) + b a ) (5) where H a 1 ∈ R d × 2 d , H a 2 ∈ R d × d , b a ∈ R d are the parameters of the AND network. | means v ector concatenation. f ( · ) is the acti vation function, and we use r elu in our networks. The OR module is built in the same w ay , and the NO T module is similar b ut with only one vector as input: NO T ( w ) = H n 2 f ( H n 1 w + b n ) (6) where H n 1 ∈ R d × d , H n 2 ∈ R d × d , b n ∈ R d are the parameters of the NO T network. The similarity module is based on the cosine similarity of two v ectors. T o ensure that the output is formatted between 0 and 1, we scale the cosine similarity by multiplying a value α , follo wing by a sigmoid function: S im ( w i , w j ) = sig moid α w i · w j k w i kk w j k (7) The α is set to 10 in our experiments. W e also tried other ways to calculate the similarity such as sig moid ( w i · w j ) or MLP . This way provides better performance. All the models including baselines are trained with Adam [ 13 ] in mini-batches at the size of 128. The learning rate is 0.001, and early-stopping is conducted according to the performance on the validation set. Models are trained at most 100 epochs. T o prev ent models from ov erfitting, we use both the ` 2 -regularization and dropout. The weight of ` 2 -regularization λ Θ is set between 1 × 10 − 7 to 1 × 10 − 4 and dropout ratio is set to 0.2. V ector sizes of the variables in simulation data and the user/item vectors in recommendation are 64. W e run the experiments with 5 different random seeds and report the a verage results and standard errors. Note that NLN has similar time and space complexity with baseline models and each e xperiment run can be finished in 6 hours (sev eral minutes on small datasets) with a GPU (NVIDIA GeForce GTX 1080T i). 4 Simulated Data W e first randomly generate n variables V = { v i } , each has a v alue of T or F . Then these variables are used to randomly generate m boolean expressions E = { e i } in disjuncti ve normal form (DNF) 4 as the dataset. Each expression consists of 1 to 5 clauses separated by the disjunction ∨ . Each clause consists of 1 to 5 v ariables or the negation of v ariables connected by conjunction ∧ . W e also conducted experiments on many other fix ed or variational lengths of expressions, which ha ve similar results. The T/F v alues of the expressions Y = { y i } can be calculated according to the v ariables. But note that the T/F v alues of the v ariables are in visible to the model. Here are some e xamples of the generated expressions when n = 100 : ( ¬ v 80 ∧ v 56 ∧ v 71 ) ∨ ( ¬ v 46 ∧ ¬ v 7 ∧ v 51 ∧ ¬ v 47 ∧ v 26 ) ∨ v 45 ∨ ( v 31 ∧ v 15 ∧ v 2 ∧ v 46 ) = T ( ¬ v 19 ∧ ¬ v 65 ) ∨ ( v 65 ∧ ¬ v 24 ∧ v 9 ∧ ¬ v 83 ) ∨ ( ¬ v 48 ∧ ¬ v 9 ∧ ¬ v 51 ∧ v 75 ) = F ¬ v 98 ∨ ( ¬ v 76 ∧ v 66 ∧ v 13 ) ∨ v 97 ( ∧ v 89 ∧ v 45 ∧ v 83 ) = T v 43 ∧ v 21 ∧ ¬ v 53 = F 4.1 Results Analysis T able 2: Performance on simulation data n = 1 × 10 3 , m = 5 × 10 3 n = 2 × 10 4 , m = 5 × 10 4 Accuracy RMSE Accuracy RMSE Bi-LSTM 0.6128 ± 0.0029 0.4952 ± 0.0032 0.6826 ± 0.0039 0.4529 ± 0.0038 Bi-RNN 0.6412 ± 0.0014 0.4802 ± 0.0033 0.6985 ± 0.0023 0.4412 ± 0.0005 NLN- R l 0.9064 ± 0.0136 0.2746 ± 0.0221 0.8400 ± 0.0011 0.3678 ± 0.0013 NLN 0.9716 ± 0.0023 * 0.1633 ± 0.0080 * 0.8827 ± 0.0019 * 0.3286 ± 0.0022 * *. Significantly better than the other models (italic ones) with p < 0 . 05 On simulated data, λ l and λ ` are set to 1 × 10 − 2 and 1 × 10 − 4 respectiv ely . Datasets are randomly split into the training (80%), validation (10%) and test (10%) sets. The overall performances on test sets are shown on T able 2. Bi-RNN is bidirectional V anilla RNN [ 20 ] and Bi-LSTM is bidirectional LSTM [ 6 ]. They represent traditional neural netw orks. NLN- R l is the NLN without logic re gularizers. The poor performance of Bi-RNN and Bi-LSTM verifies that traditional neural networks that ignore the logical structure of expressions do not have the ability to conduct logical inference. Logical expressions are structural and ha ve exponential combinations, which are dif ficult to learn by a fixed model architecture. Bi-RNN performs better than Bi-LSTM because the forget gate in LSTM may be harmful to model the variable sequence in expressions. NLN- R l provides a significant improv ement ov er Bi-RNN and Bi-LSTM because the structure information of the logical expressions is explicitly captured by the network structure. Howe ver , the behaviors of the modules are freely trained with no logical regularization. On this simulated data and many other problems requiring logical inference, logical rules are essential to model the internal relations. W ith the help of logic regularizers, the modules in NLN learn to perform expected logic operations, and finally , NLN achiev es the best performance and significantly outperforms NLN- R l . 0 1e-05 0.0001 0.001 0.01 0.1 λ l - W eigh t of Logic Regularizers 0 . 90 0 . 92 0 . 94 0 . 96 T est Accuracy n = 1 × 10 3 , m = 5 × 10 3 Figure 2: Performance with different weights of logical regularizers. − 40 − 20 0 20 40 − 20 − 10 0 10 20 n = 1 × 10 3 , m = 5 × 10 3 p ositiv e negativ e Figure 3: V isualization of the variable embed- dings with t-SNE. • W eight of Logical Re gularizers . T o better understand the impact of logical regularizers, we test the model performance with dif ferent weights of logical regularizers, sho wn in Figure 2. When λ l = 0 (i.e., NLN- R l ), the performance is not so good. As λ l grows, the performance gets better , which 5 shows that logical rules of the modules are essential for logical inference. Howe ver , if λ l is too large it will result in a drop of performance, because the expressi veness po wer of the model may be significantly constrained by the logical regularizers. • V isualization of V ariables . It is intuiti ve to study whether NLN can solve the T/F values of variables. T o do so, we conduct t-SNE [ 17 ] to visualize the variable embeddings on a 2D plot, sho wn in Figure 3. W e can see that the T and F variables are clearly separated, and the accuracy of T/F values according to the two clusters is 95.9%, which indicates high accuracy of solving v ariables based on NLN. 5 Personalized Recommendation The key problem of recommendation is to understand the user preference according to historical interactions. Suppose we ha ve a set of users U = { u i } and a set of items V = { v j } , and the ov erall interaction matrix is R = { r i,j } | U |×| V | . The interactions observed by the recommender system are the known values in matrix R . Ho wev er , they are very sparse compared with the total number of | U | × | V | . T o recommend items to users in such a sparse setting, logical inference is important. F or example, a user bought an iPhone may need an iPhone case rather than an Android data line, i.e., iPhone → iPhone case = T , while iPhone → Andr oid data line = F . Let r i,j = 1 / 0 if user u i likes/dislikes item v j . Then for a user u i with a set of interactions sorted by time { r i,j 1 = 1 , r i,j 2 = 0 , r i,j 3 = 0 , r i,j 4 = 1 } , 3 logical expressions can be generated: v j 1 → v j 2 = F , v j 1 ∧ ¬ v j 2 → v j 3 = F , v j 1 ∧ ¬ v j 2 ∧ ¬ v j 3 → v j 4 = T . Note that a → b = ¬ a ∨ b . So in this way , we can transform all the users’ interactions into logic expressions in the format of ¬ ( a ∧ b · · · ) ∨ c = T /F , where inside the brackets are the interaction history and to the right of ∨ is the target item. Note that at most 10 previous interactions right before the tar get item are considered in our experiments. Experiments are conducted on two publicly a vailable datasets: • ML-100k [ 8 ]. It is maintained by Grouplens 3 , which has been used by researchers for many years. It includes 100,000 ratings ranging from 1 to 5 from 943 users and 1,682 movies. • Amazon Electronics [ 9 ]. Amazon Dataset 4 is a public e-commerce dataset. It contains re views and ratings of items gi ven by users on Amazon, a popular e-commerce website. W e use a subset in the area of Electronics, containing 1,689,188 ratings ranging from 1 to 5 from 192,403 users and 63,001 items, which is bigger and much more sparse than the ML-100k dataset. The ratings are transformed into 0 and 1. Ratings equal to or higher than 4 ( r i,j ≥ 4 ) are transformed to 1, which means positiv e attitudes (like). Other ratings ( r i,j ≤ 3 ) are con verted to 0, which means negati ve attitudes (dislike). Then the interactions are sorted by time and translated to logic expressions in the way mentioned abo ve. W e ensure that e xpressions corresponding to the earliest 5 interactions of e very user are in the training sets. For those users with no more than 5 interactions, all the expressions are in the training sets. For the remaining data, the last two e xpressions of ev ery user are distributed into the v alidation sets and test sets respectively (T est sets are preferred if there remains only one expression of the user). All the other expressions are in the training sets. The models are ev aluated on two different recommendation tasks. One is binary Preference Prediction and the other is T op-K Recommendation. The NLN on the preference prediction tasks is trained similarly as on the simulated data (Section 4), training on the known e xpressions and predicting the T/F val ues of the unseen expressions, with the cross-entropy loss. For top-k recommendation tasks, we use the pair-wise training strategy [ 19 ] to train the model – a commonly used training strategy in many ranking tasks – which usually performs better than point-wise training. In detail, we use the positi ve interactions to train the baseline models, and use the expressions corresponding to the positiv e interactions to train our NLN. For each positi ve interaction v + , we randomly sample an item the user dislikes or has ne ver interacted with before as the ne gati ve sample v − in each epoch. Then the loss function of baseline models is: L = − X v + log sig moid ( p ( v + ) − p ( v − )) + λ Θ k Θ k 2 F (8) where p ( v + ) and p ( v − ) are the predictions of v + and v − , respectiv ely , and λ Θ k Θ k 2 F is ` 2 - regularization. The loss function encourages the predictions of positive interactions to be higher 3 https://grouplens.org/datasets/movielens/100k/ 4 http://jmcauley.ucsd.edu/data/amazon/index.html 6 than the neg ativ e samples. For our NLN, suppose the logic e xpression with v + as the target item is e + = ¬ ( · · · ) ∨ v + , then the ne gativ e expression is e − = ¬ ( · · · ) ∨ v − , which has the same history interactions to the left of ∨ . Then the loss function of NLN is: L = − X e + log sig moid ( p ( e + ) − p ( e − )) + λ l X i r i + λ ` X w ∈ W k w k 2 F + λ Θ k Θ k 2 F (9) where p ( e + ) and p ( e − ) are the predictions of e + and e − , respecti vely , and other parts are the logic, vector length and ` 2 regularizers as mentioned in Section 2. In top-k ev aluation, we sample 100 v − for each v + and e valuate the rank of v + in these 101 candidates. This way of data partition and ev aluation is usually called the Lea ve-One-Out setting in personalized recommendation. 5.1 Results Analysis T able 3: Performance on recommendation task ML-100k Amazon Electronics Preference 1 T op-K 2 Preference T op-K A UC nDCG@10 A UC nDCG@10 BiasedMF [15] 0.8017 ± 0.0002 0.3700 ± 0.0027 0.6448 ± 0.0003 0.3449 ± 0.0006 SVD++ [14] 0.8170 ± 0.0004 0.3651 ± 0.0022 0.6667 ± 0.0005 0.3902 ± 0.0003 NCF [10] 0.8063 ± 0.0006 0.3589 ± 0.0020 0.6723 ± 0.0008 0.3358 ± 0.0011 NLN- R l 0.7218 ± 0.0001 0.3711 ± 0.0069 0.6490 ± 0.0006 0.4075 ± 0.0036 NLN 0.8211 ± 0.0004 * 0.3807 ± 0.0046 * 0.6894 ± 0.0018 * 0.4113 ± 0.0015 * 1. Binary preference prediction tasks 2. T op-K recommendation tasks *. Significantly better than the best baselines (italic ones) with p < 0 . 05 On ML-100k, λ l and λ ` are set to 1 × 10 − 5 . On Electronics, they are set to 1 × 10 − 6 and 1 × 10 − 4 respectiv ely . The overall performance of models on two datasets and two tasks are on T able 3. BiasedMF [ 15 ] is a traditional recommendation method based on matrix factorization. SVD++ [ 14 ] is also based on matrix factorization b ut it considers the history implicit interactions of users when predicting, which is one of the best traditional recommendation models. NCF [ 10 ] is Neural Collaborativ e Filtering, which conducts collaborati ve filtering with a neural netw ork, and it is one of the state-of-the-art neural recommendation models using only the user-item interaction matrix as input. Their loss functions are modified as Equation 8 in top-k recommendation tasks. NLN- R l provides comparable results on top-k recommendation tasks b ut performs relati vely worse on preference prediction tasks. Binary preference prediction tasks are someho w similar to the T/F prediction task on simulated data. Although personalized recommendation is not a standard logical inference problem, logical inference still helps in this task, which is shown by the results – it is clear that on both the preference prediction and the top-k recommendation tasks, NLN achie ves the best performance. NLN makes more significant improvements on ML-100k because this dataset is denser that helps NLN to estimate reliable logic rules from data. Excellent performance on recommendation tasks re veals the promising potential of NLN. Note that NLN did not e ven use the user ID in prediction, which is usually considered important in personalized recommendation tasks. Our future work will consider making personalized recommendations with predicate logic. 0 1e-06 1e-05 0.0001 0.001 0.01 0.1 λ l - W eigh t of Logic Regularizers 0 . 72 0 . 74 0 . 76 0 . 78 0 . 80 0 . 82 T est A UC ML-100k Preference 0 1e-06 1e-05 0.0001 0.001 0.01 0.1 λ l - W eigh t of Logic Regularizers 0 . 36 0 . 37 0 . 38 0 . 39 T est nDCG@10 ML-100k T op-K Figure 4: Performance with different weights of logic re gularizers. 7 • W eight of Logic Re gularizers . Results of using different weights of logical regularizers verify that logical inference is helpful in making recommendations, as shown in Figure 4. Recommendation tasks can be considered as making fuzzy logical inference according to the history of users, since a user interaction with one item may imply a high probability of interacting with another item. On the other hand, learning the representations of users and items are more complicated than solving standard logical equations, since the model should have sufficient generalization ability to cope with redundant or e ven conflicting input e xpressions. Thus NLN, an integration of logic inference and neural representation learning, performs well on the recommendation tasks. The weights of logical regularizers should be smaller than that on the simulated data because it is not a complete propositional logic inference problem, and too big logical regularization weights may limit the expressi veness po wer and lead to a drop in performance. 6 Related W ork 6.1 Neural Symbolic Learning McCulloch und Pitts [18] proposed one of the first neural system for boolean logic in 1943. Re- searchers further developed logical programming systems to make logical inference [ 5 , 11 ], and proposed neural knowledge representation and reasoning frameworks [ 2 , 1 ] for logical reasoning. They all adopt meticulously designed neural architectures to achie ve the ability of logical inference. Although Garcez u. a. [4] ’ s framework has been verified helpful in nonclassical logic, abductiv e reasoning, and normati ve multi-agent systems, these frame works focus more on hard logic reason- ing and are short of learning representations and generalization ability compared with deep neural networks, and thus are not suitable for reasoning ov er large-scale, heterogeneous, and noisy data. 6.2 Deep Learning with Logic Deep learning has achiev ed great success in many areas. Howe ver , most of them are data-driven models without the ability of logical reasoning. Recently there are several w orks using deep neural networks to solve logic problems. Hamilton u. a. [7] embedded logical queries on knowledge graphs into vectors. Johnson u. a. [12] and [ 23 ] designed deep framew orks to generate programs and make visual reasoning automatically . Y ang u. a. [22] proposed a Neural Logic Programming system to learn probabilistic first-order logical rules for kno wledge base reasoning. Dong u. a. [3] dev eloped Neural Logic Machines trying to learn inductiv e logical rules from data. Researchers are e ven trying to solve SA T problems with neural networks [ 21 ]. These works use pre-designed model structures to process dif ferent logical inputs, which is different from our NLN approach that constructs dynamic neural architectures. Although they help in logical tasks, they are less flexible in terms of model architecture, which makes them problem-specific and limits their application in a di verse range of both theoretical and practical tasks. 7 Conclusion & Discussions In this work, we proposed a Neural Logic Network (NLN) framework to make logical inference with deep neural networks. In particular , we learn logic variables as vector representations and logic operations as neural modules re gularized by logical rules. The integration of logical inference and neural network re veals a promising direction to design deep neural networks for both abilities of logical reasoning and generalization. Experiments on simulated data show that NLN works well on theoretical logical reasoning problems in terms of solving logical equations. W e further apply NLN on personalized recommendation tasks effortlessly and achie ved e xcellent performance, which rev eals the prospect of NLN in terms of practical tasks. W e believ e that empowering deep neural networks with the ability of logical reasoning is essential to the next generation of deep learning. W e hope that our work provides insights on de veloping neural networks for logical inference. In this work, we mostly focused on propositional logical reasoning with neural networks, while in the future, we will further explore predicate logic reasoning based on our neural logic network architecture, which can be easily extended by learning predicate operations as neural modules. W e will also e xplore the possibility of encoding knowledge graph reasoning based on NLN, and applying NLN to other theoretical or practical problems such as SA T solvers. 8 References [1] B RO W N E , Antony ; S U N , Ron: Connectionist inference models. In: Neural Networks 14 (2001), Nr . 10, S. 1331–1355 [2] C L O E T E , Ian ; Z U R A D A , Jacek M.: Kno wledge-based neurocomputing. (2000) [3] D O N G , Honghua ; M AO , Jiayuan ; L I N , Tian ; W A N G , Chong ; L I , Lihong ; Z H O U , Denny: Neural Logic Machines. In: ICLR (2019) [4] G A R C E Z , Artur S. ; L A M B , Lus C. ; G A B BAY , Dov M.: Neural-Symbolic Cognitiv e Reasoning. (2008) [5] G A R C E Z , Artur S A. ; Z A V E RU C H A , Gerson: The connectionist inducti ve learning and logic programming system. In: Applied Intelligence 11 (1999), Nr . 1, S. 59–77 [6] G R A V E S , Alex ; S C H M I D H U B E R , Jürgen: 2005 Special Issue: Framewise phoneme classifica- tion with bidirectional LSTM and other neural network architectures. In: Neural Networks 18 (2005), Nr . 5-6, S. 602–610 [7] H A M I L T O N , W ill ; B A JA J , Payal ; Z I T N I K , Marinka ; J U R A F S K Y , Dan ; L E S KO V E C , Jure: Em- bedding logical queries on knowledge graphs. In: Advances in Neural Information Pr ocessing Systems , 2018, S. 2026–2037 [8] H A R P E R , F M. ; K O N S T A N , Joseph A.: The movielens datasets: History and context. In: Acm transactions on inter active intelligent systems (tiis) 5 (2016), Nr . 4, S. 19 [9] H E , Ruining ; M C A U L E Y , Julian: Ups and downs: Modeling the visual e volution of f ashion trends with one-class collaborativ e filtering. In: pr oceedings of the 25th international confer ence on world wide web International W orld W ide W eb Conferences Steering Committee (V eranst.), 2016, S. 507–517 [10] H E , Xiangnan ; L I AO , Lizi ; Z H A N G , Hanwang ; N I E , Liqiang ; H U , Xia ; C H UA , T at-Seng: Neural collaborati ve filtering. In: Pr oceedings of the 26th International Confer ence on W orld W ide W eb International W orld W ide W eb Conferences Steering Committee (V eranst.), 2017, S. 173–182 [11] H Ö L L D O B L E R , Steffen ; K A L I N K E , Yvonne ; K I , Fg W . u. a.: T ow ards a ne w massi vely parallel computational model for logic programming. In: In ECAI’94 workshop on Combining Symbolic and Connectioninst Pr ocessing Citeseer (V eranst.), 1994 [12] J O H N S O N , Justin ; H A R I H A R A N , Bharath ; M A AT E N , Laurens van der ; H O FF M A N , Judy ; F E I - F E I , Li ; Z I T N I C K , C L. ; G I R S H I C K , Ross: Inferring and Executing Programs for V isual Reasoning. In: 2017 IEEE International Conference on Computer V ision (ICCV) IEEE (V eranst.), 2017, S. 3008–3017 [13] K I N G M A , Diederik P . ; B A , Jimmy: Adam: A method for stochastic optimization. In: arXiv pr eprint arXiv:1412.6980 (2014) [14] K O R E N , Y ehuda: Factorization meets the neighborhood: a multifaceted collaborativ e filtering model. In: Proceedings of the 14th A CM SIGKDD international confer ence on Knowledge discovery and data mining A CM (V eranst.), 2008, S. 426–434 [15] K O R E N , Y ehuda ; B E L L , Robert ; V O L I N S K Y , Chris: Matrix Factorization T echniques for Recommender Systems. In: Computer 42 (2009), Nr . 8, S. 30–37 [16] L E S H N O , Moshe ; L I N , Vladimir Y . ; P I N K U S , Allan ; S C H O C K E N , Shimon: Multilayer feedforward networks with a nonpolynomial acti vation function can approximate an y function. In: Neural networks 6 (1993), Nr . 6, S. 861–867 [17] M A A T E N , Laurens van d. ; H I N T O N , Geoffre y: V isualizing data using t-SNE. In: Journal of machine learning r esear ch 9 (2008), Nr . Nov , S. 2579–2605 [18] M C C U L L O C H , W arren S. ; P I T T S , W alter: A logical calculus of the ideas immanent in nervous activity . In: The bulletin of mathematical biophysics 5 (1943), Nr . 4, S. 115–133 9 [19] R E N D L E , Steffen ; F R E U D E N T H A L E R , Christoph ; G A N T N E R , Zeno ; S C H M I D T - T H I E M E , Lars: BPR: Bayesian personalized ranking from implicit feedback. In: Pr oceedings of the 25th confer ence on uncertainty in artificial intelligence A U AI Press (V eranst.), 2009, S. 452–461 [20] S C H U S T E R , Mike ; P A L I W A L , Kuldip K.: Bidirectional recurrent neural networks. In: IEEE T r ansactions on Signal Pr ocessing 45 (1997), Nr . 11, S. 2673–2681 [21] S E L S A M , Daniel ; L A M M , Matthe w ; B Ü N Z , Benedikt ; L I A N G , Percy ; M O U R A , Leonardo de ; D I L L , David L.: Learning a SA T solver from single-bit supervision. In: arXiv pr eprint arXiv:1802.03685 (2018) [22] Y A N G , Fan ; Y A N G , Zhilin ; C O H E N , W illiam W .: Dif ferentiable learning of logical rules for knowledge base reasoning. In: Pr oceedings of the 31st International Confer ence on Neural Information Pr ocessing Systems Curran Associates Inc. (V eranst.), 2017, S. 2316–2325 [23] Y I , Kexin ; W U , Jiajun ; G A N , Chuang ; T O R R A L BA , Antonio ; K O H L I , Pushmeet ; T E N E N - BA U M , Josh: Neural-symbolic vqa: Disentangling reasoning from vision and language under- standing. In: Advances in Neural Information Pr ocessing Systems , 2018, S. 1031–1042 10 APPENDIX T o help understand the training process, we show the curves of Training, V alidation, and T esting RMSE during the training process on the simulated data in Figure 5. 0 20 40 60 80 100 T raining Ep o c hs 0 . 0 0 . 1 0 . 2 0 . 3 0 . 4 0 . 5 RMSE n = 1 × 10 3 , m = 5 × 10 3 T rain V alidation T est Figure 5: RMSE curves during the training process. Furthermore, the visualization of variable embeddings in dif ferent epochs are sho wn in Figure 6. − 10 0 10 Ep o c h 0 − 10 0 10 − 5 0 5 Ep o c h 1 − 5 0 5 − 10 0 10 Ep o c h 3 − 10 0 10 − 20 − 10 0 10 Ep o c h 5 − 20 0 20 − 25 0 25 Ep o c h 10 − 20 0 20 − 25 0 Ep o c h 20 0 50 − 20 0 20 Ep o c h 30 − 50 0 50 − 50 0 50 Ep o c h 50 − 20 0 20 − 25 0 25 Ep o c h 80 − 20 0 20 40 − 25 0 25 Ep o c h 100 − 25 0 25 n = 1 × 10 3 , m = 5 × 10 3 p ositiv e negativ e Figure 6: V isualization of the variable embeddings in dif ferent epochs based on t-SNE. 11

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment