CameraTransform: a Scientific Python Package for Perspective Camera Corrections

Scientific applications often require an exact reconstruction of object positions and distances from digital images. Therefore, the images need to be corrected for perspective distortions. We present CameraTransform, a Python package that performs a perspective image correction whereby the height, tilt/roll angle and heading of the camera can be automatically obtained from the images if additional information such as GPS coordinates or object sizes is provided. We present examples of images of penguin colonies that are recorded with stationary cameras and from a helicopter.

💡 Research Summary

The paper introduces CameraTransform, an open‑source Python package designed to correct perspective distortion in single images by automatically estimating the camera’s extrinsic parameters (height, tilt, roll, and heading) from minimal auxiliary information such as GPS coordinates or known object sizes. The authors begin by reviewing the geometric foundations of image formation: world points are expressed in homogeneous (projective) coordinates and mapped to image points via a 3 × 4 camera matrix C, which is the product of an intrinsic matrix C_intr (derived from focal length, sensor dimensions, and pixel resolution) and an extrinsic matrix C_extr (encoding the camera’s 3‑D position and three rotation angles). The intrinsic matrix is straightforward to compute, while the extrinsic matrix is built from a translation vector (x, y, –height) and three rotation matrices for tilt (about the x‑axis), roll (about the optical axis), and heading (about the vertical axis).
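The construction of the camera matrix described above can be sketched with NumPy alone. This is a minimal illustration, not the package's own code: the function names, the axis conventions (world x east, y north, z up; tilt 0° looking straight down and 90° looking at the horizon; heading clockwise from north), the sign of the roll angle, and the example lens values are all assumptions chosen for this sketch.

```python
import numpy as np

def intrinsic_matrix(focal_mm, sensor_width_mm, image_width_px, image_height_px):
    """3x3 pinhole intrinsics; the principal point is assumed at the image centre."""
    f_px = focal_mm / sensor_width_mm * image_width_px  # focal length in pixels
    return np.array([[f_px, 0.0, image_width_px / 2.0],
                     [0.0, f_px, image_height_px / 2.0],
                     [0.0, 0.0, 1.0]])

def extrinsic_matrix(x, y, height, tilt_deg, roll_deg=0.0, heading_deg=0.0):
    """3x4 world-to-camera matrix [R | -R p] for a camera at p = (x, y, height)."""
    t, r, h = np.radians([tilt_deg, roll_deg, heading_deg])
    # Heading: rotation about the vertical (world z) axis, clockwise from north (+y).
    R_heading = np.array([[np.cos(h), -np.sin(h), 0.0],
                          [np.sin(h),  np.cos(h), 0.0],
                          [0.0, 0.0, 1.0]])
    # Tilt: rotation about the camera x-axis (0 deg = straight down, 90 deg = horizon).
    R_tilt = np.array([[1.0, 0.0, 0.0],
                       [0.0, -np.cos(t), -np.sin(t)],
                       [0.0,  np.sin(t), -np.cos(t)]])
    # Roll: rotation about the optical (camera z) axis.
    R_roll = np.array([[np.cos(r), -np.sin(r), 0.0],
                       [np.sin(r),  np.cos(r), 0.0],
                       [0.0, 0.0, 1.0]])
    R = R_roll @ R_tilt @ R_heading
    position = np.array([x, y, height], dtype=float)
    return np.hstack([R, (-R @ position)[:, None]])

def camera_matrix(intrinsic, extrinsic):
    """Full 3x4 camera matrix C = C_intr @ C_extr."""
    return intrinsic @ extrinsic

# Hypothetical camera: 14 mm lens, 17.3 mm wide sensor, 4608 x 3456 px image,
# mounted 20 m above the ground and tilted 80 deg from the vertical.
C = camera_matrix(intrinsic_matrix(14.0, 17.3, 4608, 3456),
                  extrinsic_matrix(0.0, 0.0, 20.0, tilt_deg=80.0))
print(C.shape)  # (3, 4)
```

Writing the translation as -R p keeps the camera position in world coordinates, which makes the height parameter directly readable; other sign conventions are equally valid as long as projection and back-projection use the same one.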

Projection from world to image is a simple matrix multiplication followed by division by the homogeneous scale factor. Inverse projection is more involved because depth information is lost; the authors resolve this by fixing one world coordinate (e.g., assuming the ground plane z = 0) and inverting a reduced 3 × 3 sub‑matrix of C.
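A small sketch of both directions, assuming a generic 3 × 4 camera matrix C: the forward projection divides by the homogeneous scale factor, and the inverse fixes the height z so that C collapses to an invertible 3 × 3 matrix. The function names and the hand-built example camera (focal length 1000 px, 10 m above the ground, looking horizontally along +y) are illustrative assumptions, not part of the package.

```python
import numpy as np

def project_to_image(C, world_points):
    """Map Nx3 world points to Nx2 pixel coordinates with a 3x4 camera matrix C."""
    world_points = np.atleast_2d(world_points).astype(float)
    homogeneous = np.hstack([world_points, np.ones((len(world_points), 1))])
    p = homogeneous @ C.T
    return p[:, :2] / p[:, 2:3]  # divide by the homogeneous scale factor

def image_to_plane(C, pixels, z=0.0):
    """Back-project Nx2 pixels onto the horizontal plane at height z."""
    pixels = np.atleast_2d(pixels).astype(float)
    # With z fixed, C reduces to a 3x3 matrix acting on (x, y, 1).
    H = np.column_stack([C[:, 0], C[:, 1], C[:, 2] * z + C[:, 3]])
    q = np.hstack([pixels, np.ones((len(pixels), 1))]) @ np.linalg.inv(H).T
    xy = q[:, :2] / q[:, 2:3]
    return np.hstack([xy, np.full((len(xy), 1), z)])

# Hand-built example camera (assumed values).
K = np.array([[1000.0, 0.0, 500.0],
              [0.0, 1000.0, 400.0],
              [0.0, 0.0, 1.0]])
R = np.array([[1.0, 0.0, 0.0],    # camera x = world x
              [0.0, 0.0, -1.0],   # camera y (image down) = world -z
              [0.0, 1.0, 0.0]])   # camera z (optical axis) = world +y
position = np.array([0.0, 0.0, 10.0])
C = K @ np.hstack([R, (-R @ position)[:, None]])

ground_point = np.array([[5.0, 100.0, 0.0]])
pixel = project_to_image(C, ground_point)   # pixel (550, 500)
recovered = image_to_plane(C, pixel)        # back on the ground plane at (5, 100, 0)
print(pixel, recovered)
```

The back-projection is only meaningful for pixels below the horizon; pixels at or above it correspond to rays that never intersect the chosen plane in front of the camera.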

The core contribution is a set of fitting routines that infer the extrinsic parameters from image features:

-

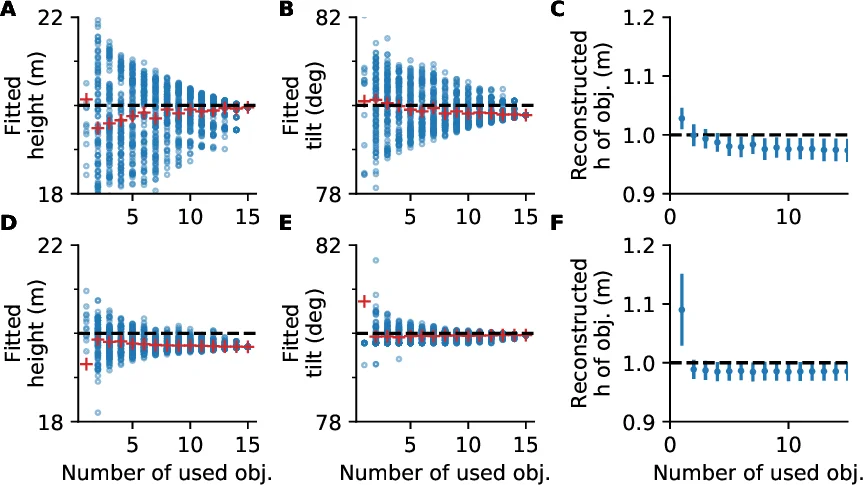

Height‑based fitting – Users manually mark the foot and head of objects whose true height is known (e.g., penguins ≈ 1 m). The algorithm projects the foot points to world coordinates, lifts them by the known height, re‑projects them, and minimizes the residual between the projected heads and the manually marked heads using a non‑linear least‑squares optimizer. This yields estimates of camera height, tilt, and optionally roll and heading.

-

Horizon constraint – When a visible horizon line is present, the user can draw it; the fitting routine adds a term that penalizes deviation between the fitted horizon (derived from the current camera model) and the user‑drawn line. This dramatically reduces uncertainty, especially for tilt, as demonstrated in synthetic experiments.

-

Geo‑referencing via image registration – For images taken from large tilt angles (e.g., helicopter surveys) where object size does not vary enough for height‑based fitting and the horizon may be absent, the package can align the image to a georeferenced map or satellite photo. By selecting at least eight corresponding points, a cost function measuring the Euclidean distance between transformed image points and map points is minimized, simultaneously solving for camera position, height, tilt, and heading.
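The height-based fitting step can be sketched on synthetic data with `scipy.optimize.least_squares`. This is a simplified illustration under stated assumptions, not the package's implementation: the intrinsics are fixed to made-up values, the camera sits at (0, 0, height) with no roll and a northward heading, the tilt convention is 0° straight down and 90° at the horizon, the marks are noise-free, and all helper names are hypothetical.

```python
import numpy as np
from scipy.optimize import least_squares

F_PX, CX, CY = 3000.0, 2000.0, 1500.0  # assumed intrinsics in pixels
OBJECT_HEIGHT = 1.0                    # known object height in metres

def camera_matrix(height, tilt_deg):
    """3x4 camera matrix for a camera at (0, 0, height), heading north, no roll."""
    t = np.radians(tilt_deg)
    K = np.array([[F_PX, 0.0, CX], [0.0, F_PX, CY], [0.0, 0.0, 1.0]])
    R = np.array([[1.0, 0.0, 0.0],
                  [0.0, -np.cos(t), -np.sin(t)],
                  [0.0,  np.sin(t), -np.cos(t)]])
    return K @ np.hstack([R, (-R @ np.array([0.0, 0.0, height]))[:, None]])

def project(C, points):
    """World points (Nx3) to pixels (Nx2)."""
    p = np.hstack([points, np.ones((len(points), 1))]) @ C.T
    return p[:, :2] / p[:, 2:3]

def to_ground(C, pixels):
    """Pixels (Nx2) to world points on the ground plane z = 0."""
    H = C[:, [0, 1, 3]]  # reduced 3x3 matrix for z = 0
    q = np.hstack([pixels, np.ones((len(pixels), 1))]) @ np.linalg.inv(H).T
    xy = q[:, :2] / q[:, 2:3]
    return np.hstack([xy, np.zeros((len(xy), 1))])

def residuals(params, feet_px, heads_px):
    """Pixel offsets between re-projected and marked head positions."""
    C = camera_matrix(*params)
    feet_world = to_ground(C, feet_px)                       # feet onto the ground
    heads_world = feet_world + [0.0, 0.0, OBJECT_HEIGHT]     # lift by known height
    return (project(C, heads_world) - heads_px).ravel()      # compare with marks

# Synthetic "marks": 20 objects seen by a camera 20 m high, tilted 80 deg.
rng = np.random.default_rng(0)
true_feet = np.column_stack([rng.uniform(-30, 30, 20),
                             rng.uniform(30, 150, 20),
                             np.zeros(20)])
C_true = camera_matrix(20.0, 80.0)
feet_px = project(C_true, true_feet)
heads_px = project(C_true, true_feet + [0.0, 0.0, OBJECT_HEIGHT])

# Bounds keep the trial cameras physically plausible and all feet below the horizon.
fit = least_squares(residuals, x0=[15.0, 75.0], args=(feet_px, heads_px),
                    bounds=([1.0, 45.0], [100.0, 87.0]))
height_fit, tilt_fit = fit.x
print(height_fit, tilt_fit)
```

A horizon term or map correspondences would enter the same way, as extra rows appended to the residual vector, so that a single optimizer call balances all available constraints.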

The authors conduct a sensitivity analysis by varying camera height and tilt by ±10 % and projecting 1 m tall objects at distances of 50–300 m. Height variations produce negligible reconstruction error, whereas tilt variations cause errors that increase with distance, confirming that accurate tilt estimation is critical.

Two real‑world case studies illustrate the workflow. In the first, a stationary camera monitors an Emperor penguin colony; 20 manually marked foot–head pairs yield a fitted camera height of 23.7 m, close to the GPS‑measured 25.7 m. In the second, a helicopter image of a King penguin colony is registered to a Google Earth orthophoto using eight terrain features; the fit aligns all but one point (which had shifted due to river course change) within ~1 m.

CameraTransform is released under GPLv3, depends on NumPy and SciPy, and provides both functional and object‑oriented APIs (e.g., fit_camera_from_heights, project_world_to_image, Camera). Comprehensive documentation, installation instructions, and example notebooks are hosted at http://cameratransform.readthedocs.io.

In summary, CameraTransform offers a practical, mathematically transparent solution for post-hoc camera calibration and perspective correction in ecological and geospatial research. By leveraging minimal field data (known object dimensions, horizon lines, or map correspondences), it enables accurate reconstruction of real-world positions from single images, facilitating quantitative analyses that would otherwise require costly or impractical in-field measurements. Future extensions could incorporate multi-camera rigs, video streams, or deep-learning-based feature detection to broaden its applicability across scientific and engineering domains.
