Respect Your Emotion: Human-Multi-Robot Teaming based on Regret Decision Model

Often, when modeling human decision-making behaviors in the context of human-robot teaming, the emotion aspect of human is ignored. Nevertheless, the influence of emotion, in some cases, is not only undeniable but beneficial. This work studies the hu…

Authors: Longsheng Jiang, Yue Wang

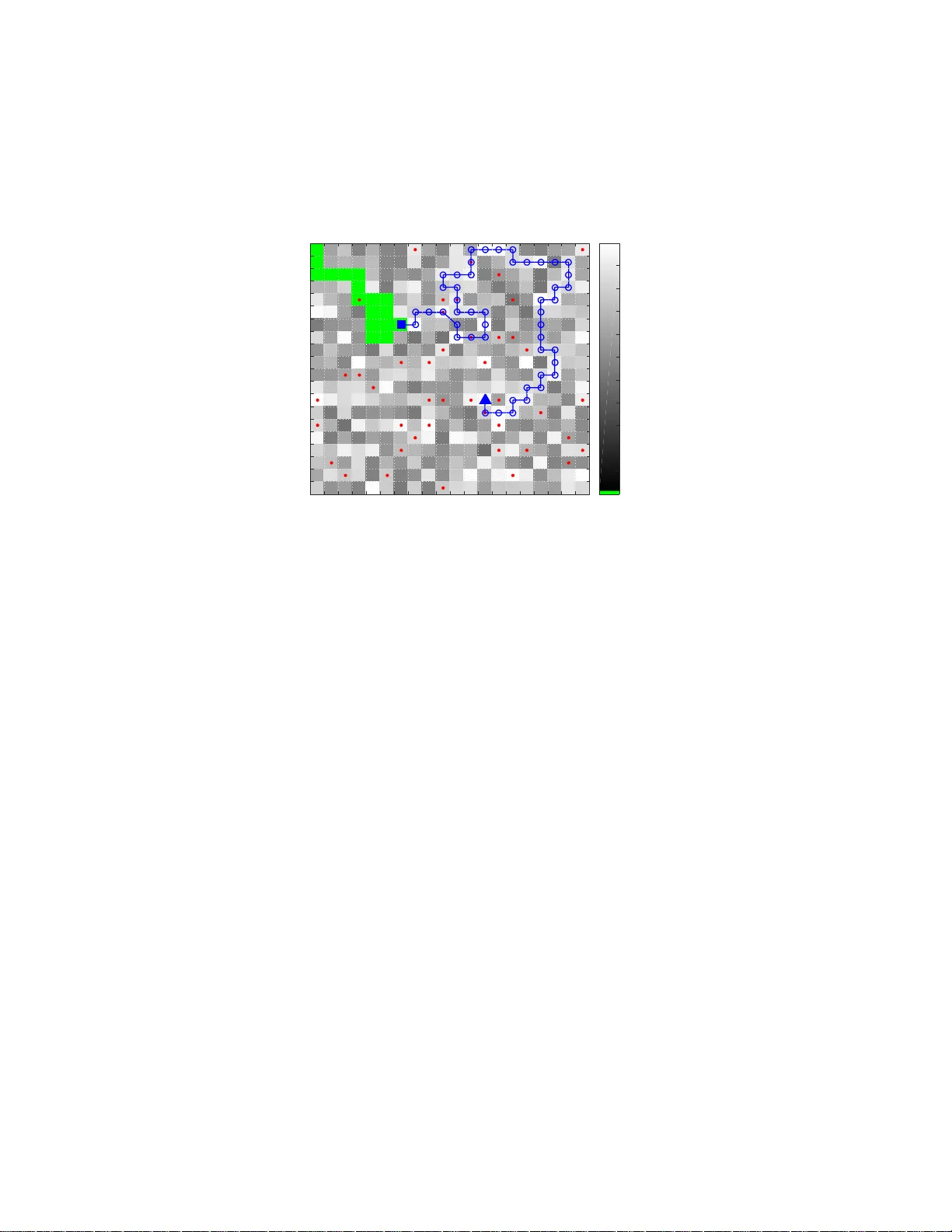

Respect Y our Emotion: Human-Multi-Robot T eaming based on Re gret Decision Mod el Longshe ng Jiang and Y ue W ang Abstract Often, when modeling human decision-making behaviors in the context of human-robot teaming, the emotion aspect of human is igno red. Nev ertheless, the influence of emotion, in some cases, is n ot only undeniable but ben eficial. This work studies the human-like characteristics broug ht by r egret emotion in one-human-multi-robot teaming for the application of domain search . In such application, the task mana gement load is outsourced to the robots t o reduce the human’ s workload, freeing t he human to do more important work. The regret decision model is first used by each robot for deciding whether to request human service, then is extende d for optimally queuing the requests from multiple robots. For the mov ement of the robots in the domain search, w e designed a path planning algorithm based on dynamic programming for each robot. The simulation sho ws that the human-like characteristics, namely , risk- seeking and r i sk-av ersion, indeed bring some appealing effects for balancing the workload and performance in the human-multi-robot team. I . I N T R O D U C T I O N A team of many low-cost small robots may of ten p erform b etter than one big expen si ve robo t. The rob ot team is flexible, r obust, and the robots are expendab le. However , low-cost ro bots d o n o t have high ly accu rate sen so rs. These d rawbacks can be alleviated by teamin g the robots with hum an operator s. Due to this reaso n , the resear ch in studying the interaction between o ne hu man and multiple robots has attracted much interests [1 ] . One challen ge in such c ollaboration is th e balance betwe e n h uman workload and team perf ormance [ 2]. Humans are much better in sensing and recognition than lo w- cost robots. Thus, utilizing human s can attain b etter task outcomes. Howe ver , h u mans are also slower , mor e expen sive and subject to fatigue. Hence, hu man worklo a d mu st be taken into co nsideration whe n desig n ing the in teraction b etween a h uman a nd multiple robots. For instanc e , the workload of task manag e ment can be outsourc e d to r o bots to certain extents [3]; an in tellig ent agent can be used to coordin a te the gro up of robots [4]. Because the human in the team is not only a co llaborator but a lso a sup ervisor, h e/she holds the respon sib ility of man aging the robo ts. When the mana g ement lo ad is d elegated to the rob ots, the robo ts shou ld make decisions in a way similar to that of the human. Th us, a quantitative mod el of the hu man’ s d ecision-mak ing is need ed. In early d a ys, when needing a qu a n titati ve decisio n -making m o del, humans were o ften approx im ated as optima l controller s [5] or expected u tility maximizers [6]. Howe ver, research ers gradually noticed the inevitable influence of em otion to decision -making [7] an d started to incorporate the effects of emotion wh en designing their ro bots [8]. Emotion is not o nly o f affection impor tance, sometimes it actually helps people to make wiser de c isio n s. For instance, it is th e wariness of futu re disasters an d the r egre t when up on the d isasters that bias p e ople tow ard purcha sin g insur ances [9]. Regret is im portant fo r hum an decision-mak in g. It explains peop le’ s risk-seek in g and risk- averse behaviors. Risk- av erse hap pens, as in the above insur ance example, whe n facing two o p tions 1) of a high c ost but low occur rence probab ility and 2 ) of a small b ut su r e cost, respectiv ely . Risk-seeking h appens when facing two o ptions 1) of a moderate cost but high occurre nce pro bability an d 2) of a sure co st close to the mo derate cost, respectively . These two typ es of decision-ma k ing pattern shou ld not be con sidered as anoma lies b ecause they are ob served in th e majority of p eople [10]. In this work, we will include the hum a n risk-attitud es in the coord in ation of a huma n -multi-ro bot team in perfor ming a domain search task . In the task, the human o perator outsou rces the task management load to each robot and an in telligent agent. The novelty is that we u se the extende d versions of regret theory [9] as th e decision- making m odel, for the ro b ots’ decision- m aking and for th e agen t’ s queue -orderin g. The intuition beh ind regret theory is that both the risk-seeking an d risk-averse behaviors are caused by regretful emotion: Choo sing one op tion means fo rgo ing the other, and you migh t regret that y ou did no t choose otherwise. The th eory is b acked by , in neurology , the identification of brain r egions which generates re gret d uring de c ision-making [1 1], and b y the quantitative mea su rement of regret theory [12]. The authors are with the Mechanic al Engineering Depa rtment, Cle mson Uni versity , Clemson, SC, 29634, USA. E-mail: { longshj, yue6 } @g.cl emson.edu. Research is supported in part by the National Science Foundation under Grant No. CMMI-1454139. W e formu late the task sear ch pro blem in section I I, and explain the d ecision-mak ing of eac h robo t in section III. Section IV describes h ow the several waiting robots are queued o ptimally . Section V shows the path plan n ing of each r o bot. W e show the simulatio n and r esults in section VI and conclude the work in section VII. I I . P RO B L E M F O R M U L A T I O N A group of target objects, with a known total amount, are scattered in a large 2 -D d omain with unknown locations. A team—com prising of mu ltiple robots and a h uman o perator—is tasked to find th ose objects. While the rob ots are dep loyed o n field, the operator stays in the oper ating statio n . Robots ar e equipped with v ision sensors, GPS and c o mmunicatio n u nits. They have direct commun ica tion with th e station. The large domain is d i vided into small regions with only one ro bot in each . Each region is divided f urther into cells. The size of each cell is assum ed to b e large eno ugh to contain at m ost one object. Each r obot r ∈ R , where R is the set of all r obots, sweep s the cells o n e by on e to d e tect if a cell con tains an object. When a rob ot r is at cell x r , du e to the limitatio n of its sensing capability , its detection r esult Y r might po ssibly be correct o r wrong . Alternatively , the r obot has th e o ption to request th e h uman to tele-operate itself; the com munication chan nel is established f or the human to see th rough th e rob o t’ s v ideo ca m era. Th e hum an detec tion accuracy is superior, yet with a h igher cost. The robo t needs to choose b etween acc e pting its own detection results (optio n R) an d req uesting human ser v ice (op tion H). When multiple r o bots choo se option H simultan eously , the station qu eues the requests. The complete state of robot r , ( x r , Y r ) , describes b oth the cell at which the robo t curr e n tly locates ( x r ) and correctne ss of the cu rrent detection ( Y r ). It is assumed that the rob ot motio n control is precise enou gh so that x r is determin istic. As mention e d above, howev er , the detectio n result Y r is uncer ta in over two po ssible states: y c for correct detection and y w for w r ong detec tio n. The prior prob ability distribution (belief) of Y r roots in the prio r knowledge o f the possible locations of the objects as well as the sensor model of robot r . The prior p robability of an object present (or ab sent) a t ce ll x r can be represen ted as p ( S x = s p ) (or p ( S x = s a ) ), wher e s p (or s a ) den otes ob ject presence (o r ab sence). For brevity , when denoting proba b ilities, the r andom variables in th e parentheses ar e omitted, e.g ., p ( S x = s p ) = p ( s p ) . The observations ar e represented by O = o p for presen ce and O = o a for a b sence. The sensor model for ro bot r is described by fo ur kn own probab ilities: p ( o a | s a , r ) , p ( o p | s a , r ) , p ( o p | s p , r ) and p ( o a | s p , r ) . The prob ability p r for robot r obtain ing Y r = y c at a cell thus is p r , p ( y c | r ) = p ( o a | s a , r ) p ( s a ) + p ( o p | s p , r ) p ( s p ) . The p robability f o r robo t r to obtain Y r = y w at a cell follows immediate ly : p ( y w | r ) = 1 − p r . The variables in ( x r , Y r ) have different observability as well. W e assume that the localization unce r tainty in GPS is n egligible: x r is f ully observable. H owever , Y r is o nly partially ob servable. Even after an ob servation at cell x r , the actual state of Y r is still no t kn own fo r sure, althoug h th e ob servation help s update the be lief . In deter mining whether to request human serv ice, two types of inform ation sho uld be consid ered: p e r forman ce and cost. Op tion R has compr omised p erforma n ce. Its two p ossible o utcomes, rig ht or wrong , can be de fin ed by the r esulting costs. W e consider the co rrect detectio n causing n o cost, thus c ( y c ) = 0 ; the wrong detectio n howe ver has a cost c ( y w ) < 0 . The human service is con sid e red to have perf ect p erforman ce (a lways y c ), but it has an operation al cost c H < 0 . T he two o p tions are r epresented in T able I. When c H < c ( y w ) < 0 , option R is certainly the choice. Howe ver , wh en c ( y w ) < c H < 0 , how to b a lance the perform ance and cost becom es ch a llenging. Th e later one is the case we study . T A BL E I T W O O P T I O N S R E P R E S E N T E D I N T H E O R I G I N A L F O R M Option H Option R Cost: c H Cost: 0 c ( y w ) Probabil ity: 100% Probabil ity: p r 1 − p r The most pop ular, y e t mo st straightforward, decision-mak ing method is expec ted value theory . It calculates the net advantage o f optio n H relative to option R as e v = c H − (1 − p r ) c ( y w ) . (1) It chooses o p tion H, if e v > 0 ; option R, if e v < 0 ; equ ally liking, otherwise. Multiple r obots ma y requ est huma n service simu ltaneously . When they send their req u ests to the inte llig ent agen t at the station, the agent queues them into a waiting line. The qu euing is u rgency based . Th e human ser ves th e robots one by one. For the team to accomp lish the jo b, each robot needs to move in the domain. Th us, a method of o ptimal path plann ing is needed. I I I . R E G R E T T H E O RY B A S E D D E C I S I O N - M A K I N G The nam e of regret theory co mes from its effort to account f or the in fluence of anticipatory r egre tf ul emotion, which arises fr o m the com parisons of costs. These compar iso ns can b e hig hlighted by representin g T able I in the ne w form in T able II. The last two columns sho w the comparison s of costs, with the joint probabilities f o r the comparisons to occu r (i.e., p r = 1 · p r and 1 − p r = 1 · (1 − p r ) ). Denote the costs of option R as vector c R , [0 , c ( y w )] ; of option H, a s c H , [ c H , c H ] . W e ca n use c R and c H as handles to the two op tions. T A BL E II T W O O P T I O N S R E P R E S E N T E D I N T H E C O M PA R A T I V E F O R M Joint probability p r 1 − p r Cost Option H: c H c H c H Option R: c R 0 c ( y w ) Mathematically , the regret influ ence is mod elled by a function Q (∆ c ) , where the a rgument ∆ c is the difference of co sts in comp arison. The f unction Q (∆ c ) has some important pr operties [9] that include: i) Q (∆ c ) is an odd functio n, ii) Q (∆ c ) is m o notonica lly incr e asing. It is also shown that, in human decision -making , pro babilities are subjec ti vely biased [10], and the ev a lu ation of absolute costs is affected by the range of costs [13]. W e th us in troduce p robability weig hting f unctions w k ( p r ) , k = 1 , 2 , [14] and a constan t cost normalizer c range > 0 to th e original regret theor y [1 5]. The costs afte r normalization are in [ − 1 , 0] . The decision- making in regret theor y is deter mined b y the net advantage of on e option with respect to the oth e r option. I n the case of T able II, the net advantage of c H when u sing c R as th e referen ce is e r ( c H , c R ) , 2 X k =1 w k ( p r ) Q c H ( k ) − c R ( k ) c range , (2) where c ( · ) ( k ) is the k -th elem ent in vector c ( · ) . Th e two fu nctions w 1 and w 2 are d ifferent but d epend on a un ique w -f unction such that w 1 ( p r ) = w ( p r ) an d w 2 ( p r ) = 1 − w ( p r ) , ac c ording to [14]. The ch oice is option H—denoted as c H ≻ c R —if e r ( c H , c R ) > 0 ; o p tion R ( c H ≺ c R ) if e r ( c H , c R ) < 0 ; equally liking ( c H ∼ c R ), o therwise. There, the Q - f unction and the w -fun ction ar e individual specific and can b e measure d [15]. In th is work, we use the f u nctional fo rms for the the Q -fu nction and the w -fu nction. Th ese an alytical expression s agree with the empirica l data in th e literature. The Q -func tio n [ 1 2] and the w - f unction [1 6] are defined as, Q (∆ c ) , α 1 sinh( α 2 ∆ c ) + α 3 ∆ c, (3) w ( p r ) , exp − β 1 − log( p r ) β 2 , (4) where α 1 , α 2 , α 3 > 0 and β 1 , β 2 > 0 are parameter s spe cific to ind i viduals and can be estimated with data. I V . R E G R E T T H E O RY B A S E D Q U E U E - O R D E R I N G It is often the c ase that multiple robots c h oose op tion H simultaneously . Howe ver , the operato r can o nly serve one robot at a time ; the robots need to f orm a waiting line. An ordered waiting lin e can be re presented by a permutatio n. Supp o se N rob ots are cho osing option H. The n umber of permutations is rough ly ab out N ! ( so me service requ e sts may be rejected). The N robots form a set R H . Regarding robot r ∈ R H , the intellige nt ag e n t needs to decide either to reject its request or to designate to it a line position n ∈ { 1 , 2 , . . . , M } , and M ≤ N because of possible ser v ice rejections. Thus, for the intellige n t ag ent, there are M + 1 op tions for robot r , which are de fin ed in set C r , { c H 1 , . . . , c H M , c R | p r } , as in T able I II. Th e cost p art { c H 1 , . . . , c H M , c R } is indepen dent to robo t r , becau se cost vectors c H n depend on line po sition n , and c R on cost c ( y w ) only; set C r relates to ro bot r only because of pr obability p r . As r o bot r is further d own the line, the waiting time is long er , cost c H n is more negativ e, i.e . , c H 1 > c H 2 > . . . > c H M . Then, w e have the f ollowing result. T A BL E III M U L T I P L E O P T I O N S I N C O M PA R A T I V E F O R M F O R R O B OT r Joint probability p r 1 − p r Cost Option H 1 : c H 1 c H 1 c H 1 . . . . . . . . . Option H M : c H M c H M c H M Option R: c R 0 c ( y w ) Theor em 1 . For one certa in option c H n and o ne uncertain option c R , the net advantage e r ( c H n , c R ) is mon otonically increasing with respe ct to scalar co st c H n . Pr oof. Substitute c H n = [ c H n , c H n ] f or c H in Eqn. (2) and take d e riv ative with respect to c H n , we have ∂ e r ∂ c H n ( c H n , c R ) = 1 c range 2 X k =1 w k ( p r ) Q ′ c H n − c R ( k ) c range . Because of Q ′ ( · ) > 0 fro m prop erty (ii), the weighting fu n ction w k ( p r ) > 0 , k = 1 , 2 , for any p r 6 = 0 , an d c range > 0 , the deriv ativ e ∂ e r ∂ c H n > 0 for any c H n . Moreover , in an option set with more than two o ptions, wha t matters is no t only which o p tion is superio r , but how mu ch one op tion is better than ano ther . T o enable th e comp arison in mag n itudes, a comm on reference option should be chosen again st which the net advantage of each option in the set is com puted. W e choo se c R in C r as such referenc e be c a use option c R is th e “bo ttom line” of C r ; ro b ot r opts for o ption H—we call c H n , n = 1 , . . . , M , all as optio n H—if and o nly if c H n ≻ ∼ c R . W e use an augmented permutation matrix P fo r r ecording the order of the N r obots in a line o f len gth M ; whenever a rob ot is rejected o f serv ice (c hoosing c R ), its po sition is set as M + 1 . The accum ulated net advantage function of P can be d efined a s G ( P ) , X r ∈R H e r ( c r , c R ) , (5) where c r ∈ C r and c R is the option R for r obot r . It has th e co nstraint th at if c r 1 = c H m and c r 2 = c H n for a ny two ro bots in R H , respectiv ely , then m 6 = n . W e then can find the optima l P ∗ in the space P of P , P ∗ = ar gmax P ∈P G ( P ) . (6) Each of the optimal queues is a sequence such that the robots in urgency are served first, and th e urgen cy dep ends on h ow fast their n et ad vantages decr e ase. T o find the exact optima, it inv olves enumeratin g all P in P . When N grows large, |P | ≈ N ! , makin g it difficult to compu te P ∗ in real- time. Hence, we use a fast h euristic algorith m to approx imate th e o ptimal solution. Recall c H n denotes the co st of waiting at line po sition n . Forming a line consists of selecting a robo t fo r each position n = M , M − 1 , . . . , 1 . Algo rithm 1 describes a simp le mech anism fo r such a selectio n. Algorithm 1 Optimiza tion for the App roximated Mod el Input: R H Output: P 1: Initialization : M ← 0 , ˆ R H ← R H , and N q ← |R H | 2: while M < N q do 3: M ← M + 1 an d N q ← 0 4: for r ∈ ˆ R H do 5: if e r ( c H M , c R ) > 0 t hen 6: N q ← N q + 1 7: end if 8: end for 9: end while 10: for r ∈ ˆ R H do 11: if e r ( c H M , c R ) ≤ 0 t hen 12: Reject service to ro bot r . 13: Save this choice for robo t r to P 14: Prun in g: ˆ R H ← ˆ R H \ r 15: end if 16: end for 17: for n = M co unting down to n = 1 do 18: Select a rob ot r at p o sition n accor d ing to Eqn. (7) 19: Sa ve th e determin ed position n of ro bot r to P 20: Pruning: ˆ R H ← ˆ R H \ r 21: end for In Algorith m 1, lines 2–9 d etermine the length M of the que u e such that e r ( c H n , c R ) > 0 , n ∈ { 1 , 2 , . . . , M } for the rob ots. I f, because of waiting, th e net advantage becomes e r ( c H n , c R ) ≤ 0 for so m e r , then the robot should opt for option R, he n ce M ≤ N . Lines 10–1 5 reject serving the robots which a re determined better to choo se option R. Lines 16– 20 proce e d to select a robot r at position n from n = M to n = 1 , using r ∗ = ar gmax r ∈ ˆ R H ∆ e r ( c H n , c H n +1 ) , (7) and ∆ e r ( c H n , c H n +1 ) , e r ( c H n +1 , c R ) − e r ( c H n , c R ) . Since in the line, c H n only exists for n ∈ { 1 , . . . , M } , th e artificial c H M +1 is d efined as c H M +1 , c R . It is imp o rtant that this algorithm works backward. Due to Theorem 1, ∆ e r ( c H n , c H n +1 ) < 0 . What Eqn. (7) does is to find th e ∆ e r which is clo sest to zero, meaning th e chang e is not rapid and th e robo t is less urgent. W e thus pu t this robot toward the end of th e waiting line. V . P A T H P L A N N I N G This section fo cuses on the path p lanning of one r obot, thu s nota tio n r is omitted without loss o f specificity . In th is work, we em p loy two strategies for the searching . First, we u se a sweeping strategy: Each cell is visited only o nce, and the sear c h ends as soon as all the objects ar e fou nd. This strategy is e fficient: It av o ids rep e titi ve and complete coverage of th e domain. Its drawback , h owe ver, is th at there is no secon d cha nce f or e ach detection. Second, we adop t mo d ular desig n fo r th e decision-mak ing and the path pla n ning. Its v irtue is allowing us to fo cus on dev eloping and improving th e two m odules indepen d ently . The price is the d isregard for the vexing in fluence between the two modules, wh ich we will address in ou r futu re work. The state of the robo t is ( x , Y ) . W e model the state tran sition of the ro bot as in Fig. 1. At x = i , the robot is uncertain if its detection state is y w or y c . T he un certainty is denoted with a b elief b Y ( i ) . T he ro b ot acts by choosing the n ext c e ll amo ng all unvisited ce lls. Wit h a candidate action a j , the r obot will move to x = j . It will receive an observation, o p or o a , at cell j . Based on th e ob servation, the robot w ill up date its belief o f detec tion states a t ce ll j , denoted as B ( i, a j , o p ) or B ( i, a j , o a ) , respectively . Then , the robot will fur th er select anoth er next cell. Depending o n the detection state th e robot is in and the action it selects, d ifferent co sts apply , denoted a s c ( y w ) , c ( y c ) and c ij . Cumulative costs ˆ V h are accumu lated from the in dicated locations to the en d of the plannin g horizon , where h is the length to end of the h o rizon. W e assum e that f or any two cells i and j , be lief b Y ( i ) and b Y ( j ) are indepen dent. The o ptimal p o licy a t cell i can be obta in ed throu gh dyna mical progr amming: ˆ V h ( i ) = max a j ∈A h c ij + X y j ∈Y p ( y j ) c ( y j ) + ˆ V h − 1 ( j ) i , where set A con tains the un visited cells, Y , { y w , y c } . V I . S I M U L AT I O N A N D R E S U LT S W e simulated a team consisting of 1 h uman and 10 rob ots, as in Fig. 2. Each rob ot was assigned to a region with 1 0 by 1 0 cells. I n total there were 100 0 cells. Each cell had a pr ior pro bability o f contain ing an objec t that was drawn randomly fro m interval [0 , 0 . 2] . In total there were 100 cells contain ing objects. For the regret decision model, we used the paramete r s o f subjec t 12 in our p revious work [15]. W e simulated the sear c h task in two cond itions. In the condition of hig h sensor acc uracy and low human co st, we set the sen sor m odel of each robot as p ( o a | s a ) = p ( o p | s p ) = 0 . 7 . These sensors were moderate b ecause they were on low-cost rob ots. T he observations in cells wer e simu la ted once ran d omly using the pr io r distribution an d the sensor model, a n d th en saved for the f ollowing simulatio ns. Th us, we had th e same simu lation environment to com pare regret th eory an d expected value theory . The cost of being wrong (a m iss or a false alarm) was c ( y w ) = − 30 . The cost of being correct (a hit or a correc t rejection) with human service was relatively low , c H n = − 1 . 5 n , where n was th e line po sition assigned by the intelligen t agent. As a contrast, in th e co ndition of low sen sor accuracy and high human cost, we set the sensor m odel as p ( o a | s a ) = p ( o p | s p ) = 0 . 5 to simulate severe situation s. In this case, the wrong d e tection cost was still c ( y w ) = − 30 but the human service co st was set at c H n = − 1 0 n . Fig. 3 shows the difference between q ueue-or dering with the regret d ecision model an d the expe c ted value decision mod e l un der th e high sensor accu racy low human cost co ndition. As seen, the waiting lin e based on regret theory is lo nger than based on expec te d value theory . I t ind icates that more r obots d ecided to requ est human service under the regret decision m o del, comparin g with expecte d value th eory . I n this scenar io, th e robots with the regret decision model became risk-averse. The intelligen t agent was also r isk -av erse because it retained a longer line. Also, the queu e-orderin g was dynam ic . At time t there were 7 robots waiting under the regret d e c ision model. In the simulatio n we set tha t the human ope rator could pro cess 3 requests each time step. Thus, at time t + 1 , the length of the waiting line redu ced to 4. At time t + 2 , howev er , there were n ew requests from the rob ots. Th ese requests did not n ecessarily q ueue at the end of the line. Depen d ing on their urgency , they could be in serted to the front; In Fig. 3, they were inserted before th e request from robot 1. The perform ance of the two decision -making models can be further sho wn in T ab le IV. In the high senor accuracy low hu man co st cond ition, r obot d etection perfo rmed well and the op erator was fresh thus was of low costs. Th e accuracy of d etection, ind icated by the perc e ntage of o b jects foun d , was much h igher for the r egret decision mod el than the expected value d ecision mod el. The improvement of accuracy was due to the risk-averse attitud e, showing by th e willing ness to accept more hu man service requ ests and longer task dur ation. Opposite to Fig. 3 and T ab le IV, wh en u nder the low sensor accuracy hig h hu man cost con dition, the waiting lines were sho r ter fo r th e regret decision mod el, see T ab le V. In this severe situation , ro bot detection perfor med poorly and the oper ator w as very tired thus was costly in providing service. Shown by the co mparisons in the percentag e o f objects found an d the number s of hu m an services, th e queuin g based o n the regret decision mo del became risk-seeking: I t did n ot want to provide service for on ly a small improvement in accu racy . Fig. 1. T he state transition of the robot moving from cell i to j . 1 2 3 4 5 Requests: Station Human Fig. 2. The collaborat ion of a human and multi-robot search team. R4 R6 R7 R8 R3 R2 R1 R4 R6 R8 R3 R2 R1 R10 R10 R7 R6 R4 R5 R9 R1 R7 R5 R2 R4 R5 R8 R9 R1 R3 R1 1 2 3 4 5 6 7 Head Tail Regret Theory Expected Value Theory Fig. 3. The comparison between the wait ing lines formed accord ing to regret theory and expe cted v alue theory , respecti vely , under the high sensor accurac y low human cost condition . T A BL E IV T E A M I N G P E R F O R M A N C E ( H I G H S E N S O R A C C U R A C Y L OW H U M A N C O S T ) Regr et Theory Expected V alue A vera ge queue length: 5.0 2.3 Percent age of objects found: 100% 66% Number of human services: 970 349 T ask duration (steps): 385 168 T A BL E V T E A M I N G P E R F O R M A N C E ( L O W S E N S O R A C C U R A C Y H I G H H U M A N C O S T ) Regr et Theory Expected V alue A vera ge queue length: 0.8 1.84 Percent age of objects found: 15% 19% Number of human services: 44 134 T ask duration (steps): 58 72 From the human ope r ator’ s per sp ecti ve, the decision s mad e by the regret decision mode l are mo r e accep table. When the human is fresh, he/she tends to, and is able to, avoid additional cost in the task by p roviding extra effort. Howe ver, when the human is tired, the ergono m ics becom es imp o rtant. Even tho ugh the human provided extra effort, the outcomes in the task would only im prove marginally . A wiser decision thus is to just sa ve effort. In Fig. 4, we show the planned p a th of one robot using d y namic pro gramming with receding horizon. The grid of this region is 20 by 20 , becau se we want to show a longer plan ning ho rizon. The sensor model used here was p ( o a | s a ) = 0 . 7 and p ( o p | s p ) = 0 . 9 . The tra nsition cost was propo rtional to the distance d between cells and was set as − 2 d . The gray scale in each cell indicates th e exp e c ted local cost within the cell. As shown, the plann ed path was a comp romise b etween the local c o st and the transition cost: The robot would v isit the cell with lowest expected local cost but within its vicinity . Total number of objects: 44 -9.8 -9.6 -9.4 -9.2 -9 -8.8 -8.6 -8.4 -8.2 -8 Fig. 4. The planned path of one robot in its assigned region is shown. The square and the triangle indicate the current location and the end of the planning horizon, respect i vel y . The green regi on has been visited. The dots indicat e the true locatio ns of the object s that are unkno wn to the robot. T he bar indicates the expected local cost in each cell. The length of the planning horizon is 50. V I I . C O N C L U S I O N Considering the influ ence of regret emo tion in a r obotic d ecision-mak ing m odel is imp o rtant, as it will brin g more human -like characteristics, such as risk-seek ing and risk-averse. W e inco rporated the d ecision model based on regret theory into the co ordination o f a team with one human and multiple rob ots. Th e coo rdination include d robot decision-mak ing, q ueue-or dering an d path plannin g. The simulated results show tha t the human -like de c ision model does br ing appealin g effects to the hum an-rob ot teaming . R E F E R E N C E S [1] A. Koll ing, P . W alker , N. Chakraborty , K. Sycara, and M. Lewis, “Human interact ion with robot swarms: A survey , ” IEEE T ransactions on Human-Machin e Systems , vol. 46, no. 1, pp. 9–26, 2016. [2] J. Y . Chen and M. J. Barnes, “Human–ag ent teaming for multirobot control: A re vie w of human fact ors issues, ” IEEE T ransact ions on Human-Mac hine Systems , vol. 44, no. 1, pp. 13–29, 2014. [3] R. Parasurama n, T . B. Sheridan, and C. D. Wic kens, “ A model for types and le vels of human interact ion with automation, ” IEEE T ransactions on systems, man, and cyberne tics-P art A: Systems and Humans , vol. 30, no. 3, pp. 286–297, 2000. [4] J. Y . Chen and M. J. Barnes, “Supervisory control of m ultiple robots: Effec ts of imperfe ct automation and indi vidual diffe rences, ” Human F actors , vol. 54, no. 2, pp. 157–174, 2012. [5] D. L. Kleinman , S. Baron, and W . Levison, “ An optimal control model of human response part i: Theory and valida tion, ” Automat ica , vol. 6, no. 3, pp. 357–369, 1970. [6] R. L. Keen ey and H. Raif fa, Decisions with multiple objecti ves: prefer ences and value trade-of fs . Cambridge univ ersity press, 1993. [7] S. C. Marsella and J . Gratch, “ Ema: A process model of app raisal dynamics, ” Cogni tive Syste ms Resear ch , vol. 10, no. 1, pp. 70–90, 2009. [8] J. D. V el ´ asquez, “When robots weep: emotional memories and decision -making, ” in AAA I/IAAI , 1998, pp. 70–75. [9] G. Loomes and R. Sugden, “Re gret theory: An alternati ve theory of rati onal choice under unc ertaint y , ” The econ omic journal , vol . 92, no. 368, pp. 805–824, 1982. [10] D. Kahneman and A. Tversk y , “Prospect theory: An analysis of decision under risk, ” Econometri ca , vol. 47, no. 2, pp. 263–292, 1979. [11] N. Camille, G. Coricelli , J. Sallet, P . Pradat-Diehl, J.-R. Duhamel, and A. Sirigu, “The in volvemen t of the orbitofront al corte x in the expe rience of regret, ” Science , vol. 304, no. 5674, pp. 1167–1170, 2004. [12] Z. Liao, L. Jiang, and Y . W ang, “ A quanti tati ve measure of regre t in decisi on-making for human-robot colla borati ve search tasks, ” in American Contr ol Confer ence (ACC), 2017 . IEEE, 2017, pp. 1524–1529. [13] K. Kontek and M. Le wando wski, “Range -depende nt utilit y , ” Manag ement Scien ce , vol. 64, no. 6, pp. 2812–2832, 2017. [14] J. Quiggin, “ A theory of anticip ated utility , ” Journal of E conomic Behavior & Organizat ion , vol. 3, no. 4, pp. 323–343, 1982. [15] L. J iang and Y . W ang, “ A human-c omputer int erfa ce desig n for quantit ati ve measure of regret theory , ” IF AC-P apersOnLin e , vol. 51 , no. 34 , pp. 15–20, 2019. [16] D. Prelec, “The probability weighting function, ” Econometrica , pp. 497–527, 1998.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment