MG-VAE: Deep Chinese Folk Songs Generation with Specific Regional Style

Regional style in Chinese folk songs is a rich treasure that can be used for ethnic music creation and folk culture research. In this paper, we propose MG-VAE, a music generative model based on VAE (Variational Auto-Encoder) that is capable of captur…

Authors: Jing Luo, Xinyu Yang, Shulei Ji

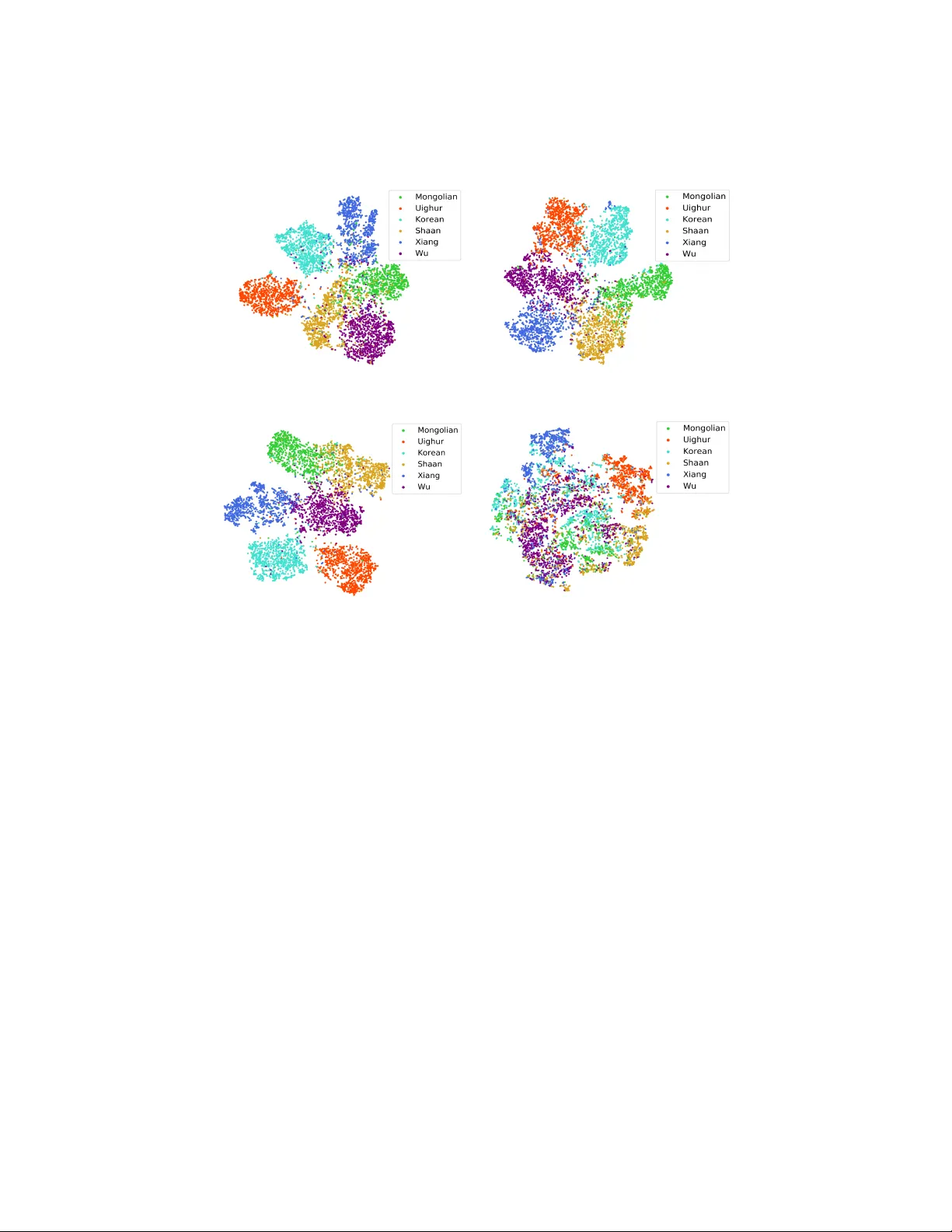

Jing Luo¹, Xinyu Yang¹, Shulei Ji¹, and Juan Li²

¹ School of Computer Science and Technology, Xi’an Jiaotong University, Xi’an, China
² Center of Music Education, Xi’an Jiaotong University, Xi’an, China

Abstract. Regional style in Chinese folk songs is a rich treasure that can be used for ethnic music creation and folk culture research. In this paper, we propose MG-VAE, a music generative model based on the VAE (Variational Auto-Encoder) that is capable of capturing specific music styles and generating novel tunes for Chinese folk songs (Min Ge) in a manipulatable way. Specifically, we disentangle the latent space of the VAE into four parts through adversarial training to control the information of the pitch and rhythm sequences, as well as of music style and content. In detail, two classifiers are used to separate the style and content latent spaces, and temporal supervision is used to disentangle the pitch and rhythm sequences. The experimental results show that the disentanglement is successful and that our model is able to create novel folk songs with controllable regional styles. To the best of our knowledge, this is the first study to apply a deep generative model and adversarial training to Chinese music generation.

Keywords: Music Generation, Disentangled Latent Representation, Chinese Folk Songs, Regional Style

1 Introduction

Creating realistic music pieces automatically has always been regarded as one of the frontier subjects in the field of computational creativity. With recent advances in deep learning, deep generative models and their variants have been widely used in automatic music generation [11][2]. However, most deep composition methods focus on Western music rather than Chinese music. How to employ deep learning to model the structure and style of Chinese music is a challenging and novel problem.

Chinese folk songs, an important part of traditional Chinese music, are improvised by local people and passed on orally from one generation to the next. Folk tunes from the same region exhibit similar styles, while tunes from different areas present different regional styles [25][20]. For example, the song Mo Li Hua has different versions in many areas of China that show various music styles, though they share the same name and similar lyrics³. The regional characteristics of Chinese folk songs are not well explored and should be used to guide automatic composition of Chinese folk tunes. Furthermore, folk song composition based on regional style provides abundant potential material for Chinese national music creation, and promotes the spread and development of Chinese national music, and even Chinese culture, in the world.

There are many studies on music style composition for Western music [3]. However, few studies employ deep generative models for Chinese music composition. There is a clear difference between Chinese and Western music. Unlike Western music, which focuses on the vertical structure of music, Chinese music focuses on the horizontal structure, i.e., the development of melody, and the regional style of Chinese folk songs is mainly reflected in their rhythm and pitch interval patterns [8].

In this paper, we propose a deep music generation model named MG-VAE to capture the regional style of Chinese folk songs (Min Ge) and create novel tunes with controlled regional style. Firstly, a MIDI dataset of more than 2000 Chinese folk songs covering six regions is collected. After that, we encode the input music representations into the latent space and decode the latent space to reconstruct music notes. In detail, the latent space is divided into two parts that represent the pitch features and the rhythm features, namely, the pitch variable and the rhythm variable.
Then we further divide the pitch latent space into a style variable part and a content variable part, which represent the style features and the style-less features of the pitch variable; the same operation is applied to the rhythm variable. In order to capture the regional style of Chinese folk songs precisely and generate regional-style songs in a controllable way, we propose a method based on adversarial training for disentangling the four latent variables, where temporal supervision is employed to separate the pitch and rhythm variables, and label supervision is used to disentangle the style and content variables. The experimental results and the visualization of the latent spaces show that our model disentangles the latent variables effectively and is able to generate folk songs with a specific regional style.

The rest of the paper is structured as follows: after introducing related work on deep music generation in Section 2, we present our music representations and model in Section 3. Section 4 describes the experimental results and analysis of our methods. Conclusions and future work are presented in Section 5.

³ The Chorus of Mo Li Hua Diao from various regions in China by the Central National Orchestra: http://ncpa-classic.cntv.cn/2017/05/11/VIDEEMEg82W5MuXUMM1jpEuL170511.shtml.

2 Related Work

The RNN (Recurrent Neural Network) is one of the earliest models introduced into the domain of deep music generation. Researchers employ RNNs to model music structure and generate different formats of music, including monophonic folk melodies [30], rhythm composition [23], expressive music performance [27], and multi-part music harmonization [31]. Other recent studies have started to combine convolutional structures and explore using the VAE, GAN (Generative Adversarial Network) and Transformer for music generation.
MidiNet [32] and MuseGAN [4] combine CNN (Convolutional Neural Network) and GAN architectures to generate music with multiple MIDI tracks. MusicVAE [29] introduces a hierarchical decoder into the general VAE model to generate music note sequences with long-term structure. Motivated by the impressive results of the Transformer in neural translation, Huang et al. modify this sequence model’s relative attention mechanism and generate minutes of music with high long-range structural coherence [15]. In addition to the study of music structure, researchers also employ deep generative models to model music styles, such as producing jazz melodies through two LSTM networks [18] or harmonizing a user-made melody in Bach’s style [14]. Most of them are trained on a dataset of one specific style, so the music generated by these models can only mimic the single style embodied in the training data.

Moreover, little attention has been paid to Chinese music generation with deep learning techniques, especially to modeling the style of Chinese music, though some researchers use Seq2Seq models to create multi-track Chinese popular songs from scratch [34] or to generate melodies of Chinese popular songs from given lyrics [1]. The existing generation algorithms for Chinese traditional songs are mostly based on non-deep models such as Markov models [13] and genetic algorithms [33]. These studies have not overcome the bottleneck in melody creation and style imitation.

Some recent work in the domain of music style transfer begins to generate music with mixed styles or to recombine music content and style. For example, Mao et al. propose an end-to-end generative model to produce music with a mixture of different classical composer styles [24]. Lu et al. study deep style transfer between Bach chorales and jazz [21]. Nakamura et al. perform melody style conversion among different music genres [26].
The above studies are based on music data from different genres or composing periods. However, the regional style generation of Chinese folk songs studied here models style within the same genre, which is more challenging.

3 Approach

3.1 Music Representation

A monophonic folk song M can be represented as a sequence of note tokens, each of which is a combination of pitch, interval and rhythm. Pitch and rhythm are essential information for music. The interval is an important indicator for distinguishing regional music features, especially for Han Chinese folk songs [9]. The detailed processing is described below and shown in Fig. 1.

– Pitch Sequence P: A sequence of pitch tokens drawn from the pitch types present in the melody sequence. A rest note is assigned a special token.
– Interval Sequence I: A sequence of interval tokens derived from P. Each interval token is the deviation between the next pitch and the current pitch, in semitones.
– Rhythm Sequence R: A sequence of duration tokens drawn from the duration types present in the melody sequence.

Fig. 1. Chinese folk song representation including the pitch sequence, interval sequence and rhythm sequence.

3.2 Model

As mentioned in Section 1, the regional characteristics of Chinese folk songs are mainly reflected in their pitch patterns and rhythm patterns. In some areas, the regional characteristics of folk songs depend more on pitch features, while in other areas the rhythm patterns are more distinctive. For example, in terms of pitch, folk songs in northern Shaanxi tend to use the perfect fourth, Hunan folk songs often use the combination of major third and minor third [25], and Uyghur folk songs employ a non-pentatonic scale. In terms of rhythm, Korean folk songs have their own rhythm system named Jangdan, while Mongolian Long Songs generally prefer long-duration notes [6].
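
The pitch patterns named above show up directly in the interval sequence of Section 3.1: a perfect fourth is 5 semitones, a major third 4 and a minor third 3. The sketch below derives an interval sequence from a pitch sequence given as MIDI note numbers. The function name `interval_sequence`, the lookup table and the melody fragment are hypothetical and only illustrate the definition; rest tokens and the treatment of the final note (which has no successor) are left out because the text does not specify them.

```python
def interval_sequence(pitches):
    """Semitone difference between each pitch and the next one (MIDI numbers)."""
    return [nxt - cur for cur, nxt in zip(pitches, pitches[1:])]

# Names for the three interval sizes mentioned in the text.
INTERVAL_NAMES = {3: "minor third", 4: "major third", 5: "perfect fourth"}

# Hypothetical melody fragment as MIDI note numbers: C4, F4, A4, C5.
pitches = [60, 65, 69, 72]
intervals = interval_sequence(pitches)
names = [INTERVAL_NAMES.get(abs(step), "other") for step in intervals]
```

Here `intervals` holds one signed value per pair of adjacent notes, so a descending step yields a negative token while its name is looked up by absolute size.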

Inspired by the above observations, it is necessary to further refine the style of folk songs in both pitch and rhythm. Therefore, we propose a VAE-based model to separate the pitch space and the rhythm space, and further disentangle the music style and content spaces from the pitch and rhythm spaces, respectively.

VAE and its Latent Space Division

The VAE introduces a continuous latent variable $z$ drawn from a Gaussian prior $p_\theta(z)$, and then generates the sequence $x$ from the distribution $p_\theta(x \mid z)$ [19]. Concisely, a VAE consists of an encoder $q_\phi(z \mid x)$, a decoder $p_\theta(x \mid z)$ and a latent variable $z$. The loss function of the VAE is

$$J(\phi, \theta) = -\mathbb{E}_{q_\phi(z \mid x)}\left[\log p_\theta(x \mid z)\right] + \beta\, KL\left(q_\phi(z \mid x) \,\|\, p_\theta(z)\right) \tag{1}$$

where the first term denotes the reconstruction loss and the second term is the Kullback-Leibler (KL) divergence, which is added to regularize the latent space. The weight $\beta$ is a hyperparameter that balances the two loss terms. By setting $\beta < 1$, we can improve the generation quality of the model [12]. $p_\theta(z)$ is the prior and generally follows the standard normal distribution, i.e., $p_\theta(z) = \mathcal{N}(0, I)$. The posterior approximation $q_\phi(z \mid x)$ is parameterized by the encoder and is also assumed to be Gaussian; the encoder outputs its mean and variance, and the reparameterization trick is used to sample from it.

Fig. 2. Architecture of our model. It consists of the melody encoder $E$, pitch decoder $D_P$, rhythm decoder $D_R$ and melody decoder $D_M$.

With labeled data, we can disentangle the latent space of the VAE so that different parts of the latent space correspond to different external attributes, which makes the generation process more controllable. In our case, we assume that the latent space can first be divided into two independent parts, i.e., the pitch variable and the rhythm variable. The pitch variable learns the pitch features of Chinese folk songs, while the rhythm variable captures the rhythm patterns.
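
To make Eq. (1) concrete, the sketch below evaluates both terms for a toy example in plain Python. It assumes a diagonal Gaussian posterior, for which the KL divergence to $\mathcal{N}(0, I)$ has the closed form $\frac{1}{2}\sum_j (\mu_j^2 + \sigma_j^2 - 1 - \log \sigma_j^2)$, and a categorical decoder output per token. The expectation in the reconstruction term is approximated by a single decoded sample. The function names are illustrative and are not taken from the authors’ implementation.

```python
import math

def gaussian_kl(mu, log_var):
    """KL( N(mu, diag(exp(log_var))) || N(0, I) ), summed over latent dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mu, log_var))

def reconstruction_nll(token_probs, targets):
    """Negative log-likelihood of the target tokens.

    token_probs[t][k] is the decoder's probability of token k at step t
    (a categorical output is assumed for this sketch).
    """
    return -sum(math.log(probs[t]) for probs, t in zip(token_probs, targets))

def vae_loss(token_probs, targets, mu, log_var, beta):
    """Eq. (1): reconstruction loss plus the beta-weighted KL regularizer."""
    return reconstruction_nll(token_probs, targets) + beta * gaussian_kl(mu, log_var)

# Toy example: two decoding steps over a 3-token vocabulary, 2-d latent variable.
probs = [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1]]
loss = vae_loss(probs, targets=[0, 1], mu=[0.5, -0.5], log_var=[0.0, 0.0], beta=0.15)
```

A posterior that already equals the prior (zero mean, unit variance) contributes zero KL, so $\beta$ only scales the penalty for deviating from $\mathcal{N}(0, I)$.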
Further, we assume that both the pitch variable and the rhythm variable consist of two independent parts, the music style variable and the music content variable.

Specifically, given a melody sequence $M = \{m_1, m_2, \cdots, m_n\}$ as the input sequence with $n$ tokens (notes), where $m_k$ denotes the feature combination of the corresponding pitch token $p_k$, interval token $i_k$ and rhythm token $r_k$, we first encode $M$ and obtain four latent variables from linear transformations of the encoder’s output. The four latent variables are the pitch style variable $Z_{P_s}$, pitch content variable $Z_{P_c}$, rhythm style variable $Z_{R_s}$ and rhythm content variable $Z_{R_c}$. Then, we concatenate $Z_{P_s}$ and $Z_{P_c}$ into the total pitch variable $Z_P$, which is used to predict the pitch sequence $\hat{P}$. The same operation is applied to the rhythm variables to predict $\hat{R}$. Finally, all latent variables are concatenated to predict the total melody sequence $\hat{M}$. The architecture of our model is shown in Fig. 2.

Based on the above assumptions and operations, the basic loss function extends naturally to

$$J_{vae} = H(\hat{P}, P) + H(\hat{R}, R) + BCE(\hat{M}, M) + \beta\, KL_{total} \tag{2}$$

where $H(\cdot, \cdot)$ and $BCE(\cdot, \cdot)$ denote the cross entropy and binary cross entropy between predicted values and target values, respectively, and $KL_{total}$ denotes the sum of the KL losses of the four latent variables.

Adversarial Training for Latent Spaces Disentanglement

Here, we propose a method based on adversarial training to disentangle pitch from rhythm and music style from content. The detailed processing is shown in Fig. 3.

Fig. 3. Detailed processing of latent space disentanglement. The dashed lines indicate the adversarial training parts.

As shown in Fig. 2, we use two parallel decoders to reconstruct the pitch sequence and the rhythm sequence, respectively.
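
The latent layout that feeds these decoders can be sketched as follows. For illustration, the four variables are taken as slices of one flat vector, using the sizes reported in Section 4.2 (32 for each style variable, 96 for each content variable). In the paper they come from linear transformations of the encoder output, so the slicing, the ordering, and the names `LAYOUT`, `split_latent` and `decoder_inputs` are assumptions of this sketch.

```python
# Sizes from Section 4.2; the ordering inside the flat vector is an assumption.
LAYOUT = [("pitch_style", 32), ("pitch_content", 96),
          ("rhythm_style", 32), ("rhythm_content", 96)]

def split_latent(z):
    """Slice a flat latent vector into the four named latent variables."""
    total = sum(size for _, size in LAYOUT)
    if len(z) != total:
        raise ValueError(f"expected {total} dimensions, got {len(z)}")
    parts, start = {}, 0
    for name, size in LAYOUT:
        parts[name] = z[start:start + size]
        start += size
    return parts

def decoder_inputs(parts):
    """Concatenate the parts into the inputs of the three decoders."""
    z_p = parts["pitch_style"] + parts["pitch_content"]    # Z_P -> pitch decoder
    z_r = parts["rhythm_style"] + parts["rhythm_content"]  # Z_R -> rhythm decoder
    z_m = z_p + z_r                                        # all four -> melody decoder
    return z_p, z_r, z_m

# Stand-in latent vector whose values equal their positions, for easy inspection.
z = [float(i) for i in range(256)]
z_p, z_r, z_m = decoder_inputs(split_latent(z))
```

Keeping the parts in a named mapping makes it straightforward to swap a single part, for example a style variable, without touching the others.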
Ideally, the pitch variable $Z_P$ and the rhythm variable $Z_R$ should be independent of each other. In practice, however, pitch features may be implicit in the rhythm variable and vice versa, since the two variables are sampled from the same encoder output. To separate the pitch and rhythm variables explicitly, temporal supervision is employed, similar to the work on disentangled representations of pitch and timbre [16]. Specifically, we deliberately feed each latent variable to the wrong decoder and force the decoder to predict nothing, i.e., an all-zero sequence, which results in the following two loss terms based on cross entropy:

$$J_{adv,P} = -\sum \left[\mathbf{0} \cdot \log \hat{P}_{adv} + (1 - \mathbf{0}) \cdot \log(1 - \hat{P}_{adv})\right] \tag{3}$$

$$J_{adv,R} = -\sum \left[\mathbf{0} \cdot \log \hat{R}_{adv} + (1 - \mathbf{0}) \cdot \log(1 - \hat{R}_{adv})\right] \tag{4}$$

where $\mathbf{0}$ denotes the all-zero sequence and ‘$\cdot$’ denotes the element-wise product.

For the disentanglement of music style and content, we first obtain the total music style variable $Z_s$ and content variable $Z_c$:

$$Z_s = Z_{P_s} \oplus Z_{R_s}, \quad Z_c = Z_{P_c} \oplus Z_{R_c} \tag{5}$$

where $\oplus$ denotes concatenation. Then two classifiers are defined to force the separation of style and content in the latent space using the regional information. The style classifier ensures that the style variable is discriminative for the regional label, while the adversary classifier forces the content variable to be non-discriminative for the regional label. The style classifier is trained with the cross entropy defined by

$$J_{dis,Z_s} = -\sum y \log p(y \mid Z_s) \tag{6}$$

where $y$ denotes the ground truth and $p(y \mid Z_s)$ is the probability distribution predicted by the style classifier. For the adversary classifier, we maximize the empirical entropy of its prediction [7][17]. The training process is divided into two steps.
Firstly, the parameters of the adversary classifier are trained independently, i.e., the gradients of the classifier do not propagate back to the VAE. Secondly, we compute the empirical entropy of the output of the adversary classifier, defined by

$$J_{adv,Z_c} = -\sum p(y \mid Z_c) \log p(y \mid Z_c) \tag{7}$$

where $p(y \mid Z_c)$ is the probability distribution predicted by the adversary classifier.

In summary, the overall training objective of our model is to minimize the loss function defined by

$$J_{total} = J_{vae} + J_{adv,P} + J_{adv,R} + J_{dis,Z_s} - J_{adv,Z_c} \tag{8}$$

4 Experimental Results and Analysis

4.1 Datasets and Preprocessing

The lack of large-scale Chinese folk song datasets makes it impossible to apply deep learning methods to the automatic generation and analysis of Chinese music. Therefore, we digitize more than 2000 Chinese folk songs in MIDI format from the records of the Chinese Folk Music Integration⁴. These songs comprise Han folk songs from the Wu dialect district, the Xiang dialect district⁵ and northern Shaanxi, as well as folk songs of three ethnic minorities: Uyghur in Xinjiang, Mongolian in Inner Mongolia and Korean in northeast China.

All melodies in the dataset are transposed to the key of C. We use the Pretty-midi Python toolkit [28] to process each MIDI file, and count the numbers of distinct pitch tokens, interval tokens and rhythm tokens as the feature dimensions of the corresponding sequences, which are 40, 46 and 58, respectively. Then the pitch sequence, interval sequence and rhythm sequence are extracted from the raw note sequence using an overlapping window of 32 tokens with a hop size of 1. Finally, we obtain 65508 ternary sequences in total. All token sequences drawn from the same song share that song’s regional label.

⁴ The Chinese Folk Music Integration is one of the major national cultural projects, led by the former Ministry of Culture, the National Ethnic Affairs Commission and the Chinese Musicians Association from 1984 to 2001. This set of books contains more than 40000 selected folk songs of different nationalities. The project website is http://www.cefla.org/project/book.

⁵ According to the analysis of Han Chinese folk songs [9][5], the folk song style of each region is closely related to the local dialect. Therefore, we classify Han folk songs based on dialect divisions. The Wu dialect district here mainly includes Southern Jiangsu, Northern Zhejiang and Shanghai. The Xiang dialect district here mainly includes Yiyang, Changsha, Hengyang, Loudi and Shaoyang in Hunan province.

4.2 Experimental Setup

In order to extract melody features into the latent space effectively, we employ a bidirectional GRU model with residual connections [10] as the encoder, which is illustrated in Fig. 4. The decoder is a standard two-layer GRU. All recurrent hidden sizes in this paper are 128. Both the style classifier and the adversary classifier are single linear layers followed by a Softmax function. The size of the pitch style variable and the rhythm style variable is set to 32, while the size of the pitch content variable and the rhythm content variable is 96. During training, the KL term coefficient $\beta$ increases linearly from 0.0 to 0.15 to alleviate posterior collapse. The Adam optimizer is employed with an initial learning rate of 0.01 for VAE training, and the vanilla SGD optimizer with an initial learning rate of 0.005 for the classifiers. All tested models are trained for 30 epochs with a mini-batch size of 50.

Fig. 4. Encoder with residual connections.

4.3 Evaluation and Results Analysis

To evaluate the generated music, we employ the following metrics from objective and subjective perspectives.

– Reconstruction Accuracy: We calculate the accuracy between the target note sequence and the reconstructed note sequence on our test set to evaluate the music generation quality.
– Style Recognition Accuracy: We train a separate style evaluation classifier, using the architecture in Fig. 4, to predict the regional style of the tunes that are generated from different latent variables. The classifier achieves a reasonable regional accuracy of up to 82.71% on the independent test set.
– Human Evaluation: As humans should be the ultimate judges of creations, human evaluations are conducted to address the inconsistencies between objective metrics and user studies. We invite three experts who are well educated and have expertise in Chinese music. Each expert is asked to listen on-site to five randomly selected folk songs from each region, and rate each song on a 5-point scale from 1 (very low) to 5 (very high) according to the following two criteria: a) Musicality: Does the song have a clear music pattern or structure? b) Style Significance: Does the song’s style match the given regional label?

Table 1. Results of automatic evaluations.

| Objectives | Reconstruction Accuracy | Style Recognition Accuracy |
|---|---|---|
| $J_{vae}$ | 0.7684 | 0.1726 / 0.1772 / 0.1814 |
| $J_{vae}$, $J_{adv,P,R}$ | 0.7926 | 0.1835 / 0.1867 / 0.1901 |
| $J_{vae}$, $J_{adv,P,R}$, $J_{adv,Z_c}$ | 0.7746 | 0.4797 / 0.4315 / 0.5107 |
| $J_{vae}$, $J_{adv,P,R}$, $J_{dis,Z_s}$ | 0.8079 | 0.5774 / 0.5483 / 0.6025 |
| $J_{total}$ | 0.7937 | 0.6271 / 0.5648 / 0.6410 |

Table 1 shows all evaluation results of our models. The three values in the third column denote the accuracies derived from the following three kinds of latent variables: a) the concatenation of the pitch style variable $Z_{P_s}$ and a random variable sampled from the standard normal distribution; b) the concatenation of the rhythm style variable $Z_{R_s}$ and the random variable; c) the concatenation of the total style variable $Z_s$ and the random variable.
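
One way to read settings a) to c) is that the chosen style part is kept from the encoder while every other part of the latent vector is replaced by a draw from $\mathcal{N}(0, I)$. The sketch below builds such inputs in plain Python. The part sizes follow Section 4.2; the dictionary layout, the helper name `style_probe`, and the assumption that the random variable fills all remaining dimensions are illustrative and are not details confirmed by the paper.

```python
import random

# Part sizes from Section 4.2.
SIZES = {"pitch_style": 32, "pitch_content": 96,
         "rhythm_style": 32, "rhythm_content": 96}

def style_probe(parts, keep, rng):
    """Keep the latent parts named in `keep`; resample all others from N(0, 1)."""
    probe = {}
    for name, size in SIZES.items():
        if name in keep:
            probe[name] = list(parts[name])
        else:
            probe[name] = [rng.gauss(0.0, 1.0) for _ in range(size)]
    return probe

rng = random.Random(0)
# Stand-in for encoder output: every part filled with ones.
encoded = {name: [1.0] * size for name, size in SIZES.items()}

probe_a = style_probe(encoded, {"pitch_style"}, rng)                  # setting a)
probe_b = style_probe(encoded, {"rhythm_style"}, rng)                 # setting b)
probe_c = style_probe(encoded, {"pitch_style", "rhythm_style"}, rng)  # setting c)
```

Decoding each probe and classifying the result would then measure how much regional information the kept style part carries on its own.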
In Table 1, $J_{adv,P,R}$ denotes the sum of $J_{adv,P}$ and $J_{adv,R}$.

The model with $J_{total}$ achieves the best results in style recognition accuracy and the second-best result in reconstruction accuracy. The model without any constraints performs poorly on both objective metrics. Adding $J_{adv,P,R}$ improves the reconstruction accuracy but fails to bring a meaningful improvement in style classification. With the addition of either $J_{adv,Z_c}$ or $J_{dis,Z_s}$, all three recognition accuracies improve substantially, which indicates that the latent spaces are disentangled into style and content subspaces as expected. Moreover, using only the pitch style or only the rhythm style for style recognition also obtains fair results, demonstrating that the disentanglement of pitch and rhythm is effective.

The results of the human evaluations are shown in Fig. 5. In terms of musicality, all tested models perform similarly, which demonstrates that the additional loss functions have no negative impact on the generation quality of the original VAE. Moreover, the model with the total objective $J_{total}$ performs significantly better than the other models in terms of style significance (two-tailed t-test, p < 0.05), which is consistent with the results in Table 1.

Fig. 5. Results of human evaluations including musicality and style significance. The heights of the bars represent the means of the ratings and the error bars represent the standard deviations.

Fig. 6 shows the t-SNE visualization [22] of our model with $J_{total}$. We can observe that music with different regional labels is noticeably separated in the pitch style space, the rhythm style space and the total style space, but appears chaotic in the content space. This further demonstrates the validity of our proposed methods for disentangling pitch, rhythm, style and content.

Finally, we present several examples⁶ of folk songs generated from given regional labels with our methods in Fig. 7.
As seen, we can create novel folk songs with dominant regional features, such as long-duration notes and large intervals in Mongolian songs, the combination of major third and minor third in Hunan folk songs, and so on. However, there are still several failure cases. For instance, a few generated songs repeat the same melody pattern. More commonly, some songs do not show the correct regional features, especially when the given regions belong to Han nationality areas. This may be due to the fact that folk tunes in those regions share the same tonal system.

Fig. 6. t-SNE visualization of the model with $J_{total}$: (a) pitch style latent space, (b) rhythm style latent space, (c) total style latent space, (d) total content latent space.

Fig. 7. Examples of folk song generation given regional labels. In order to align each row, the scores of several regions are not completely displayed.

⁶ Online Supplementary Material: https://csmt201986.github.io/mgvaeresults/.

5 Conclusion

In this paper, we focus on how to capture the regional style of Chinese folk songs and generate novel folk songs with specific regional labels. We first collect a database of more than 2000 Chinese folk songs for analysis and generation. Then, inspired by observations of the regional characteristics of Chinese folk songs, a model named MG-VAE based on adversarial learning is proposed to disentangle the pitch variable, rhythm variable, style variable and content variable in the latent space of the VAE. Three metrics covering automatic and subjective evaluation are used in our experiments to evaluate the proposed model. Finally, the experimental results and the t-SNE visualization show that the disentanglement of the four variables is successful and that our model is able to generate folk songs with controllable regional style. In the future, we plan to extend the proposed model to generate longer melody sequences using more powerful models such as Transformers, and to explore the evolution of tune families such as Mo Li Hua Diao and Chun Diao among different regions.

References

1. Bao, H., Huang, S., Wei, F., Cui, L., Wu, Y., Tan, C., Piao, S., Zhou, M.: Neural melody composition from lyrics. CoRR abs/1809.04318 (2018)
2. Briot, J., Hadjeres, G., Pachet, F.: Deep learning techniques for music generation - A survey. CoRR abs/1709.01620 (2017)
3. Dai, S., Zhang, Z., Xia, G.: Music style transfer issues: A position paper. CoRR abs/1803.06841 (2018)
4. Dong, H., Hsiao, W., Yang, L., Yang, Y.: MuseGAN: Multi-track sequential generative adversarial networks for symbolic music generation and accompaniment. In: Proceedings of the Thirty-Second AAAI Conference on Artificial Intelligence, pp. 34–41. New Orleans, Louisiana, USA (2018)
5. Du, Y.: The music dialect area and its division of Han Chinese folk songs. Journal of Central Conservatory of Music 1, 14–16 (1993)
6. Du, Y.: An Overview of Ethnic Minorities Folk Music in China. Shanghai Conservatory of Music Press, Shanghai (2014)
7. Fu, Z., Tan, X., Peng, N., Zhao, D., Yan, R.: Style transfer in text: Exploration and evaluation. In: Proceedings of the Thirty-Second AAAI Conference on Artificial Intelligence, pp. 663–670. New Orleans, Louisiana, USA (2018)
8. Guan, J.: The Contrast between Chinese and Western Music. Nanjing Normal University Press, Nanjing (2014)
9. Han, K.: Folk songs of the Han Chinese: Characteristics and classifications. Asian Music 20(2), 107–128 (1989)
10. He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. In: 2016 IEEE Conference on Computer Vision and Pattern Recognition, pp. 770–778. Las Vegas, NV, USA (2016)
11. Herremans, D., Chuan, C., Chew, E.: A functional taxonomy of music generation systems. ACM Computing Surveys 50(5), 69:1–69:30 (2017)
12. Higgins, I., Matthey, L., Pal, A., Burgess, C., Glorot, X., Botvinick, M., Mohamed, S., Lerchner, A.: beta-VAE: Learning basic visual concepts with a constrained variational framework. In: 5th International Conference on Learning Representations, pp. 1–22. Toulon, France (2017)
13. Huang, C., Lian, Y., Nien, W., Chieng, W.: Analyzing the perception of Chinese melodic imagery and its application to automated composition. Multimedia Tools and Applications 75(13), 7631–7654 (2016)
14. Huang, C.A., Cooijmans, T., Dinculescu, M., Hawthorne, A.R.C.: Coconet: the ML model behind today’s Bach doodle (Accessed July 9, 2019). URL https://magenta.tensorflow.org/coconet
15. Huang, C.A., Vaswani, A., Uszkoreit, J., Shazeer, N., Hawthorne, C., Dai, A.M., Hoffman, M.D., Eck, D.: An improved relative self-attention mechanism for transformer with application to music generation. CoRR abs/1809.04281 (2018)
16. Hung, Y., Chen, Y., Yang, Y.: Learning disentangled representations for timber and pitch in music audio. CoRR abs/1811.03271 (2018)
17. John, V., Mou, L., Bahuleyan, H., Vechtomova, O.: Disentangled representation learning for text style transfer. CoRR abs/1808.04339 (2018)
18. Johnson, D.D., Keller, R.M., Weintraut, N.: Learning to create jazz melodies using a product of experts. In: Proceedings of the Eighth International Conference on Computational Creativity, pp. 151–158. Atlanta, Georgia, USA (2017)
19. Kingma, D.P., Welling, M.: Auto-encoding variational Bayes. In: 2nd International Conference on Learning Representations, pp. 1–14. Banff, AB, Canada (2014)
20. Li, J., Luo, J., Ding, J., Zhao, X., Yang, X.: Regional classification of Chinese folk songs based on CRF model. Multimedia Tools and Applications 78(9), 11,563–11,584 (2019)
21. Lu, W.T., Su, L.: Transferring the style of homophonic music using recurrent neural networks and autoregressive model. In: Proceedings of the 19th International Society for Music Information Retrieval Conference, pp. 740–746. Paris, France (2018)
22. Maaten, L.v.d., Hinton, G.: Visualizing data using t-SNE. Journal of Machine Learning Research 9(Nov), 2579–2605 (2008)
23. Makris, D., Kaliakatsos-Papakostas, M.A., Karydis, I., Kermanidis, K.L.: Conditional neural sequence learners for generating drums’ rhythms. Neural Computing and Applications 31(6), 1793–1804 (2019)
24. Mao, H.H., Shin, T., Cottrell, G.W.: DeepJ: Style-specific music generation. In: 12th IEEE International Conference on Semantic Computing, pp. 377–382. Laguna Hills, CA, USA (2018)
25. Miao, J., Qiao, J.: A study of similar color area divisions in Han folk songs. Journal of Central Conservatory of Music 1(1), 26–33 (1985)
26. Nakamura, E., Shibata, K., Nishikimi, R., Yoshii, K.: Unsupervised melody style conversion. In: IEEE International Conference on Acoustics, Speech and Signal Processing, pp. 196–200. Brighton, United Kingdom (2019)
27. Oore, S., Simon, I., Dieleman, S., Eck, D., Simonyan, K.: This time with feeling: Learning expressive musical performance. Neural Computing and Applications pp. 1–13 (2018)
28. Raffel, C., Ellis, D.P.: Intuitive analysis, creation and manipulation of MIDI data with pretty_midi. In: 15th International Society for Music Information Retrieval Conference Late Breaking and Demo Papers, pp. 84–93. Taipei, Taiwan (2014)
29. Roberts, A., Engel, J., Raffel, C., Hawthorne, C., Eck, D.: A hierarchical latent vector model for learning long-term structure in music. In: Proceedings of the 35th International Conference on Machine Learning, pp. 4361–4370. Stockholm, Sweden (2018)
30. Sturm, B.L., Santos, J.F., Ben-Tal, O., Korshunova, I.: Music transcription modelling and composition using deep learning. CoRR abs/1604.08723 (2016)
31. Yan, Y., Lustig, E., VanderStel, J., Duan, Z.: Part-invariant model for music generation and harmonization. In: Proceedings of the 19th International Society for Music Information Retrieval Conference, pp. 204–210. Paris, France (2018)
32. Yang, L., Chou, S., Yang, Y.: MidiNet: A convolutional generative adversarial network for symbolic-domain music generation. In: Proceedings of the 18th International Society for Music Information Retrieval Conference, pp. 324–331. Suzhou, China (2017)
33. Zheng, X., Wang, L., Li, D., Shen, L., Gao, Y., Guo, W., Wang, Y.: Algorithm composition of Chinese folk music based on swarm intelligence. International Journal of Computing Science and Mathematics 8(5), 437–446 (2017)
34. Zhu, H., Liu, Q., Yuan, N.J., Qin, C., Li, J., Zhang, K., Zhou, G., Wei, F., Xu, Y., Chen, E.: XiaoIce Band: A melody and arrangement generation framework for pop music. In: Proceedings of the 24th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, pp. 2837–2846. London, UK (2018)
