Lightweight Image Super-Resolution with Information Multi-distillation Network

In recent years, single image super-resolution (SISR) methods using deep convolution neural network (CNN) have achieved impressive results. Thanks to the powerful representation capabilities of the deep networks, numerous previous ways can learn the …

Authors: Zheng Hui, Xinbo Gao, Yunchu Yang, Xiumei Wang

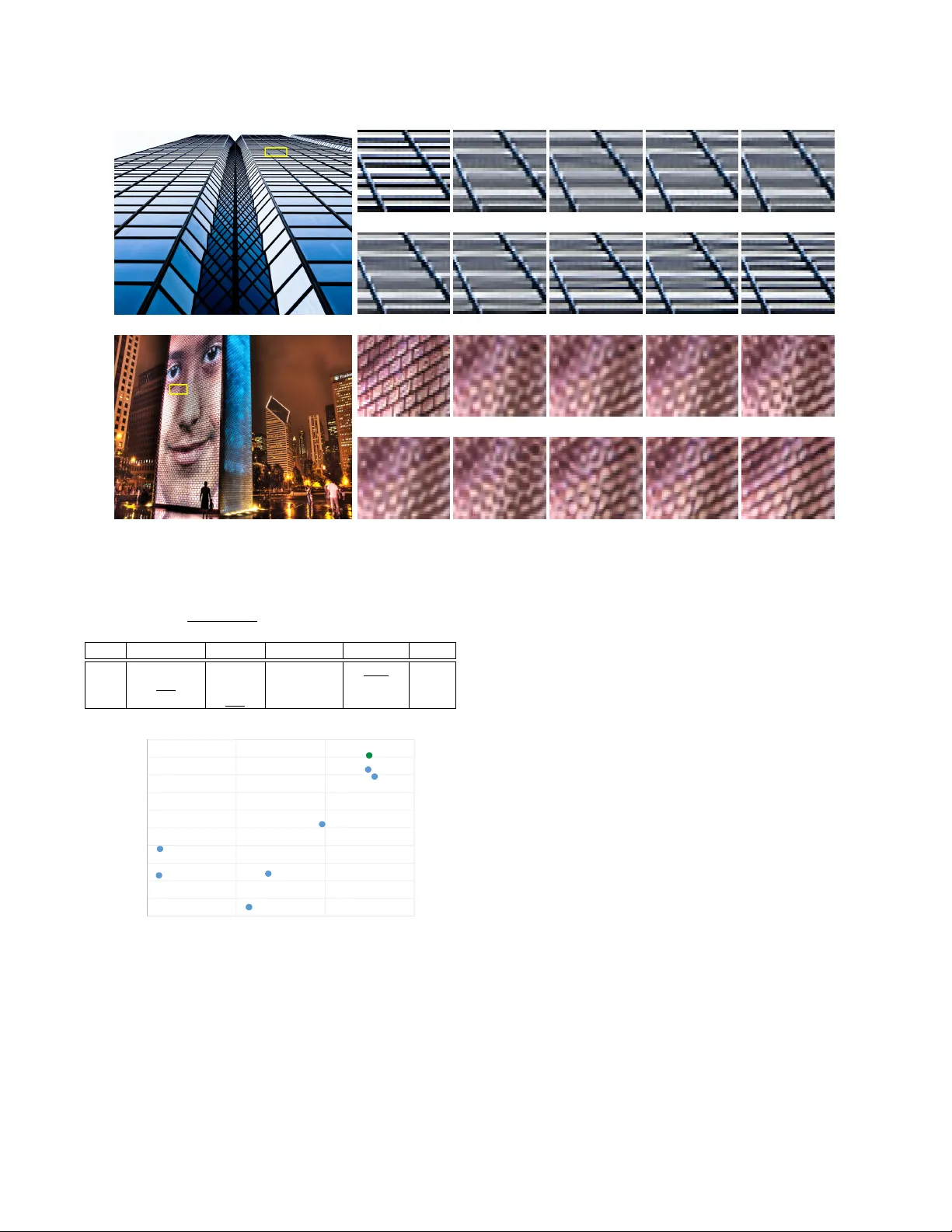

Zheng Hui, School of Electronic Engineering, Xidian University, Xi’an, China, zheng_hui@aliyun.com
Xinbo Gao, School of Electronic Engineering, Xidian University, Xi’an, China, xbgao@mail.xidian.edu.cn
Yunchu Yang, School of Electronic Engineering, Xidian University, Xi’an, China, yc_yang@aliyun.com
Xiumei Wang∗, School of Electronic Engineering, Xidian University, Xi’an, China, wangxm@xidian.edu.cn

ABSTRACT
In recent years, single image super-resolution (SISR) methods using deep convolutional neural networks (CNNs) have achieved impressive results. Thanks to the powerful representation capabilities of deep networks, numerous previous methods can learn the complex non-linear mapping between low-resolution (LR) image patches and their high-resolution (HR) versions. However, excessive convolutions limit the application of super-resolution technology on devices with low computing power. Besides, super-resolution at an arbitrary scale factor is a critical issue in practical applications that has not been well solved by previous approaches. To address these issues, we propose a lightweight information multi-distillation network (IMDN) by constructing cascaded information multi-distillation blocks (IMDBs), each of which contains distillation and selective fusion parts. Specifically, the distillation module extracts hierarchical features step by step, and the fusion module aggregates them according to the importance of candidate features, which is evaluated by the proposed contrast-aware channel attention mechanism. To process real images of any size, we develop an adaptive cropping strategy (ACS) to super-resolve block-wise image patches using the same well-trained model. Extensive experiments suggest that the proposed method performs favorably against state-of-the-art SR algorithms in terms of visual quality, memory footprint, and inference time.
Code is available at https://github.com/Zheng222/IMDN.

CCS CONCEPTS
• Computing methodologies → Computational photography; Reconstruction; Image processing.

∗ Corresponding author

MM ’19, October 21–25, 2019, Nice, France. © 2019 Association for Computing Machinery. ACM ISBN 978-1-4503-6889-6/19/10. https://doi.org/10.1145/3343031.3351084

KEYWORDS
image super-resolution; lightweight network; information multi-distillation; contrast-aware channel attention; adaptive cropping strategy

ACM Reference Format:
Zheng Hui, Xinbo Gao, Yunchu Yang, and Xiumei Wang. 2019. Lightweight Image Super-Resolution with Information Multi-distillation Network. In Proceedings of the 27th ACM International Conference on Multimedia (MM ’19), October 21–25, 2019, Nice, France. ACM, New York, NY, USA, 9 pages. https://doi.org/10.1145/3343031.3351084

1 INTRODUCTION
Single image super-resolution (SISR) aims at reconstructing a high-resolution (HR) image from its low-resolution (LR) observation, which is inherently ill-posed because many HR images can be downsampled to an identical LR image. To address this problem, numerous image SR methods [11, 12, 25, 27, 36, 38] based on deep neural architectures [7, 9, 23] have been proposed and have shown prominent performance. Dong et al. [4, 5] first developed a three-layer network (SRCNN) to establish a direct relationship between LR and HR.
Then, Wang et al. [31] proposed a neural network based on the conventional sparse coding framework and further designed a progressive upsampling style to produce better SR results at large scale factors (e.g., ×4). Inspired by the VGG model [23] used for ImageNet classification, Kim et al. [12, 13] first pushed the depth of the SR network to 20, and their model outperformed SRCNN by a large margin. This indicates that a deeper model helps to enhance the quality of generated images. To accelerate the training of the deep network, the authors introduced global residual learning with a high initial learning rate. At the same time, they also presented a deeply-recursive convolutional network (DRCN), which applied recursive learning to the SR problem. This significantly reduces the number of model parameters. Similarly, Tai et al. proposed two novel networks: a deep recursive residual network (DRRN) [24] and a persistent memory network (MemNet) [25]. The former mainly utilized recursive learning to economize parameters. The latter tackled the long-term dependency problem of previous CNN architectures with several memory blocks stacked in a densely connected structure [9]. However, these two algorithms required a long time and huge graphics memory consumption in both the training and testing phases. The primary reason is that the inputs sent to these two models are interpolated versions of LR images and the networks do not adopt any downsampling operations. This scheme brings about a huge computational cost. To increase testing speed, Shi et al. [22] first performed most of the mappings in low-dimensional space and designed an efficient sub-pixel convolution to upsample the resolution of feature maps at the end of SR models. To the same end, Dong et al. proposed fast SRCNN (FSRCNN) [6], which employed a learnable upsampling layer (transposed convolution) to accomplish post-upsampling SR. Afterward, Lai et al. presented the Laplacian pyramid super-resolution network (LapSRN) [14] to progressively reconstruct higher-resolution images. Other works such as MS-LapSRN [15] and progressive SR (ProSR) [29] also adopt this progressive upsampling framework and achieve relatively high performance. EDSR [18], which won the NTIRE 2017 competition [1, 26], made a significant breakthrough in terms of SR performance. The authors removed some unnecessary modules (e.g., Batch Normalization) of SRResNet [16] to obtain better results. Based on EDSR, Zhang et al. incorporated densely connected blocks [9, 27] into residual blocks [7] to construct a residual dense network (RDN). Soon afterward, they exploited a residual-in-residual architecture for very deep models and introduced the channel attention mechanism [8] to form the very deep residual attention networks (RCAN) [36]. More recently, Zhang et al. also introduced spatial attention (non-local module) into the residual block and constructed the residual non-local attention network (RNAN) [37] for various image restoration tasks.

The major trend of these algorithms is adding more convolution layers to improve performance as measured by PSNR and SSIM [30]. As a result, most of them suffer from large numbers of parameters, huge memory footprints, and slow training and testing speeds. For instance, EDSR [18] has about 43M parameters and 69 layers, and RDN [38], which achieves comparable performance, has about 22M parameters and over 128 layers. Another typical network is RCAN [36], whose depth reaches 400 layers while its parameter count is about 15.59M. However, these methods are still not suitable for resource-constrained equipment.
For mobile devices, the desired practice is to pursue SR performance as high as possible while the available memory and inference time are constrained to a certain range. Many cases, such as video applications, edge devices, and smartphones, require not only performance but also high execution speed. Accordingly, it is important to devise a lightweight but efficient model to meet such demands.

Concerning the reduction of parameters, many approaches adopted a recursive manner or parameter sharing strategy, such as [13, 24, 25]. Although these methods did reduce the size of the model, they increased the depth or the width of the network to make up for the performance loss caused by the recursive module. This leads to a great deal of computation time when performing SR. To address this issue, a better way is to design lightweight and efficient network structures that avoid the recursive paradigm. Ahn et al. developed CARN-M [2] for mobile scenarios through a cascading network architecture, but at the cost of a substantial reduction in PSNR. Hui et al. [11] proposed an information distillation network (IDN) that explicitly divided the preceding extracted features into two parts: one was retained and the other was further processed. In this way, IDN achieved good performance at a moderate size, but there is still room for improvement in terms of performance.

Another factor that affects the inference speed is the depth of the network. In the testing phase, each layer depends on the previous one: the computation of the current layer must wait until the previous computation is completed. In contrast, the multiple convolutional operations within each layer can be processed in parallel. Therefore, the depth of the model architecture is an essential factor affecting time performance. This point will be verified in Section 4.
As to solving the SR problem for different scale factors (×2, ×3, ×4) with a single model, previous solutions rescaled the image to the desired size and used a fully convolutional network without any downsampling operations. This inevitably leads to a substantial increase in the amount of computation.

To address the above issues, we propose a lightweight information multi-distillation network (IMDN) for better balancing performance against applicability. Unlike most previous small-parameter models that use a recursive structure, we elaborately design an information multi-distillation block (IMDB) inspired by [11]. The proposed IMDB extracts features at a granular level: at each step (layer) it retains part of the information and further processes the remaining features, as illustrated in Figure 2. For aggregating the features distilled by all steps, we devise a contrast-aware channel attention layer, specifically related to low-level vision tasks, to enhance the collected refined information. Concretely, we exploit more useful features (edges, corners, textures, etc.) for image restoration. In order to handle SR of any arbitrary scale factor with a single model, we scale the input image to the target size and then employ the proposed adaptive cropping strategy (see Figure 4) to obtain image patches of appropriate size for a lightweight SR model with downsampling layers.

The contributions of this paper can be summarized as follows:
• We propose a lightweight information multi-distillation network (IMDN) for fast and accurate image super-resolution. Thanks to our information multi-distillation block (IMDB) with a contrast-aware channel attention (CCA) layer, we achieve competitive results with a modest number of parameters (refer to Figure 6).
• We propose the adaptive cropping strategy (ACS), which allows a network that includes downsampling operations (e.g., a convolution layer with a stride of 2) to process images of any arbitrary size. By adopting this scheme, the computational cost, memory occupation, and inference time can be dramatically reduced when treating SR with an indefinite magnification.
• We explore factors affecting actual inference time through experiments and find that the depth of the network is related to the execution speed. This can serve as a guideline for lightweight network design. Our model achieves an excellent balance among visual quality, inference speed, and memory occupation.

2 RELATED WORK
2.1 Single image super-resolution
With the rapid development of deep learning, numerous methods based on convolutional neural networks (CNNs) have become the mainstream in SISR. The pioneering work, SRCNN, was proposed by Dong et al. [4, 5]. SRCNN upscaled the LR image with bicubic interpolation before feeding it into the network, which caused substantial unnecessary computational cost. To address this issue, the authors removed this pre-processing and upscaled the image at the end of the network to reduce the computation in [6]. Lim et al. [18] modified SRResNet [16] to construct a deeper and wider residual network denoted as EDSR. With its smart topology and a significantly larger number of learnable parameters, EDSR dramatically advanced SR performance. Zhang et al. [38] introduced channel attention [8] into the residual block to further boost a very deep network (more than 400 layers without considering the depth of the channel attention modules). Liu [19] explored the effectiveness of the non-local module applied to image restoration. Similarly, Zhang et al. [37] utilized non-local attention to better guide feature extraction in their trunk branch for better performance. Very recently, Li et al. [17] exploited a feedback mechanism that enhances low-level representations with high-level ones.

For lightweight networks, Hui et al. [11] developed the information distillation network for better exploiting hierarchical features by processing parts of the current feature maps separately. Ahn et al. [2] designed an architecture that implemented a cascading mechanism on a residual network to boost performance.

2.2 Attention model
Attention models, which aim at concentrating on the more useful information in features, have been widely used in various computer vision tasks. Hu et al. [8] introduced the squeeze-and-excitation (SE) block, which models channel-wise relationships in a computationally efficient manner and enhances the representational ability of the network, showing its effectiveness on image classification. CBAM [32] modified the SE block to exploit both spatial and channel-wise attention. Wang et al. [28] proposed the non-local module, which generates a wide attention map by calculating the correlation matrix between each pair of spatial points in the feature map; the attention map then guides dense contextual information aggregation.

3 METHOD
3.1 Framework
In this section, we describe our proposed information multi-distillation network (IMDN) in detail; its graphical depiction is shown in Figure 1(a). The upsampler (see Figure 1(b)) includes one 3 × 3 convolution with $3 \times s^2$ output channels and a sub-pixel convolution. Given an input LR image $I^{LR}$ and its corresponding target HR image $I^{HR}$, the super-resolved image $I^{SR}$ can be generated by

$$
I^{SR} = H_{IMDN}\left(I^{LR}\right), \tag{1}
$$

where $H_{IMDN}(\cdot)$ is our IMDN. It is optimized with the mean absolute error (MAE) loss, following most previous works [2, 11, 18, 36, 38]. Given a training set $\left\{I^{LR}_i, I^{HR}_i\right\}_{i=1}^{N}$ that has $N$ LR-HR pairs, the loss function of our IMDN can be expressed by

$$
\mathcal{L}(\Theta) = \frac{1}{N}\sum_{i=1}^{N}\left\| H_{IMDN}\left(I^{LR}_i\right) - I^{HR}_i \right\|_1, \tag{2}
$$

where $\Theta$ indicates the updateable parameters of our model and $\|\cdot\|_1$ is the $l_1$ norm. Next, we give more details about the entire framework.
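Before those details, the objective in Eq. (2) can be illustrated with a small, framework-free sketch. It assumes images are given as flat lists of pixel values and uses a hypothetical `model` callable in place of $H_{IMDN}$; both are illustrative choices and not the authors' implementation. The $l_1$ norm is taken literally as a sum of absolute differences per image; practical training code often averages over pixels as well, which only rescales the loss.

```python
from typing import Callable, List, Sequence, Tuple

Image = List[float]  # hypothetical flat representation of an image


def l1_norm(a: Sequence[float], b: Sequence[float]) -> float:
    """Sum of absolute differences between two images of equal size."""
    if len(a) != len(b):
        raise ValueError("images must have the same number of pixels")
    return sum(abs(x - y) for x, y in zip(a, b))


def mae_loss(model: Callable[[Image], Image],
             pairs: Sequence[Tuple[Image, Image]]) -> float:
    """Eq. (2): average over the N (LR, HR) pairs of ||model(LR) - HR||_1."""
    if not pairs:
        raise ValueError("the training set must contain at least one pair")
    return sum(l1_norm(model(lr), hr) for lr, hr in pairs) / len(pairs)


def identity(img: Image) -> Image:
    """Stand-in 'model' for the toy check; not a super-resolution network."""
    return img


# Toy data: LR and HR have the same length here purely for illustration.
pairs = [([0.0, 1.0], [0.5, 1.0]), ([1.0, 1.0], [0.0, 0.0])]
print(mae_loss(identity, pairs))  # (0.5 + 2.0) / 2 = 1.25
```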
We first conduct LR feature extraction with one 3 × 3 convolution with 64 output channels. Then, the key component of our network stacks multiple information multi-distillation blocks (IMDBs) and assembles all intermediate features, which are fused by a 1 × 1 convolution layer. This scheme, intermediate information collection (IIC), helps guarantee the integrity of the collected information and can further boost SR performance while adding very few parameters. The final upsampler consists of only one learnable layer and a non-parametric operation (sub-pixel convolution) to save as many parameters as possible.

3.2 Information multi-distillation block
As depicted in Figure 2, our information multi-distillation block (IMDB) is constructed from a progressive refinement module, a contrast-aware channel attention (CCA) layer, and a 1 × 1 convolution that is used to reduce the number of feature channels. The whole block adopts a residual connection. The main idea of this block is to extract useful features little by little, like DenseNet [9]. We now give more details on these modules.

Table 1: PRM architecture. The columns represent layer, kernel size, stride, input channels, and output channels. The symbols C and L denote a convolution layer and Leaky ReLU (α = 0.05), respectively.

| Layer | Kernel | Stride | Input_channel | Output_channel |
|-------|--------|--------|---------------|----------------|
| CL    | 3      | 1      | 64            | 64             |
| CL    | 3      | 1      | 48            | 64             |
| CL    | 3      | 1      | 48            | 64             |
| CL    | 3      | 1      | 48            | 16             |

3.2.1 Progressive refinement module. As labeled with the gray box in Figure 2, the progressive refinement module (PRM) first adopts a 3 × 3 convolution layer to extract input features for multiple subsequent distillation (refinement) steps. For each step, we employ a channel split operation on the preceding features, which produces two parts. One part is preserved and the other is fed into the next calculation unit. The retained part can be regarded as the refined features.
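The channel bookkeeping implied by Table 1 can be traced with a few lines of Python. This is a minimal sketch that tracks only channel counts, not tensors; the function name `prm_channel_flow` and the constant names are illustrative, and the 16-channel retained width is read off Figure 2 and Table 1.

```python
from typing import List, Sequence, Tuple

# (input_channels, output_channels) of the four 3x3 conv layers in Table 1.
PRM_LAYERS: List[Tuple[int, int]] = [(64, 64), (48, 64), (48, 64), (48, 16)]
RETAINED = 16  # channels kept ("refined") after each split, as in Figure 2


def prm_channel_flow(layers: Sequence[Tuple[int, int]] = PRM_LAYERS,
                     retained: int = RETAINED) -> Tuple[List[int], int]:
    """Return the refined channel counts per step and their concatenated total."""
    refined: List[int] = []
    coarse = layers[0][0]  # channels entering the first conv layer
    for step, (c_in, c_out) in enumerate(layers):
        if c_in != coarse:
            raise ValueError(f"step {step + 1}: expected {coarse} input channels, got {c_in}")
        if step == len(layers) - 1:
            # The last conv output is kept whole; there is no further split.
            refined.append(c_out)
        else:
            # Split the conv output: `retained` channels are preserved,
            # the remainder is passed on to the next conv layer.
            refined.append(retained)
            coarse = c_out - retained
    return refined, sum(refined)


refined, distilled = prm_channel_flow()
print(refined, distilled)  # [16, 16, 16, 16] 64
```

Under this reading, the concatenated refined features again have 64 channels, matching the width of the block input.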
Given the input features $F^{n}_{in}$, this procedure in the $n$-th IMDB can be described as

$$
\begin{aligned}
F^{n}_{\text{refined\_1}},\ F^{n}_{\text{coarse\_1}} &= Split^{n}_{1}\left(CL^{n}_{1}\left(F^{n}_{in}\right)\right), \\
F^{n}_{\text{refined\_2}},\ F^{n}_{\text{coarse\_2}} &= Split^{n}_{2}\left(CL^{n}_{2}\left(F^{n}_{\text{coarse\_1}}\right)\right), \\
F^{n}_{\text{refined\_3}},\ F^{n}_{\text{coarse\_3}} &= Split^{n}_{3}\left(CL^{n}_{3}\left(F^{n}_{\text{coarse\_2}}\right)\right), \\
F^{n}_{\text{refined\_4}} &= CL^{n}_{4}\left(F^{n}_{\text{coarse\_3}}\right),
\end{aligned}
\tag{3}
$$

where $CL^{n}_{j}$ denotes the $j$-th convolution layer (including Leaky ReLU) of the $n$-th IMDB, $Split^{n}_{j}$ indicates the $j$-th channel split layer of the $n$-th IMDB, $F^{n}_{\text{refined\_}j}$ represents the $j$-th refined features (preserved), and $F^{n}_{\text{coarse\_}j}$ is the $j$-th coarse features to be further processed. The hyperparameters of the PRM architecture are shown in Table 1. The following stage concatenates the refined features from each step. It can be expressed by

$$
F^{n}_{\text{distilled}} = Concat\left(F^{n}_{\text{refined\_1}}, F^{n}_{\text{refined\_2}}, F^{n}_{\text{refined\_3}}, F^{n}_{\text{refined\_4}}\right), \tag{4}
$$

where $Concat$ denotes the concatenation operation along the channel dimension.

Figure 1: The architecture of the information multi-distillation network (IMDN): (a) IMDN; (b) Upsampler. In (a), the orange box represents the Leaky ReLU activation function and the details of IMDB are shown in Figure 2. In (b), s represents the upscale factor.

Figure 2: The architecture of our proposed information multi-distillation block (IMDB). Here, 64, 48, and 16 all represent the output channels of the convolution layer. “Conv-3” denotes the 3 × 3 convolutional layer, and “CCA Layer” indicates the proposed contrast-aware channel attention (CCA) that is depicted in Figure 3. Each convolution is followed by a Leaky ReLU activation function except for the last 1 × 1 convolution. We omit them for conciseness.

Figure 3: Contrast-aware channel attention module.

3.2.2 Contrast-aware channel attention layer. The initial channel attention was employed in the image classification task and is well known as the squeeze-and-excitation (SE) module. In the high-level field, the importance of a feature map depends on activated high-value areas, since these regions favor classification or detection. Accordingly, global average/maximum pooling is utilized to capture the global information in these high-level or mid-level vision tasks.
Although average pooling can indeed improve the PSNR value, it lacks the information about structures, textures, and edges that helps enhance image details (related to SSIM). As depicted in Figure 3, the contrast-aware channel attention module is specific to low-level vision, e.g., image super-resolution and enhancement. Specifically, we replace global average pooling with the summation of standard deviation and mean (evaluating the contrast degree of a feature map). Let us denote $X = [x_1, \ldots, x_c, \ldots, x_C]$ as the input, which has $C$ feature maps with spatial size $H \times W$. The contrast information value can then be calculated by

$$
z_c = H_{GC}(x_c) = \sqrt{\frac{1}{HW}\sum_{(i,j)\in x_c}\left(x_c^{i,j} - \frac{1}{HW}\sum_{(i,j)\in x_c} x_c^{i,j}\right)^{2}} + \frac{1}{HW}\sum_{(i,j)\in x_c} x_c^{i,j}, \tag{5}
$$

where $z_c$ is the $c$-th element of the output and $H_{GC}(\cdot)$ indicates the global contrast (GC) information evaluation function. With the assistance of the CCA module, our network can steadily improve the accuracy of SISR.

3.3 Adaptive cropping strategy
The adaptive cropping strategy (ACS) is designed for super-resolving images of any arbitrary size. Meanwhile, it can also deal with the SR problem of any scale factor with a single model (see Figure 5). We slightly modify the original IMDN by introducing two downsampling layers and construct the current IMDN_AS (IMDN for any scales). Here, the LR and HR images have the same spatial size (height and width). To handle images whose height and width are not divisible by 4, we first cut the entire image into 4 parts and then feed them into our IMDN_AS. As illustrated in Figure 4, we can obtain 4 overlapped image patches through ACS. Taking the first patch in the upper left corner as an example, we give the details about ACS. This image patch must satisfy

$$
\left(\frac{H}{2} + \Delta l_H\right) \% 4 = 0, \qquad \left(\frac{W}{2} + \Delta l_W\right) \% 4 = 0, \tag{6}
$$

where $\Delta l_H$ and $\Delta l_W$ are the extra increments of height and width, respectively. They can be computed by

$$
\Delta l_H = padding_H - \left(\frac{H}{2} + padding_H\right) \% 4, \qquad \Delta l_W = padding_W - \left(\frac{W}{2} + padding_W\right) \% 4, \tag{7}
$$

where $padding_H$ and $padding_W$ are preset additional lengths. In general, their values are set by

$$
padding_H = padding_W = 4k, \quad k \geq 1. \tag{8}
$$

Here, $k$ is an integer greater than or equal to 1. These four patches can be processed in parallel (they have the same size), after which the outputs are pasted to their original locations and the extra increments ($\Delta l_H$ and $\Delta l_W$) are discarded.

Figure 4: The diagrammatic sketch of the adaptive cropping strategy (ACS): (a) the first image patch; (b) the last image patch. The cropped image patches are in the green dotted boxes.

Figure 5: The network structure of our IMDN_AS. “s2” represents a stride of 2.

4 EXPERIMENTS
4.1 Datasets and metrics
In our experiments, we use the DIV2K dataset [1], which contains 800 high-quality RGB training images and is widely used in image restoration tasks [18, 36–38].
For evaluation, we use five widely used benchmark datasets: Set5 [3], Set14 [33], BSD100 [20], Urban100 [10], and Manga109 [21]. We evaluate the performance of the super-resolved images using two metrics: peak signal-to-noise ratio (PSNR) and structural similarity index (SSIM) [30]. As with existing works [2, 11, 12, 18, 24, 36, 38], we calculate the values on the luminance channel (i.e., the Y channel of the YCbCr channels converted from the RGB channels).

Additionally, for any/unknown scale factor experiments, we use the RealSR dataset from the NTIRE 2019 Real Super-Resolution Challenge¹. It is a novel dataset of real low- and high-resolution paired images. The training data consists of 60 real low- and high-resolution paired images, and the validation data contains 20 LR-HR pairs. It is noteworthy that the LR and HR images have the same size.

4.2 Implementation details
To obtain LR DIV2K training images, we downscale HR images with the scaling factors (×2, ×3, and ×4) using bicubic interpolation in MATLAB R2017a. The HR image patches with a size of 192 × 192 are randomly cropped from HR images as the input of our model, and the mini-batch size is set to 16. For data augmentation, we perform random horizontal flips and 90-degree rotations. Our model is trained with the ADAM optimizer with the momentum parameter $\beta_1 = 0.9$. The initial learning rate is set to $2 \times 10^{-4}$ and halved every $2 \times 10^{5}$ iterations. We set the number of IMDBs to 6 in our IMDN and IMDN_AS. We use the PyTorch framework to implement the proposed network on a desktop computer with a 4.2GHz Intel i7-7700K CPU, 64G RAM, and an NVIDIA TITAN Xp GPU (12G memory).

4.3 Model analysis
In this subsection, we investigate model parameters, the effectiveness of IMDB, the intermediate information collection scheme, and the adaptive cropping strategy.

4.3.1 Model parameters. To construct a lightweight SR model, the number of network parameters is vital. From Table 5, we can observe that our IMDN with fewer parameters achieves comparable or better performance compared with other state-of-the-art methods, such as EDSR-baseline (CVPRW’17), IDN (CVPR’18), SRMDNF (CVPR’18), and CARN (ECCV’18). We also visualize the trade-off between performance and model size in Figure 6. We can see that our IMDN achieves a better trade-off between performance and model size.

Figure 6: Trade-off between performance and number of parameters on the Set5 ×4 dataset. (Plot of PSNR (dB) against number of parameters (K) for SRCNN, FSRCNN, VDSR, LapSRN, DRRN, MemNet, IDN, CARN, IMDN, and EDSR-baseline.)

Table 2: Investigations of CCA module and IIC scheme. All results are for scale ×4 and are reported as PSNR / SSIM.

| PRM | CCA | IIC | Params | Set5 | Set14 | BSD100 | Urban100 | Manga109 |
|-----|-----|-----|--------|------|-------|--------|----------|----------|
| ✗ | ✗ | ✗ | 510K | 31.86 / 0.8901 | 28.43 / 0.7775 | 27.45 / 0.7320 | 25.63 / 0.7711 | 29.92 / 0.9003 |
| ✓ | ✗ | ✗ | 480K | 32.01 / 0.8927 | 28.49 / 0.7792 | 27.50 / 0.7338 | 25.81 / 0.7773 | 30.16 / 0.9038 |
| ✓ | ✓ | ✗ | 482K | 32.10 / 0.8934 | 28.51 / 0.7794 | 27.52 / 0.7341 | 25.89 / 0.7793 | 30.25 / 0.9050 |
| ✓ | ✓ | ✓ | 499K | 32.11 / 0.8934 | 28.52 / 0.7797 | 27.53 / 0.7342 | 25.90 / 0.7797 | 30.28 / 0.9054 |

Table 3: Comparison of the original channel attention (CA) and the presented contrast-aware channel attention (CCA).

| Module | Set5 | Set14 | BSD100 | Urban100 |
|--------|------|-------|--------|----------|
| IMDN_basic_B4 + CA | 32.0821 | 28.5086 | 27.5124 | 25.8829 |
| IMDN_basic_B4 + CCA | 32.0964 | 28.5118 | 27.5185 | 25.8916 |

Figure 7: The structure of IMDN_basic_B4.

4.3.2 Ablation studies of CCA module and IIC scheme. To quickly validate the effectiveness of the contrast-aware attention (CCA) module and the intermediate information collection (IIC) scheme, we adopt 4 IMDBs to conduct the following ablation study; this model is named IMDN_B4. When the CCA module and IIC scheme are removed, IMDN_B4 becomes IMDN_basic_B4, as illustrated in Figure 7. From Table 2, we find that the CCA module leads to a performance improvement (PSNR: +0.09 dB, SSIM: +0.0012 for ×4 Manga109) while adding only 2K parameters (an increase of 0.4%). The results compared with the CA module are given in Table 3. To study the efficiency of PRM in IMDB, we replace it with three cascaded 3 × 3 convolution layers (64 channels) and remove the final 1 × 1 convolution (used for fusion). The compared results are given in Table 2.
Although this network has more parameters (510K), its performance is much lower than that of our IMDN_basic_B4 (480K), especially on the Urban100 and Manga109 datasets.

Table 4: Quantitative evaluation of VDSR and our IMDN_AS in PSNR, SSIM, LPIPS, running time, and memory occupation.

| Method | PSNR | SSIM | LPIPS [35] | Time | Memory |
|---|---|---|---|---|---|
| VDSR [12] | 28.75 | 0.8439 | 0.2417 | 0.0290 | 7,855M |
| IMDN_AS | 29.35 | 0.8595 | 0.2147 | 0.0041 | 3,597M |

4.3.3 Investigation of ACS. To verify the efficiency of the proposed adaptive cropping strategy (ACS), we use RealSR training images to train VDSR [12] and our IMDN_AS. The results, evaluated on the RealSR RGB validation dataset, are shown in Table 4. We can easily observe that the presented IMDN_AS achieves better performance in terms of image quality, execution speed, and memory footprint. Accordingly, this also suggests that the proposed ACS is effective for addressing the SR problem at any scale.

¹ http://www.vision.ee.ethz.ch/ntire19/

4.4 Comparison with state-of-the-arts

We compare our IMDN with 11 state-of-the-art methods: SRCNN [4, 5], FSRCNN [6], VDSR [12], DRCN [13], LapSRN [14], DRRN [24], MemNet [25], IDN [11], EDSR-baseline [18], SRMDNF [34], and CARN [2]. Table 5 shows quantitative comparisons for ×2, ×3, and ×4 SR. Our IMDN performs favorably against the other compared approaches on most datasets, especially at the scaling factor of ×2.

Figure 8 shows ×2, ×3 and ×4 visual comparisons on the Set5 and Urban100 datasets. For the "img_67" image from Urban100, we can see that our method recovers the grid structure better than the others. This also demonstrates the effectiveness of our IMDN.

4.5 Running time

4.5.1 Complexity analysis. As the proposed IMDN mainly consists of convolutions, the total number of parameters can be computed as

$$\mathrm{Params} = \sum_{l=1}^{L} \Big( \underbrace{n_{l-1} \cdot n_l \cdot f_l^2}_{\text{conv}} + \underbrace{n_l}_{\text{bias}} \Big), \tag{9}$$

where $l$ is the layer index, $L$ denotes the total number of layers, and $f$ represents the spatial size of the filters.
The number of convolutional kernels belonging to the $l$-th layer is $n_l$, and the number of its input channels is $n_{l-1}$. Suppose that the spatial size of the output feature maps is $m_l \times m_l$; the time complexity can then be roughly calculated by

$$O\Big( \sum_{l=1}^{L} n_{l-1} \cdot n_l \cdot f_l^2 \cdot m_l^2 \Big). \tag{10}$$

We assume that the size of the HR image is $m \times m$, and then the computational costs can be calculated by Equation 10 (see Table 7).

4.5.2 Running Time. We use the official codes of the compared methods to test their running time in a feed-forward process. From Table 6, we can see that the actual execution time is related to the depth of the networks. Although EDSR has a large number of parameters (43M), it runs very fast. The only drawback is that it takes up more graphics memory. The main reason should be that the convolution computations within each layer are performed in parallel. In contrast, although RCAN has only 16M parameters, its depth is up to 415, which results in very slow inference speed. Compared with CARN [2] and EDSR-baseline [18], our IMDN achieves dominant performance in terms of memory usage and time consumption.

Table 5: Average PSNR/SSIM for scale factor ×2, ×3 and ×4 on datasets Set5, Set14, BSD100, Urban100, and Manga109. Best and second best results are highlighted and underlined.

| Method | Scale | Params | Set5 | Set14 | BSD100 | Urban100 | Manga109 |
|---|---|---|---|---|---|---|---|
| Bicubic | ×2 | - | 33.66 / 0.9299 | 30.24 / 0.8688 | 29.56 / 0.8431 | 26.88 / 0.8403 | 30.80 / 0.9339 |
| SRCNN [4] | ×2 | 8K | 36.66 / 0.9542 | 32.45 / 0.9067 | 31.36 / 0.8879 | 29.50 / 0.8946 | 35.60 / 0.9663 |
| FSRCNN [6] | ×2 | 13K | 37.00 / 0.9558 | 32.63 / 0.9088 | 31.53 / 0.8920 | 29.88 / 0.9020 | 36.67 / 0.9710 |
| VDSR [12] | ×2 | 666K | 37.53 / 0.9587 | 33.03 / 0.9124 | 31.90 / 0.8960 | 30.76 / 0.9140 | 37.22 / 0.9750 |
| DRCN [13] | ×2 | 1,774K | 37.63 / 0.9588 | 33.04 / 0.9118 | 31.85 / 0.8942 | 30.75 / 0.9133 | 37.55 / 0.9732 |
| LapSRN [14] | ×2 | 251K | 37.52 / 0.9591 | 32.99 / 0.9124 | 31.80 / 0.8952 | 30.41 / 0.9103 | 37.27 / 0.9740 |
| DRRN [24] | ×2 | 298K | 37.74 / 0.9591 | 33.23 / 0.9136 | 32.05 / 0.8973 | 31.23 / 0.9188 | 37.88 / 0.9749 |
| MemNet [25] | ×2 | 678K | 37.78 / 0.9597 | 33.28 / 0.9142 | 32.08 / 0.8978 | 31.31 / 0.9195 | 37.72 / 0.9740 |
| IDN [11] | ×2 | 553K | 37.83 / 0.9600 | 33.30 / 0.9148 | 32.08 / 0.8985 | 31.27 / 0.9196 | 38.01 / 0.9749 |
| EDSR-baseline [18] | ×2 | 1,370K | 37.99 / 0.9604 | 33.57 / 0.9175 | 32.16 / 0.8994 | 31.98 / 0.9272 | 38.54 / 0.9769 |
| SRMDNF [34] | ×2 | 1,511K | 37.79 / 0.9601 | 33.32 / 0.9159 | 32.05 / 0.8985 | 31.33 / 0.9204 | 38.07 / 0.9761 |
| CARN [2] | ×2 | 1,592K | 37.76 / 0.9590 | 33.52 / 0.9166 | 32.09 / 0.8978 | 31.92 / 0.9256 | 38.36 / 0.9765 |
| IMDN (Ours) | ×2 | 694K | 38.00 / 0.9605 | 33.63 / 0.9177 | 32.19 / 0.8996 | 32.17 / 0.9283 | 38.88 / 0.9774 |
| Bicubic | ×3 | - | 30.39 / 0.8682 | 27.55 / 0.7742 | 27.21 / 0.7385 | 24.46 / 0.7349 | 26.95 / 0.8556 |
| SRCNN [4] | ×3 | 8K | 32.75 / 0.9090 | 29.30 / 0.8215 | 28.41 / 0.7863 | 26.24 / 0.7989 | 30.48 / 0.9117 |
| FSRCNN [6] | ×3 | 13K | 33.18 / 0.9140 | 29.37 / 0.8240 | 28.53 / 0.7910 | 26.43 / 0.8080 | 31.10 / 0.9210 |
| VDSR [12] | ×3 | 666K | 33.66 / 0.9213 | 29.77 / 0.8314 | 28.82 / 0.7976 | 27.14 / 0.8279 | 32.01 / 0.9340 |
| DRCN [13] | ×3 | 1,774K | 33.82 / 0.9226 | 29.76 / 0.8311 | 28.80 / 0.7963 | 27.15 / 0.8276 | 32.24 / 0.9343 |
| LapSRN [14] | ×3 | 502K | 33.81 / 0.9220 | 29.79 / 0.8325 | 28.82 / 0.7980 | 27.07 / 0.8275 | 32.21 / 0.9350 |
| DRRN [24] | ×3 | 298K | 34.03 / 0.9244 | 29.96 / 0.8349 | 28.95 / 0.8004 | 27.53 / 0.8378 | 32.71 / 0.9379 |
| MemNet [25] | ×3 | 678K | 34.09 / 0.9248 | 30.00 / 0.8350 | 28.96 / 0.8001 | 27.56 / 0.8376 | 32.51 / 0.9369 |
| IDN [11] | ×3 | 553K | 34.11 / 0.9253 | 29.99 / 0.8354 | 28.95 / 0.8013 | 27.42 / 0.8359 | 32.71 / 0.9381 |
| EDSR-baseline [18] | ×3 | 1,555K | 34.37 / 0.9270 | 30.28 / 0.8417 | 29.09 / 0.8052 | 28.15 / 0.8527 | 33.45 / 0.9439 |
| SRMDNF [34] | ×3 | 1,528K | 34.12 / 0.9254 | 30.04 / 0.8382 | 28.97 / 0.8025 | 27.57 / 0.8398 | 33.00 / 0.9403 |
| CARN [2] | ×3 | 1,592K | 34.29 / 0.9255 | 30.29 / 0.8407 | 29.06 / 0.8034 | 28.06 / 0.8493 | 33.50 / 0.9440 |
| IMDN (Ours) | ×3 | 703K | 34.36 / 0.9270 | 30.32 / 0.8417 | 29.09 / 0.8046 | 28.17 / 0.8519 | 33.61 / 0.9445 |
| Bicubic | ×4 | - | 28.42 / 0.8104 | 26.00 / 0.7027 | 25.96 / 0.6675 | 23.14 / 0.6577 | 24.89 / 0.7866 |
| SRCNN [4] | ×4 | 8K | 30.48 / 0.8628 | 27.50 / 0.7513 | 26.90 / 0.7101 | 24.52 / 0.7221 | 27.58 / 0.8555 |
| FSRCNN [6] | ×4 | 13K | 30.72 / 0.8660 | 27.61 / 0.7550 | 26.98 / 0.7150 | 24.62 / 0.7280 | 27.90 / 0.8610 |
| VDSR [12] | ×4 | 666K | 31.35 / 0.8838 | 28.01 / 0.7674 | 27.29 / 0.7251 | 25.18 / 0.7524 | 28.83 / 0.8870 |
| DRCN [13] | ×4 | 1,774K | 31.53 / 0.8854 | 28.02 / 0.7670 | 27.23 / 0.7233 | 25.14 / 0.7510 | 28.93 / 0.8854 |
| LapSRN [14] | ×4 | 502K | 31.54 / 0.8852 | 28.09 / 0.7700 | 27.32 / 0.7275 | 25.21 / 0.7562 | 29.09 / 0.8900 |
| DRRN [24] | ×4 | 298K | 31.68 / 0.8888 | 28.21 / 0.7720 | 27.38 / 0.7284 | 25.44 / 0.7638 | 29.45 / 0.8946 |
| MemNet [25] | ×4 | 678K | 31.74 / 0.8893 | 28.26 / 0.7723 | 27.40 / 0.7281 | 25.50 / 0.7630 | 29.42 / 0.8942 |
| IDN [11] | ×4 | 553K | 31.82 / 0.8903 | 28.25 / 0.7730 | 27.41 / 0.7297 | 25.41 / 0.7632 | 29.41 / 0.8942 |
| EDSR-baseline [18] | ×4 | 1,518K | 32.09 / 0.8938 | 28.58 / 0.7813 | 27.57 / 0.7357 | 26.04 / 0.7849 | 30.35 / 0.9067 |
| SRMDNF [34] | ×4 | 1,552K | 31.96 / 0.8925 | 28.35 / 0.7787 | 27.49 / 0.7337 | 25.68 / 0.7731 | 30.09 / 0.9024 |
| CARN [2] | ×4 | 1,592K | 32.13 / 0.8937 | 28.60 / 0.7806 | 27.58 / 0.7349 | 26.07 / 0.7837 | 30.47 / 0.9084 |
| IMDN (Ours) | ×4 | 715K | 32.21 / 0.8948 | 28.58 / 0.7811 | 27.56 / 0.7353 | 26.04 / 0.7838 | 30.45 / 0.9075 |

Table 6: Memory consumption (MB) and average inference time (second). Each dataset column reports Memory / Time.

| Method | Scale | Params | Depth | BSD100 | Urban100 | Manga109 |
|---|---|---|---|---|---|---|
| EDSR-baseline [18] | ×4 | 1.6M | 37 | 665 / 0.00295 | 2,511 / 0.00242 | 1,219 / 0.00232 |
| EDSR [18] | ×4 | 43M | 69 | 1,531 / 0.00580 | 8,863 / 0.00416 | 3,703 / 0.00380 |
| RDN [38] | ×4 | 22M | 150 | 1,123 / 0.01626 | 3,335 / 0.01325 | 2,257 / 0.01300 |
| RCAN [36] | ×4 | 16M | 415 | 777 / 0.09174 | 2,631 / 0.55280 | 1,343 / 0.72250 |
| CARN [2] | ×4 | 1.6M | 34 | 945 / 0.00278 | 3,761 / 0.00305 | 2,803 / 0.00383 |
| IMDN (Ours) | ×4 | 0.7M | 34 | 671 / 0.00285 | 1,155 / 0.00284 | 895 / 0.00279 |

Figure 8: Visual comparisons of IMDN with other SR methods on Set5 and Urban100 datasets. The PSNR/SSIM values shown under the image panels are:

| Method | Urban100 (2×): img_67 | Urban100 (3×): img_76 |
|---|---|---|
| VDSR [12] | 24.10/0.9537 | 24.75/0.8284 |
| DRCN [13] | 23.64/0.9493 | 24.82/0.8277 |
| DRRN [24] | 24.73/0.9594 | 24.80/0.8312 |
| LapSRN [14] | 23.80/0.9527 | 24.89/0.8337 |
| MemNet [25] | 24.98/0.9613 | 24.97/0.8359 |
| IDN [11] | 24.68/0.9594 | 24.95/0.8332 |
| EDSR-baseline [18] | 26.01/0.9695 | 25.85/0.8565 |
| CARN [2] | 25.96/0.9692 | 25.92/0.8583 |
| IMDN (Ours) | 27.75/0.9773 | 26.19/0.8610 |

Table 7: The computational costs. For conciseness, we omit $m^2$. Least and second least computational costs are highlighted and underlined.

| Scale | LapSRN [14] | IDN [11] | EDSR-b [18] | CARN [2] | IMDN |
|---|---|---|---|---|---|
| ×2 | 112K | 175K | 341K | 157K | 173K |
| ×3 | 76K | 75K | 172K | 90K | 78K |
| ×4 | 76K | 51K | 122K | 76K | 45K |

Figure 9: Trade-off between performance and running time on Set5 ×4 dataset. VDSR, DRCN, and LapSRN were implemented with MatConvNet, while DRRN and IDN employed the Caffe package. The rest (EDSR-baseline, CARN, and our IMDN) utilized PyTorch. [Plot of PSNR (dB) against execution time (sec) for VDSR, DRCN, LapSRN, DRRN_B1U9, IDN, EDSR-baseline, CARN, and IMDN.]
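As a rough illustration of the complexity analysis in Section 4.5.1, the following sketch evaluates Equation 9 (parameter count) and Equation 10 (multiply-add cost) for a list of convolution layers. The helper names `count_params` and `multiply_adds`, the `(n_in, n_out, f)` tuple layout, and the toy three-layer stack are assumptions made for this example; the stack is not the IMDN architecture, and the cost function assumes every layer produces an m × m feature map.

```python
from typing import List, Tuple

# One convolution layer as (n_{l-1}, n_l, f_l): input channels, output channels,
# filter size. This tuple layout is an illustrative choice, not from the paper.
Layer = Tuple[int, int, int]


def count_params(layers: List[Layer]) -> int:
    """Equation 9: n_{l-1} * n_l * f_l^2 conv weights plus n_l biases per layer."""
    return sum(n_in * n_out * f * f + n_out for n_in, n_out, f in layers)


def multiply_adds(layers: List[Layer], m: int) -> int:
    """Equation 10, assuming every layer outputs an m x m feature map."""
    return sum(n_in * n_out * f * f * m * m for n_in, n_out, f in layers)


# Toy stack (hypothetical): 3x3 conv 3->64, 3x3 conv 64->64, 1x1 conv 64->64.
toy_layers = [(3, 64, 3), (64, 64, 3), (64, 64, 1)]

print(count_params(toy_layers))      # 42880
print(multiply_adds(toy_layers, 1))  # 42688, i.e. the cost with m^2 omitted
```

Calling `multiply_adds(toy_layers, 1)` mirrors the convention of Table 7, where the common factor $m^2$ is left out so that only the per-pixel cost of the layers is compared.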
For more intuitive comparisons with other approaches, we provide the trade-off between running time and performance on the Set5 dataset for ×4 SR in Figure 9. It shows that our IMDN achieves comparable execution time and the best PSNR value.

5 CONCLUSION

In this paper, we propose an information multi-distillation network for lightweight and accurate single image super-resolution. We construct a progressive refinement module to extract hierarchical features step by step. By cooperating with the proposed contrast-aware channel attention module, the SR performance is significantly and steadily improved. Additionally, we present the adaptive cropping strategy to solve the SR problem of an arbitrary scale factor, which is critical for the application of SR algorithms in real scenes. Numerous experiments have shown that the proposed method achieves a commendable balance between factors affecting practical use, including visual quality, execution speed, and memory consumption. In the future, this approach will be explored to facilitate other image restoration tasks such as image denoising and enhancement.

ACKNOWLEDGMENTS

This work was supported in part by the National Natural Science Foundation of China under Grants 61432014, 61772402, U1605252, 61671339 and 61871308, in part by the National Key Research and Development Program of China under Grant 2016QY01W0200, and in part by the National High-Level Talents Special Support Program of China under Grant CS31117200001.

REFERENCES

[1] Eirikur Agustsson and Radu Timofte. 2017. NTIRE 2017 Challenge on Single Image Super-Resolution: Dataset and Study. In IEEE Conference on Computer Vision and Pattern Recognition Workshop (CVPRW). 126–135.
[2] Namhyuk Ahn, Byungkon Kang, and Kyung-Ah Sohn. 2018. Fast, Accurate, and Lightweight Super-Resolution with Cascading Residual Network. In European Conference on Computer Vision (ECCV). 252–268.
[3] Marco Bevilacqua, Aline Roumy, Christine Guillemot, and Marie Line Alberi-Morel. 2012. Low-complexity single-image super-resolution based on nonnegative neighbor embedding. In British Machine Vision Conference (BMVC).
[4] Chao Dong, Chen Change Loy, Kaiming He, and Xiaoou Tang. 2014. Learning a deep convolutional network for image super-resolution. In European Conference on Computer Vision (ECCV). 184–199.
[5] Chao Dong, Chen Change Loy, Kaiming He, and Xiaoou Tang. 2016. Image super-resolution using deep convolutional networks. IEEE Transactions on Pattern Analysis and Machine Intelligence 38, 2 (2016), 295–307.
[6] Chao Dong, Chen Change Loy, and Xiaoou Tang. 2016. Accelerating the super-resolution convolutional neural network. In European Conference on Computer Vision (ECCV). 391–407.
[7] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. 2016. Deep residual learning for image recognition. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 770–778.
[8] Jie Hu, Li Shen, and Gang Sun. 2018. Squeeze-and-Excitation Networks. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 7132–7141.
[9] Gao Huang, Zhuang Liu, Laurens van der Maaten, and Kilian Q Weinberger. 2017. Densely connected convolutional networks. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 4700–4708.
[10] Jia-Bin Huang, Abhishek Singh, and Narendra Ahuja. 2015. Single image super-resolution from transformed self-exemplars. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 5197–5206.
[11] Zheng Hui, Xiumei Wang, and Xinbo Gao. 2018. Fast and Accurate Single Image Super-Resolution via Information Distillation Network. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 723–731.
[12] Jiwon Kim, Jung Kwon Lee, and Kyoung Mu Lee. 2016. Accurate image super-resolution using very deep convolutional networks. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 1646–1654.
[13] Jiwon Kim, Jung Kwon Lee, and Kyoung Mu Lee. 2016. Deeply-recursive convolutional network for image super-resolution. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 1637–1645.
[14] Wei-Sheng Lai, Jia-Bin Huang, Narendra Ahuja, and Ming-Hsuan Yang. 2017. Deep laplacian pyramid networks for fast and accurate super-resolution. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 624–632.
[15] Wei-Sheng Lai, Jia-Bin Huang, Narendra Ahuja, and Ming-Hsuan Yang. 2018. Fast and Accurate Image Super-Resolution with Deep Laplacian Pyramid Networks. IEEE Transactions on Pattern Analysis and Machine Intelligence (2018).
[16] Christian Ledig, Lucas Theis, Ferenc Huszár, Jose Caballero, and Andrew Cunningham. 2017. Photo-Realistic single image super-resolution using a generative adversarial network. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 4681–4690.
[17] Zhen Li, Jinglei Yang, Zheng Liu, Xiaoming Yang, Gwanggil Jeon, and Wei Wu. 2019. Feedback Network for Image Super-Resolution. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR).
[18] Bee Lim, Sanghyun Son, Heewon Kim, Seungjun Nah, and Kyoung Mu Lee. 2017. Enhanced Deep Residual Networks for Single Image Super-Resolution. In IEEE Conference on Computer Vision and Pattern Recognition Workshop (CVPRW). 136–144.
[19] Ding Liu, Bihan Wen, Yuchen Fan, Chen Change Loy, and Thomas S Huang. 2018. Non-Local Recurrent Network for Image Restoration. In Advances in Neural Information Processing Systems (NeurIPS). 1680–1689.
[20] David Martin, Charless Fowlkes, Doron Tal, and Jitendra Malik. 2001. A database of human segmented natural images and its application to evaluating segmentation algorithms and measuring ecological statistics. In International Conference on Computer Vision (ICCV). 416–423.
[21] Yusuke Matsui, Kota Ito, Yuji Aramaki, Azuma Fujimoto, Toru Ogawa, Toshihiko Yamasaki, and Kiyoharu Aizawa. 2017. Sketch-based manga retrieval using manga109 dataset. Multimedia Tools and Applications 76, 20 (2017), 21811–21838.
[22] Wenzhe Shi, Jose Caballero, Ferenc Huszár, Johannes Totz, Andrew P. Aitken, Rob Bishop, Daniel Rueckert, and Zehan Wang. 2016. Real-time single image and video super-resolution using an efficient sub-pixel convolutional neural network. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 1874–1883.
[23] Karen Simonyan and Andrew Zisserman. 2015. Very Deep Convolutional Networks for Large-Scale Image Recognition. In International Conference for Learning Representations (ICLR).
[24] Ying Tai, Jian Yang, and Xiaoming Liu. 2017. Image super-resolution via deep recursive residual network. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 3147–3155.
[25] Ying Tai, Jian Yang, Xiaoming Liu, and Chunyan Xu. 2017. MemNet: A Persistent Memory Network for Image Restoration. In IEEE International Conference on Computer Vision (ICCV). 4539–4547.
[26] Radu Timofte, Shuhang Gu, Jiqing Wu, Luc Van Gool, Lie Zhang, and et al. 2017. NTIRE 2018 Challenge on Single Image Super-Resolution: Methods and Results. In IEEE Conference on Computer Vision and Pattern Recognition Workshop (CVPRW). 965–976.
[27] Tong Tong, Gen Li, Xiejie Liu, and Qinquan Gao. 2017. Image Super-Resolution Using Dense Skip Connections. In IEEE International Conference on Computer Vision (ICCV). 4799–4807.
[28] Xiaolong Wang, Ross Girshick, Abhinav Gupta, and Kaiming He. 2018. Non-local Neural Networks. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 7794–7803.
[29] Yifan Wang, Federico Perazzi, Brian McWilliams, Alexander Sorkine-Hornung, Olga Sorkin-Hornung, and Christopher Schroers. 2018. A Fully Progressive Approach to Single-Image Super-Resolution. In IEEE Conference on Computer Vision and Pattern Recognition Workshop (CVPRW). 977–986.
[30] Zhou Wang, A.C. Bovik, H.R. Sheikh, and E.P. Simoncelli. 2004. Image quality assessment: from error visibility to structural similarity. IEEE Transactions on Image Processing 13, 4 (2004), 600–612.
[31] Zhaowen Wang, Ding Liu, Jianchao Yang, Wei Han, and Thomas Huang. 2015. Deep networks for image super-resolution with sparse prior. In IEEE International Conference on Computer Vision (ICCV). 370–378.
[32] Sanghyun Woo, Jongchan Park, Joon-Young Lee, and In So Kweon. 2018. CBAM: Convolutional Block Attention Module. In The European Conference on Computer Vision (ECCV). 3–19.
[33] Roman Zeyde, Michael Elad, and Matan Protter. 2010. On single image scale-up using sparse-representations. In International Conference on Curves and Surfaces (ICCS). 711–730.
[34] Kai Zhang, Wangmeng Zuo, and Lei Zhang. [n. d.]. Learning a Single Convolutional Super-Resolution Network for Multiple Degradations. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 3262–3271.
[35] Richard Zhang, Phillip Isola, Alexei A. Efros, Eli Shechtman, and Oliver Wang. 2018. The Unreasonable Effectiveness of Deep Features as a Perceptual Metric. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 586–595.
[36] Yulun Zhang, Kunpeng Li, Kai Li, Lichen Wang, Bineng Zhong, and Yun Fu. 2018. Image Super-Resolution Using Very Deep Residual Channel Attention Networks. In European Conference on Computer Vision (ECCV). 286–301.
[37] Yulun Zhang, Kunpeng Li, Kai Li, Bineng Zhong, and Yun Fu. 2019. Residual Non-local Attention Networks for Image Restoration. In International Conference on Learning Representations (ICLR).
[38] Yulun Zhang, Yapeng Tian, Yu Kong, Bineng Zhong, and Yun Fu. 2018. Residual Dense Network for Image Super-Resolution. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 2472–2481.
