SpeechYOLO: Detection and Localization of Speech Objects

In this paper, we propose to apply object detection methods from the vision domain on the speech recognition domain, by treating audio fragments as objects. More specifically, we present SpeechYOLO, which is inspired by the YOLO algorithm for object …

Authors: Yael Segal, Tzeviya Sylvia Fuchs, Joseph Keshet

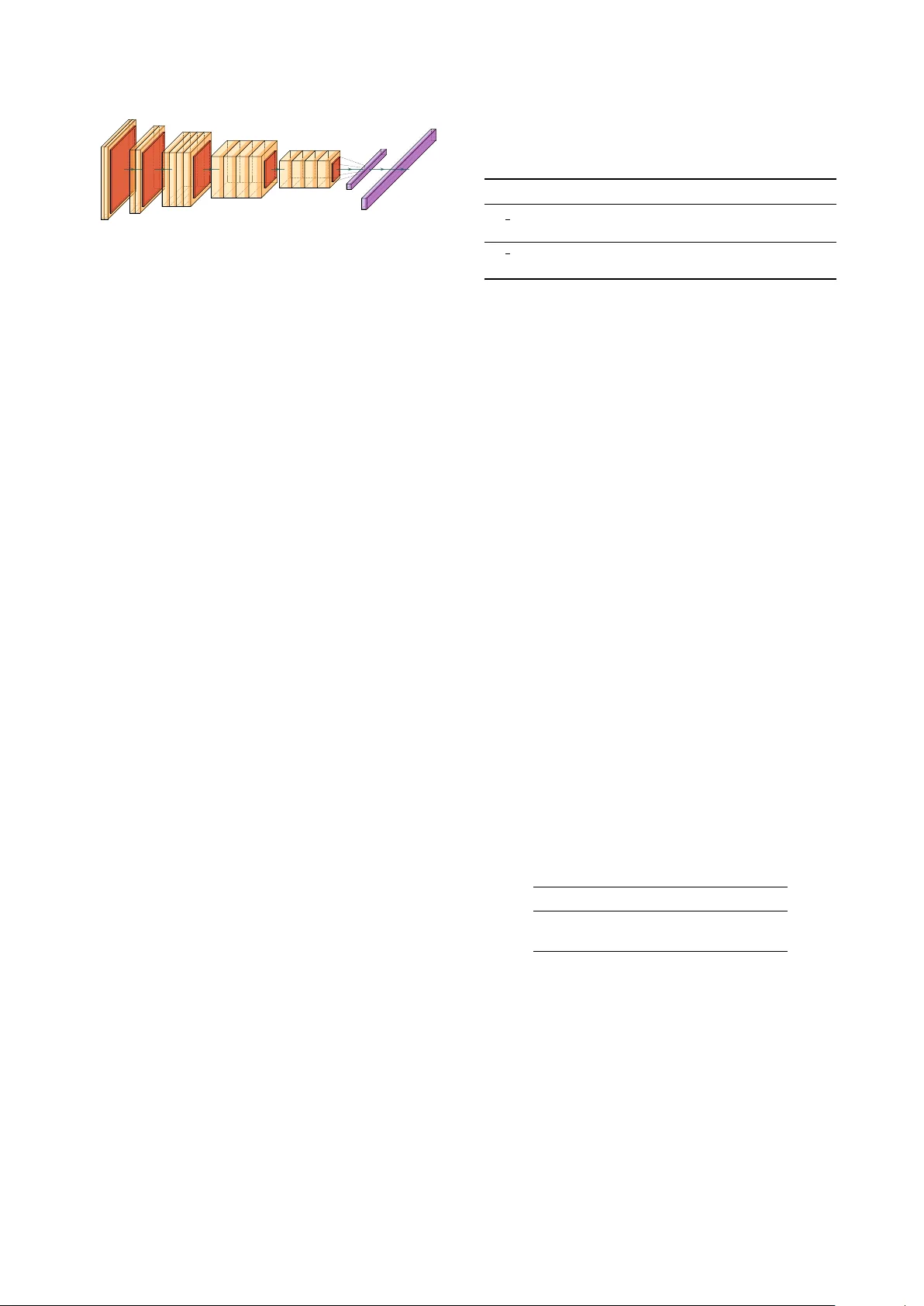

SpeechY OLO: Detection and Localization of Speech Objects Y ael Se gal * , Tzeviya Sylvia Fuc hs * , J oseph Keshet Bar-Ilan Uni versity , Ramat Gan, Israel { segalya, fuchstz, jkeshet } @cs.biu.ac.il Abstract In this paper, we propose to apply object detection methods from the vision domain on the speech recognition domain, by treating audio fragments as objects. More specifically , we present SpeechY OLO, which is inspired by the Y OLO algo- rithm [1] for object detection in images. The goal of SpeechY - OLO is to localize boundaries of utterances within the input signal, and to correctly classify them. Our system is composed of a con volutional neural network, with a simple least-mean- squares loss function. W e e valuated the system on several ke y- word spotting tasks, that include corpora of read speech and spontaneous speech. Our system compares fa vorably with other algorithms trained for both localization and classification. Index T erms : keyw ord spotting, event detection, speech pro- cessing, con volutional neural networks 1. Introduction Recently automatic speech recognition (ASR) has became ubiq- uitous in many applications. While ASR systems like Deep- Speech 2 [2] and wav2letter [3] reached amazing results in tran- scribing read and con versational speech, sometimes it is desired to spot and locate a predefined small set of words with ex- tremely high accurac y . For example, services like Google Now or Apple’ s Siri can be activated by pronouncing “OK Google” or “Hey Siri”, respectiv ely [4, 5]. It is also used by intelligence ser- vices to accurately find specific keywords while monitoring sus- pected phone calls. The task of detecting and localizing words can be used to automatically analyze the diadochokinetic artic- ulatory task [6, 7] that is used to analyze pathological speech and hence cannot be performed ef fectiv ely with ASR systems. In this work we present an end-to-end system that goes from a speech signal to the transcription and alignment of giv en key- words (this is in contrast to the spoken term detection task that makes predictions on ke ywords that it has not been trained on). Our architecture performs both detection and localization of these predefined ke ywords. Previous works typically focus on only one of these two challenges. Namely , algorithms w ould either predict what w ords appear in a gi ven utterance, thus per- forming detection [4, 5], or are given the audio signal and the target transcription and align them, thus performing localiza- tion mostly using forced alignment [8, 9]. Keshet et al. [10] proposed to use the confidence of a phoneme aligner and an ex- haustiv e search to detect and localize terms that are given by their phonetic content. In the vision domain, object detection algorithms combine the two aforementioned tasks: detection of the desired object and its localization in the image. Specifically , the YOLO [1] and SSD [11] algorithms identify objects in an image using bound- ing boxes. Inspired by the idea of using bounding boxes for object detection in images, we propose to identify speech ob- jects in an audio signal. More specifically , consider the word * These authors contributed equally to this work classification task as a form of object detection for a speech sig- nal. Palaz et al. [12] presented w ork that is most similar to ours. Their algorithm was trained to jointly locate and classify words. Howe ver , they used a weakly supervised setting and did not use word alignments, and hence were unable to perfectly predict the whole time-span of the predicted words. In our work, howe ver , our goal is both to detect and to locate the entire span of e very word, so both tasks’ results are strongly accurate. This paper is organized as follows. In Section 2 we for- mally introduce the classification and localization problem set- ting. W e present our proposed method in Section 3, and in Sec- tion 4 we show experimental results and various applications of our derived method. Finally , concluding remarks and future directions are discussed in Section 5. 2. Problem Setting The input to our system is a speech utterance. The input speech utterance is represented as a series of acoustic feature vectors. Formally , let ¯ x = ( x 1 , . . . , x T ) denote the input speech ut- terance of a fixed duration T , where each x t ∈ R D is a D - dimensional vector for 0 ≤ t ≤ T . W e further define the lex- icon L = { k 1 , k 2 , ..., k L } to be the target set of L keywords or terms that may appear in the audio signal ¯ x . Note that the utterance does not necessarily contain any of these k eywords or may contain se veral of them. In our setting the speech objects are the L ke ywords, but the model proposed here is not limited to specific keywords and can be used to detect and localize any audio or speech object, e.g., the syllables /pa/, /ta/, and /ka/ in the diadochokinetic articulatory task. Our goal is to spot all the occurrences of the ke ywords in a giv en utterance ¯ x and estimate their corresponding locations. W e assume that N keywords were pronounced in the utter- ance ¯ x , where N ≥ 0 . Each of these N e vents is defined by its lexical content and its time location. Each such ev ent e is defined formally by the the tuple e = ( k, t k start , t k end ) , where k ∈ L is the actual keyw ord that was pronounced, and t k start and t k end are its start and end times, respectively . Our goal is therefore to find all the e vents in an utterance, so that for each ev ent the correct object k is identified as well as its beginning and end times. 3. Model As previously mentioned, our model is inspired by the YOLO model [1]. W e no w describe our model formally . Our notation is schematically depicted in Fig. 1. W e assume that the input utterance ¯ x is of a fix ed size T (1 second in our setting). W e di- vide the input-time to C non-overlapping equal sections called cells ( C = 6 in our setting). Each cell is in charge of detecting a single event (at most) in its time-span. That is, the i -th cell, denoted c i , is in charge of the portion [ t c i , t c i +1 − 1] , where t c i is the start-time of the cell and t c i +1 − 1 is its end-time, for k ke yw ord t AAAB73icbZDJSgNBEIZ74hbjFvXopTEInsJMFPRmQA8eI5gFkiH0dGqSJj2L3TVCGPISXsQF8eoj+BrefBt7khw08YeGj/+voqvKi6XQaNvfVm5peWV1Lb9e2Njc2t4p7u41dJQoDnUeyUi1PKZBihDqKFBCK1bAAk9C0xteZnnzHpQWUXiLoxjcgPVD4QvO0FitzhVIZBS7xZJdtieii+DMoHTx+ZTpudYtfnV6EU8CCJFLpnXbsWN0U6ZQcAnjQifREDM+ZH1oGwxZANpNJ/OO6ZFxetSPlHkh0on7uyNlgdajwDOVAcOBns8y87+snaB/7qYijBOEkE8/8hNJMaLZ8rQnFHCUIwOMK2FmpXzAFONoTlQwR3DmV16ERqXsnJQrN3apekqmypMDckiOiUPOSJVckxqpE04keSAv5NW6sx6tN+t9WpqzZj375I+sjx+tKJQl t AAAB6HicbZDLSsNAFIZP6q3WW9Wlm2ARXJWkCrqz4MZlC/YCbSiT6aQdO5mEmROhhD6BGxeK1KVv4Wu4822ctF1o6w8DH/9/DnPO8WPBNTrOt5VbW9/Y3MpvF3Z29/YPiodHTR0lirIGjUSk2j7RTHDJGshRsHasGAl9wVr+6DbLW49MaR7JexzHzAvJQPKAU4LGqmOvWHLKzkz2KrgLKN18TjO913rFr24/oknIJFJBtO64ToxeShRyKtik0E00iwkdkQHrGJQkZNpLZ4NO7DPj9O0gUuZJtGfu746UhFqPQ99UhgSHejnLzP+yToLBtZdyGSfIJJ1/FCTCxsjOtrb7XDGKYmyAUMXNrDYdEkUomtsUzBHc5ZVXoVkpuxflSt0pVS9hrjycwCmcgwtXUIU7qEEDKDB4ghd4tR6sZ+vNms5Lc9ai5xj+yPr4Af3ckXk= box c i AAAB6nicbVDLSgNBEOyNrxhfMR69DAbBU9iNgh4DevAY0TwgWcLspDcZMju7zMwKIeQTvHhQxKv4F/6BJ2/+jZPHQRMLGoqqbrq7gkRwbVz328msrK6tb2Q3c1vbO7t7+f1CXcepYlhjsYhVM6AaBZdYM9wIbCYKaRQIbASDy4nfuEeleSzvzDBBP6I9yUPOqLHSLevwTr7oltwpyDLx5qRYyX5+FK7ej6qd/Fe7G7M0QmmYoFq3PDcx/ogqw5nAca6dakwoG9AetiyVNELtj6anjsmxVbokjJUtachU/T0xopHWwyiwnRE1fb3oTcT/vFZqwgt/xGWSGpRstihMBTExmfxNulwhM2JoCWWK21sJ61NFmbHp5GwI3uLLy6ReLnmnpfKNTeMMZsjCIRzBCXhwDhW4hirUgEEPHuAJnh3hPDovzuusNePMZw7gD5y3Hy9FkKQ= cell b i,j AAAB7nicbVC7SgNBFL0TXzG+ohYWNoNBsJCwGwUtAzbaRTAPSJY4O5lNxszOLjOzQljyETYWitj6B/6HnT9g6Tc4eRSaeODC4Zx7ufcePxZcG8f5RJmFxaXllexqbm19Y3Mrv71T01GiKKvSSESq4RPNBJesargRrBErRkJfsLrfvxj59XumNI/kjRnEzAtJV/KAU2KsVPfbKT++G7bzBafojIHniTslhfLe1df3e+a20s5/tDoRTUImDRVE66brxMZLiTKcCjbMtRLNYkL7pMualkoSMu2l43OH+NAqHRxEypY0eKz+nkhJqPUg9G1nSExPz3oj8T+vmZjg3Eu5jBPDJJ0sChKBTYRHv+MOV4waMbCEUMXtrZj2iCLU2IRyNgR39uV5UisV3ZNi6dqmcQoTZGEfDuAIXDiDMlxCBapAoQ8P8ATPKEaP6AW9TlozaDqzC3+A3n4A93iS9w== Figure 1: The notation used in our paper . The ke ywor d “star” is found within cell c i . One of the timing boxes b i,j is depicted with a shaded box, and it defines the timing of the ke ywor d r el- ative to the cell’ s boundaries. 1 ≤ i ≤ C . The cell estimates the probability Pr( k | c i ) of each keyw ord k ∈ L to be uttered within its time-span. W e denote the estimation of this probability by p c i ( k ) . The cell is also in charge of localizing the detected ev ent. The localization is defined relative to the cell’ s boundaries. Specifically , the location of the ev ent is defined by the time t ∈ [ t c i , t c i +1 − 1] , which is the center of the ev ent relativ e to the cell’ s boundaries, and ∆ t , the duration of the e vent. Note that ∆ t can be longer than the time-span of the cell. Using this notation the ev ent spans [ t c i + t − ∆ t/ 2 , t c i + t + ∆ t/ 2] . In order to localize effectiv ely , each cell is associated with B timing boxes (called bounding boxes in the YOLO litera- ture). Each box b i,j of the cell c i tries to independently lo- calize the e vent and estimate the probability of the timing gi ven the presumed keyword, Pr( t, ∆ t | k ) . It is defined by the tuple ( t j , ∆ t j , p b i,j ) , where p b i,j is the confidence score of the lo- calization t, ∆ t and it can be considered as an estimation of the probability Pr( t, ∆ t | k , c i ) . W e now turn to describe the model’ s inference. The infer- ence for each cell is performed independently . For the i -th cell, c i , the chosen e vent is composed of the ke yword k 0 and the tim- ing t 0 , ∆ t 0 that maximizes the conditional probabilities: ( k 0 , t 0 , ∆ t 0 ) = arg max k,t, ∆ t Pr( k , t, ∆ t | c i ) (1) = arg max k,t, ∆ t Pr( k | c i ) Pr(t , ∆t | k , c i ) . (2) The first conditional probability in Eq. (2) is p c i ( k ) , whereas the second conditional probability is p b i,j of box b i,j . Since there are L ke ywords and B boxes the search space reduces to L × B elements, hence it is very efficient: max k ∈L max 1 ≤ j ≤ B p c i ( k ) p b i,j . Finally , the event is considered to exist in the cell if its condi- tional probability from abov e is greater than a threshold, θ . W e conclude this section by describing the training proce- dure. Our model, SpeechY OLO , is implemented as a conv olu- tional neural network. The initial con volution layers of the net- work e xtract features from the utterance while fully connected layers are later added to predict the output probabilities and co- ordinates. Our network architecture is inspired by PyT orch’ s [13] implementation of the VGG19 [14] architecture 1 , and is presented in Section 4. 1 https://github.com/pytorch/vision/blob/master/torchvision/models/vgg.py The training set is composed of examples, where each example is an event that is composed of the tuple ( ¯ x , k , t k start , t k end ) . W e denote by 1 k i the indicator that is 1 if the keyw ord k was uttered within the cell c i , and 0 otherwise. Formally , 1 k i = 1 t c i ≤ t k ≤ t c i +1 − 1 0 otherwise . where t k is defined as the center of ev ent k . When we would like to indicate that the keyword is not in the cell we will use the notation (1 − 1 k i ) . The model’ s loss function is defined as a sum ov er se veral terms, each of which took into consideration a different aspect of the model, as a follows: ¯ ` ( ¯ x , k , t k start , t k end ) = λ 1 C X i =1 B X j =1 1 k i t j − t 0 j 2 + λ 2 C X i =1 B X j =1 1 k i q ∆ t j − q ∆ t 0 j 2 + C X i =1 B X j =1 1 k i 1 − p b i,j 2 + λ 3 C X i =1 B X j =1 1 − 1 k i 0 − p b i,j 2 + C X i =1 X k ∈L 1 k i 1 − p c i ( k ) 2 . W e would like to note that our system is inspired by the first version of Y OLO [1]. Further research on YOLO has been conducted in [15] and [16]. It seems, ho wev er, that most expan- sions made to their algorithm are irrelev ant for our domain. In [15] the authors’ main contributions are the addition of anchor boxes , which defines constraints on the shapes of the bounding boxes. This lead to specifying a separate class probability v alue for every bounding box. This is relev ant when dealing with a multidimensional domain, and is less relevant for speech. In their paper , they additionally suggest the usage of a fully conv o- lutional network, i.e. replacing the fully connected layers with con volutions. W e found that this yielded inferior results for our dataset. In [16], the main de velopment was the shift from mul- ticlass classification to multilabel classification. This changed the loss function from using regression to using cross entropy instead. This too is irrelev ant for our domain. 4. Experiments W e used data from the LibriSpeech corpus [17], which was de- riv ed from read audio books. The training set consisted of 960 hours of speech. This corpus had two test sets: test clean and test other , which summed up to 5 hours of speech. The first set was composed of high quality utterances and the second set was composed of lower quality utterances. The audio files were aligned to their giv en transcriptions using the Montreal Forced Aligner (MF A) [9]. W e extracted the Short-T ime Fourier Trans- form (STFT) as features to the sound files using the librosa package [18]. These features were computed on a 20 ms win- dow , with a shift of 10 ms. A tar get ev ent of the input speech signal can be defined as any discrete part of an utterance that is discernible to a human 64 64 conv1 128 128 conv2 256 256 256 256 conv3 512 512 512 512 conv4 512 512 512 512 conv5 1 512 fc6 + ReLU 1 L + 3 · B C fc7 Figure 2: The detection network has 16 con volutional layers followed by 2 fully connected layers. Every con volutional layer is followed by BatchNorm and ReLU. W e pretr ained the convo- lutional layers on the Goo gle Command classification task and then r eplace the final layer for detection and localization. annotator . Hence, events could be defined as a set of words, phrases, phones, etc. It w as assumed that only ev ents from the selected lexicon L are available during training time. W e used a conv olutional neural network that is similar to the VGG19 architecture. It had 16 conv olutional layers and 2 fully connected layers, and the final layer predicted both class probabilities and timing boxes’ coordinates. W e denote this ar- chitecture as V GG 19 ∗ . The model is described in detail in Fig- ure 2. For comparison, we also implemented a version of the VGG11 model (denoted by V GG 11 ∗ ), which had less con vo- lutional layers. Both models were trained using Adam [19] and a learning rate of 10 − 3 . W e pretrained our con volutional net- work using the Google Command dataset [20] for L = 30 . W e later replaced the last linear layer in order to perform prediction on a dif ferent number of events, and further trained the network. The size of this new final layer is C × ( L + 3 · B ) . W e di vided our experiments into two parts: in the first, we ev aluated SpeechY OLO’ s capability to correctly predict and lo- calize words within an utterance, and compared its performance to other similar systems. Then, we evaluated SpeechY OLO for the keyw ord spotting task on various domains. 4.1. W ord prediction and localization In this subsection, we e valuated the system’ s capability to learn word detection and localization. W e defined the target e vents to be the 1000 most common words in the training set ( L = 1000 ). It turned out that the a verage utterance time of a single word in our corpus was approximately 0.2 seconds. T o assure that the timing cells properly cov ered the span of the speech signal, we chose to use C = 6 timing cells per utterance of T = 1 sec. W e arbitrarily set the number of timing boxes per cell to be B = 2 . W e chose the value of the threshold θ that maximizes the F1 score, which is defined as the harmonic mean of the preci- sion and recall. W e ev aluated the model’ s detection capabilities using Precision and Recall. Results are presented in T able 1. It seems that the proposed system was able to correctly detect most of the words, with V GG 19 ∗ outperforming V GG 11 ∗ , due to its size and enhanced expressi ve abilities. 4.1.1. Pr ediction and Localization Due to the uniqueness of our system’ s aim to both classify and localize words, it is challenging to find an equiv alent algorithm to justly compare with. Most other algorithms focus on either one of the tasks, b ut not on both. The system of P alaz, Synnae ve and Collobert [12] w as de veloped for weakly-supervised word recognition; that is, its aim is to perform w ord classification and find word position, while training with a BoW supervision. As T able 1: SpeechYOLO evaluations with two ar chitectur es on both of LibriSpeech’ s test sets. The thr eshold value that maxi- mized the F1 scor e was chosen ( θ = 0 . 4 ). precision recall F1 test clean V GG 11 ∗ 0.743 0.637 0.686 V GG 19 ∗ 0.836 0.779 0.807 test other V GG 11 ∗ 0.547 0.456 0.498 V GG 19 ∗ 0.697 0.553 0.617 in [21], we refer to this system as PSC. PSC receives the Mel Filterbanks coefficients as input fea- tures. Their architecture is composed of 10 con volutional lay- ers. The final con volution has 1000 output filters for e very time span, with every filter corresponding to a word k in the le xicon L . The idea is that the score for w ord k would be highest in the time span it occurred in. The system is trained using SGD with a learning rate of 10 − 5 . W e compared SpeechYOLO’ s prediction and localization abilities to PSC’ s, as shown in T able 2. W e calculated the F1 measure as before. The Actual accuracy measure was calcu- lated as described in [12], and measures localization as well as prediction. For PSC, the Actual accuracy was calculated as fol- lows: word detection was performed by thresholding the prob- ability of a word being present in the sequence. For ev ery word k that passed the chosen threshold, we chose the frame in which it recei ved the highest score. W e then assessed if this frame was located within the range of k stated by the ground truth align- ment. The closest equi valent of this measure for our model w as to choose this frame to be the center of the predicted timing box. This value w as in turn compared to the ground truth alignment. As before, the threshold θ was chosen to maximize the F1 mea- sure. SpeechYOLO clearly outperformed PSC for both the F1 score and the Actual accuracy measure. T o assess the strength of SpeechYOLO’ s localization abil- ity , we calculated both systems’ average intersection ov er union (IOU) value with the ground truth alignments. While SpeechY - OLO’ s IOU value clearly outperformed that of PSC, one must remember that PSC was not trained with aligned data. T able 2: Comparing SpeechYOLO and PSC [12]’s evaluations of the F1 scor e, Actual accuracy and avera ge IOU value. The thr eshold value that maximized the F1 scor e was chosen for ev- ery algorithm separately ( θ = 0 . 4 ). F1 Actual IOU SpeechY OLO 0.807 0.774 0.843 PSC 0.767 0.692 0.3 W e further checked the quality of SpeechY OLO’ s local- ization capability . T o do so, we compared SpeechYOLO with MF A, after both had been trained on LibriSpeech. W e tested them on the training set of the TIMIT corpus. TIMIT is a corpus of read speech, and presents a dif ferent linguistic context com- pared to LibriSpeech. The IOU measure was used to compare both algorithm’ s output alignments with TIMIT’ s given word alignments for the 1000 most common words in the LibriSpeech training set. In order to predict SpeechYOLO’ s IOU v alues, it was assumed that its predictions were perfect. This was due to the fact that SpeechY OLO does not receive transcriptions as an (a) F1 scor e (b) Actual accuracy Figure 3: SpeechYOLO’ s performances when injecting back- gr ound noise. The y-axis is the measur e and the x-axis is the str ength of the noise added ( α ). input, and because our goal was to asses the localization task alone. The IOU of SpeechYOLO on TIMIT was 0.673, while MF A achieved 0.827. The forced aligner , MF A, performs its alignments using a complete transcription of the words uttered in a speech sig- nal. On the other hand, SpeechY OLO receives no information about the words uttered. Hence, gi ven MF A ’ s extended knowl- edge, we considered its localization ability as an “upper bound” to ours. Therefore, we found that SpeechY OLO’ s IOU value, while lower than MF A ’ s, were sufficiently high. Additionally , an aligner could naturally go wrong if there are incorrect or missing words in its transcription, or alterna- tiv ely if the audio signal contains long silences or untranscripted noises between words (e.g. a laugh or a cough) [22]. It should be noted that given SpeechY OLO’ s lack of knowledge about the transcription, these problems do not affect it. 4.1.2. Robustness to noise W e further demonstrated SpeechY OLO’ s rob ustness by arti- ficially adding background noise to LibriSpeech’ s audio files with a relativ e amplitude α . W e injected 3 types of background noises: a coffee shop, gaussian noise, and speckle. In Figure 3 we show SpeechY OLO’ s F1 score and Actual accuracy mea- sures when increasing the α variable, thus intensifying the in- jected noise. It is apparent that minor amounts of noise do not degrade SpeechYOLO’ s performances. Note that SpeechYOLO was able to deal even with higher α values, although it yielded somewhat reduced results. 4.2. Keyw ord spotting In this part, we evaluate SpeechY OLO on a real-world applica- tion: keyw ord spotting. For ev aluation, we use the F1 metric, and the Maximum T erm W eight V alue (MTWV) metric [23]. MTWV is defined as one minus the weighted sum of the prob- abilities of miss and false alarm. 4.2.1. LibriSpeech Corpus W e compare SpeechYOLO’ s k eyword spotting capabilities with those of the PSC system. In their work, they use a set of key- words that is a subset of the 1000 words used previously for prediction and localization. The chosen keyw ords are in T able 2 of [12], and are e valuated on both of LibriSpeech’ s test sets. A comparison of our results are presented in T able 3. Here too SpeechY OLO’ s results outperformed those of PSC. T able 3: MTWV values for SpeechYOLO and PSC on the ke y- wor d spotting task, e valuated on both of LibriSpeech’ s test sets. SpeechY OLO PSC test clean 0.74 0.72 test other 0.38 0.27 4.2.2. Spontaneous speech corpus W e no w present the results of SpeechYOLO for ke yword spot- ting with spontaneous speech. This is relev ant for mobile ap- plications, where a de vice is acti vated by a v oice command like ‘OK Google” or “Hey Siri”. T o simulate this task, we use a cor- pus taken from a daily TV show Good e vening with Guy Pines 2 . This corpus, which we will call “Hi Guy”, consists of sponta- neous and noisy recordings. In each recording, a celebrity is prompted to utter the phrase Hi Guy! These recordings vary greatly in terms of their environment and the speakers within them are highly div erse. The corpus consists of 880 examples, out of which 445 con- tain the chosen keyword. W e chose the phrase “Hi Guy” to be the keyword that our system searches for . The input length is 3 seconds. The system achie ves an Actual accuracy of 0.624, and an F1 score of 0.755 (precision: 0.748, recall: 0.761). W e find these results to be surprisingly satisfying due to the small size of the dataset and due to the diversity found in the corpus: the audio files are at times extremely noisy , the pronunciation of the speakers vary , and the keyword is sometimes sung instead of being spoken. 5. Conclusions In this work, we introduce the concept of treating parts of audio signals as objects. W e propose the SpeechYOLO algorithm for object detection and localization, and ev aluate its performances for both of these tasks. W e further show its use for the keyw ord spotting task. Future work includes expanding its keyword spot- ting ability for other speech parts. W e would also like to extend SpeechY OLO to detect words it has not been trained on. 6. Acknowledgements W e would like to thank Hi Guy! Guy Pines Communications Ltd. for providing their data. T . S. Fuchs is sponsored by the Malag scholarship for outstanding doctoral students in the high tech professions. 2 https://www.timesofisrael.com/topic/good-evening-with-guy-pines/ 7. References [1] J. Redmon, S. Divv ala, R. Girshick, and A. Farhadi, “Y ou only look once: Unified, real-time object detection, ” in Pr oceedings of the IEEE conference on computer vision and pattern recognition , 2016, pp. 779–788. [2] D. Amodei, S. Ananthanarayanan, R. Anubhai, J. Bai, E. Bat- tenberg, C. Case, J. Casper, B. Catanzaro, Q. Cheng, G. Chen et al. , “Deep speech 2: End-to-end speech recognition in english and mandarin, ” in International confer ence on machine learning , 2016, pp. 173–182. [3] R. Collobert, C. Puhrsch, and G. Synnaeve, “W av2letter: an end- to-end con vnet-based speech recognition system, ” arXiv preprint arXiv:1609.03193 , 2016. [4] G. Chen, C. Parada, and G. Heigold, “Small-footprint keyword spotting using deep neural networks, ” in 2014 IEEE Interna- tional Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2014, pp. 4087–4091. [5] T . Sainath and C. Parada, “Con volutional neural networks for small-footprint keyw ord spotting, ” 2015. [6] S. G. Fletcher, “Time-by-count measurement of diadochokinetic syllable rate, ” Journal of speech and hearing r esearch , vol. 15, no. 4, pp. 763–770, 1972. [7] J. W estbury and J. Dembowski, “ Articulatory kinematics of nor - mal diadochokinetic performance, ” Annual Bulletin of the Re- sear ch Institute of Logopedics and Phoniatrics , v ol. 27, pp. 13– 36, 1993. [8] J. Keshet, S. Shalev-Shw artz, Y . Singer, and D. Chazan, “ A large margin algorithm for speech-to-phoneme and music-to- score alignment, ” IEEE T ransactions on Audio, Speech, and Lan- guage Pr ocessing , vol. 15, no. 8, pp. 2373–2382, 2007. [9] M. McAuliffe, M. Socolof, S. Mihuc, M. W agner, and M. Son- deregger , “Montreal forced aligner: Trainable text-speech align- ment using kaldi. ” in Interspeec h , 2017, pp. 498–502. [10] J. K eshet, D. Grangier , and S. Bengio, “Discriminativ e keyw ord spotting, ” Speech Communication , vol. 51, no. 4, pp. 317–329, 2009. [11] W . Liu, D. Anguelov , D. Erhan, C. Szegedy , S. Reed, C.-Y . Fu, and A. C. Berg, “Ssd: Single shot multibox detector , ” in Eur opean confer ence on computer vision . Springer, 2016, pp. 21–37. [12] D. Palaz, G. Synnaeve, and R. Collobert, “Jointly learning to lo- cate and classify words using con volutional networks. ” in INTER- SPEECH , 2016, pp. 2741–2745. [13] A. Paszke, S. Gross, S. Chintala, G. Chanan, E. Y ang, Z. DeV ito, Z. Lin, A. Desmaison, L. Antiga, and A. Lerer , “ Automatic dif fer- entiation in pytorch, ” in NIPS-W , 2017. [14] K. Simon yan and A. Zisserman, “V ery deep conv olutional networks for large-scale image recognition, ” arXiv pr eprint arXiv:1409.1556 , 2014. [15] J. Redmon and A. Farhadi, “Y olo9000: better , faster , stronger , ” in Proceedings of the IEEE conference on computer vision and pattern r ecognition , 2017, pp. 7263–7271. [16] ——, “Y olov3: An incremental improvement, ” arXiv preprint arXiv:1804.02767 , 2018. [17] V . Panayotov , G. Chen, D. Povey , and S. Khudanpur , “Lib- rispeech: an asr corpus based on public domain audio books, ” in 2015 IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2015, pp. 5206–5210. [18] B. McFee, M. McV icar , S. Balke, V . Lostanlen, C. Thom, C. Raffel, D. Lee, K. Lee, O. Nieto, F . Zalkow , and et al., “librosa/librosa: 0.6.3, ” Feb 2019. [Online]. A vailable: https://zenodo.org/record/2564164 [19] D. P . Kingma and J. Ba, “ Adam: A method for stochastic opti- mization, ” arXiv pr eprint arXiv:1412.6980 , 2014. [20] P . W arden, “Speech commands: A dataset for limited-vocabulary speech recognition, ” arXiv pr eprint arXiv:1804.03209 , 2018. [21] H. Kamper, S. Settle, G. Shakhnaro vich, and K. Liv escu, “V isu- ally grounded learning of keyword prediction from untranscribed speech, ” arXiv pr eprint arXiv:1703.08136 , 2017. [22] E. Chodroff, “Corpus phonetics tutorial, ” arXiv pr eprint arXiv:1811.05553 , 2018. [23] J. G. Fiscus, J. Ajot, J. S. Garofolo, and G. Doddingtion, “Re- sults of the 2006 spoken term detection ev aluation, ” in Proc. sigir , vol. 7, 2007, pp. 51–57.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment