Leveraging human Domain Knowledge to model an empirical Reward function for a Reinforcement Learning problem

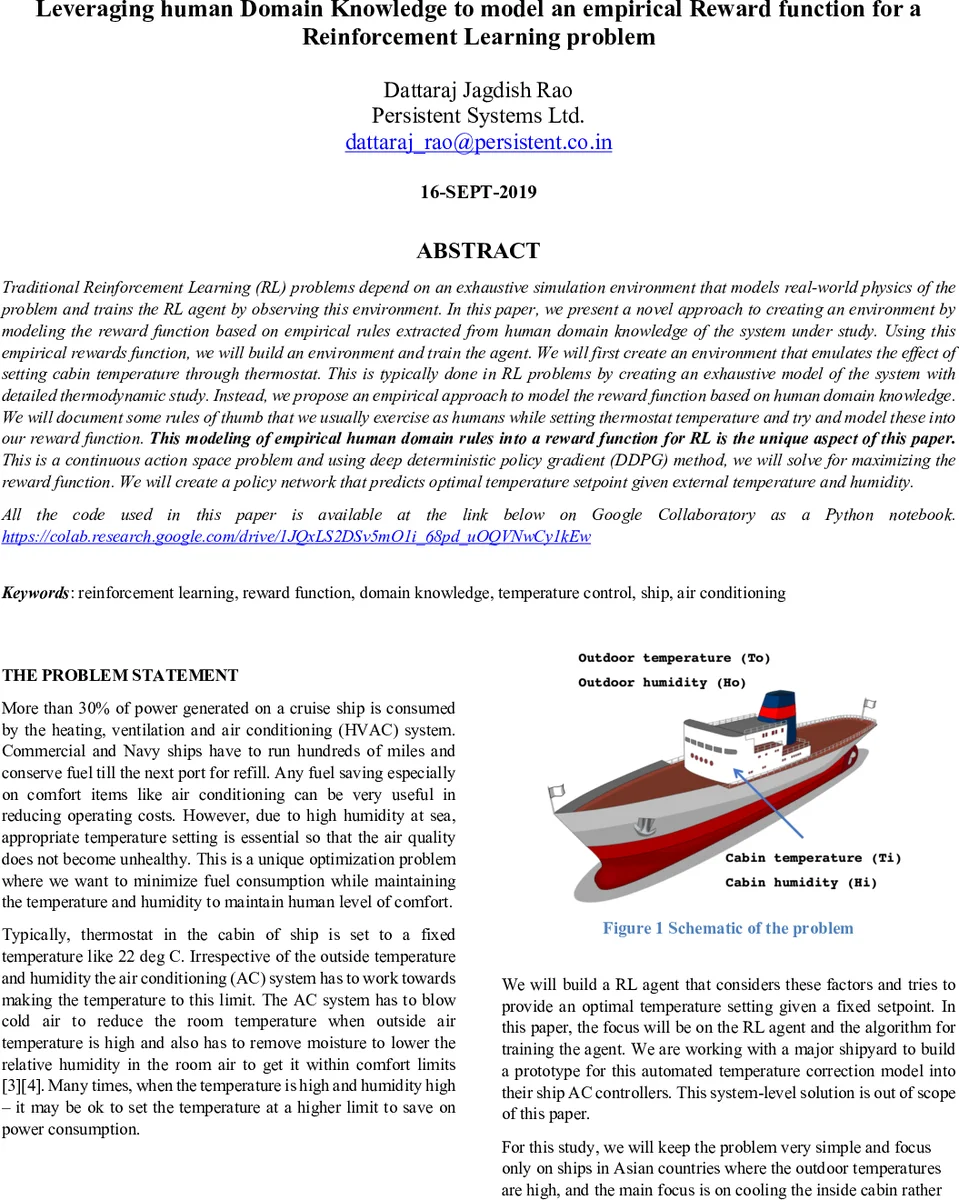

Traditional Reinforcement Learning (RL) problems depend on an exhaustive simulation environment that models the real-world physics of the problem and trains the RL agent on observations of this environment. In this paper, we present a novel approach to creating an environment: we model the reward function from empirical rules extracted from human domain knowledge of the system under study. Using this empirical reward function, we build an environment and train the agent. We first create an environment that emulates the effect of setting the cabin temperature through a thermostat. In RL problems this is typically done by building an exhaustive model of the system from a detailed thermodynamic study. Instead, we propose an empirical approach that bases the reward function on human domain knowledge. We document some rules of thumb that people usually apply when setting the thermostat temperature and encode them in our reward function. This encoding of empirical human domain rules into an RL reward function is the unique aspect of this paper. Because the action space is continuous, we use the deep deterministic policy gradient (DDPG) method to maximize the reward function. We create a policy network that predicts the optimal temperature setpoint given the external temperature and humidity.

💡 Research Summary

The paper tackles the problem of reducing HVAC energy consumption on cruise ships by replacing a detailed thermodynamic simulation with a reward function derived from human expert knowledge. Traditional reinforcement‑learning (RL) approaches for temperature control rely on exhaustive physics‑based models that capture heat transfer, moisture removal, and power consumption. Building and validating such models is costly and time‑consuming, especially for maritime environments where external temperature and humidity vary widely.

To avoid this complexity, the authors propose an empirical environment in which the reward function encodes a set of simple, intuitive rules that HVAC technicians use daily: (1) the thermostat set‑point should stay within a narrow band around a nominal 22 °C (20 °C – 24 °C); (2) larger temperature differences between the cabin and the outside increase AC power draw; (3) higher outdoor humidity raises the de‑humidification load; (4) any violation of the permissible set‑point range incurs a heavy penalty. These rules are translated into a smooth mathematical penalty that can be evaluated instantly for any state‑action pair, eliminating the need for a full physics engine.
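The four rules above could be combined into a single penalty roughly as follows. This is a minimal sketch, not the authors' reward function: the summary gives no functional forms, so the weights (`W_DELTA`, `W_HUMIDITY`, `W_COMFORT`, `W_VIOLATION`), the quadratic shapes, and the name `empirical_reward` are all illustrative assumptions. Only the 22 °C nominal value and the 20 °C to 24 °C band come from the text.

```python
NOMINAL_C = 22.0    # nominal set-point quoted in the summary
BAND_LOW_C = 20.0   # lower edge of the permitted band
BAND_HIGH_C = 24.0  # upper edge of the permitted band

# Hypothetical weights; the summary does not report any values.
W_DELTA = 1.0
W_HUMIDITY = 0.5
W_COMFORT = 2.0
W_VIOLATION = 100.0


def empirical_reward(setpoint_c, outdoor_temp_c, outdoor_humidity_pct):
    """Return a negative penalty; values closer to zero are better."""
    # Rule 2: a larger cabin/outdoor temperature gap means more AC power.
    power_penalty = W_DELTA * abs(outdoor_temp_c - setpoint_c)

    # Rule 3: humid outdoor air adds de-humidification load. Assumed here
    # to scale with relative humidity times the amount of cooling.
    cooling = max(outdoor_temp_c - setpoint_c, 0.0)
    humidity_penalty = W_HUMIDITY * (outdoor_humidity_pct / 100.0) * cooling

    # Rule 1: stay close to the nominal set-point.
    comfort_penalty = W_COMFORT * (setpoint_c - NOMINAL_C) ** 2

    # Rule 4: heavy penalty for leaving the permitted band.
    overshoot = max(BAND_LOW_C - setpoint_c, 0.0) + max(setpoint_c - BAND_HIGH_C, 0.0)
    violation_penalty = W_VIOLATION * overshoot ** 2

    return -(power_penalty + humidity_penalty + comfort_penalty + violation_penalty)
```

With these assumed weights, a hot and humid day (35 °C, 70 % humidity) makes a set-point slightly above 22 °C score better than 22 °C itself, which is the kind of trade-off between comfort and power draw that the rules are meant to express.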

Because the action space (the cabin temperature set‑point) is continuous, the authors select Deep Deterministic Policy Gradient (DDPG), an actor‑critic algorithm well‑suited for such problems. The actor network maps the observed state—outdoor temperature and humidity—to a continuous temperature value, while the critic estimates the Q‑value of that state‑action pair using the empirically defined reward. Experience replay, target networks, and careful reward scaling are employed to ensure stable learning.
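Three DDPG ingredients mentioned above can be illustrated in a few lines: a deterministic actor whose output is squashed into the allowed set-point band, the soft (Polyak) update that keeps a target network trailing the online network, and the one-step target the critic regresses toward. This is a sketch under stated assumptions, not the paper's network: the actor here is a deliberately tiny three-parameter stand-in for a neural network, and the normalization constants, `tau`, and `gamma` are illustrative values not taken from the source.

```python
import math

BAND_LOW_C, BAND_HIGH_C = 20.0, 24.0  # permitted set-point band


def normalize_state(outdoor_temp_c, outdoor_humidity_pct):
    """Scale the raw state to roughly [-1, 1] (assumed ranges: 30-40 C, 60-80 %)."""
    return ((outdoor_temp_c - 35.0) / 5.0, (outdoor_humidity_pct - 70.0) / 10.0)


def actor_setpoint(params, state):
    """Deterministic policy: map a normalized state to a set-point in the band."""
    w_temp, w_hum, bias = params
    raw = math.tanh(w_temp * state[0] + w_hum * state[1] + bias)  # in [-1, 1]
    mid = 0.5 * (BAND_LOW_C + BAND_HIGH_C)
    half_width = 0.5 * (BAND_HIGH_C - BAND_LOW_C)
    return mid + half_width * raw


def soft_update(target_params, online_params, tau=0.005):
    """Polyak averaging: move each target parameter a fraction tau toward the online one."""
    return [(1.0 - tau) * t + tau * o for t, o in zip(target_params, online_params)]


def td_target(reward, next_q, gamma=0.99):
    """One-step bootstrap target for the critic: r + gamma * Q_target(s', actor_target(s'))."""
    return reward + gamma * next_q
```

Squashing the actor output with `tanh` keeps every proposed action inside the band by construction, so the out-of-range penalty in the reward would only matter if exploration noise is added after the squashing.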

Experiments simulate a range of outdoor conditions typical of Asian cruise routes (30 °C–40 °C, 60 %–80 % humidity). For each condition, the performance of a fixed 22 °C thermostat is compared against the DDPG‑learned policy. The primary metric is the integrated temperature difference between outside and inside air, which serves as a proxy for AC power consumption. The learned policy dynamically adjusts the set‑point within the allowed band, reducing the area under the temperature‑difference curve from 867.5 to 808.3, a 6.8 % reduction. Assuming AC power consumption is roughly proportional to this area, the authors round this to an estimated 7 % energy saving. On a large cruise ship that burns about 60,000 gallons of fuel per day (≈ $200,000), a 7 % reduction translates to roughly $14,000 saved per day, or about $5 million annually.
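The arithmetic behind these figures can be checked in a few lines. The sketch below uses only the numbers quoted above; the function names and the trapezoidal-rule helper are illustrative assumptions, since the summary does not say how the area under the curve was integrated.

```python
def trapezoid_area(values, dt=1.0):
    """Area under a sampled curve by the trapezoidal rule (assumed integration method)."""
    return sum(0.5 * (a + b) * dt for a, b in zip(values, values[1:]))


def percent_reduction(baseline, improved):
    """Relative reduction of `improved` with respect to `baseline`, in percent."""
    return 100.0 * (baseline - improved) / baseline


# Areas under the temperature-difference curve quoted in the summary.
reduction_pct = percent_reduction(867.5, 808.3)  # about 6.8 %

# Fuel-cost figures quoted in the summary, using the rounded 7 % saving.
DAILY_FUEL_COST_USD = 200_000
daily_saving_usd = DAILY_FUEL_COST_USD * 0.07  # about $14,000 per day
annual_saving_usd = daily_saving_usd * 365     # about $5.1 million per year

print(f"reduction: {reduction_pct:.1f} %")
print(f"daily saving: ${daily_saving_usd:,.0f}")
print(f"annual saving: ${annual_saving_usd:,.0f}")
```

The dollar estimate depends on the stated proportionality between the integrated temperature difference and AC power, and on applying the 7 % figure to the ship's entire daily fuel cost as the summary does.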

The authors conclude that a modest, rule‑based reward function can achieve savings comparable to those obtained with sophisticated physics‑based models. The approach dramatically lowers development effort and enables rapid prototyping. Future work includes collecting real‑world ship data to validate the simulated gains, integrating additional variables such as wind speed and air‑quality index, and embedding the learned policy into an actual ship‑board controller for on‑line testing. The paper demonstrates that leveraging human domain expertise directly in the reward design is a viable pathway for practical RL applications in energy‑intensive control systems.
