Adversarial Examples Versus Cloud-based Detectors: A Black-box Empirical Study

Deep learning has been broadly leveraged by major cloud providers, such as Google, AWS and Baidu, to offer various computer-vision-related services including image classification, object identification, illegal image detection, etc. While recent works have extensively demonstrated that deep learning classification models are vulnerable to adversarial examples, cloud-based image detection models, which are more complicated than classifiers, may have similar security concerns but have not yet received enough attention. In this paper, we focus on the security issues of real-world cloud-based image detectors. Specifically, (1) based on effective semantic segmentation, we propose four attacks to generate semantics-aware adversarial examples by interacting only with black-box APIs; and (2) we make the first attempt to conduct an extensive empirical study of black-box attacks against real-world cloud-based image detectors. Through comprehensive evaluations on five major cloud platforms (AWS, Azure, Google Cloud, Baidu Cloud, and Alibaba Cloud), we demonstrate that our image-processing-based attacks can reach a success rate of approximately 100%, and the semantic-segmentation-based attacks have a success rate over 90% across different detection services, such as violence, politician, and pornography detection. We also propose several possible defense strategies for these security challenges in real-life situations.

💡 Research Summary

The paper “Adversarial Examples Versus Cloud‑based Detectors: A Black‑box Empirical Study” investigates the security of real‑world cloud vision APIs offered by major providers such as Google, Amazon Web Services (AWS), Baidu, Alibaba, and Microsoft Azure. While prior work has extensively demonstrated that deep‑learning classifiers are vulnerable to adversarial examples, the authors point out that cloud‑based detectors are more complex, typically comprising object detection, semantic segmentation, and sometimes human‑in‑the‑loop verification. Consequently, attacking such services in a black‑box setting (i.e., without any knowledge of model architecture, training data, or parameters) is considerably more challenging.

The authors propose four novel black‑box attack methods that rely solely on API queries and on a publicly available semantic segmentation model (Fully Convolutional Network, FCN). The attacks are:

-

Image Processing (IP) attack – applies conventional image transformations (color shift, blur, rotation, etc.) to the whole image. Because the transformations change the distribution of pixel values, the detector’s confidence scores shift dramatically, leading to near‑perfect bypass rates.

-

Single‑Pixel (SP) attack – modifies a single pixel in the input image. This method demonstrates the theoretical possibility of minimal perturbations but suffers from low success rates when the API returns only coarse confidence scores rather than full probability vectors.

-

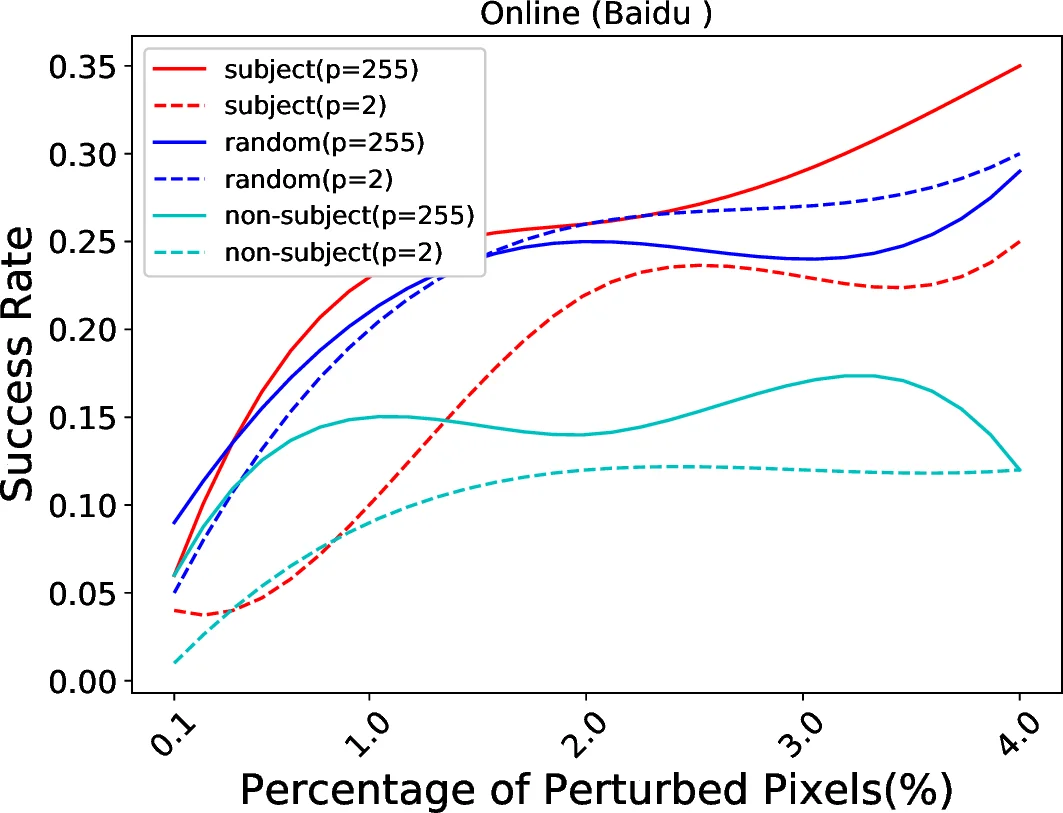

Subject‑based Local Search (SBLS) attack – first runs a semantic segmentation model to isolate a “subject” pixel set (e.g., person, face). Then, a local search algorithm iteratively perturbs the most influential pixels within this set, guided by the detector’s feedback. The method converges within a few thousand queries.

-

Subject‑based Boundary (SBB) attack – also uses the segmented subject set but follows a boundary‑search strategy akin to binary search, gradually reducing the perturbation magnitude until the detector’s top‑1 label changes. This approach achieves high success with even fewer queries than SBLS.
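
The idea behind the subject‑based boundary search can be illustrated with a small sketch. It is not the authors' implementation: the detector is a toy brightness threshold, the "segmentation mask" is written by hand, and the names `blend_on_mask`, `smallest_flipping_alpha`, and `toy_detector` are hypothetical. The sketch also assumes the detector's decision changes monotonically with the perturbation strength, which a real cloud API does not guarantee.

```python
from typing import Callable, List, Optional

Image = List[List[float]]   # grayscale image, pixel values in [0, 1]
Mask = List[List[bool]]     # True where the pixel belongs to the "subject"


def blend_on_mask(image: Image, target: Image, mask: Mask, alpha: float) -> Image:
    """Move only the masked pixels a fraction `alpha` toward `target`."""
    return [
        [(1 - alpha) * px + alpha * tg if m else px
         for px, tg, m in zip(row, t_row, m_row)]
        for row, t_row, m_row in zip(image, target, mask)
    ]


def smallest_flipping_alpha(
    image: Image,
    target: Image,
    mask: Mask,
    is_flagged: Callable[[Image], bool],
    steps: int = 20,
) -> Optional[float]:
    """Binary-search the smallest blend factor that is no longer flagged.

    Each loop iteration costs one detector query. Returns None when even
    the full perturbation (alpha = 1) is still flagged.
    """
    if is_flagged(blend_on_mask(image, target, mask, 1.0)):
        return None
    lo, hi = 0.0, 1.0   # lo: still flagged, hi: known to evade
    for _ in range(steps):
        mid = (lo + hi) / 2
        if is_flagged(blend_on_mask(image, target, mask, mid)):
            lo = mid
        else:
            hi = mid
    return hi


def toy_detector(img: Image) -> bool:
    """Stand-in for a cloud detector: flags images with mean brightness > 0.5."""
    pixels = [p for row in img for p in row]
    return sum(pixels) / len(pixels) > 0.5


# Hand-made example: the top row plays the role of the segmented subject.
image = [[1.0, 1.0], [0.2, 0.2]]
target = [[0.0, 0.0], [0.0, 0.0]]
mask = [[True, True], [False, False]]

alpha = smallest_flipping_alpha(image, target, mask, toy_detector)
adversarial = blend_on_mask(image, target, mask, alpha)
```

Restricting the blend to the mask keeps every pixel outside the subject unchanged, which is the property the subject‑based attacks rely on to limit visible distortion.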

The threat model assumes an attacker who can only query the cloud API and is limited in the number of queries (to keep costs realistic). Success is defined as top‑1 misclassification: the detector’s highest‑confidence label differs from the ground‑truth label.
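
As a rough illustration of this threat model, the sketch below wraps a label‑scoring function in a query counter and checks the top‑1 success criterion. Everything here is an assumption made for illustration: `BudgetedOracle`, `attack_succeeded`, and `fake_api` are hypothetical names, and `fake_api` stands in for a real cloud endpoint by mapping a single number to two label scores.

```python
from typing import Callable, Dict


class QueryBudgetExceeded(RuntimeError):
    """Raised when the attacker has used up the allowed number of queries."""


class BudgetedOracle:
    """Black-box access to a detector, limited to `max_queries` calls."""

    def __init__(self, predict: Callable[[object], Dict[str, float]], max_queries: int):
        self._predict = predict
        self.max_queries = max_queries
        self.queries_used = 0

    def top1(self, image: object) -> str:
        """Return the highest-confidence label, spending one query."""
        if self.queries_used >= self.max_queries:
            raise QueryBudgetExceeded(f"budget of {self.max_queries} queries spent")
        self.queries_used += 1
        scores = self._predict(image)
        return max(scores, key=scores.get)


def attack_succeeded(oracle: BudgetedOracle, adversarial_image: object, true_label: str) -> bool:
    """Top-1 criterion: success when the top label differs from the ground truth."""
    return oracle.top1(adversarial_image) != true_label


def fake_api(image: float) -> Dict[str, float]:
    """Hypothetical stand-in for a cloud API; the 'image' is just a score."""
    return {"violence": image, "safe": 1.0 - image}
```

Counting queries inside the wrapper makes the budget part of the experiment itself, so an attack loop cannot silently exceed it.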

Experiments were conducted on five cloud platforms, targeting three detection categories: violence, political figures, and pornography. Table 2 in the paper reports the following key findings:

- IP attack achieved a success rate of approximately 100 % across all platforms and categories, showing that simple image‑processing tricks can evade detection almost entirely.

- SBLS and SBB achieved success rates ranging from 60 % to 100 % depending on the service. For example, Baidu’s violence detector was bypassed 100 % of the time, while Azure’s pornography detector saw an 80 % success rate with SBB.

- The attacks required only a few thousand API calls, a dramatic reduction compared with prior black‑box attacks that often need hundreds of thousands or millions of queries.

- Visual quality metrics (L₀ distance, PSNR, SSIM) indicate that the adversarial images remain visually similar to the originals, making the attacks stealthy to human observers.
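
Two of the visual quality metrics named above are simple enough to sketch directly. The L₀ distance counts the pixels that differ, and PSNR is 10·log₁₀(MAX² / MSE), where MSE is the mean squared pixel difference and MAX is the largest possible pixel value. The function names and the 8‑bit grayscale assumption are illustrative only; SSIM is omitted because it needs windowed local statistics.

```python
import math
from typing import List

Image = List[List[float]]  # grayscale image as rows of pixel values


def l0_distance(a: Image, b: Image) -> int:
    """Number of pixel positions where the two images differ."""
    return sum(
        1
        for row_a, row_b in zip(a, b)
        for pa, pb in zip(row_a, row_b)
        if pa != pb
    )


def psnr(a: Image, b: Image, max_value: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB; infinite for identical images."""
    n_pixels = sum(len(row) for row in a)
    mse = sum(
        (pa - pb) ** 2
        for row_a, row_b in zip(a, b)
        for pa, pb in zip(row_a, row_b)
    ) / n_pixels
    if mse == 0:
        return math.inf
    return 10 * math.log10(max_value ** 2 / mse)


# Example: one pixel of a 2x2 image is changed by 10 intensity levels.
original = [[100.0, 100.0], [100.0, 100.0]]
perturbed = [[100.0, 100.0], [100.0, 110.0]]
changed_pixels = l0_distance(original, perturbed)
quality_db = psnr(original, perturbed)
```

A higher PSNR and a lower L₀ distance both indicate that the adversarial image stays closer to the original.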

The authors also discuss potential defenses. They suggest input‑level preprocessing (randomized resizing, noise addition), ensemble of heterogeneous detectors, rate‑limiting and anomaly detection on query patterns, and randomizing the output of the semantic segmentation stage. However, they acknowledge that many of these countermeasures could increase latency or cost, which may be undesirable for commercial services.
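
Of the defenses listed, rate limiting is the easiest to sketch. The example below is one possible form, a per‑client sliding‑window limiter, and is not taken from the paper; `SlidingWindowLimiter`, its parameters, and the client identifiers are hypothetical. Timestamps are passed in explicitly so the behavior is easy to check.

```python
from collections import deque
from typing import Deque, Dict


class SlidingWindowLimiter:
    """Allow at most `max_queries` per client within `window_seconds`."""

    def __init__(self, max_queries: int, window_seconds: float):
        self.max_queries = max_queries
        self.window_seconds = window_seconds
        self._history: Dict[str, Deque[float]] = {}

    def allow(self, client_id: str, now: float) -> bool:
        """Record and allow the query, or reject it if the client is over the limit."""
        timestamps = self._history.setdefault(client_id, deque())
        # Drop timestamps that have fallen out of the window.
        while timestamps and now - timestamps[0] >= self.window_seconds:
            timestamps.popleft()
        if len(timestamps) >= self.max_queries:
            return False
        timestamps.append(now)
        return True
```

Because the query‑based attacks described above need thousands of calls per image, even a generous per‑client limit raises their cost, though an attacker could spread queries across several accounts.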

Finally, the paper reports that the authors responsibly disclosed the vulnerabilities to the affected cloud providers, receiving positive acknowledgments and indicating that the findings could guide future hardening efforts.

In summary, this work provides the first comprehensive empirical evaluation of black‑box adversarial attacks against cloud‑based image detectors. By leveraging semantic segmentation to focus perturbations on semantically important regions, the authors achieve high bypass rates with limited queries, exposing a serious and practical security gap in widely deployed vision APIs. The study not only highlights the need for more robust detection pipelines but also offers a concrete framework for future research on both attacks and defenses in the cloud‑AI ecosystem.
