A Reinforcement Learning Framework for Sequencing Multi-Robot Behaviors

Given a list of behaviors and associated parameterized controllers for solving different individual tasks, we study the problem of selecting an optimal sequence of coordinated behaviors in multi-robot systems for completing a given mission, which cou…

Authors: Pietro Pierpaoli, Thinh T. Doan, Justin Romberg

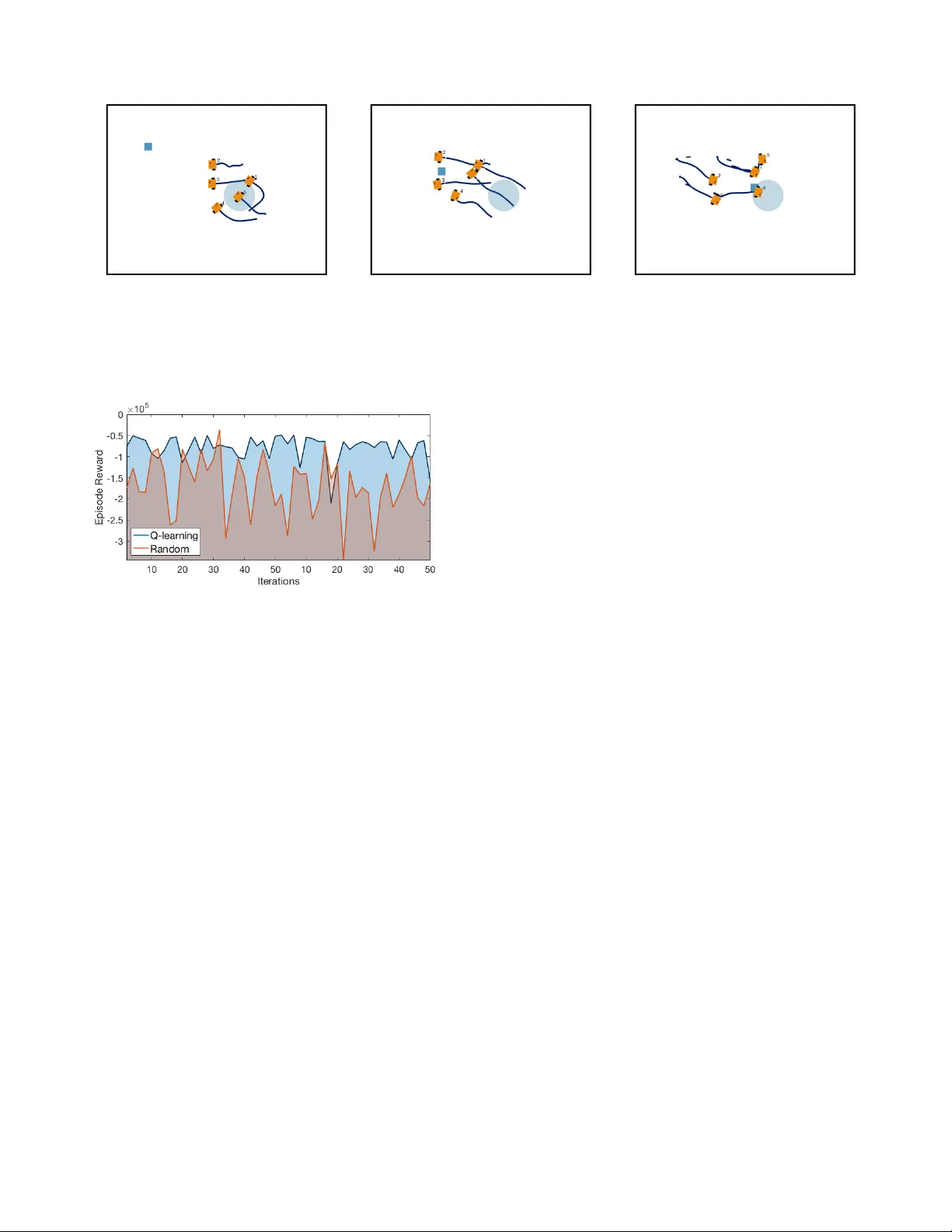

A Reinforcement Learning Frame work for Sequencing Multi-Robot Beha viors* Pietro Pierpaoli, Thinh T . Doan, Justin Romber g, and Magnus Egerstedt † Abstract —Given a list of behaviors and associated parameter- ized controllers for solving differ ent individual tasks, we study the problem of selecting an optimal sequence of coordinated behaviors in multi-r obot systems for completing a given mission, which could not be handled by any single behavior . In addition, we are interested in the case where partial information of the underlying mission is unknown, theref ore, the robots must coop- eratively learn this inf ormation thr ough their course of actions. Such problem can be formulated as an optimal decision problem in multi-robot systems, ho wever , it is in general intractable due to modeling imperfections and the curse of dimensionality of the decision variables. T o circumv ent these issues, we first consider an alter nate f ormulation of the original pr oblem through introducing a sequence of beha viors’ switching times. Our main contribution is then to propose a novel reinf orcement learning based method, that combines Q-learning and online gradient descent, f or solving this r eformulated problem. In particular , the optimal sequence of the robots’ behaviors is f ound by using Q-learning while the optimal parameters of the associated controllers ar e obtained through an online gradient descent method. Finally , to illustrate the effectiveness of our pr oposed method we implement it on a team of differential-drive robots for solving two different missions, namely , con voy pr otection and object manipulation. I . I N T RO D U C T I O N In multi-robot systems, there has been a great success in designing distrib uted controllers to address individual tasks through coordination [1]–[4]. Howe ver , many complex tasks in real-world applications require the robots being capable of performing beyond what has been achieved with task- oriented controllers. In addition, it is often that the information (or model) of the underlying tasks required by the e xisting controllers is partially known or imperfect, making solving these tasks nontrivial. For example, consider a team of robots tasked with moving a box between two points without pre vious knowledge of the box’ s physical properties (e.g. mass distri- bution, friction with the ground). Robots should first localize the box by exploring the space. Then, depending on their capabilities and available behaviors, the box should be pushed or lifted towards its destination. Through the paradigm of behavioral robotics [5] it is possible to address this complexity by sequentially combining multi-robot task-oriented controllers (or behaviors ); see for example [6], [7]. In addition, to achieve a long-term autonomy *This w ork was supported by the Army Research Lab under Grant DCIST CRA W911NF-17-2-0181. † The authors are with the School of Electrical and Com- puter Engineering, Georgia Institute of T echnology , Atlanta, GA 30332, USA. { pietro.pierpaoli,thinhdoan, magnus } @gatech.edu, jrom@ece.gatech.edu and an interaction with imperfect model the robots have to choose actions based on the real-time feedback observed from the systems. The idea of learning by interacting with the system (or en vironment) is the main theme in reinforcement learning [8], [9]. Our focus in this paper is to study the problem of selecting a sequence of coordinated behaviors and the associated parame- terized controllers in multi-robot systems for achieving a giv en task, which could not be handled by any individual behavior . In addition, we consider the applications where a partial information of the underlying task is unknown, therefore, the robots must cooperatively learn this information through their course of actions. Such problem can be formulated as an optimal decision problem in multi-robot systems. Howe ver , it is in general intractable due to the imperfection of the problem model and the curse of dimensionality of the de- cision v ariables. T o address these issues, we first formulate a behaviors switching condition based on the dynamic of the behavior itself. Our main contribution is then to propose a nov el reinforcement learning based method, a combination of Q-learning and online gradient descent to find the optimal sequence of the robots’ behaviors and the optimal parameters of the associated controllers, respectiv ely . Finally , to illustrate the effecti veness of our proposed method we implement it on a team of differential-dri ve robots for solving tw o different tasks, namely , conv oy protection and simplified object manipulation. Our paper is organized as follows. W e start by describing a class of weighted-consensus coordinated behaviors in Sec- tion II. The behavior selection problem is then formulated as in Section III, while our proposed method for solving this problem is presented in Section IV. W e conclude this paper by illustrating two applications of the proposed technique to a multi-robot systems in Section V. A. Related W ork A common approach in the design of multi-robot controllers for solving specific tasks is through the use of local control rules, whose collectiv e implementation results in the desired behavior . W eighted consensus protocols can be employed to this end. See for example [2] and references therein. The advantage of stating the multi-agent control problem in terms of task-specific controllers resides in the fact that prov able guarantees exist on their conv ergence [10]. The fundamental idea behind behavioral robotics [5] is to compose task-specific primiti ves into sequential or hierarchical controllers. This idea has been largely inv estigated for sin- gle robot systems and less so for multi-robot teams, where constrains on the flow of information prev ents direct appli- cation of the single robots’ algorithms. Proposed techniques for the composition of primitiv e controllers include formal methods [11], path planning [6], Finite State Machines [12], Petri Nets [13], and Behavior T rees [14]. Once an appropriate sequence of controllers is chosen, transitions between indi- vidual controllers must be feasible. Solutions to this problem include Motion Description Language [15], Graph Process Specifications [16] and Control Barrier Functions [7], [17]. Finally , reinforcement learning of fers a general paradigm for learning optimal policies in stochastic control problems based on simulation [8], [9]. In this context, an agent seeks to find an optimal policy through interacting with the unknown en vironment with the goal of optimizing its long-term future rew ard. Motiv ated by broad applications of the multi-agent systems, for example, mobile sensor networks [18], [19] and power networks [20], there is a growing interest in studying multi-agent reinforcement learning; see for example [21]–[23] and the references therein. Our goal in this paper is to consider another interesting application of multi-agent reinforcement learning in solving the optimal behaviors selection problem ov er multi-robot systems. I I . C O O R D I N A T E D C O N T R O L O F M U LT I - R O B OT S Y S T E M S In this section, we provide some preliminaries for the main problem formulation. W e start with some general definitions in multi-robot systems and then formally define the notion of behaviors used throughout the paper . A. Multi-Robot Systems Consider a team of N robots operating in a 2 -dimensional domain, where we denote by x i ∈ R 2 the state of robot i , for i = 1 , . . . , N . In addition, the dynamic of the robots is gov erned by a single integrator giv en as ˙ x i = u i , (1) where u i is the controller at robot i , which is a function of x i and the states of the robots interacting with robot i . The pattern of interactions between the robots is presented by an undirected graph G = ( V , E ) , where V = { 1 , . . . , N } and E = ( V × V ) are the index set and the set of pairwise interactions between the robots, respectively . Moreover , let N i = { j ∈ V | ( i, j ) ∈ E } be the neighboring set of robot i . Each controller u i at robot i is composed of two com- ponents, one only depends on its own state while the other represents the interaction with its neighbors. In particular , the controller u i : R 2+2 |N i | 7→ R 2 in (1) is giv en as u i = − X j ∈ N i ( w ( x i , x j , θ )( x i − x j ) ) + v ( x i , φ ) , (2) where w : R 2 × R 2 × Θ → R , often referred to as an edge weight function [24], depends on the states of robot i and its neighbors, and the parameter θ ∈ Θ . In addition, v : R 2 × Φ → R 2 is the state-feedback term at the robot i , which depends only on its own state x i and a parameter φ ∈ Φ representing robot i ’ s preference. Here, Θ and Φ are the feasible sets of the parameters θ and φ , respecti vely . A concrete example of such controller together with the associated parameters will be giv en in the next section. Finally , as studied in [2], one can define an appropriate energy function E : R 2 N 7→ R ≥ 0 with respect to the graph G , where the controller in (2) can be described as the negati vity of the local gradient of E , i.e., u i = − ∂ E ∂ x i · (3) This observation will be useful for our later dev elopment. B. Coor dinated Behaviors in Multi-Robot Systems Giv en a collection of behaviors, our goal is to optimally select a sequence of them in order to complete a gi ven mission. Definition 2.1: A coordinated behavior B is defined by 5 − tuple B = ( w , Θ , v , Φ , G ) (4) where Θ and Φ are feasible sets for the parameters of controller (2). Moreov er , G is the graph representing the interaction structure between the robots. In addition, gi ven M distinct beha viors we compactly represent them as a library of behaviors L L b = {B 1 , . . . , B M } , (5) where each behavior B k is defined as in (4), i.e., B k = ( w k , Θ k , v k , Φ k , G k ) k = 1 , . . . , M . (6) Here, note that the feasible sets Θ and Φ , and the graph G are different for different behaviors, that is, in switching between different behaviors the communication graphs of the robots may be time-varying. Moreover , based on Definition 2.1 it is important to note the difference between behavior and contr oller . The controller (2) e xecuted by the robots for a gi ven behavior is obtained by selecting a proper pair of parameters ( θ , φ ) from the sets Θ and Φ . Indeed, consider a behavior B and let x t = [ x T 1 ,t , . . . , x T N ,t ] T ∈ R 2 N be the ensemble states of the robots at time t . In addition, let u B ( x t , θ , φ ) , where u B = [ u T 1 , . . . , u T N ] T ∈ R 2 N , be the controllers of the robots defined in (2) for a feasible pair of parameters ( θ, φ ) . The ensemble dynamic of the robots associated B is then giv en as ˙ x t = u B ( x t , θ , φ ) . (7) T o further illustrate the dif ference between a behavior and its associated controller , we consider the following example about a formation control problem. Example 2.2: Consider the formation control problem over a network of 4 robots moving in a plane in Fig. 1, where the desired inter-robot distances are giv en by a vector θ = { θ 1 , . . . , θ 5 } , with θ i ∈ R + . Here, agent 1 acts as a leader and mov es toward the goal φ ∈ R 2 (red dot). Note that the desire formation also implies the interaction structure between the robots (graph G in our setting). The goal of the robots is to maintain their desired formation while moving to the red dot. T o achiev e this goal, one possible choice of the edge-weight function of the controller (2) is w = k x i − x j k − θ k , ∀ e k = ( i, j ) ∈ E , (8) φ θ 1 θ 2 θ 3 θ 4 θ 5 1 3 4 2 1 2 3 4 Fig. 1: Example formation for a team of 4 robots and one leader with goal φ (left). Interaction graph G needed for the correct formation assembling (right). while the state-feedback term v = 0 except for the one at the leader given as v 1 = φ − x 1 . (9) In this example, Φ is simply a subset of R 2 while Θ is a set of geometrically feasible distances. Thus, given the for- mation control behavior B = ( w , Θ , v , Φ , G ) , the controllers u B ( x, θ , φ ) of the robots can be easily derived from (2). W e conclude this section with some comments on the formation control problem described abov e, which are the motiv ation for our study in the next section. In Example 2.2, one can choose a single behavior B i ∈ L b together with a pair of parameters ( θ, φ ) for solving the formation control problem [24]. This controller , howe ver , is designed under the assumption that the en vironment is static and known, i.e., the target φ in Example 2.2 is fixed and kno wn by the robots. Such an assumption is less practical since in many applications, the robots are often operating in dynamically ev olving and potentially unknown en vironments; for example, φ is time- varying and unknown. On the other hand, while the formation control problem can be solved by using a single behavior , many practical complex tasks require the robots to implement more than one behavior [6], [7]. Our interest, therefore, is to consider the problem of selecting a sequence of the behaviors in L b for solving a giv en task while assuming that the state of the en vironment is unknown and possibly time-varying. In our setting, although the dynamic of the environment is unkno wn, we will assume that the robots can observe the state of the en vironment at any time through their course of action. W e will refer to this setting as a behavior selection problem, which is formally presented in the next section. Finally , depending on the application, the en vironment can represent different quantities, (e.g. the target (red dot) in Example 2.2). I I I . O P T I M A L B E H A V I O R S E L E C T I O N P R O B L E M S In this section, we present the problem of optimal behaviors selection over a network of robots, motiv ated by the reinforce- ment learning literature. In particular , consider a team of N robots cooperativ ely operating in an unknown en vironment and their goal is to complete a giv en mission in a time interval [0 , t f ] . Let x t and e t be the states of robots and en vironment at time t ∈ [0 , t f ] , respectively . At any time t , the robots first observe the state of the en vironment e t , select a behavior B t chosen from the library L b , compute the pair of parameters ( θ t , φ t ) associated with B t , and implement the resulting controller u B ( x t , θ t , φ t ) . The environment then mov es to a new state e 0 t and the robots get a re ward returned by the environment based on the selected behavior and tuning parameters. W e assume that the rewards encode the giv en mission, which is motiv ated by the usual consideration in the literature of reinforcement learning [9]. That is, solving the task is equiv alent to maximizing the total accumulated rew ards received by the robots. In Section V, we provide a few examples of how to design rew ard functions for particular applications. It is worth to point out that designing such a rew ard function is itself challenging and requires a good knowledge on the underlying problem [9]. One can try to solve the optimal behavior selection problem by using the existing techniques in reinforcement learning. Howe ver , this problem is in general intractable since the dimension of state space is infinite, i.e., x t and e t are con- tinuous variables. Moreov er, due to the physical constraint of the robots, it is infeasible for the robots to switch to a new behavior at every time instant. That is, the robots require a finite amount of time to implement the controller of the selected behavior . Thus, to circumvent these issues we next consider an alternate version of this problem. Inspired by the work in [25] we introduce an interrupt condition ξ : E 7→ { 0 , 1 } , where E is giv en in (3), defined as ξ ( E t ) = 1 if E t ≤ ε (10) and ξ ( E t ) = 0 otherwise. Here ε is a small positiv e threshold. In other words, ξ ( E t ) represents a binary trigger with value 1 whenev er the network energy for a certain behavior at time t is smaller than a threshold, that is, the current controller is nearly complete. Thus, it is reasonable to enforce that the robots should not switch to a new behavior at time t unless ξ ( E t ) = 1 for a gi ven . Based on this observation, given a desired threshold , let τ i be the switching time associated with the current behavior B defined as τ i ( B , , t 0 ) = min { t ≥ t 0 | E t ≤ ε } . (11) Consequently , the mission time interval [0 , t f ] is partitioned into K switching times τ 0 , . . . , τ K satisfying 0 = τ 0 ≤ τ 1 ≤ . . . ≤ τ K = t f , (12) where each switching time is define as in (11). Note that the number of switching time K depends on the accuracy and it is not known in advance. In this paper , we do not consider the problem of optimizing the number of switching times giv en a threshold . At each switching time τ i , the robots choose one behavior B i ∈ L b based on their current states x τ i and the environment state e τ i . Next, they find a pair of parameters ( θ i , φ i ) and implement the underlying controller u B i ( x t , θ i , φ i ) for t ∈ [ τ i , τ i +1 ) . Based on the selected behaviors and parameters, the robots receiv e an instantaneous reward R ( x τ i , e τ i , B i , θ i , φ i ) returned by the environment as an estimate for their selection. Finally , we assume that the states of the environment at the switching times belong to a finite set S , i.e., e τ i ∈ S for all i . Let J be the accumulati ve reward receiv ed by the robots at the switching times in [0 , t f ] J = K X i =0 R ( x τ i , e τ i , B i , θ τ i , φ τ i ) . (13) As mentioned abov e, the optimal behavior selection is equi v- alent to the problem of seeking a sequence of behaviors {B i } from L b at { τ i } and the associated parameters { ( θ i , φ i ) } ∈ { Θ i × Φ i } so that the accumulativ e re ward J is maximized. This optimization problem can be formulated as follows maximize B i ,θ i ,φ i K X i =0 R ( x τ i , e τ i , B i , θ i , φ i ) such that B i ∈ L b , ( θ i , φ i ) ∈ Θ i × Φ i e t +1 = f e ( x t , e t ) ˙ x = u B i ( x t , θ i , φ i ) , t ∈ [ τ i , τ i +1 ) . (14) where f e : R 2 N × R 2 7→ R 2 is the unkno wn dynamic of the en vironment. Since f e is unknown, one cannot use dynamic programming to solve this problem. Thus, in the next section we propose a novel method for solving (14), which is a combination of Q -learning and online gradient descent. Moreov er , by introducing the switching times τ i computing the optimal sequence of behaviors using Q-learning is now tractable. I V . Q - L E A R N I N G A P P R OAC H F O R B E H A V I O R S E L E C T I O N In this section we propose a novel reinforcement learn- ing based method for solving problem (14). Our method is composed of Q -learning and online gradient descent methods to find an optimal sequence of beha viors {B ∗ i } and the associated parameters { ( θ ∗ i , φ ∗ i ) } , respectiv ely . In particular , we maintain a Q-table, whose ( i, j ) entry is the state-behavior value estimating the performance of behavior B j ∈ L b giv en the en vironment state j ∈ S . Thus, one can view the Q - table as a matrix Q ∈ R S × M , where S is the size of S and M is the number of behaviors in L b . The entries of Q- table are updated by using Q-learning while the controller parameters are updated by using the continuous-time online gradient method. These updates are formally presented in Algorithm 1. In our algorithm, at each switching time τ i the robots first observes the environment state e τ i = s ∈ S , and then select a behavior B m with respect to the maximum entry in the s -th ro w of the Q -table with tie broken arbitrarily . Next, the robots implement the distributed controller f B m and use online gradient descent to find the best parameters ( θ m , φ m ) associated with B m . Here the function C t represents the cost of implementing the controller at time t , which can be chosen priorily by the robots (e.g., E in (3)) or randomly returned by the environment. Based on the selected behavior and the associated controller , the robots receive an instantaneous rew ard r i while the environment moves to a new state s 0 ∈ S . Finally , the robots updates the ( s, m ) entry of the Q -table using the update of Q -learning method. It is worth to note that the Q-learning step is done in a centralized manner (either by the robots or a supervisory coordinator) since it depends on the state of the en vironment. Similarly , depending on the structure of the cost functions C t the online gradient descent updates can be implemented either distributedly or in a centralized manner . Finally , we note that the decentralized nature proper of each controller (2) is preserved. x 0 ∼ U ( R 2 N ) ; L b = {B 1 , . . . , B M } ; Q ( s, m ) ∼ U ( R ) ∀ m = 1 , . . . , M s ∈ S ; for m ∈ 1 , . . . , M do θ m ∼ U (Θ m ) , φ m ∼ U (Φ m ) ; end s ∈ S ← Observe e τ i ; for i = 0 to K do Select m = arg max ` =1 ,...,M Q ( s, ` ) ; ( ¯ θ t , ¯ φ t ) ← ( θ m , φ m ) ; while E ≥ ε do ˙ x = f B m ( x t , ¯ θ t , ¯ φ t ) ; ˙ ¯ θ = −∇ θ C t ( x t , e τ i , ¯ θ t , ¯ φ t ) ; ˙ ¯ φ = −∇ φ C t ( x t , e τ i , ¯ θ t , ¯ φ t ) ; end ( θ m , φ m ) ← ( ¯ θ t , ¯ φ t ) ; r i ← from en vironment ; s 0 ∈ S ← Observe e τ i +1 ; Q ( s, m ) = Q ( s, m ) + r i + max j Q ( s 0 , j ) − Q ( s, m ) ; s ← s 0 end Algorithm 1: Q-learning algorithm for optimal beha vior selection and tuning. The notation ∼ U ( O ) is used to represent variables uniformly selected from a set O V . A P P L I C A T I O N S In this section we describe two implementations of our be- haviors selection technique. For both examples, we considered a team of 5 robots and a library of 5 behaviors given as 1 1) Static formation: u i = X j ∈N i ( k x i − x j k 2 − ( θ δ ij ) 2 )( x j − x i ) , where δ ij is the desired separation between robots i and j , while θ ∈ R is a shape scaling factor . 2) Formation with leader: u i = X j ∈N i ( k x i − x j k 2 − θ 2 ij )( x j − x i ) u l = X j ∈N i ( k x i − x j k 2 − θ 2 ij )( x i − x i ) + ( φ − x i ) , where δ ij and θ are defined as in the previous controller, while φ ∈ Φ ⊆ R 2 is the leader’ s goal. Subscript ` leaders’ controllers. 1 These functions represent possible behaviors of the robots. In particular, for the consistency with Example 2.2, all individual agents terms are described by proportional controllers with unitary gains. Unlike Example 2.2, here we let θ be a scaling factor, which are assumed to be fixed by the formation. 3) Cyclic pursuit: u i = X j ∈N i R ( θ ) ( x j − x i ) + ( φ − x i ) , where θ = 2 r sin π N , r is the radius of the cycle formed by the robots, and R ( θ ) ∈ S O (2) . The point φ ∈ Φ ⊆ R 2 is the center of the cycle. 4) Leader-follo wer: u i = X j ∈N i ( k x i − x j k 2 − θ 2 )( x j − x i ) u l = X j ∈N i ( k x i − x j k 2 − θ 2 )( x j − x i ) + ( φ − x i ) , where θ is the separation between the agents and φ ∈ Φ ⊆ R 2 is the leader’ s goal. 5) T riangulation coverage: u i = X j ∈N i ( k x i − x j k 2 − θ 2 )( x j − x i ) , (15) where θ is the separation between the agents in the triangulation. For all the behaviors considered above, we assume the following parameter spaces Θ = [0 . 05 , 1 . 1] and Φ = [ − 1 , 1] . In both examples, we construct the state-action value function by implementing our propose method, Algorithm 1, on the Robotarium simulator [26], which captures a number of the features of the actual robots, such as the full kinematic model of the vehicles and collision av oidance algorithm. A. Con voy Pr otection First, we consider a con voy pr otection problem, where a team of robots must surround a moving target and maintain a single robot-to-target distance equal to a constant ∆ at all times. Although this problem can be solved by executing a single behavior (e.g. cyclic-pursuit), it allows us to compare the performance of our framework against an ideal solution. The position of the target is denoted with z t and it’ s described by the following dynamics ˙ z = v z + σ, (16) where v z is a constant velocity and σ is a zero-mean Gaussian disturbance. In this case, the state of the en vironment at time step t is considered to be the separation between robots’ centroid ¯ x = 1 N P N i =1 x i and the target e t = k ¯ x t − z t k , (17) where k · k denotes the Euclidian norm. The reward provided by the environment at time t is r t = −k e t − ¯ x t k 2 − 1 N N X 1 ( k x i,t − e t k − ∆) 2 , (18) where the first term represents the proximity between centroid and target, while the second term weights the individual robot- to-target distance. Training is executed over 1000 episodes, with an exponentially decaying ε -greedy policy . The plot in Fig. 2 shows the collected rew ards over a trial of 50 episodes. The results from the trained model (blue) are compared against an ad-hoc ideal solution (red), where C Y C L I C - P U R S U I T behavior is recursively executed with pa- rameters θ and φ being selected so that the resulting cycle has radius ∆ and is centered on the target’ s position. Finally , we show the re wards collected when the behaviors and parameters are selected uniformly at random (green). The variance of the results from random selection could be a direct indicator for the complexity of the problem. Fig. 2: Comparison between accrued rew ard for 50 different episodes of the con vo y protection example. Rewards from trained model (blue) are compared with ad-hoc solution (red) and random behaviors/parameters selection (green). B. Simplified Object Manipulation In the second example in Fig. 3, we consider a team of robots tasked with moving an object from two points. Let e t represent the position of the object at time t . In order not to complicate the focus of the experiment, we assume a simplified manipulation dynamics. In particular , the box maintains its position if the closest robot is further than a certain threshold (i.e., object is not detected by the robots), otherwise it mov es as ¯ x . This manipulation dynamic guarantees that the task cannot be solved with fix ed behavior and parameters since it is unknown to the robots. In this context, the robots get the following rew ard r t = − ( κ + k e t − ¯ e k ) , (19) where κ is a constant used to weight the running time until completion of an episode and k e t − e g k is the distance between the box and its final destination ¯ e . V I . C O N C L U S I O N In this paper , we presented a reinforcement learning based approach for solving the optimal behavior selection problem, where the robots interact with an unkno wn en vironment. Giv en a finite library of behaviors, our technique exploits rewards collected through interaction with the en vironment to solve a giv en task that could not be solved by any single behavior . W e also provide some numerical experiments on a network of robots to illustrate the effecti veness of our method. Future directions of this work include optimal design of behavior switching times and decentralized implementation of the Q- learning update. Fig. 3: Screen shoot from Robotarium simulator during e xecution of the simplified object manipulation scenario at three dif ferent times. Executed behaviors are L E A D E R - F O L L O W E R (left), F O R M AT I O N W I T H L E A D E R (center), and C Y C L I C - P U R S U I T (right). Blue square represents the object which is collectiv ely transported towards the origin (blue circle). Fig. 4: Comparison between accrued reward over 50 episodes of the object manipulation example. Re wards from trained model (blue) are compared with random behaviors/parameters selection (red). R E F E R E N C E S [1] K.-K. Oh, M.-C. Park, and H.-S. Ahn, “ A survey of multi-agent formation control, ” Automatica , vol. 53, pp. 424–440, 2015. [2] J. Cort ´ es and M. Egerstedt, “Coordinated control of multi-robot sys- tems: A survey , ” SICE Journal of Contr ol, Measurement, and System Inte gration , vol. 10, no. 6, pp. 495–503, 2017. [3] G. Antonelli, “Interconnected dynamic systems: An overvie w on dis- tributed control, ” IEEE Control Systems Magazine , vol. 33, no. 1, 2013. [4] M. Schwager , D. Rus, and J.-J. Slotine, “Unifying geometric, proba- bilistic, and potential field approaches to multi-robot deployment, ” The International Journal of Robotics Research , v ol. 30, no. 3, 2011. [5] R. C. Arkin et al. , Behavior-based robotics . MIT press, 1998. [6] S. Nagavalli, N. Chakraborty , and K. Sycara, “ Automated sequencing of sw arm behaviors for supervisory control of robotic swarms, ” in 2017 IEEE International Conference on Robotics and Automation (ICRA) . IEEE, 2017, pp. 2674–2681. [7] P . Pierpaoli, A. Li, M. Sriniv asan, X. Cai, S. Coogan, and M. Egerst- edt, “ A sequential composition framework for coordinating multi-robot behaviors, ” arXiv preprint , 2019. [8] D. P . Bertsekas, Reinfor cement Learning and Optimal Control , 2019. [9] R. S. Sutton and A. G. Barto, Reinfor cement Learning: An Introduction , 2nd ed. MIT Press, 2018. [10] D. Zelazo, M. Mesbahi, and M.-A. Belabbas, “Graph theory in systems and controls, ” in 2018 IEEE Confer ence on Decision and Contr ol (CDC) . IEEE, 2018, pp. 6168–6179. [11] H. Kress-Gazit, M. Lahijanian, and V . Raman, “Synthesis for robots: Guarantees and feedback for robot behavior , ” Annual Review of Control, Robotics, and Autonomous Systems , vol. 1, pp. 211–236, 2018. [12] A. Marino, L. Parker , G. Antonelli, and F . Caccavale, “Behavioral con- trol for multi-robot perimeter patrol: A finite state automata approach, ” in 2009 IEEE International Conference on Robotics and Automation . IEEE, 2009, pp. 831–836. [13] E. Klavins and D. E. Koditschek, “ A formalism for the composition of concurrent robot behaviors, ” in Pr oceedings 2000 ICRA. Millennium Confer ence. IEEE International Conference on Robotics and Automa- tion. Symposia Proceedings (Cat. No. 00CH37065) , vol. 4. IEEE, 2000, pp. 3395–3402. [14] M. Colledanchise and P . ¨ Ogren, “How behavior trees modularize ro- bustness and safety in hybrid systems, ” in 2014 IEEE/RSJ International Confer ence on Intelligent Robots and Systems . IEEE, 2014, pp. 1482– 1488. [15] P . Martin and M. B. Egerstedt, “Hybrid systems tools for compiling con- trollers for cyber-physical systems, ” Discr ete Event Dynamic Systems , vol. 22, no. 1, pp. 101–119, 2012. [16] P . T wu, P . Martin, and M. Egerstedt, “Graph process specifications for hybrid networked systems, ” IF AC Proceedings V olumes , vol. 43, no. 12, pp. 65–70, 2010. [17] A. Li, L. W ang, P . Pierpaoli, and M. Egerstedt, “Formally correct composition of coordinated behaviors using control barrier certificates, ” in 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) . IEEE, 2018, pp. 3723–3729. [18] J. Cortes, S. Martinez, T . Karatas, and F . Bullo, “Coverage control for mobile sensing networks, ” IEEE T ransactions on Robotics and Automation , vol. 20, no. 2, pp. 243–255, 2004. [19] P . Ogren, E. Fiorelli, and N. E. Leonard, “Cooperati ve control of mobile sensor networks:adaptiv e gradient climbing in a distributed en vironment, ” IEEE T ransactions on Automatic Contr ol , vol. 49, no. 8, pp. 1292–1302, 2004. [20] S. Kar, J. M. F . Moura, and H. V . Poor, “Qd-learning: A collabora- tiv e distributed strategy for multi-agent reinforcement learning through consensus + innovations, ” IEEE T rans. Signal Pr ocessing , vol. 61, pp. 1848–1862, 2013. [21] K. Zhang, Z. Y ang, and T . Basar, “Networked multi-agent reinforcement learning in continuous spaces, ” in 2018 IEEE Confer ence on Decision and Contr ol (CDC) . IEEE, 2018, pp. 2771–2776. [22] H.-T . W ai, Z. Y ang, Z. W ang, and M. Hong, “Multi-agent reinforcement learning via double averaging primal-dual optimization, ” in Annual Confer ence on Neural Information Pr ocessing Systems , 2018. [23] T . T . Doan, S. T . Maguluri, and J. Romberg, “Finite-time analysis of distributed TD(0) with linear function approximation on multi- agent reinforcement learning, ” in Proceedings of the 36th International Confer ence on Machine Learning , vol. 97, 2019, pp. 1626–1635. [24] M. Mesbahi and M. Egerstedt, Graph theoretic methods in multiagent networks . Princeton Univ ersity Press, 2010, vol. 33. [25] T . R. Mehta and M. Egerstedt, “ An optimal control approach to mode generation in hybrid systems, ” Nonlinear Analysis: Theory, Methods & Applications , vol. 65, no. 5, pp. 963–983, 2006. [26] D. Pickem, P . Glotfelter, L. W ang, M. Mote, A. Ames, E. Feron, and M. Egerstedt, “The robotarium: A remotely accessible swarm robotics research testbed, ” in 2017 IEEE International Conference on Robotics and Automation (ICRA) . IEEE, 2017, pp. 1699–1706.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment