On the Hardness of Robust Classification

It is becoming increasingly important to understand the vulnerability of machine learning models to adversarial attacks. In this paper we study the feasibility of robust learning from the perspective of computational learning theory, considering both…

Authors: Pascale Gourdeau, Varun Kanade, Marta Kwiatkowska

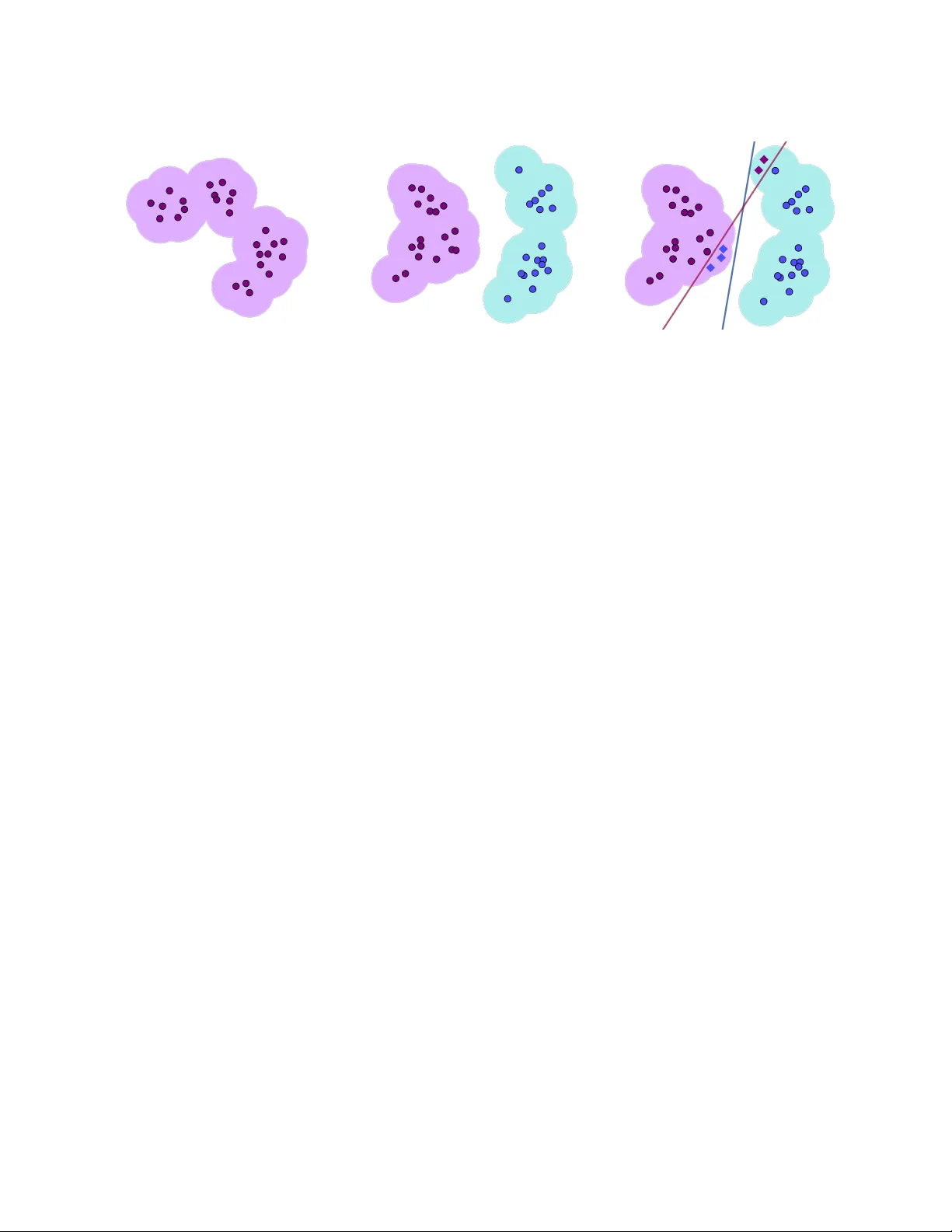

On the Hardness of Robust Classification P ascale Gourdeau, V arun Kanade, Marta Kwiatk o wsk a, and James W orrell Univ ersit y of Oxford Septem b er 13, 2019 Abstract It is b ecoming increasingly important to understand the vulnerabilit y of mac hine learning mo dels to adv ersarial attac ks. In this pap er w e study the feasibilit y of robust learning from the persp ective of computational learning theory , considering b oth sample and computational complexit y . In particular, our definition of robust learnabilit y requires p olynomial sample com- plexit y . W e start with tw o negative results. W e show that no non-trivial concept class can b e robustly learned in the distribution-free setting against an adversary who can p erturb just a single input bit. W e sho w moreov er that the class of monotone conjunctions cannot be robustly learned under the uniform distribution against an adv ersary who can p erturb ω (log n ) input bits. How ev er if the adv ersary is restricted to perturbing O (log n ) bits, then the class of mono- tone conjunctions can be robustly learned with respect to a general class of distributions (that includes the uniform distribution). Finally , w e provide a simple pro of of the computational hardness of robust learning on the b o olean hypercub e. Unlik e previous results of this nature, our result do es not rely on another computational model (e.g. the statistical query mo del) nor on any hardness assumption other than the existence of a hard learning problem in the P AC framew ork. 1 In tro duction There has b een considerable in terest in adv ersarial mac hine learning since the seminal work of Szegedy et al. [25], who coined the term adversarial example to denote the result of applying a carefully c hosen perturbation that causes a classification error to a previously correctly classified datum. Biggio et al. [4] indep enden tly observ ed this phenomenon. Ho w ev er, as p ointed out b y Biggio and Roli [3], adv ersarial machine learning has b een considered muc h earlier in the context of spam fil- tering [8, 19, 20]. Their surv ey also distinguished t w o settings: evasion attacks , where an adversary mo difies data at test time, and p oisoning attacks , where the adversary mo difies the training data. 1 Sev eral differen t definitions of adv ersarial learning exist in the literature and, unfortunately , in some instances the same terminology has b een used to refer to different notions (for some discussion see e.g., [11, 10]). Our goal in this paper is to tak e the most widely-used definitions and consider their implications for robust learning from a statistical and computational viewp oin t. F or simplicit y , we will fo cus on the setting where the input space is the b o olean hypercub e X = { 0 , 1 } n 1 F or an in-depth review and definitions of different t yp es of attac ks, the reader ma y refer to [3, 11]. 1 (a) (b) (c) Figure 1: (a) The supp ort of the distribution is such that R C ρ ( h, c ) = 0 can only b e achiev ed if c is constant. (b) The ρ -expansion of the supp ort of the distribution and target c admit hypotheses h such that R C ρ ( h, c ) = 0. (c) An example where R C ρ and R E ρ differ. The red concept is the target, while the blue one is the h yp othesis. The dots are the supp ort of the distribution and the shaded regions represen t their ρ -expansion. The diamonds represen t p erturb ed inputs whic h cause R E ρ > 0. and consider the r e alizable setting, i.e. the labels are consisten t with a target concept in some concept class. An adversarial example is constructed from a natur al example b y adding a p erturbation. T yp- ically , the p ow er of the adv ersary is curtailed b y sp ecifying an upp er b ound on the p erturbation under some norm; in our case, the only meaningful norm is the Hamming distance. F or a p oint x ∈ X , let B ρ ( x ) denote the Hamming ball of radius ρ around x . Given a distribution D on X , w e consider the adversarial risk of a hypothesis h with resp ect to a target concept c and p erturbation budget ρ . W e fo cus on t w o definitions of risk. The exact in the b al l risk R E ρ ( h, c ) is the probability P x ∼ D ( ∃ y ∈ B ρ ( x ) · h ( y ) 6 = c ( y )) that the adversary can p erturb a p oint x drawn from distribution D to a point y such that h ( y ) 6 = c ( y ). The c onstant in the b al l risk R C ρ ( h, c ) is the probabilit y P x ∼ D ( ∃ y ∈ B ρ ( x ) · h ( y ) 6 = c ( x )) that the adversary can p erturb a p oint x drawn from distribution D to a p oint y suc h that h ( y ) 6 = c ( x ). These definitions encode t wo differen t interpretations of robustness. In the first view, robustness sp eaks ab out the fidelit y of the hypothesis to the target concept, whereas in the latter view robustness concerns the sensitivity of the output of the h yp oth- esis to corruptions of the input. In fact, the latter view of robustness can in some circumstances b e in conflict with accuracy in the traditional sense [26]. 1.1 Ov erview of Our Con tributions W e view our conceptual contributions to be at least as imp ortant as the technical results and b elieve that the issues highlighted in our work will result in more concrete theoretical framew orks b eing dev elop ed to study adv ersarial learning. Imp ossibilit y of Robust Learning in Distribution-F ree P A C Setting W e first consider the question of whether ac hieving zer o (or low) robust risk is possible under either of the t wo definitions. If the b al ls of radius ρ around the data points in tersect so that the total region 2 is connected, then unless the target function is constant, it is imp ossible to achiev e R C ρ ( h, c ) = 0 (see Figure 1). In particular, in most cases R C ρ ( c, c ) 6 = 0, i.e., even the target concept do es not ha v e zero risk with resp ect to itself. W e show that this is the case for extremely simple concept classes suc h as dictators or p arities . When considering the exact on the b al l notion of robust learning, w e at least hav e R E ρ ( c, c ) = 0; in particular, any concept class that can b e exactly learned can b e robustly learned in this sense. How ever, even in this case we show that no “non-trivial” class of functions can b e robustly learned. W e highlight that these results sho w that a p olynomial-size sample from the unkno wn distribution is not sufficien t, even if the learning algorithm has arbitrary computational p o w er (in the sense of T uring computability). 2 Robust Learning of Monotone Conjunctions Giv en the imp ossibility of distribution-free robust learning, we consider robust learning under sp ecific distributions. W e consider one of the simplest concept class studied in P A C Learning, the class of monotone c onjunctions , under the class of log -Lipsc hitz distributions (which includes the uniform distribution) and show that this class of functions is robustly learnable pro vided ρ = O (log n ) and is not robustly learnable with p olynomial sample complexit y for ρ = ω (log n ). A class of distributions is said to b e α -log-Lipschitz if the logarithm of the densit y function is log ( α )- Lipsc hitz with resp ect to the Hamming distance. Our results apply in the setting where the learning algorithm only receives random lab eled examples. On the other hand, a more p o w erful learning algorithm that has access to mem b ership queries can exactly learn monotone conjunctions and as a result can also robustly learn with resp ect to exact in the b al l loss. Computational Hardness of P A C Learning Finally , we consider computational asp ects of robust learning. Our fo cus is on tw o questions: c omputability and c omputational c omplexity . Recent work b y Bub eck et al. [7] provides a result that states that minimizing the robust loss on a p olynomial-size sample suffices for robust learning. Ho w ev er, b ecause of the existential quan tifier ov er the ball implicit in the definition of the exact in the b al l loss, the empirical risk cannot b e c ompute d as this requires en umeration ov er the r e als . Ev en if one restricted attention to concepts defined o v er Q n , computing the loss would b e r e cursively enumer able , but not r e cursive . In the case of functions defined ov er finite instance spaces, such as the b o olean hypercub e, the loss can b e ev aluated pro vided the learning algorithm has access to a mem b ership query oracle; for the c onstant in the b al l loss membership queries are not required. F or functions defined on R n it is unclear ho w either loss function can b e ev aluated ev en if the learner has access to membership queries, since in principle it requires en umerating o v er the reals. Under strong assumptions of inductive bias on the target and hypothesis class, it may b e p ossible to ev aluate the loss functions; ho wev er this w ould ha v e to b e handled on a case by case basis – for example, prop erties of the target and h yp othesis, such as Lipschitzness or large margin, could b e used to compute the exact in the ball loss in finite time. Second, w e consider the computational complexity of robust learning. Bub eck et al. [6] and Deg- w ek ar and V aikuntanathan [9] hav e sho wn that there are concept classes that are hard to robustly 2 W e do require any op eration p erformed by the learning algorithm is computable; the results of Bub eck et al. [7] imply that an algorithm that can potentially ev aluate unc omputable functions can alwa ys robustly learn using a p olynomial-size sample. See the discussion on computational hardness b elow. 3 learn under cryptographic assumptions, even when robust learning is information-theoretically fea- sible. Bub eck et al. [7] establish super-p olynomial low er b ounds for robust learning in the statistic al query framework. W e give an arguably simpler pro of of hardness, based simply on the assump- tion that there exist concept classes that are hard to P A C learn. In particular, our reduction also implies that robust learning is hard even if the learning algorithm is allow ed membership queries, pro vided the concept class that we reduce from is hard to learn using mem b ership queries. Since the existence of one-wa y functions implies the existence of concept classes that are hard to P AC learn (with or without membership queries), our result is also based on a slightly weak er assumption than Bub ec k et al. [7] 3 . 1.2 Related w ork on the Existence of Adv ersarial Examples There is a considerable bo dy of w ork that studies the inevitabilit y of adv ersarial examples, e.g., [12, 14, 13, 16, 24]. These pap ers characterize robustness in the sense that a classifier’s output on a p oin t should not change if a p erturbation of a certain magnitude is applied to it. Among other things, these works study geometrical characteristics of classifiers and statistical characteristics of classification data that lead to adversarial vulnerability . Closer to the presen t pap er are [10, 21, 22], which w ork the with exact-in-a-ball notion of robust risk. In particular, [10] considers the robustness of monotone conjunctions under the uniform distribution on the bo olean hypercub e for this notion of risk (therein called the err or r e gion risk). Ho w ev er [10] do es not address the sample and computational complexity of learning: their results rather concern the ability of an adv ersary to magnify the missclassification error of any hypothesis with resp ect to any target function by p erturbing the input. F or example, they show that an adv ersary who can p erturb O ( √ n ) bits can increase the missclassification probabilit y from 0 . 01 to 1 / 2. By contrast we show that a weak er adversary , who can p erturb only ω (log n ) bits, renders it imp ossible to learn monotone conjunctions with p olynomial sample complexit y . The main to ol used in [10] is the isop erimetric inequalit y for the Bo olean hypercub e, whic h gives lo w er b ounds on the volume of the expansions of arbitrary subsets. On the other hand, we use the probabilistic metho d to establish the existence of a single hard-to-learn target concept for an y giv en algorithm with p olynomial sample complexit y . 2 Definition of Robust Learning The notion of robustness can be accommodated within the basic set-up of P AC learning b y adapting the definition of risk function. In this section we review t w o of the main definitions of r obust risk that hav e b een used in the literature. F or concreteness we consider an input space X = { 0 , 1 } n with metric d : X × X → N , where d ( x, y ) is the Hamming distance of x, y ∈ X . Given x ∈ X , we write B ρ ( x ) for the ball { y ∈ X : d ( x, y ) ≤ ρ } with cen tre x and radius ρ ≥ 0. The first definition of robust risk asks that the h yp othesis b e exactly equal to the target concept in the ball B ρ ( x ) of radius ρ around a “test p oint” x ∈ X : Definition 1. Given r esp e ctive hyp othesis and tar get functions h, c : X → { 0 , 1 } , distribution D on X , and r obustness p ar ameter ρ ≥ 0 , we define the “exact in the b al l” r obust risk of h with r esp e ct 3 It is b elieved that the existence of hard to P A C learn concept classes is not sufficient to construct one-wa y functions. [1]. 4 to c to b e R E ρ ( h, c ) = P x ∼ D ( ∃ z ∈ B ρ ( x ) : h ( z ) 6 = c ( z )) . While this definition captures a natural notion of robustness, an obvious disadv antage is that ev aluating the risk function requires the learner to hav e knowledge of the target function outside of the training set, e.g., through membership queries. Nonetheless, by considering a learner who has oracle access to the predicate ∃ z ∈ B ρ ( x ) : h ( z ) 6 = c ( z ), we can use the exact-in-the-ball framew ork to analyse sample complexity and to prov e strong lo w er b ounds on the computational complexit y of robust learning. A p opular alternativ e to the exact-in-the-ball risk function in Definition 1 is the follo wing c onstant-in-the-b al l risk function: Definition 2. Given r esp e ctive hyp othesis and tar get functions h, c : X → { 0 , 1 } , distribution D on X , and r obustness p ar ameter ρ ≥ 0 , we define the “c onstant in the b al l” r obust risk of h with r esp e ct to c as R C ρ ( h, c ) = P x ∼ D ( ∃ z ∈ B ρ ( x ) : h ( z ) 6 = c ( x )) . An ob vious adv antage of the constan t in the ball risk o ver the exact in the ball v ersion is that in the former, ev aluating the loss at p oin t x ∈ X requires only knowledge of the correct lab el of x and the h yp othesis h . In particular, this definition can also b e carried o v er to the non-realizable setting, in whic h there is no target. How ever, from a foundational p oin t of view the constan t in the ball risk has some dra wbacks: recall from the previous section that under this definition it is possible to ha ve strictly p ositive robust risk in the case that h = c . (Let us note in passing that the risk functions R C ρ and R E ρ are in general incomparable. Figure 1c gives an example in whic h R C ρ = 0 and R E ρ > 0.) Additionally , when w e w ork in the hypercub e, or a b ounded input space, as ρ b ecomes larger, we ev en tually require the function to b e constant in the whole space. Essentially , to ρ -robustly learn in the realisable setting, we require concept and distribution pairs to b e represented as t w o sets D + and D − whose ρ -expansions don’t intersect, as illustrated in Figures 1a and 1b. These limitations app ear ev en more stringen t when w e consider simple concept classes suc h as parit y functions, whic h are defined for an index set I ⊆ [ n ] as f I ( x ) = P i x i + b mo d 2 for b ∈ { 0 , 1 } . This class can b e P AC-learned, as w ell as exactly learned with n membership queries. Ho w ev er, for any p oin t, it suffices to flip one bit of the index set to switc h the lab el, so R C ρ ( f I , f I ) = 1 for an y ρ ≥ 1 if I 6 = ∅ . Ultimately , w e w an t the adv ersary’s p ow er to come from creating p erturbations that cause the h yp othesis and target functions to differ in some regions of the input space. F or this reason we fa v or the exact-in-the-ball definition and henceforth work with that. Ha ving settled on a risk function, we now form ulate the definition of robust learning. F or our purp oses a c onc ept class is a family C = {C n } n ∈ N , with C n a class of functions from { 0 , 1 } n to { 0 , 1 } . Lik ewise a distribution class is a family D = {D n } n ∈ N , with D n a set of distributions on { 0 , 1 } n . Finally a r obustness function is a function ρ : N → N . Definition 3. Fix a function ρ : N → N . We say that an algorithm A efficiently ρ - robustly learns a c onc ept class C with r esp e ct to distribution class D if ther e exists a p olynomial p oly ( · , · , · ) such that for al l n ∈ N , al l tar get c onc epts c ∈ C n , al l distributions D ∈ D n , and al l ac cur acy and c onfidenc e p ar ameters , δ > 0 , ther e exists m ≤ p oly (1 /, 1 /δ, n ) , such that when A is given ac c ess to a sample S ∼ D m it outputs h : { 0 , 1 } n → { 0 , 1 } such that P S ∼ D m R E ρ ( n ) ( h, c ) < > 1 − δ . 5 Note that the definition of robust learning requires p olynomial sample complexity and allo ws improp er learning (the h yp othesis h need not b elong to the concept class C n ). In the standard P AC framework, a hypothesis h is considered to hav e zero risk with resp ect to a target concept c when P x ∼ D ( h ( x ) 6 = c ( x )) = 0. W e hav e remarked that exact learnabilit y implies robust learnability; w e next giv e an example of a concept class C and distribution D such that C is P AC learnable under D with zero risk and y et cannot b e robustly learned under D (regardless of the sample complexit y). Lemma 4. The class of dictators is not 1-r obustly le arnable (and thus not r obustly le arnable for any ρ ≥ 1 ) with r esp e ct to the r obust risk of Definition 1 in the distribution-fr e e setting. Pr o of. Let c 1 and c 2 b e the dictators on v ariables x 1 and x 2 , resp ectiv ely . Let D b e suc h that P x ∼ D ( x 1 = x 2 ) = 1 and P x ∼ D ( x k = 1) = 1 2 for k ≥ 3. Dra w a sample S ∼ D m and lab el it according to c ∼ U ( c 1 , c 2 ). By the c hoice of D , the elemen ts of S will hav e the same lab el regardless of whether c 1 or c 2 w as pick ed. Ho w ev er, for x ∼ D , it suffices to flip any of the first tw o bits to cause c 1 and c 2 to disagree on the p erturb ed input. W e can easily show that, for any h ∈ { 0 , 1 } X , R E 1 ( c 1 , h ) + R E 1 ( c 2 , h ) ≥ R E 1 ( c 1 , c 2 ) = 1 . Then E c ∼ U ( c 1 ,c 2 ) E S ∼ D m R E 1 ( h, c ) ≥ 1 / 2 . W e conclude that one of c 1 or c 2 has robust risk at least 1/2. Note that a P AC learning algorithm with error probability threshold ε = 1 / 3 will either output c 1 or c 2 and will hence hav e standard risk zero. W e refer the reader to App endix B for further discussion on the relationship b et ween robust and zero-risk learning. 3 No Distribution-F ree Robust Learning in { 0 , 1 } n In this section, w e show that no non-trivial concept class is efficiently 1-robustly learnable in the bo olean hypercub e. Suc h a class is thus not efficien tly ρ -robustly learnable for any ρ ≥ 1. Efficien t robust learnability then requires access to a more p o w erful learning mo del or distributional assumptions. Let C n b e a concept class on { 0 , 1 } n , and define a concept class as C = S n ≥ 1 C n . W e say that a class of functions is trivial if C n has at most t wo functions, and that they differ on every point. Theorem 5. Any c onc ept class C is efficiently distribution-fr e e r obustly le arnable iff it is trivial. The pro of of the theorem relies on the following lemma: Lemma 6. L et c 1 , c 2 ∈ { 0 , 1 } X and fix a distribution on X . Then for al l h : { 0 , 1 } n → { 0 , 1 } R E ρ ( c 1 , c 2 ) ≤ R E ρ ( c 1 , h ) + R E ρ ( c 2 , h ) . Pr o of. Let x ∈ { 0 , 1 } n b e arbitrary , and supp ose that c 1 and c 2 differ on some z ∈ B ρ ( x ). Then either h ( z ) 6 = c 1 ( z ) or h ( z ) 6 = c 2 ( z ). The result follows. 6 The idea of the pro of of Theorem 5 (which can b e found in App endix C) is a generalization of the pro of of Lemma 4 that dictators are not robustly learnable. Ho wev er, note that w e construct a distribution whose supp ort is all of X . It is possible to find tw o hypotheses c 1 and c 2 and create a distribution suc h that c 1 and c 2 will lik ely lo ok iden tical on samples of size polynomial in n but ha ve robust risk Ω(1) with resp ect to one another. Since any hypothesis h in { 0 , 1 } X will disagree either with c 1 or c 2 on a given p oint x if c 1 ( x ) 6 = c 2 ( x ), by c ho osing the target hypothesis c at random from c 1 and c 2 , w e can guaran tee that h won’t be robust against c with positive probabilit y . Finally , note that an analogous argument can b e made for a more general setting (for example in R n ). 4 Monotone Conjunctions It turns out that we do not need recourse to “bad” distributions to show that very simple classes of functions are not efficiently robustly learnable. As we demonstrate in this section, MON-CONJ , the class of monotone conjunctions, is not efficiently robustly learnable even under the uniform distribution for robustness parameters that are sup erlogarithmic in the input dimension. 4.1 Non-Robust Learnabilit y The idea to sho w that MON-CONJ is not efficiently robustly learnable is in the same vein as the pro of of Theorem 5. W e first start b y proving the following lemma, whic h low er b ounds the robust risk of t w o disjoint monotone conjunctions. Lemma 7. Under the uniform distribution, for any n ∈ N , disjoint c 1 , c 2 ∈ MON-CONJ of length 3 ≤ l ≤ n/ 2 on { 0 , 1 } n and r obustness p ar ameter ρ ≥ l/ 2 , we have that R E ρ ( c 1 , c 2 ) is b ounde d b elow by a c onstant that c an b e made arbitr arily close to 1 2 as l gets lar ger. Pr o of. F or a h yp othesis c ∈ MON-CONJ , let I c b e the set of v ariables in c . Let c 1 , c 2 ∈ C b e as in the theorem statemen t. Then the robust risk R E ρ ( c 1 , c 2 ) is b ounded b elo w b y P x ∼ D ( c 1 ( x ) = 0 ∧ x has at least l / 2 1’s in I c 2 ) = (1 − 2 − l ) / 2 . No w, the follo wing lemma shows that if w e c ho ose the length of the conjunctions c 1 and c 2 to b e sup er-logarithmic in n , then, for a sample of size p olynomial in n , c 1 and c 2 will agree on S with probabilit y at least 1 / 2. The pro of can b e found in App endix D.1. Lemma 8. F or any functions l ( n ) = ω (log ( n )) and m ( n ) = p oly ( n ) , for any disjoint monotone c onjunctions c 1 , c 2 such that | I c 1 | = | I c 2 | = l ( n ) , ther e exists n 0 such that for al l n ≥ n 0 , a sample S of size m ( n ) sample d i.i.d. fr om D wil l have that c 1 ( x ) = c 2 ( x ) = 0 for al l x ∈ S with pr ob ability at le ast 1 / 2 . W e are now ready to prov e our main result of the section. Theorem 9. MON-CONJ is not efficiently ρ -r obustly le arnable for ρ ( n ) = ω (log( n )) under the uniform distribution. 7 Pr o of. Fix any algorithm A for learning MON-CONJ . W e will sho w that the exp ected robust risk b et w een a randomly chosen target function and any h yp othesis returned by A is b ounded b elow b y a constant. Fix a function p oly( · , · , · , · , · ), and note that, since size( c ) and ρ are b oth at most n , w e can simply consider a function p oly( · , · , · ) in the v ariables 1 /, and 1 /δ, n instead. Let δ = 1 / 2, and fix a function l ( n ) = ω (log( n )) that satisfies l ( n ) ≤ n/ 2, and let ρ ( n ) = l ( n ) / 2 ( n is not y et fixed). Let n 0 b e as in Lemma 8, where m ( n ) is the fixed sample complexity function.Then Equation (8) holds for all n ≥ n 0 . No w, let D b e the uniform distribution on { 0 , 1 } n for n ≥ max( n 0 , 3), and choose c 1 , c 2 as in Lemma 7. Note that R E ρ ( c 1 , c 2 ) > 5 12 b y the choice of n . Pic k the target function c uniformly at random b et w een c 1 and c 2 , and lab el S ∼ D m with c , where m = p oly(1 /, 1 /δ, n ). By Lemma 8, c 1 and c 2 agree with the lab eling of S (which implies that all the p oin ts ha v e lab el 0) with probabilit y at least 1 2 o v er the choice of S . Define the follo wing three even ts for S ∼ D m : E : c 1 | S = c 2 | S , E c 1 : c = c 1 , E c 2 : c = c 2 . Then, b y Lemmas 8 and 6, E c,S R E ρ ( A ( S ) , c ) ≥ P c,S ( E ) E c,S R E ρ ( A ( S ) , c ) | E > 1 2 P c,S ( E c 1 ) E S R E ρ ( A ( S ) , c ) | E ∩ E c 1 + P c,S ( E c 2 ) E S R E ρ ( A ( S ) , c ) | E ∩ E c 2 = 1 4 E S R E ρ ( A ( S ) , c 1 ) + R E ρ ( A ( S ) , c 2 ) | E ≥ 1 4 E S R E ρ ( c 2 , c 1 ) > 0 . 1 . 4.2 Robust Learnabilit y Against a Logarithmically-Bounded Adversary The argumen t showing the non-robust learnability of MON-CONJ under the uniform distribution in the previous section cannot b e carried through if the conjunction lengths are logarithmic in the input dimension, or if the robustness parameter is small compared to that target conjunction’s length. In b oth cases, we sho w that it is p ossible to efficien tly robustly learn these conjunctions if the class of distributions is α -log-Lipschitz, i.e. there exists a universal constan t α ≥ 1 suc h that for all n ∈ N , all distributions D on { 0 , 1 } n and for all input p oin ts x, x 0 ∈ { 0 , 1 } n , if d H ( x, x 0 ) = 1, then | log( D ( x )) − log ( D ( x 0 )) | ≤ log( α ) (see App endix A.3 for further details and useful facts). Theorem 10. L et D = {D n } n ∈ N , wher e D n is a set of α - log -Lipschitz distributions on { 0 , 1 } n for al l n ∈ N . Then the class of monotone c onjunctions is ρ -r obustly le arnable with r esp e ct to D for r obustness function ρ ( n ) = O (log n ) . The pro of can b e found in App endix D. This combined with Theorem 10 sho ws that ρ ( n ) = log( n ) is essentially the threshold for efficien t robust learnability of the class MON-CONJ . 8 5 Computational Hardness of Robust Learning In this section, w e establish that the computational hardness of P AC-learning a concept class C with resp ect to a distribution class D implies the computational hardness of robustly learning a family of concept-distribution pairs from a related class C 0 and a restricted class of distributions D 0 . This is essentially a version of the main result of [7], which used the constant-in-the-ball definition of robust risk. Our proof also uses the [7] trick of encoding a p oint’s lab el in the input for the robust learning problem. In terestingly , our pro of do es not rely on any assumption other than the existence of a hard learning problem in the P AC framework and is v alid under b oth Definitions 1 and 2 of robust risk. Construction of C 0 . Supp ose we are giv en C = {C n } n ∈ N and D = {D n } n ∈ N with C n and D n defined on X n = { 0 , 1 } n . Giv en k ∈ N , w e define the family of concept and distribution pairs { ( c 0 , D 0 ) } D 0 ∈D 0 c 0 ,c 0 ∈C 0 , where C 0 = {C 0 ( k,n ) } k,n ∈ N on X 0 k,n = { 0 , 1 } (2 k +1) n +1 as follo ws. Let ma j k : X 0 k,n → X n b e the function that returns the ma jority v ote on eac h subsequent blo ck of k bits, and ignores the last bit. W e define C 0 ( k,n ) = c ◦ ma j 2 k +1 | c ∈ C n . Let ϕ k : X n → X 0 k,n b e defined as ϕ k ( x ) := x 1 . . . x 1 x 2 . . . x d − 1 x d . . . x d | {z } 2 k +1 copies of each x i c ( x ) , ϕ k ( S ) := { ϕ k ( x i ) | x i ∈ S } , for x = x 1 x 2 . . . x d ∈ X and S ⊆ X . F or a concept c ∈ C n , each D ∈ D n induces a distribution D 0 ∈ D 0 c 0 , where c 0 = c ◦ ma j 2 k +1 and D 0 ( z ) = D ( x ) if z = ϕ k ( x ), and D 0 ( z ) = 0 otherwise. As shown below, this set up allows us to see that an y algorithm for learning C n with resp ect to D n yields an algorithm for learning the pairs { ( c 0 , D 0 ) } D 0 ∈D 0 c 0 ,c 0 ∈C 0 . Ho w ev er, an y r obust learning algo- rithm cannot solely rely on the last bit of the input, as it could b e flipp ed by an adversary . Then, this algorithm can b e used to P AC-learn C n . This establishes the equiv alence of the computational diffi- cult y betw een P A C-learning C n with respect to D 0 ( k,n ) and robustly learning { ( c 0 , D 0 ) } D 0 ∈D 0 c 0 ,c 0 ∈C 0 ( k,n ) . As mentioned earlier, w e can still efficiently P A C-learn the pairs { ( c 0 , D 0 ) } D 0 ∈D 0 c 0 ,c 0 ∈C 0 simply by al- w a ys outputting a hypothesis that returns the last bit of the input. Theorem 11. F or any c onc ept class C n , family of distributions D n over { 0 , 1 } n and k ∈ N , ther e exists a c onc ept class C 0 ( k,n ) and a family of distributions D 0 ( k,n ) over { 0 , 1 } (2 k +1) n +1 such that efficien t k -r obust le arnability of the c onc ept-distribution p airs { ( c 0 , D 0 ) } D 0 ∈D 0 c 0 ,c 0 ∈C 0 ( k,n ) and either of the r obust risk functions R C k or R E k implies efficien t P A C-le arnability of C n with r esp e ct to D n . Before pro ving the ab o ve result, let us first prov e the follo wing prop osition. Prop osition 12. The c onc ept-distribution p airs { ( c 0 , D 0 ) } D 0 ∈D 0 c 0 ,c 0 ∈C 0 ( k,n ) c an b e k -r obustly le arne d using O 1 log |C n | + log 1 δ examples. Pr o of. First note that, since C n is finite, we can use P AC-learning sample bounds for the realizable setting (see for example [23]) to get that the sample complexit y of learning C n is O 1 (log |C n | + log 1 δ ) . No w, if we hav e P A C-learned C n with resp ect to D n , and h is the hypothesis returned on a sample lab eled according to a target concept c ∈ C n , w e can comp ose it with the function ma j k to get a h yp othesis h 0 for which an y p erturbation of at most k bits of x 0 ∼ D 0 (where D 0 is the distribution induced b y the target concept c and distribution D ) will not change h 0 ( x 0 ). Th us, we also hav e k -robustly learned C 0 ( k,n ) . 9 R emark 13 . The sample complexity in Prop osition 12 is indep endent of k , and so the construction of the class C 0 on X 0 allo ws the adversary to modify 1 2 n fraction of the bits. There are w ays to mak e the adv ersary more pow erful and k eep the sample complexity unc hanged. Indeed, the fraction of the bits the adv ersary can flip can b e increased by using error correction co des. F or example, BCH co des [5, 17] would allow us to obtain an input space X 0 of dimension n + k log n where the adv ersary can flip k n + k log n bits. W e are now ready to prov e the main result of this section. Pr o of of The or em 11. Giv en C n and D , let C 0 ( k,n ) and {D 0 c 0 } c 0 ∈C 0 ( k,n ) b e constructed as ab o v e. Sup- p ose that it is hard to P AC-learn C n with resp ect to the distribution family D n . Supp ose that we are giv en an algorithm A 0 to k -robustly learn { ( c 0 , D 0 ) } D 0 ∈D 0 c 0 ,c 0 ∈C 0 ( k,n ) and a sample complexit y m . Let , δ > 0 b e arbitrary and c ∈ C n b e an arbitrary target concept and let c 0 ∈ C 0 ( k,n ) b e suc h that c 0 = c ◦ ma j 2 k +1 . Let D ∈ D n b e a distribution on X n , and let D 0 ∈ D 0 c 0 b e its induced distribution on X 0 k,n . A P A C-learning algorithm for C n is as follows. Draw a sample S ∼ D m and let S 0 = ϕ k ( S ). Note that this simulates a sample S 0 ∼ D 0 m , and that c 0 will give the same lab el to all p oin ts in the ρ -ball centred at x 0 for an y x 0 in the supp ort of D 0 . Since A 0 k -robustly learns the concept-distribution pairs { ( c 0 , D 0 ) } D 0 ∈D 0 c 0 ,c 0 ∈C 0 ( k,n ) , with proba- bilit y at least 1 − δ ov er S 0 , for an y x ∼ D , w e hav e that h 0 will b e wrong on ϕ k ( x ) (where the last bit is random) with probabilit y at most . So by outputting h = h 0 ◦ ϕ k , we ha v e an algorithm to P AC-learn C n with resp ect to the distribution family D n . 6 Conclusion W e hav e studied robust learnabilit y from a computational learning theory persp ective and hav e sho wn that efficien t robust learning can b e hard – even in v ery natural and apparently straight- forw ard settings. W e hav e moreov er giv en a tight c haracterization of the strength of an adv ersary to preven t robust learning of monotone conjunctions under certain distributional assumptions. An in teresting a v en ue for future work is to see whether this result can b e generalised to other classes of functions. Finally , w e hav e provided a simpler pro of of the previously established re sult of the computational hardness of robust learning. In the ligh t of our results, it seems to us that more thought needs to b e put in to what w e w an t out of robust learning in terms of computational efficiency and sample complexity , which will inform our choice of risk functions. Indeed, at first glance, robust learning definitions that hav e app eared in prior w ork seem in man y w ays natural and reasonable; ho w ev er, their inadequacies surface when viewed under the lens of computational learning theory . Giv en our negativ e results in the con text of the current robustness mo dels, one may surmise that requiring a classifier to b e correct in an entire ball near a p oint is asking for to o muc h. Under such a requirement, we can only solve “easy problems” with strong distributional assumptions. Nev ertheless, it may still be of in terest to study these notions of robust learning in differen t learning mo dels, for example where one has access to membership queries. 10 References [1] Benn y Applebaum, Boaz Barak, and Da vid Xiao. On basing lo w er-b ounds for learning on w orst-case assumptions. In Pr o c e e dings of the 49th Annual IEEE symp osium on F oundations of c omputer scienc e , 2008. [2] Pranjal Awasthi, Vitaly F eldman, and V arun Kanade. Learning using local mem b ership queries. In COL T , volume 30, pages 1–34, 2013. [3] Battista Biggio and F abio Roli. Wild patterns: T en years after the rise of adv ersarial mac hine learning. arXiv pr eprint arXiv:1712.03141 , 2017. [4] Battista Biggio, Igino Corona, Davide Maiorca, Blaine Nelson, Nedim ˇ Srndi ´ c, Pa vel Lasko v, Giorgio Giacinto, and F abio Roli. Ev asion attacks against machine learning at test time. In Joint Eur op e an c onfer enc e on machine le arning and know le dge disc overy in datab ases , pages 387–402. Springer, 2013. [5] Ra j Chandra Bose and Dwijendra K Ra y-Chaudhuri. On a class of error correcting binary group co des. Information and c ontr ol , 3(1):68–79, 1960. [6] S ´ ebastien Bub eck, Yin T at Lee, Eric Price, and Ilya Razenshteyn. Adv ersarial examples from cryptographic pseudo-random generators. arXiv pr eprint arXiv:1811.06418 , 2018. [7] S ´ ebastien Bubeck, Eric Price, and Ily a Razensh teyn. Adversarial examples from computational constrain ts. arXiv pr eprint arXiv:1805.10204 , 2018. [8] Nilesh Dalvi, P edro Domingos, Sumit Sanghai, Deepak V erma, et al. Adversarial classification. In Pr o c e e dings of the tenth ACM SIGKDD international c onfer enc e on Know le dge disc overy and data mining , pages 99–108. ACM, 2004. [9] Aksha y Degwek ar and Vino d V aikuntanathan. Computational limitations in robust classifica- tion and win-win results. arXiv pr eprint arXiv:1902.01086 , 2019. [10] Dimitrios Dio c hnos, Saeed Mahloujifar, and Mohammad Mahmo o dy . Adv ersarial risk and robustness: General definitions and implications for the uniform distribution. In A dvanc es in Neur al Information Pr o c essing Systems , 2018. [11] T ommaso Dreossi, Shromona Ghosh, Alb erto Sangio v anni-Vincen telli, and Sanjit A Seshia. A formalization of robustness for deep neural netw orks. arXiv pr eprint arXiv:1903.10033 , 2019. [12] Alh ussein F awzi, Seyed-Moh sen Mo osavi-Dezfooli, and Pascal F rossard. Robustness of classi- fiers: from adversarial to random noise. In A dvanc es in Neur al Information Pr o c essing Systems , pages 1632–1640, 2016. [13] Alh ussein F a wzi, Hamza F awzi, and Omar F awzi. Adv ersarial vulnerability for an y classifier. arXiv pr eprint arXiv:1802.08686 , 2018. [14] Alh ussein F awzi, Omar F awzi, and P ascal F rossard. Analysis of classifiers? robustness to adv ersarial p erturbations. Machine L e arning , 107(3):481–508, 2018. 11 [15] Dan F eldman and Leonard J Sch ulman. Data reduction for w eigh ted and outlier-resistant clustering. In Pr o c e e dings of the twenty-thir d annual ACM-SIAM symp osium on Discr ete A lgorithms , pages 1343–1354. So ciety for Industrial and Applied Mathematics, 2012. [16] Justin Gilmer, Luke Metz, F artash F aghri, Samuel S Schoenholz, Maithra Ragh u, Martin W attenberg, and Ian Go o dfello w. Adversarial spheres. arXiv pr eprint arXiv:1801.02774 , 2018. [17] Alexis Ho cquenghem. Codes correcteurs d’erreurs. Chiffr es , 2(2):147–56, 1959. [18] Vladlen Koltun and Christos H Papadimitriou. Appro ximately dominating represen tativ es. The or etic al Computer Scienc e , 371(3):148–154, 2007. [19] Daniel Lo wd and Christopher Meek. Adversarial learning. In Pr o c e e dings of the eleventh A CM SIGKDD international c onfer enc e on Know le dge disc overy in data mining , pages 641–647. A CM, 2005. [20] Daniel Lowd and Christopher Meek. Go o d w ord attac ks on statistical spam filters. In CEAS , v olume 2005, 2005. [21] Saeed Mahloujifar and Mohammad Mahmoo dy . Can adversarially robust learning leverage computational hardness? arXiv pr eprint arXiv:1810.01407 , 2018. [22] Saeed Mahloujifar, Dimitrios I Diochnos, and Mohammad Mahmo o dy . The curse of concen tra- tion in robust learning: Ev asion and p oisoning attac ks from concentration of measure. AAAI Confer enc e on Artificial Intel ligenc e , 2019. [23] Mehry ar Mohri, Afshin Rostamizadeh, and Ameet T alw alk ar. F oundations of machine le arning . MIT press, 2012. [24] Ali Shafahi, W Ronny Huang, Christoph Studer, Soheil F eizi, and T om Goldstein. Are adv er- sarial examples inevitable? arXiv pr eprint arXiv:1809.02104 , 2018. [25] Christian Szegedy , W o jciech Zarem ba, Ilya Sutsk ever, Joan Bruna, Dumitru Erhan, Ian Go o d- fello w, and Rob F ergus. In triguing prop erties of neural net w orks. In International Confer enc e on L e arning R epr esentations , 2013. [26] Dimitris Tsipras, Shibani San turk ar, Logan Engstrom, Alexander T urner, and Aleksander Madry . Robustness may b e at o dds with accuracy . In International Confer enc e on L e arning R epr esentations , 2019. [27] Leslie G V aliant. A theory of the learnable. In Pr o c e e dings of the sixte enth annual A CM symp osium on The ory of c omputing , pages 436–445. A CM, 1984. 12 App endix A Learning Theory Basics A.1 The P A C framework W e study the problem of robust classification. This is a generalization of standard class ification tasks, which are defined on an input space X n of dimension n and finite output space Y . Common examples of input spaces are { 0 , 1 } n , [0 , 1] n , and R n . W e fo cus on binary classific ation in the r e alizable setting , where Y = { 0 , 1 } , and w e get access to a sample S = { ( x i , y i ) } m i =1 where the x i ’s are drawn i.i.d. from an unknown underlying distribution D , and there exists c : X → Y such that y i = c ( x i ), namely , there exists a tar get c onc ept that has labeled the sample. In the P A C framew ork [27], our goal is to find a function h that approximates c with high probability ov er the training sample. This means we are allo wing a small chance of having a sample that is not representativ e of the distribution. As w e require our confidence to increase, we require more data. P A C learning is formally defined for c onc ept classes C n ⊆ { 0 , 1 } X n as follo ws. Definition 14 (P A C Learning) . L et C n b e a c onc ept class over X n and let C = S n ∈ N C n . We say that C is P A C learnable using hypothesis class H and sample c omplexity function p ( · , · , · ) if ther e exists an algorithm A that satisfies the fol lowing: for al l n ∈ N , for every c ∈ C n , for every D over X n , for every 0 < < 1 / 2 and 0 < δ < 1 / 2 , if whenever A is given ac c ess to m ≥ p ( n, 1 /, 1 /δ ) examples dr awn i.i.d. fr om D and lab ele d with c , A outputs h ∈ H such that with pr ob ability at le ast 1 − δ , P x ∼ D ( c ( x ) 6 = h ( x )) ≤ . We say that C is statistic al ly efficiently P A C le arnable if p is p olynomial in n, 1 / and 1 /δ , and c omputational ly efficiently P AC le arnable if A runs in p olynomial time in n, 1 / and 1 /δ . P AC learning is distribution-fr e e , in the sense that no assumptions are made ab out the distri- bution from which the data comes from. The setting where C = H is called pr op er le arning , and impr op er le arning otherwise. A.2 Monotone Conjunctions A conjunction c o v er { 0 , 1 } n can b e represented a set of literals l 1 , . . . , l k , where, for x ∈ X n , c ( x ) = V k i =1 l i . F or example, c ( x ) = x 1 ∧ ¯ x 2 ∧ x 5 is a conjunction. Monotone conjunctions are the sub class of conjunctions where negations are not allo w ed, i.e. all literals are of the form l i = x j for some j ∈ [ n ]. The standard P A C learning algorithm to learn monotone conjunctions is as follows. W e start with the hypothesis h ( x ) = V i ∈ I h x i , where I h = [ n ]. F or each example x in S , we remov e i from I h if c ( x ) = 1 and x i = 0. When one has access to mem b ership queries, one can easily exactly learn monotone conjunctions o v er the whole input space: we start with the instance where all bits are 1 (whic h is alw ays a p ositiv e example), and we can test whether each v ariable is in the target conjunction b y setting the corresp onding bit to 0 and requesting the lab el. W e refer the reader to [23] for an in-depth introduction to machine learning theory . 13 A.3 Log-Lipsc hitz Distributions Definition 15. A distribution D on { 0 , 1 } n is said to b e α - log -Lipschitz if for al l input p oints x, x 0 ∈ { 0 , 1 } n , if d H ( x, x 0 ) = 1 , then | log( D ( x )) − log( D ( x 0 )) | ≤ log( α ) . The intuition b ehind log -Lipsc hitz distributions is that p oints that are close to eac h other must not ha v e frequencies that greatly differ from each other. Note that, b y definition, D ( x ) > 0 for all inputs x . Moreo v er, the uniform distribution is log-Lipschitz with parameter α = 1. Another example of log-Lipschitz distributions is the class of pro duct distributions where the probability of dra wing a 0 (or equiv alently a 1) at index i is in the in terv al h 1 1+ α , α 1+ α i . Log-Lipschitz distributions ha v e b een studied in [2], and its v ariants in [15, 18]. Log-Lipsc hitz distributions hav e the follo wing useful prop erties, which w e will often refer to in our pro ofs. Lemma 16. L et D b e an α - log -Lipschitz distribution over { 0 , 1 } n . Then the fol lowing hold: 1. F or b ∈ { 0 , 1 } , 1 1+ α ≤ P x ∼ D ( x i = b ) ≤ α 1+ α . 2. F or any S ⊆ [ n ] , the mar ginal distribution D ¯ S is α - log -Lipschitz, wher e D ¯ S ( y ) = P y 0 ∈{ 0 , 1 } S D ( y y 0 ) . 3. F or any S ⊆ [ n ] and for any pr op erty π S that only dep ends on variables x S , the mar ginal with r esp e ct to ¯ S of the c onditional distribution ( D | π S ) ¯ S is α - log -Lipschitz. 4. F or any S ⊆ [ n ] and b S ∈ { 0 , 1 } S , we have that 1 1+ α | S | ≤ P x ∼ D ( x i = b ) ≤ α 1+ α | S | . Pr o of. T o prov e (1), fix i ∈ [ n ] and b ∈ { 0 , 1 } and denote by x ⊕ i the result of flipping the i -th bit of x . Note that P x ∼ D ( x i = b ) = X z ∈{ 0 , 1 } n : z i = b D ( z ) = X z ∈{ 0 , 1 } n : z i = b D ( z ) D ( z ⊕ i ) D ( z ⊕ i ) ≤ α X z ∈{ 0 , 1 } n : z i = b D ( z ⊕ i ) = α P x ∼ D ( x i 6 = b ) . The result follo ws from solving for P x ∼ D ( x i = b ). Without loss of generality , let ¯ S = { 1 , . . . , k } for some k ≤ n . Let x, x 0 ∈ { 0 , 1 } ¯ S with d H ( x, x 0 ) = 1. T o prov e (2), let D ¯ S b e the marginal distribution. Then, D ¯ S ( x ) = X y ∈{ 0 , 1 } S D ( xy ) = X y ∈{ 0 , 1 } S D ( xy ) D ( x 0 y ) D ( x 0 y ) ≤ α X y ∈{ 0 , 1 } S D ( x 0 y ) = αD ¯ S ( x 0 ) . T o prov e (3), denote by X π S the set of p oints in { 0 , 1 } S satisfying prop erty π S , and by xX π S the set of inputs of the form xy , where y ∈ X π S . By a slight abuse of notation, let D ( X π S ) b e the probabilit y of dra wing a p oint in { 0 , 1 } n that satisfies π S . Then, D ( xX π S ) = X y ∈ X π S D ( xy ) = X y ∈ X π S D ( xy ) D ( x 0 y ) D ( x 0 y ) ≤ α X y ∈ X π S D ( x 0 y ) = αD ( x 0 X π S ) . 14 W e can use the ab ov e and show that ( D | π S ) ¯ S ( x ) = D ( xX π S ) D ( x 0 X π S ) D ( x 0 X π S ) D ( X π S ) ≤ α ( D | π S ) ¯ S ( x 0 ) . Finally , (4) is a corollary of (1)–(3). B Discussion on the Relationship b et w een Robust and Zero-Risk Learning W e saw that, for b oth robust risks R C ρ and R E ρ , zero-risk learning do es not necessarily imply robust learning. Moreo ver, as shown in Section 3, efficien t distribution-free robust learning is not possible ev en in the realizable setting. What can b e said if we ha v e access to a robust learning algorithm for a sp ecific distribution on the b o olean h yp ercub e? W e will sho w that distribution-dep endent robust learning implies zero-risk learning for b oth robust risk definitions, under certain conditions on the measure of balls in the support of the distribution. Let us start with Definition 1, where w e require the h yp othesis to b e exact in the ρ -balls around a p oint. Prop osition 17. F or any pr ob ability me asur e µ on { 0 , 1 } n , r obustness p ar ameter ρ and c onc epts h, c , ther e exists > 0 such that if R E ρ ( h, c ) < then h ( x ) = c ( x ) for any x ∈ X such that µ ( B ρ ( x )) > 0 . In p articular, one has that h and c agr e e on the supp ort of µ . Pr o of. Supp ose there exists x ∗ ∈ X with µ ( B ρ ( x ∗ )) > 0 such that h ( x ∗ ) 6 = c ( x ∗ ). Then for an y z ∈ B ρ ( x ∗ ), we ha v e that R E ρ ( h, c, z ), the robust risk of h with resp ect to c at p oin t z , is 1. Let ˜ X := { x ∈ X : µ ( B ρ ( x )) > 0 } , and = min x ∈ ˜ X µ ( B ρ ( x )). W e ha ve that R E ρ ( h, c ) ≥ X z ∈ B ρ ( x ∗ ) µ ( { z } ) ` R ρ ( h, c, z ) = µ ( B ρ ( x ∗ )) ≥ . Corollary 18. F or any fixe d distribution D , r obust le arning with r esp e ct to D implies zer o-risk le arning with r esp e ct to D for any r obustness p ar ameter as long as in Pr op osition 17 satisfies − 1 = p oly ( n ) . Pr o of. Fix a distribution D ∈ D on X . Supp ose that we hav e a ρ -robust learning algorithm A R F ( D ) for F , namely for all , δ, ρ > 0, for all c ∈ F , if A R F ( D ) has access to a sample S of size m ≥ poly( 1 , 1 δ , size( c ) , n ), it returns f ∈ F such that P S ∼ D m ` R ρ ( f , c ) < ≥ 1 − δ . (1) By Prop osition 17, w e can c ho ose suc h that R E ρ ( h, c ) < implies that h ( x ) = c ( x ) for any x ∈ X suc h that µ ( B ρ ( x )) > 0. Note that this dep ends on D , ρ and n . So we ha v e that P x ∼ D ( f ( x ) 6 = c ( x )) = 0 , (2) with probability at least 1 − δ ov er the training sample S , whose size remains p olynomial in 1 δ and n b y the prop osition assumptions. 15 R emark 19 . The assumption on in Corollary 18 is necessary to use the robust learning algorithm as a blac k b o x: in Section 4.2, we w ork under a well-behav ed class of distributions that includes the uniform distribution and sho w that, for long enough monotone conjunctions and small enough robustness parameter (with resp ect to the conjunction length), efficien t robust learning is possible. Ho w ev er, w e cannot exactly learn these monotone conjunctions. In the uniform distribution setting, the ρ -balls all ha ve the same probability mass and − 1 is essen tially sup erp olynomial in n . T o show the same result for R C ρ , where the hypothesis is constant in a ball, w e can use the exact same reasoning as in Corollary 18, except that w e need to show the analogue of Prop osition 17 for this setting. Prop osition 20. F or any pr ob ability me asur e µ on { 0 , 1 } n and for any c onc epts h, c , ther e exists > 0 such that if R C ρ ( h, c ) < then h and c agr e e on the supp ort of µ . Pr o of. Fix h, c, D and let = min x ∈ supp( µ ) µ ( { x } ). Supp ose there exists x ∗ ∈ supp( µ ) and z ∈ B ρ ( x ∗ ) suc h that c ( x ∗ ) 6 = h ( z ). Then R C ρ ( h, c ) = P x ∼ µ ( ∃ z ∈ B ρ ( x ) . c ( x ) 6 = h ( z )) ≥ . C Pro ofs from Section 3 Pr o of of The or em 5. First, if C is trivial, w e need at most one example to identify the target function. F or the other direction, supp ose that C is non-trivial. W e first start b y fixing an y learning algorithm and p olynomial sample complexit y function m . Let η = 1 2 ω (log n ) , 0 < δ < 1 2 , and note that for an y constant a > 0, lim n →∞ n a log(1 − η ) − 1 = 0 , and so any p olynomial in n is o (log(1 / (1 − η ))) − 1 . Then it is p ossible to c ho ose n 0 suc h that for all n ≥ n 0 , m ≤ log(1 /δ ) 2 n log(1 − η ) − 1 . (3) Since C is non-trivial, w e can choose concepts c 1 , c 2 ∈ C n and p oints x, x 0 ∈ { 0 , 1 } n suc h that c 1 and c 2 agree on x but disagree on x 0 . This implies that there exists a p oint z ∈ { 0 , 1 } n suc h that (i) c 1 ( z ) = c 2 ( z ) and (ii) it suffices to c hange only one bit in I := I c 1 ∪ I c 2 to cause c 1 to disagree on z and its p erturbation. Let D b e suc h that P x ∼ D ( x i = z i ) = ( 1 − η if i ∈ I 1 2 otherwise . Dra w a sample S ∼ D m and lab el it according to c ∼ U ( c 1 , c 2 ). Then, P S ∼ D m ( ∀ x ∈ S c 1 ( x ) = c 2 ( x )) ≥ (1 − η ) m | I | . (4) 16 Bounding the RHS b elo w by δ > 0, we get that, as long as m ≤ log(1 /δ ) | I | log (1 − η ) − 1 , (5) (4) holds with probability at least δ . But this is true as Equation (3) holds as well. How ever, if x = z , then it suffices to flip one bit of x to get x 0 suc h that c 1 ( x 0 ) 6 = c 2 ( x 0 ). Then, R E ρ ( c 1 , c 2 ) ≥ P x ∼ D ( x I = z I ) = (1 − η ) | I | . (6) The constraints on η and the fact that | I | ≤ n are sufficient to guarantee that the RHS is Ω(1). Let α > 0 b e a constan t suc h that R E ρ ( c 1 , c 2 ) ≥ α . W e can use the same reasoning as in Lemma 6 to argue that, for an y h ∈ { 0 , 1 } X , R E 1 ( c 1 , h ) + R E 1 ( c 2 , h ) ≥ R E 1 ( c 1 , c 2 ) . Finally , we can show that E c ∼ U ( c 1 ,c 2 ) E S ∼ D m R R 1 ( h, c ) ≥ αδ / 2 , hence there exists a target c with exp ected robust risk b ounded b elow by a constant 4 . D Pro ofs from Section 4 D.1 Pro of of Lemma 8 Pr o of. W e b egin by bounding the probabilit y that c 1 and c 2 agree on an i.i.d. sample of size m : P S ∼ D m ( ∀ x ∈ S · c 1 ( x ) = c 2 ( x ) = 0) = 1 − 1 2 l 2 m . (7) Bounding the RHS b elo w by 1 / 2, we get that, as long as m ≤ log(2) 2 log(2 l / (2 l − 1)) , (8) (7) holds with probabilit y at least 1 / 2. No w, if l = ω (log( n )), then for a constan t a > 0, lim n →∞ n a log 2 l 2 l − 1 = 0 , and so an y p olynomial in n is o log 2 l 2 l − 1 − 1 . 4 F or a more detailed reasoning, we refer the reader to the pro of of Theorem 9, where w e b ound the expected v alue E c,S R E ρ ( A ( S ) , c ) of the robust risk of a target c hosen at uniformly random and the h ypothesis outputted by a learning algorithm A on a sample S . 17 D.2 Pro of of Theorem 10 Pr o of. W e show that the algorithm A for P AC-learning monotone conjunctions (see [23], chapter 2) is a robust learner for an appropriate choice of sample size. W e start with the hypothesis h ( x ) = V i ∈ I h x i , where I h = [ n ]. F or each example x in S , w e remov e i from I h if c ( x ) = 1 and x i = 0. Let D b e a class of α -log-Lipschitz distributions. Let n ∈ N and D ∈ D n . Suppose moreov er that the target concept c is a conjunction of l v ariables. Fix ε, δ > 0. Let η = 1 1+ α , and note that b y Lemma 16, for any S ⊆ [ n ] and b S ∈ { 0 , 1 } S , w e ha ve that η | S | ≤ P x ∼ D ( x i = b ) ≤ (1 − η ) | S | . Claim 1. If m ≥ l log n − log δ η l +1 m then given a sample S ∼ D m , algorithm A outputs c with probability at least 1 − δ . Pr o of of Claim 1. Fix i ∈ { 1 , . . . , n } . Algorithm A eliminates i from the output hypothesis just in case there exists x ∈ S with x i = 0 and c ( x ) = 1. Now we hav e P x ∼ D ( x i = 0 ∧ c ( x ) = 1) ≥ η l +1 and hence P S ∼ D ( ∀ x ∈ S · i remains in I h ) ≤ (1 − η l +1 ) m ≤ e − mη l +1 = δ n . The claim no w follows from union b ound ov er i ∈ { 1 , . . . , n } . Claim 2. If l ≥ 8 η 2 log( 1 ε ) and ρ ≤ η l 2 then P x ∼ D ( ∃ z ∈ B ρ ( x ) · c ( z ) = 1) ≤ ε . Pr o of of Claim 2. Define a random v ariable Y = P i ∈ I c I ( x i = 1). W e simulate Y by the follo wing pro cess. Let X 1 , . . . , X l b e random v ariables taking v alue in { 0 , 1 } , and whic h may b e dep enden t. Let D i b e the marginal distribution on X i conditioned on X 1 , . . . , X i − 1 . This distribution is also α -log-Lipschitz b y Lemma 16, and hence, P X i ∼ D i ( X i = 1) ≤ 1 − η . (9) Since w e are interested in the random v ariable Y representing the n um b er of 1’s in X 1 , . . . , X l , w e define the random v ariables Z 1 , . . . , Z l as follo ws: Z k = k X i =1 X i ! − k (1 − η ) . The sequence Z 1 , . . . , Z l is a sup ermartingale with resp ect to X 1 , . . . , X l : E [ Z k +1 | X 1 , . . . , X k ] = E Z k + X 0 k +1 − (1 − η ) | X 0 1 , . . . , X 0 k = Z k + P X 0 k +1 = 1 | X 0 1 , . . . , X 0 k − (1 − η ) ≤ Z k . (b y (9)) No w, note that all Z k ’s satisfy | Z k +1 − Z k | ≤ 1, and that Z l = Y − l (1 − η ). W e can thus apply the 18 Azuma-Ho effding (A.H.) Inequalit y to get P ( Y ≥ l − ρ ) ≤ P Y ≥ l (1 − η ) + p 2 ln(1 /ε ) l = P Z l − Z 0 ≥ p 2 ln(1 /ε ) l ≤ exp − p 2 ln(1 /ε ) l 2 2 l ! (A.H.) = ε , where the first inequalit y holds from the given b ounds on l and ρ : l − ρ = (1 − η ) l + η l 2 + η l 2 − ρ ≥ (1 − η ) l + η l 2 (since ρ ≤ η l 2 ) ≥ (1 − η ) l + p 2 log(1 /ε ) l . (since l ≥ 8 η 2 log( 1 ε )) This completes the pro of of Claim 2. W e no w com bine Claims 1 and 2 to prov e the theorem. Define l 0 := max( 2 η log n, 8 η 2 log( 1 ε )). Define m := l log n − log δ η l 0 +1 m . Note that m is p olynomial in n , δ , ε . Let h denote the output of algorithm A giv en a sample S ∼ D m . W e consider t w o cases. If l ≤ l 0 then, by Claim 1, h = c (and hence the robust risk is 0) with probability at least 1 − δ . If l 0 ≤ l then, since ρ = log n , we hav e l ≥ 8 η 2 log( 1 ε ) and ρ ≤ η l 2 and so we can apply Claim 2. By Claim 2 w e hav e R E ρ ( h, c ) ≤ P x ∼ D ( ∃ z ∈ B ρ ( x ) · c ( z ) = 1) ≤ ε 19

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment