Reinforcement Learning for Temporal Logic Control Synthesis with Probabilistic Satisfaction Guarantees

Reinforcement Learning (RL) has emerged as an efficient method of choice for solving complex sequential decision making problems in automatic control, computer science, economics, and biology. In this paper we present a model-free RL algorithm to syn…

Authors: Mohammadhosein Hasanbeig, Yiannis Kantaros, Aless

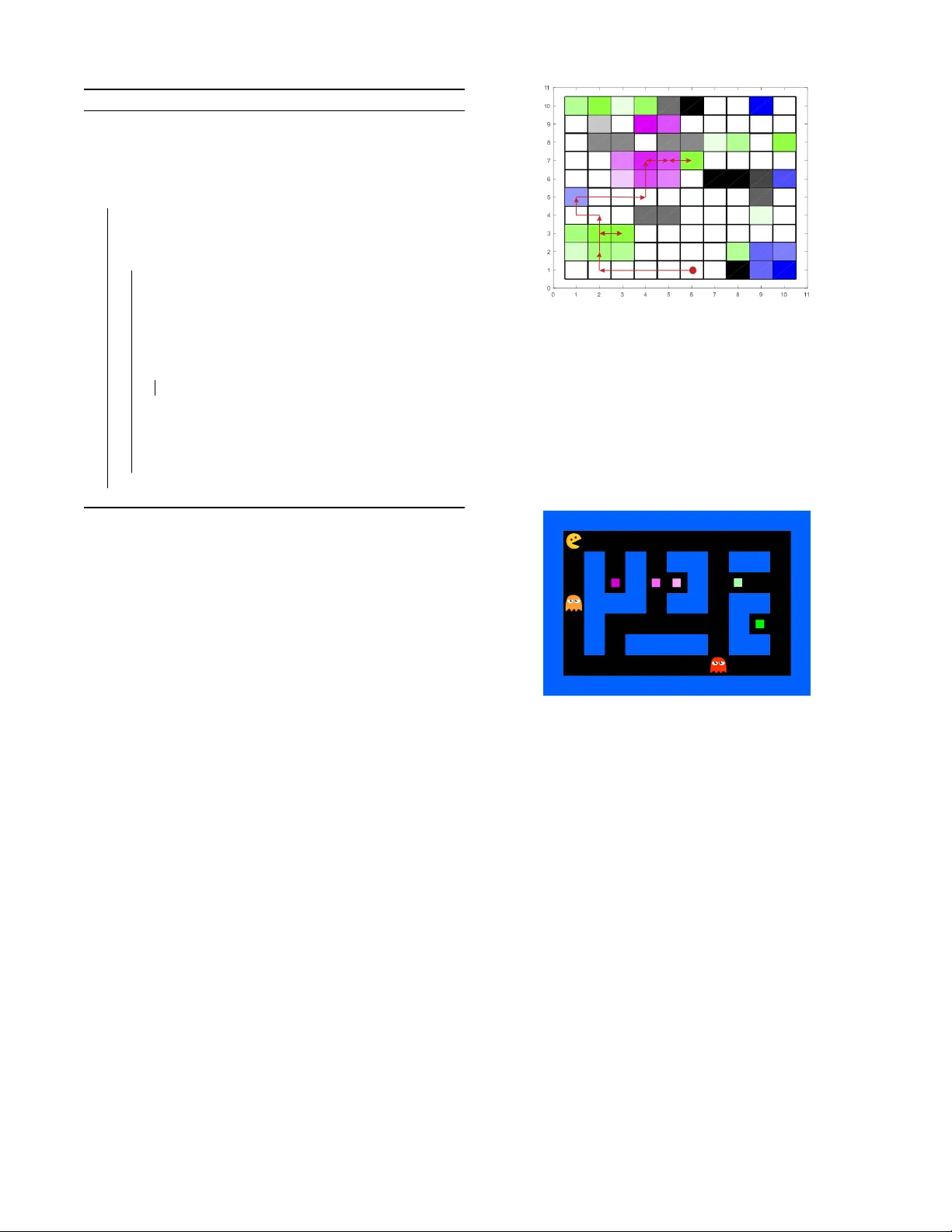

Reinf or cement Learning f or T emporal Logic Control Synthesis with Probabilistic Satisfaction Guarantees M. Hasanbeig, Y . Kantaros, A. Abate, D. Kroening, G. J. P appas, I. Lee Abstract — Reinfor cement Learning (RL) has emerged as an efficient method of choice for solving complex sequential decision making problems in automatic control, computer science, economics, and biology . In this paper we present a model-free RL algorithm to synthesize control policies that maximize the probability of satisfying high-level control objectives given as Linear T emporal Logic (L TL) formulas. Uncertainty is considered in the workspace properties, the structure of the w orkspace, and the agent actions, giving rise to a Probabilistically-Labeled Markov Decision Process (PL-MDP) with unknown graph structure and stochastic beha viour , which is even more general case than a fully unknown MDP . W e first translate the L TL specification into a Limit Deterministic Büchi A utomaton (LDBA), which is then used in an on-the-fly product with the PL-MDP . Thereafter , we define a synchronous reward function based on the acceptance condition of the LDBA. Finally , we sho w that the RL algorithm delivers a policy that maximizes the satisfaction probability asymptotically . W e pro- vide experimental results that showcase the efficiency of the proposed method. I . I N T RO D U C T I O N The use of temporal logic has been promoted as formal task specifications for control synthesis in Markov Decision Processes (MDPs) due to their expressiv e po wer , as they can handle a richer class of tasks than the classical point- to-point navigation. Such rich specifications include safety and liveness requirements, sequential tasks, coverage, and temporal ordering of different objectiv es [1]–[5]. Control synthesis for MDPs under Linear T emporal Logic (L TL) specifications has also been studied in [6]–[10]. Common in these works is that, in order to synthesize policies that maximize the satisfaction probability , exact knowledge of the MDP is required. Specifically , these methods construct a product MDP by composing the MDP that captures the underlying dynamics with a Deterministic Rabin Automaton (DRA) that represents the L TL specification. Then, given the product MDP , probabilistic model checking techniques are employed to design optimal control policies [11], [12]. In this paper , we address the problem of designing optimal control policies for MDPs with unknown stochastic beha viour so that the generated traces satisfy a given L TL specification with maximum probability . Unlike previous work, uncertainty is considered both in the environment properties and in the M. Hasanbeig, A. Abate, and D. Kroening are with the Department of Computer Science, University of Oxford, UK, and are supported by the ERC project 280053 (CPRO VER) and the H2020 FET OPEN 712689 SC 2 . { hosein.hasanbeig,aabate,kroening } @cs.ox.ac.uk. Y . Kantaros, G. J. Pappas, and I. Lee are with the School of Engineering and Applied Science (SEAS), Univ ersity of Pennsylvania, P A, USA, and are supported by the AFRL and D ARP A under Contract No. F A8750-18-C-0090 and the ARL RCT A under Contract No. W911NF-10-2-0016 { kantaros,pappasg,lee } @seas.upenn.edu. agent actions, provoking a Pr obabilistically-Labeled MDP (PL-MDP). This model further extend MDPs to provide a way to consider dynamic and uncertain en vironments. In order to solve this problem, we first conv ert the L TL formula into a Limit Deterministic Büchi Automaton (LDBA) [13]. It is known that this construction results in an exponential-sized automaton for L TL \ GU , and it results in nearly the same size as a DRA for the rest of L TL. L TL \ GU is a fragment of linear temporal logic with the restriction that no until operator occurs in the scope of an always operator . On the other hand, the DRA that are typically employed in relev ant work are doubly exponential in the size of the original L TL formula [14]. Furthermore, a Büchi automaton is semantically simpler than a Rabin automaton in terms of its acceptance conditions [10], [15], which makes our algorithm much easier to implement. Once the LDBA is generated from the given L TL property , we construct on-the-fly a product between the PL-MDP and the resulting LDBA and then define a synchr onous re war d function based on the acceptance condition of the Büchi automaton over the state-action pairs of the product. Using this algorithmic rew ard shaping procedure, a model-free RL algorithm is introduced, which is able to generate a polic y that returns the maximum expected reward. Finally , we show that maximizing the expected accumulated reward entails the maximization of the satisfaction probability . Related work – A model-based RL algorithm to design policies that maximize the satisfaction probability is proposed in [16], [17]. Specifically , [16] assumes that the giv en MDP model has unknown transition probabilities and builds a Probably Approximately Correct MDP (P A C MDP), which is composed with the DRA that expresses the L TL property . The overall goal is to calculate the finite-horizon ( T -step) value function for each state, such that the obtained value is within an error bound from the probability of satisfying the giv en L TL property . The P A C MDP is generated via an RL- like algorithm, then v alue iteration is applied to update state values. A similar model-based solution is proposed in [18]: this also hinges on approximating the transition probabilities, which limits the precision of the policy generation process. Unlike the problem that is considered in this paper, the work in [18] is limited to policies whose traces satisfy the property with probability one. Moreover , [16]–[18] require to learn all transition probabilities of the MDP . As a result, they need a significant amount of memory to store the learned model [19]. This specific issue is addressed in [20], which proposes an actor-critic method for L TL specification that requires the graph structure of the MDP , but not all transition probabilities. The structure of the MDP allo ws for the computation of Accepting Maximum End Components (AMECs) in the product MDP , while transition probabilities are generated only when needed by a simulator . By contrast, the proposed method does not require knowledge of the structure of the MDP and does not rely on computing AMECs of a product MDP . A model-free and AMEC-free RL algorithm for L TL planning is also proposed in [21]. Nevertheless, unlike our proposed method, all these cognate contributions rely on the L TL-to-DRA con version, and uncertainty is considered only in the agent actions, but not in the workspace properties. In [22] and [23] safety-critical settings in RL are addressed in which the agent has to deal with a heterogeneous set of MDPs in the context of cyber-ph ysical systems. [24] further employs DDL [25], a first-order multi-modal logic for specifying and proving properties of hybrid programs. The first use of LDBA for L TL-constrained policy synthesis in a model-free RL setup appears in [26], [27]. Specifically , [27] propose a hybrid neural network architecture combined with LDB As to handle MDPs with continuous state spaces. The work in [26] has been taken up more recently by [28], which has focused on model-free aspects of the algorithm and has employed a different LDB A structure and re ward, which introduce extra states in the product MDP . The authors also do not discuss the complexity of the automaton construction with respect to the size of the formula, but gi ven the fact that resulting automaton is not a generalised Büchi, it can be expected that the density of automaton acceptance condition is quite low , which might result in a state-space explosion, particularly if the L TL formula is complex. As we sho w in the proof for the counter example in the Appendix-E the authors indeed have overlook ed that our algorithm is episodic, and allows the discount factor to be equal to one. Unlike [26]– [28], in this work we consider uncertainty in the workspace properties by employing PL-MDPs. Summary of contributions – F irst , we propose a model- free RL algorithm to synthesize control policies for unknown PL-MDPs which maximizes the probability of satisfying L TL specifications. Second , we define a synchr onous re war d function and we show that maximizing the accumulated rew ard maximizes the satisfaction probability . Thir d , we con vert the L TL specification into an LDBA which, as a result, shrinks the state-space that needs to explored compared to relev ant L TL-to-DRA-based works in finite-state MDPs. Moreov er , unlike previous works, our proposed method does not require computation of AMECs of a product MDP , which av oids the quadratic time complexity of such a computation in the size of the product MDP [11], [12]. I I . P RO B L E M F O R M U L A T I O N Consider a robot that resides in a partitioned environment with a finite number of states. T o capture uncertainty in both the robot motion and the workspace properties, we model the interaction of the robot with the environment as a PL-MDP , which is defined as follows. Definition 2.1 (Pr obabilistically-Labeled MDP [9]): A PL-MDP is a tuple M = ( X , x 0 , A , P C , AP , P L ) , where X is a finite set of states; x 0 ∈ X is the initial state; A is a finite set of actions. W ith slight abuse of notation A ( x ) denotes the available actions at state x ∈ X ; P C : X × A × X → [0 , 1] is the transition probability function so that P C ( x, a, x 0 ) is the transition probability from state x ∈ X to state x 0 ∈ X via control action a ∈ A and P x 0 ∈S P C ( x, a, x 0 ) = 1 , for all a ∈ A ( x ) ; AP is a set of atomic propositions; and P L : X × 2 AP → [0 , 1] specifies the associated probability . Specifically , P L ( x, ` ) denotes the probability that ` ∈ 2 AP is observed at state x ∈ X , where P ` ∈ 2 AP P L ( x, ` ) = 1 , ∀ x ∈ X . The probabilistic map P L provides a means to model dynamic and uncertain en vironments. Hereafter , we assume that the PL-MDP M is fully observable, i.e., at any time/stage t the current state, denoted by x t , and the observations in state x t , denoted by ` t ∈ 2 AP , are known. At any stage T ≥ 0 we define the robot’ s past path as X T = x 0 x 1 . . . x T , the past sequence of observed labels as L T = ` 0 ` 1 . . . ` T , where ` t ∈ 2 AP and the past sequence of control actions A T = a 0 a 1 . . . a T − 1 , where a t ∈ A ( x t ) . These three sequences can be composed into a complete past run, defined as R T = x 0 ` 0 a 0 x 1 ` 1 a 1 . . . x T ` T . W e denote by X T , L T , and R T the set of all possible sequences X T , L T and R T , respectiv ely . The goal of the robot is accomplish a task expressed as an L TL formula. L TL is a formal language that comprises a set of atomic propositions AP , the Boolean operators, i.e., conjunction ∧ and negation ¬ , and two temporal operators, next and until ∪ . L TL formulas over a set AP can be constructed based on the following grammar: φ ::= true | π | φ 1 ∧ φ 2 | ¬ φ | φ | φ 1 ∪ φ 2 , where π ∈ AP . The other Boolean and temporal operators, e.g., always , have their standard syntax and meaning. An infinite word σ ov er the alphabet 2 AP is defined as an infinite sequence σ = π 0 π 1 π 2 · · · ∈ (2 AP ) ω , where ω denotes infinite repetition and π k ∈ 2 AP , ∀ k ∈ N . The language σ ∈ (2 AP ) ω | σ | = φ is defined as the set of words that satisfy the L TL formula φ , where | = ⊆ (2 AP ) ω × φ is the satisfaction relation [29]. In what follows, we define the probability that a stationary policy for M satisfies the assigned L TL specification. Specif- ically , a stationary policy ξ for M is defined as ξ = ξ 0 ξ 1 . . . , where ξ t : X × A → [0 , 1] . Gi ven a stationary policy ξ , the probability measure P ξ M , defined on the smallest σ - algebra over R ∞ , is the unique measure defined as P ξ M = Q T t =0 P C ( x t , a t , x t +1 ) P L ( x t , ` t ) ξ t ( x t , a t ) , where ξ t ( x t , a t ) denotes the probability that at time t the action a t will be selected giv en the current state x t [11], [30]. W e then define the probability of M satisfying φ under policy ξ as [11], [12] P ξ M ( φ ) = P ξ M ( R ∞ : L ∞ | = φ ) , (1) The problem we address in this paper is summarized as follows. Pr oblem 1: Giv en a PL-MDP M with unknown transition probabilities, unkno wn label mapping, unknown underlying graph structure, and a task specification captured by an L TL formula φ , synthesize a deterministic stationary control policy ξ ∗ that maximizes the probability of satisfying φ captured in (1), i.e., ξ ∗ = argmax ξ P ξ M ( φ ) . 1 I I I . A N E W L E A R N I N G - F O R - P L A N N I N G A L G O R I T H M In this section, we first discuss how to translate the L TL formula into an LDB A A (see Section III-A). Then, we define the product MDP P , constructed by composing the PL-MDP M and the LDBA A that expresses φ (see Section III-B). Next, we assign rewards to the product MDP transitions based on the accepting condition of the LDB A A . As we show later , this allows us to synthesize a policy µ ∗ for P that maximizes the probability of satisfying the acceptance conditions of the LDB A. The projection of the obtained policy µ ∗ ov er model M results in a policy ξ ∗ that solves Problem 1 (Section III-C). A. T ranslating LTL into an LDBA An L TL formula φ can be translated into an automaton, namely a finite-state machine that can express the set of words that satisfy φ . Con ventional probabilistic model checking methods translate L TL specifications into DRAs, which are then composed with the PL-MDP , giving rise to a product MDP . Nev ertheless, it is known that this con version results, in the worst case, in automata that are doubly exponential in the size of the original L TL formula [14]. By contrast, in this paper we propose to express the giv en L TL property as an LDB A, which results in a much more succinct automaton [13], [15]. This is the key to the reduction of the state-space that needs to be explor ed ; see also Section V. Before defining the LDBA, we first need to define the Generalized Büchi Automaton (GB A). Definition 3.1 (Generalized Büchi Automaton [11]): A GB A A = ( Q , q 0 , Σ , F , δ ) is a structure where Q is a finite set of states, q 0 ∈ Q is the initial state, Σ = 2 AP is a finite alphabet, F = F 1 , . . . , F f is the set of accepting conditions where F j ⊂ Q , 1 ≤ j ≤ f , and δ : Q × Σ → 2 Q is a transition relation. An infinite run ρ of A ov er an infinite word σ = π 0 π 1 π 2 · · · ∈ Σ ω , π k ∈ Σ = 2 AP ∀ k ∈ N , is an infinite sequence of states q k ∈ Q , i.e., ρ = q 0 q 1 . . . q k . . . , such that q k +1 ∈ δ ( q k , π k ) . The infinite run ρ is called accepting (and the respecti ve word σ is accepted by the GB A) if Inf ( ρ ) ∩ F j 6 = ∅ , ∀ j ∈ { 1 , . . . , f } , where Inf ( ρ ) is the set of states that are visited infinitely often by ρ . Definition 3.2 (Limit Deterministic Büchi Automaton [13]): A GBA A = ( Q , q 0 , Σ , F , δ ) is limit deterministic if Q can be partitioned into two disjoint sets Q = Q N ∪ Q D , so that (i) δ ( q, π ) ⊂ Q D and | δ ( q, π ) | = 1 , for every state q ∈ Q D and π ∈ Σ ; and (ii) for every F j ∈ F , it holds that F j ⊂ Q D and there are ε -transitions from Q N to Q D . An ε -transition allows the automaton to change its state without reading any specific input. In practice, the ε - transitions between Q N and Q D reflect the “guess” on reach- ing Q D : accordingly , if after an ε -transition the associated 1 The fact that the graph structure is unknown implies that we do not know which transition probabilities are equal to zero. As a result, relevant approaches that require the structure of the MDP , as e.g., [20] cannot be applied. labels in the accepting set of the automaton cannot be read, or if the accepting states cannot be visited, then the guess is deemed to be wrong, and the trace is disregarded and is not accepted by the automaton. Ho wev er , if the trace is accepting, then the trace will stay in Q D ev er after , i.e. Q D is in variant. Definition 3.3 (Non-accepting Sink Component): A non- accepting sink component in an LDB A A is a directed graph induced by a set of states Q sink ⊂ Q such that (1) is strongly connected, (2) does not include all accepting sets F j , j = 1 , ..., f , and (3) there exist no other strongly connected set Q 0 ⊂ Q , Q 0 6 = Q sink that Q sink ⊂ Q 0 . W e denote the union set of all non-accepting sink components as Q sinks . B. Product MDP Giv en the PL-MDP M and the LDB A A , we define the product MDP P = M × A as follows. Definition 3.4 (Pr oduct MDP): Giv en a PL-MDP M = ( X , x 0 , A , P C , AP , P L ) and an LDBA A = ( Q , q 0 , Σ , F , δ ) , we define the product MDP P = M × A as P = ( S , s 0 , A P , P P , F P ) , where (i) S = X × 2 AP × Q is the set of states, so that s = ( x, `, q ) ∈ S , x ∈ X , ` ∈ 2 AP , and q ∈ Q ; (ii) s 0 = ( x 0 , ` 0 , q 0 ) is the initial state; (iii) A P is the set of actions inherited from the MDP , so that A P ( s ) = A ( x ) , where s = ( x, `, q ) ; (iv) P P : S × A × S : [0 , 1] is the transition probability function, so that P P ([ x, `, q ] , a, [ x 0 , ` 0 , q 0 ]) = P C ( x, u, x 0 ) P L ( x 0 , ` 0 ) , (2) where [ x, `, q ] ∈ S , [ x 0 , ` 0 , q 0 ] ∈ S , a ∈ A ( x ) and q 0 = δ ( q, ` 0 ) ; (v) F P = { ( F P j ) , j = 1 , . . . , f } is the set of accepting states, where F P j = X × 2 AP × F j . In order to handle ε -transitions in the constructed LDBA we have to add the following modifications to the standard definition of the product MDP [15]. First, for every ε -transition to a state q 0 ∈ Q we add an action ε q 0 in the product MDP , i.e., A P ( s ) = A P ( s ) ∪ { ε q 0 , s 0 = [ x, ` 0 , q 0 ] , q 0 ∈ Q} . Second, the transition probabilities of ε -transitions are giv en by P P ( s, a, s 0 ) = ( 1 , if ( x = x 0 ) ∧ ( ` = ` 0 ) ∧ ( δ ( q, ε q 0 ) = q 0 ) 0 , otherwise , (3) where s = ( x, `, q ) and s 0 = ( x 0 , ` 0 , q 0 ) . Gi ven an y policy µ for P , we define an infinite run ρ µ P of P to be a n infinite sequence of states of P , i.e., ρ µ P = s 0 s 1 s 2 . . . , where P P ( s t , µ ( s t ) , s t +1 ) > 0 . By definition of the accepting condition of the LDBA A , an infinite run ρ µ P is accepting, i.e., µ satisfies φ with a non-zero probability (denoted by µ | = φ ), if Inf ( ρ µ P ) ∩ F P j 6 = ∅ , ∀ j ∈ { 1 , . . . , f } . In what follows, we design a synchronous reward function based on the accepting condition of the LDB A so that maximization of the expected accumulated reward implies maximization of the satisfaction probability . Specifically , we generate a control policy µ ∗ that maximizes the probability of (i) reaching the states of F P from s 0 and (ii) the probability that each accepting set F P j will be visited infinitely often. C. Construction of the Rewar d Function T o synthesize a policy that maximizes the probability of satisfying φ , we construct a synchronous reward function for the product MDP . The main idea is that (i) visiting a set F j , 1 ≤ j ≤ f yields a positiv e re ward r > 0 ; and (ii) revisiting the same set F j returns zero reward until all other sets F k , k 6 = j are also visited; (iii) the rest of the transitions hav e zero rewards. Intuitiv ely , this reward shaping strategy moti vates the agent to visit all accepting sets F j of the LDB A infinitely often, as required by the acceptance condition of the LDB A; see also Section IV. T o formally present the proposed reward shaping method, we need first to introduce the the accepting fr ontier set A which is initialized as the family set A = { F k } f k =1 . (4) This set is updated on-the-fly e very time a set F j is visited as A ← AF ( q , A ) where AF ( q, A ) is the accepting fr ontier function defined as follows. Definition 3.5 (Accepting F rontier Function): Giv en an LDB A A = ( Q , q 0 , Σ , F , δ ) , we define AF : Q × 2 Q → 2 Q as the accepting frontier function, which ex ecutes the following operation over any given set A ∈ 2 Q : AF ( q, A ) = A \ F j : ( q ∈ F j ) ∧ ( A 6 = F j ) : { F k } f k =1 \ F j : ( q ∈ F j ) ∧ ( A = F j ) . In words, giv en a state q ∈ F j and the set A , AF outputs a set containing the elements of A minus those elements that are common with F j (first case). Howe ver , if A = F j , then the output is the family set of all accepting sets of A minus those elements that are common with F j , resulting in a reset of A to (4) minus those elements that are common with F j (second case). Intuitively , A always contains those accepting sets that are needed to be visited at a given time and in this sense the reward function is synchronous with the LDB A accepting condition. Gi ven the accepting frontier set A , we define the follo wing rew ard function R ( s, a ) = r if q 0 ∈ A , s 0 = ( x 0 , ` 0 , q 0 ) , 0 otherwise . (5) In (5) , s 0 is the state of the product MDP that is reached from state s by taking action a , and r > 0 is an arbitrary positiv e reward. In this way the agent is guided to visit all accepting sets F j infinitely often and, consequently , satisfy the giv en L TL property . Remark 3.6: The initial and accepting components of the LDB A proposed in [13] (as used in this paper) are both deterministic. By Definition 3.2, the discussed LDB A is indeed a limit-deterministic automaton, howe ver notice that the obtained determinism within its initial part is stronger than that required in the definition of LDB A. Thanks to this feature of the LDB A structure, in our proposed algorithm there is no need to “explicitly build” the product MDP and to store all its states in memory . The automaton transitions can be executed on-the-fly , as the agent reads the labels of the MDP states. Gi ven P , we compute a stationary deterministic policy µ ∗ , that maximizes the expected accumulated return, i.e., µ ∗ ( s ) = arg max µ ∈D U µ ( s ) , (6) where D is the set of all stationary deterministic policies ov er S , and U µ ( s ) = E µ [ ∞ X n =0 γ n R ( s n , µ ( s n )) | s 0 = s ] , (7) where E µ [ · ] denotes the expected value given that the product MDP follows the policy µ [30], 0 ≤ γ ≤ 1 is the discount factor , and s 0 , ..., s n is the sequence of states generated by policy µ up to time step n , initialized at s 0 = s . Note that the optimal policy is stationary as shown in the following result. Theor em 3.7 ([30]): In any finite-state MDP , such as P , if there exists an optimal policy , then that policy is stationary and deterministic. In order to construct µ ∗ , we employ episodic Q-learning (QL), a model-free RL scheme described in Algorithm 1. 2 Specifically , Algorithm 1 requires as inputs (i) the LDB A A , (ii) the re ward function R defined in (5) , and (iii) the hyper-parameters of the learning algorithm. Observe that in Algorithm 1, we use an action-value function Q : S × A P → R to ev aluate µ instead of U µ ( s ) , since the MDP P is unknown. The action-value function Q ( s, a ) can be initialized arbitrarily . Note that U µ ( s ) = max a ∈A P Q ( s, a ) . Also, we define a function C : S × A P → N that counts the number of times that action a has been taken at state s . The policy µ is selected to be an -greedy policy , which means that with probability 1 − , the greedy action argmax a ∈A P Q ( s, a ) is taken, and with probability a random action a is selected. Every episode terminates when the current state of the automaton gets inside Q sinks (Definition 3.3) or when the iteration number in the episode reaches a certain threshold τ . Note that it holds that µ asymptotically conv erges to the optimal greedy policy µ ∗ = argmax a ∈A P Q ∗ ( s, a ) : where Q ∗ is the optimal Q function. Further , Q ( s, µ ∗ ( s )) = U µ ∗ ( s ) = V ∗ ( s ) , where V ∗ ( s ) is the optimal v alue function that could hav e been computed via Dynamic Programming (DP) if the MDP was fully known [19], [31], [32]. Projection of µ ∗ onto the state- space of the PL-MDP , yields the finite-memory policy ξ ∗ that solves Problem 1. I V . A NA LY S I S O F T H E A L G O R I T H M In this section, we show that the policy µ ∗ generated by Algorithm 1 maximizes (1) , i.e., the probability of satisfying the property φ . Furthermore, we show that, unlike existing approaches, our algorithm can produce the best av ailable 2 Note that any other off-the-shelf model-free RL algorithm can also be used within Algorithm 1, including any variant of the class of temporal difference learning algorithms [19]. Algorithm 1: RL for L TL objectiv e input : Reward function R , LDBA A , γ , τ output : µ ∗ 1 Initialize C ( s, a ) = 0 , Q ( s, a ) , ∀ s ∈ S , ∀ a ∈ A P 2 A = S f k =1 F k 3 episode - number := 0 , iter ation - number := 0 4 while Q is not con ver ged do 5 episode - number + + 6 s cur = s 0 7 = 1 / ( episode - number ) 8 while ( q 6∈ Q sinks ) ∧ ( iter ation - number < τ ) do 9 iter ation - number + + 10 Set a cur = argmax a ∈A P Q ( s, a ) with probability 1 − and set a cur as a random action in A P with probability 11 Execute a cur and observe s next = ( x next , ` next , q next ) , and R ( s cur , a cur ) 12 if R ( s cur , a cur ) > 0 then 13 A = AF ( q next , A ) , 14 C ( s cur , a cur ) + + 15 Q ( s cur , a cur ) = Q ( s cur , a cur ) + (1 /C ( s cur , a cur ))[ R ( s cur , a cur ) − Q ( s cur , a cur ) + γ max a 0 ( s next , a 0 ))] 16 s cur = s next 17 end 18 end policy if the property cannot be satisfied. T o prove these claims, we need to show the following results. All proofs are presented in the Appendix. First, we show that the accepting frontier set A is time-inv ariant. This is needed to ensure that the L TL formula is satisfied over the product MDP by a stationary policy . Pr oposition 4.1: For an L TL formula φ and its associated LDB A A = ( Q , q 0 , Σ , F , δ ) , the accepting frontier set A is time-in variant at each state of A . As stated earlier , since QL is prov ed to conv erge to the optimal Q-function [19], it can synthesize an optimal policy with respect to the gi ven re ward function. The following result sho ws that the optimal policy produced by Algorithm 1 satisfies the giv en L TL property . Theor em 4.2: Assume that there exists at least one de- terministic stationary policy in P whose traces satisfy the property φ with positi ve probability . Then the traces of the optimal policy µ ∗ defined in (6) satisfy φ with positive probability , as well. Next we show that µ ∗ and subsequently its projection ξ ∗ maximize the satisfaction probability . Theor em 4.3: If an L TL property φ is satisfiable by the PL-MDP M , then the optimal policy µ ∗ that maximizes the expected accumulated reward, as defined in (6) , maximizes the probability of satisfying φ , defined in (1), as well. Next, we show that if there does not exist a policy that satisfies the L TL property φ , Algorithm 1 will find the policy that is the closest one to property satisfaction. T o this end, we first introduce the notion of closeness to satisfaction . Definition 4.4 (Closeness to Satisfaction): Assume that two policies µ 1 and µ 2 do not satisfy the property φ . Consequently , there are accepting sets in the automaton Fig. 1: PL-MDP that models the interaction of the robot with the en vironment. The color of each region (square) corresponds to the probability that some e vent can be observed there. Specifically , gray , magenta, blue, and green mean that there is a non-zero probability of an obstacle ( obs ), a user ( user ), target 1 ( target1 ), and target 2 target2 . Higher intensity indicates a higher probability . The red trajectory represents a sample path of the robot with the optimal control strategy ξ ∗ for the first case study . The red dot is the initial location of the robot. Fig. 2: Initial condition in Pacman en vironment. The magenta square is labeled food1 and the green one food2 . The color intensity of each square corresponds to the probability of the food being observed. The state of being caught by a ghost is labeled ghost and the rest of the state space neutral. that have no intersection with runs of the induced Markov chains P µ 1 and P µ 2 . The policy µ 1 is closer to satisfying the property if runs of P µ 1 hav e more intersections with accepting sets of the automaton than runs of P µ 2 . Cor ollary 4.5: If there does not exist a policy in the PL- MDP M that satisfies the property φ , then proposed algorithm yields a policy that is closest to satisfying φ . V . E X P E R I M E N T S In this section we present three case studies, implemented on MA TLAB R2016a on a computer with an Intel Xeon CPU at 2.93 GHz and 4 GB RAM. In the first two experiments, the en vironment is represented as a 10 × 10 discrete grid world, as illustrated in Figure 1. The third case study is an adaptation of the well-known Atari game Pacman (Figure 2), which is initialized in a configuration that is quite hard for the agent to solve. 0 0.5 1 1.5 2 2.5 10 5 0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 (a) Case Study I 0 1 2 3 4 5 6 7 8 10 5 0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 (b) Case Study II 0 0.2 0.4 0.6 0.8 1 1.2 1.4 1.6 1.8 2 10 5 0.36 0.38 0.4 0.42 0.44 0.46 0.48 0.5 (c) Case Study III Fig. 3: Illustration of the ev olution of U ¯ µ ( s 0 ) with respect to episodes. ¯ µ denotes the -greedy policy which con ver ges to the optimal greedy policy µ ∗ . V ideos of Pacman winning the game can be found in [33] The first case study pertains to a temporal logic planning problem in a dynamic and unknown en vironment with AMECs, while the second one does not admit AMECs. Note that the majority of existing algorithms fail to provide a control policy when AMECs do not exist [8], [20], [34], or result in control policies without satisfaction guarantees [18]. The L TL formula considered in the first two case studies is the following: φ 1 = ♦ ( target1 ) ∧ ♦ ( target2 ) ∧ ♦ ( user ) ∧ ( ¬ user ∪ target2 ) ∧ ( ¬ obs ) . (8) In words, this L TL formula requires the robot to (i) even- tually visit target 1 (expressed as ♦ target1 ); (ii) visit target 2 infinitely often and take a picture of it ( ♦ target2 ); (iii) visit a user infinitely often where, say , the collected pictures are uploaded (captured by ♦ user ); (i v) avoid visiting the user until a picture of target 2 has been taken; and (v) always av oid obstacles (captured by ( ¬ obs). The L TL formula (8) can be expressed as a DRA with 11 states. On the other hand, a corresponding LDB A has 5 states (fewer , as expected), which results in a significant reduction of the state space that needs to be explored. The interaction of the robot with the en vironment is modeled by a PL-MDP M with 100 states and 10 actions per state. The actions space is { Up , R ight , Down , L eft , None } × { T ake pictur e , Do not take pictur e } . W e assume that the targets and the user are dynamic, i.e., their location in the en vironment v aries probabilistically . Specifically , their presence in a gi ven region x ∈ X is determined by the unknown function P L from Definition 2.1 (Figure 1). The L TL formula specifying the task for Pacman (third case study) is: φ 2 = ♦ [( food1 ∧ ♦ food2 ) ∨ ( food2 ∧ ♦ food1 )] ∧ ( ¬ ghost ) . (9) Intuiti vely , the agent is tasked with (i) e ventually eating food1 and then food2 (or vice versa), while (ii) av oiding any contact with the ghosts. This L TL formula corresponds to a DRA with 5 states and to an LDBA with 4 states. The agent can ex ecute 5 actions per state { Up , R ight , Down , L eft , None } and if the agent hits a wall by taking an action it remains in the previous location. The ghosts dynamics are stochastic: with a probability p g = 0 . 9 each ghost chases the Pacman (often referred to as “chase mode”), and with its complement it ex ecutes a random action (“scatter mode”). In the first case study , we assume that there is no uncertainty in the robot actions. In this case, it can be verified that AMECs exist. Figure 3(a) illustrates the evolution of U ¯ µ ( s 0 ) ov er 260000 episodes, where ¯ µ denotes the -greedy policy . The optimal policy was constructed in approximately 30 minutes. A sample path of the robot with the projection of optimal control strategy µ ∗ onto X , i.e. policy ξ ∗ , is gi ven in Figure 1 (red path). In the second case study , we assume that the robot is equipped with a noisy controller and, therefore, it can ex ecute the desired action with probability 0 . 8 , whereas a random action among the other av ailable ones is taken with a probability of 0 . 2 . In this case, it can be verified that AMECs do not exist. Intuitiv ely , the reason why AMECs do not exist is that there is alw ays a non-zero probability with which the robot will hit an obstacle while it travels between the access point and target 2 and, therefore, it will violate φ . Figure 3(b) shows the ev olution of U ¯ µ ( s 0 ) ov er 800000 episodes for the -greedy policy . The optimal policy was synthesized in approximately 2 hours. In the third experiment, there is no uncertainty in the ex ecution of actions, namely the motion of the Pacman agent is deterministic. Figure 3(c) shows the ev olution of U ¯ µ ( s 0 ) ov er 186000 episodes where ¯ µ denotes the -greedy policy . On the other hand, the use of standard Q-learning (without L TL guidance) would require either to construct a history-dependent rew ard for the PL-MDP M as a proxy for the considered L TL property , which is very challenging for complex L TL formulas, or to perform exhausti ve state-space search with static rewards, which is e vidently quite wasteful and failed to generate an optimal policy in our experiments. Note that gi ven the policy ξ ∗ for the PL-MDP , probabilistic model checkers, such as PRISM [35], or standard Dynamic Programming methods can be employed to compute the probability of satisfying φ . For instance, for the first case study , the synthesized policy satisfies φ with probability 1 , while for the second case study , the satisfaction probability is 0 , since AMECs do not exist. For the same reason, even if the transition probabilities of the PL-MDP are known, PRISM could not generate a policy for the second case study . Nev ertheless, the proposed algorithm can synthesize the closest-to-satisfaction policy , as shown in Corollary 4.5. V I . C O N C L U S I O N S In this paper we have proposed a model-free reinforcement learning (RL) algorithm to synthesize control policies that maximize the probability of satisfying high-le vel control objectiv es captured by L TL formulas. The interaction of the agent with the environment has been captured by an unknown probabilistically-labeled Markov Decision Process (MDP). W e have shown that the proposed RL algorithm produces a policy that maximizes the satisfaction probability . W e hav e also shown that ev en if the assigned specification cannot be satisfied, the proposed algorithm synthesizes the best possible policy . W e have provided e vidence via numerical e xperiments on the efficienc y of the proposed method. R E F E R E N C E S [1] G. E. Fainekos, H. Kress-Gazit, and G. J. Pappas, “Hybrid controllers for path planning: A temporal logic approach, ” in CDC and ECC , December 2005, pp. 4885–4890. [2] H. Kress-Gazit, G. E. Fainekos, and G. J. Pappas, “T emporal-logic- based reactiv e mission and motion planning, ” IEEE Tr ansactions on Robotics , vol. 25, no. 6, pp. 1370–1381, 2009. [3] A. Bhatia, L. E. Kavraki, and M. Y . V ardi, “Sampling-based motion planning with temporal goals, ” in ICRA , 2010, pp. 2689–2696. [4] Y . Kantaros and M. M. Zavlanos, “Sampling-based optimal control synthesis for multi-robot systems under global temporal tasks, ” IEEE Tr ansactions on Automatic Contr ol , 2018. [Online]. A vailable: DOI:10.1109/T A C.2018.2853558 [5] ——, “Distributed intermittent connectivity control of mobile robot networks, ” IEEE Tr ansactions on Automatic Contr ol , vol. 62, no. 7, pp. 3109–3121, 2017. [6] X. C. Ding, S. L. Smith, C. Belta, and D. Rus, “MDP optimal control under temporal logic constraints, ” in CDC and ECC , 2011, pp. 532– 538. [7] E. M. W olff, U. T opcu, and R. M. Murray , “Robust control of uncertain Markov decision processes with temporal logic specifications, ” in CDC , 2012, pp. 3372–3379. [8] X. Ding, S. L. Smith, C. Belta, and D. Rus, “Optimal control of Markov decision processes with linear temporal logic constraints, ” IEEE Tr ansactions on Automatic Control , vol. 59, no. 5, pp. 1244– 1257, 2014. [9] M. Guo and M. M. Zavlanos, “Probabilistic motion planning under temporal tasks and soft constraints, ” IEEE Tr ansactions on Automatic Contr ol , 2018. [10] I. Tkache v , A. Mereacre, J.-P . Katoen, and A. Abate, “Quantitative model-checking of controlled discrete-time Markov processes, ” Infor- mation and Computation , vol. 253, pp. 1–35, 2017. [11] C. Baier and J.-P . Katoen, Principles of model checking . MIT Press, 2008. [12] E. M. Clarke, O. Grumberg, D. Kroening, D. Peled, and H. V eith, Model Checking , 2nd ed. MIT Press, 2018. [13] S. Sickert, J. Esparza, S. Jaax, and J. K ˇ retínsk ` y, “Limit-deterministic Büchi automata for linear temporal logic, ” in CA V . Springer , 2016, pp. 312–332. [14] R. Alur and S. La T orre, “Deterministic generators and games for L TL fragments, ” TOCL , vol. 5, no. 1, pp. 1–25, 2004. [15] S. Sickert and J. K ˇ retínsk ` y, “MoChiB A: Probabilistic L TL model checking using limit-deterministic Büchi automata, ” in ATV A . Springer, 2016, pp. 130–137. [16] J. Fu and U. T opcu, “Probably approximately correct MDP learning and control with temporal logic constraints, ” in Robotics: Science and Systems X , 2014. [17] T . Brázdil, K. Chatterjee, M. Chmelík, V . Forejt, J. K ˇ retínsk ` y, M. Kwiatko wska, D. Parker , and M. Ujma, “V erification of Markov decision processes using learning algorithms, ” in A TV A . Springer, 2014, pp. 98–114. [18] D. Sadigh, E. S. Kim, S. Coogan, S. S. Sastry , and S. A. Seshia, “ A learning based approach to control synthesis of Markov decision processes for linear temporal logic specifications, ” in CDC . IEEE, 2014, pp. 1091–1096. [19] R. S. Sutton and A. G. Barto, Reinforcement learning: An introduction . MIT press Cambridge, 1998, vol. 1. [20] J. W ang, X. Ding, M. Lahijanian, I. C. Paschalidis, and C. A. Belta, “T emporal logic motion control using actor–critic methods, ” The International Journal of Robotics Research , vol. 34, no. 10, pp. 1329– 1344, 2015. [21] Q. Gao, D. Hajinezhad, Y . Zhang, Y . Kantaros, and M. M. Zavlanos, “Reduced variance deep reinforcement learning with temporal logic specifications, ” 2019 (to appear). [22] N. Fulton and A. Platzer, “V erifiably safe off-model reinforcement learning, ” arXiv preprint , 2019. [23] N. Fulton, “V erifiably safe autonomy for cyber -physical systems, ” Ph.D. dissertation, Carnegie Mellon University Pittsburgh, P A, 2018. [24] N. Fulton and A. Platzer, “Safe reinforcement learning via formal methods: T oward safe control through proof and learning, ” in Thirty- Second AAAI Confer ence on Artificial Intelligence , 2018. [25] A. Platzer, “Differential dynamic logic for hybrid systems, ” Journal of Automated Reasoning , vol. 41, no. 2, pp. 143–189, 2008. [26] M. Hasanbeig, A. Abate, and D. Kroening, “Logically-constrained reinforcement learning, ” arXiv preprint , 2018. [27] ——, “Logically-constrained neural fitted Q-iteration, ” in AAMAS , 2019, pp. 2012–2014. [28] E. M. Hahn, M. Perez, S. Schewe, F . Somenzi, A. Tri vedi, and D. W ojtczak, “Omega-regular objectiv es in model-free reinforcement learning, ” arXiv preprint , 2018. [29] A. Pnueli, “The temporal logic of programs, ” in F oundations of Computer Science . IEEE, 1977, pp. 46–57. [30] M. L. Puterman, Markov decision pr ocesses: Discr ete stochastic dynamic pr ogramming . John Wile y & Sons, 2014. [31] A. Abate, M. Prandini, J. L ygeros, and S. Sastry , “Probabilistic reachability and safety for controlled discrete time stochastic hybrid systems, ” Automatica , vol. 44, no. 11, pp. 2724–2734, 2008. [32] A. Abate, J.-P . Katoen, J. L ygeros, and M. Prandini, “ Approximate model checking of stochastic hybrid systems, ” European Journal of Contr ol , vol. 16, no. 6, pp. 624–641, 2010. [33] https://www .cs.ox.ac.uk/conferences/LCRL/complementarymaterials/ Pacman. [34] J. Fu and U. T opcu, “Probably approximately correct MDP learn- ing and control with temporal logic constraints, ” arXiv preprint arXiv:1404.7073 , 2014. [35] M. Kwiatko wska, G. Norman, and D. Parker , “PRISM 4.0: V erification of probabilistic real-time systems, ” in CA V . Springer, 2011, pp. 585– 591. [36] R. Durrett, Essentials of stochastic pr ocesses . Springer, 1999, vol. 1. [37] V . Forejt, M. Kwiatkowska, and D. Parker , “Pareto curves for probabilistic model checking, ” in A TV A . Springer, 2012, pp. 317–332. [38] E. A. Feinberg and J. Fei, “ An inequality for variances of the discounted rew ards, ” Journal of Applied Pr obability , vol. 46, no. 4, pp. 1209–1212, 2009. A P P E N D I X Definition 1.1: Giv en an L TL property φ and a set G of G-subformulas, i.e., formulas in the form ( · ) , we define φ [ G ] to be the resulting formula when we substitute true for e very G-subformula in G and ¬ true for other G-subformulas of φ . A. Proof of Pr oposition 4.1 Let G = { ζ 1 , ..., ζ f } be the set of all G-subformulas of φ . Since elements of G are subformulas of φ we can assume an ordering ov er G so that if ζ i is a subformula of ζ j then j > i . The accepting component of LDBA Q D is a product of f DB As { D 1 , ...., D f } (called G-monitors) such that each D i = ( Q i , q i 0 , Σ , F i , δ i ) expresses ζ i [ G ] where Q i is the state space of the i -th G-monitor , Σ = 2 AP , and δ i : Q i × Σ → Q i [13]. Note that ζ i [ G ] has no G- subformulas. The states of the G-monitor D i are pairs of formulas where at each state, the first checks if the run satisfies ζ i [ G ] , while the second puts the next G-subformula in the ordering of G on hold. Howev er , all the previous G-subformulas ha ve been checked already and is replaced by tr ue in ζ i [ G ] . The product of the G-monitors is a deterministic generalized Büchi automaton: A D = ( Q D , q D 0 , Σ , F , δ ) , where Q D = Q 1 × ... × Q f , Σ = 2 AP , F = {F 1 , ..., F f } , and δ = δ 1 × ... × δ f . As shown in [13], while a word w is being read by the accepting component of the LDB A, the set of G-subformulas that hold “monotonically” expands. If w ∈ σ ∈ (2 AP ) ω | σ | = φ , then ev entually all G-subformulas become true. Assume that the current state of the automaton is q D = ( q 1 , ..., q i , ..., q f ) and the automaton is checking whether ζ i [ G ] is satisfied or not, assuming that ζ i − 1 is already tr ue , while putting ζ i +1 [ G ] on hold. At this point, the accepting frontier set is A = {F i , F i +1 , ..., F f } . Also assume the automaton returns to q D but A 6 = {F i , F i +1 , ..., F f } then at least one accepting set F j , j > i has been removed from A (Note that an accepting set F k , k < i cannot be added since the set of satisfied G-subformulas monotonically expands). This essentially means that ζ j [ G ] is already checked while ζ i [ G ] is not checked yet, making ζ j a subformula of ζ i . This violates the ordering of G and hence the assumption of A being time-variant is not correct. B. Proof of Theor em 4.2 W e prove this result by contradiction. Consider an y policy µ whose traces satisfy φ with positiv e probability . Policy µ induces a Markov chain P µ when it is applied over the MDP P . This Markov chain comprises a disjoint union between a set of transient states T µ and h sets of irreducible recurrent classes R i µ , i = 1 , ..., h [36], namely: P µ = T µ t R 1 µ t ... t R h µ . From the accepting condition of the LDBA, traces of policy µ satisfy φ with positive probability if and only if ∃R a µ s.t. ∀ j ∈ { 1 , ..., f } , F P j ∩ R a µ 6 = ∅ . The recurrent class R a µ is called an accepting recurrent class. Note that if all h recurrent classes are accepting then traces of policy µ satisfy φ with probability one. By construction of the rew ard function (5) the agent receiv es a positiv e rew ard r ev er after it has reached an accepting recurrent class as it keeps visiting all the accepting sets F j infinitely often. There are two other possibilities concerning the remaining recurrent classes that are not accepting. A non-accepting recurrent class, name it R n µ , either (i) has no intersection with any accepting set F P j , or (ii) or has intersection with some of the accepting sets but not all of them. In case (i), the agent does not visit any accepting set in the recurrent class and the likelihood of visiting accepting sets within the transient states T µ is zero since Q D is in variant. In case (ii), the agent is able to visit some accepting sets but not all of them. This means that there exist always at least one accepting set F P j that has no intersection with R n µ and after a finite number of times, no positi ve re ward can be obtained, and the re-initialization of A in Definition 3.5 will nev er happen. By (7) , in both cases, for any arbitrary r > 0 , there always exists a γ such that the expected re ward of a trace reaching an accepting recurrent class such as R a µ with infinite number of positiv e rew ards, is higher than the expected re ward of any other trace. Next, assume that the traces of optimal policy µ ∗ , defined in (6) , do not satisfy the property φ . In other words, ∀R i µ ∗ , ∃ j ∈ { 1 , ..., f } , F P j ∩ R i µ ∗ = ∅ and all of the recurrent classes are non-accepting. As discussed in cases (i) and (ii) above, the accepting policy µ has a higher expected rew ard than the optimal policy µ ∗ due to expected infinite number of positiv e rewards in policy µ . Howe ver , this contradicts the optimality of µ ∗ in (6) , completing the proof. C. Proof of Theor em 4.3 W e first re vie w how the satisfaction probability is calculated traditionally when the MDP is fully known and then we show that the proposed algorithm con ver gence is the same. Normally when the MDP graph and transition probabilities are known, the probability of property satisfaction is often calculated via DP-based methods such as standard value iteration over the product MDP P [11]. This allows to conv ert the satisfaction problem into a reachability problem. The goal in this reachability problem is to find the maximum (or minimum) probability of reaching AMECs . The value function V : S → [0 , 1] in value iteration is then initialized to 0 for non-accepting maximum end components and to 1 for the rest of the MDP . Once value iteration conv erges then at any gi ven state s ∈ S the optimal policy µ ∗ : S → A P is produced by µ ∗ ( s ) = argmax a P s 0 ∈S P ( s, a, s 0 ) V ∗ ( s 0 ) , where V ∗ is the con ver ged value function, representing the maximum probability of satisfying the property at state s , i.e. U µ ∗ ( s ) in our setup. The ke y to compare standard model-checking methods to our method is reduction of value iteration to basic form . More specifically , quantitative model-checking over an MDP with a reachability predicate can be con verted to a model- checking problem with an equiv alent re ward predicate which is called the basic form. This reduction is done by adding a one-off (or sometimes called terminal) reward of 1 upon reaching AMECs [37]. Once this reduction is done, Bellman operation is applied over the v alue function (which represents the satisfaction probability) and policy µ ∗ maximizes the probability of satisfying the property . In the proposed method, when an AMEC is reached, all of the automaton accepting sets hav e surely been visited by policy µ ∗ and an infinite number of positiv e rew ards r > 0 will be giv en to the agent as shown in Theorem 4.2. There are two natural ways to define the total discounted rew ards [38]: (i) to interpret discounting as the coefficient in front of the re ward; and (ii) to define the total discounted rew ards as a terminal reward after which no rew ard is giv en and treat the update rule as if it is undiscounted. It is well-known that the expected total discounted rewards corresponding to these methods are the same; see, e.g., [38]. Therefore, without loss of generality , giv en any discount factor γ , and any positiv e reward component r , the expected discounted rew ard for the discounted case (the proposed algorithm) is c times the undiscounted case (value iteration) where c is a positive constant. This concludes that maximizing one is equiv alent to maximizing the other . D. Proof of Cor ollary 4.5 Assume that there exists no policy in M whose traces can satisfy the property φ . Construct the induced Markov chain P µ for any arbitrary policy µ and its associated set of transient states T µ and its h sets of irreducible recurrent classes R i µ : P µ = T µ t R 1 µ t . . . t R h µ . By assumption, policy µ cannot satisfy the property and thus ∀R i µ , ∃ j ∈ { 1 , ..., f } , F P j ∩ R i µ = ∅ . Follo wing the same logic as in the proof of Theorem 4.2, after a limited number of times no positiv e reward is gi ven to the agent. Ho wev er , by the con ver gence guarantees of QL, Algorithm 1 will generate a policy with the highest expected accumulated reward. By construction of the re ward function in (5) , this policy has the highest number of intersections with accepting sets. E. Counter-example W e would like to emphasise that in this work and [26] 0 ≤ γ ≤ 1 due to the fact the algorithm that we proposed is “episodic” and thus, covers the un-discounted case as well. This has been unfortunately overlooked in [28]. In the follo wing we examine the general cases of discounted and un- discounted learning and we sho w that our algorithm, which is episodic, is able to output the correct action for the example provided in [28] (Fig. 4). For the sake of generality , we have parameterised the probabilities associated with action right and left with 1 − ν and ν , respectively . Recall that for a policy µ : S → A on an MDP M , and giv en a reward function R , the expected discounted re ward at state s by taking action a is defined as [19]: U µ ( s, a ) = E µ [ ∞ X n =0 γ n R ( s n , µ ( s n )) | s 0 = s, a 0 = a ] , (10) where E µ [ · ] denotes the expected v alue by following policy µ , and s 1 , a 1 , ..., s n , a n is the sequence of state-action pairs generated by policy Pol up to time step n . W e would like to show that for some γ ∈ [0 , 1] , U µ ( s 0 , left ) > U µ ( s 0 , right ) . From (10) , at state s 0 , the expected return for each action is: U µ ( s 0 , right ) = (1 − ν )[ r + γ r + γ 2 r + ... ] U µ ( s 0 , left ) = γ 2 r + γ 5 r + γ 8 r + ... (11) Notice that µ has no effect on the expected return after the agent chose to go right or left as there is only one action available in subsequent states. Let us first consider U µ ( s 0 , right ) . The RHS is a geometric series with the initial term (1 − ν ) r and ratio of γ . Thus, U µ ( s 0 , right ) = (1 − ν ) r 1 − γ n 1 − γ . (12) The e xpected return U µ ( s 0 , left ) is also a geometric series such that: U µ ( s 0 , left ) = γ 2 r 1 − γ 3 n 1 − γ 3 . (13) Consider two cases (1) 0 ≤ γ < 1 , and (2) γ = 1 . In the first case 0 ≤ γ < 1 , as n → ∞ , γ n → 0 and γ 3 n → 0 and therefore, the following inequality can be solved for γ : γ 2 r 1 − γ 3 > (1 − ν ) r 1 − γ − → γ 2 1 + γ + γ 2 > 1 − ν − → γ < ( − p 1 /ν 2 + 2 /ν − 3 − 1) ν + 1 2 ν , or γ > ( p 1 /ν 2 + 2 /ν − 3 − 1) ν + 1 2 ν . (14) Thus, for some ν ∈ [0 , 1] , the discounted case 0 ≤ γ < 1 is suf ficient if γ > ( p 1 /ν 2 + 2 /ν − 3 − 1) ν + 1 / 2 ν . Howe ver , it is possible that for some ν ∈ [0 , 1] both conditions push γ to be outside of its range of 0 ≤ γ < 1 in the first case. Therefore, in the learning algorithm γ needs to be equal to 1 , which brings us to the second case, that is allo wed in our work thanks to the episodic nature of our algorithm. Note that when γ = 1 we cannot deriv e (14) since lim n →∞ γ n = lim n →∞ γ 3 n = 1 , and also 1 − γ = 0 cannot be cancelled from both sides of the inequality . Further to this, (12) and (13) become undefined when γ = 1 . From (11) though, we kno w with γ = 1 , the summations go to infinity as n → ∞ . The question is, can we show that U µ ( s 0 , left ) > U µ ( s 0 , right ) . Recall that the conv ergence of QL is asymptotic and if we can sho w that U µ ( s 0 , left ) > U µ ( s 0 , right ) after a number of episodes, then essentially our algorithm can output the correct result and will choose action left once QL has con verged. s 0 { u } s 1 { p } s 2 { u } s 3 { u } s 4 { u } s 5 { p } 1 − ν ν a : 1 a : 1 left : 1 a : 1 a : 1 a : 1 right Fig. 4: Example Product MDP with AP = { p, u } with φ = ♦ p T o prov e this claim let us consider the following limit as we push γ towards 1 : lim γ → 1 U µ ( s 0 , left ) U µ ( s 0 , right ) = lim γ → 1 γ 2 r 1 − γ 3 n 1 − γ 3 (1 − ν ) r 1 − γ n 1 − γ = lim γ → 1 γ 2 A r (1 − γ n )(1 + γ n + γ 2 n ) (1 − γ )(1 + γ + γ 2 ) (1 − ν ) A r 1 − γ n 1 − γ = γ 2 1 − ν (15) In case when ν = 0 , 1 − ν = 1 then the limit is 1 , namely the algorithm is indifferent between choosing left or right . This matches with the MDP as well since going to either direction does not change the optimality of the action when 1 − ν = 1 . Howe ver , if 0 < ν ≤ 1 then the limit is always greater than one, meaning that the expected return for taking left is greater than taking right after some finite number of episodes.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment