Towards Safe Machine Learning for CPS: Infer Uncertainty from Training Data

Machine learning (ML) techniques are increasingly applied to decision-making and control problems in Cyber-Physical Systems among which many are safety-critical, e.g., chemical plants, robotics, autonomous vehicles. Despite the significant benefits b…

Authors: Xiaozhe Gu, Arvind Easwaran

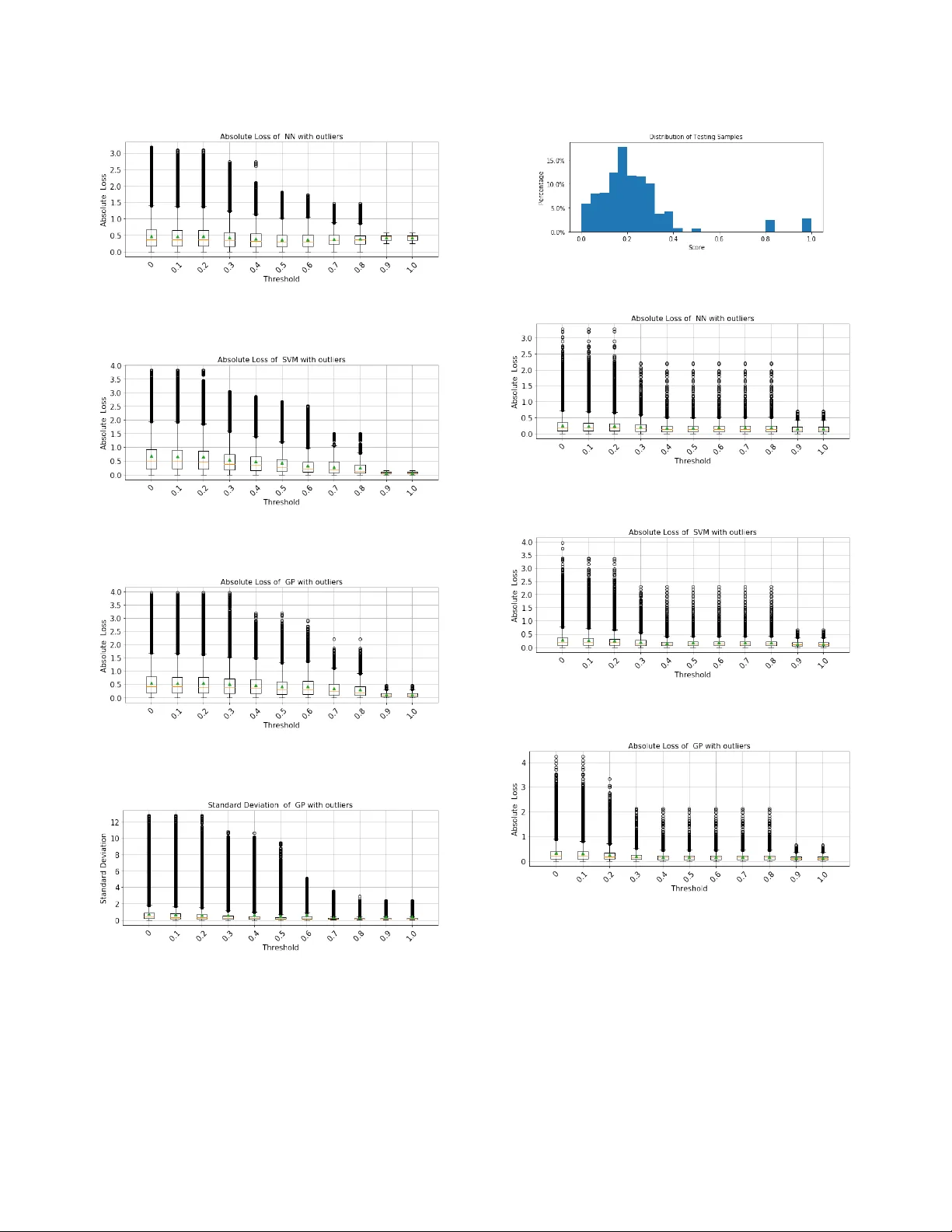

Xiaozhe Gu
Energy Research Institute @ Nanyang Technological University
XZGU@ntu.edu.sg

Arvind Easwaran
School of Computer Science and Engineering, Nanyang Technological University
arvinde@ntu.edu.sg

ABSTRACT

Machine learning (ML) techniques are increasingly applied to decision-making and control problems in Cyber-Physical Systems, many of which are safety-critical, e.g., chemical plants, robotics, autonomous vehicles. Despite the significant benefits brought by ML techniques, they also raise additional safety issues because 1) the most expressive and powerful ML models are not transparent and behave as a black box and 2) the training data, which plays a crucial role in ML safety, is usually incomplete. An important technique to achieve safety for ML models is “Safe Fail”, i.e., a model selects a reject option and applies a backup solution, for example a traditional controller or a human operator, when it has low confidence in a prediction. Data-driven models produced by ML algorithms learn from training data, and hence they are only as good as the examples they have learnt. As pointed out in [17], ML models work well in the “training space” (i.e., feature space with sufficient training data), but they cannot extrapolate beyond the training space. As observed in many previous studies, a feature space that lacks training data generally has a much higher error rate than one that contains sufficient training samples [31]. Therefore, it is essential to identify the training space and avoid extrapolating beyond it. In this paper, we propose an efficient Feature Space Partitioning Tree (FSPT) to address this problem. Using experiments, we also show that a strong relationship exists between model performance and FSPT score.
CCS CONCEPTS
• General and reference → Reliability; • Computing methodologies → Classification and regression trees;

KEYWORDS
Machine Learning Safety, Safe Fail

ACM Reference Format:
Xiaozhe Gu and Arvind Easwaran. 2019. Towards Safe Machine Learning for CPS: Infer Uncertainty from Training Data. In 10th ACM/IEEE International Conference on Cyber-Physical Systems (with CPS-IoT Week 2019) (ICCPS ’19), April 16–18, 2019, Montreal, QC, Canada. ACM, New York, NY, USA, 10 pages. https://doi.org/10.1145/3302509.3311038

© 2019 Copyright held by the owner/author(s). Publication rights licensed to ACM. ACM ISBN 978-1-4503-6285-6/19/04.

1 INTRODUCTION

Cyber-physical systems (CPS) are the new generation of engineered systems that continually interact with the physical world and human operators. Sensors, computational processes, and physical processes are all tightly coupled in CPS. Many CPS have already been deployed in safety-critical domains such as aerospace, transportation, and healthcare.

On the other hand, machine learning (ML) techniques have achieved impressive results in recent years. They can reduce development cost as well as provide practical solutions to complex tasks which cannot be solved by traditional methods.
Not surprisingly, ML techniques have been applied to many decision-making and control problems in CPS such as energy control [13], surgical robots [16], self-driving [6], and so forth.

The safety-critical nature of CPS involving ML raises the need to improve system safety and reliability. Unfortunately, ML has many undesired characteristics that can impede the achievement of safety and reliability.

• ML models with strong expressive power, e.g., deep neural networks (DNN), are typically considered non-transparent. Non-transparency is an obstacle to safety assurance because if the model behaves as a black box and cannot be understood by an assessor, it is difficult to develop confidence that the model is operating as intended.
• The standard empirical risk minimization approach used to train ML models reduces the empirical loss on a subset of the possible inputs (i.e., training samples) that could be encountered operationally. An implicit assumption made here is that training samples are drawn from the actual underlying probability distribution. As a result, the representativeness of training samples is a necessary condition to produce reliable ML models. However, this may not always be the case, and training samples could be absent from most parts of the feature space.

We can apply various techniques to improve the safety/reliability of ML models [21]. To increase transparency, we can insist on models that can be interpreted by people, such as ensembles of low-dimensional interpretable sub-models [19], or use specific explainers [22] to interpret the predictions made by ML models. We can also exclude features [26] that are not causally related to the outcome. A practical technique for ML to avoid unsafe predictions is “Safe Fail”.
If a model is not likely to produce a correct output, a reject option is selected, and a backup solution, for example a traditional non-ML approach or a human operator, is applied, thereby causing the system to fail safely. Such a “Safe Fail” technique is not new in ML-based systems [5, 12]. These works [5, 12] focus on minimizing the empirical loss on the training set and hence implicitly assume the training set to be representative. For example, a support vector machine (SVM) like classifier with a reject option [5] could be used for this purpose. As shown in Equation 1, $\phi(x)$ is the predictive output of the SVM classifier, which is interpreted as its confidence in a prediction, and $t$ is the threshold for the reject option. The classifier is supposed to be most uncertain when $\phi(x)$ is close to $0$.

$$\hat{y}(x) = \begin{cases} -1 & \text{if } \phi(x) \le -t \\ \text{reject option} & \text{if } \phi(x) \in (-t, t) \\ 1 & \text{if } \phi(x) \ge t \end{cases} \tag{1}$$

As we can observe from Equation 1, to determine a reject option, we need the prediction “confidence” $\phi(x)$, which, however, can be misleading.

1.1 Motivation

ML models learn from a subset of the possible scenarios that could be encountered operationally. Thus, they can only be as good as the training examples they have learnt. As pointed out in [17], ML models work well in the “training space” with a cloud of training points, but they cannot extrapolate beyond the training space. In other words, the training data determines the training space and hence the upper bound of an ML model’s performance. A previous study [30] has also demonstrated that a feature space that lacks training data generally has a much higher error rate. Unfortunately, the training data is usually incomplete in practice and covers a very small part of the entire feature space. In fact, there is no guarantee that the training data is even representative [24].
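To make the concern concrete, the reject rule of Equation 1 can be sketched in a few lines of Python. The linear scorer below, its weights `w` and `b`, the threshold value, and the two input points are all hypothetical and chosen only for illustration; they are not the SVM of [5]. The sketch shows that a rule based only on $\phi(x)$ rejects a point near the decision boundary but accepts a point that lies far from any plausible training data, simply because $|\phi(x)|$ is large there.

```python
import numpy as np


def predict_with_reject(phi, t):
    """Equation 1: return -1, +1, or None (the reject option)."""
    if phi <= -t:
        return -1
    if phi >= t:
        return 1
    return None  # phi lies in (-t, t): low confidence, defer to the backup


# Hypothetical linear scorer phi(x) = w . x + b (weights chosen for illustration).
w = np.array([1.0, 1.0])
b = 0.0


def phi(x):
    return float(np.dot(w, x) + b)


near_boundary = np.array([0.1, -0.05])  # phi = 0.05, inside (-t, t)
far_away = np.array([50.0, 50.0])       # phi = 100, far from any plausible data

t = 0.5  # assumed threshold
decision_near = predict_with_reject(phi(near_boundary), t)
decision_far = predict_with_reject(phi(far_away), t)
```

Under these assumptions `decision_near` is the reject option while `decision_far` is accepted as class 1, even though nothing in the rule checks whether training data exists near that point.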
Here we use two simple examples to illustrate the problem of incomplete training data.

Example 1. Figure 1 shows the decision boundaries of an SVM classifier that predicts whether a mobile robot is turning right sharply. The values in the contour map represent the “predictive probability” that the input instance belongs to the class “Sharp-Right-Turn”. In this example, the training samples are not representative of the testing samples, and only cover a very small portion of the feature space. However, the classifier still has very high confidence beyond its training space, even where no training samples exist. As a result, the accuracy on testing samples drops to 66% while the accuracy on training samples is almost 100%.

Figure 1: Wall-following navigation task with mobile robot SCITOS-G5 based on sensor readings from the front and left sensor [9].

Example 2. Figure 2 shows a toy regression problem, where 40 training samples drawn from a sine function have feature $x$ in $[0, 5]$, and 10 training samples have feature $x$ in $(10, 15]$. However, the testing samples have feature $x$ in $(5, 10]$. We use a neural network (NN) regressor to fit the data, and as shown, the NN fits the sine function better in $[0, 5]$ than in $(10, 15]$. Meanwhile, it does a terrible job of extrapolating outside of the training space, i.e., in $(5, 10]$.

Figure 2: A toy regression example

1.2 Contribution

From the above examples we can observe that ML models work well only in the “training space” (i.e., feature space with sufficient training samples). Meanwhile, in general, ML models have greater potential to achieve better performance in feature space with more training samples. Therefore, it is essential for safety-critical ML-based systems to ensure that their underlying ML modules only work in the training space.
As a result, we aim to design a novel technique to do the following:
(1) split the feature space into multiple partitions,
(2) identify those in which training samples are insufficient, and
(3) reject input instances from these data-lacking feature space partitions.

We first outline the desired characteristics for such a technique:
(1) A score function is required to evaluate the resulting feature space partitions.
(2) The boundaries of feature space partitions should preferably be interpretable and understandable, so that we know in which partitions ML models may have poor performance. With such information, we can collect more training samples from these regions (if possible).
(3) Since we use this technique as a complement to ML models, the output must be generated efficiently, and the additional overhead should be as small as possible.

In this paper, we propose a Feature Space Partitioning Tree (FSPT) with the characteristics mentioned above, which comprises
(1) a tree-based classifier for splitting the feature space (Section 3) with specific stopping and splitting criteria (Sections 4.1 to 4.3.1), and
(2) a score function $S(R)$ to evaluate the resulting feature space partitions (Section 4.3.2).

As a toy example, Figure 3 shows the resulting feature space partitions for Example 1. The color of each hyper-rectangle represents its score from FSPT. As we can see, FSPT gives a very low score to most partitions of the feature space because the training data only covers a small portion of the feature space¹.

Figure 3: The resulting feature space partitions with scores from FSPT for the classification problem in Example 1

Organization: In Section 2 we present related work, and in Section 3, we propose the technique for splitting the feature space into multiple hyper-rectangles based on Classification And Regression Tree (CART) [8].
We propose customization techniques (e.g., a new splitting criterion that takes feature importance into consideration and a score function for the resulting feature space partitions) in Section 4. In Section 5, we introduce how to apply FSPT in ML with a reject option. Finally, the experimental results in Section 6 also meet our expectation that, on average, ML models have a higher loss/error rate in feature space partitions with lower FSPT scores. In Table 1, we list notations that will be used in the remainder of this paper.

Table 1: Notations

| Notation | Meaning |
| --- | --- |
| $Z = (X, \mathbf{y})$ | data set |
| $X$ | $N \times d$ feature matrix with $N$ samples and $d$ dimensions |
| $y$ | label of an input instance |
| $\hat{y}$ | prediction of an input instance |
| $\mathbf{y}$ | vector of labels |
| $x$ | feature vector of an input instance |
| $I$ | a particular feature index |
| $f_I$ | importance of feature $I$ |
| $x^k_I$ | the value of the $k$th sample in $X$ on feature $I$ |
| $R$ | a feature space partition |
| $\overline{I}(R)$ | upper bound value of feature $I$ of $R$ |
| $\underline{I}(R)$ | lower bound value of feature $I$ of $R$ |
| $\Delta I(R)$ | $\overline{I}(R) - \underline{I}(R)$ |
| $\lvert R^+ \rvert$ | weighted number of training samples in $R$ |
| $\lvert R^- \rvert$ | weighted number of E-points in $R$ |
| $G(R)$ | Gini index of $R$ |
| $\hat{G}(R, I, s)$ | weighted Gini index with split feature $I$ and value $s$ |
| $\Delta G(R)$ | gain in Gini index |
| $S(R)$ | score of a resulting feature space partition $R$ |
| $\phi_F(x)$ | output of FSPT for input instance $x$ |
| $\phi_M(x)$ | output of the ML model for input instance $x$ |

Footnote 1: Note that this does not mean that we have to reject most input instances. In fact, if the training data is representative, we will encounter few input instances from these low-score feature space partitions.

2 RELATED WORK

In order to determine whether an ML model should select a reject option, we must obtain the “confidence” in its predictive output. For classification problems, the output of an ML model is usually interpreted as its confidence in that prediction. For example, the outputs obtained at the end of the softmax layers of standard deep learning models are often interpreted as the predictive probabilities.
For ensemble methods², the weighted votes of the underlying classifiers [27] are used as the predictive probabilities. As a result, existing works on classification with a reject option [5, 12] usually determine a reject option based on the predictive output. An implicit assumption made here is that these classifiers are most uncertain near the decision boundaries of different classes, and that the distance from the decision boundary is inversely related to the confidence that an input instance belongs to a particular class. This assumption is reasonable in some sense because the decision boundaries learnt by these models are usually located where many training samples with different labels overlap. However, if a feature space $X$ contains few or no training samples at all, then the decision boundaries of ML models may be based wholly on an inductive bias, thereby having much epistemic uncertainty [4]. In other words, it is possible that an input instance coming from a feature space partition without any training samples would be classified falsely with a very high “predictive probability” by the ML model [10].

Prediction confidence can also be obtained by Bayesian methods. Unlike classical learning algorithms, Bayesian algorithms do not attempt to identify “best-fit” models of the data. Instead, they compute a posterior distribution over models $P(\theta \mid X, \mathbf{y})$. For example, a Gaussian process (GP) [25] assumes that $p(f(x_1), \ldots, f(x_N))$ is jointly Gaussian $\mathcal{N}(\mu, \Sigma)$. Given an unobserved instance $x_*$, the output $\hat{f}(x_*)$ of the GP is then also conditionally Gaussian, $p(\hat{f}(x_*) \mid x_*, X, \mathbf{y}) = \mathcal{N}(\mu_*, \sigma_*)$. The standard deviation $\sigma_*$ can then be interpreted as the prediction uncertainty. GP is computationally intensive and has complexity $O(N^3)$, where $N$ is the number of training samples.

Bayesian methods can also be applied to neural networks (NNs).
Infinitely wide single hidden layer NNs with distributions placed over their weights converge to Gaussian processes [18]. Variational inference [14, 20] can be used to obtain approximations for finite Bayesian neural networks. The dropout techniques in NNs can also be interpreted as a Bayesian approximation of a Gaussian process [10]. Despite the nice properties of Bayesian inference, there are some controversial aspects:
(1) The prior plays a key role in computing the marginal likelihood because we are averaging the likelihood over all possible parameter settings $\theta$, as weighted by the prior [23]. If the prior is not carefully chosen, we may generate misleading results.
(2) It often comes with a high computational cost, especially in models with a large number of parameters.

The conformal prediction framework [28, 29] uses past experience to determine precise levels of confidence in new predictions. Given a certain error probability requirement $\epsilon$, it forms a prediction interval $[\underline{f}(x), \overline{f}(x)]$ for regression or a prediction label set $\{\text{Label}_1, \text{Label}_2, \ldots\}$ for classification so that the interval/set contains the actual prediction with a probability greater than $1 - \epsilon$. However, its theoretical correctness depends on the assumption that all the data are independent and identically distributed (later, a weaker assumption of “exchangeability” replaces this assumption). Besides, for regression problems, it tends to produce prediction bands whose widths are roughly constant over the whole feature space [15].

Footnote 2: Ensemble methods are learning algorithms that construct a set of classifiers and then classify new data points by taking a weighted vote of their predictions.

3 BASIC IDEA OF PARTITIONING THE FEATURE SPACE

Our objective is to distinguish the feature space partitions with a high density of training samples from those with a low density of training samples. Let us assume that there is another category of data points representing the empty feature space (E-points for short) that are uniformly distributed over the entire feature space. As shown in Figure 4, we can use a classifier to distinguish the training data points from E-points. Then, the output of the classifier can be used to indicate whether an input instance is from a feature space partition with sufficient training data.

Figure 4: Decision boundaries of a classifier (legend: points representing empty space; points representing training samples)

Figure 5: Decision boundaries of a tree-based classifier (legend: points representing empty space; points representing training samples)

However, the number of E-points we need to sample will increase exponentially with the number of features. There are two possible solutions to address this issue:
(1) apply dimension reduction techniques such as ensembles of low-dimensional sub-models [19], or
(2) use tree/rule-based classifiers, because the following properties make them suitable for our task.
(a) As shown in Figure 5, the feature space partitions constructed by a tree-based classifier are hyper-rectangles. As a result, we can very easily get useful information about each partition, e.g., the side length of each feature, the volume, and the number of training samples within it.
(b) Suppose the initial feature space $R$ is split into $R_1$ and $R_2$ by feature $I$ and value $s$, such that $\forall x \in R_1 : x_I \le s$ and $\forall x \in R_2 : x_I > s$.
The number of E-points belonging to $R_1$ and $R_2$ will be in proportion to the side length of feature $I$, i.e., $\Delta I(R_1) = s - \underline{I}(R)$ and $\Delta I(R_2) = \overline{I}(R) - s$, because the E-points are assumed to be uniformly distributed. Here $\underline{I}(R)$ and $\overline{I}(R)$ denote the lower and upper bound values of feature $I$, respectively.

Let $Z = (X, \mathbf{y})$ denote the data set, where $X$ and $\mathbf{y}$ denote the feature matrix and the vector of labels, respectively. Then the lower and upper bound values of feature $I$ of the entire feature space are as follows.

$$\text{Upper Bound: } \overline{I} = \max_{x \in X} x_I \qquad \text{Lower Bound: } \underline{I} = \min_{x \in X} x_I$$

3.1 Tree Construction

In this paper, we consider the classification and regression tree (CART) [8] for feature space partitioning. CART uses the Gini index to measure the purity of a feature space partition. The Gini index is a measure of how often a randomly chosen element from the set would be incorrectly labeled if it was randomly labeled according to the distribution of labels in the subset. Suppose $|R_k|$ and $|R|$ denote the weighted number of data points labeled $k$ and the weighted number of all the data points in $R$, respectively. Then the Gini index of $R$ can be computed as follows.

$$G(R) = \sum_{k=1}^{K} \frac{|R_k|}{|R|} \left(1 - \frac{|R_k|}{|R|}\right) \tag{2}$$

Suppose feature space $R$ is split into $R_1$ and $R_2$ by feature $I$ and value $s$. CART will always select the split point to minimize the weighted Gini index of $R_1$ and $R_2$.

$$\langle I, s \rangle = \arg\min_{\langle I, s \rangle} \hat{G}(R, I, s), \text{ where} \tag{3}$$

$$\hat{G}(R, I, s) = \frac{|R_1|}{|R|} G(R_1) + \frac{|R_2|}{|R|} G(R_2)$$

The gain in the Gini index from the split is then

$$\Delta G(R) = G(R) - \min_{I, s} \hat{G}(R, I, s) \tag{4}$$

Let $+$ and $-$ denote the labels of training data points and E-points, respectively. Suppose there are infinitely many E-points uniformly distributed in the hyper-rectangle $R$. If we set the weight of each E-point to $\frac{|R^-|}{\infty}$, the weighted number of E-points³ in $R$ is equal to $\frac{|R^-|}{\infty} \times \infty = |R^-|$.
Let $|R^+|$ denote the weighted number⁴ of training points in the hyper-rectangle $R$. The weighted Gini index after $R$ is split into $R_1$ and $R_2$ is equal to

$$\frac{|R_1^+| + |R_1^-|}{|R^+| + |R^-|} \times G(R_1) + \frac{|R_2^+| + |R_2^-|}{|R^+| + |R^-|} \times G(R_2), \text{ where} \tag{5}$$

$$|R_1^-| = \frac{s - \underline{I}(R)}{\overline{I}(R) - \underline{I}(R)} \times |R^-| = \frac{s - \underline{I}(R)}{\Delta I(R)} \times |R^-| \tag{6}$$

$$|R_2^-| = \frac{\overline{I}(R) - s}{\overline{I}(R) - \underline{I}(R)} \times |R^-| = \frac{\overline{I}(R) - s}{\Delta I(R)} \times |R^-| \tag{7}$$

Footnote 3: In the rest of this paper, when we refer to the number of E-points, what we actually mean is the weighted number.

Footnote 4: We assume the weight of a single training sample is equal to 1.

4 CART CUSTOMIZATIONS FOR FSPT FEASIBILITY

In this section, we propose several customization techniques for CART to realize FSPT so that it is suitable for identifying feature space partitions without sufficient training samples.

4.1 Stopping Criterion and the Number of E-points

The first question we must answer is when we can stop constructing the tree, because otherwise the tree can grow infinitely. In our case, we can stop further splitting a feature space partition $R$ if all training samples are approximately uniformly distributed in $R$. As shown in Figure 6, we can stop splitting $R_1$ and $R_2$ because data points are evenly distributed in them, even though the densities of training samples are different. The intuition for the stopping criterion is that, when the stopping condition is satisfied, any further split can only generate two hyper-rectangles with similar data distributions.

Figure 6: Stop Construction (legend: points representing training samples; partitions $R_1$ and $R_2$)

Another parameter we need to determine is the number of E-points $|R^-|$. According to Equations 6 and 7, the number of E-points $|R^-|$ for hyper-rectangle $R$ could decrease drastically if the split value $s$ is close to a bound of feature $I$, i.e., $\overline{I}(R) - s \to 0$ or $s - \underline{I}(R) \to 0$.
For a high-dimensional space, $|R^-|$ will become close to 0 after several splits. As a result, any further division can only result in a negligible decrease in the Gini index and hence a negligible increase in the gain of the Gini index, which makes it more difficult to find the optimal split point.

To address the above two issues, we fix $|R^-| = |R^+|$ at each split. In this case ($|R^+| = |R^-|$), when the condition that all training samples are approximately uniformly distributed in $R$ is satisfied, the gain in Gini index $\Delta G(R)$ will be close to 0:

$$\overbrace{G(R)}^{= 0.5} - \frac{|R_1|}{|R|} \times \overbrace{G(R_1)}^{\to 0.5} - \frac{|R_2|}{|R|} \times \overbrace{G(R_2)}^{\to 0.5} \to 0$$

Thus, the gain in Gini index $\Delta G(R) \to 0$ is a necessary condition for the stopping criterion (training samples are approximately evenly distributed in $R$) to be satisfied. However, it is not a sufficient condition. Figure 7 shows an exceptional scenario where $\Delta G(R)$ is close to 0, but training samples are not distributed uniformly in $R$. To address this issue, we use a counter $c$ to record the number of successive times that $\Delta G(R) \le \epsilon$, where $\epsilon \to 0$. The construction process terminates when $c$ is greater than some threshold $\lambda$. For example, in Figure 7, $\Delta G(R) \le \epsilon$ and hence $c \leftarrow c + 1$. After we split $R$ into $R_1$ and $R_2$,

$$\Delta G(R_1) = G(R_1) - \frac{|R_{11}|}{|R_1|} G(R_{11}) - \frac{|R_{12}|}{|R_1|} G(R_{12}) > \epsilon$$

$$\Delta G(R_2) = G(R_2) - \frac{|R_{21}|}{|R_2|} G(R_{21}) - \frac{|R_{22}|}{|R_2|} G(R_{22}) > \epsilon$$

and hence the counter $c$ is reset to 0. As a result, the tree construction algorithm will continue to split $R_1 \to \{R_{11}, R_{12}\}$ and $R_2 \to \{R_{21}, R_{22}\}$.

Figure 7: Exceptional Scenario (legend: points representing training samples; partitions $R_1$, $R_2$, $R_{11}$, $R_{12}$, $R_{21}$, $R_{22}$)

Meanwhile, $\lambda$ need not be a fixed value. When there are many training samples in $R$, we can assign a larger value to $\lambda$. Otherwise, if $|R^+|$ is very small, the construction process can terminate immediately.
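The behaviour described in this subsection can be checked numerically with a small sketch. It assumes a single feature, fixes $|R^-| = |R^+|$ as above, and distributes the E-points between the two children according to Equations 6 and 7. The function names, the sample data, and the split values are illustrative assumptions, and `gini_gain` evaluates the gain for one given split value rather than for the best split over all features.

```python
import numpy as np


def gini(n_pos, n_neg):
    """Two-class Gini index for weighted counts of training points and E-points."""
    total = n_pos + n_neg
    if total == 0:
        return 0.0
    p = n_pos / total
    return 2.0 * p * (1.0 - p)


def gini_gain(values, lower, upper, s):
    """Gain in Gini index for splitting [lower, upper] at s, with |R-| = |R+|."""
    values = np.asarray(values, dtype=float)
    n_pos = float(len(values))
    n_neg = n_pos  # Section 4.1: fix |R-| = |R+| at each split
    left_pos = float(np.sum(values <= s))
    right_pos = n_pos - left_pos
    left_neg = n_neg * (s - lower) / (upper - lower)  # Equation 6
    right_neg = n_neg - left_neg                      # Equation 7
    total = n_pos + n_neg
    weighted = ((left_pos + left_neg) * gini(left_pos, left_neg)
                + (right_pos + right_neg) * gini(right_pos, right_neg)) / total
    return gini(n_pos, n_neg) - weighted


def update_counter(c, gain, eps):
    """Count successive near-zero gains; any larger gain resets the counter."""
    return c + 1 if gain <= eps else 0


uniform = np.linspace(0.0, 1.0, 101)    # evenly spread training samples
clustered = np.linspace(0.0, 0.1, 50)   # samples packed into [0, 0.1]
gain_uniform = gini_gain(uniform, 0.0, 1.0, 0.5)
gain_clustered = gini_gain(clustered, 0.0, 1.0, 0.15)
```

With evenly spread samples the gain is close to 0, so the counter is incremented, whereas the clustered samples give a clearly positive gain and reset it; construction would stop once the counter exceeds the chosen $\lambda$.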
4.2 Split Points

One of the critical problems in tree learning is to find the best split as represented by Equation 3. A simple greedy solution is to enumerate all possible split values on all the features. In our case, however, there are infinitely many possible split values, because we assume there are infinitely many E-points uniformly distributed in the feature space.

Suppose we want to find the best split for the hyper-rectangle $R$ on feature $I$. Let $S_I = \{s_1, s_2, \ldots, s_N\}$ denote the set of unique values of feature $I$ of training samples in $R$, where items in $S_I$ are sorted in ascending order, i.e., $\underline{I}(R) \le s_1 < \ldots < s_N \le \overline{I}(R)$. Let $\mathrm{cdf}(s)$ denote the cumulative distribution function of training samples in $R$ on feature $I$. Then $\forall s \in [s_k, s_{k+1})$, we have $\mathrm{cdf}(s) = \mathrm{cdf}(s_k)$ because there exists no training sample with feature $I$ within $(s_k, s_{k+1})$. For simplicity, we assume feature $I$ is normalized to 1, i.e., $\Delta I(R) = \overline{I}(R) - \underline{I}(R) = 1$, and hence the split value $s \in [0, 1]$. If we split $R$ into $R_1$ and $R_2$ by value $s$ and feature $I$, then

$$G(R_1) = 2 \times \frac{|R_1^+|}{|R_1^+| + |R_1^-|} \times \frac{|R_1^-|}{|R_1^+| + |R_1^-|} = 2 \times \frac{\mathrm{cdf}(s)}{\mathrm{cdf}(s) + s} \times \frac{s}{\mathrm{cdf}(s) + s}$$

$$G(R_2) = 2 \times \frac{|R_2^+|}{|R_2^+| + |R_2^-|} \times \frac{|R_2^-|}{|R_2^+| + |R_2^-|} = 2 \times \frac{1 - \mathrm{cdf}(s)}{2 - \mathrm{cdf}(s) - s} \times \frac{1 - s}{2 - \mathrm{cdf}(s) - s}$$

$$\Rightarrow \hat{G}(R, I, s) = \frac{\mathrm{cdf}(s) + s}{2} \times G(R_1) + \frac{2 - s - \mathrm{cdf}(s)}{2} \times G(R_2) = \frac{\mathrm{cdf}(s)\, s}{\mathrm{cdf}(s) + s} + \frac{(1 - \mathrm{cdf}(s))(1 - s)}{2 - \mathrm{cdf}(s) - s}$$

Let us compute the partial derivative of the weighted Gini index with respect to $s$.

$$\frac{\partial \hat{G}(R, I, s)}{\partial s} = \left(\frac{\mathrm{cdf}(s)}{\mathrm{cdf}(s) + s}\right)^2 - \left(\frac{1 - \mathrm{cdf}(s)}{2 - \mathrm{cdf}(s) - s}\right)^2 = \overbrace{\left(\frac{\mathrm{cdf}(s)}{\mathrm{cdf}(s) + s} + \frac{1 - \mathrm{cdf}(s)}{2 - \mathrm{cdf}(s) - s}\right)}^{> 0} \frac{\mathrm{cdf}(s) - s}{(\mathrm{cdf}(s) + s)(2 - \mathrm{cdf}(s) - s)}$$

When $s \in [s_k, s_{k+1})$, $\mathrm{cdf}(s) = \mathrm{cdf}(s_k)$ is a constant value.
If $s_k \ge \mathrm{cdf}(s_k)$, then $\frac{\partial \hat{G}(R, I, s)}{\partial s} \le 0$ on the interval, and $\hat{G}(R, I, s)$ is minimized when $s = s_{k+1} - \epsilon$, where $\epsilon \to 0^+$. If $s_{k+1} \le \mathrm{cdf}(s_k)$, then $\frac{\partial \hat{G}(R, I, s)}{\partial s} \ge 0$, and $\hat{G}(R, I, s)$ is minimized when $s = s_k$. Finally, if $s_k < \mathrm{cdf}(s_k) < s_{k+1}$, then $\hat{G}(R, I, s)$ is minimized when $s = s_k$ or $s = s_{k+1} - \epsilon$. Therefore, there is no need to try all of the infinitely many split values, and the potential split value set is reduced to

$$\left\{ \max\left(\underline{I}(R),\, s_1 - \epsilon\right),\, s_1,\, s_2 - \epsilon,\, s_2,\, s_3 - \epsilon,\, \ldots,\, s_N \right\}$$

It can still be computationally demanding to enumerate all the split points in the candidate set, especially when the data cannot fit entirely into memory. To address this issue, we can also use approximate split finding algorithms (e.g., using candidate split points according to percentiles of the feature distribution), which is quite common in tree learning algorithms.

4.3 New Splitting Criterion and Score Function

In general, more training samples are required for an ML model to achieve good performance within a feature space partition $R$ with a larger volume $V(R)$, where

$$V(R) = \prod_I \left(\overline{I}(R) - \underline{I}(R)\right) = \prod_I \Delta I(R)$$

This scenario is clearly shown in Example 2 in Section 1.1. While the two feature space partitions $x \in [0, 5]$ and $x \in (10, 15]$ have the same volume, the ML model fits the sine function much better in the former with 40 training samples than in the latter with 10 training samples. Of course, this is not always the case, especially when the model is poorly trained. For example, imagine we have a model whose output is always zero. Then it is evident that its performance has a very weak dependence on the training samples. Thus, the premise for inferring an ML model’s performance in different feature space partitions from training samples and volume $V(R)$ is that the model does not over-fit or under-fit.
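The reduced candidate set from Section 4.2 can be exercised with a short sketch. It assumes, as in the derivation, a single feature normalized to $[0, 1]$ and $|R^-| = |R^+|$, so that the closed form of $\hat{G}(R, I, s)$ applies. The helper names, the value of `eps`, and the sample data are illustrative assumptions rather than the authors' implementation.

```python
import numpy as np


def weighted_gini(cdf_s, s):
    """Closed form of the weighted Gini index for a feature normalized to [0, 1]."""
    left = cdf_s * s / (cdf_s + s) if cdf_s + s > 0 else 0.0
    right_den = 2.0 - cdf_s - s
    right = (1.0 - cdf_s) * (1.0 - s) / right_den if right_den > 0 else 0.0
    return left + right


def candidate_splits(values, eps=1e-9):
    """Reduced candidate set: s_k - eps and s_k for each unique value s_k."""
    cands = set()
    for v in np.unique(values):
        cands.add(max(0.0, float(v) - eps))  # clip at the lower bound of R
        cands.add(float(v))
    return sorted(cands)


def best_split(values, eps=1e-9):
    """Return the candidate split value with the smallest weighted Gini index."""
    values = np.sort(np.asarray(values, dtype=float))
    n = len(values)
    best_s, best_g = None, float("inf")
    for s in candidate_splits(values, eps):
        # Empirical cdf: fraction of training samples with value <= s.
        cdf_s = np.searchsorted(values, s, side="right") / n
        g = weighted_gini(cdf_s, s)
        if g < best_g:
            best_s, best_g = s, g
    return best_s, best_g


samples = np.array([0.02, 0.04, 0.06, 0.08, 0.10])
split_value, split_gini = best_split(samples)
```

For these samples, which are packed into the lowest tenth of the feature range, the search selects the largest sample value as the split, separating the populated region from the empty remainder.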
Another challenge in inferring an ML model’s performance in $R$ from its volume and training samples is that all features are equally important in the computation of volume. Besides, as long as there exists any feature $I$ with $\Delta I(R)$ close to 0, the volume of $R$ will also be close to 0 irrespective of the feature importance of $I$ and the side lengths of the other features. As shown in Figure 8, $R_1$ and $R_2$ have the same number of training samples, but $R_1$ covers a larger feature space. If feature $x$ and feature $y$ are equally important, then we can expect that an ML model will fit the objective function better in $R_2$ than in $R_1$, if it does not over-fit or under-fit. However, if feature $y$ is of very low importance or irrelevant to the objective function, then the previous inference can be misleading.

Figure 8: Problem of Irrelevant Features (legend: points representing training samples; partitions $R_1$ and $R_2$; axes: feature x, feature y)

Figure 9: Resulting hyper-rectangles are too small (legend: points representing training samples)

To address this problem, we can exclude all the irrelevant features and, meanwhile, incorporate feature importance into the splitting criterion as well as the score function for the resulting feature space partitions. There are many techniques that can be used to assess feature importance [7, 11, 32]. Of course, it is not trivial to obtain precise feature importance values. Meanwhile, features that are globally important may not be important in the local context, and vice versa. In this paper, we only consider global feature importance with constant values.

Another issue is that we also do not want to split the feature space into too many hyper-rectangles with an extremely small side length on a particular feature $I$, i.e., $\frac{\Delta I(R)}{\Delta I} \to 0$, where $\Delta I$ is the side length of the entire feature space. For example, in Figure 9, since $\frac{\Delta I(R)}{\Delta I} = 0.3\% \to 0$, we will not further split on this feature unless it can bring a significant gain in the splitting criterion.

To meet the requirements mentioned above, we propose:
(1) A more reasonable splitting criterion named weighted gain in Gini index, which considers 1) $\hat{G}(R, I, s)$, 2) the feature importance values $\{f_1, f_2, \ldots, f_d\}$, and 3) the side length of each feature $\Delta I(R)$.
(2) A heuristic score function to assess the resulting feature space partitions.

4.3.1 Splitting Criterion. Equation 8 shows the new criterion for selecting split points.

$$\langle I, s \rangle = \arg\max_{\langle I, s \rangle} f_I \times \frac{\Delta I(R)}{\Delta I} \times \Delta G(R) \tag{8}$$

where $\Delta G(R) = G(R) - \hat{G}(R, I, s)$.

The intuition of this criterion is simple. We prefer to split on features that are important and have a larger side length, unless there is a significant increase in $\Delta G(R)$.

4.3.2 Score Function. We propose a heuristic score function in Equation 9 to evaluate the resulting hyper-rectangle $R$, and it takes both the feature importance and the side length of each feature into consideration.

$$S(R) = \sum_I f_I \times \frac{|R^+|}{|R^+| + \frac{\Delta I(R)}{\Delta I} \times E} \tag{9}$$

In Equation 9, $E$ is a hyperparameter. We set E = N d in our experiments in Section 6, where $N$ is the number of training samples and $d$ is the number of features. The intuition for Equation 9 is that we evaluate $R$ on each feature separately based on the training samples and side length $\Delta I(R)$. For example, if $\Delta I(R) \to 0$, i.e., training samples in $R$ have the same value on feature $I$, then $R$ is supposed to get the full score of feature $I$ (i.e., $f_I$). After FSPT completes the tree construction, the final scores for each partition can be normalized into the range $[0, 1]$.

Complexity: Suppose FSPT has depth $T$; then the score of an input instance can be calculated efficiently with complexity $O(T)$.
Since each feature space partition contains at least one training sample, the tree has at most $N$ leaves, so for a roughly balanced tree the run-time complexity of scoring an input instance is $O(\log N)$.

5 REJECT MODEL

Suppose input instance $x$ is in feature space partition $R$; then the output of FSPT for $x$ is

$$\phi_F(x) = S(R)$$

Regression Problems: We can use $\phi_F(x)$ directly to determine whether the prediction for input instance $x$ should be rejected in regression problems. Let $\phi_M(x)$ denote the predictive output of the ML model for input instance $x$; then a reject option can be selected when $\phi_F(x)$ is smaller than a particular threshold $t$.

$$\hat{y}(x) = \begin{cases} \phi_M(x) & \text{if } \phi_F(x) \ge t \\ \text{reject option} & \text{otherwise} \end{cases} \qquad (10)$$

Classification Problems: Suppose $\phi_M^c(x)$ denotes the ML model's predictive probability that $x$ belongs to class $c$; then the final predicted class for $x$ is

$$c = \arg\max_c \{\phi_M^c(x)\}$$

Let $\phi_M(x) = \max_c \{\phi_M^c(x)\}$. We use both $\phi_M(x)$ and $\phi_F(x)$ to determine whether a reject option should be selected. The reason for adopting such a reject strategy is that an ML model's accuracy for an input instance depends significantly on its distance to the decision boundaries. Figure 10 shows a toy example of a binary classification problem. As we can see, few training samples exist in feature space partitions $R_1$ and $R_2$, perhaps due to the small probability density there. Thus, FSPT gives relatively low scores to $R_1$ and $R_2$ because there is not sufficient evidence to draw a confident conclusion. However, the model may have 100% accuracy in $R_1$ and $R_2$ just because its guess is "lucky" enough to be correct. Thus, for classification problems, the output of FSPT is used as a complement to the ML model, and the prediction of the ML model is adopted only when both $\phi_F(x)$ and $\phi_M^c(x)$ exceed the corresponding thresholds.

Figure 10: Training samples for a binary classification problem (Class 1 and Class 2 separated by a decision boundary, with sparsely populated partitions $R_1$ and $R_2$)
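The reject rules of this section can be sketched in a few lines of Python. This is a hedged illustration, not the authors' code: the function names and the use of `None` as the reject sentinel are assumptions of the example, and the ML model outputs and the FSPT score are assumed to be computed elsewhere.

```python
from typing import Optional, Sequence

# Hypothetical sentinel meaning "hand over to the backup solution".
REJECT = None


def regress_with_reject(
    prediction: float, fspt_score: float, t: float
) -> Optional[float]:
    """Regression rule (Equation 10): keep phi_M(x) only if phi_F(x) >= t."""
    return prediction if fspt_score >= t else REJECT


def classify_with_reject(
    probs: Sequence[float], fspt_score: float, t1: float, t2: float
) -> Optional[int]:
    """Two-threshold classification rule.

    probs holds phi_M^c(x) for each class c. The arg-max class is returned
    only when its probability reaches t1 and the FSPT score reaches t2.
    """
    c = max(range(len(probs)), key=lambda k: probs[k])
    if probs[c] >= t1 and fspt_score >= t2:
        return c
    return REJECT


# A confident model prediction is still rejected when the input falls in a
# partition with little training data (low FSPT score).
accepted = classify_with_reject([0.05, 0.95], fspt_score=0.7, t1=0.9, t2=0.6)
rejected = classify_with_reject([0.05, 0.95], fspt_score=0.3, t1=0.9, t2=0.6)
```

Keeping the two thresholds separate lets the model confidence and the training-data coverage be tuned independently, which is the point of using FSPT as a complement to the model.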
$$\hat{y}(x) = \begin{cases} c & \text{if } \phi_M^c(x) \ge t_1 \wedge \phi_F(x) \ge t_2 \\ \text{reject option} & \text{otherwise} \end{cases} \qquad (11)$$

6 EVALUATION

In this section, we evaluate the effectiveness of our proposed technique for both regression and classification problems. The feature importance values used in the experiments are obtained from Random Forest [7]. We investigate the performance of three popular ML models, i.e.,
(1) Neural Networks (NN)
(2) Support Vector Machine (SVM)
(3) Gaussian Process (GP)
when we set different rejection thresholds for them. Since GP can provide standard deviations for testing samples in regression problems, we also investigate the relationship between these standard deviations and $\phi_F(x)$. For a given data set $Z$, testing samples are randomly sampled from $Z$. Figures 11, 16, 21 and 22 show the distributions of testing samples with respect to the scores.

6.1 Regression Problems

For regression problems, we evaluate the reject model in Equation 10. We use box plots (Figures 12 to 20) to show the absolute loss $|y - \hat{y}|$ or the standard deviation of testing samples under different rejection thresholds $t \in \{0, 0.1, 0.2, 0.3, \ldots, 1\}$. We consider the following two applications in the experiments.

Quality Prediction in a Mining Process: This dataset comes from a flotation plant; flotation is a process used to concentrate iron ore [2]. The goal of this task is to predict the percentage of silica at the end of the process from 22 features. As this value is measured only every hour, predicting how much silica is in the ore concentrate gives the engineers early information. They can then take corrective actions in advance and also help the environment by reducing the amount of ore that goes to tailings.
Figure 11: Mining Process: sample distribution

The Inverse Dynamics of a SARCOS Robot Arm: This task is to map from a 21-dimensional input space (7 joint positions, 7 joint velocities, 7 joint accelerations) of a seven degrees-of-freedom SARCOS robot arm [3] to a corresponding joint torque, i.e., the inverse dynamics. An inverse dynamics model can be used in the following manner: a planning module decides on a trajectory that takes the robot from its start state to its goal state, and this specifies the desired positions, velocities and accelerations at each time. The inverse dynamics model is used to compute the torques needed to achieve this trajectory, and errors are corrected using a feedback controller [25].

Figure 12: Mining Process: absolute loss for NNs
Figure 13: Mining Process: absolute loss for SVM
Figure 14: Mining Process: absolute loss for GP
Figure 15: Mining Process: standard deviation for GP
Figure 16: SARCOS: sample distribution
Figure 17: SARCOS: absolute loss for NNs
Figure 18: SARCOS: absolute loss for SVM
Figure 19: SARCOS: absolute loss for GP
Figure 20: SARCOS: standard deviation for GP

6.2 Classification Problems

For classification problems, we evaluate the reject model in Equation 11. Through the experimental results, we show that input instances with both a high FSPT score $\phi_F(x)$ and a high score from the ML model (i.e., predictive probability output $\phi_M(x)$) have a lower error rate. Let $t_1$ denote the rejection threshold for the ML model, and $t_2$ denote the rejection threshold for FSPT. We partition testing samples into groups $G_1$ and $G_2$:
(1) $G_1$: $\phi_M(x) \ge t_1 \wedge \phi_F(x) \ge t_2$
(2) $G_2$: $\phi_M(x) \ge t_1 \wedge \phi_F(x) < t_2$
In Tables 2 to 7, we present the accuracy of $G_1$ and $G_2$ for different combinations of $t_1$ and $t_2$.
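The grouping into $G_1$ and $G_2$ can be sketched as follows. This is an illustrative Python sketch under stated assumptions, not the evaluation script used in the paper: the function name and dictionary keys are hypothetical, and the proportion of each group is assumed to be taken relative to the samples that pass the model threshold $t_1$ (so that the two proportions sum to 1). An empty group reports `nan` for its accuracy and mean confidence.

```python
import math
from typing import Dict, List, Sequence


def group_stats(
    conf: Sequence[float],     # phi_M(x) for each testing sample
    score: Sequence[float],    # phi_F(x) for each testing sample
    correct: Sequence[bool],   # whether the model's predicted class is right
    t1: float,
    t2: float,
) -> Dict[str, Dict[str, float]]:
    """Proportion, accuracy and mean phi_M(x) of groups G1 and G2."""
    n = len(conf)
    g1 = [i for i in range(n) if conf[i] >= t1 and score[i] >= t2]
    g2 = [i for i in range(n) if conf[i] >= t1 and score[i] < t2]
    kept = len(g1) + len(g2)  # samples passing the model threshold t1

    def summarize(idx: List[int]) -> Dict[str, float]:
        if not idx:
            return {"proportion": 0.0, "accuracy": math.nan, "mean_conf": math.nan}
        return {
            "proportion": len(idx) / kept,
            "accuracy": sum(correct[i] for i in idx) / len(idx),
            "mean_conf": sum(conf[i] for i in idx) / len(idx),
        }

    return {"G1": summarize(g1), "G2": summarize(g2)}


# Four toy testing samples; the last one fails t1 and belongs to neither group.
stats = group_stats(
    conf=[0.95, 0.92, 0.97, 0.50],
    score=[0.8, 0.2, 0.7, 0.9],
    correct=[True, False, True, True],
    t1=0.9,
    t2=0.6,
)
```

With $t_2 = 0$ every sample that passes $t_1$ lands in $G_1$, so $G_2$ is empty and its accuracy is undefined.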
We also report the mean value of $\phi_M(x)$ and the proportion of $G_1$ and $G_2$. We show that even when $G_1$ and $G_2$ have similar mean values of $\phi_M(x)$, which indicates that the ML models have similar confidence in their predictions for the testing samples in $G_1$ and $G_2$, the accuracy of $G_1$ is higher than that of $G_2$ in most settings. We consider the following two applications in the experiments.

The Navigation Task for Mobile Robot SCITOS-G5: This task is to map a 24-dimensional input, i.e., the readings from the 24 sensors of the mobile robot SCITOS-G5 [9], to 4 classes of behaviors: 1) "Move-Forward", 2) "Sharp-Right-Turn", 3) "Slight-Left-Turn" and 4) "Slight-Right-Turn". ML models are trained so that the mobile robot can take decisions that determine its correct movement.

Figure 21: SCITOS: sample distribution

Table 2: SCITOS: NNs

| $t_1$ | $t_2$ | $G_1$ Proportion | $G_1$ Accuracy | $G_1$ mean $\phi_M(x)$ | $G_2$ Proportion | $G_2$ Accuracy | $G_2$ mean $\phi_M(x)$ |
|---|---|---|---|---|---|---|---|
| 0.90 | 0.00 | 1.00 | 0.925 | 0.970 | 0.00 | nan | nan |
| 0.90 | 0.30 | 0.86 | 0.925 | 0.970 | 0.14 | 0.921 | 0.972 |
| 0.90 | 0.60 | 0.55 | 0.930 | 0.970 | 0.45 | 0.918 | 0.970 |
| 0.90 | 0.90 | 0.14 | 0.931 | 0.972 | 0.86 | 0.924 | 0.970 |
| 0.95 | 0.00 | 1.00 | 0.947 | 0.984 | 0.00 | nan | nan |
| 0.95 | 0.30 | 0.85 | 0.948 | 0.984 | 0.15 | 0.944 | 0.984 |
| 0.95 | 0.60 | 0.54 | 0.951 | 0.984 | 0.46 | 0.942 | 0.984 |
| 0.95 | 0.90 | 0.15 | 0.956 | 0.984 | 0.85 | 0.946 | 0.984 |

Breast Cancer Diagnosis: This task is to map 30 features computed from a digitized image of a fine needle aspirate (FNA) of a breast mass to the classes "benign" and "malignant" [1].
Table 3: SCITOS: SVM

| $t_1$ | $t_2$ | $G_1$ Proportion | $G_1$ Accuracy | $G_1$ mean $\phi_M(x)$ | $G_2$ Proportion | $G_2$ Accuracy | $G_2$ mean $\phi_M(x)$ |
|---|---|---|---|---|---|---|---|
| 0.90 | 0.00 | 1.00 | 0.960 | 0.963 | 0.00 | nan | nan |
| 0.90 | 0.30 | 0.86 | 0.961 | 0.963 | 0.14 | 0.954 | 0.962 |
| 0.90 | 0.60 | 0.56 | 0.967 | 0.962 | 0.44 | 0.950 | 0.963 |
| 0.90 | 0.90 | 0.15 | 0.959 | 0.961 | 0.85 | 0.960 | 0.963 |
| 0.95 | 0.00 | 1.00 | 0.968 | 0.979 | 0.00 | nan | nan |
| 0.95 | 0.30 | 0.86 | 0.970 | 0.979 | 0.14 | 0.958 | 0.979 |
| 0.95 | 0.60 | 0.56 | 0.975 | 0.978 | 0.44 | 0.959 | 0.980 |
| 0.95 | 0.90 | 0.14 | 0.974 | 0.978 | 0.86 | 0.967 | 0.979 |

Table 4: SCITOS: GP

| $t_1$ | $t_2$ | $G_1$ Proportion | $G_1$ Accuracy | $G_1$ mean $\phi_M(x)$ | $G_2$ Proportion | $G_2$ Accuracy | $G_2$ mean $\phi_M(x)$ |
|---|---|---|---|---|---|---|---|
| 0.60 | 0.00 | 1.00 | 0.975 | 0.703 | 0.00 | nan | nan |
| 0.60 | 0.30 | 0.52 | 0.989 | 0.712 | 0.48 | 0.960 | 0.694 |
| 0.60 | 0.60 | 0.12 | 1.000 | 0.738 | 0.88 | 0.972 | 0.698 |
| 0.60 | 0.90 | 0.02 | 1.000 | 0.782 | 0.98 | 0.975 | 0.702 |
| 0.70 | 0.00 | 1.00 | 0.989 | 0.761 | 0.00 | nan | nan |
| 0.70 | 0.30 | 0.58 | 0.989 | 0.762 | 0.42 | 0.989 | 0.761 |
| 0.70 | 0.60 | 0.18 | 1.000 | 0.764 | 0.82 | 0.986 | 0.761 |
| 0.70 | 0.90 | 0.03 | 1.000 | 0.785 | 0.97 | 0.989 | 0.761 |

Figure 22: Breast Cancer Diagnosis: sample distribution

Table 5: Breast Cancer Diagnosis: NNs

| $t_1$ | $t_2$ | $G_1$ Proportion | $G_1$ Accuracy | $G_1$ mean $\phi_M(x)$ | $G_2$ Proportion | $G_2$ Accuracy | $G_2$ mean $\phi_M(x)$ |
|---|---|---|---|---|---|---|---|
| 0.80 | 0.00 | 1.00 | 0.892 | 0.990 | 0.00 | nan | nan |
| 0.80 | 0.20 | 0.75 | 0.916 | 0.992 | 0.25 | 0.821 | 0.981 |
| 0.80 | 0.40 | 0.48 | 0.907 | 0.994 | 0.52 | 0.879 | 0.986 |
| 0.80 | 0.60 | 0.26 | 0.926 | 0.993 | 0.74 | 0.881 | 0.988 |
| 0.90 | 0.00 | 1.00 | 0.899 | 0.994 | 0.00 | nan | nan |
| 0.90 | 0.20 | 0.76 | 0.914 | 0.996 | 0.24 | 0.849 | 0.990 |
| 0.90 | 0.40 | 0.48 | 0.905 | 0.997 | 0.52 | 0.892 | 0.992 |
| 0.90 | 0.60 | 0.26 | 0.924 | 0.997 | 0.74 | 0.890 | 0.993 |

6.3 Short Summary

As we can observe from the experimental results in this section, a strong relationship exists between the ML model's performance and the scores $\phi_F(x)$ from FSPT. In regression problems, we can reduce both the mean error and the maximum error, and thereby improve the reliability of ML models, by rejecting predictions with very small $\phi_F(x)$. In classification problems, by rejecting input instances with either a low FSPT score or a low ML model score, the prediction accuracy can also be improved.
Even among the predictions in which the ML models have similar confidence (i.e., predictions with similar predictive probability $\phi_M(x)$), the error rate of those with higher $\phi_F(x)$ is generally lower than that of the rest.

Table 6: Breast Cancer Diagnosis: SVM

| $t_1$ | $t_2$ | $G_1$ Proportion | $G_1$ Accuracy | $G_1$ mean $\phi_M(x)$ | $G_2$ Proportion | $G_2$ Accuracy | $G_2$ mean $\phi_M(x)$ |
|---|---|---|---|---|---|---|---|
| 0.80 | 0.00 | 1.00 | 0.988 | 0.936 | 0.00 | nan | nan |
| 0.80 | 0.20 | 0.77 | 0.995 | 0.938 | 0.23 | 0.966 | 0.928 |
| 0.80 | 0.40 | 0.47 | 0.992 | 0.942 | 0.53 | 0.985 | 0.930 |
| 0.80 | 0.60 | 0.27 | 0.985 | 0.944 | 0.73 | 0.989 | 0.933 |
| 0.90 | 0.00 | 1.00 | 0.995 | 0.964 | 0.00 | nan | nan |
| 0.90 | 0.20 | 0.78 | 1.000 | 0.966 | 0.22 | 0.976 | 0.959 |
| 0.90 | 0.40 | 0.48 | 1.000 | 0.971 | 0.52 | 0.990 | 0.958 |
| 0.90 | 0.60 | 0.28 | 1.000 | 0.969 | 0.72 | 0.993 | 0.962 |

Table 7: Breast Cancer Diagnosis: GP

| $t_1$ | $t_2$ | $G_1$ Proportion | $G_1$ Accuracy | $G_1$ mean $\phi_M(x)$ | $G_2$ Proportion | $G_2$ Accuracy | $G_2$ mean $\phi_M(x)$ |
|---|---|---|---|---|---|---|---|
| 0.55 | 0.00 | 1.00 | 0.940 | 0.914 | 0.00 | nan | nan |
| 0.55 | 0.20 | 0.76 | 0.944 | 0.913 | 0.24 | 0.925 | 0.920 |
| 0.55 | 0.40 | 0.48 | 0.943 | 0.899 | 0.52 | 0.937 | 0.928 |
| 0.55 | 0.60 | 0.25 | 0.964 | 0.912 | 0.75 | 0.932 | 0.915 |
| 0.60 | 0.00 | 1.00 | 0.948 | 0.923 | 0.00 | nan | nan |
| 0.60 | 0.20 | 0.76 | 0.955 | 0.922 | 0.24 | 0.924 | 0.924 |
| 0.60 | 0.40 | 0.47 | 0.954 | 0.910 | 0.53 | 0.942 | 0.934 |
| 0.60 | 0.60 | 0.25 | 0.975 | 0.925 | 0.75 | 0.939 | 0.922 |

7 CONCLUSION

In this paper, we propose a Feature Space Partitioning Tree (FSPT) to split the feature space into multiple partitions with different training data densities. The resulting feature space partitions are scored using a heuristic metric based on the principle that an ML model's performance in a particular feature space partition $R$ is upper bounded by the training samples within $R$. Based on FSPT, we propose two rejection models, for regression and classification problems respectively. The preliminary experimental results in Section 6 also meet our expectations. However, the current version of FSPT has many limitations to be addressed:
(1) First, the criterion used to construct FSPT depends on the feature importance, i.e., the model's reliance on different features.
However, it is not a trivial task to obtain accurate feature importance values. Besides, features that are globally important may not be important in the local context, and vice versa. Thus, one possible direction for improving FSPT is to incorporate local feature importance into it.
(2) Another major limitation is that FSPT is only suitable for low-dimensional tabular data sets. For complex input data such as images, we should apply FSPT to more meaningful features extracted by other techniques rather than to pixel values. For example, a DNN trained on images can extract eyes, tails, etc. as features in its last layers.
(3) Besides, since the score function is heuristic, we can only show that a strong relationship exists between model performance and FSPT score. In the future, we also plan to derive a more accurate score function.
(4) Finally, we also need to derive a threshold for the reject option for a required confidence level. Perhaps we can apply the conformal prediction framework [28, 29] locally in the different feature space partitions, and derive a threshold for a given error probability requirement.

ACKNOWLEDGMENTS

This work was supported by the Energy Research Institute @ NTU.

REFERENCES

[1] [n.d.]. Breast Cancer Wisconsin (Diagnostic) Data Set. https://www.kaggle.com/uciml/breast-cancer-wisconsin-data/home
[2] [n.d.]. Quality Prediction in a Mining Process. https://www.kaggle.com/edumagalhaes/quality-prediction-in-a-mining-process
[3] [n.d.]. SARCOS. http://www.gaussianprocess.org/gpml/data/
[4] Joshua Attenberg, Panos Ipeirotis, and Foster Provost. 2015. Beat the Machine: Challenging Humans to Find a Predictive Model's "Unknown Unknowns". J. Data and Information Quality 6, 1 (2015), 1:1–1:17.
[5] Peter L Bartlett and Marten H Wegkamp. 2008. Classification with a reject option using a hinge loss. Journal of Machine Learning Research 9, Aug (2008), 1823–1840.
[6] Mariusz Bojarski, Davide Del Testa, Daniel Dworakowski, Bernhard Firner, Beat Flepp, Prasoon Goyal, Lawrence D Jackel, Mathew Monfort, Urs Muller, Jiakai Zhang, et al. 2016. End to end learning for self-driving cars. arXiv preprint arXiv:1604.07316 (2016).
[7] Leo Breiman. 2001. Random forests. Machine Learning 45, 1 (2001), 5–32.
[8] Leo Breiman, Jerome H Friedman, Richard A Olshen, and Charles J Stone. 1984. Classification and regression trees. Wadsworth & Brooks/Cole Advanced Books & Software.
[9] Dua Dheeru and E Karra Taniskidou. 2017. UCI Machine Learning Repository. http://archive.ics.uci.edu/ml
[10] Yarin Gal and Zoubin Ghahramani. 2016. Dropout as a Bayesian approximation: Representing model uncertainty in deep learning. In International Conference on Machine Learning. 1050–1059.
[11] Muriel Gevrey, Ioannis Dimopoulos, and Sovan Lek. 2003. Review and comparison of methods to study the contribution of variables in artificial neural network models. Ecological Modelling 160, 3 (2003), 249–264.
[12] Radu Herbei and Marten H Wegkamp. 2006. Classification with reject option. Canadian Journal of Statistics 34, 4 (2006), 709–721.
[13] Achin Jain, Truong X Nghiem, Manfred Morari, and Rahul Mangharam. 2018. Learning and control using Gaussian processes: towards bridging machine learning and controls for physical systems. In Proceedings of the 9th ACM/IEEE International Conference on Cyber-Physical Systems. IEEE Press, 140–149.
[14] Diederik P Kingma and Max Welling. 2013. Auto-encoding variational Bayes. arXiv preprint arXiv:1312.6114 (2013).
[15] Jing Lei, Max G'Sell, Alessandro Rinaldo, Ryan J Tibshirani, and Larry Wasserman. 2018. Distribution-free predictive inference for regression. J. Amer. Statist. Assoc. (2018), 1–18.
[16] Henry C Lin, Izhak Shafran, Todd E Murphy, Allison M Okamura, David D Yuh, and Gregory D Hager. 2005. Automatic detection and segmentation of robot-assisted surgical motions. In International Conference on Medical Image Computing and Computer-Assisted Intervention. Springer, 802–810.
[17] Gary Marcus. 2018. Deep learning: A critical appraisal. arXiv preprint arXiv:1801.00631 (2018).
[18] Radford M Neal. 2012. Bayesian learning for neural networks. Vol. 118. Springer Science & Business Media.
[19] Sebastian Nusser, Clemens Otte, and Werner Hauptmann. 2008. Interpretable ensembles of local models for safety-related applications. In ESANN. 301–306.
[20] John Paisley, David Blei, and Michael Jordan. 2012. Variational Bayesian inference with stochastic search. arXiv preprint arXiv:1206.6430 (2012).
[21] Kush R. Varshney and Homa Alemzadeh. 2016. On the Safety of Machine Learning: Cyber-Physical Systems, Decision Sciences, and Data Products. 5 (10 2016).
[22] Marco Tulio Ribeiro, Sameer Singh, and Carlos Guestrin. 2016. Why should I trust you?: Explaining the predictions of any classifier. (2016).
[23] Christian Robert. 2014. Machine learning, a probabilistic perspective.
[24] Rick Salay, Rodrigo Queiroz, and Krzysztof Czarnecki. 2017. An Analysis of ISO 26262: Using Machine Learning Safely in Automotive Software. CoRR abs/1709.02435 (2017). arXiv:1709.02435 http://arxiv.org/abs/1709.02435
[25] Matthias Seeger. 2004. Gaussian processes for machine learning. International Journal of Neural Systems 14, 02 (2004), 69–106.
[26] Sakshi Udeshi, Pryanshu Arora, and Sudipta Chattopadhyay. 2018. Automated directed fairness testing. Proceedings of the 33rd ACM/IEEE International Conference on Automated Software Engineering - ASE 2018 (2018). https://doi.org/10.1145/3238147.3238165
[27] Kush R Varshney, Ryan J Prenger, Tracy L Marlatt, Barry Y Chen, and William G Hanley. 2013. Practical ensemble classification error bounds for different operating points. IEEE Transactions on Knowledge and Data Engineering 25, 11 (2013), 2590–2601.
[28] Vladimir Vovk, Alex Gammerman, and Glenn Shafer. 2005. Algorithmic Learning in a Random World. Springer-Verlag, Berlin, Heidelberg.
[29] Vladimir Vovk, Ilia Nouretdinov, Alex Gammerman, et al. 2009. On-line predictive linear regression. The Annals of Statistics 37, 3 (2009), 1566–1590.
[30] Gary M Weiss. 1995. Learning with rare cases and small disjuncts. (1995), 558–565.
[31] Gary M Weiss. 2004. Mining with rarity: a unifying framework. ACM SIGKDD Explorations Newsletter 6, 1 (2004), 7–19.
[32] Brian D Williamson, Peter B Gilbert, Noah Simon, and Marco Carone. 2017. Nonparametric variable importance assessment using machine learning techniques. (2017).
