A Resource-Efficient Embedded Iris Recognition System Using Fully Convolutional Networks

Applications of Fully Convolutional Networks (FCN) in iris segmentation have shown promising advances. For mobile and embedded systems, a significant challenge is that the proposed FCN architectures are extremely computationally demanding. In this ar…

Authors: Hokchhay Tann, Heng Zhao, Sherief Reda

HOKCHHAY TANN, HENG ZHAO, and SHERIEF REDA, Brown University

Applications of Fully Convolutional Networks (FCN) in iris segmentation have shown promising advances. For mobile and embedded systems, a significant challenge is that the proposed FCN architectures are extremely computationally demanding. In this article, we propose a resource-efficient, end-to-end iris recognition flow, which consists of FCN-based segmentation and contour fitting, followed by Daugman normalization and encoding. To attain accurate and efficient FCN models, we propose a three-step SW/HW co-design methodology consisting of FCN architectural exploration, precision quantization, and hardware acceleration. In our exploration, we propose multiple FCN models, and in comparison to previous works, our best-performing model requires 50× fewer FLOPs per inference while achieving a new state-of-the-art segmentation accuracy. Next, we select the most efficient set of models and further reduce their computational complexity through weight and activation quantization using the 8-bit dynamic fixed-point (DFP) format. Each model is then incorporated into an end-to-end flow for true recognition performance evaluation. A few of our end-to-end pipelines outperform the previous state-of-the-art on the two datasets evaluated. Finally, we propose a novel DFP accelerator and fully demonstrate the SW/HW co-design realization of our flow on an embedded FPGA platform. In comparison with the embedded CPU, our hardware acceleration achieves up to 8.3× speedup for the overall pipeline while using less than 15% of the available FPGA resources. We also provide comparisons between the FPGA system and an embedded GPU, showing different benefits and drawbacks for the two platforms.

CCS Concepts: • Computing methodologies → Neural networks; • Computer systems organization → Neural networks; Embedded hardware.
Additional Key Words and Phrases: Iris Recognition, Biometrics, Fully Convolutional Networks, Deep Learning, FPGA

ACM Reference Format: Hokchhay Tann, Heng Zhao, and Sherief Reda. A Resource-Efficient Embedded Iris Recognition System Using Fully Convolutional Networks. To appear in ACM Journal on Emerging Technologies in Computing Systems 2019 1, 1 (September), 23 pages.

Authors' address: Hokchhay Tann, hokchhay_tann@alumni.brown.edu; Heng Zhao, heng_zhao@alumni.brown.edu; Sherief Reda, sherief_reda@brown.edu, Brown University, 182 Hope Street, Providence, Rhode Island, 02912.

1 INTRODUCTION

Due to the unique and rich signatures in the irises of each individual, iris recognition has been shown to be one of the most secure forms of biometric identification [10]. Unlike other biometric features such as fingerprints and voice, the irises hardly change over the course of an individual's lifetime. Recently, iris recognition has become increasingly common on various wearable and mobile devices. For these systems, a high level of security and an efficient recognition processing pipeline with low computational complexity are the two stringent requirements for deployment.

A variety of algorithms and implementations have been proposed over the years for iris recognition pipelines [9, 27, 38, 39]. For typical processing flows, some of the main difficulties include obtaining a quality iris image and accurately segmenting the iris region. For iris segmentation, several algorithms have been developed [3, 12, 25, 26, 29, 37] using a diverse set of techniques such as the circular Hough transform and the integrodifferential operator. With the recent success of deep learning, emerging studies on iris recognition adopt various forms of Deep Neural Networks (DNN) to replace different parts of traditional pipelines such as segmentation [4, 17, 21] and representation [11, 41]. In particular, using ground-truth datasets such as IRISSEG-EP [16], recent works on fully convolutional network (FCN) based iris segmentation have shown promising improvements in robustness and accuracy.

Despite the improvements in segmentation accuracy with FCNs, existing studies focus solely on segmentation accuracy without evaluating the impacts of the models on end-to-end iris recognition systems. As evidenced in the work by Hofbauer et al. [15], segmentation accuracy alone may be insufficient when comparing multiple segmentation algorithms. In their study, they experiment with multiple iris recognition flows and demonstrate that segmentation algorithms with higher segmentation accuracy do not always lead to end-to-end flows with a better recognition rate. Thus, when comparing multiple segmentation algorithms or models, it is helpful to evaluate each using the full iris recognition pipeline in order to select efficient models without sacrificing the overall system accuracy.

Existing works on FCN-based segmentation also lack evaluation of model deployment on real HW/SW systems such as embedded systems, which are popular targets for iris recognition applications. As such, the FCN architectures are designed without taking into account the computational overheads of deployment on resource-constrained systems. Instead, the narrow focus on segmentation accuracy leads to FCN-based designs that are extremely computationally intensive. These models can consist of a large number of layers and parameters and require billions of floating-point operations for each input, making them unsuitable for embedded systems.
To address the current shortfalls, we propose in this work several contributions, which are summarized as follows:
• We propose an end-to-end iris recognition pipeline with FCN-based segmentation. To the best of our knowledge, we are the first to demonstrate a complete iris recognition flow using FCN-based segmentation. In order to construct this pipeline, we propose an accurate contour fitting algorithm which computes center points and radii of the pupil and limbic boundaries from the FCN segmented mask. The complete flow consists of an FCN-based segmentation, a contour fitting module, followed by Daugman normalization and encoding [9]. Compared to previous works, our flow sets a new state-of-the-art recognition rate on the two datasets evaluated while incurring significantly less hardware resources.
• The FCN-based segmentation portion is identified as the major bottleneck in our iris recognition pipeline. With this observation, we propose a three-step SW/HW co-design methodology to obtain a resource-efficient and accurate FCN model suitable for embedded platforms. Our technique consists of FCN architectural exploration, precision quantization using dynamic fixed-point format, and hardware acceleration.
• First, in architectural exploration, we propose and evaluate a large number of FCN architectures and demonstrate that a small decrease in segmentation accuracy can be traded off for an orders-of-magnitude reduction in overall computational complexity. Using the end-to-end flow, we highlight the importance of evaluating the impacts of various FCN architectures using overall recognition rates rather than just segmentation accuracy.
• As a second step, we further reduce hardware complexities of the models by introducing quantization to 8-bit dynamic fixed-point for both weights and activations in the FCN models.
• Next, we propose a novel custom, dynamic fixed-point based hardware accelerator design for the models.
To compare with the floating-point format, we also synthesize a floating-point version of the accelerator.
• Finally, we provide a hardware design space exploration and comparisons through implementation of the flow using various hardware configurations and precisions, namely CPU, CPU+Accelerator on FPGA, and CPU+GPU.

[Figure 1: Typical processing pipeline for iris recognition applications based on Daugman [9]. Stages: (1) acquired image, (2) iris and pupil segmentation, (3) iris normalization, (4) unique iris encoding, (5) mask.]

The rest of the paper is organized as follows. In Section 2, we provide a background of conventional and FCN-based iris segmentation and discussions of related work. In Section 3, we describe our proposed iris recognition flow in more detail. In Section 4, we discuss our resource-efficient SW/HW co-design methodology and hardware accelerator implementation. We discuss our experimental setup, as well as our experimental results, in Section 5. Finally, Section 6 provides the final discussions and concludes the paper.

2 BACKGROUND AND RELATED WORKS

In order to capture the unique features from each individual's irises and construct their corresponding signatures, the iris recognition pipeline typically consists of multiple stages as shown in Figure 1. First, the iris image is captured using a camera, often with near-infrared sensitivity. The input image is then preprocessed for specular reflection removal and contrast enhancement. Next, a segmentation step is applied to detect the pupil, iris, and eyelid boundaries. The segmented iris region is then converted into its polar coordinate form in the normalization step. Finally, a wavelet transform is applied to encode the polar coordinate array into a bitstream, which represents the unique signature of the iris [9].
Each encoding is accompanied by a mask bitstream that identifies the encoding bits corresponding to non-iris areas, such as those occluded by the eyelids or by glare reflections. In this pipeline, the most computationally demanding portions are the preprocessing and iris segmentation [14, 23]. In optimizing the pipeline, it is thus most beneficial to target these first few steps, which is the focus of this work.

2.1 Traditional Iris Segmentation Methodologies

Accurate iris segmentation has been among the most popular and challenging areas in iris recognition. One of the most widely adopted segmentation algorithms was proposed by Daugman [9] using the integrodifferential operator. In this algorithm, the iris center point is located by searching through local-minimum intensity pixels throughout the image in a coarse-to-fine strategy. At each candidate pixel, a circular integrodifferential operator is applied while allowing the radius to change from a minimum to a maximum radius. This radius range is predetermined for the dataset to contain the limbic boundary. After all the candidate pixels are evaluated, the pixel location with the maximum in the blurred partial derivative with respect to the increasing radius is used in a fine-grained search. Here, the integrodifferential operator is applied to all pixels in a small window surrounding the candidate pixel, which results in a single iris center point with radius r. Once the iris radius and center point are determined, a similar step is used to search a small area around the iris center point for the pupil center. Here, the radius range is allowed to vary from 0.1 to 0.8 of the computed iris radius. The integrodifferential operator is also used to determine the elliptical boundaries of the lower and upper eyelids.

[Figure 2: Architecture for Encoder-Decoder Fully Convolutional Networks with skip connections for semantic segmentation. The input image passes through a convolutional encoder-decoder to produce the segmentation output. Legend: Conv + Batch Normalization + ReLU, Pooling, Up Sampling, Softmax, Element-wise Addition.]

Another popular technique used in many segmentation algorithms is the circular Hough Transform [19, 24, 36, 38]. Typically, the Hough Transform operates on an edge map constructed from the input image. The main computation can be written as:

$$(x - x_i)^2 + (y - y_i)^2 = r^2$$

where $x_i$ and $y_i$ are the center coordinates, and $r$ is the circle radius. Similar to the integrodifferential operator, the circle radius ranges for the iris and pupil boundaries are predetermined. A maximum in the Hough space corresponds to the most likely circle at radius $r$. The operator is used to compute two circles for the limbic and pupil boundaries. Since the iris region is often partially occluded by the top and bottom eyelids, two parabolic curves are used to approximate their boundaries.

The assumption of circular or elliptical limbic and pupil boundaries in the segmentation algorithms discussed can be problematic in some cases. For this reason, active contour-based segmentation algorithms were introduced to locate the true boundaries of the iris and pupil [2, 8, 33]. Since the segmentation output of an active contour can assume any shape, Abdullah et al. [2] proposed a new noncircular iris normalization technique to unwrap the segmentation region. Gangwar et al. [12] proposed a technique based on adaptive filtering and thresholding. Zhao and Kumar [40] proposed a total variation model to segment visible and infrared images under relaxed constraints.
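The circular Hough transform voting described in this subsection can be illustrated with a minimal sketch. This is not the implementation used in any of the cited works: the function name `circular_hough`, the angular sampling `n_angles`, and the dictionary-based accumulator are all assumptions made for illustration. Each edge pixel votes for every center $(a, b)$ and radius $r$ of a circle that could pass through it, and the accumulator maximum is taken as the most likely circle.

```python
import math


def circular_hough(edge_points, r_min, r_max, n_angles=72):
    """Return the (a, b, r) circle with the most votes from the edge points.

    edge_points: iterable of integer (x, y) edge-pixel coordinates.
    r_min, r_max: inclusive radius search range, fixed in advance.
    n_angles: hypothetical angular sampling of each candidate circle.
    """
    angles = [2.0 * math.pi * k / n_angles for k in range(n_angles)]
    votes = {}
    for x, y in set(edge_points):
        # Collect every accumulator cell (a, b, r) whose circle passes
        # through (x, y); the set makes each pixel vote once per cell.
        cells = set()
        for r in range(r_min, r_max + 1):
            for t in angles:
                a = round(x - r * math.cos(t))
                b = round(y - r * math.sin(t))
                cells.add((a, b, r))
        for cell in cells:
            votes[cell] = votes.get(cell, 0) + 1
    # The maximum in Hough space is the most likely circle.
    return max(votes, key=votes.get)
```

Restricting `r_min` and `r_max` to a predetermined range, as the text describes, keeps the accumulator small; a practical implementation would typically use a dense array and gradient direction to reduce the number of votes.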
2.2 Fully Convolutional Networks for Iris Segmentation

The challenges with traditional iris segmentation methods stem from the fact that the algorithms tend to rely on hand-crafted feature extraction and careful parameter tuning, such as pre-computed radius ranges for the limbic and pupil boundaries. They can also be highly dependent on certain image intensity profiles and pre-processing steps to function correctly. In addition, separate models are typically deployed to detect the eyelids and iris regions.

With the recent advances in deep learning-based semantic segmentation, FCN-based iris segmentation methodologies have been proposed to solve the challenges facing conventional methods [4, 17, 18, 21]. Similar to successful architectures used in other semantic segmentation problems such as SegNet [5] and U-Net [32], the state-of-the-art FCN models employed in iris segmentation typically take an encoder-decoder form as shown in Figure 2. This architecture allows for pixel-wise labelling, which conveniently produces output of the same dimensions as the input.

The success of the FCN models stems from their ability to learn and extract increasingly abstract features from the inputs. On the encoder side, it is observed that the hierarchical arrangement of convolutional layers allows earlier layers to learn lower-level features such as edges, while later layers learn more abstract, high-level concepts from the inputs. The underlying computation of each layer can be summarized as convolution operations followed by a non-linear function such as the Rectified Linear Unit (ReLU).
The operation can be formalized as

$$B_{i,j} = f\Big(b + \sum_{m}\sum_{n}\sum_{k} A_{i+m,\,j+n,\,k} \cdot W_{m,n,k}\Big)$$

where $A$, $W$, and $b$ are the input tensor, kernel weight matrix, and a scalar bias respectively, and $f(\cdot)$ is a non-linear function. A subset of the layers is also followed by a subsampling operation, which reduces the spatial dimension of the input, allowing the model to be translation-invariant. On the decoder side, the low-resolution feature maps output by the encoder are upsampled using successions of transposed convolution layers to produce a labeling prediction for each pixel in the original input image.

2.3 Metrics for Iris Segmentation Accuracy

In order to evaluate segmentation algorithms, there exist multiple ways to compute the segmentation accuracy. A widely accepted metric in iris recognition is the F-measure, which is aimed at optimizing the precision and recall performance of the segmentation output [31]. The resulting mask from a segmentation operation can be categorized into four different groups: true positive ($TP$), false positive ($FP$), true negative ($TN$), and false negative ($FN$). $TP$ and $TN$ represent the fractions of pixels which were classified correctly as iris and non-iris respectively with respect to the ground truth segmentation. On the other hand, $FP$ and $FN$ correspond to those which are incorrectly classified as iris and non-iris. For a dataset with $N$ images, the precision is then defined as

$$P \triangleq \frac{1}{N}\sum_{i=1}^{N}\frac{TP_i}{TP_i + FP_i},$$

and recall is defined as

$$R \triangleq \frac{1}{N}\sum_{i=1}^{N}\frac{TP_i}{TP_i + FN_i}.$$

$P$ measures the fraction of predicted iris pixels that is correct, while $R$ measures the fraction of iris pixels in the ground truth correctly identified or retrieved. $F$ is then computed by taking the harmonic mean of $R$ and $P$:

$$F \triangleq \frac{1}{N}\sum_{i=1}^{N}\frac{2 R_i P_i}{R_i + P_i}.$$
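As a concrete reading of the three definitions above, the following minimal sketch computes $P$, $R$, and $F$ for a small set of binary masks. The function name `segmentation_scores`, the nested-list mask representation (1 for iris, 0 for non-iris), and the handling of empty denominators are assumptions for illustration, not details given in the text.

```python
def segmentation_scores(predictions, ground_truths):
    """Return (P, R, F), each averaged over the images of a dataset.

    predictions, ground_truths: lists of 2-D binary masks (1 = iris).
    """
    precisions, recalls, f_scores = [], [], []
    for pred, truth in zip(predictions, ground_truths):
        tp = fp = fn = 0
        for pred_row, truth_row in zip(pred, truth):
            for p, t in zip(pred_row, truth_row):
                if p and t:
                    tp += 1      # iris pixel correctly predicted
                elif p:
                    fp += 1      # predicted iris, actually non-iris
                elif t:
                    fn += 1      # missed iris pixel
        # Assumption: an empty denominator yields a score of 0.
        p_i = tp / (tp + fp) if tp + fp else 0.0
        r_i = tp / (tp + fn) if tp + fn else 0.0
        f_i = 2 * r_i * p_i / (r_i + p_i) if r_i + p_i else 0.0
        precisions.append(p_i)
        recalls.append(r_i)
        f_scores.append(f_i)
    n = len(precisions)
    return sum(precisions) / n, sum(recalls) / n, sum(f_scores) / n
```

Note that $F$ is averaged per image, following the definition above, rather than computed once from the dataset-level $P$ and $R$; the two generally differ.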
In iris recognition, other segmentation accuracy metrics also exist, such as those of the Noisy Iris Challenge Evaluation - Part I [28], where the segmentation error for a dataset of $N$ images, each with $c \times r$ dimension, is defined as

$$E_1 \triangleq \frac{1}{N}\sum_{i=1}^{N}\left(\frac{1}{c \times r}\sum_{j=1}^{c \times r} O(j) \otimes C(j)\right).$$

Here, $O(j)$ and $C(j)$ are the pixels from the predicted output and ground truth masks respectively, and $\otimes$ is the XOR operator. A second error measure is also introduced, which aims to compensate for the a priori probability disproportions between the iris and non-iris pixels in the input images:

$$E_2 \triangleq \frac{1}{2N}\sum_{i=1}^{N}\left(FP_i + FN_i\right).$$

In our work, we mainly utilize the F-measure and also report the precision and recall performance. The $E_1$ and $E_2$ error rates can also be considered, but they would not affect our FCN optimization.

3 PROPOSED FCN-BASED IRIS RECOGNITION PROCESSING PIPELINE

[Figure 3: Proposed FCN-based iris recognition processing pipeline. Stages: acquired image, FCN inference, contour fitting, normalization, encoding.]

Traditional iris recognition pipelines consist of multiple computation stages for image pre-processing, segmentation, normalization, and encoding as depicted in Figure 1. In our flow, the segmentation is performed using an FCN model, which allows the pre-processing stage to be eliminated. For normalization and encoding, we employ the well-known rubber sheet model and 1-D log Gabor filter from Daugman [10]. In order to connect the FCN segmentation output with the normalization stage, we propose a contour fitting routine, which will be described next. Figure 3 illustrates our FCN-based processing pipeline, which consists of FCN inference, contour fitting, normalization, and encoding.
3.1 Proposed Contour Fitting Algorithm

Daugman's rubber sheet model achieves iris 2D positional and size invariance due to a new coordinate system created by the center points and radii of the iris and the pupil [9]. With FCN-based segmentation, each output mask only identifies the pixels belonging to the iris and not the exact center coordinates or radii of the iris and the pupil. In order to extract this information, we develop a contour fitting routine as shown in Figure 4. Given a segmentation output mask, we first perform a rough estimate of the iris center point and radius. This is done by analyzing the largest connected object in the image and computing its centroid, which is the rough iris center point. The iris radius is then approximated by taking the mean of the object's major and minor axis lengths.

Using the approximated center point and radius, we perform a more fine-grained boundary fitting using the Circular Hough Transform (CHT) for circles with similar radii to the rough estimate. After obtaining the final iris radius ($r$) and center point ($x$, $y$), we search for the pupil using CHT for circles with radius in the range $[0.1r, 0.8r]$ and whose center points are within a region of interest (ROI) around ($x$, $y$). We select this radius range because biologically, the pupil radius can be anywhere between 0.1 and 0.8 of the iris radius [10]. The ROI allows for a less noisy and more computationally efficient localization of the pupil boundary.

3.2 End-to-end Recognition Rates Evaluation

The contour fitting routine produces as output the center coordinates and radii of the pupil and limbic boundaries. This result is passed on to the normalization step based on Daugman's rubber sheet model [9], which converts the iris region into a binary grid, 16 × 256 in this work. A 1-D log Gabor filter is then used to extract features from the grid, producing a 16 × 256-bit encoding.
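To make the hand-off from contour fitting to normalization concrete, the sketch below computes the image coordinates at which a rubber sheet model would sample the iris, given the two fitted circles. It follows the standard linear interpolation between the pupil and limbic boundaries; the function name `rubber_sheet_coords`, the inclusive radial sampling, and the choice of 256 equally spaced angles are assumptions for illustration rather than details confirmed by the text.

```python
import math


def rubber_sheet_coords(iris, pupil, n_radial=16, n_angular=256):
    """Return an n_radial x n_angular grid of (x, y) sampling coordinates.

    iris, pupil: (center_x, center_y, radius) tuples from contour fitting.
    Row 0 lies on the pupil boundary, the last row on the limbic boundary.
    """
    xi, yi, ri = iris
    xp, yp, rp = pupil
    grid = []
    for i in range(n_radial):
        rho = i / (n_radial - 1)  # normalized radius in [0, 1]
        row = []
        for j in range(n_angular):
            theta = 2.0 * math.pi * j / n_angular
            # Points on the pupil and limbic boundaries at angle theta.
            px = xp + rp * math.cos(theta)
            py = yp + rp * math.sin(theta)
            lx = xi + ri * math.cos(theta)
            ly = yi + ri * math.sin(theta)
            # Linear interpolation between the two boundaries.
            row.append(((1 - rho) * px + rho * lx,
                        (1 - rho) * py + rho * ly))
        grid.append(row)
    return grid
```

Sampling the image (and the segmentation mask) at these coordinates yields fixed-size grids regardless of iris size or pupil dilation, which is what gives the model its positional and size invariance.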
A 16 × 256-bit mask grid is also produced to identify useful and non-useful encoding bits. Note that the Daugman normalization used in our current pipelines assumes circular limbic and pupillary boundaries. This assumption may not be suitable for some datasets, such as those explored in [8], in which case the recognition performance may be affected. However, it is a useful first-order approximation, which can be built upon to better fit those cases.

[Figure 4: Processing pipeline for contour fitting, normalization, and encoding. Panels: (a) input image, (b) segmentation output, (c) rough limbic boundary estimate, (d) fine-grained fitting using Circular Hough Transform, (e) mask and circles overlaid, (f) normalized iris, (g) iris encoding (top) and mask (bottom).]

To determine whether there exists a match in the database, the Hamming distance (HD) between the input encoding $\{encoding_I\}$ and every stored encoding $\{encoding_S\}$ is computed as follows:

$$HD = \frac{\lVert (encoding_I \otimes encoding_S) \cap mask_I \cap mask_S \rVert}{\lVert mask_I \cap mask_S \rVert}, \qquad (1)$$

where $\{mask_I, mask_S\}$ are the masks for the input and stored encodings respectively. In our work, the HD is computed for different degrees of rotation in the range [-35°, 35°] between the two masks. From this, the smallest Hamming distance is recorded.

4 PROPOSED SW/HW CO-DESIGN STRATEGY

[Figure 5: Proposed SW/HW co-design strategy to achieve an efficient, accurate FCN model and fast inference. Steps: 1. FCN architectural exploration, 2. quantization, 3. hardware acceleration.]

We will show in Section 4.3 that, similar to most iris recognition pipelines, the segmentation step is the most compute-intensive portion and takes up the majority of the overall processing time. In our flow, the segmentation runtime is mostly from FCN inference.
Hence, we propose a three-step SW/HW co-design methodology, shown in Figure 5, to reduce the hardware complexities for this processing stage while maintaining high accuracy. Next, we discuss each step in the methodology in more detail.

4.1 Fully Convolutional Network Architecture Exploration

In developing FCN models to perform iris segmentation, there are many choices for architectural parameters, each of which can lead to drastically different segmentation accuracy and computational complexity. Generally, this design process uses empirical results from training and validating the models to refine the architectures. For this work, we explore network architectures similar to the U-Net model proposed in [32]. We do not explore other model types such as DeepLab [6], SegNet [5], and Long et al. [22], since they are targeted at more complex scenes with more training examples than our application.

Table 1. Proposed baseline FCN architecture. Each convolution layer (CONV) is followed by Batch Normalization and ReLU activation layers. Each transposed convolution layer (TCONV) is followed by a ReLU activation layer. The arrows denote the shortcut connections, where the outputs of two layers are added together element-wise before passing to the next layer. The layers are grouped with a specific group number. The layers below group number 4 belong to the decoder side, while those before and including group number 4 belong to the encoder side. There always exists a shortcut connection between the last encoder-side CONV layer and the decoder-side TCONV layer for groups with the same group number. For the baseline model, N is set to 16.

| Group # | Layer Type | Filter Size / Stride / Padding | Num. Outputs |
|---|---|---|---|
| - | Image Input | - | - |
| 0 | CONV | 3×3/1/1 | N |
| 1 | CONV | 3×3/2/1 | 2N |
| 1 | CONV | 3×3/1/1 | 2N |
| 2 | CONV | 3×3/2/1 | 2N |
| 2 | CONV | 3×3/1/1 | 2N |
| 3 | CONV | 3×3/2/1 | 2N |
| 3 | CONV | 3×3/1/1 | 2N |
| 4 | CONV | 3×3/2/1 | 2N |
| 4 | CONV | 3×3/1/1 | 4N |
| 3 | TCONV | 4×4/2/0 | 2N |
| 3 | CONV | 3×3/1/1 | 2N |
| 2 | TCONV | 4×4/2/0 | 2N |
| 2 | CONV | 3×3/1/1 | 2N |
| 1 | TCONV | 4×4/2/0 | 2N |
| 1 | CONV | 3×3/1/1 | 2N |
| 0 | TCONV | 4×4/2/0 | N |
| 0 | CONV | 1×1/1/1 | 2 |
| - | SOFTMAX | - | 2 |

In order to obtain the most efficient set of FCN architectures with good overall recognition performance, we first create a large pool of candidate FCN models with varying computational costs. Here, the computational cost is defined as the number of arithmetic operations, which is the number of floating-point operations (FLOPs) required per inference. We start by designing a baseline architecture as shown in Table 1. In this model, instead of using pooling layers to downsize the input, we employ strided convolution layers (convolutions with stride greater than 1). This has been shown to have no effect on the models' accuracy while offering a reduced number of computations [34]. The larger models with more parameters, i.e. weights, tend to have the highest segmentation accuracy while requiring significant computational resources. However, the number of parameters must also be selected with care relative to the size of the available training data. Models with too many parameters on a small dataset can overfit and generalize poorly.

With a baseline architecture designed, we iteratively construct different FCN variants by performing a grid search on a few architectural parameters. The parameters are chosen such that they have a significant impact on the computational complexity of the models. The three parameters are as follows:
• Input image scaling: The spatial dimensions of the input iris image directly affect the number of computations required at each layer. While the original image resolution offers more detailed and fine features, segmentation using a scaled-down version of the input could offer a significant reduction in the number of computations with limited effect on the segmentation accuracy. We explore three different scaling factors in this work, namely 1 (original resolution), 0.5, and 0.25. For instance, a scaling factor of 0.5 means that each spatial dimension of the input image is reduced by half.
• Number of layers: We explore FCN models with a wide-ranging number of layers for each dataset. The maximum number of layers explored is 18, as shown in Table 1. We obtain models with a smaller number of layers by removing layers in groups. For instance, removing the layers with group number 3 would result in a 14-layer network. However, we set a strict constraint that the spatial dimensions of the smallest feature maps in the models, namely the outputs of group 4, are kept fixed at 1/16 of the original dataset resolution.
• Number of feature maps/channels per layer: This parameter is denoted by the variable N in Table 1 and quadratically impacts the computational complexity of each FCN layer. For efficiency, we limit the maximum number of output feature maps to 64 in any layer. Starting from the baseline architecture, we experiment with four different values for N, which are {4, 8, 12, 16}.

However, several architectural choices are kept constant across all the models. For instance, the filter size of all convolution layers is kept fixed at 3 × 3 except for the last convolution layer, which is 1 × 1. The size is 4 × 4 for all transposed convolution layers. Non-strided convolution layers are padded to keep the spatial dimensions of the output feature maps the same as their inputs.
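The effect of the input scaling and channel-count parameters on computational cost can be sketched with a simple per-layer FLOP count. This is an illustrative estimate only: the helper names, the convention of counting one multiply-add as two FLOPs, and the 256 × 256 image size in the usage note are assumptions, not figures from the text.

```python
def conv_flops(h_out, w_out, c_in, c_out, k):
    """FLOPs of one k x k convolution, counting a multiply-add as 2 FLOPs
    (a counting convention assumed here) and ignoring the bias term."""
    return 2 * k * k * c_in * c_out * h_out * w_out


def interior_layer_flops(height, width, scale, n, k=3):
    """FLOPs of a stride-1, padded k x k layer with n input and n output
    channels, applied to an image scaled by `scale` per spatial dimension."""
    h = int(height * scale)
    w = int(width * scale)
    return conv_flops(h, w, n, n, k)
```

For a hypothetical 256 × 256 input, `interior_layer_flops(256, 256, 1, 16)` is four times `interior_layer_flops(256, 256, 0.5, 16)` and four times `interior_layer_flops(256, 256, 1, 8)`: halving the scaling factor or halving N each cuts the cost of such a layer by a factor of four. The quadratic dependence on N holds for layers whose input and output channel counts both scale with N; the first layer, whose input is the image itself, scales only linearly.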
Each candidate model is trained using the backpropagation algorithm with stochastic gradient descent (SGD) and momentum weight updates:

$$\Delta W_{t+1} = \beta\,\Delta W_t - \eta\,\nabla L(W)$$
$$W_{t+1} = W_t + \Delta W_{t+1}$$

where $\beta$ and $\eta$ are the momentum and learning rate respectively. For the loss function $L(W)$, we use the cross-entropy loss, where there are two output classes, iris and non-iris, for each pixel. This loss can be written as:

$$L(W) = -\frac{1}{c \times r}\sum_{i=1}^{c \times r}\big(y_i \log p_i + (1 - y_i)\log(1 - p_i)\big),$$

where $y_i \in \{0, 1\}$ and $p_i \in [0, 1]$ are the ground truth and predicted label for each pixel respectively. This loss function works well when the number of pixels in each class is roughly equal. In reality, most images captured for iris recognition contain a much smaller iris area compared to the non-iris area. Thus, we introduce an additional parameter to compensate for the disproportionality of the a priori probabilities of the two classes:

$$L(W) = -\frac{1}{c \times r}\sum_{i=1}^{c \times r}\big((1 - \alpha)(y_i \log p_i) + \alpha(1 - y_i)\log(1 - p_i)\big),$$

where $\alpha \in [0, 1]$ is the ratio of iris to non-iris area, precomputed from the training set.

Segmentation accuracy evaluations: We evaluate two well-known datasets in this work, namely CASIA Interval V4 [1] and IITD [20]. Figure 6 shows the F-measure performance and computational complexity, defined as the number of FLOPs required per inference, of the candidate FCN models evaluated. For each dataset, the models were trained on a training set, and the reported F-measures in Figure 6 are obtained using a disjoint test set. The training and validation sets are 80% of the original dataset, with the remaining 20% for the test set. For models using scaled-down images, each input is first downsized according to the scale factor. The output segmentation mask is then resized back to the original resolution before the F-measure is computed. We use the nearest-neighbor approach for both resizing operations. Note that in our architectural explorations, we train separate networks for the two datasets for fair comparisons with previous works. This does not limit the applicability of our models, as techniques such as domain adaptation [18] can be applied for new unseen datasets.

[Figure 6: F-measure segmentation accuracy and computational complexity of candidate FCN models on CASIA Iris Interval V4 and IITD datasets. Two panels (CASIA4 Iris Interval; IITD), each plotting F-measure (0.92 to 1) against FLOPs (10^6 to 10^9) for Scale=1, Scale=0.5, and Scale=0.25, with the Pareto front marked.] The scales refer to the ratio of the model input dimensions to the original image resolutions from the datasets. Smaller resolution inputs can significantly reduce the computational complexity of the models. We label models which make up the Pareto fronts as FCN0-FCN8 for CASIA4 and FCN9-FCN19 for IITD.

Table 2. Architectural descriptions for the FCN models which form the Pareto fronts in Figure 6 for the CASIA Interval V4 and IITD datasets. Scaling denotes the input image scaling, and N determines the number of channels as in Table 1. The architectures are shown as a list of group numbers, each of which corresponds to the layers shown in Table 1. The layers to the right of group number 4 belong to the decoder side, while those to the left of and including group number 4 belong to the encoder side. Similar to Table 1, there exist shortcut connections between the last encoder-side CONV layers and decoder-side TCONV layers for groups with the same group number, which are not shown here.

| Model (CASIA Interval V4) | Scaling/N | Architecture | Model (IITD) | Scaling/N | Architecture |
|---|---|---|---|---|---|
| FCN0 | 1/12 | 0-1-2-3-4-3-2-1-0 | FCN9 | 1/16 | 0-1-2-3-4-3-2-1-0 |
| FCN1 | 1/8 | 0-1-2-3-4-3-2-1-0 | FCN10 | 1/8 | 0-1-2-3-4-3-2-1-0 |
| FCN2 | 1/12 | 0-1-2-4-2-1-0 | FCN11 | 1/6 | 0-1-2-3-4-3-2-1-0 |
| FCN3 | 1/4 | 0-1-2-3-4-3-2-1-0 | FCN12 | 1/4 | 0-1-2-3-4-3-2-1-0 |
| FCN4 | 0.5/8 | 0-1-2-4-2-1-0 | FCN13 | 1/4 | 0-1-2-4-2-1-0 |
| FCN5 | 0.5/4 | 0-1-2-4-2-1-0 | FCN14 | 0.5/8 | 0-1-2-4-2-1-0 |
| FCN6 | 1/4 | 0-1-2-4-2-1-0 | FCN15 | 0.5/4 | 0-1-2-4-2-1-0 |
| FCN7 | 0.25/4 | 0-1-4-1-0 | FCN16 | 0.25/8 | 0-1-4-1-0 |
| FCN8 | 0.25/4 | 0-4-0 | FCN17 | 0.25/4 | 0-1-4-1-0 |
| | | | FCN18 | 0.25/8 | 0-4-0 |
| | | | FCN19 | 0.25/4 | 0-4-0 |

Table 3. Segmentation Accuracy Comparison to Previous Works

| DB | Method | R (µ) | R (σ) | P (µ) | P (σ) | F (µ) | F (σ) |
|---|---|---|---|---|---|---|---|
| CASIA4 Interval | GST [3] | 85.19 | 18 | 89.91 | 7.37 | 86.16 | 11.53 |
| CASIA4 Interval | Osiris [26] | 97.32 | 7.93 | 93.03 | 4.95 | 89.85 | 5.47 |
| CASIA4 Interval | WAHET [37] | 94.72 | 9.01 | 85.44 | 9.67 | 89.13 | 8.39 |
| CASIA4 Interval | CAHT [29] | 97.68 | 4.56 | 82.89 | 9.95 | 89.27 | 6.67 |
| CASIA4 Interval | Masek [25] | 88.46 | 11.52 | 89.00 | 6.31 | 88.30 | 7.99 |
| CASIA4 Interval | IrisSeg [12] | 94.26 | 4.18 | 92.15 | 3.34 | 93.10 | 2.65 |
| CASIA4 Interval | IDN [4] | 97.10 | 2.12 | 98.10 | 1.07 | 97.58 | 0.99 |
| CASIA4 Interval | Our FCN0 | 99.41 | 0.40 | 98.93 | 0.75 | 99.17 | 0.40 |
| IITD | GST [3] | 90.06 | 16.65 | 85.86 | 10.46 | 86.60 | 11.87 |
| IITD | Osiris [26] | 94.06 | 6.43 | 91.01 | 7.61 | 92.23 | 5.80 |
| IITD | WAHET [37] | 97.43 | 8.12 | 79.42 | 12.41 | 87.02 | 9.72 |
| IITD | CAHT [29] | 96.80 | 11.20 | 78.87 | 13.25 | 86.28 | 11.39 |
| IITD | Masek [25] | 82.23 | 18.74 | 90.45 | 11.85 | 85.30 | 15.39 |
| IITD | IrisSeg [12] | 95.33 | 4.58 | 93.70 | 5.33 | 94.37 | 3.88 |
| IITD | IDN [4] | 98.00 | 1.56 | 97.16 | 1.40 | 97.56 | 1.04 |
| IITD | Our FCN9 | 98.92 | 0.87 | 98.33 | 1.13 | 98.62 | 0.65 |

As illustrated in Figure 6, models with different F-measures can differ drastically in computational complexity.
For the two datasets, our architectural explorations result in models spanning three orders of magnitude in complexity, between 0.002 and 2 GFLOPs. The results also show that models using input sizes closer to the original resolution tend to perform slightly better; however, they are significantly more complex computationally than their lower-resolution counterparts. In addition, for each input size, the different architectural choices can lead to orders of magnitude difference in the number of computations as well as differences in segmentation accuracy. For both datasets, the accuracy of models using different input scalings saturates at different points, beyond which small additional accuracy improvements require orders of magnitude increases in complexity. This saturation behavior is also observed when all scaling factors are combined. We provide architectural descriptions of each model from the Pareto fronts (FCN0–FCN8 and FCN9–FCN19) for the two datasets in Table 2.

To compare the efficiency and segmentation performance of our models to previous works, we also evaluate each model using the full dataset. Table 3 shows the results from our best-performing model and those from previous works. The segmentation accuracies of other works reported in the table are obtained from IrisSeg [12] and IrisDenseNet (IDN) [4]. Previously, IrisSeg achieved better segmentation accuracy than other non-FCN segmentation methods such as GST [3], Osiris [26], Masek [25], WAHET [37], and CAHT [29]. This result was outperformed by the FCN-based segmentation method proposed by IDN from Arsalan et al. [4]. In comparison to the IDN model, which requires more than 100 GFLOPs per inference, both of our FCN architectures need less than 2 GFLOPs as shown in Table 2, which is 50× more efficient.
This large difference in computational overhead can be attributed to the fact that our network architectures are significantly shallower, with far fewer feature maps per layer. In addition, our models utilize a few shortcut connections instead of the costly dense connectivity.

4.2 Quantization to Dynamic Fixed-Point

As demonstrated by Hashemi et al. [14] and Tann et al. [35], reducing the data precision in DNNs can significantly lower the computational overheads of the models. With the Pareto front models identified in Figure 6, we co-design their data precision such that they can be run using lower-cost computational units on the targeted hardware platform. Since quantization is a time-consuming process, we do not target other models which are not on the Pareto fronts.

The numerical ranges of the weights and activations in DNN models can vary drastically between different layers. Previous works [13] have shown that even quantizing the weights and activations to a 16-bit uniform fixed-point format significantly degrades the accuracy of models in comparison to the original floating-point representation. In order to represent these different ranges using a small number of bits, we propose to quantize the FCN models to dynamic fixed-point (DFP) [7] for both the weights and activations. Within a layer, DFP behaves exactly like a normal fixed-point format. However, the radix location is allowed to vary between different layers for DFP. In this format, each layer in the FCN models is represented by five hyperparameters, namely $(w_{bw}, a_{bw}, w_{fl}, a_{in}, a_{out})$, for the bitwidths of the weights and activations/feature maps, and the fractional lengths of the weights, input feature maps, and output feature maps, respectively. We fix the bitwidths of both weights and activations of all the layers to be 8 bits.
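To make the per-layer fractional length concrete, the following sketch quantizes a list of values to signed 8-bit DFP. It is a hedged illustration rather than the quantization code used in this work: the function names are hypothetical, the rule "pick the largest fractional length for which nothing overflows" is one plausible reading of a no-overflow criterion, the search window of ±32 is arbitrary, and no fine-tuning is performed.

```python
def choose_fractional_length(values, bitwidth=8):
    """Largest fractional length such that no value overflows after rounding."""
    q_max = 2 ** (bitwidth - 1) - 1
    q_min = -(2 ** (bitwidth - 1))
    # Search from fine to coarse resolution; the +/-32 window is an assumption.
    for fl in range(32, -33, -1):
        scaled = [round(v * 2.0 ** fl) for v in values]
        if all(q_min <= s <= q_max for s in scaled):
            return fl
    raise ValueError("values do not fit in the searched range")


def quantize(values, fl, bitwidth=8):
    """Map real values to signed integers with `fl` fractional bits, saturating."""
    q_max = 2 ** (bitwidth - 1) - 1
    q_min = -(2 ** (bitwidth - 1))
    return [min(max(round(v * 2.0 ** fl), q_min), q_max) for v in values]


def dequantize(q_values, fl):
    """Recover the real values represented by the stored integers."""
    return [q * 2.0 ** -fl for q in q_values]


# Two hypothetical layers with very different numerical ranges.
layer_weights = [0.5, -0.25, 0.9]
layer_activations = [3.7, 12.0]

w_fl = choose_fractional_length(layer_weights)      # 7
a_fl = choose_fractional_length(layer_activations)  # 3
q_weights = quantize(layer_weights, w_fl)           # [64, -32, 115]
```

The two layers end up with different radix locations (7 versus 3 fractional bits) while both use 8-bit storage, which is the property that distinguishes DFP from a single uniform fixed-point format.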
In order to determine the proper fractional lengths for the weights and feature maps of each layer, we first profile the weights and activations of the trained floating-point models. For the weights, we select layer-wise fractional lengths such that no overflow occurs during quantization. For the activations, or feature maps, the profiling is done by using a randomly selected subset of the training data to perform forward passes with the models. During this inference process, we record the largest activation for each layer. Similar to the weights, we then select layer-wise fractional lengths such that there is no overflow. With these hyperparameters in place, we then quantize the floating-point models to DFP by employing a procedure similar to that of Hashemi et al. [13], using the straight-through estimator.

4.3 Hardware Acceleration of Iris Recognition Pipeline on Embedded SoC

So far, the majority of work on iris recognition focuses mostly on algorithmic designs such as segmentation and feature extraction. There exist only a few studies on the system design and implementation aspects. Hashemi et al. [14] and López et al. [23] implemented full recognition pipelines on an embedded FPGA platform and showed that careful parameter optimization and software-hardware partitioning are required to achieve acceptable runtime. For iris recognition with FCN-based segmentation, existing studies so far are only concerned with achieving state-of-the-art segmentation accuracy without considering the computational costs of the proposed designs. As such, full system analysis and implementation of these processing pipelines have not been demonstrated. In this section, we describe our implementation of the FCN-based iris recognition pipeline targeting an embedded FPGA SoC. We provide analysis of the system runtimes and bottlenecks. We also propose a hardware accelerator, which is able to achieve significant computational speedup relative to the onboard CPU core.
4.3.1 Runtime Profiles for Iris Recognition Pipeline. As an initial step, we implement the iris recognition pipeline in software running on the physical CPU core on the FPGA SoC. Our pipeline consists of four main modules, namely segmentation, contour fitting, normalization, and encoding. The segmentation step can be performed using different FCN models, which can lead to vastly different runtimes. On the other hand, the runtimes for the remaining three components stay approximately constant across different input images and FCN models. This is because the dimensions of the input and output images for these three modules are constant.

[Figure 7: stacked bar chart of normalized runtime (0 to 1) for FCN8 through FCN0, broken down into Segmentation, Circle Fitting, Normalization, and Encoding.]

Fig. 7. FCN-based iris recognition pipeline runtime breakdown for FCN0–FCN8 models from the CASIA Interval V4 Pareto front in Figure 6. From left to right, the FCN models are arranged in increasing computational complexity.

Table 4. Runtime profile for FCN inference using the onboard CPU.

Function      Init    Im2Col    GEMM     Activation (ReLU)
Percentage    1.31    10.58     80.77    7.34

With this setup, we profile the runtime of the different components in the pipeline, which is shown in Figure 7. Here, we observe that the majority of the runtime is spent in the segmentation stage. This is especially true for larger FCN models, where segmentation takes up more than 95% of the total runtime. Therefore, it is reasonable to focus our efforts on accelerating the segmentation component of the pipeline, which is essentially the inference process of the FCN model. To effectively speed up this operation, we next explore the runtime profiles for the FCN model components.

4.3.2 FCN Processing Components.
In this work, our FCN models are implemented and trained using the Darknet framework [30]. Each model consists of multiple layers with different computational requirements, and each layer consists of multiple components as listed in Table 4. Here, the Init function is responsible for ensuring that the output matrices are properly initialized and zeroed out. Note that Batch Normalization (BN) layers are used in training, but they are not shown here since the trained normalization parameters ($\mu$, $\sigma^2$, $\gamma$, $\beta$) can be folded into the network parameters at inference as follows:

$$\hat{w} = \gamma \cdot w / \sigma^2, \qquad \hat{b} = \gamma \cdot (b - \mu) / \sigma^2 + \beta,$$

where $w$ and $b$ are the trained weights and biases of the preceding convolution layer. With this, the forward computation can be carried out using $\hat{w}$ and $\hat{b}$ without the BN layers.

The Im2Col function is a standard operation which converts the input images/feature maps into column format. With this, the convolution operations can be carried out using a general matrix-to-matrix multiplication (GEMM) routine. For transposed convolution layers, a similar operation is used to convert column data to image format instead. The GEMM unit is essentially responsible for the multiplication of two matrices, the weights and the input feature maps. The results in Table 4 show that the GEMM unit is the most time-consuming portion, taking up more than 80% of the module runtime. The remaining 20% is spent mostly on Im2Col and the activation function, which is the rectified linear unit (ReLU) in this case.
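The Im2Col-then-GEMM formulation of convolution described above can be illustrated with a small, self-contained sketch. It is written in plain Python for readability and is not the Darknet implementation; the function names, the nested-list data layout, and the toy input are all assumptions made for illustration.

```python
def im2col(x, k, pad=0, stride=1):
    """Unroll a C x H x W input (nested lists) into a K x N matrix.

    K = C * k * k (one row per kernel tap), N = H_out * W_out (one column
    per output pixel). Positions that fall in the zero padding contribute 0.
    """
    c, h, w = len(x), len(x[0]), len(x[0][0])
    h_out = (h + 2 * pad - k) // stride + 1
    w_out = (w + 2 * pad - k) // stride + 1
    cols = []
    for ch in range(c):
        for ki in range(k):
            for kj in range(k):
                row = []
                for oi in range(h_out):
                    for oj in range(w_out):
                        i = oi * stride + ki - pad
                        j = oj * stride + kj - pad
                        inside = 0 <= i < h and 0 <= j < w
                        row.append(x[ch][i][j] if inside else 0)
                cols.append(row)
    return cols, h_out, w_out


def gemm(a, b):
    """Multiply an M x K matrix by a K x N matrix with a plain triple loop."""
    m, k, n = len(a), len(b), len(b[0])
    c = [[0] * n for _ in range(m)]
    for i in range(m):
        for j in range(n):
            s = 0
            for kk in range(k):
                s += a[i][kk] * b[kk][j]
            c[i][j] += s
    return c


# Toy example: one 3x3 input channel and one 2x2 filter that adds the
# top-left and bottom-right taps of each window.
image = [[[1, 2, 3],
          [4, 5, 6],
          [7, 8, 9]]]
weights = [[1, 0, 0, 1]]              # M = 1 filter, K = 1 * 2 * 2 = 4

columns, h_out, w_out = im2col(image, k=2)
output = gemm(weights, columns)       # [[6, 8, 12, 14]], i.e. a 2 x 2 map
```

Once the input is unrolled, every convolution layer reduces to a single matrix product of shape (M × K) by (K × N), which is why the GEMM routine dominates the runtime profile.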
[Figure 8: block diagram. The Application Processing Unit (ARM CPUs, FPUs, NEON, L1 cache, L2 cache and controller, snoop controller, OCM, DMA, central interconnect, GP ports, I/O) connects through the ACP to the Zynq programmable logic, which holds the GEMM accelerator with Buffer A, Buffer B, Buffer C, and the processing engines. Each processing engine multiplies A[0]·B[0] through A[n]·B[n] in parallel and sums the products in an adder tree. The code synthesized into hardware is:]

    for (i = 0; i < M; ++i) {
        for (j = 0; j < N; ++j) {
            Dtype sum = 0;
            for (k = 0; k < K; ++k) {
                sum += A[i][k] * B[k][j];
            }
            C[i][j] += sum;
        }
    }

Fig. 8. Overall system integration and the hardware accelerator module for the GEMM unit. The code representing the operations of the hardware module is shown in the bottom left, where A and B are the multiplicand and multiplier matrices, and C is the resulting output matrix. For the DFP version of the accelerator, A and B are 8-bit, and C is 16-bit. A, B, and C are all 32-bit floats for the floating-point version. The accelerator module is connected to the Zynq Processor Unit via the Accelerator Coherency Port (ACP).

The resources on board the SoC allow for multiple choices for accelerating the pipeline, including parallelization and vectorization using the embedded CPU cores and a custom hardware accelerator on the programmable logic (PL) fabric. In comparison to the PL, parallelization and vectorization on the CPU offer a limited number of arithmetic processing units; however, accelerators on the PL side can face challenges from the limited on-chip buffer and memory bandwidths. Thus, in order to efficiently utilize the available hardware resources, we leave the control logic and the memory-access-intensive component, Im2Col, in software and move the computationally intensive module, GEMM, to the PL by synthesizing a custom accelerator. For the activation function, we process it using the CPU core in parallel with the accelerator unit.
Next, we describe our accelerator architecture in detail.

4.3.3 Hardware Accelerator Architecture. For FCN models, the GEMM operation is carried out in every layer between the weight and input feature matrices. The dimensions of the two matrices can be represented by a 3-tuple, (M, K, N), where the weight matrix is M × K, and the input feature matrix is K × N. The output feature matrix is then M × N. Between different layers of an FCN model, (M, K, N) vary significantly depending on the sizes and number of the input and output feature maps. Evidence of this can be observed in our network architecture shown in Table 2 for CASIA Interval V4. In this architecture, after the Im2Col operation, the (M, K, N) dimensions would be (16, 9, 76800) for Layer 1, whereas for Layer 2, these dimensions become (32, 144, 19200). Among FCN models which use different input image scaling factors, these dimensional differences are even more drastic. As such, the accelerator unit must be able to accommodate these dimensional variations and maximize utilization across all the models explored.

Figure 8 shows the overall system integration and the architecture of the accelerator core. We implement tiling buffers for the weights (Buffer A), input features (Buffer B), and output features (Buffer C). The sizes of these buffers are selected based on the greatest common divisor among the models. For the candidate models in Figure 6, these turn out to be 8 × 9 for matrix A, 9 × 224 for B, and finally 8 × 224 for matrix C. Note that, since we do not target a specific model, the sizes for A, B, and C may not be optimal for any specific architecture. In final system deployment, such dimensions can be further optimized according to the chosen FCN model.

[Figure 9: data paths of the DFP accelerator. Buffers A and B (8-bit) feed the processing engine through the ACP; Buffer C holds 16-bit partial results; a Shift & Saturate stage converts the 16-bit results to 8-bit outputs.]

Fig. 9. A closer look at the data paths of the buffers in the DFP accelerator unit.

We used Vivado High-Level Synthesis (HLS) to develop the GEMM accelerator, which is connected to external memory via an AXI4-Full interface to the Accelerator Coherency Port (ACP). A DMA is used to communicate with the ACP and fill the accelerator buffers. Here, we use the ARM CPUs as the control unit through a separate AXI-Lite connection. The CPU is responsible for preparing and feeding the correct addresses of the input and output matrices as well as sending the start signal. Once this start signal is received, the accelerator unit accesses the input matrices, performs computations, and writes the output matrix to the designated address in the DDR RAM.

The accelerator in Figure 8 utilizes nine parallel floating-point multipliers, each of which is connected to different banks of block RAM containing portions of the input from matrices A and B. This matrix partitioning helps improve the throughput of the design. The outputs of the multipliers are then summed together using an adder tree consisting of 9 adders. If the output is a partial sum, it is written to Buffer C for accumulation until completion before being written back to the DRAM. For the floating-point version, all the datapaths and buffers are 32 bits wide. For the DFP version, Figure 9 provides a closer look at the datapaths. Since the DFP representation may result in different radix-point locations for the feature maps between different FCN layers, we need to shift the output results accordingly. Afterward, the output feature maps are converted to 8-bit and saturated if necessary.

5 EXPERIMENTAL RESULTS

In this section, we discuss the segmentation and recognition performance of our proposed processing pipeline.
We also report the runtime performance for the embedded FPGA implementation, the speedup achieved using our hardware accelerator, and a comparison to an embedded GPU.

5.1 Experimental Setup

All of our experiments are performed using two well-known and publicly available iris datasets, CASIA Interval V4 [1] and IITD [20]. Both datasets are captured using near-infrared range sensors and reflect real-world deployment conditions. The ground truth segmentation masks used in all of our experiments are obtained from IRISSEG-EP [16]. We use the segmentation from Operator A for the CASIA Interval V4 dataset. For FCN training and deployment, we use the Darknet framework [30]. We fully implement our processing pipeline on the ZedBoard with a Xilinx Zynq 7020 FPGA SoC, built on a 28nm process node, and 512MB of DDR3 memory. The chip contains two ARM Cortex A9 cores and programmable logic fabric with 85K logic cells and 4.9Mb of block RAM. We also implement the flow on an embedded GPU, namely the NVIDIA Jetson TX1, for comparison. The TX1 SoC has 4 ARM Cortex A57 cores and 256 Maxwell CUDA cores, all on a 20nm process node, and 4GB of LPDDR4 memory. For both systems, our iris recognition flow is run inside an embedded Linux operating system.

5.2 Recognition Performance Comparisons to Previous Works

While isolated evaluation of FCN models using the segmentation accuracy can be helpful in narrowing down to the most efficient set of models, it is not a sufficient indicator of the true overall recognition performance. The true trade-off between FCN model computational complexity and recognition performance can only be analyzed using an end-to-end flow. That is, each model must be evaluated based on performance metrics such as the equal error rate (EER) and its receiver operating characteristic (ROC).
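As a concrete illustration of the EER metric, the sketch below estimates it from two lists of matching distances. It is a simplified example and not the evaluation code behind the reported results: the function names and the toy scores are hypothetical, a lower distance is assumed to mean a better match, and the EER is approximated at the threshold where the false acceptance rate (FAR) and false rejection rate (FRR) are closest.

```python
def far_frr(genuine, impostor, threshold):
    """FAR and FRR when a pair is accepted if its distance <= threshold."""
    far = sum(d <= threshold for d in impostor) / len(impostor)
    frr = sum(d > threshold for d in genuine) / len(genuine)
    return far, frr


def equal_error_rate(genuine, impostor):
    """Approximate the EER by sweeping every observed distance as a threshold.

    genuine:  distances from intra-class comparisons (same iris)
    impostor: distances from inter-class comparisons (different irises)
    Returns (eer, threshold) at the point where |FAR - FRR| is smallest.
    """
    best = None
    for t in sorted(set(genuine) | set(impostor)):
        far, frr = far_frr(genuine, impostor, t)
        gap = abs(far - frr)
        if best is None or gap < best[0]:
            best = (gap, (far + frr) / 2.0, t)
    return best[1], best[2]


# Hypothetical distances; real evaluations use thousands to millions of pairs.
genuine_scores = [0.10, 0.20, 0.30, 0.45]
impostor_scores = [0.40, 0.50, 0.60, 0.70]
eer, threshold = equal_error_rate(genuine_scores, impostor_scores)
# eer == 0.25 at threshold == 0.4 for these toy scores
```

Sweeping the threshold and plotting FRR against FAR at each step yields the ROC curve, and the EER is the point on that curve where the two error rates coincide.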
Since end-to-end evaluation on all models explored is extremely time consuming, we select only the models from the Pareto fronts in Figure 6, which represent the most efficient models across the segmentation accuracy levels. The models on the Pareto fronts are labeled FCN0–FCN8 and FCN9–FCN19 for the CASIA Interval V4 and IITD datasets, respectively. For each dataset, the labels are in decreasing order of computational complexity as well as segmentation accuracy.

To evaluate the recognition performance of each FCN model, we perform all possible combinations of intra-class matchings, which compare different instances of the same iris, and inter-class matchings. For CASIA Interval V4, this results in approximately 9K intra-class and 6.9M inter-class comparisons. For IITD, approximately 4.8K intra-class and 5M inter-class comparisons are performed. In each matching, the Hamming distance (HD) is computed as described in Section 3.2.

Figure 10 shows the ROC curves for the two datasets. Here, the ground truth results are obtained by using the segmentation from IRISSEG-EP [16] along with the rest of our flow, which includes contour fitting, normalization, and encoding. As evidenced here, our best-performing models achieve ROC curves close to the ground truth. The EER along with the F-measure results for the models are reported in Table 5. We also provide a comparison to previous methods, CAHT [29] and IrisSeg [12]. We observe that the ground truth EER for each dataset computed using our flow is slightly lower than that reported in IrisSeg. While we cannot provide an exact explanation for this result without full access to their experimental setup, we suspect that our contour fitting step might be the reason for the difference, since both studies use Daugman's normalization and encoding methods.

The results in Table 5 show that a few of our FCN models in each dataset outperform previous state-of-the-art EER results from IrisSeg [12].
For CASIA Interval V4, FCN0–FCN3 outperform IrisSeg, with FCN0 reducing the EER by almost half. For the IITD dataset, FCN9–FCN11 surpass the previous methods, with FCN9 reducing the EER by more than half. However, it is interesting to note that some of our models achieve significantly higher segmentation accuracy than both CAHT and IrisSeg while, at the same time, underperforming the previous methods in recognition performance. This discrepancy can be attributed to the nature of FCN-based segmentation, which does not strongly account for fine-grained labeling of the pupil and limbic boundaries. This problem can throw off the contour fitting module in the next stage, producing inaccurate center points and radii. This highlights the necessity of evaluating FCN-based designs using an end-to-end flow rather than segmentation accuracy alone. In future work, this problem may be remedied by assigning a larger loss to boundary pixels in comparison to other pixels.

Further evidence for the necessity of end-to-end evaluation is found between FCN9 and FCN10, where the model with more than 3× the computational complexity and higher segmentation accuracy performs worse in overall recognition performance. This observation also holds between FCN12 and FCN13. Figure 10 also verifies this observation, where the ROC curves for FCN10 and FCN13 fall below those of FCN9 and FCN12, respectively.

[Figure 10: two ROC plots, "ROC for IITD" (Ground Truth, FCN9–FCN19) and "ROC for CASIA Interval V4" (Ground Truth, FCN0–FCN8). Axes: FAR (0 to 0.35) and FRR (0 to 0.35).]

Fig. 10. Receiver Operating Characteristic (ROC) curves of FCN-based iris recognition pipelines with ground truth segmentation and different FCN models for the CASIA Interval V4 and IITD datasets. The X-axis shows the false acceptance rate, and the Y-axis shows the false rejection rate. In the legend of each dataset, the FCN models are arranged in increasing FLOPs from bottom to top. The zoom-in axis range is [0 0.02] for both x and y directions.

5.3 Comparisons between DFP and Floating-Point

Table 6 shows the segmentation accuracy and end-to-end recognition rate comparisons between our floating-point FCN-based pipelines and their DFP counterparts. The DFP version of each FCN model is obtained by analyzing and fine-tuning the trained floating-point weights. From the results in the table, it is evident that the quantization process negatively impacts the segmentation accuracy of the models. However, in many cases, the quantization in fact improves the overall recognition rates. For instance, for FCN11 and FCN13, the EER improves significantly after the quantization to DFP.

Table 5. Equal Error Rate (EER) and segmentation accuracy (F-measure) comparison between previous approaches, our FCN-based pipeline, and ground truth (GT). In each dataset, FCN models are arranged in increasing FLOPs and F-measure from top to bottom.

CASIA Interval V4
Approach        EER (%)    F-measure    GFLOPs
CAHT [29]       0.78       89.27        –
IrisSeg [12]    0.62       93.10        –
FCN5            0.94       98.75        0.016
FCN4            0.64       98.93        0.060
FCN3            0.50       99.06        0.132
FCN2            0.43       99.09        0.380
FCN1            0.42       99.14        0.513
FCN0            0.38       99.17        1.143
GT              0.31       –            –

IITD
Approach        EER (%)    F-measure    GFLOPs
CAHT [29]       0.68       86.28        –
IrisSeg [12]    0.50       94.37        –
FCN16           6.96       97.94        0.014
FCN15           1.13       98.15        0.038
FCN14           0.82       98.24        0.054
FCN13           0.50       98.35        0.117
FCN12           0.60       98.38        0.154
FCN11           0.41       98.50        0.335
FCN10           0.19       98.59        0.453
FCN9            0.29       98.62        1.791
GT              0.16       –            –

Table 6. Equal Error Rate (EER) and segmentation accuracy (F-measure) comparison between the ground truth (GT), floating-point, and DFP FCN-based recognition pipelines using the IITD dataset.

         Floating-Point            DFP
Model    EER (%)    F-measure      EER (%)    F-measure
FCN13    0.50       98.35          0.46       97.23
FCN12    0.60       98.38          0.68       96.49
FCN11    0.41       98.50          0.22       97.24
FCN10    0.19       98.59          0.23       96.97
FCN9     0.29       98.62          0.37       97.14
GT       0.16       –              –          –

5.4 Runtime Performance and Hardware Acceleration Speedup

We report the runtime performance of our FCN-based iris recognition pipelines using various FCN models in Figure 11. Due to space constraints, we only report results for FCN9–FCN16 for the IITD dataset. Similar trends and conclusions are observed for FCN0–FCN8 for the CASIA4 Interval dataset. Each runtime result is composed of four components, namely segmentation, contour fitting, normalization, and encoding. For each FCN model, we report results for three configurations, namely pure software, vectorized software, and a hardware-accelerated design using our custom accelerator. As discussed in Section 4.3, contour fitting, normalization, and encoding are always run in pure software. For contour fitting, there are small variations between different input images and FCN models; however, the average runtime is approximately constant across the different runs. Hence, the bulk of the differences among the pipelines stems from the segmentation runtimes using different FCN models.

5.4.1 Runtime Results for FPGA SoC. In comparison to non-vectorized software, vectorization using the NEON instructions allows between 2.5× and 2.8× speedup. Using our accelerator design, we achieve between 2.4× and 6.6× speedup. We observe that higher speedup is realized for larger FCN models, since the fraction of runtime spent in segmentation far exceeds that of the other components.
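The dependence of the end-to-end speedup on the segmentation share of the runtime follows the familiar Amdahl's-law relationship. The sketch below is a simplified model, not a reproduction of the measured results: it assumes only the segmentation stage is accelerated, and the fractions and the 8× stage speedup used in the example are hypothetical values chosen for illustration.

```python
def overall_speedup(seg_fraction, seg_speedup):
    """Amdahl's law: pipeline speedup when only segmentation is accelerated.

    seg_fraction: share of the original runtime spent in segmentation (0..1)
    seg_speedup:  speedup applied to the segmentation stage alone
    """
    unaccelerated = 1.0 - seg_fraction
    return 1.0 / (unaccelerated + seg_fraction / seg_speedup)


# Hypothetical comparison: the same 8x stage speedup applied to a large model
# (segmentation dominates) and a small model (other stages matter more).
large_model = overall_speedup(seg_fraction=0.95, seg_speedup=8.0)
small_model = overall_speedup(seg_fraction=0.60, seg_speedup=8.0)
```

Under these assumptions the large model gains considerably more from the same accelerator, because the software-only stages (contour fitting, normalization, and encoding) bound the achievable speedup of the small model.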
For the hardware-accelerated implementation, the runtimes of different FCN pipelines vary by up to two orders of magnitude, ranging from 0.05s to 5.3s.

The resource utilization of our accelerators is reported in Table 7, and the floorplans of the designs are shown in Figure 12. As discussed earlier, since our target models vary significantly in architecture and computational requirements, we implement the accelerators using only the greatest common divisor among them, which explains the low resource utilization. However, with this design, we demonstrate that significant speedup can be achieved while only utilizing a fraction of the available resources. Once a specific model is chosen, a potentially larger speedup can be achieved by optimizing the accelerator design and parameters.

Fig. 11. Runtime results for end-to-end FCN-based iris recognition pipelines based on different FCN segmentation models for the IITD dataset. Five platform configurations are reported: pure non-vectorized floating-point software (CPU Float), vectorized floating-point and fixed-point software using ARM NEON instructions (CPU Vectorized Float, CPU Vectorized DFP), and hardware accelerated with floating-point and DFP accelerators (CPU+Accelerator Float, CPU+Accelerator DFP). The speedup relative to SW Float is reported on top of each bar.

Table 7. FPGA resource utilization for the floating-point and DFP accelerators. The resources include Look-up Tables (LUT), LUT as memory (LUTRAM), Flip-Flop Registers, Block RAM (BRAM), Digital Signal Processing units (DSP), and Global Clock Buffers (BUFG).

                  LUT    LUTRAM    Flip-Flop    BRAM    DSP    BUFG
Floating Point    15%    3%        9%           5%      21%    3%
DFP               13%    2%        7%           5%      5%     3%
As expected, we observe that overall the floating-point accelerator consumes more resources than its DFP counterpart. Specifically, the floating-point accelerator requires 4× more DSP resources than the fixed-point one. The difference in LUT counts is smaller, which is due to the shifting and saturation logic required in the DFP accelerator. For BRAM, the two accelerators utilize the same amount, since we require multiple ports for parallel multiplications and accumulations.

5.4.2 Comparison with Embedded GPU. For comparison, we also implement our iris recognition pipeline on a Jetson TX1 embedded GPU platform. Table 8 provides the runtime comparisons for the end-to-end flow between the embedded FPGA and GPU systems. The results show that the GPU performs significantly better than the FPGA platform for larger models such as FCN9 and FCN10. This performance difference can be attributed to the higher operating frequency and more computational resources, such as cores and memory bandwidth, on the GPU platform. This, however, results in the GPU consuming more than double the power of the FPGA platform. In this case, the platform of choice therefore depends on the runtime and energy constraints of the target deployment. For smaller models, surprising runtime results are observed for the GPU platform. From FCN11 to FCN13, the runtime did not decrease as the models became simpler. Our profiling using NVIDIA's nvprof and Nsight Systems shows that most of the runtime is spent in GPU memory allocation and movement. This results in the GPU having better energy efficiency for larger models but significantly lower efficiency for smaller ones. However, an important note is that the GPU SoC was fabricated with the more recent 20nm process node, which means that at the same 28nm technology node as the FPGA system, the GPU would consume more energy than the results reported in Table 8.
[Figure 12: two FPGA floorplans, (a) Floating-Point and (b) Dynamic Fixed-Point (DFP, 8x9 * 9x224), each showing the ARM processors, the GEMM accelerator, the interconnect, and the I/O, timer, and interface blocks.]

Fig. 12. FPGA floorplans of our synthesized accelerators and system modules.

Table 8. Runtime (in seconds) and energy (in joules) comparison for end-to-end iris recognition flows between embedded FPGA and GPU platforms. The best runtime result for each model is shown in bold. The GPU SoC is based on a 20nm process node and the FPGA SoC is based on 28nm.

         CPU+Accel (Float)            CPU+Accel (DFP)              CPU+GPU (Float)
Model    Runtime (s)    Energy (J)    Runtime (s)    Energy (J)    Runtime (s)    Energy (J)
FCN13    0.67           3.35          0.57           2.85          0.77           11.55
FCN12    0.89           4.45          0.78           3.90          0.79           11.85
FCN11    1.79           8.95          1.51           7.55          0.76           11.4
FCN10    1.73           8.65          1.43           7.15          0.83           12.5
FCN9     5.32           26.6          4.20           21.0          1.06           15.9

6 CONCLUSION

In this work, we proposed an end-to-end iris recognition application with FCN-based segmentation. Through our profiling of the overall processing pipeline, we identified that the majority of the runtime is spent on the segmentation step, which is the FCN inference. Targeting this processing stage, we introduced a three-step SW/HW co-design methodology to cut down its runtime. First, we introduced a design space exploration for the FCN architecture to select the most efficient set of models. The exploration was performed through a grid search on several architectural parameters, including the spatial dimensions of the input image. For each architecture, we evaluated its segmentation accuracy as well as the computational overheads of each FCN model. We then identified the most efficient set of models, which formed a Pareto front.
Compared to the FCN architectures from previous works, our best-performing models set new state-of-the-art segmentation accuracy on two well-known datasets, namely CASIA Iris Interval V4 and IITD, while being 50× more resource efficient. Furthermore, we evaluated the true recognition rate of each model using the end-to-end pipeline and showed that the models outperformed the recognition rate from previous works on the two datasets. Our architectural exploration in this design process showed that a small EER increase of 0.7% can be traded off for orders of magnitude reduction in computational complexity and latency. With this set of models, we co-designed their datatype to dynamic fixed-point formats for hardware-friendly execution. Finally, we introduced a novel FPGA-based dynamic fixed-point accelerator and demonstrated a full implementation of an accelerated processing flow on an embedded FPGA SoC. We also synthesized a floating-point version of the accelerator for runtime and resource comparisons. In comparison to the onboard CPU, our accelerator achieves up to 8.3× speedup for the overall pipeline while using only a small fraction of the available FPGA resources. In addition, we provided comparisons between the FPGA system and an embedded GPU, showing the different benefits of the two platforms and interesting insights for smaller FCN models. We release a MATLAB version of our iris recognition flow on GitHub (see footnote 1).

The design methodology proposed in this work opens many doors for research on end-to-end systems similar to the demonstrated iris recognition. Future work includes further FCN optimization through pruning, accelerator support for sparse matrices, and improved contour fitting for non-circular irises using active contours.

ACKNOWLEDGMENTS

We would like to thank Dr.
Soheil Hashemi for helpful discussions. We also thank NVIDIA Corporation for the generous GPU donation. This work is supported by NSF grant 1814920.

1 https://github.com/scale-lab/FCNiris

To appear in ACM Journal on Emerging Technologies in Computing Systems 2019

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

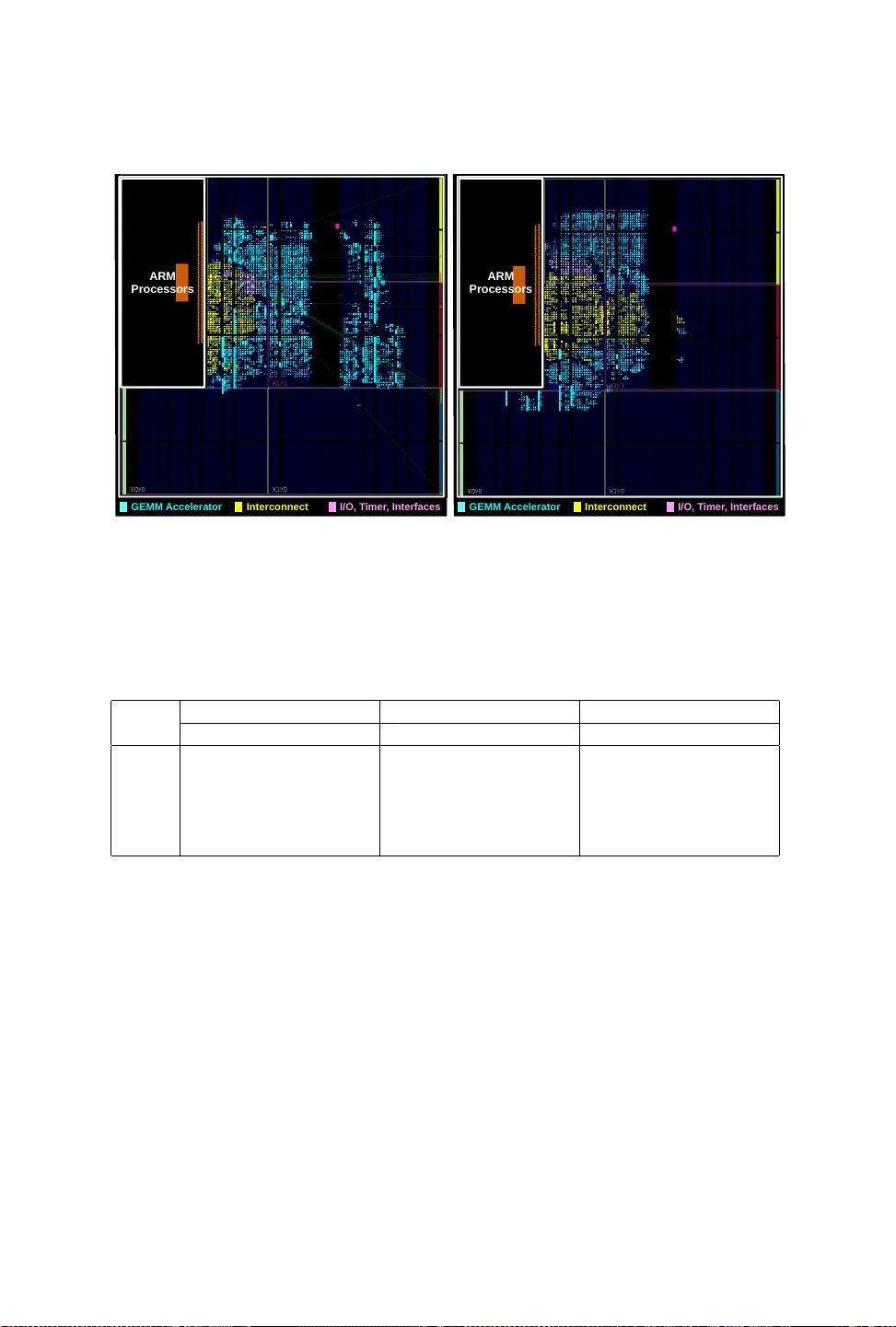

Leave a Comment