Towards Multimodal Emotion Recognition in German Speech Events in Cars using Transfer Learning

The recognition of emotions by humans is a complex process which considers multiple interacting signals such as facial expressions and both prosody and semantic content of utterances. Commonly, research on automatic recognition of emotions is, with f…

Authors: Deniz Cevher, Sebastian Zepf, Roman Klinger

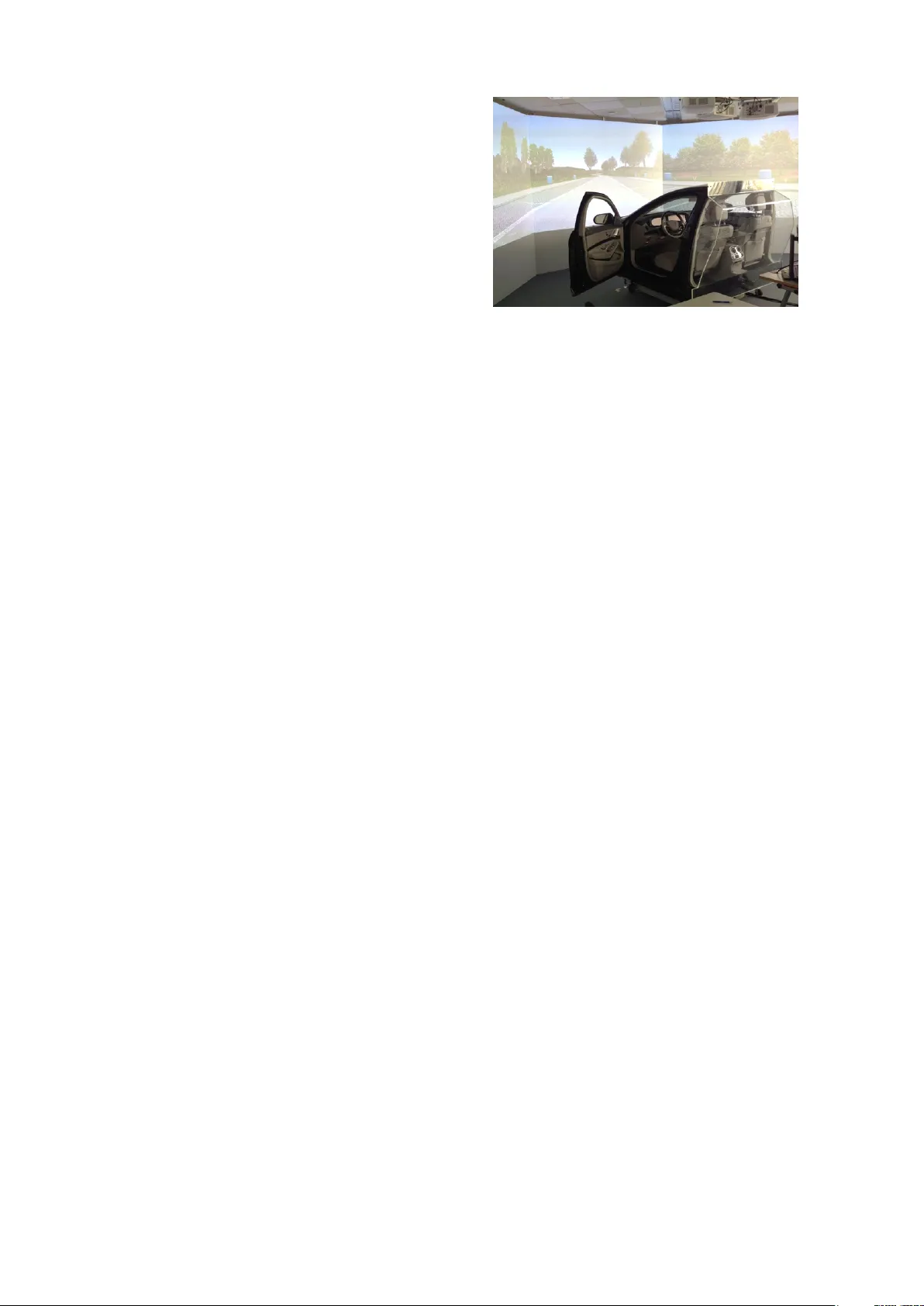

Deniz Cevher¹,²,∗, Sebastian Zepf¹,∗ and Roman Klinger²
¹ Mercedes-Benz Research & Development, Daimler AG, Sindelfingen, Germany
² Institut für Maschinelle Sprachverarbeitung, University of Stuttgart, Germany
{firstname.lastname}@daimler.com
{firstname.lastname}@ims.uni-stuttgart.de

Abstract

The recognition of emotions by humans is a complex process which considers multiple interacting signals such as facial expressions and both prosody and semantic content of utterances. Commonly, research on automatic recognition of emotions is, with few exceptions, limited to one modality. We describe an in-car experiment for emotion recognition from speech interactions for three modalities: the audio signal of a spoken interaction, the visual signal of the driver's face, and the manually transcribed content of utterances of the driver. We use off-the-shelf tools for emotion detection in audio and face and compare that to a neural transfer learning approach for emotion recognition from text which utilizes existing resources from other domains. We see that transfer learning enables models based on out-of-domain corpora to perform well. This method contributes up to 10 percentage points in F1, with up to 76 micro-average F1 across the emotions joy, annoyance and insecurity. Our findings also indicate that off-the-shelf tools analyzing face and audio are not ready yet for emotion detection in in-car speech interactions without further adjustments.

1 Introduction

Automatic emotion recognition is commonly understood as the task of assigning an emotion to a predefined instance, for example an utterance (as audio signal), an image (for instance with a depicted face), or a textual unit (e.g., a transcribed utterance, a sentence, or a Tweet).
The set of emotions often follows the original definition by Ekman (1992), which includes anger, fear, disgust, sadness, joy, and surprise, or the extension by Plutchik (1980), who adds trust and anticipation.

∗ The first two authors contributed equally.

Most work in emotion detection is limited to one modality. Exceptions include Busso et al. (2004) and Sebe et al. (2005), who investigate multimodal approaches combining speech with facial information. Emotion recognition in speech can utilize semantic features as well (Anagnostopoulos et al., 2015). Note that the term "multimodal" is also used beyond the combination of vision, audio, and text. For example, Soleymani et al. (2012) use it to refer to the combination of electroencephalogram, pupillary response and gaze distance.

In this paper, we deal with the specific situation of car environments as a testbed for multimodal emotion recognition. This is an interesting environment since it is, to some degree, a controlled environment: Dialogue partners are limited in movement, the degrees of freedom for occurring events are limited, and several sensors which are useful for emotion recognition are already integrated in this setting. More specifically, we focus on emotion recognition from speech events in a dialogue with a human partner and with an intelligent agent.

Also from the application point of view, the domain is a relevant choice: Past research has shown that emotional intelligence is beneficial for human computer interaction. Properly processing emotions in interactions increases the engagement of users and can improve performance when a specific task is to be fulfilled (Klein et al., 2002; Coplan and Goldie, 2011; Partala and Surakka, 2004; Pantic et al., 2005).
This is mostly based on the aspect that machines communicating with humans appear to be more trustworthy when they show empathy and are perceived as being natural (Partala and Surakka, 2004; Brave et al., 2005; Pantic et al., 2005).

Virtual agents play an increasingly important role in the automotive context and the speech modality is increasingly being used in cars due to its potential to limit distraction. It has been shown that adapting the in-car speech interaction system according to the drivers' emotional state can help to enhance security, performance as well as the overall driving experience (Nass et al., 2005; Harris and Nass, 2011).

With this paper, we investigate how each of the three considered modalities, namely facial expressions, utterances of a driver as an audio signal, and transcribed text, contributes to the task of emotion recognition in in-car speech interactions. We focus on the five emotions of joy, insecurity, annoyance, relaxation, and boredom, since terms corresponding to so-called fundamental emotions like fear have been shown to be associated with emotional states that are too strong for the in-car context (Dittrich and Zepf, 2019). Our first contribution is the description of the experimental setup for our data collection. Aiming to provoke specific emotions with situations which can occur in real-world driving scenarios and to induce speech interactions, the study was conducted in a driving simulator. Based on the collected data, we provide baseline predictions with off-the-shelf tools for face and speech emotion recognition and compare them to a neural network-based approach for emotion recognition from text. Our second contribution is the introduction of transfer learning to adapt models trained on established out-of-domain corpora to our use case. We work on German language, therefore the transfer consists of a domain and a language transfer.
2 Related Work

2.1 Facial Expressions

A common approach to encode emotions for facial expressions is the facial action coding system FACS (Ekman and Friesen, 1978; Sujono and Gunawan, 2015; Lien et al., 1998). As the reliability and reproducibility of findings with this method have been critically discussed (Mesman et al., 2012), the trend has increasingly shifted to perform the recognition directly on images and videos, especially with deep learning. For instance, Jung et al. (2015) developed a model which considers temporal geometry features and temporal appearance features from image sequences. Kim et al. (2016) propose an ensemble of convolutional neural networks which outperforms isolated networks.

In the automotive domain, FACS is still popular. Ma et al. (2017) use support vector machines to distinguish happy, bothered, confused, and concentrated based on data from a natural driving environment. They found that bothered and confused are difficult to distinguish, while happy and concentrated are well identified. Aiming to reduce computational cost, Tews et al. (2011) apply a simple feature extraction using four dots in the face defining three facial areas. They analyze the variance of the three facial areas for the recognition of happy, anger and neutral. Ihme et al. (2018) aim at detecting frustration in a simulator environment. They induce the emotion with specific scenarios and a demanding secondary task and are able to associate specific face movements according to FACS. Paschero et al. (2012) use OpenCV (https://opencv.org/) to detect the eyes and the mouth region and track facial movements. They simulate different lighting conditions and apply a multilayer perceptron for the classification task of Ekman's set of fundamental emotions.

Overall, we found that studies using facial features usually focus on continuous driver monitoring, often in driver-only scenarios.
In contrast, our work investigates the potential of emotion recognition during speech interactions.

2.2 Acoustic

Past research on emotion recognition from acoustics mainly concentrates on either feature selection or the development of appropriate classifiers. Rao et al. (2013) as well as Ververidis et al. (2004) compare local and global features in support vector machines. Next to such discriminative approaches, hidden Markov models are well-studied; however, there is no agreement on which feature-based classifier is most suitable (El Ayadi et al., 2011). Similar to the facial expression modality, efforts to apply deep learning to acoustic speech processing have recently increased. For instance, Lee and Tashev (2015) use a recurrent neural network and Palaz et al. (2015) apply a convolutional neural network to the raw speech signal. Neumann and Vu (2017) as well as Trigeorgis et al. (2016) analyze the importance of features in the context of deep learning-based emotion recognition.

In the automotive sector, Boril et al. (2011) approach the detection of negative emotional states within interactions between driver and co-driver as well as in calls of the driver towards the automated spoken dialogue system. Using real-world driving data, they find that the combination of acoustic features and their respective Gaussian mixture model scores performs best. Schuller et al. (2006) collect 2,000 dialog turns directed towards an automotive user interface and investigate the classification of anger, confusion, and neutral. They show that automatic feature generation and feature selection boost the performance of an SVM-based classifier. Further, they analyze the performance under systematically added noise and develop methods to mitigate negative effects. For more details, we refer the reader to the survey by Schuller (2018).
In this work, we explore the straightforward application of domain-independent software to an in-car scenario without domain-specific adaptations.

2.3 Text

Previous work on emotion analysis in natural language processing focuses either on resource creation or on emotion classification for a specific task and domain. On the side of resource creation, the early and influential work of Pennebaker et al. (2015) provides a dictionary of words associated with different psychologically relevant categories, including a subset of emotions. Another popular resource is the NRC dictionary by Mohammad and Turney (2012). It contains more than 10000 words for a set of discrete emotion classes. Other resources include WordNet Affect (Strapparava and Valitutti, 2004), which distinguishes particular word classes. Further, annotated corpora have been created for a set of different domains, for instance fairy tales (Alm et al., 2005), blogs (Aman and Szpakowicz, 2007), Twitter (Mohammad et al., 2017; Schuff et al., 2017; Mohammad, 2012; Mohammad and Bravo-Marquez, 2017a; Klinger et al., 2018), Facebook (Preoţiuc-Pietro et al., 2016), news headlines (Strapparava and Mihalcea, 2007), dialogues (Li et al., 2017), literature (Kim et al., 2017), or self reports on emotion events (Scherer, 1997) (see Bostan and Klinger (2018) for an overview).

To automatically assign emotions to textual units, the application of dictionaries has been a popular approach and still is, particularly in domains without annotated corpora. Another approach to overcome the lack of huge amounts of annotated training data in a particular domain or for a specific topic is to exploit distant supervision: use the signal of occurrences of emoticons or specific hashtags or words to automatically label the data. This is sometimes referred to as self-labeling (Klinger et al., 2018; Pool and Nissim, 2016; Felbo et al., 2017; Wang et al., 2012).
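To make the idea of self-labeling concrete, the following is a minimal sketch in Python of hashtag-based distant supervision. It is not taken from any of the cited systems: the cue list `HASHTAG_TO_EMOTION` and the function name `self_label` are hypothetical, and a real study would choose its own cues and filtering rules.

```python
import re

# Hypothetical cue list for illustration; real studies define their own hashtags.
HASHTAG_TO_EMOTION = {
    "#anger": "anger",
    "#fear": "fear",
    "#happy": "joy",
    "#joy": "joy",
}

HASHTAG_PATTERN = re.compile(r"#\w+")


def self_label(text):
    """Return (text without cue hashtags, label), or None if the cue is missing or ambiguous."""
    tags = [tag.lower() for tag in HASHTAG_PATTERN.findall(text)]
    labels = {HASHTAG_TO_EMOTION[tag] for tag in tags if tag in HASHTAG_TO_EMOTION}
    if len(labels) != 1:
        return None  # no cue at all, or cues that point to different emotions

    def drop_cue(match):
        # Remove only the cue hashtags so a classifier cannot simply memorize them.
        return "" if match.group(0).lower() in HASHTAG_TO_EMOTION else match.group(0)

    cleaned = " ".join(HASHTAG_PATTERN.sub(drop_cue, text).split())
    return cleaned, labels.pop()
```

Removing the cue hashtag from the text is a design choice in this sketch: it keeps the label signal out of the classifier input, so the model has to rely on the remaining words.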
Figure 1: The setup of the driving simulator.

A variety of classification approaches have been tested, including SNoW (Alm et al., 2005), support vector machines (Aman and Szpakowicz, 2007), maximum entropy classification, long short-term memory network, and convolutional neural network models (Schuff et al., 2017, i.a.). More recently, the state of the art is the use of transfer learning from noisy annotations to more specific predictions (Felbo et al., 2017). Still, it has been shown that transferring from one domain to another is challenging, as the way emotions are expressed varies between areas (Bostan and Klinger, 2018). The approach by Felbo et al. (2017) differs from our work, as they use a huge noisy data set for pretraining the model while we use small high-quality data sets instead.

Recently, the state of the art has also been pushed forward with a set of shared tasks, in which the participants with top results mostly exploit deep learning methods for prediction based on pretrained structures like embeddings or language models (Klinger et al., 2018; Mohammad et al., 2018; Mohammad and Bravo-Marquez, 2017a).

Our work follows this approach and builds upon embeddings with deep learning. Furthermore, we approach the application and adaptation of text-based classifiers to the automotive domain with transfer learning.

3 Data set Collection

The first contribution of this paper is the construction of the AMMER data set which we describe in the following. We focus on the drivers' interactions with both a virtual agent as well as a co-driver. To collect the data in a safe and controlled environment and to be able to consider a variety of predefined driving situations, the study was conducted in a driving simulator.

| Type | Example | Translation |
|---|---|---|
| D–A, beginning | Wie geht es dir gerade und wie sind deine Gedanken zur bevorstehenden Fahrt? | How are you doing right now? What are your thoughts about the upcoming drive? |
| D–A, reaching destination | Bei über 50 Teilnehmern hast du die zweitschnellste Zeit erreicht. Was glaubst du? Wie hast du es geschafft so schnell zu sein? | Among more than 50 participants you achieved the second best result. What do you think? How did you manage to achieve that? |
| D–A, after driving | Du hast im letzten Streckenabschnitt ein paar Mal stark gebremst. Was ist da passiert? | In the last section, you slowed down multiple times. What happened? |
| D–Co, low-demand section | Erinnern Sie sich an Ihren letzten Urlaub. Bitte beschreiben Sie, wie dieser Urlaub für Sie war? | Remember your last vacation. Please describe how it was. |

Table 1: Examples for triggered interactions with translations to English. (D: Driver, A: Agent, Co: Co-Driver)

3.1 Study Setup and Design

The study environment consists of a fixed-base driving simulator running Vires's VTD (Virtual Test Drive, v2.2.0) simulation software (https://vires.com/vtd-vires-virtual-test-drive/). The vehicle has an automatic transmission, a steering wheel and gas and brake pedals. We collect data from video, speech and biosignals (Empatica E4 to record heart rate, electrodermal activity, skin temperature, not further used in this paper) and questionnaires. Two RGB cameras are fixed in the vehicle to capture the driver's face, one at the sun shield above the driver's seat and one in the middle of the dashboard. A microphone is placed on the center console. One experimenter sits next to the driver, the other behind the simulator. The virtual agent accompanying the drive is realized as a Wizard-of-Oz prototype which enables the experimenter to manually trigger prerecorded voice samples playing through the in-car speakers and to bring new content to the center screen. Figure 1 shows the driving simulator.

The experimental setting is comparable to an everyday driving task.
Participants are told that the goal of the study is to evaluate and to improve an intelligent driving assistant. To increase the probability of emotions arising, participants are instructed to reach the destination of the route as fast as possible while following traffic rules and speed limits. They are informed that the time needed for the task would be compared to other participants. The route comprises highways, rural roads, and city streets. A navigation system with voice commands and information on the screen keeps the participants on the predefined track.

To trigger emotion changes in the participant, we use the following events: (i) a car on the right lane cutting off to the left lane when participants try to overtake, followed by trucks blocking both lanes with a slow overtaking maneuver, (ii) a skateboarder who appears unexpectedly on the street, and (iii) participants are praised for reaching the destination unexpectedly quickly in comparison to previous participants.

Based on these events, we trigger three interactions (Table 1 provides examples) with the intelligent agent (Driver-Agent Interactions, D–A). Pretending to be aware of the current situation, e.g., to recognize unusual driving behavior such as strong braking, the agent asks the driver to explain their subjective perception of these events in detail. Additionally, we trigger two more interactions with the intelligent agent at the beginning and at the end of the drive, where participants are asked to describe their mood and thoughts regarding the (upcoming) drive. This results in five interactions between the driver and the virtual agent.

Furthermore, the co-driver asks three different questions during sessions with light traffic and low cognitive demand (Driver-Co-Driver Interactions, D–Co). These questions are more general and non-traffic-related and aim at triggering the participants' memory and fantasy.
Participants are asked to describe their last vacation, their dream house and their idea of the perfect job. In sum, there are eight interactions per participant (5 D–A, 3 D–Co).

3.2 Procedure

At the beginning of the study, participants were welcomed and the upcoming study procedure was explained. Subsequently, participants signed a consent form and completed a questionnaire to provide demographic information. After that, the co-driving experimenter started with the instruction in the simulator, which was followed by a familiarization drive consisting of highway and city driving and covering different driving maneuvers such as tight corners, lane changing and strong braking. Subsequently, participants started with the main driving task. The drive had a duration of 20 minutes containing the eight previously mentioned speech interactions. After the completion of the drive, the actual goal of improving automatic emotional recognition was revealed and a standard emotional intelligence questionnaire, namely the TEIQue-SF (Cooper and Petrides, 2010), was handed to the participants. Finally, a retrospective interview was conducted, in which participants were played recordings of their in-car interactions and asked to give discrete (annoyance, insecurity, joy, relaxation, boredom, none, following Dittrich and Zepf (2019)) as well as dimensional (valence, arousal, dominance (Posner et al., 2005) on an 11-point scale) emotion ratings for the interactions and the according situations. We only use the discrete class annotations in this paper.

| E | IT | Example | Translation |
|---|---|---|---|
| J | A | Ich glaube, weil ich ziemlich schnell auf Situationen reagieren kann, weil ich eine ziemlich gute Reaktion habe. Und ich würde auch behaupten, dass ich relativ vorausschauend fahre, weil ich schon einiges an Fahrerfahrung mitbringe. | I think because I can respond to situations very quickly because my reaction is very good. And I would say that I drive foresightful because I have a lot of driving experience. |
| J | C | Letzter Urlaub war im September 2018. Singapur und Bali. War sehr schön. Erholung, andere Kultur, andere Länder. War sehr gut und ist zu wiederholen. | Last vacation was in September 2018. Singapore and Bali. It was beautiful. Recreation, different culture, different countries. It was very good and needs repetition. |
| A | A | Zwei bis drei Mal Fahrzeuge, die Kolonne fuhren. Und das letzte Fahrzeug hat, für mein Gefühl, sehr ruckartig und mit wenig nach hinten zu schauen, die Spur gewechselt und mich dazu gezwungen, dann doch noch meine Geschwindigkeit zu reduzieren. | Two or three times vehicles were driving behind each other. The last vehicle cut off my lane, in my opinion very quickly and without looking back and forced me to slow down. |
| A | C | Mir geht es nicht besonders gut. Die Fahrt war sehr stressig. Ich schwitze ziemlich. | I'm not feeling well. The ride was stressful. I am sweating. |
| I | A | Letzter Urlaub war nicht so gut für mich. Obwohl. Naja doch. Der letzte war schon wieder gut. Das war im Sommer. Da war es nämlich so abartig warm dieses Jahr. Und wir haben bei uns daheim. Also ich komme ja vom Land. Wir haben bei uns daheim auf dem Land unseren Wohnwagen ausgebaut. | Last vacation was not so good for me. Although. Well, yes. The last one was good. It was in summer. It was very warm this year. And we have at home. I come from the countryside. We have furnished our mobile home. |
| I | C | Ein Mensch ist über die Straße gelaufen und ich habe ihn zuerst nicht gesehen. | A human crossed the street and I haven't seen him in the first moment. |
| B | A | Ich habe mich immer an die Richtgeschwindigkeit gehalten. Und ja. Ich weiß auch nicht. | I always followed the recommended velocity. And, well. I don't know. |
| B | C | Ja. Nicht viel arbeiten und viel Geld verdienen. | Yes. Not working much and earning a lot of money. |
| R | A | Mir geht es gut und ich bin gespannt auf die Fahrt. Ich denke, es macht Spaß. | I am fine and I am looking forward to the ride. I think it will be fun. |
| R | C | Ja, ich erinnere mich an den letzten Urlaub und der war schön, war erholsam und war warm. | Yes, I remember the last vacation. It was nice, recreative and warm. |
| N | A | Es sind Autos von der rechten Spur auf meine Spur gezogen, welche davor deutlich langsamer waren. | Cars were changing into my lane, which were slower before. |
| N | C | Ein Haus, das relativ alleine für sich steht. Am besten am Meer und mit einem grünen Garten. Und ja. Viel Platz für sich. | A house with space around. In the best case at the sea and with a green garden. And yes. A lot of space for us. |

Table 2: Examples from the collected data set (with translation to English). E: Emotion, IT: interaction type with agent (A) and with co-driver (C). J: Joy, A: Annoyance, I: Insecurity, B: Boredom, R: Relaxation, N: No emotion.

3.3 Data Analysis

Overall, 36 participants aged 18 to 64 years (µ=28.89, σ=12.58) completed the experiment. This leads to 288 interactions, 180 between driver and the agent and 108 between driver and co-driver. The emotion self-ratings from the participants yielded 90 utterances labeled with joy, 26 with annoyance, 49 with insecurity, 9 with boredom, 111 with relaxation and 3 with no emotion. One example interaction per interaction type and emotion is shown in Table 2. For further experiments, we only use joy, annoyance/anger, and insecurity/fear due to the small sample size for boredom and no emotion and under the assumption that relaxation brings little expressivity.

[Figure 2 panels: Pretraining (Embeddings, BiLSTM, Dense, Dense, Softmax; outputs Fear, Anger, Joy) and Transfer (Embeddings, BiLSTM, Dense, Dense, Softmax; outputs Insecurity, Annoyance, Joy).]
Figure 2: Model for Transfer Learning from Text. Grey boxes contain frozen parameters in the corresponding learning step.

4 Methods

4.1 Emotion Recognition from Facial Expressions

We preprocess the visual data by extracting the sequence of images for each interaction from the point where the agent's or the co-driver's question was completely uttered until the driver's response stops. The average length is 16.3 seconds, with the minimum at 2.2s and the maximum at 54.7s. We apply an off-the-shelf tool for emotion recognition (the manufacturer cannot be disclosed due to licensing restrictions). It delivers frame-by-frame scores (∈ [0; 100]) for discrete emotional states of joy, anger and fear. While joy corresponds directly to our annotation, we map anger to our label annoyance and fear to our label insecurity. The emotion with the highest average score across all frames constitutes the overall classification for the video sequence. Frames where the software is not able to detect the face are ignored.

4.2 Emotion Recognition from Audio Signal

We extract the audio signal for the same sequence as described for facial expressions and apply an off-the-shelf tool for emotion recognition. The software delivers single classification scores for a set of 24 discrete emotions for the entire utterance. We consider the outputs for the states of joy, anger, and fear, mapping analogously to our classes as for facial expressions. Low-confidence predictions are interpreted as "no emotion". Otherwise, we accept the emotion with the highest score as the discrete prediction.

4.3 Emotion Recognition from Transcribed Utterances

For the emotion recognition from text, we manually transcribe all utterances of our AMMER study. To exploit existing and available data sets which are larger than the AMMER data set, we develop a transfer learning approach.
We use a neural network with an embedding layer (frozen weights, pretrained on Common Crawl and Wikipedia (Grave et al., 2018)), a bidirectional LSTM (Schuster and Paliwal, 1997), and two dense layers followed by a softmax output layer. This setup is inspired by Andryushechkin et al. (2017). We use a dropout rate of 0.3 in all layers and optimize with Adam (Kingma and Ba, 2015) with a learning rate of 10⁻⁵ (these parameters are the same for all further experiments). We build on top of the Keras library with the TensorFlow backend. We consider this setup our baseline model.

We train models on a variety of corpora, namely the common format published by Bostan and Klinger (2018) of the FigureEight (formerly known as Crowdflower) data set of social media, the ISEAR data (Scherer and Wallbott, 1994) (self-reported emotional events), and the Twitter Emotion Corpus (TEC, weakly annotated Tweets with #anger, #disgust, #fear, #happy, #sadness, and #surprise, Mohammad (2012)). From all corpora, we use instances with labels fear, anger, or joy. These corpora are English; however, we do predictions on German utterances. Therefore, each corpus is translated to German with Google Translate¹. We remove URLs, user tags ("@Username"), punctuation and hash signs. The distributions of the data sets are shown in Table 3.

To adapt models trained on these data, we apply transfer learning as follows: The model is first trained until convergence on one out-of-domain corpus (only on classes fear, joy, anger for compatibility reasons). Then, the parameters of the bi-LSTM layer are frozen and the remaining layers are further trained on AMMER.
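The text cleaning step mentioned above (removing URLs, user tags, punctuation and hash signs) could be sketched as follows. This is an illustration using only the Python standard library, not the authors' actual preprocessing code; the regular expressions and the function name `clean_text` are assumptions, and only ASCII punctuation is handled.

```python
import re
import string

# Assumed patterns; the paper does not specify how URLs and user tags are matched.
URL_PATTERN = re.compile(r"https?://\S+|www\.\S+")
USER_TAG_PATTERN = re.compile(r"@\w+")

# string.punctuation contains '#', so hash signs are removed together with
# the other ASCII punctuation marks. Umlauts and other letters are kept.
PUNCTUATION_TABLE = str.maketrans("", "", string.punctuation)


def clean_text(text):
    """Strip URLs, user tags, ASCII punctuation and hash signs; normalize whitespace."""
    text = URL_PATTERN.sub(" ", text)       # URLs first, while ':' and '/' still exist
    text = USER_TAG_PATTERN.sub(" ", text)  # then "@Username" mentions
    text = text.translate(PUNCTUATION_TABLE)
    return " ".join(text.split())
```

The order of the steps matters in this sketch: once punctuation is removed, URLs and user tags can no longer be recognized, so they are stripped first.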
This procedure is illustrated in Figure 2.

¹ http://translate.google.com, performed on January 4, 2019

5 Results

5.1 Facial Expressions and Audio

Table 4 shows the confusion matrices for facial and audio emotion recognition on our complete AMMER data set and Table 5 shows the results per class for each method, including facial and audio data and micro and macro averages.

| Data set | Fear | Anger | Joy | Total |
|---|---|---|---|---|
| Figure8 | 8,419 | 1,419 | 9,179 | 19,017 |
| EmoInt | 2,252 | 1,701 | 1,616 | 5,569 |
| ISEAR | 1,095 | 1,096 | 1,094 | 3,285 |
| TEC | 2,782 | 1,534 | 8,132 | 12,448 |
| AMMER | 49 | 26 | 90 | 165 |

Table 3: Class distribution of the used data sets for the considered emotional states (Figure8 (Figure Eight, 2016), EmoInt (Mohammad and Bravo-Marquez, 2017b), ISEAR (Scherer, 1997), TEC (Mohammad, 2012), AMMER (this paper)).

| Vision | Fear | Anger | Joy | Total |
|---|---|---|---|---|
| Insecurity | 11 | 17 | 21 | 49 |
| Annoyance | 10 | 7 | 9 | 26 |
| Joy | 24 | 27 | 39 | 90 |
| Total | 45 | 51 | 69 | 165 |

| Audio | Fear | Anger | Joy | No | Total |
|---|---|---|---|---|---|
| Insecurity | 17 | 14 | 1 | 17 | 49 |
| Annoyance | 12 | 7 | 0 | 7 | 26 |
| Joy | 27 | 26 | 4 | 33 | 90 |
| Total | 56 | 47 | 5 | 57 | 165 |

| Transfer Learning Text | Fear | Anger | Joy | Total |
|---|---|---|---|---|
| Insecurity | 33 | 0 | 16 | 49 |
| Annoyance | 7 | 4 | 15 | 26 |
| Joy | 1 | 1 | 88 | 90 |
| Total | 41 | 5 | 119 | 165 |

Table 4: Confusion Matrix for Face Classification and Audio Classification (on full AMMER data) and for transfer learning from text (training set of EmoInt and test set of AMMER). Insecurity, annoyance and joy are the gold labels. Fear, anger and joy are predictions.

The classification from facial expressions yields a macro-averaged F1 score of 33 % across the three emotions joy, insecurity, and annoyance (P=0.31, R=0.35). While the classification results for joy are promising (R=43 %, P=57 %), the distinction of insecurity and annoyance from the other classes appears to be more challenging.

Regarding the audio signal, we observe a macro F1 score of 29 % (P=42 %, R=22 %). There is a bias towards negative emotions, which results in a small number of detected joy predictions (R=4 %). Insecurity and annoyance are frequently confused.
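For reference, the decision rules behind these vision and audio results (Sections 4.1 and 4.2) can be sketched as below. This is a minimal illustration, not the proprietary tools' interface: the input formats (one score dictionary per frame, `None` for frames without a detected face), the function names, and the `min_confidence` threshold are all assumptions, since the paper does not state the cut-off used for low-confidence audio predictions.

```python
# Mapping from the tools' emotion names to the AMMER labels (Section 4.1).
LABEL_MAP = {"joy": "joy", "anger": "annoyance", "fear": "insecurity"}
TOOL_EMOTIONS = ("joy", "anger", "fear")


def classify_sequence(frames):
    """Vision: average each emotion's score over valid frames, pick the highest mean.

    `frames` is a list of dicts (emotion -> score in [0, 100]); frames in which
    no face was detected are given as None and are ignored.
    """
    valid = [frame for frame in frames if frame is not None]
    if not valid:
        return None  # no usable frame in the whole sequence
    means = {e: sum(frame[e] for frame in valid) / len(valid) for e in TOOL_EMOTIONS}
    return LABEL_MAP[max(means, key=means.get)]


def classify_utterance(scores, min_confidence):
    """Audio: take the best of joy/anger/fear unless its score is below the threshold.

    `min_confidence` is a hypothetical parameter standing in for the
    unspecified low-confidence criterion.
    """
    best = max(TOOL_EMOTIONS, key=lambda e: scores[e])
    if scores[best] < min_confidence:
        return "no emotion"
    return LABEL_MAP[best]
```

Averaging over frames before taking the maximum means that a single strongly scored frame cannot decide the sequence label on its own, which is one plausible reading of the aggregation described in Section 4.1.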
5.2 Text from Transcribed Utterances

The experimental setting for the evaluation of emotion recognition from text is as follows: We evaluate the BiLSTM model in three different experiments: (1) in-domain, (2) out-of-domain and (3) transfer learning. For all experiments we train on the classes anger/annoyance, fear/insecurity and joy. Table 6 shows all results for the comparison of these experimental settings.

| | Vision P | Vision R | Vision F1 | Audio P | Audio R | Audio F1 | Text (TL) P | Text (TL) R | Text (TL) F1 |
|---|---|---|---|---|---|---|---|---|---|
| Insecurity | 24 | 22 | 23 | 31 | 35 | 33 | 80 | 67 | 73 |
| Annoyance | 14 | 39 | 21 | 15 | 27 | 19 | 80 | 15 | 26 |
| Joy | 57 | 43 | 49 | 80 | 4 | 8 | 74 | 98 | 84 |
| Macro-avg | 32 | 35 | 33 | 42 | 22 | 29 | 78 | 60 | 68 |
| Micro-avg | 34 | 34 | 34 | 26 | 17 | 21 | 76 | 76 | 76 |

Table 5: Performance for classification from vision, audio, and transfer learning from text (training set of EmoInt).

| Train Corpus | In-Domain | Out-of-domain: Simple | Out-of-domain: Joint C. | Out-of-domain: Transfer L. |
|---|---|---|---|---|
| Figure8 | 66 | 55 | 59 | 76 |
| EmoInt | 62 | 48 | 56 | 76 |
| TEC | 73 | 55 | 58 | 76 |
| ISEAR | 70 | 35 | 59 | 72 |
| AMMER | 57 | - | - | - |

Table 6: Results in micro F1 for Experiment 1 (in-domain), Experiment 2 and 3 (out-of-domain with and without transfer learning).

5.2.1 Experiment 1: In-Domain application

We first set a baseline by validating our models on established corpora. We train the baseline model on 60 % of each data set listed in Table 3 and evaluate that model with 40 % of the data from the same domain (results shown in the column "In-Domain" in Table 6). Excluding AMMER, we achieve an average micro F1 of 68 %, with best results of F1=73 % on TEC. The model trained on our AMMER corpus achieves an F1 score of 57 %. This is most probably due to the small size of this data set and the class bias towards joy, which makes up more than half of the data set. These results are mostly in line with Bostan and Klinger (2018).

5.2.2 Experiment 2: Simple Out-Of-Domain application

Now we analyze how well the models trained in Experiment 1 perform when applied to our data set. The results are shown in column "Simple" in Table 6. We observe a clear drop in performance, with an average of F1=48 %. The best performing model is again the one trained on TEC, on par with the one trained on the Figure8 data. Although the model trained on ISEAR performs second best in Experiment 1, it performs worst in Experiment 2.

5.2.3 Experiment 3: Transfer Learning application

To adapt models trained on previously existing data sets to our particular application, the AMMER corpus, we apply transfer learning. Here, we perform leave-one-out cross validation. As pretrained models we use each model from Experiment 1 and further optimize with the training subset of each cross-validation iteration of AMMER. The results are shown in the column "Transfer L." in Table 6. The confusion matrix is also depicted in Table 4.

With this procedure we achieve an average performance of F1=75 %, which is better than the results from the in-domain Experiment 1. The best performance of F1=76 % is achieved with every pretraining data set except ISEAR. All transfer learning models clearly outperform their simple out-of-domain counterparts.

To ensure that this performance increase is not only due to the larger data set, we compare these results to training the model without transfer on a corpus consisting of each corpus together with AMMER (again, in leave-one-out cross validation). These results are depicted in column "Joint C.". Thus, both settings, "transfer learning" and "joint corpus", have access to the same information.

The results show an increase in performance in contrast to not using AMMER for training; however, the transfer approach based on partially retraining the model shows a clear improvement for all models (by 7pp for Figure8, 10pp for EmoInt, 8pp for TEC, 13pp for ISEAR) compared to the "Joint" setup.
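As a worked example of how the per-class and averaged scores relate to a confusion matrix, the sketch below recomputes precision, recall and F1 from the transfer-learning text block of Table 4. One assumption is made explicit: the macro F1 is taken as the harmonic mean of macro-averaged precision and recall rather than the mean of the per-class F1 values. The function names are illustrative.

```python
LABELS = ("insecurity", "annoyance", "joy")

# Text (transfer learning) block of Table 4: rows are gold labels, columns are
# predictions (fear -> insecurity, anger -> annoyance, joy -> joy).
CONFUSION = [
    [33, 0, 16],
    [7, 4, 15],
    [1, 1, 88],
]


def f1(precision, recall):
    total = precision + recall
    return 2 * precision * recall / total if total else 0.0


def evaluate(matrix):
    """Return per-class (P, R, F1), macro (P, R, F1) and micro F1 for a square matrix."""
    n = len(matrix)
    gold_totals = [sum(row) for row in matrix]
    pred_totals = [sum(matrix[i][j] for i in range(n)) for j in range(n)]
    per_class = []
    for k in range(n):
        hits = matrix[k][k]
        p = hits / pred_totals[k] if pred_totals[k] else 0.0
        r = hits / gold_totals[k] if gold_totals[k] else 0.0
        per_class.append((p, r, f1(p, r)))
    macro_p = sum(p for p, _, _ in per_class) / n
    macro_r = sum(r for _, r, _ in per_class) / n
    # Assumption: macro F1 is the harmonic mean of macro P and macro R.
    macro = (macro_p, macro_r, f1(macro_p, macro_r))
    # Every instance receives exactly one prediction here, so micro P = R = F1.
    micro = sum(matrix[k][k] for k in range(n)) / sum(gold_totals)
    return per_class, macro, micro


def as_percent(values):
    return tuple(round(100 * v) for v in values)


per_class, macro, micro = evaluate(CONFUSION)
for label, scores in zip(LABELS, per_class):
    print(label, as_percent(scores))
print("macro", as_percent(macro))
print("micro", round(100 * micro))
```

Rounded to whole percentages, these values agree with the Text (TL) columns of Table 5, which suggests that the macro F1 there follows the harmonic-mean convention assumed above.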
6 Summary & Future Work

We described the creation of the multimodal AMMER data with emotional speech interactions between a driver and both a virtual agent and a co-driver. We analyzed the modalities of facial expressions, acoustics, and transcribed utterances regarding their potential for emotion recognition during in-car speech interactions. We applied off-the-shelf emotion recognition tools for facial expressions and acoustics. For transcribed text, we developed a neural network-based classifier with transfer learning exploiting existing annotated corpora. We find that analyzing transcribed utterances is most promising for classification of the three emotional states of joy, annoyance and insecurity.

Our results for facial expressions indicate that there is potential for the classification of joy; however, the states of annoyance and insecurity are not well recognized. Future work needs to investigate more sophisticated approaches to map frame predictions to sequence predictions. Furthermore, movements of the mouth region during speech interactions might negatively influence the classification from facial expressions. Therefore, the question remains how facial expressions can best contribute to multimodal detection in speech interactions.

Regarding the classification from the acoustic signal, the application of off-the-shelf classifiers without further adjustments seems to be challenging. We find a strong bias towards negative emotional states for our experimental setting. For instance, the personalization of the recognition algorithm (e.g., mean and standard deviation normalization) could help to adapt the classification for specific speakers and thus to reduce this bias. Further, the acoustic environment in the vehicle interior has special properties and the recognition software might need further adaptations.

Our transfer learning-based text classifier shows considerably better results.
This is a substantial result in its own right, as, to the best of our knowledge, only one previous method for transfer learning in emotion recognition has been proposed, in which a sentiment/emotion-specific source for labels in pre-training has been used (Felbo et al., 2017). Other applications of transfer learning from general language models include, among others, those of Rozental et al. (2018) and Chronopoulou et al. (2018). Our approach is substantially different, as it is not trained on a huge amount of noisy data but on smaller out-of-domain sets of higher quality. This result suggests that emotion classification systems which work across domains can be developed with reasonable effort.

For a productive application of emotion detection in the context of speech events, we conclude that a deployed system might perform best with a speech-to-text module followed by an analysis of the text. Further, in this work, we did not explore an ensemble model or the interaction of different modalities. Thus, future work should investigate the fusion of multiple modalities in a single classifier.

Acknowledgment

We thank Laura-Ana-Maria Bostan for discussions and data set preparations. This research has partially been funded by the German Research Council (DFG), project SEAT (KL 2869/1-1).

References

Cecilia Ovesdotter Alm, Dan Roth, and Richard Sproat. 2005. Emotions from text: Machine learning for text-based emotion prediction. In Proceedings of Human Language Technology Conference and Conference on Empirical Methods in Natural Language Processing, pages 579–586, Vancouver, British Columbia, Canada, October. Association for Computational Linguistics.

Saima Aman and Stan Szpakowicz. 2007. Identifying expressions of emotion in text. In Václav Matoušek and Pavel Mautner, editors, Text, Speech and Dialogue, pages 196–205, Berlin, Heidelberg. Springer Berlin Heidelberg.

Christos-Nikolaos Anagnostopoulos, Theodoros Iliou, and Ioannis Giannoukos. 2015. Features and classifiers for emotion recognition from speech: a survey from 2000 to 2011. Artificial Intelligence Review, 43(2).

Vladimir Andryushechkin, Ian Wood, and James O'Neill. 2017. NUIG at EmoInt-2017: BiLSTM and SVR ensemble to detect emotion intensity. In Proceedings of the 8th Workshop on Computational Approaches to Subjectivity, Sentiment and Social Media Analysis, pages 175–179, Copenhagen, Denmark, September. Association for Computational Linguistics.

Hynek Boril, Omid Sadjadi, and John H. L. Hansen. 2011. UTDrive: emotion and cognitive load classification for in-vehicle scenarios. In th Biennial Workshop on Digital Signal Processing for In-Vehicle Systems, DSP 2011.

Laura-Ana-Maria Bostan and Roman Klinger. 2018. An analysis of annotated corpora for emotion classification in text. In Proceedings of the 27th International Conference on Computational Linguistics, pages 2104–2119, Santa Fe, New Mexico, USA, August. Association for Computational Linguistics.

Scott Brave, Clifford Nass, and Kevin Hutchinson. 2005. Computers that care: investigating the effects of orientation of emotion exhibited by an embodied computer agent. International journal of human-computer studies, 62(2).

Carlos Busso, Zhigang Deng, Serdar Yildirim, Murtaza Bulut, Chul Min Lee, Abe Kazemzadeh, Sungbok Lee, Ulrich Neumann, and Shrikanth Narayanan. 2004. Analysis of emotion recognition using facial expressions, speech and multimodal information. In Proceedings of the 6th International Conference on Multimodal Interfaces, ICMI '04, pages 205–211, New York, NY, USA. ACM.

Alexandra Chronopoulou, Aikaterini Margatina, Christos Baziotis, and Alexandros Potamianos. 2018. NTUA-SLP at IEST 2018: Ensemble of neural transfer methods for implicit emotion classification. In Proceedings of the 9th Workshop on Computational Approaches to Subjectivity, Sentiment and Social Media Analysis, pages 57–64, Brussels, Belgium, October. Association for Computational Linguistics.

Andrew Cooper and Konstantinos Vassilis Petrides. 2010. A psychometric analysis of the trait emotional intelligence questionnaire–short form (TEIQue–SF) using item response theory. Journal of personality assessment, 92(5):449–457.

Amy Coplan and Peter Goldie. 2011. Empathy: Philosophical and psychological perspectives. Oxford University Press.

Monique Dittrich and Sebastian Zepf. 2019. Exploring the validity of methods to track emotions behind the wheel. In Harri Oinas-Kukkonen, Khin Than Win, Evangelos Karapanos, Pasi Karppinen, and Eleni Kyza, editors, Persuasive Technology: Development of Persuasive and Behavior Change Support Systems, pages 115–127, Cham. Springer International Publishing.

Paul Ekman and Wallace V. Friesen. 1978. Facial action coding system: Investigator's guide. Consulting Psychologists Press.

Paul Ekman. 1992. An argument for basic emotions. Cognition & emotion, 6.

Moataz El Ayadi, Mohamed S Kamel, and Fakhri Karray. 2011. Survey on speech emotion recognition: Features, classification schemes, and databases. Pattern Recognition, 44(3).

Bjarke Felbo, Alan Mislove, Anders Søgaard, Iyad Rahwan, and Sune Lehmann. 2017. Using millions of emoji occurrences to learn any-domain representations for detecting sentiment, emotion and sarcasm. In Proceedings of the 2017 Conference on Empirical Methods in Natural Language Processing, pages 1615–1625, Copenhagen, Denmark, September. Association for Computational Linguistics.

Figure Eight. 2016. Sentiment analysis: Emotion in text. Online. https://www.figure-eight.com/data/sentiment-analysis-emotion-text/.

Edouard Grave, Piotr Bojanowski, Prakhar Gupta, Armand Joulin, and Tomas Mikolov. 2018. Learning word vectors for 157 languages. In Proceedings of the Eleventh International Conference on Language Resources and Evaluation (LREC-2018), Miyazaki, Japan, May. European Languages Resources Association (ELRA).

Helen Harris and Clifford Nass. 2011. Emotion regulation for frustrating driving contexts. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, CHI '11, pages 749–752.

Klas Ihme, Christina Dömeland, Maria Freese, and Meike Jipp. 2018. Frustration in the Face of the Driver: A Simulator Study on Facial Muscle Activity during Frustrated Driving. Interaction Studies, 19.

Heechul Jung, Sihaeng Lee, Junho Yim, Sunjeong Park, and Junmo Kim. 2015. Joint fine-tuning in deep neural networks for facial expression recognition. In 2015 IEEE International Conference on Computer Vision (ICCV), pages 2983–2991.

Bo-Kyeong Kim, Jihyeon Roh, Suh-Yeon Dong, and Soo-Young Lee. 2016. Hierarchical committee of deep convolutional neural networks for robust facial expression recognition. Journal on Multimodal User Interfaces, 10(2).

Evgeny Kim, Sebastian Padó, and Roman Klinger. 2017. Investigating the relationship between literary genres and emotional plot development. In Proceedings of the Joint SIGHUM Workshop on Computational Linguistics for Cultural Heritage, Social Sciences, Humanities and Literature, pages 17–26, Vancouver, Canada, August. Association for Computational Linguistics.

Diederik P. Kingma and Jimmy Ba. 2015. Adam: A method for stochastic optimization. In International Conference on Learning Representations.

Jonathan Klein, Youngme Moon, and Rosalind W. Picard. 2002. This computer responds to user frustration: Theory, design, and results. Interacting with computers, 14(2).

Roman Klinger, Orphée De Clercq, Saif Mohammad, and Alexandra Balahur. 2018. IEST: WASSA-2018 implicit emotions shared task. In Proceedings of the 9th Workshop on Computational Approaches to Subjectivity, Sentiment and Social Media Analysis, pages 31–42, Brussels, Belgium, October. Association for Computational Linguistics.

Jinkyu Lee and Ivan Tashev. 2015. High-level feature representation using recurrent neural network for speech emotion recognition. In Interspeech. ISCA – International Speech Communication Association.

Yanran Li, Hui Su, Xiaoyu Shen, Wenjie Li, Ziqiang Cao, and Shuzi Niu. 2017. DailyDialog: A manually labelled multi-turn dialogue dataset. In Proceedings of the Eighth International Joint Conference on Natural Language Processing (Volume 1: Long Papers), pages 986–995, Taipei, Taiwan, November. Asian Federation of Natural Language Processing.

James J. Lien, Takeo Kanade, Jeffrey F. Cohn, and Ching-Chung Li. 1998. Automated facial expression recognition based on facs action units. In Proceedings Third IEEE International Conference on Automatic Face and Gesture Recognition, pages 390–395.

Zhiyi Ma, Marwa Mahmoud, Peter Robinson, Eduardo Dias, and Lee Skrypchuk. 2017. Automatic detection of a driver's complex mental states. In Osvaldo Gervasi, Beniamino Murgante, Sanjay Misra, Giuseppe Borruso, Carmelo M. Torre, Ana Maria A.C. Rocha, David Taniar, Bernady O. Apduhan, Elena Stankova, and Alfredo Cuzzocrea, editors, Computational Science and Its Applications – ICCSA 2017, pages 678–691, Cham. Springer International Publishing.

Judi Mesman, Harriet Oster, and Linda Camras. 2012. Parental sensitivity to infant distress: what do discrete negative emotions have to do with it? Attachment & Human Development, 14(4).

Saif Mohammad and Felipe Bravo-Marquez. 2017a. Emotion intensities in tweets. In Proceedings of the 6th Joint Conference on Lexical and Computational Semantics (*SEM 2017), pages 65–77, Vancouver, Canada, August. Association for Computational Linguistics.

Saif Mohammad and Felipe Bravo-Marquez. 2017b. Emotion intensities in tweets. In Proceedings of the 6th Joint Conference on Lexical and Computational Semantics (*SEM 2017), pages 65–77, Vancouver, Canada, August. Association for Computational Linguistics.

Saif M Mohammad and Peter D Turney. 2012. Crowdsourcing a word-emotion association lexicon. Computational Intelligence, 29(3).

Saif M. Mohammad, Parinaz Sobhani, and Svetlana Kiritchenko. 2017. Stance and sentiment in tweets. ACM Trans. Internet Technol., 17(3).

Saif Mohammad, Felipe Bravo-Marquez, Mohammad Salameh, and Svetlana Kiritchenko. 2018. SemEval-2018 task 1: Affect in tweets. In Proceedings of The 12th International Workshop on Semantic Evaluation, pages 1–17, New Orleans, Louisiana, June. Association for Computational Linguistics.

Saif Mohammad. 2012. #emotional tweets. In *SEM 2012: The First Joint Conference on Lexical and Computational Semantics – Volume 1: Proceedings of the main conference and the shared task, and Volume 2: Proceedings of the Sixth International Workshop on Semantic Evaluation (SemEval 2012), pages 246–255, Montréal, Canada. Association for Computational Linguistics.

Clifford Nass, Ing-Marie Jonsson, Helen Harris, Ben Reaves, Jack Endo, Scott Brave, and Leila Takayama. 2005. Improving automotive safety by pairing driver emotion and car voice emotion. In CHI '05 Extended Abstracts on Human Factors in Computing Systems, CHI EA '05, pages 1973–1976.

Michael Neumann and Ngoc Thang Vu. 2017. Attentive convolutional neural network based speech emotion recognition: A study on the impact of input features, signal length, and acted speech. In Interspeech. ISCA – International Speech Communication Association.

Dimitri Palaz, Mathew Magimai-Doss, and Ronan Collobert. 2015. Analysis of cnn-based speech recognition system using raw speech as input. In Interspeech. ISCA – International Speech Communication Association.

Maja Pantic, Nicu Sebe, Jeffrey F. Cohn, and Thomas Huang. 2005. Affective multimodal human-computer interaction. In Proceedings of the 13th Annual ACM International Conference on Multimedia, MULTIMEDIA '05, pages 669–676.

Timo Partala and Veikko Surakka. 2004. The effects of affective interventions in human–computer interaction. Interacting with computers, 16(2).

Maurizio Paschero, G. Del Vescovo, L. Benucci, Antonello Rizzi, Marco Santello, Gianluca Fabbri, and F. M. Frattale Mascioli. 2012. A real time classifier for emotion and stress recognition in a vehicle driver. In 2012 IEEE International Symposium on Industrial Electronics, pages 1690–1695.

James W Pennebaker, Ryan L Boyd, Kayla Jordan, and Kate Blackburn. 2015. The development and psychometric properties of LIWC2015.

Robert Plutchik. 1980. A general psychoevolutionary theory of emotion. Theories of emotion, 1.

Chris Pool and Malvina Nissim. 2016. Distant supervision for emotion detection using Facebook reactions. In Proceedings of the Workshop on Computational Modeling of People's Opinions, Personality, and Emotions in Social Media (PEOPLES), pages 30–39, Osaka, Japan, December. The COLING 2016 Organizing Committee.

Jonathan Posner, James A. Russell, and Bradley S. Peterson. 2005. The circumplex model of affect: An integrative approach to affective neuroscience, cognitive development, and psychopathology. Development and psychopathology, 17(3):715–734.

Daniel Preoţiuc-Pietro, H. Andrew Schwartz, Gregory Park, Johannes Eichstaedt, Margaret Kern, Lyle Ungar, and Elisabeth Shulman. 2016. Modelling valence and arousal in Facebook posts. In Proceedings of the 7th Workshop on Computational Approaches to Subjectivity, Sentiment and Social Media Analysis, pages 9–15, San Diego, California, June. Association for Computational Linguistics.

K. Sreenivasa Rao, Shashidhar G. Koolagudi, and Ramu Reddy Vempada. 2013. Emotion recognition from speech using global and local prosodic features. International journal of speech technology, 16(2).

Alon Rozental, Daniel Fleischer, and Zohar Kelrich. 2018. Amobee at IEST 2018: Transfer learning from language models. In Proceedings of the 9th Workshop on Computational Approaches to Subjectivity, Sentiment and Social Media Analysis, pages 43–49, Brussels, Belgium, October. Association for Computational Linguistics.

Klaus R Scherer and Harald G. Wallbott. 1994. Evidence for universality and cultural variation of differential emotion response patterning. Journal of personality and social psychology, 66(2).

Klaus R. Scherer. 1997. Profiles of emotion-antecedent appraisal: Testing theoretical predictions across cultures. Cognition & Emotion, 11(2).

Hendrik Schuff, Jeremy Barnes, Julian Mohme, Sebastian Padó, and Roman Klinger. 2017. Annotation, modelling and analysis of fine-grained emotions on a stance and sentiment detection corpus. In Proceedings of the 8th Workshop on Computational Approaches to Subjectivity, Sentiment and Social Media Analysis, pages 13–23, Copenhagen, Denmark, September. Association for Computational Linguistics.

Björn W. Schuller, Manfred K. Lang, and Gerhard Rigoll. 2006. Recognition of Spontaneous Emotions by Speech within Automotive Environment. In Tagungsband Fortschritte der Akustik – DAGA 2006, pages 57–58.

Björn W. Schuller. 2018. Speech emotion recognition: Two decades in a nutshell, benchmarks, and ongoing trends. Communications of the ACM, 61(5):90–99.

Mike Schuster and Kuldip K. Paliwal. 1997. Bidirectional recurrent neural networks. IEEE Transactions on Signal Processing, 45(11).

Nicu Sebe, Ira Cohen, and Thomas S. Huang. 2005. Handbook of Pattern Recognition and Computer Vision, chapter Multimodal Emotion Recognition. World Scientific.

Mohammad Soleymani, Maja Pantic, and Thierry Pun. 2012. Multimodal emotion recognition in response to videos. IEEE Transactions on Affective Computing, 3(2).

Carlo Strapparava and Rada Mihalcea. 2007. SemEval-2007 task 14: Affective text. In Proceedings of the Fourth International Workshop on Semantic Evaluations (SemEval-2007), pages 70–74, Prague, Czech Republic, June. Association for Computational Linguistics.

Carlo Strapparava and Alessandro Valitutti. 2004. WordNet affect: an affective extension of WordNet. In Proceedings of the Fourth International Conference on Language Resources and Evaluation (LREC'04), Lisbon, Portugal, May. European Language Resources Association (ELRA).

Sujono and Alexander A. S. Gunawan. 2015. Face expression detection on kinect using active appearance model and fuzzy logic. In Widodo Budiharto, editor, Proceedings of the International Conference on Computer Science and Computational Intelligence (ICCSCI 2015), volume 59 of Procedia Computer Science, pages 268–274. Elsevier.

Tessa-Karina Tews, Michael Oehl, Felix W. Siebert, Rainer Höger, and Helmut Faasch. 2011. Emotional human-machine interaction: Cues from facial expressions. In Michael J. Smith and Gavriel Salvendy, editors, Human Interface and the Management of Information. Interacting with Information, pages 641–650. Springer Berlin Heidelberg.

George Trigeorgis, Fabien Ringeval, Raymond Brueckner, Erik Marchi, Mihalis A. Nicolaou, Björn Schuller, and Stefanos Zafeiriou. 2016. Adieu features? end-to-end speech emotion recognition using a deep convolutional recurrent network. In 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 5200–5204.

Dimitrios Ververidis, Constantine Kotropoulos, and Ioannis Pitas. 2004. Automatic emotional speech classification. In 2004 IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), volume 1, pages I–593–I–596.

Wenbo Wang, Lu Chen, Krishnaprasad Thirunarayan, and Amit P. Sheth. 2012. Harnessing twitter "big data" for automatic emotion identification. In 2012 International Conference on Privacy, Security, Risk and Trust and 2012 International Conference on Social Computing, pages 587–592.
