Poly-GAN: Multi-Conditioned GAN for Fashion Synthesis

We present Poly-GAN, a novel conditional GAN architecture that is motivated by Fashion Synthesis, an application where garments are automatically placed on images of human models at an arbitrary pose. Poly-GAN allows conditioning on multiple inputs a…

Authors: Nilesh P, ey, Andreas Savakis

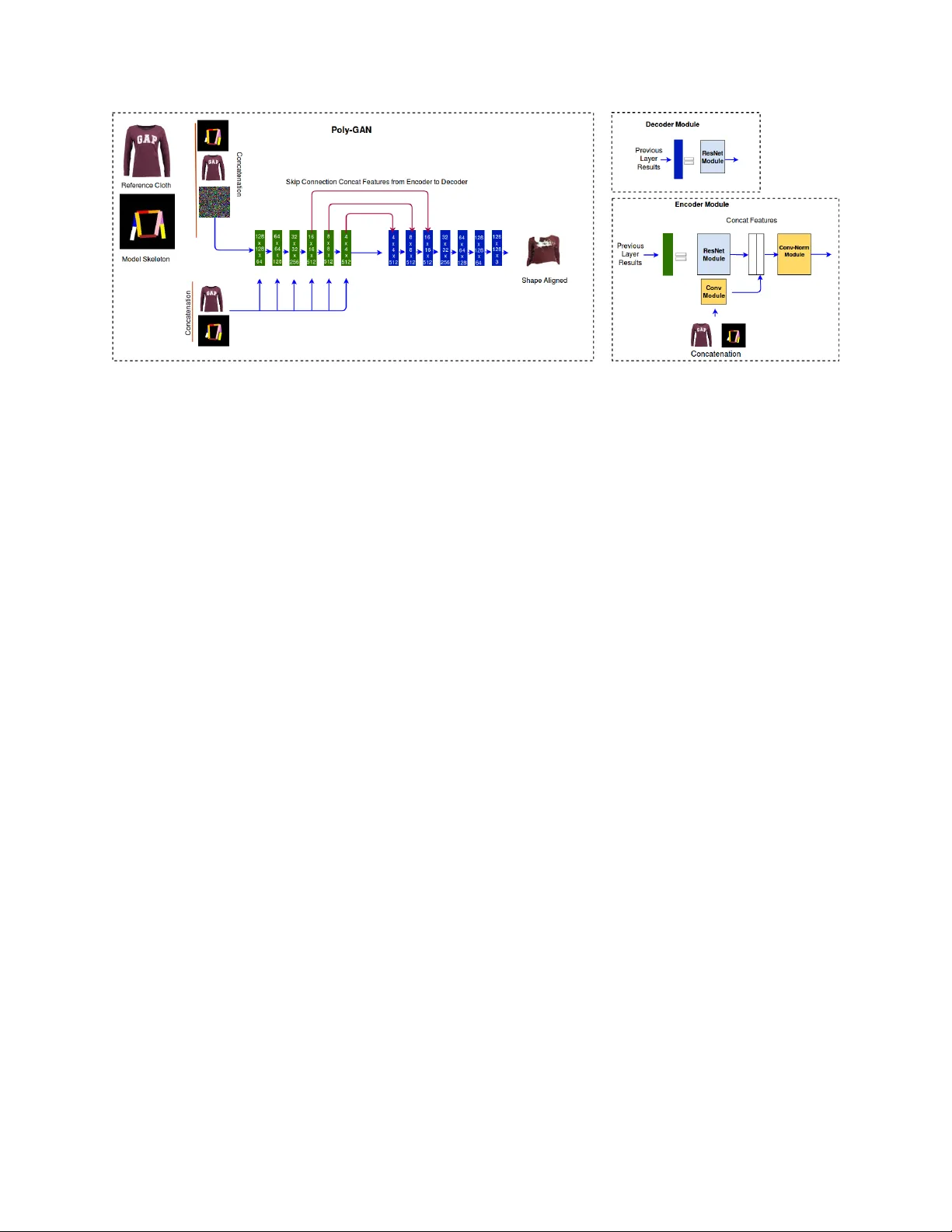

P O L Y - G A N : M U L T I - C O N D I T I O N E D G A N F O R F A S H I O N S Y N T H E S I S A P R E P R I N T Nilesh Pandey , Andreas Sav akis ∗ Department of Computer Engineering Rochester Institute of T echnology Rochester , NY 14623 np9207@rit.edu andreas.savakis@rit.edu September 6, 2019 A B S T R AC T W e present Poly-GAN, a novel conditional GAN architecture that is motiv ated by Fashion Synthesis, an application where garments are automatically placed on images of human models at an arbitrary pose. Poly-GAN allows conditioning on multiple inputs and is suitable for many tasks, including image alignment, image stitching and inpainting. Existing methods hav e a similar pipeline where three different netw orks are used to first align garments with the human pose, then perform stitching of the aligned garment and finally refine the results. Poly-GAN is the first instance where a common architecture is used to perform all three tasks. Our novel architecture enforces the conditions at all layers of the encoder and utilizes skip connections from the coarse layers of the encoder to the respecti ve layers of the decoder . Poly-GAN is able to perform a spatial transformation of the g arment based on the RGB skeleton of the model at an arbitrary pose. Additionally , Poly-GAN can perform image stitching, regardless of the garment orientation, and inpainting on the garment mask when it contains irregular holes. Our system achie ves state-of-the-art quantitati ve results on Structural Similarity Index metric and Inception Score metric using the DeepF ashion dataset. 1 Introduction Generativ e Adversarial Networks (GANs) ha ve been one of the most exciting dev elopments in recent years, as they hav e demonstrated impressive results in v arious applications including F ashion Synthesis ZhuS et al. [33] , Karras et al. [14], Han et al. [9]. Fashion Synthesis is a challenging task that requires placing a reference garment on a source model who is at an arbitrary pose and wears a dif f erent garment Han et al. [9] W angB et al. [29] Dong et al. [5] Lassner et al. [15] Cui et al. [4] . The arbitrary human pose requirement creates challenges, such as handling self occlusion or limited av ailability of training data, as the training dataset may or not have the model’ s desired pose. Some of the challenges in Fashion Synthesis are encountered in other applications, such as person re-identification Dong et al. [6] , person modeling LiuJ et al. [18] , and Image2Image translation Jetche v et al. [11] . Existing methods for Fashion Synthesis follo w a pipeline consisting of three stages, each requiring different tasks, that are performed by dif ferent networks. These tasks include performing an affine transformation to align the reference garment with the source model Rocco et al. [25] , stitching the garment on the source model, and refining or post-processing to reduce artif acts after stitching. The problem encountered with this pipeline is that stitching the warped garment often results in artifacts due to self occlusion, spill of color , and blurriness in the generation of missing body regions. In this paper , we take a more univ ersal approach by proposing a single architecture for all three tasks in the F ashion Synthesis pipeline. Instead of using an affine transformation to warp the garments to the body shape, we generate ∗ Use footnote for pro viding further information about author (webpage, alternati ve address)— not for acknowledging funding agencies. A P R E P R I N T - S E P T E M B E R 6 , 2 0 1 9 Figure 1: Examples of Fashion Synthesis results generated with Poly-GAN. Shown in the columns from left to right: model image, reference garment, Poly-GAN result. garments with our GAN conditioned on an arbitrary human pose. Generating transformed garments ov ercomes the problem of self occlusion and generates occluding arms and other body parts v ery effecti vely . The same architecture is then trained to perform stitching and inpainting. W e demonstrate that our proposed GAN architecture not only achiev es state of the art results for Fashion Synthesis, b ut it is also suitable for many tasks. Thus, we name our architecture Poly-GAN. Figure 1 shows representati ve examples of the performance achie ved with Poly-GAN. Our Fashion Synthesis approach consists of the following stages, illustrated in Figure 2. Stage 1 performs image generation conditioned on an arbitrary human pose, which changes the shape of the reference g arment so it can precisely fit on the human body . Stage 2 performs image stitching of the ne wly generated garment (from Stage 1) with the model after the original garment is segmented out. Stage 3 performs refinement by inpainting the output of Stage 2 to fill any missing regions or spots. Stage 4 is a post-processing step that combines the results from Stages 2 and 3, and adds the model head for the final result. Our approach achiev es state of the art quantitativ e results compared to the popular V irtual T ry On (VTON) method W angB et al. [29]. The main contributions of this paper can be summarized as follo ws: 1. W e propose a ne w conditional GAN architecture, which can operate on multiple conditions that manipulate the generated image. 2. In our Poly-GAN architecture, the conditions are fed to all layers of the encoder to strengthen their effects throughout the encoding process. Additionally , skip connections are introduced from the coarse layers of the encoder to the respectiv e layers of the decoder . 3. W e demonstrate that our architecture can perform many tasks, including shape manipulation conditioned on human pose for affine transformations, image stitching of a g arment on the model, and image inpainting. 4. Poly-GAN is the first GAN to perform an affine transformation of the reference garment based on the RGB skeleton of the model at an arbitrary pose. 5. Our method is able to preserve the desired pose of human arms and hands without color spill, ev en in cases of self occlusion, while performing Fashion Synthesis. 2 Related W ork Building on the successes of generati ve adversarial nets Goodfello w et al. [8] , conditional GANs Mirza et al. [20] ,Chen et al. [3] incorporate a specific conditional restriction in their generator network, so that it learns to generate fake samples under that condition. Conditional GAN incorporate a binary mask or label as conditional input by concatenating it with the input image or with a latent noise vector . In the progressi ve GAN architecture Karras et al. [13] , layers are added to both the generator and the discriminator during training. The addition of ne w layers increases the fine detail as training progresses. A random noise v ector 2 A P R E P R I N T - S E P T E M B E R 6 , 2 0 1 9 Figure 2: Poly-GAN pipeline. Stage 1: Garment transformation with Poly-GAN conditioned on the RGB skeleton of the model and the reference garment. Stage 2: Garment stitching with Poly-GAN conditioned on the se gmented model, the RGB skeleton and the transformed garment. Stage 3: Refinement for hole filling with Poly-GAN conditioned on the stitched image and dif ference mask indicating missing regions. Stage 4: Postprocessing for combining the outputs of Stages 2 and 3 with the model head for the final result. is giv en as an input to the generator . It is possible to have an embedding layer Mirza et al. [20] at the input of the Progressiv e GAN which provides class conditional information to the network. Howe ver , ev en with the class information, the generated image will be random in nature with no control ov er the generated structure or te xture of the image. The family of methods that try to swap the source garment with the target g arment are referred to as V irtual Try On Networks (VTON) W angB et al. [29] Dong et al. [5] Han et al. [9] . Methods that fall under VTON usually have a similar pipeline consisting of three main components performed by different netw orks: a) Pose Alignment Network b) Stitch/Swap Network c) Refinement Netw ork. The pose alignment network aligns the tar get garment with the source image by learning an affine transformation Rocco et al. [25] . The stitch/swap network is a GAN with some approaches Dong et al. [5] , but there are cases where it simply performs stitching W angB et al. [29] . The refinement process generally differs among methods, as e very approach has dif ferent shortcomings to refine. The GAN based VTON methods Jetchev et al. [11] , Cui et al. [4] , Lassner et al. [15] are becoming popular due to the ability of their GAN to generate images conditioned on image data such as mask, garment or pose. One of the first methods to use GANs for VTON Jetchev et al. [11] uses the cycle consistency approach to generate humans in different g arments. The conditional GAN in Jetchev et al. [11] can accept the reference garment, model image, and model garment as inputs to the network, which gi ves the ability to control the generation of results conditioned on the reference garment. 3 Poly-GAN Poly-GAN, a new conditional GAN for fashion synthesis, is the first instance where a common architecture is used to perform many tasks pre viously performed by dif ferent networks. Poly-GAN is flexible and can accept multiple conditions as inputs for various tasks. W e begin with an ov erview of the pipeline used for Fashion Synthesis, sho wn in Figure 2, and then we present the details of the Poly-GAN architecture. 3.1 Pipeline for Fashion Synthesis T wo images are inputs to the pipeline, namely the reference garment image and the model image, which is the source person on whom we wish to place the reference garment. A pre-trained pose estimator is used to extract the pose 3 A P R E P R I N T - S E P T E M B E R 6 , 2 0 1 9 Figure 3: Poly-GAN architecture. The left side shows the encoder in green and decoder in blue. The example sho wn is for garment transformation (Stage 1) but the architecture is the same for image stitching and inpainting. The conditions of reference garment and body pose are fed at all of the encoder layers. Skip connections from coarse layers of the encoder are fed to the corresponding layers of the decoder . The top right block shows the decoder module and the bottom right block shows the encoder module. skeleton of the model, as sho wn in Figure 2. The model image is passed to the segmentation network to extract the segmented mask of the garment, which is used to replace the old garment on the model. The entire flo w can be divided into 4 stages illustrated in Figure 2. In Stage 1, the RGB pose sk eleton is concatenated with the reference garment and passed to the Poly-GAN. The RGB skeleton acts as a condition for generating a garment that is reshaped according to an arbitrary human pose. The Stage 1 output is a ne wly generated garment which matches the shape and alignment of the RGB skeleton on which Poly-GAN is conditioned. The transformed garment from Stage 1 along with the segmented human body (without garment and without head) and the RGB skeleton are passed to the Poly-GAN in Stage 2. Stage 2 serves the purpose of stitching the generated garment from Stage 1 to the segmented human body which has no garment. In Stage 2, Poly-GAN assumes that the incoming garment may be positioned at any angle and does not require garment alignment with the human body . This assumption makes Poly-GAN more rob ust to potential misalignment of the generated garment during the transformation in Stage 1. Due to dif ferences in size between the reference garment and the segmented g arment on the body of the model, there may be blank areas due to missing regions. T o deal with missing regions at the output of Stage 2, we pass the resulting image to Stage 3 along with the dif ference mask indicating missing regions. In Stage 3, Poly-GAN learns to perform inpainting on irregular holes and refines the final result. In Stage 4, we perform post processing by combining the results of Stage 2 and Stage 3, and stitching the head back on the body for the final result. W e used established architectures for se gmentation and pose estimation. For the garment se gmentation task, we trained U-Net++ ZhouZ et al. [32] Ronneberger et al. [26] from scratch on the DeepFashion dataset Liu et al. [17] . It is important for the segmentation module to precisely separate the source g arment from the body , so that the reference garment can be placed at the correct location. Otherwise the segmentation process could lead to artifacts in the form of holes or a visible outline around the garment. These artifacts were sometimes present in the U-Net++ se gmentation results. T o extract the RGB skeleton, we used the pretrained LCR-net++ pose estimation method Rogez et al. [24] . The network was trained on MPII Human Pose dataset Andriluka et al. [1] and performed reliably . The pose estimator generated a missing pose ev en in cases with partial occlusion. W e also created human parsing data using a pre-trained method Nie et al. [21] Nie et al. [22] . The human parsing data is used for segmenting the head from the body of the model, as well as for creating data to train U-Net++. 3.2 Poly-GAN Architectur e The Poly-GAN architecture, shown in Figure 3, follows the encoder-decoder style of generator . The discriminator architecture is shown in Figure 4. The netw ork architecture is inspired by recent de velopments in generativ e adversarial networks, and includes some unique features. W e explain the details of our architecture next. 4 A P R E P R I N T - S E P T E M B E R 6 , 2 0 1 9 Figure 4: Architecture of the Discriminator . The example sho wn is for garment transformation but the architecture is the same for image stitching (Stage 2) and inpainting (Stage 3). 3.2.1 Encoder The encoder in our architecture, shown in Figure 3, can be divided in three main components, a) Con v Module, b) ResNet Module, and c) Con v-Norm Module. W e discuss the use of two new modules that are Con v Module and Con v-Norm Module. The ResNet module follo ws the standard architecture of residual networks He, K. et al. [10]. Con v Module. Conditional GANs Mirza et al. [20] , Chen et al. [3] hav e conditional inputs at the first layer . e.g., the source image or latent noise. An important benefit of our architecture is that it includes conditional inputs at e very layer , instead of only ha ving conditional inputs at the first layer . W e observed that if the conditional input is limited to the first layer , then its effect diminishes as the features propagate deeper in the netw ork. As a result, there is hardly any conditional information left in deeper layers. W e decided to feed the conditional inputs at ev ery layer through a Con v module shown in the encoder module of Figure 3. The Con v Module consists of 3 con volution layers, each follo wed by a ReLU activ ation function which outputs the number of features required in the layer under consideration. Con v-Norm Module. The ResNet module outputs ne wly learned features, which are concatenated with the features learned from the Con v Module. There is significant dif ference between the features from ResNet and features from the Con v Module. Thus, we pass the concatenated features to the Conv-Norm module to tak e advantage of the v ariation in features from the two modules. The Con v-Norm module has 2 con volution layers, with each con volution layer followed by an instance normalization layer and activ ation function. 3.2.2 Decoder The decoder part of the network learns to generate the desired image based on the features encoded by the encoder . The decoder part of Poly-GAN, shown in the decoder block of Figure 3, is similar to the decoder used in other GANs, e.g., Cycle GAN ZhuJ et al. [34] . The decoder consists of a series of ResNet modules follo wed by transposed con volution to up-sample the input in each block. Howe ver , there are fe wer layers in the decoder which receiv e information from the encoder through skip connections. 3.2.3 Skip Connections One of the important design decisions of Poly-GAN is the placement of skip connections. From previous works Karras et al. [14] , it is understood that coarser spatial resolution results in higher lev el spatial change, such as pose and shape. W e use skip connections to pass the information from the encoded coarse layers (4x4, 8x8, 16x16) to the respecti ve decoder layers (4x4, 8x8, 16x16) and concatenate to augment the feature representation. W e observed that if we use skip connections between encoder and decoder at spatial resolution abov e (16x16), then the generated image is not deformable enough to be close to the ground truth. If we don’t use skip connections above spatial resolution of (16x16), then the problem of missing minute details arises in the generated image. This is illustrated in the results of Figure 5. Using skip connection to connect all layers of the encoder to their respecti ve decoder layer w ould help in passing minute details from the encoder side, but w ould also hinder learning and generating new images ef fectiv ely . 3.3 Discriminator The discriminator used in our experiments is the same discriminator used in the Super Resolution GAN Ledig et al. [16] . The architecture of the discriminator is sho wn in Figure 4. The reason behind the selection of the discriminator is to penalize for blurry images, which are often generated by GANs using the L 2 loss function. 3.4 Loss Function Our loss function consists of three components: adversarial loss L adv , GAN loss L g an and identity loss L id . The total loss and its components are presented belo w , where D is the discriminator , G is the generator , x i , i = 1 , ..., N represent 5 A P R E P R I N T - S E P T E M B E R 6 , 2 0 1 9 N input samples from different distrib utions p i ( x i ) , i = 1 , ...N , t is the target, F are fake labels and R are real labels. L loss = L adv + L gan + l id (1) min D L adv = λ 1 E t ∼ p d ( t ) k D ( t ) − R k 2 2 + λ 2 E x 1 ∼ p 1 ( x 1 ) ,..,x N ∼ p N ( x N ) k D ( G ( x 1 , .., x N ) − F k 2 2 (2) min G L gan = λ 3 E x 1 ∼ p 1 ( x 1 ) ,..,x N ∼ p N ( x N ) k D ( G ( x 1 , .., x N ) − R k 2 2 (3) L id = λ 4 E t ∼ p d ( t ) ,x 1 ∼ p 1 ( x 1 ) ,..,x N ∼ p N ( x N ) k G ( x 1 , .., x N ) − t k 1 (4) In the abo ve equations, λ 1 to λ 4 are hyperparamters that are tuned during training. W e use the L 2 function as the adversarial loss for our GAN, similar to Mao et al. [19] . W e also use the L 1 function for the identity loss, which helps reduce te xture and color shift between the generated image and the ground truth. W e considered adding other loss functions, such as the perceptual loss Johnson et al. [12] and SSIM loss W angZ et al. [31] , but the y did not improve our results. 4 Experiments 4.1 T raining Methodology The work on StyleGAN Karras et al. [14] rev ealed certain input conditions that contributed to the generation of super realistic images, improving upon the blurry or distorted images generated by pre vious works. These conditions are a) images in the dataset should be of similar zoom ratio; b) the input to the GAN should be simple; c) diversity of the data should be balanced. In our initial experiments with DCGAN Radford et al. [23] , Progressi veGAN Karras et al. [13] , CycleGAN ZhuJ et al. [34] , and StyleGAN, we observed that if we pass the whole body with the head attached, then the results are blurry and the GAN fails to generate properly . In our experiments we made sure that the dataset is clean enough to hav e similar zoom ratio on the subject, images are div erse, and we removed any complex distractions, such as the head, before passing to the Poly-GAN inputs. W e chose to use the RGB sk eleton o ver a binary image of the pose. Our decision was based on the observation that color coded feature presentation are more ef fective. The other important observ ation we made while experimenting with Poly-GAN is that the results are af fected by the order in which the conditions are concatenated and we adjusted the inputs accordingly . W e used hyperparameters similar to CycleGAN ZhuJ et al. [34] for Poly-GAN training. W e used a learning rate of 0.0002, an Adam optimizer with β 1 = 0 . 5 and β 1 = 0 . 999 , batch size of 1 in all our experiments, and image size of 128x128 to feed images in the network. W e also used an image buf fer during training similar to the one suggested in Shri vastav a et al. [28] . The image buf fer helps in stabilizing the discriminator by k eeping a history of a fixed number of generated images which are randomly passed to the discriminator . All the experiments are performed on a workstation with 16GB of RAM using an Nvidia 1080ti GPU and Ub untu 16.04. 4.2 Dataset W e follow the process used in VITON Han et al. [9] while creating our training and testing datasets from the publicly av ailable DeepFashion dataset Liu et al. [17] . W e use LCR-net++ Rogez et al. [24] for 2D human pose estimation, and Pytorch-MULA Nie et al. [21] Nie et al. [22] to get the parsing results for the models. The human parser Nie et al. [21] is used to create data to train U-Net++ ZhouZ et al. [32] , to segment the garments from the models, and to remo ve the head from the models. W e have 14,221 training samples, as in the VITON dataset, and 900 paired samples for testing our method. W e note that the authors in VIT ON Han et al. [9] hav e used Gong et al. [7] for human parsing and Cao et al. [2] for 2D human pose estimation. 4.3 Quantitative Evaluation W e use the Structural Similarity Index metric (SSIM) W angZ et al. [31] and Inception Score metric (IS) Salimans et al. [27] which are widely accepted metrics for ev aluation of images generated by GANs. SSIM predicts the similarity between two images, where the higher the score the better the network is in generating realistic images. The Inception Score compares the quality of an image to human lev el grading, and is sensitive to blurring in an image. W e compare our results to the state of art method CP-VTON W angB et al. [29] . Since the code for CP-VT ON is publicly released W angB et al. [30] , we are able to obtain results on our dataset for comparison. The state of the art method MG-VTON Dong et al. [5] tries to solve the problem in a significantly dif ferent way than ours, but unfortunately their 6 A P R E P R I N T - S E P T E M B E R 6 , 2 0 1 9 T able 1: Fashion Synthesis Quantitativ e Results. Bold numbers indicate best performance. Metric Method SSIM IS CP-VTON 0.6889 2.6049 Poly-GAN Stage 2 0.7174 2.8193 Poly-GAN Stage 3 0.7369 2.6549 Poly-GAN Stage 4 0.7251 2.7904 Figure 5: Poly-GAN results shown from left to right column: Model image; Pose Skeleton; Reference Garment; Stage 1: transformed garment; Stage 2: garment stitched on segmented model; Stage 3: refinement of outputs from stage 2: Stage 4: post-process result from stage 2 and stage 3 with head in original model image. code is not available. Therefore, our comparison was limited to CP-VTON. W e perform our ev aluation on test data that consist of 900 paired images. The results are sho wn in T able 1. SSIM and IS scores are included for the final stage of Poly-GAN, as well as for Stages 2 and 3 (after stitching the head of the model). The results illustrate that Poly-GAN outperforms CP-VTON in all cases. By comparing the scores of dif ferent stages, we see that the output of Stage 2 is the sharpest and has the highest IS score, but it suf fers from holes due to blank regions. The output of Stage 3 has the highest SSIM score, i.e. is the most similar to the desired output, but it suf fers from blurriness artifacts. The combination on the two gi ves a balanced score without scoring the highest for either metric. 4.4 Qualitative Evaluation W e provide a visual comparison of our results with CP-VTON in Figure 6. Our Poly-GAN method is able to k eep body parts intact. especially in cases where the arms occlude the body . For example, in Figure 6 the second image from top shows that CP-VTON fails in the case of self occlusion which our method is able to handle in almost all cases. Poly-GAN is able to precicely map the generated garment from Stage 1 (see Figure 2) to the missing garment region on the body in Stage 2, thus av oiding the spill of color, which is e vident in CP-VTON. While the placement of the garment on the model is very good, both methods suf fer from slight color shift in some samples, which is a common problem with GANs that are asked to generate colors from v arious distrib utions. One limitation of our method is texture preserv ation when generating letters, graphics or patterns that are present in the reference garment. This artifact is due to the use of a GAN to reshape the g arment, instead of using image warping methods. A less pronounced artifact in some images is a visible boundary outline that is caused by errors in the segmentation by U-Net++. This limitation can be ov ercome by using a larger and more div erse dataset for training. 7 A P R E P R I N T - S E P T E M B E R 6 , 2 0 1 9 Figure 6: Comparison examples of Poly-GAN with CP-VT ON. Shown in columns from left to right: model image, reference garment, CP-VTON result, Poly-GAN result. Figure 7: Results of models randomly generated using StyleGAN. For qualitative ev aluation, we also present images that were randomly generated with StyleGAN in Figure 7. It is e vident that although StyleGAN is not conditioned on pose, the generated images suf fer from color spill due to occlusion and artifacts in preserving textures and graphics on the g arments. 5 Conclusion W e offer a no vel approach to the Fashion Synthesis problem by introducing Poly-GAN, a new multi-conditioned GAN architecture that is suitable for man y tasks. Qualitativ e and quantitativ e results on DeepFashion demonstrate the benefits of our approach by achie ving state of the art results. Future research will focus on further exploring the Poly-GAN architecture for a variety of applications. References [1] Andriluka, M., Pishchulin, L., Gehler, P ., & Schiele, B. (2014). 2D Human Pose Estimation: New Benchmark and State of The Art Analysis. In Proceedings of the IEEE Conference on Computer V ision and Pattern Recognition (pp. 3686-3693). [2] Cao, Z., Simon, T ., W ei, S. E., & Sheikh, Y . (2017). Realtime Multi-Person 2D Pose Estimation using P art Affinity Fields. In Proceedings of the IEEE Conference on Computer V ision and Pattern Recognition (pp. 7291-7299). [3] Chen, X., Duan, Y ., Houthooft, R., Schulman, J., Sutske ver , I., & Abbeel, P . (2016). InfoGAN: Interpretable Representation Learning by Information Maximizing Generativ e Adversarial Nets. In Advances in Neural Information Processing Systems(pp. 2172-2180). [4] Cui, Y . R., Liu, Q., Gao, C. Y ., & Su, Z. (2018). FashionGAN: Display your fashion design using Conditional Generativ e Adversarial Nets. In Computer Graphics Forum (V ol. 37, No. 7, pp. 109-119). [5] Dong, H., Liang, X., W ang, B., Lai, H., Zhu, J., & Y in, J. (2019). T owards Multi-pose Guided V irtual T ry-on Network. arXi v preprint 8 A P R E P R I N T - S E P T E M B E R 6 , 2 0 1 9 [6] Dong, H., Liang, X., Gong, K., Lai, H., Zhu, J., & Y in, J. (2018). Soft-Gated W arping-GAN for Pose-Guided Person Image Synthesis. In Advances in Neural Information Processing Systems (pp. 474-484). [7] Gong, K., Liang, X., Zhang, D., Shen, X., & Lin, L. (2017). Look into Person: Self-supervised Structure-sensitiv e Learning and a New Benchmark for Human Parsing. In Proceedings of the IEEE Conference on Computer V ision and Pattern Recognition (pp. 932-940). [8] Goodfellow , I., Pouget-Abadie, J., Mirza, M., Xu, B., W arde-Farley , D., Ozair , S., ... & Bengio, Y . (2014). Generativ e Adversarial Nets. In Advances in Neural Information Processing Systems (pp. 2672-2680). [9] Han, X., W u, Z., W u, Z., Y u, R., & Da vis, L. S. (2018). VITON: An Image-based V irtual Try-on Network. In Proceedings of the IEEE Conference on Computer V ision and Pattern Recognition (pp. 7543-7552). [10] He, K., Zhang, X., Ren, S., & Sun, J. (2016). Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conference on Computer V ision and Pattern Recognition (pp. 770-778). [11] Jetchev , N., & Bergmann, U. (2017). The Conditional Analogy GAN: Swapping Fashion Articles on People Images. In Proceedings of the IEEE International Conference on Computer V ision (pp. 2287-2292). [12] Johnson, J., Alahi, A., & Fei-Fei, L. (2016). Perceptual Losses for Real-T ime Style T ransfer and Super -Resolution. In European Conference on Computer V ision (pp. 694-711). Springer , Cham. [13] Karras, T ., Aila, T ., Laine, S., & Lehtinen, J. (2017). Progressi ve Gro wing of GANs for Impro ved Quality , Stability , and V ariation. arXiv preprint arXi v:1710.10196. [14] Karras, T ., Laine, S., & Aila, T . (2018). A Style-Based Generator Architecture for Generative Adversarial Networks. arXi v preprint [15] Lassner , C., Pons-Moll, G., & Gehler , P . V . (2017). A Generati ve Model of People in Clothing. In Proceedings of the IEEE International Conference on Computer V ision (pp. 853-862). [16] Ledig, C., Theis, L., Huszár , F ., Caballero, J., Cunningham, A., Acosta, A., ... & Shi, W . (2017). Photo-Realistic Single Image Super-Resolution using a Generati ve Adversarial Network. In Proceedings of the IEEE Conference on Computer V ision and Pattern Recognition (pp. 4681-4690). [17] Liu, Z., Luo, P ., Qiu, S., W ang, X., & T ang, X. (2016). Deepfashion: Powering Rob ust Clothes Recognition and Retrie val with Rich Annotations. In Proceedings of the IEEE Conference on Computer V ision and Pattern Recognition (pp. 1096-1104). [18] Liu, J., Ni, B., Y an, Y ., Zhou, P ., Cheng, S., & Hu, J. (2018). Pose T ransferrable Person Re-Identification. In Proceedings of the IEEE Conference on Computer V ision and Pattern Recognition (pp. 4099-4108). [19] Mao, X., Li, Q., Xie, H., Lau, R. Y ., W ang, Z., & P aul Smolley , S. (2017). Least Squares Generati ve Adversarial Networks. In Proceedings of the IEEE International Conference on Computer V ision (pp. 2794-2802). [20] Mirza, M., & Osindero, S. (2014). Conditional Generativ e Adversarial Nets. [21] Nie, X., Feng, J., & Y an, S. (2018). Mutual Learning to Adapt for Joint Human P arsing and Pose Estimation. In Proceedings of the European Conference on Computer V ision (ECCV) (pp. 502-517). [22] Nie, X. (2018). Mutual Learning to Adapt for Joint Human Parsing and Pose Estimation. Retriev ed from https://github .com/NieXC/pytorch-mula [23] Radford, A., Metz, L., & Chintala, S. (2015). Unsupervised Representation Learning with Deep Con volutional Generativ e Adversarial Networks. arXi v preprint [24] Rogez, G., W einzaepfel, P ., & Schmid, C. (2019). LCR-net++: Multi-Person 2d and 3d Pose Detection in Natural Images. IEEE T ransactions on Pattern Analysis and Machine Intelligence. [25] Rocco, I., Arandjelovic, R., & Sivic, J. (2017). Con volutional Neural Network Architecture for Geometric Matching. In Proceedings of the IEEE Conference on Computer V ision and P attern Recognition (pp. 6148-6157). [26] Ronneberger , O., Fischer , P ., & Brox, T . (2015). U-Net: Conv olutional Networks for Biomedical Image Seg- mentation. In International Conference on Medical Image Computing and Computer -Assisted Intervention (pp. 234-241). Springer , Cham. [27] Salimans, T ., Goodfellow , I., Zaremba, W ., Cheung, V ., Radford, A., & Chen, X. (2016). Improved T echniques for T raining GANs. In Advances in Neural Information Processing Systems (pp. 2234-2242). [28] Shriv astav a, A., Pfister, T ., T uzel, O., Susskind, J., W ang, W ., & W ebb, R. (2017). Learning from Simulated and Unsupervised Images through Adversarial T raining. In Proceedings of the IEEE Conference on Computer V ision and Pattern Recognition (pp. 2107-2116). 9 A P R E P R I N T - S E P T E M B E R 6 , 2 0 1 9 [29] W ang, B., Zheng, H., Liang, X., Chen, Y ., Lin, L., & Y ang, M. (2018). T o ward Characteristic-Preserving Image- based V irtual T ry-On Network. In Proceedings of the European Conference on Computer V ision (ECCV) (pp. 589-604). [30] W ang, B. (2018). CP-VTON - Reimplemented code for "T o ward Characteristic-Preserving Image-based V irtual T ry-On Network". Retriev ed from https://github .com/sergeyw ong/cp-vton. [31] Z. W ang, A. C. Bo vik, H. R. Sheikh and E. P . Simoncelli. (2004). Image Quality Assessment: From Error V isibility to Structural Similarity . IEEE Transactions on Image Processing, v ol. 13, no. 4 (pp. 600-612). [32] Zhou, Z., Siddiquee, M. M. R., T ajbakhsh, N., & Liang, J. (2018). UNet++: A Nested U-Net Architecture for Medical Image Segmentation. In Deep Learning in Medical Image Analysis and Multimodal Learning for Clinical Decision Support (pp. 3-11). Springer , Cham. [33] Zhu, S., Fidler , S., Urtasun, R., Lin, D., & Loy , C. C. (2017). Be Y our Own Prada: F ashion Synthesis with Structural Coherence. In Proceedings of the IEEE International Conference on Computer V ision (ICCV). [34] Zhu, J. Y ., Park, T ., Isola, P ., & Efros, A. A. (2017). Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks. In Proceedings of the IEEE International Conference on Computer V ision (pp. 2223-2232). 10

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment