A Communication-Efficient Algorithm for Exponentially Fast Non-Bayesian Learning in Networks

We introduce a simple time-triggered protocol to achieve communication-efficient non-Bayesian learning over a network. Specifically, we consider a scenario where a group of agents interact over a graph with the aim of discerning the true state of the…

Authors: Aritra Mitra, John A. Richards, Shreyas Sundaram

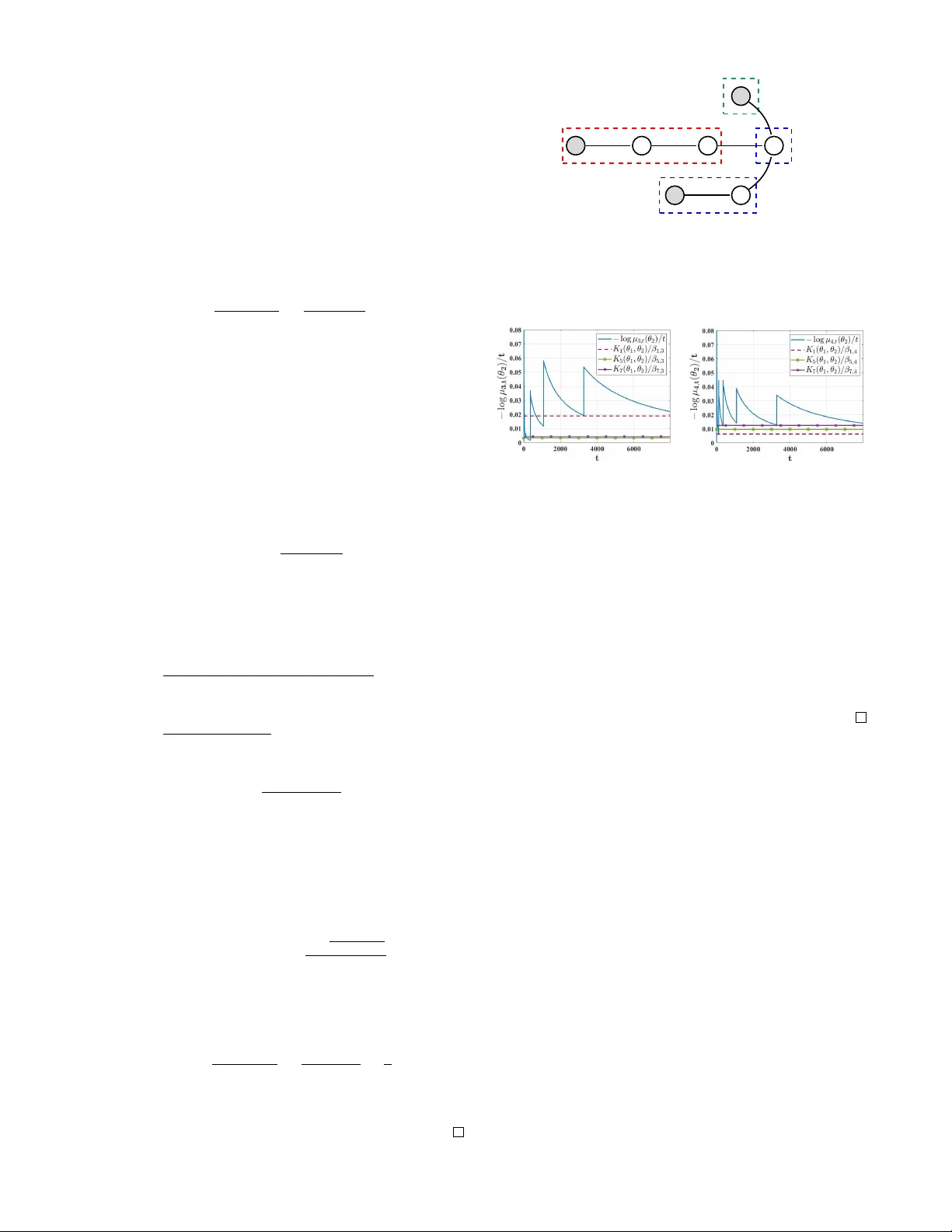

Abstract: We introduce a simple time-triggered protocol to achieve communication-efficient non-Bayesian learning over a network. Specifically, we consider a scenario where a group of agents interact over a graph with the aim of discerning the true state of the world that generates their joint observation profiles. To address this problem, we propose a novel distributed learning rule wherein agents aggregate neighboring beliefs based on a min-protocol, and the inter-communication intervals grow geometrically at a rate $a \geq 1$. Despite such sparse communication, we show that each agent is still able to rule out every false hypothesis exponentially fast with probability 1, as long as $a$ is finite. For the special case when communication occurs at every time-step, i.e., when $a = 1$, we prove that the asymptotic learning rates resulting from our algorithm are network-structure independent, and a strict improvement upon those existing in the literature. In contrast, when $a > 1$, our analysis reveals that the asymptotic learning rates vary across agents, and exhibit a non-trivial dependence on the network topology coupled with the relative entropies of the agents' likelihood models. This motivates us to consider the problem of allocating signal structures to agents to maximize appropriate performance metrics. In certain special cases, we show that the eccentricity centrality and the decay centrality of the underlying graph help identify optimal allocations; for more general scenarios, we bound the deviation from the optimal allocation as a function of the parameter $a$, and the diameter of the communication graph.

A. Mitra and S. Sundaram are with the School of Electrical and Computer Engineering at Purdue University. J. A. Richards is with Sandia National Laboratories. Email: {mitra14, sundara2}@purdue.edu, jaricha@sandia.gov. This work was supported in part by NSF CAREER award 1653648, and by a grant from Sandia National Laboratories. Sandia National Laboratories is a multimission laboratory managed and operated by National Technology & Engineering Solutions of Sandia, LLC, a wholly owned subsidiary of Honeywell International Inc., for the U.S. Department of Energy's National Nuclear Security Administration under contract DE-NA0003525. The views expressed in the article do not necessarily represent the views of the U.S. Department of Energy or the United States Government.

I. INTRODUCTION

A typical problem in networked systems involves a global task that needs to be accomplished by a group of entities or agents via local computations and information exchanges over the network. These agents, however, are typically endowed with partial information about the state of the system; as such, inter-agent communication becomes indispensable for achieving the common goal. Given this premise, it is natural to ask: how frequently must the agents communicate to solve the desired problem? Owing to its practical relevance, the question posed above has received significant recent interest from the control system, information theory and machine learning communities in the context of a variety of problems, namely average consensus [1], optimization [2]–[4], and static parameter estimation [5]. Our goal in this paper is to extend such investigations to the problem of non-Bayesian learning in a network, also known as the distributed hypothesis testing problem [6]–[11].
Specifically, the global task in this setting involves learning the true state of the world (among a finite set of hypotheses) that explains the private observations of each agent in the network. Two notable features that are specific to this problem are as follows.

• Unlike consensus or distributed optimization, agents are privy to exogenous signals, which, if informative, can enable them to eliminate a subset of the false hypotheses exponentially fast.

• A related problem where agents receive exogenous signals (measurements) is that of distributed state estimation [12], [13], where the global task entails tracking potentially unstable dynamics. In contrast, the true state of the world remains fixed over time in our setting, considerably simplifying the objective.

These attributes play in favor of the problem at hand, motivating us to ask the following questions. (i) Can we design an algorithm that enables each agent to learn the truth with sparse communication schedules (and in fact, even sparser than typically employed for other classes of distributed problems)? (ii) If so, how fast do the agents learn the truth? (iii) Can we quantify the trade-off(s) between sparsity in communication and the rate of learning? To the best of our knowledge, these questions remain largely unexplored. In this paper, we take a preliminary step towards responding to them via the following contributions.

We develop and analyze a simple time-triggered learning rule that builds on our recent work on distributed hypothesis testing [11]. Specifically, the data-aggregation step of our algorithm involves a min-protocol as opposed to the consensus-based averaging schemes intrinsic to existing linear [6], [7] and log-linear [8]–[10] learning rules. The basic strategy we employ to achieve communication-efficiency is in line with those proposed in [1], [2], [5], where inter-agent communications become progressively sparser as time evolves.
In particular, the authors in [1] and [2] explore deterministic rules where the inter-communication intervals grow logarithmically and polynomially in time, respectively. In contrast, the authors in [5] propose a rule where at each time-step, an agent communicates with its neighbors in the graph with a probability that decays to zero at a sub-linear rate. In essence, these approaches establish that as long as the inter-communication intervals do not grow too fast, the global task can still be achieved. We depart from these approaches by allowing the inter-communication intervals to grow much faster: at a geometric rate $a \geq 1$, where the parameter $a$ can be adjusted to control the frequency of communication. While more refined approaches to achieve communication-efficiency are conceivable, we show that our simple time-triggered protocol yields strong guarantees. Specifically, we prove that even with an arbitrarily large $a$ (which leads to a highly sparse communication schedule), each agent is still able to learn the truth with probability 1, provided $a$ is finite. Furthermore, we establish that such learning occurs exponentially fast, and characterize the limiting error exponents as a function of certain parameters of our model, and the constant $a$. In particular, our characterization quantifies the trade-offs between communication-efficiency and the speed of learning for the specific problem under consideration.

Our analysis subsumes the special case when communication occurs at every time-step, i.e., when $a = 1$, which corresponds to the scenario studied in our previous work [11]. While the general approach in [11] was shown to be robust to worst-case adversarial attack models, a convergence-rate analysis of the same was missing.
A significant contribution of this paper is to fill this gap by establishing that when $a = 1$, the asymptotic learning rates resulting from our proposed algorithm are network-structure independent, and a strict improvement over the rates provided by existing algorithms in the literature. In contrast, when $a > 1$, we show that the asymptotic learning rates differ from agent to agent, and depend not only on the relative entropies of the agents' signal models, but also on properties of the underlying network. Given this result, we introduce two new measures of the quality of learning, and study the problem of allocating signal structures to agents to maximize such measures. In certain special cases, we show that the eccentricity centrality and the decay centrality of the communication network play key roles in identifying the optimal allocations. For more general cases, we bound the deviation from the optimal allocation as a function of the parameter $a$, and the diameter of the graph.

II. MODEL AND PROBLEM FORMULATION

Network Model: We consider a setting comprising a group of agents $\mathcal{V} = \{1, 2, \ldots, n\}$. At certain specific time-steps (to be decided by a time-triggered communication schedule), these agents interact with each other over a directed graph $\mathcal{G} = (\mathcal{V}, \mathcal{E})$. An edge $(i, j) \in \mathcal{E}$ indicates that agent $i$ can directly transmit information to agent $j$; in such a case, agent $i$ will be called a neighbor of agent $j$. The set of all neighbors of agent $i$ will be denoted $\mathcal{N}_i$. For a strongly-connected graph $\mathcal{G}$, we will use $d(i, j)$ to denote the length of the shortest directed path from agent $i$ to agent $j$, and $\bar{d}(\mathcal{G})$ to denote the diameter of the graph.¹

Observation Model: Let $\Theta = \{\theta_1, \theta_2, \ldots, \theta_m\}$ denote $m$ possible states of the world, with each state representing a hypothesis. A specific state $\theta^\star \in \Theta$, referred to as the true state of the world, gets realized.
Conditional on its realization, at each time-step $t \in \mathbb{N}_+$, every agent $i \in \mathcal{V}$ privately observes a signal $s_{i,t} \in \mathcal{S}_i$, where $\mathcal{S}_i$ denotes the signal space of agent $i$.² The joint observation profile so generated across the network is denoted $s_t = (s_{1,t}, s_{2,t}, \ldots, s_{n,t})$, where $s_t \in \mathcal{S}$, and $\mathcal{S} = \mathcal{S}_1 \times \mathcal{S}_2 \times \cdots \times \mathcal{S}_n$. Specifically, the signal $s_t$ is generated based on a conditional likelihood function $l(\cdot|\theta^\star)$, the $i$-th marginal of which is denoted $l_i(\cdot|\theta^\star)$, and is available to agent $i$. The signal structure of each agent $i \in \mathcal{V}$ is thus characterized by a family of parameterized marginals $l_i = \{l_i(w_i|\theta) : \theta \in \Theta, w_i \in \mathcal{S}_i\}$. We make certain standard assumptions [6]–[10]:

(i) The signal space of each agent $i$, namely $\mathcal{S}_i$, is finite.
(ii) Each agent $i$ has knowledge of its local likelihood functions $\{l_i(\cdot|\theta_p)\}_{p=1}^{m}$, and it holds that $l_i(w_i|\theta) > 0$, $\forall w_i \in \mathcal{S}_i$, and $\forall \theta \in \Theta$.
(iii) The observation sequence of each agent is described by an i.i.d. random process over time; however, at any given time-step, the observations of different agents may potentially be correlated.
(iv) There exists a fixed true state of the world $\theta^\star \in \Theta$ (that is unknown to the agents) that generates the observations of all the agents.

The probability space for our model is denoted $(\Omega, \mathcal{F}, \mathbb{P}^{\theta^\star})$, where $\Omega \triangleq \{\omega : \omega = (s_1, s_2, \ldots), \forall s_t \in \mathcal{S}, \forall t \in \mathbb{N}_+\}$, $\mathcal{F}$ is the $\sigma$-algebra generated by the observation profiles, and $\mathbb{P}^{\theta^\star}$ is the probability measure induced by sample paths in $\Omega$. Specifically, $\mathbb{P}^{\theta^\star} = \prod_{t=1}^{\infty} l(\cdot|\theta^\star)$. We will use the abbreviation a.s. to indicate almost sure occurrence of an event w.r.t. $\mathbb{P}^{\theta^\star}$.

¹ A graph is said to be strongly-connected if it has a directed path between every pair of agents; the diameter of such a graph is the length of the longest shortest path between the agents.
Given the above setting, the goal of each agent in the network is to eventually learn the true state of the world $\theta^\star$. However, the signal structure of any given agent is in general only partially informative, thereby precluding this task from being achieved by any agent in isolation. Specifically, let $\Theta_i^{\theta^\star} \triangleq \{\theta \in \Theta : l_i(w_i|\theta) = l_i(w_i|\theta^\star), \forall w_i \in \mathcal{S}_i\}$ represent the set of hypotheses that are observationally equivalent to the true state $\theta^\star$ from the perspective of agent $i$. An agent $i$ is deemed partially informative about the truth if $|\Theta_i^{\theta^\star}| > 1$. Since potentially every agent can be partially informative in the sense described above, inter-agent communications become necessary for each agent to learn the truth.

In this context, our objectives in this paper are to develop an understanding of (i) the amount of leeway that the above problem affords in terms of sparsifying inter-agent communications without compromising the objective of learning the truth, and (ii) the trade-offs between sparse communication and the rate of learning. To this end, we recall the following definition from [11] that will prove useful in our subsequent developments.

Definition 1. (Source agents) An agent $i$ is said to be a source agent for a pair of distinct hypotheses $\theta_p, \theta_q \in \Theta$ if it can distinguish between them, i.e., if $D(l_i(\cdot|\theta_p) \,\|\, l_i(\cdot|\theta_q)) > 0$, where $D(l_i(\cdot|\theta_p) \,\|\, l_i(\cdot|\theta_q))$ represents the KL-divergence [14] between the distributions $l_i(\cdot|\theta_p)$ and $l_i(\cdot|\theta_q)$. The set of source agents for pair $(\theta_p, \theta_q)$ is denoted $\mathcal{S}(\theta_p, \theta_q)$.

Throughout the rest of the paper, we will use $K_i(\theta_p, \theta_q)$ as a shorthand for $D(l_i(\cdot|\theta_p) \,\|\, l_i(\cdot|\theta_q))$.

² We use $\mathbb{N}$ and $\mathbb{N}_+$ to refer to the set of non-negative integers and positive integers, respectively.

III. A COMMUNICATION-EFFICIENT LEARNING RULE

In this section, we formally introduce a simple time-triggered belief update rule parameterized by a constant $a \in \mathbb{N}_+$ that determines the frequency of communication (to be made more precise below). In order to collaboratively learn the true state of the world, every agent $i$ maintains a local belief vector $\pi_{i,t}$, and an actual belief vector $\mu_{i,t}$, each of which is a probability distribution over the hypothesis set $\Theta$. These vectors are initialized with $\pi_{i,0}(\theta) > 0$, $\mu_{i,0}(\theta) > 0$, $\forall \theta \in \Theta$, $\forall i \in \mathcal{V}$ (but otherwise arbitrarily), and subsequently updated as follows.

• Update of the local beliefs: At each time-step $t + 1 \in \mathbb{N}_+$, the local belief vectors are updated based on a standard Bayesian rule:

$$\pi_{i,t+1}(\theta) = \frac{l_i(s_{i,t+1}|\theta)\,\pi_{i,t}(\theta)}{\sum_{p=1}^{m} l_i(s_{i,t+1}|\theta_p)\,\pi_{i,t}(\theta_p)}. \tag{1}$$

• Update of the actual beliefs: Let $\mathbb{I} = \{t_k\}_{k \in \mathbb{N}_+}$ denote a sequence of time-steps satisfying $t_{k+1} - t_k = a^k$, $\forall k \in \mathbb{N}_+$, with $t_1 = 1$. If $t + 1 \in \mathbb{I}$, then $\mu_{i,t+1}$ is updated as

$$\mu_{i,t+1}(\theta) = \frac{\min\{\{\mu_{j,t}(\theta)\}_{j \in \mathcal{N}_i}, \pi_{i,t+1}(\theta)\}}{\sum_{p=1}^{m} \min\{\{\mu_{j,t}(\theta_p)\}_{j \in \mathcal{N}_i}, \pi_{i,t+1}(\theta_p)\}}. \tag{2}$$

If $t + 1 \notin \mathbb{I}$, $\mu_{i,t+1}$ is simply held constant as follows:

$$\mu_{i,t+1}(\theta) = \mu_{i,t}(\theta). \tag{3}$$

In words, while the local beliefs are updated at every time-step, the actual beliefs are updated only at time-steps that belong to the set $\mathbb{I}$, i.e., an agent $i \in \mathcal{V}$ is allowed to transmit $\mu_{i,t}$ to its out-neighbors, and receive $\mu_{j,t}$ from each in-neighbor $j$ in $\mathcal{G}$ if and only if $t + 1 \in \mathbb{I}$. When $a = 1$, the actual beliefs get updated as per (2) at every time-step, and we recover the rule proposed in [11]. When $a > 1$, note that the inter-communication intervals grow exponentially at a rate dictated by the parameter $a$. Our goal in this paper is to precisely characterize the impact of such sparse communication on the asymptotic rate of learning of each agent.
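The update rules (1)-(3) and the trigger schedule can be sketched in a few lines of Python. This is only an illustrative sketch, not the authors' implementation: the function names (`trigger_times`, `bayes_update`, `min_update`, `run`), the uniform initial beliefs, and the array layout (`lik[i][s, p]` holding $l_i(s|\theta_p)$ with 0-based signal indices) are assumptions made here for concreteness.

```python
import numpy as np

def trigger_times(a, horizon):
    """Trigger set I up to `horizon`: t_1 = 1 and t_{k+1} - t_k = a**k."""
    times, t, k = [], 1, 1
    while t <= horizon:
        times.append(t)
        t += a ** k
        k += 1
    return times

def bayes_update(pi, lik_col):
    """Rule (1): lik_col[p] = l_i(s | theta_p) for the observed signal s."""
    post = lik_col * pi
    return post / post.sum()

def min_update(neighbor_mus, pi_new):
    """Rule (2): entrywise min over the in-neighbors' previous actual
    beliefs and the agent's own new local belief, then normalize."""
    stacked = np.vstack(list(neighbor_mus) + [pi_new])
    m = stacked.min(axis=0)
    return m / m.sum()

def run(lik, in_nbrs, signals, a):
    """lik[i][s, p] = l_i(s | theta_p); in_nbrs[i] lists the in-neighbors
    of agent i; signals[t-1][i] is agent i's signal at time-step t.
    Uniform initial beliefs are an assumption of this sketch."""
    n, m = len(lik), lik[0].shape[1]
    pi = [np.full(m, 1.0 / m) for _ in range(n)]
    mu = [np.full(m, 1.0 / m) for _ in range(n)]
    horizon = len(signals)
    triggers = set(trigger_times(a, horizon))
    for t in range(1, horizon + 1):
        # Local beliefs are updated at every time-step.
        pi = [bayes_update(pi[i], lik[i][signals[t - 1][i]]) for i in range(n)]
        if t in triggers:
            # The comprehension reads the old `mu` list, matching mu_{j,t} in (2).
            mu = [min_update([mu[j] for j in in_nbrs[i]], pi[i]) for i in range(n)]
        # Otherwise rule (3) applies: mu is left unchanged.
    return pi, mu
```

With $a = 3$, for instance, `trigger_times(3, 50)` yields the communication instants 1, 4, 13, 40, so only four exchanges take place in the first fifty time-steps.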
Prior to doing so, a few comments are in order. First, notice that the data-aggregation rule in (2) is based on a min-protocol, as opposed to any form of "belief-averaging" commonly employed in the existing distributed learning literature [6]–[10]. Essentially, while the local belief updates (1) capture what an agent can learn by itself, the actual belief updates (2) incorporate information from the rest of the network. As demonstrated by Corollary 1 in the next section, when $a = 1$, such a min-protocol yields better asymptotic learning rates than all existing schemes. This motivates us to use a belief update rule of the form (2) for studying the case when $a > 1$. Second, we note that the proposed time-triggered protocol is simple, easy to implement and computationally cheap. At the same time, the exponentially growing intervals afford a much sparser communication schedule relative to related literature. Third, while one can potentially consider extensions of this algorithm that account for asynchronicity, communication failures, delays etc., we focus on the scheme here in order to (i) concretely isolate the trade-off between sparse communication and the quality of learning as measured by the asymptotic learning rates, and (ii) provide insights into how the network structure impacts such rates. A final comment needs to be made regarding the choice of achieving communication-efficiency by cutting down on communication rounds as opposed to truncating the number of bits exchanged per communication round, an approach pursued in quantization-based schemes [15]. As argued in [3], communication latency acts as the bottleneck of overall performance and dominates message-size dependent transmission latency when it comes to transmitting small messages, such as the $m$-dimensional actual belief vectors in our setting. This justifies our sparse communication scheme.
With these points in mind, we proceed to the analysis of the algorithm developed in this section.

IV. MAIN RESULT AND DISCUSSION

The main result of the paper is as follows; the proof of this result is presented in Section V.

Theorem 1. Suppose the communication parameter satisfies $a > 1$, and the following conditions are met.
(i) For every pair of hypotheses $\theta_p, \theta_q \in \Theta$, the corresponding source set $\mathcal{S}(\theta_p, \theta_q)$ is non-empty.
(ii) The communication graph $\mathcal{G}$ is strongly-connected.
(iii) Every agent $i \in \mathcal{V}$ has a non-zero prior belief on each hypothesis, i.e., $\pi_{i,0}(\theta) > 0$, $\mu_{i,0}(\theta) > 0$ for all $i \in \mathcal{V}$, and for all $\theta \in \Theta$.
Then, the time-triggered distributed learning rule described by equations (1), (2), (3) provides the following guarantees.

• (Consistency): For each agent $i \in \mathcal{V}$, $\mu_{i,t}(\theta^\star) \to 1$ a.s.

• (Asymptotic Rate of Rejection of False Hypotheses): For each agent $i \in \mathcal{V}$, and for each false hypothesis $\theta \in \Theta \setminus \{\theta^\star\}$, the following holds:

$$\liminf_{t \to \infty} -\frac{\log \mu_{i,t}(\theta)}{t} \geq \max_{v \in \mathcal{S}(\theta^\star, \theta)} \frac{K_v(\theta^\star, \theta)}{a^{(d(v,i)+1)}} \quad \text{a.s.} \tag{4}$$

We obtain the following important corollary, the proof of which follows readily from that of Theorem 1 in Section V.

Corollary 1. Suppose communication occurs at every time-step, i.e., suppose $a = 1$. Let conditions (i)-(iii) in the statement of Theorem 1 hold. Then, our proposed learning rule guarantees consistency in the same sense as in Theorem 1. Furthermore, for each agent $i \in \mathcal{V}$, and for each false hypothesis $\theta \in \Theta \setminus \{\theta^\star\}$, the following holds:

$$\liminf_{t \to \infty} -\frac{\log \mu_{i,t}(\theta)}{t} \geq \max_{v \in \mathcal{S}(\theta^\star, \theta)} K_v(\theta^\star, \theta) \quad \text{a.s.} \tag{5}$$

We remark on the implications of the above results.

Implications of Theorem 1: We first note that despite its simplicity, the time-triggered algorithm proposed in Section III provides strong guarantees: Eq. (4) indicates that although the inter-communication intervals grow exponentially at an arbitrarily large (but finite) rate $a$, each agent is still able to eliminate every false hypothesis at an exponential rate with probability 1. More interestingly, (4) reveals that the asymptotic learning rates are agent-specific, i.e., different agents may discover the truth at different rates.³ In particular, when considering the asymptotic rate of rejection of a particular false hypothesis at a given agent $i$, notice from the RHS of (4) that one needs to account for the attenuated relative entropies of the corresponding source agents, where the attenuation factor scales exponentially with the distances of agent $i$ from such source agents. This contrasts with existing literature [6]–[10], and the case when $a = 1$ in Corollary 1, where all agents learn the truth at identical rates.

Implications of Corollary 1: In sharp contrast to the case when $a > 1$, Corollary 1 indicates that when communication occurs at every time-step (i.e., $a = 1$), the asymptotic learning rates are network-structure independent, and identical for each agent. Since this case represents the standard distributed hypothesis testing setup studied in literature, it becomes important to know how such rates compare with those resulting from existing "belief-averaging" schemes [6]–[10]. To this end, we note that under the same set of assumptions as in Theorem 1, both linear [6], [7] and log-linear [8]–[10] opinion pooling lead to an asymptotic rate of rejection of the form $\sum_{i \in \mathcal{V}} \nu_i K_i(\theta^\star, \theta)$ for each false hypothesis $\theta \in \Theta \setminus \{\theta^\star\}$, and the rate is identical for each agent. Here, $\nu_i$ represents the eigenvector centrality of agent $i \in \mathcal{V}$. It is well known that for a strongly-connected graph, $\nu_i > 0$, $\forall i \in \mathcal{V}$.
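To make the comparison with (5) explicit, the following short derivation may help. It is a sketch under an assumption not stated in this section, namely that the eigenvector centralities are normalized so that they sum to one over the agents, as is customary for the linear and log-linear rules cited above.

```latex
\sum_{i \in \mathcal{V}} \nu_i K_i(\theta^\star, \theta)
  \;\leq\; \Big( \sum_{i \in \mathcal{V}} \nu_i \Big) \max_{v \in \mathcal{V}} K_v(\theta^\star, \theta)
  \;=\; \max_{v \in \mathcal{S}(\theta^\star, \theta)} K_v(\theta^\star, \theta)
```

The last equality uses the fact that agents outside the source set have zero relative entropy, while the source set is non-empty by condition (i) of Theorem 1. Because every centrality is strictly positive, the inequality is strict unless all agents share the same relative entropy.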
Thus, based on the above discussion, and referring to (5), we conclude that a significant contribution of the algorithm proposed in this paper is that it yields strictly better asymptotic learning rates than those existing in the literature, for the standard setting when $a = 1$.⁴

Trade-Off between Sparse Communication and Quality of Learning: From (4), it is apparent that sparser communication schedules (corresponding to larger $a$'s) come at the cost of lower asymptotic learning rates. Furthermore, since such rates depend upon the network-structure when $a > 1$, a poor allocation of signal structures to agents can have adverse effects on the learning rates of certain agents. However, the above problem is readily bypassed when $a = 1$, since the learning rates for that case solely depend on the relative entropies of the agents, as shown by (5).

³ We use the lower bounds derived in (4), (5) as a proxy when referring to the corresponding asymptotic learning rates.

⁴ Recently, in [16], we showed that this result continues to hold even if the underlying graph changes with time, but satisfies a mild joint-strong connectivity condition.

V. PROOF OF THE MAIN RESULT

In order to prove Theorem 1, we require a few intermediate results. The first one is a standard consequence of Bayesian updating, and characterizes the behavior of the local belief trajectories generated via (1); for a proof, see [11].

Lemma 1. Consider a false hypothesis $\theta \in \Theta \setminus \{\theta^\star\}$, and an agent $i \in \mathcal{S}(\theta^\star, \theta)$. Suppose $\pi_{i,0}(\theta_p) > 0$, $\forall \theta_p \in \Theta$. Then, the update rule (1) ensures that (i) $\pi_{i,t}(\theta) \to 0$ a.s., (ii) $\pi_{i,\infty}(\theta^\star) \triangleq \lim_{t \to \infty} \pi_{i,t}(\theta^\star)$ exists a.s. and satisfies $\pi_{i,\infty}(\theta^\star) \geq \pi_{i,0}(\theta^\star)$, and (iii) the following holds:

$$\lim_{t \to \infty} \frac{1}{t} \log \frac{\pi_{i,t}(\theta)}{\pi_{i,t}(\theta^\star)} = -K_i(\theta^\star, \theta) \quad \text{a.s.} \tag{6}$$

Lemma 2. Suppose the conditions in Theorem 1 hold, and the learning rule given by (1), (2), and (3) is employed by each agent.
Then, there exists a set $\bar{\Omega} \subseteq \Omega$ with the following properties: (i) $\mathbb{P}^{\theta^\star}(\bar{\Omega}) = 1$, and (ii) for each $\omega \in \bar{\Omega}$, there exist constants $\eta(\omega) \in (0, 1)$ and $t_0(\omega) \in (0, \infty)$ such that

$$\pi_{i,t}(\theta^\star) \geq \eta(\omega), \quad \mu_{i,t}(\theta^\star) \geq \eta(\omega), \quad \forall t \geq t_0(\omega), \ \forall i \in \mathcal{V}. \tag{7}$$

Proof. Let $\bar{\Omega} \subseteq \Omega$ denote the set of sample paths for which the assertions in Lemma 1 hold for each false hypothesis $\theta \in \Theta \setminus \{\theta^\star\}$. Based on Lemma 1, we note that $\mathbb{P}^{\theta^\star}(\bar{\Omega}) = 1$. Consequently, to prove the result, it suffices to establish the existence of $\eta(\omega) \in (0, 1)$, and $t_0(\omega) \in (0, \infty)$ such that (7) holds for each sample path $\omega \in \bar{\Omega}$. To this end, pick an arbitrary sample path $\omega \in \bar{\Omega}$. We first argue that the local beliefs of every agent on the true state $\theta^\star$ are bounded away from 0 on $\omega$. To see this, pick any agent $i \in \mathcal{V}$. Suppose there exists some $\theta \in \Theta \setminus \{\theta^\star\}$ for which $i \in \mathcal{S}(\theta^\star, \theta)$. Then, based on our choice of $\omega$, it follows directly from Lemma 1 that $\pi_{i,\infty}(\theta^\star) \geq \pi_{i,0}(\theta^\star) > 0$, where the last inequality follows from condition (iii) in Theorem 1. In particular, given the structure of the update rule (1), it follows that $\pi_{i,t}(\theta^\star) > 0$ for all time (since if $\pi_{i,t}(\theta^\star) = 0$ at any instant, then the corresponding belief would remain at 0 for all subsequent time-steps, thereby violating the fact that $\pi_{i,\infty}(\theta^\star) \geq \pi_{i,0}(\theta^\star) > 0$). If there exists no $\theta \in \Theta \setminus \{\theta^\star\}$ for which $i \in \mathcal{S}(\theta^\star, \theta)$, then every hypothesis in $\Theta$ is observationally equivalent to $\theta^\star$ from the point of view of agent $i$. In this case, it is easy to see that based on (1), $\pi_{i,t} = \pi_{i,0}$, $\forall t \in \mathbb{N}_+$. In particular, this implies $\pi_{i,t}(\theta^\star) = \pi_{i,0}(\theta^\star) > 0$, $\forall t \in \mathbb{N}_+$. This establishes our claim that on $\omega$, the local beliefs of all the agents remain bounded away from 0.

To proceed, define $\gamma_1 \triangleq \min_{i \in \mathcal{V}} \pi_{i,0}(\theta^\star) > 0$, where the inequality follows from condition (iii) in Theorem 1.
Pick a small number $\delta > 0$ such that $\delta < \gamma_1$, and notice that our discussion concerning the evolution of the local beliefs readily implies the existence of a time-step $t_0(\omega)$, such that for all $t \geq t_0(\omega)$, $\pi_{i,t}(\theta^\star) \geq \gamma_1 - \delta > 0$, $\forall i \in \mathcal{V}$. Now define $\gamma_2(\omega) \triangleq \min_{i \in \mathcal{V}} \{\mu_{i,t_0(\omega)}(\theta^\star)\}$, and observe that $\gamma_2(\omega) > 0$. This observation follows from the fact that given the structure of the update rules (2) and (3), and condition (iii) in Theorem 1, $\gamma_2(\omega)$ can equal 0 if and only if some agent in the network sets its local belief on $\theta^\star$ to 0 at some time-step prior to $t_0(\omega)$. However, this possibility is ruled out in view of the previously established fact that on $\omega$, $\pi_{i,t}(\theta^\star) > 0$, $\forall t \in \mathbb{N}$, $\forall i \in \mathcal{V}$. Let $\eta(\omega) = \min\{\gamma_1 - \delta, \gamma_2(\omega)\} > 0$. It is apparent from the preceding discussion that $\pi_{i,t}(\theta^\star) \geq \eta(\omega)$, $\forall t \geq t_0(\omega)$, $\forall i \in \mathcal{V}$. It remains to establish a similar result for the actual beliefs $\mu_{i,t}(\theta^\star)$. To this end, let $\bar{t}(\omega) > t_0(\omega)$ be the first time-step following $t_0(\omega)$ that belongs to the set $\mathbb{I}$. Based on (3), notice that $\mu_{i,t}(\theta^\star) \geq \eta(\omega)$ for all $t \in [t_0(\omega), \bar{t}(\omega))$, and for each $i \in \mathcal{V}$. Based on (2), at time-step $\bar{t}(\omega) \in \mathbb{I}$, $\mu_{i,\bar{t}(\omega)}(\theta^\star)$ for an agent $i \in \mathcal{V}$ satisfies:

$$\mu_{i,\bar{t}(\omega)}(\theta^\star) \geq \frac{\eta(\omega)}{\sum_{p=1}^{m} \min\{\{\mu_{j,\bar{t}(\omega)-1}(\theta_p)\}_{j \in \mathcal{N}_i}, \pi_{i,\bar{t}(\omega)}(\theta_p)\}} \geq \frac{\eta(\omega)}{\sum_{p=1}^{m} \pi_{i,\bar{t}(\omega)}(\theta_p)} = \eta(\omega), \tag{8}$$

where the last equality follows from the fact that the local belief vectors generated via (1) are valid probability distributions over the hypothesis set $\Theta$ at each time-step, and hence $\sum_{p=1}^{m} \pi_{i,\bar{t}(\omega)}(\theta_p) = 1$. The above argument applies identically to each agent in $\mathcal{V}$. Furthermore, it is easily seen that based on (3), and a similar reasoning as above, identical conclusions can be drawn for each time-step $t > t_0(\omega)$, $t \in \mathbb{I}$ when the agents update their actual beliefs based on (2).
This readily establishes (7), and completes the proof.

Lemma 3. Consider a false hypothesis $\theta \in \Theta \setminus \{\theta^\star\}$ and an agent $v \in \mathcal{S}(\theta^\star, \theta)$. Suppose the conditions stated in Theorem 1 hold. Then, the learning rule described by equations (1), (2) and (3) guarantees the following for each agent $i \in \mathcal{V}$:

$$\liminf_{t \to \infty} -\frac{\log \mu_{i,t}(\theta)}{t} \geq \frac{K_v(\theta^\star, \theta)}{a^{(d(v,i)+1)}} \quad \text{a.s.} \tag{9}$$

Proof. Throughout this proof, we use the same notation as in the proof of Lemma 2. With $\bar{\Omega}$ as in Lemma 2, pick an arbitrary sample path $\omega \in \bar{\Omega}$, an agent $v \in \mathcal{S}(\theta^\star, \theta)$, and an agent $i \in \mathcal{V}$. Since condition (ii) in Theorem 1 is met, there exists a directed path of shortest length from agent $v$ to agent $i$ in $\mathcal{G}$. To prove the result, we shall induct on the length of such a path. First, we consider the base case when $d(v, i) = 0$, i.e., when $i = v$. In other words, we will analyze the asymptotic rate of rejection of $\theta$ at the source agent $v$. Fix $\epsilon > 0$, and notice that since $v \in \mathcal{S}(\theta^\star, \theta)$, Lemma 1 implies that there exists $t_v(\omega, \theta, \epsilon) \in \mathbb{N}_+$, such that:

$$\pi_{v,t}(\theta) < e^{-(K_v(\theta^\star, \theta) - \epsilon)t}, \quad \forall t \geq t_v(\omega, \theta, \epsilon). \tag{10}$$

Since $\omega \in \bar{\Omega}$, Lemma 2 guarantees the existence of a time-step $t_0(\omega) < \infty$, and a constant $\eta(\omega) > 0$, such that on $\omega$, $\pi_{i,t}(\theta^\star) \geq \eta(\omega)$, $\mu_{i,t}(\theta^\star) \geq \eta(\omega)$, $\forall t \geq t_0(\omega)$, $\forall i \in \mathcal{V}$. Let $\bar{t}_v(\omega, \theta, \epsilon) = \max\{t_0(\omega), t_v(\omega, \theta, \epsilon)\}$. For the remainder of the proof, to simplify the notation, we suppress the dependence of various quantities on the parameters $\omega$, $\theta$, and $\epsilon$, since such dependence can be easily inferred from context. Let $\tilde{t} > \bar{t}_v$ be the first time-step following $\bar{t}_v$ that belongs to $\mathbb{I}$, i.e., a time-step when agent $v$ updates its actual beliefs based on (2). Then, based on the preceding discussion and (2), we have:

$$\mu_{v,\tilde{t}}(\theta) \overset{(a)}{\leq} \frac{\pi_{v,\tilde{t}}(\theta)}{\sum_{p=1}^{m} \min\{\{\mu_{j,\tilde{t}-1}(\theta_p)\}_{j \in \mathcal{N}_v}, \pi_{v,\tilde{t}}(\theta_p)\}} \overset{(b)}{<} \frac{e^{-(K_v(\theta^\star, \theta) - \epsilon)\tilde{t}}}{\eta(\omega)} = C(\omega)\, e^{-(K_v(\theta^\star, \theta) - \epsilon)\tilde{t}}, \tag{11}$$

where $C(\omega) = \eta(\omega)^{-1}$. Regarding the inequalities in (11), (a) follows directly from (2), whereas (b) follows from (10) and the fact that $\eta(\omega)$ lower bounds the beliefs (both local and actual) of all agents on the true state $\theta^\star$, so that the $\theta^\star$ term alone makes the denominator at least $\eta(\omega)$. Note that consecutive trigger-points $t_k, t_{k+1} \in \mathbb{I}$ satisfy $t_{k+1} = a t_k + 1$. Based on (3), we then have:

$$\mu_{v,t}(\theta) < C(\omega)\, e^{-(K_v(\theta^\star, \theta) - \epsilon)\tilde{t}}, \quad \forall t \in [\tilde{t}, a\tilde{t} + 1). \tag{12}$$

Based on our rule, the next update of $\mu_{v,t}(\theta)$ takes place at time-step $a\tilde{t} + 1$. Employing the same reasoning as we did to arrive at (11), we obtain:

$$\mu_{v,a\tilde{t}+1}(\theta) < C(\omega)\, e^{-(K_v(\theta^\star, \theta) - \epsilon)(a\tilde{t}+1)}. \tag{13}$$

Coupled with the above inequality, (3) once again implies:

$$\mu_{v,t}(\theta) < C(\omega)\, e^{-(K_v(\theta^\star, \theta) - \epsilon)(a\tilde{t}+1)}, \quad \forall t \in [a\tilde{t} + 1, a^2\tilde{t} + a + 1). \tag{14}$$

Generalizing the above reasoning, we obtain:

$$\mu_{v,t}(\theta) < C(\omega)\, e^{-(K_v(\theta^\star, \theta) - \epsilon)(a^p\tilde{t} + f(p))}, \quad \forall t \in [a^p\tilde{t} + f(p),\ a^{(p+1)}\tilde{t} + af(p) + 1), \ p \in \mathbb{N}, \tag{15}$$

where

$$f(p) = \frac{a^p - 1}{a - 1}. \tag{16}$$

This immediately leads to the conclusion that for any $t \geq \tilde{t}$:

$$\mu_{v,t}(\theta) < C(\omega)\, e^{-(K_v(\theta^\star, \theta) - \epsilon)(a^{p(t)}\tilde{t} + f(p(t)))}, \tag{17}$$

where

$$p(t) = \lfloor g(t) \rfloor, \quad g(t) = \frac{\log \dfrac{(a-1)t + 1}{(a-1)\tilde{t} + 1}}{\log a}. \tag{18}$$

Taking the natural log on both sides of (17), dividing throughout by $t$, and simplifying, we obtain that $\forall t \geq \tilde{t}$:

$$-\frac{\log \mu_{v,t}(\theta)}{t} > \frac{(K_v(\theta^\star, \theta) - \epsilon)(a^{p(t)}\tilde{t} + f(p(t)))}{t} - \frac{\log C(\omega)}{t}. \tag{19}$$

Let $\alpha_v(\theta, \epsilon) = (K_v(\theta^\star, \theta) - \epsilon)$.
Then, taking the limit inferior on both sides of the above inequality yields:

$$\liminf_{t \to \infty} -\frac{\log \mu_{v,t}(\theta)}{t} \geq \alpha_v(\theta, \epsilon) \lim_{t \to \infty} \frac{1}{t}\left(a^{p(t)}\tilde{t} + \frac{a^{p(t)} - 1}{a - 1}\right) \geq \frac{\alpha_v(\theta, \epsilon)}{a} \lim_{t \to \infty} \frac{1}{t}\, a^{g(t)}\left(\tilde{t} + \frac{1}{a - 1}\right) = \frac{\alpha_v(\theta, \epsilon)}{a}, \tag{20}$$

where the second inequality follows from the fact that $\lfloor x \rfloor > x - 1$, $\forall x \in \mathbb{R}$, and the final equality results from further simplifications based on (18). Finally, note that $\epsilon$ can be made arbitrarily small in the above inequality, and that the above conclusions hold for a generic sample path $\omega \in \bar{\Omega}$, where $\mathbb{P}^{\theta^\star}(\bar{\Omega}) = 1$. This establishes (9) for the case when $d(v, i) = 0$, and completes the proof of the base case of our induction.

To proceed, suppose (9) holds for each node $i \in \mathcal{V}$ satisfying $0 \leq d(v, i) \leq q$, where $q$ is a non-negative integer satisfying $q \leq \bar{d}(\mathcal{G}) - 1$ (recall that $\bar{d}(\mathcal{G})$ represents the diameter of the graph $\mathcal{G}$). Let $i \in \mathcal{V}$ be such that $d(v, i) = q + 1$. Thus, there must exist some node $l \in \mathcal{N}_i$ such that $d(v, l) = q$. The induction hypothesis applies to this node $l$, and hence, we have:

$$\liminf_{t \to \infty} -\frac{\log \mu_{l,t}(\theta)}{t} \geq \frac{K_v(\theta^\star, \theta)}{a^{(q+1)}} \quad \text{a.s.} \tag{21}$$

Let $\tilde{\Omega} \subseteq \Omega$ be the set of sample paths for which the above inequality holds. With $\bar{\Omega}$ defined as before, notice that $\mathbb{P}^{\theta^\star}(\tilde{\Omega} \cap \bar{\Omega}) = 1$, since $\tilde{\Omega}$ and $\bar{\Omega}$ each have $\mathbb{P}^{\theta^\star}$-measure 1. Pick an arbitrary sample path $\omega \in \tilde{\Omega} \cap \bar{\Omega}$, and notice that based on arguments identical to the base case, on the sample path $\omega$ there exists a time-step $\bar{t}_l$, such that the beliefs of all agents on $\theta^\star$ are bounded below by $\eta(\omega)$ following $\bar{t}_l$, and

$$\mu_{l,t}(\theta) < e^{-(H_l(\theta^\star, \theta) - \epsilon)t}, \quad \forall t \geq \bar{t}_l, \tag{22}$$

where $\epsilon > 0$ is an arbitrary small number and

$$H_l(\theta^\star, \theta) = \frac{K_v(\theta^\star, \theta)}{a^{(q+1)}}. \tag{23}$$

Proceeding as in the base case, let $\tau > \bar{t}_l$ be the first time-step following $\bar{t}_l$ that belongs to the set $\mathbb{I}$.
Noting that $l \in \mathcal{N}_i$, using (2), (22), and similar arguments as those used to arrive at (11), we obtain:

$$\mu_{i,\tau}(\theta) \leq \frac{\mu_{l,\tau-1}(\theta)}{\sum_{p=1}^{m} \min\{\{\mu_{j,\tau-1}(\theta_p)\}_{j\in\mathcal{N}_i},\, \pi_{i,\tau}(\theta_p)\}} < \frac{e^{-(H_l(\theta^\star,\theta)-\epsilon)(\tau-1)}}{\eta(\omega)} = C_l(\omega)\, e^{-(H_l(\theta^\star,\theta)-\epsilon)\tau}, \tag{24}$$

where

$$C_l(\omega) = \frac{e^{(H_l(\theta^\star,\theta)-\epsilon)}}{\eta(\omega)}. \tag{25}$$

Repeating the above analysis for each time-step of the form $a^{p}\tau + f(p)$, $p \in \mathbb{N}_+$, using (3), and following similar arguments as in the base case yields that $\forall t \geq \tau$,

$$\mu_{i,t}(\theta) < C_l(\omega)\, e^{-(H_l(\theta^\star,\theta)-\epsilon)(a^{\bar{p}(t)}\tau + f(\bar{p}(t)))}, \tag{26}$$

where

$$\bar{p}(t) = \lfloor \bar{g}(t) \rfloor, \quad \bar{g}(t) = \frac{\log \frac{(a-1)t+1}{(a-1)\tau+1}}{\log a}. \tag{27}$$

Notice that the inequality in (26) resembles that in (17). Thus, the remaining steps can be completed identically as the base case to yield:

$$\liminf_{t\to\infty} -\frac{\log \mu_{i,t}(\theta)}{t} \geq \frac{H_l(\theta^\star,\theta)}{a} - \frac{\epsilon}{a}. \tag{28}$$

The induction step, and in turn the proof, can be completed by substituting the expression for $H_l(\theta^\star,\theta)$ in the above inequality and recalling that $d(v,i) = q+1$.

Fig. 1. The figure represents the network (agents 1 through 7) for the simulation example in Section VI. Based on the parameters of the model, Theorem 1 implies that the asymptotic rates of rejection of $\theta_2$ for the agents enclosed in the red, blue and green rectangles are dictated by the relative entropies of agents 1, 7 and 5, respectively, illustrating the agent-specific learning rate phenomenon.

Fig. 2. The figure plots the instantaneous rates of decay of the beliefs of agents 3 and 4 on the false hypothesis $\theta_2$ (given by $-\log \mu_{i,t}(\theta_2)/t$, $i \in \{3,4\}$), for the model described in Section VI. The parameter $\beta_{i,j} = a^{(d(i,j)+1)}$ in the above plots represents the factor by which the signal strength of agent $i$ is attenuated at the location of agent $j$.

We are now in position to prove Theorem 1.

Proof. (Theorem 1) Fix a $\theta \in \Theta \setminus \{\theta^\star\}$. Based on condition (i) of the Theorem, $\mathcal{S}(\theta^\star,\theta)$ is non-empty, and based on condition (ii), there exists a path from each agent $v \in \mathcal{S}(\theta^\star,\theta)$ to every agent in $\mathcal{V} \setminus \{v\}$. Eq. (4) then follows from Lemma 3. By definition of a source set, $K_v(\theta^\star,\theta) > 0, \forall v \in \mathcal{S}(\theta^\star,\theta)$; (4) then implies $\lim_{t\to\infty} \mu_{i,t}(\theta) = 0$ a.s., $\forall i \in \mathcal{V}$.

VI. SIMULATION EXAMPLE

Consider a binary hypothesis testing scenario where $\Theta = \{\theta_1, \theta_2\}$, and $\theta_1$ is the true state of the world. The network of agents is depicted in Figure 1. The signal space for every agent is identical, and given by $\mathcal{S}_i = \{1,2\}, \forall i \in \{1,\ldots,7\}$. The agent likelihood models satisfy: $l_i(1|\theta_1) = 0.5, \forall i \in \{1,\ldots,7\}$, $l_1(1|\theta_2) = 0.9$, $l_5(1|\theta_2) = 0.7$, $l_7(1|\theta_2) = 0.85$, and $l_i(1|\theta_2) = 0.5, \forall i \in \{2,3,4,6\}$. Thus, only agents 1, 5 and 7 can distinguish between $\theta_1$ and $\theta_2$, with their relative entropies satisfying $K_1(\theta_1,\theta_2) > K_7(\theta_1,\theta_2) > K_5(\theta_1,\theta_2) > 0$ (all other agents have $K_i(\theta_1,\theta_2) = 0$). With $a = 3$, we have $K_7(\theta_1,\theta_2)/a^2 > K_5(\theta_1,\theta_2)/a > K_1(\theta_1,\theta_2)/a^3$. Figure 2 plots the instantaneous rates of rejection of the false hypothesis $\theta_2$ for agents 3 and 4, resulting from our proposed algorithm. Based on Figures 1 and 2, a few key observations are: (i) each informative agent dominates the speed of learning of agents that are close to it in the network, (ii) the rate of rejection of the false hypothesis is indeed agent-specific, and (iii) the simulation results agree very closely with the theoretical lower bounds on the limiting rates of rejection in Theorem 1.

VII. THE IMPACT OF INFORMATION ALLOCATION ON ASYMPTOTIC LEARNING RATES

Theorem 1 indicates that the asymptotic learning rates of the agents are shaped by a non-trivial interplay between the relative entropies of their signal models and the structure of the network.
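This interplay can be checked numerically on the Section VI example. The sketch below recomputes the relative entropies of agents 1, 5 and 7 from the stated likelihoods and verifies the two orderings quoted there for $a = 3$. It assumes that $K_i(\theta_1,\theta_2)$ is the Kullback-Leibler divergence between $l_i(\cdot|\theta_1)$ and $l_i(\cdot|\theta_2)$ with natural logarithms; the function and variable names are illustrative and not taken from the paper.

```python
import math


def relative_entropy(p, q):
    """KL divergence D(p || q) between two discrete distributions (natural log)."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Probability of observing signal 1 under each hypothesis (values from Section VI).
l_theta1 = 0.5                          # identical for every agent under theta_1
l_theta2 = {1: 0.9, 5: 0.7, 7: 0.85}    # informative agents under theta_2

# Assumption: K_i(theta_1, theta_2) = D( l_i(.|theta_1) || l_i(.|theta_2) ).
K = {
    agent: relative_entropy([l_theta1, 1 - l_theta1], [q, 1 - q])
    for agent, q in l_theta2.items()
}

a = 3
scaled = {
    "K7/a^2": K[7] / a**2,
    "K5/a": K[5] / a,
    "K1/a^3": K[1] / a**3,
}

for name, value in scaled.items():
    print(f"{name} = {value:.4f}")
```

Although agent 1 has the largest relative entropy, its contribution is the smallest of the three once it is divided by $a^3$, which is the kind of distance-dependent attenuation that the lower bound in Theorem 1 captures.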
In view of this fact, our next goal is to conduct a preliminary analysis of how information should be allocated to the agents in order to maximize appropriate performance metrics that are a function of the asymptotic learning rates. Our investigation is inspired by similar questions in [7]; however, as we discuss next, our formulation differs considerably from [7]. Specifically, unlike [7], our proposed learning rule leads to asymptotic learning rates that are agent-dependent when $a > 1$ (as seen in Section VI). Consequently, the performance metrics that we seek to maximize differ from those in [7]. As we shall soon see, while the eigenvector centrality plays a key role in shaping the speed of learning in [7], alternate network centrality measures become important when it comes to the belief dynamics generated by our rule.

To make the above ideas precise, suppose we are given a strongly-connected communication graph $\mathcal{G}$, and a set of $n$ signal structures $\mathcal{L} = \{l_1, \ldots, l_n\}$, where each $l_i$ represents a family of parameterized marginals as defined in Section II. By an allocation of signal structures to agents, we mean a bijection $\psi : \mathcal{L} \to \mathcal{V}$ between the elements of $\mathcal{L}$ and the elements of the vertex set of $\mathcal{G}$, namely $\mathcal{V}$. Let $\Psi$ represent the set of all possible bijections between the elements of $\mathcal{L}$ and $\mathcal{V}$. Our objective is to optimally pick $\psi \in \Psi$ so as to maximize the performance metrics that we define next. To this end, given a distinct pair of hypotheses $\theta_p, \theta_q \in \Theta$, recall from (4) that based on our proposed learning rule,

$$\rho_i^{\psi}(\theta_p,\theta_q) \triangleq \max_{v \in \mathcal{S}^{\psi}(\theta_p,\theta_q)} \frac{K_v^{\psi}(\theta_p,\theta_q)}{a^{(d(v,i)+1)}} \tag{29}$$

lower bounds the limiting rate at which agent $i$ rules out $\theta_q$ when $\theta_p$ is realized as the true state; the superscript $\psi$ reflects the dependence of the corresponding objects on the allocation policy $\psi$.
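The quantity in (29) is straightforward to evaluate once the relative entropies and the graph distances are known. The following is a minimal sketch under stated assumptions: the graph is given as an adjacency dictionary in which `adj[u]` lists the agents reachable from `u` in one hop, distances $d(v,i)$ are computed by breadth-first search, and the five-agent path graph and the relative-entropy values are hypothetical numbers chosen only for illustration (they do not come from the paper).

```python
from collections import deque


def bfs_distances(adj, source):
    """Hop distances d(source, i) to every agent i reachable from source."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for w in adj[u]:
            if w not in dist:
                dist[w] = dist[u] + 1
                queue.append(w)
    return dist


def rate_lower_bounds(adj, K, a):
    """Evaluate (29): rho_i = max over source agents v of K_v / a^(d(v, i) + 1).

    K maps each agent to its relative entropy for one fixed pair of hypotheses;
    agents with K_v = 0 are not in the source set and are skipped.
    """
    rho = {i: 0.0 for i in adj}
    for v, k_v in K.items():
        if k_v <= 0:
            continue
        for i, d in bfs_distances(adj, v).items():
            rho[i] = max(rho[i], k_v / a ** (d + 1))
    return rho


# Hypothetical example: undirected path graph 1 - 2 - 3 - 4 - 5.
adj = {1: [2], 2: [1, 3], 3: [2, 4], 4: [3, 5], 5: [4]}
K = {1: 0.5, 2: 0.0, 3: 0.0, 4: 0.0, 5: 0.09}  # hypothetical relative entropies
rho = rate_lower_bounds(adj, K, a=3)

for agent in sorted(rho):
    print(agent, round(rho[agent], 5))
```

In this hypothetical instance, agents 1 through 3 inherit their bound from agent 1, while agents 4 and 5 inherit theirs from the weaker but closer agent 5, so the bound is agent-specific even though the graph is strongly connected.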
We now introduce two measures of the quality of learning that are specific to our setting:

$$\rho_{avg}^{\psi} \triangleq \min_{\theta_p,\theta_q \in \Theta} \frac{1}{n} \sum_{i \in \mathcal{V}} \rho_i^{\psi}(\theta_p,\theta_q), \qquad \rho_{min}^{\psi} \triangleq \min_{\theta_p,\theta_q \in \Theta} \min_{i \in \mathcal{V}} \rho_i^{\psi}(\theta_p,\theta_q). \tag{30}$$

While $\rho_{avg}^{\psi}$ captures the average rate of learning across the network, $\rho_{min}^{\psi}$ focuses on the agent that converges the slowest; given that any state in $\Theta$ can be realized, these metrics account for the pair of states that are the hardest to tell apart. We seek to maximize $\rho_{avg}^{\psi}$ and $\rho_{min}^{\psi}$ over the set of allocations $\Psi$. Our first result on this topic makes a connection to two popular network centrality measures, namely, the eccentricity centrality and the decay centrality, defined as follows. For a strongly-connected graph $\mathcal{G}$, the eccentricity centrality $\xi_i$ [17], and the decay centrality $\kappa_i(\delta)$ [18], of an agent $i \in \mathcal{V}$ are given by

$$\xi_i = \frac{1}{\max_{j \in \mathcal{V} \setminus \{i\}} d(i,j)}, \qquad \kappa_i(\delta) = \sum_{j \in \mathcal{V} \setminus \{i\}} \delta^{d(i,j)}, \tag{31}$$

where $0 < \delta < 1$ is the decay parameter.

The eccentricity centrality is a distance-based centrality measure that aims to find the 'center' of a graph such that a process originating at the center minimizes the response time to any other agent. The decay centrality is also a closeness-based centrality measure where an agent is rewarded for being close to other agents, with agents at higher distances contributing less to the centrality as compared to those that are closer. We have the following result.

Proposition 1. Let $\mathcal{G}$ be strongly-connected. Suppose $a > 1$, and let there exist a signal structure $l_u \in \mathcal{L}$ such that the following is true for all $\theta_p, \theta_q \in \Theta$,⁵

$$\frac{K_{l_u}(\theta_p,\theta_q)}{a^{\bar{d}(\mathcal{G})}} > K_{l_w}(\theta_p,\theta_q), \quad \forall l_w \in \mathcal{L} \setminus \{l_u\}. \tag{32}$$

Then, (i) any allocation $\psi \in \Psi$ such that $\psi(l_u) \in \arg\max_{i \in \mathcal{V}} \xi_i$ maximizes $\rho_{min}^{\psi}$, and (ii) any allocation $\psi \in \Psi$ such that $\psi(l_u) \in \arg\max_{i \in \mathcal{V}} \kappa_i(\frac{1}{a})$ maximizes $\rho_{avg}^{\psi}$.

Proof.
For part (i), consider two allocations $\psi_1, \psi_2 \in \Psi$ such that $\psi_1(l_u) = x_1 \in \arg\max_{i \in \mathcal{V}} \xi_i$, and $\psi_2(l_u) = x_2$. Based on condition (32), and (29), it is easy to see that for any pair $\theta_p, \theta_q \in \Theta$, and for each $i \in \mathcal{V}$:

$$\rho_i^{\psi_1}(\theta_p,\theta_q) = \frac{K_{x_1}^{\psi_1}(\theta_p,\theta_q)}{a^{(d(x_1,i)+1)}}, \qquad \rho_i^{\psi_2}(\theta_p,\theta_q) = \frac{K_{x_2}^{\psi_2}(\theta_p,\theta_q)}{a^{(d(x_2,i)+1)}}. \tag{33}$$

Based on (31), we then obtain:

$$\min_{i \in \mathcal{V}} \rho_i^{\psi_1}(\theta_p,\theta_q) - \min_{i \in \mathcal{V}} \rho_i^{\psi_2}(\theta_p,\theta_q) = \frac{K_{x_1}^{\psi_1}(\theta_p,\theta_q)}{a^{(1/\xi_{x_1}+1)}} - \frac{K_{x_2}^{\psi_2}(\theta_p,\theta_q)}{a^{(1/\xi_{x_2}+1)}} = \frac{K_{l_u}(\theta_p,\theta_q)}{a}\left(\frac{1}{a^{1/\xi_{x_1}}} - \frac{1}{a^{1/\xi_{x_2}}}\right) \geq 0, \tag{34}$$

where the second equality follows from the fact that the signal structure of agent $x_1$ under allocation $\psi_1$, and agent $x_2$ under allocation $\psi_2$, are each equal to $l_u$, and the last inequality follows by noting that $\xi_{x_1} \geq \xi_{x_2}$ based on the choice of agent $x_1$. The proof of part (i) then follows by noting that the inequality in (34) holds for every pair $\theta_p, \theta_q \in \Theta$.

For part (ii), we proceed as in part (i) and compare two allocations $\psi_1, \psi_2 \in \Psi$ such that $\psi_1(l_u) = x_1 \in \arg\max_{i \in \mathcal{V}} \kappa_i(\frac{1}{a})$, and $\psi_2(l_u) = x_2$. The equalities in (33) hold once again, and combined with (31) lead to:

$$\frac{1}{n} \sum_{i \in \mathcal{V}} \rho_i^{\psi_j}(\theta_p,\theta_q) = \frac{K_{x_j}^{\psi_j}(\theta_p,\theta_q)}{an}\left(1 + \kappa_{x_j}\!\left(\frac{1}{a}\right)\right), \tag{35}$$

where $j \in \{1,2\}$. The proof can be completed as in part (i) by noting that $\kappa_{x_1}(\frac{1}{a}) \geq \kappa_{x_2}(\frac{1}{a})$.

The intuition behind the above result is simple, and as follows. Suppose there exists a signal structure that is sufficiently stronger in its discriminatory power than the others w.r.t. every pair of hypotheses. Then, the agent allocated such a structure will govern the rate of learning of every other agent in the network. To expedite learning, it thus makes sense to allocate such a dominant signal structure to the most central agent in the network (where the specific centrality measure depends on the performance metric).

⁵ Here, the quantity $K_{l_u}(\theta_p,\theta_q)$ should be interpreted differently from $K_u(\theta_p,\theta_q)$; whereas the former indicates a relative entropy associated with the signal structure $l_u$, the latter indicates a relative entropy associated with agent $u$ once it has been allocated a certain signal structure (which may not necessarily be $l_u$).

Remark 1. We point out that eccentricity centrality and decay centrality have been widely studied in the context of information spread over social and economic networks [19]–[21]. For instance, while the former bears connections to information cascades [19], the latter facilitates the selection of an "implant" node that maximizes the diffusion of a certain product or idea over a network [21]. Proposition 1 identifies conditions under which the above centrality measures have similar implications for the belief dynamics generated by our proposed learning rule.

While Proposition 1 allows one to identify the optimal allocation strategy by simply computing the appropriate centrality measures, the scenario becomes much more complicated if no additional structure is imposed either on the network or on the agents' likelihood models. For such general cases, we provide a coarse upper bound on the suboptimality of any given allocation.

Proposition 2. Let $\mathcal{G}$ be strongly-connected. Suppose $a > 1$, and let $\psi^\star_\alpha \in \Psi$ and $\psi^\star_\beta \in \Psi$ be allocations that maximize $\rho_{min}^{\psi}$ and $\rho_{avg}^{\psi}$, respectively. Then, for any allocation $\psi \in \Psi$,

$$\frac{\rho_{min}^{\psi^\star_\alpha}}{\rho_{min}^{\psi}} \leq a^{\bar{d}(\mathcal{G})}, \qquad \frac{\rho_{avg}^{\psi^\star_\beta}}{\rho_{avg}^{\psi}} \leq a^{\bar{d}(\mathcal{G})}. \tag{36}$$

Proof. We only prove the second inequality in (36) since the first follows from similar arguments.
Consider any $\psi \in \Psi$, and suppose the pair $(\theta_m, \theta_n)$ minimizes $\rho_{avg}^{\psi}$ for this allocation. The following inequality is then apparent from the definition of the quantities involved:

$$\frac{\rho_{avg}^{\psi^\star_\beta}}{\rho_{avg}^{\psi}} \leq \frac{\sum_{i \in \mathcal{V}} \rho_i^{\psi^\star_\beta}(\theta_m,\theta_n)}{\sum_{i \in \mathcal{V}} \rho_i^{\psi}(\theta_m,\theta_n)}. \tag{37}$$

Now fix an agent $i$, and suppose that under the allocation $\psi^\star_\beta$, the signal structure that governs the quantity $\rho_i^{\psi^\star_\beta}(\theta_m,\theta_n)$ (i.e., the structure that maximizes the right hand side of (29)) is $l_u$. Suppose $l_u$ is allocated to agents $v_1$ and $v_2$ under $\psi^\star_\beta$ and $\psi$, respectively. An inspection of (29) then reveals:

$$\frac{\rho_i^{\psi^\star_\beta}(\theta_m,\theta_n)}{\rho_i^{\psi}(\theta_m,\theta_n)} \leq a^{d(v_2,i) - d(v_1,i)} \leq a^{\bar{d}(\mathcal{G})}. \tag{38}$$

The above bound applies to every agent $i \in \mathcal{V}$, and hence, substituting it in (37) leads to the desired result.

VIII. CONCLUSION

We developed and analyzed a simple time-triggered protocol for achieving communication-efficient non-Bayesian learning over a network. Unlike existing approaches, we allowed the inter-communication intervals to grow unbounded over time at an arbitrarily large (but finite) geometric rate $a \geq 1$. We showed that despite such sparse communication, our approach enables each agent to learn the true state exponentially fast with probability 1. We then characterized the limiting error exponents of the agents as a function of the primitives of our model and the parameter $a$. For the special case when communication occurs at every time-step, i.e., when $a = 1$, we proved that our approach yields strictly better asymptotic learning rates than those existing in the literature. Finally, for $a > 1$, we studied the impact of signal allocations on the speed of learning. As future work, we plan to explore event-triggered rules for the problem under consideration, and investigate in more detail the aspect of information allocation initiated in Section VII.

REFERENCES

[1] A. Olshevsky, I. C. Paschalidis, and A. Spiridonoff, "Fully asynchronous push-sum with growing intercommunication intervals," in Proceedings of the American Control Conference, 2018, pp. 591–596.
[2] K. Tsianos, S. Lawlor, and M. G. Rabbat, "Communication/computation tradeoffs in consensus-based distributed optimization," in Advances in Neural Info. Proc. Systems, 2012, pp. 1943–1951.
[3] T. Chen, G. Giannakis, T. Sun, and W. Yin, "LAG: Lazily aggregated gradient for communication-efficient distributed learning," in Advances in Neural Info. Proc. Systems, 2018, pp. 5055–5065.
[4] G. Lan, S. Lee, and Y. Zhou, "Communication-efficient algorithms for decentralized and stochastic optimization," Mathematical Programming, pp. 1–48, 2017.
[5] A. K. Sahu, D. Jakovetic, and S. Kar, "Communication optimality trade-offs for distributed estimation," arXiv preprint arXiv:1801.04050, 2018.
[6] A. Jadbabaie, P. Molavi, A. Sandroni, and A. Tahbaz-Salehi, "Non-Bayesian social learning," Games and Economic Behavior, vol. 76, no. 1, pp. 210–225, 2012.
[7] A. Jadbabaie, P. Molavi, and A. Tahbaz-Salehi, "Information heterogeneity and the speed of learning in social networks," Columbia Bus. Sch. Res. Paper, pp. 13–28, 2013.
[8] S. Shahrampour, A. Rakhlin, and A. Jadbabaie, "Distributed detection: Finite-time analysis and impact of network topology," IEEE Trans. on Autom. Control, vol. 61, no. 11, pp. 3256–3268, 2016.
[9] A. Nedić, A. Olshevsky, and C. A. Uribe, "Fast convergence rates for distributed Non-Bayesian learning," IEEE Trans. on Autom. Control, vol. 62, no. 11, pp. 5538–5553, 2017.
[10] A. Lalitha, T. Javidi, and A. Sarwate, "Social learning and distributed hypothesis testing," IEEE Trans. on Info. Theory, vol. 64, no. 9, 2018.
[11] A. Mitra, J. A. Richards, and S. Sundaram, "A new approach for distributed hypothesis testing with extensions to Byzantine-resilience," in Proc. of the American Control Conference, 2019.
[12] S. Park and N. C. Martins, "Design of distributed LTI observers for state omniscience," IEEE Trans. on Autom. Control, vol. 62, no. 2, pp. 561–576, 2017.
[13] A. Mitra and S. Sundaram, "Distributed observers for LTI systems," IEEE Trans. on Autom. Control, vol. 63, no. 11, pp. 3689–3704, 2018.
[14] T. M. Cover and J. A. Thomas, Elements of Information Theory. John Wiley & Sons, 2012.
[15] A. T. Suresh, F. X. Yu, S. Kumar, and H. B. McMahan, "Distributed mean estimation with limited communication," in Proc. of the Int. Conf. on Machine Learning, vol. 70. JMLR, 2017, pp. 3329–3337.
[16] A. Mitra, J. A. Richards, and S. Sundaram, "A new approach to distributed hypothesis testing and non-Bayesian learning: Improved learning rate and Byzantine-resilience," 2019.
[17] P. Hage and F. Harary, "Eccentricity and centrality in networks," Social Networks, vol. 17, no. 1, pp. 57–63, 1995.
[18] N. Tsakas, "On decay centrality," The BE Journal of Theoretical Economics, 2016.
[19] M. Jalili and M. Perc, "Information cascades in complex networks," Journal of Complex Networks, vol. 5, no. 5, pp. 665–693, 2017.
[20] M. O. Jackson and A. Wolinsky, "A strategic model of social and economic networks," Journal of Econ. Theory, vol. 71, no. 1, pp. 44–74, 1996.
[21] K. Chatterjee and B. Dutta, "Credibility and strategic learning in networks," Int. Economic Review, vol. 57, no. 3, pp. 759–786, 2016.
