A Semi-Automated Usability Evaluation Framework for Interactive Image Segmentation Systems

For complex segmentation tasks, the achievable accuracy of fully automated systems is inherently limited. Specifically, when a precise segmentation result is desired for a small amount of given data sets, semi-automatic methods exhibit a clear benefi…

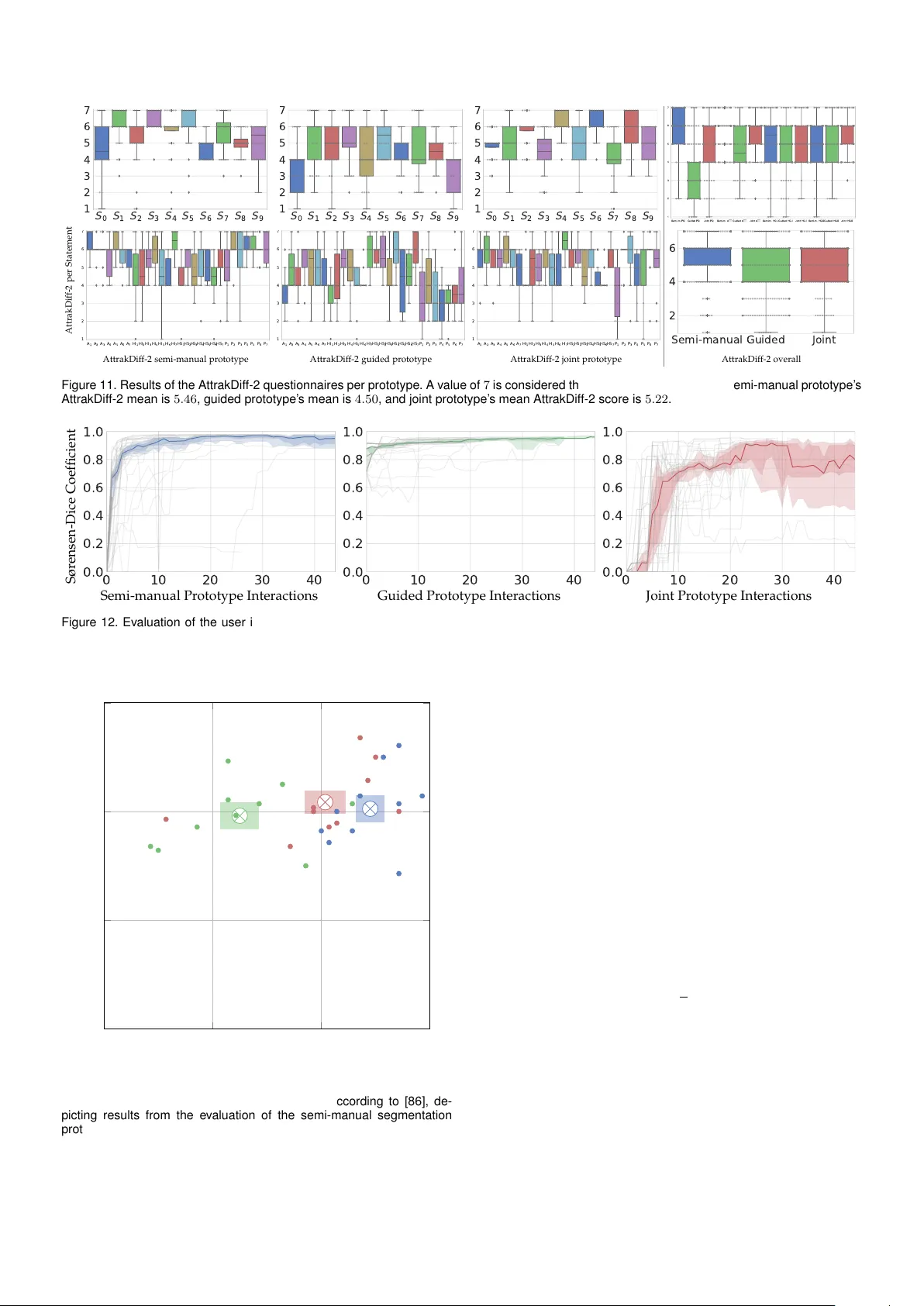

Authors: Mario Amrehn, Stefan Steidl, Reinier Kortekaas