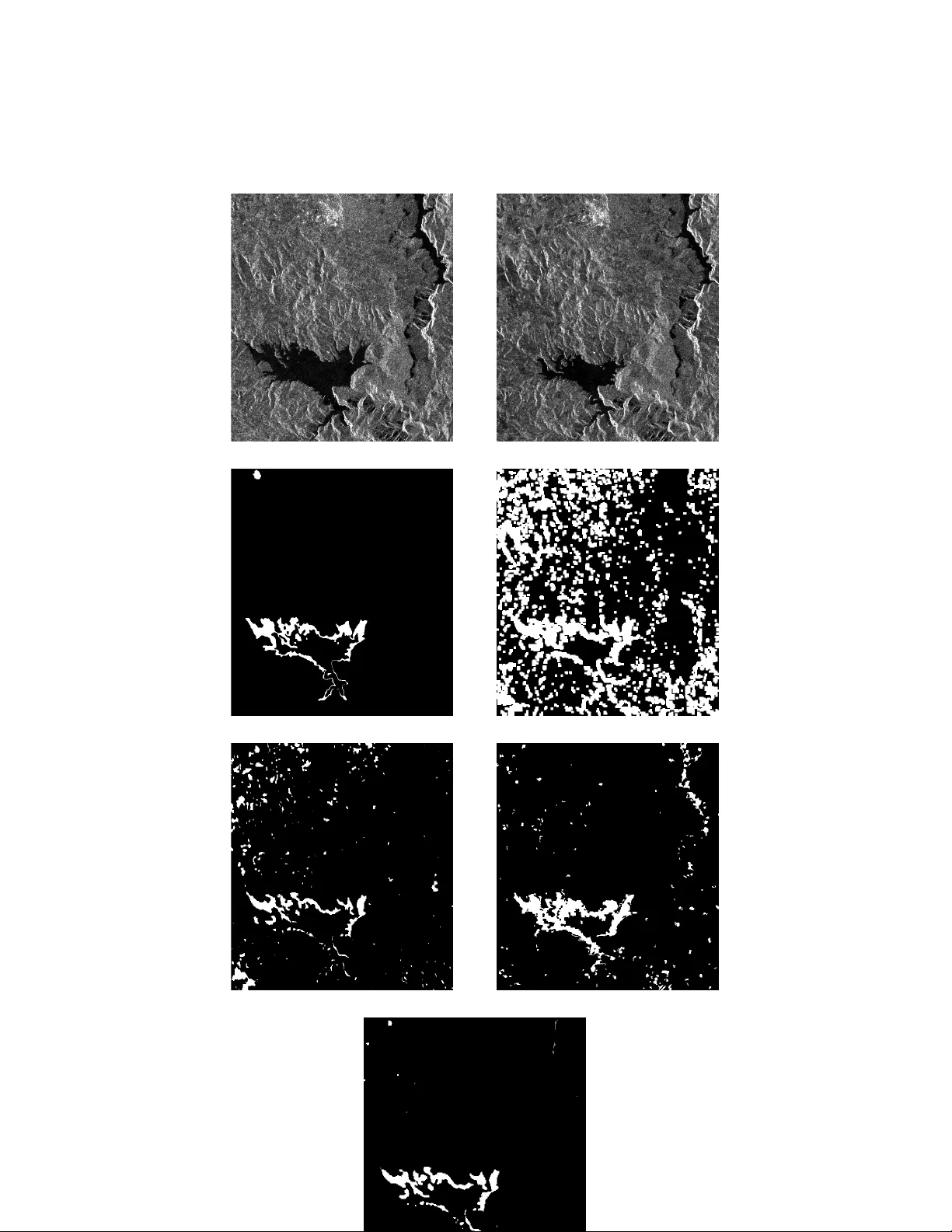

Coupled dictionary learning for unsupervised change detection between multi-sensor remote sensing images

Archetypal scenarios for change detection generally consider two images acquired through sensors of the same modality. However, in some specific cases, such as emergency situations, the only images available may be those acquired through sensors of di…

Authors: Vinicius Ferraris, Nicolas Dobigeon, Yanna Cavalcanti