A Robust Billboard-based Free-viewpoint Video Synthesizing Algorithm for Sports Scenes

We present a billboard-based free-viewpoint video synthesizing algorithm for sports scenes that can robustly reconstruct and render a high-fidelity billboard model for each object, including an occluded one, in each camera. Its contributions are (1) …

Authors: Jun Chen, Ryosuke Watanabe, Keisuke Nonaka

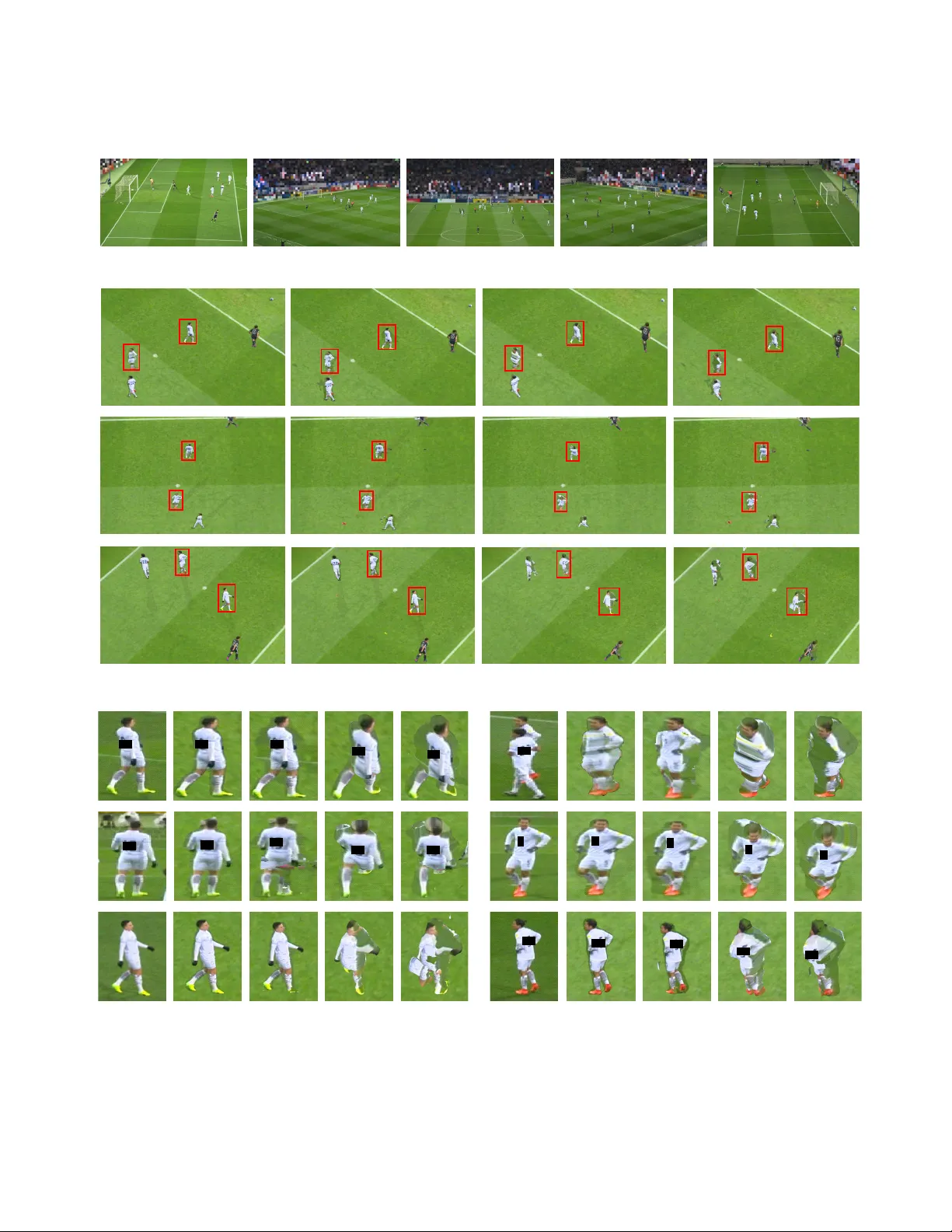

JOURNAL OF LATEX CLASS FILES, VOL. 14, NO. 8, AUGUST 2015

A Billboard-based Free-viewpoint Video Synthesizing Algorithm for Sports Scenes

Jun Chen, Ryosuke Watanabe, Keisuke Nonaka, Tomoaki Konno, Hiroshi Sankoh, and Sei Naito

Abstract: We present a billboard-based free-viewpoint video synthesizing algorithm for sports scenes that can robustly reconstruct and render a high-fidelity billboard model for each object, including an occluded one, in each camera. Its contributions are that it is (1) applicable to a challenging shooting situation where a high-precision 3D model cannot be built because only a small number of wide-baseline cameras are available; and (2) capable of reproducing the appearance of occlusions, which is one of the most significant issues for billboard-based approaches due to the ineffective detection of overlaps. To achieve these goals, the proposed method does not attempt to find a high-quality 3D model but utilizes a raw 3D model that is obtained directly from space carving. Although the model is insufficiently accurate for producing an impressive visual effect, precise object segmentation and occlusion detection can be performed by back-projection onto each camera plane. The billboard model of each object in each camera is rendered according to whether it is occluded or not, and its location in the virtual stadium is determined by considering the barycenter of its 3D model. We synthesized free-viewpoint videos of two soccer sequences recorded by five cameras, using the proposed and state-of-the-art methods, to demonstrate the effectiveness of the proposed method.

Index Terms: Free-viewpoint Video Synthesis, 3D Video, Multiple View Reconstruction, Image Processing.

I. INTRODUCTION

Free-viewpoint video synthesis is an active research field in computer vision, aimed at providing a beyond-3D experience, in which audiences can view virtual media from any preferred angle and position.
In a free-viewpoint video system, the virtual viewpoint can be interactively selected to see a part of the field from angles where a camera cannot be mounted. Moreover, the viewpoint can be moved around the stadium to allow audiences to have a walk-through or fly-through experience [1], [2], [3], [4].

The primary way to produce such a visual effect is to equip the observed scene with a synchronized camera network [5], [6], [7]. A free-viewpoint video is then created by using multi-view geometry techniques, such as 3D reconstruction or view-dependent representation. The 3D model representation, by means of a 3D mesh or point cloud [8], [9], [10], [11], provides full freedom of virtual view and continuous appearance changes for objects. Therefore, this representation is close to the original concept of a free-viewpoint video. An example of this technology is "Intel True View" for Super Bowl LIII [12], which enables immersive viewing experiences by transforming video data captured from 38 5K ultra-high-definition cameras into a 3D video. This technology achieves impressive results. However, a camera network with many well-calibrated cameras is required to obtain a precise model, which makes these methods difficult to deploy cost-effectively. Moreover, the heavy computational process of rendering leads to a non-real-time video display, especially for portable devices like smartphones.

J. Chen, R. Watanabe, K. Nonaka, T. Konno, H. Sankoh, and S. Naito are with the Ultra-realistic Communication Group, KDDI Research, Inc., Fujimino, Japan (corresponding author: J. Chen, Tel: +81-70-3825-9914; e-mail: ju-chen@kddi-research.jp).

The view-dependent representation techniques [13], [14], [15], [5] do not provide a consistent solution for all input cameras, but compute a separate reconstruction for each viewpoint. In general, these techniques do not require a large number of cameras.
As reported in [16], a novel view can be synthesized from only two cameras by using sparse point correspondences and a coarse-to-fine reconstruction method. The requirement for numerous physical devices is thus relaxed, but this introduces new challenges. The biggest challenge in these methods is the detection and rendering of "occlusion", which is the overlap of multiple objects in a camera view.

With the convergence of technologies from computer vision and deep learning [17], [18], an alternative way to create a free-viewpoint video is to convert a single camera signal into a proper 3D representation [19], [20]. This approach makes creation easily controllable, flexible, convenient, and cheap. For example, the method in [19] uses a CNN to estimate a player body depth map and reconstructs a soccer game from just a single YouTube video. Despite its generality, however, this setup faces several challenges. First, it cannot reproduce an appropriate appearance over the entire range of virtual views due to the limited information. For example, the surface texture of the opposite side, beyond the camera's sight, is unlikely to produce a satisfactory visual effect. Second, the detection and treatment of occlusions caused by overlaps of multiple objects in a single camera view remain to be solved. Also, errors in occlusion detection lead to inaccurate depth estimation.

In this paper, we focus on a multi-camera setup to provide an immersive free-viewpoint video for a sports scene, such as soccer or rugby, that involves a large field. Our goal is to reconcile the creation of a high-fidelity free-viewpoint video with the usual requirement for many cameras. Specifically, we propose an algorithm to robustly reconstruct an accurate billboard model for each object, including occluded ones, in each camera.
It can be applied to challenging shooting conditions where only a few wide-baseline cameras are present. Our key ideas are: (1) accurate depth estimation and object segmentation are achieved by projecting labelled 3D models, obtained from shape-from-silhouette without optimization, onto each camera plane; (2) the occlusion of each object is detected using the acquired 2D segmentation map, without any parameters and robustly against self-occlusion; (3) a reasonable 3D coordinate of each billboard model in a virtual stadium is calculated according to the barycenter of the raw 3D model to provide a stereovision effect.

We present the synthesized results of two soccer contents that were recorded by five cameras. Our results can be viewed on a PC, smartphone, smartglass, or head-mounted display, enabling free-viewpoint navigation to any virtual viewpoint. Comparative results are also provided to show the effectiveness of the proposed method in terms of the naturalness of the surface appearance in the synthesized billboard models.

II. RELATED WORKS

A. Free-viewpoint Video Creation from Multiple Views

1) 3D Model Representation: The visual hull [21], [22], [23] is a 3D reconstruction technique that approximates the shape of observed objects from a set of calibrated silhouettes. It usually discretizes a pre-defined 3D volume into voxels and tests whether each voxel is occupied by determining whether it falls inside or outside the silhouettes. Coupled with the marching cubes algorithm [24], [25], the discrete voxel representation can be converted into a triangle mesh form. Some approaches focus on the direct calculation of a mesh representation by analyzing the geometric relation between silhouettes and a visual hull surface based on the assumption of local smoothness or point-plane duality [26], [27].
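The voxel test described above can be illustrated with a minimal sketch. It is not the implementation used in the cited works: the three axis-aligned orthographic "cameras", the 4×4×4 grid, and the names `carve` and `projectors` are assumptions chosen only to keep the example self-contained, whereas real systems project voxels with calibrated perspective cameras.

```python
from itertools import product

def carve(grid_size, silhouettes, projectors):
    """Keep the voxels whose projection falls inside every silhouette."""
    kept = set()
    for voxel in product(range(grid_size), repeat=3):
        if all(proj(voxel) in sil for proj, sil in zip(projectors, silhouettes)):
            kept.add(voxel)
    return kept

# Toy orthographic projections (assumption): each one drops a coordinate axis.
projectors = [
    lambda v: (v[0], v[1]),  # camera looking along z
    lambda v: (v[1], v[2]),  # camera looking along x
    lambda v: (v[0], v[2]),  # camera looking along y
]

# Every view sees the same 2x2 square silhouette.
square = {(a, b) for a in (1, 2) for b in (1, 2)}
silhouettes = [square, square, square]

hull_three_views = carve(4, silhouettes, projectors)
hull_one_view = carve(4, silhouettes[:1], projectors[:1])
print(len(hull_three_views), len(hull_one_view))
```

With a single view the carved volume is a full column through the grid, while three views shrink it to the 2×2×2 block, which is the usual reason a visual hull benefits from more viewpoints.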
Visual hull approaches suffer from two main limitations. First, many calibrated cameras need to be placed in a 360-degree circle to obtain a relatively precise model. Second, the visual hull gives the maximal volume consistent with the objects' silhouettes, so it fails to reconstruct concavities. More generally, visual hull approaches serve as initialization for more elaborate 3D reconstruction. The photo-hull [28], [29], [30] approximates the maximum boundaries of objects using the photo-consistency of a set of calibrated images. It eliminates the process of silhouette extraction but introduces more restrictions, such as highly precise camera calibration, sufficient texture, and diffuse surface reflectance. As noted in [31], [32], [33], [34], [35], advanced approaches combine photo-consistency, silhouette-consistency, sparse feature correspondence, and more, to solve the problem of high-quality reconstruction. However, tuning the parameters that balance these constraints takes time.

2) View-dependent Representation: View-dependent representations can be classified into view interpolation and billboard-based methods according to their procedures. View interpolation [14], [36], [37] utilizes the projective geometry between neighboring cameras to synthesize a view without explicit reconstruction of a 3D model. It has the advantage of avoiding the processes of camera calibration and 3D model estimation. However, the quality of a synthesized view is restricted by the accuracy of the correspondences among cameras, which means that the optimal baseline is constrained to a relatively narrow range. An interpolation method [38] that renders a scene using both pixel correspondence and a depth map was reported to improve the visual effect. Nevertheless, it is still limited to a narrow baseline. Billboard-based methods [13], [39], [40], [41] construct a single planar billboard for each object in each camera.
The billboards rotate around individual points of the virtual stadium as the viewpoint moves, providing walk-through and fly-through experiences. These methods cannot reproduce continuous changes in the appearance of an object, but the representation can easily be reconstructed. Our previous work [5] overcomes the problem of occlusion by utilizing conservative 3D models to segment objects. Its underlying assumption is that the back-projection area of a conservative 3D model in a camera is always larger than the input silhouette. It outperforms conventional methods in terms of robustness to camera setup and naturalness of texture. However, we find that the reconstruction of rough 3D models increases noise and degrades the final visual effect.

B. Free-viewpoint Video Creation from a Single View

Creating a free-viewpoint video from a single camera (generally a moving camera) is a delicate task, which involves automatic camera calibration, semantic segmentation, and monocular depth estimation. The calibration methods [42], [43], [44] are generally composed of three processes: field line extraction, cross point calculation, and field model matching. Assuming small movement between consecutive frames, [45] calibrates the first frame using conventional methods and propagates the parameters to the current frame from previous frames by estimating the homography matrix. Semantic segmentation [46], [47], [17] is a pixel-level dense prediction task that labels each pixel of an image with the class of what is being represented. In free-viewpoint video creation, it determines which objects are present and where they are in an image, providing the information needed for further processing. Estimating depth is a crucial step in scene reconstruction.
Unlike multi-view estimation, which can use the correspondences among cameras, monocular depth estimation [48], [49], [50] estimates depth from a single RGB image. Many recent works [51], [52], [53] follow an end-to-end learning paradigm consisting of a convolutional network for 2D/3D body joint localization and a subsequent optimization step to regress to a 3D pose. The constraint on these methods is the requirement of images with 2D/3D pose ground truth for training. The study in [19] describes the first method that can transform a monocular video of a soccer game into a free-viewpoint video by combining the techniques mentioned above. It constructs a dataset of depth-map/image pairs from the FIFA video game for the restricted soccer scenario to improve the accuracy of depth estimation. The approach reported in [15] can also create a free-viewpoint video from a single video. The major deficiency of creation from a single view is that it cannot reproduce any surface appearance that the camera does not observe.

III. ALGORITHM FOR FREE-VIEWPOINT VIDEO CREATION

An overview of our proposed solution is shown in Fig. 1. It includes six steps: data capturing, silhouette segmentation, 3D reconstruction, depth estimation and 2D segmentation, billboard model creation, and free-viewpoint video rendering. Processes (b)-(e) run offline on the server side, while the rendering is performed in real time on the client side according to the user's operation. The input data are captured using a synchronized camera network, in which each camera view is fixed during recording. Each camera is calibrated by the method reported in [43] to estimate the extrinsic parameters, intrinsic parameters, and lens distortion.

Fig. 1: Workflow of the proposed method.

A. Silhouette Segmentation

For a sports scene, it is reasonable to assume that the objects, including players and ball, are moving. Therefore, objects can be extracted by a background subtraction method [54] that includes three processes: global extraction, classification, and local refinement. In the first process, a background image is obtained by taking the average of hundreds of consecutive video frames. The difference in pixels between each frame and the background image is then calculated. The pixel positions whose differences are less than a certain threshold are regarded as background, with the remaining pixels judged to be foreground. In the second process, we classify the shadow area into independent shadow and dependent shadow according to the shadow's luminance, shape, and size. The independent shadows are removed here. Finally, a refinement is conducted to remove the dependent shadow based on the assumption that the chrominance difference between objects and background is recognizable. The threshold is adjusted dynamically according to the chrominance in each local area.

B. Raw 3D Model Reconstruction

To estimate the 3D shape of observed objects from a wide-baseline camera network, we use a shape-from-silhouette algorithm. It discretizes a pre-defined 3D volume into voxels, projects each voxel onto all the camera image planes, and removes the voxels that fall outside the silhouettes. The set of remaining voxels, called a volumetric visual hull [21], gives a shape approximation of the observed scene. After a volumetric visual hull is obtained, the individual objects are segmented with a connected components labeling algorithm [55], and an identifier label is assigned to each object. We extract the 0th- and 1st-order moments $M_{\alpha,\beta,\gamma}(\mathcal{V}_t)$, $(\alpha,\beta,\gamma) \in \{(0,0,0), (1,0,0), (0,1,0), (0,0,1)\}$, of each object with Eq. 1 to determine their sizes and locations with Eq. 2.
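A minimal sketch of the labeling step and of the moments that Eqs. 1 and 2 formalize. The paper cites [55] for the labeling algorithm without further detail, so the 6-connected breadth-first flood fill, the integer voxel indices, and the function names below are assumptions made for illustration only.

```python
from collections import deque

# 6-connectivity (assumption): voxels are neighbours when they share a face.
NEIGHBOURS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]

def label_components(voxels):
    """Group occupied voxels into connected components with a BFS flood fill."""
    remaining = set(voxels)
    components = []
    while remaining:
        seed = remaining.pop()
        queue = deque([seed])
        component = [seed]
        while queue:
            x, y, z = queue.popleft()
            for dx, dy, dz in NEIGHBOURS:
                neighbour = (x + dx, y + dy, z + dz)
                if neighbour in remaining:
                    remaining.remove(neighbour)
                    queue.append(neighbour)
                    component.append(neighbour)
        components.append(component)
    return components

def moment(component, a, b, c):
    """Moment of order (a, b, c): sum of x^a * y^b * z^c over the object's voxels."""
    return sum(x ** a * y ** b * z ** c for x, y, z in component)

def size_and_barycenter(component):
    """Voxel count (0th-order moment) and barycenter (1st-order moments / count)."""
    n = moment(component, 0, 0, 0)
    return n, (moment(component, 1, 0, 0) / n,
               moment(component, 0, 1, 0) / n,
               moment(component, 0, 0, 1) / n)

occupied = {(0, 0, 0), (1, 0, 0), (5, 5, 5)}
objects = sorted(label_components(occupied), key=len)
print([size_and_barycenter(obj) for obj in objects])
```

Each returned component plays the role of one labelled object; its voxel count and barycenter are the quantities that the later filtering and billboard placement steps rely on.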
$$M_{\alpha,\beta,\gamma}(\mathcal{V}_t) = \sum_{(x,y,z) \in \mathcal{V}_t} x^{\alpha} y^{\beta} z^{\gamma}. \tag{1}$$

$$\{N(\mathcal{V}_t), X(\mathcal{V}_t), Y(\mathcal{V}_t), Z(\mathcal{V}_t)\} = \left\{ M_{0,0,0}(\mathcal{V}_t), \frac{M_{1,0,0}(\mathcal{V}_t)}{M_{0,0,0}(\mathcal{V}_t)}, \frac{M_{0,1,0}(\mathcal{V}_t)}{M_{0,0,0}(\mathcal{V}_t)}, \frac{M_{0,0,1}(\mathcal{V}_t)}{M_{0,0,0}(\mathcal{V}_t)} \right\}. \tag{2}$$

Here, $\mathcal{V}_t$ denotes the $t$-th object, and $\{x, y, z\}$ denotes the 3D coordinate of an occupied voxel. $N(\mathcal{V}_t)$ and $\{X(\mathcal{V}_t), Y(\mathcal{V}_t), Z(\mathcal{V}_t)\}$ indicate the number of voxels in $\mathcal{V}_t$ and its barycenter, respectively. In the next step, we build mesh models by coupling the volumetric visual hull with a marching cubes algorithm [24]. The visual hull may contain noise that comes from imperfect silhouettes. We remove such noisy regions by taking into account the number of voxels of each object, as expressed in the following equation:

$$\mathcal{V}_t = \begin{cases} \mathrm{ON}, & \text{if } T_{\min} < N(\mathcal{V}_t) < T_{\max} \\ \mathrm{OFF}, & \text{otherwise}. \end{cases} \tag{3}$$

An object is removed (OFF) if its number of voxels is less than a minimum threshold $T_{\min}$ or exceeds a maximum threshold $T_{\max}$; otherwise it is kept (ON). Our solution focuses on outdoor sports scenes, such as soccer or rugby matches, so it is practical to assign reasonable values to $T_{\min}$ and $T_{\max}$ by considering the actual sizes of the ball and the athletes. The bottom image in Fig. 1 (c) presents an example of segmentation in which a unique color is assigned to each object, while the upright rectangles illustrate the minimum bounding box of each object.

Fig. 2: Depth estimation and 2D segmentation. (a) depth map; (b) segmentation map.

C. Depth Estimation and 2D Segmentation

To estimate the depth map in a camera view, we project the mesh models onto the camera plane to associate 2D image pixels with 3D triangles on the mesh surface. The projection of a 3D triangle is a 2D triangle, so we define the pixels associated with a 3D triangle as those bounded by its 2D projection.
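The pixel-to-triangle association can be sketched with a standard edge-function test on the projected 2D vertices. This is an illustrative assumption, not the paper's rasterizer: the perspective projection is presumed to have been applied already, pixels are sampled at their centres, and the names `edge` and `pixels_in_triangle` are hypothetical.

```python
import math

def edge(a, b, p):
    """Signed area test: positive when p lies to the left of the directed edge a->b."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

def pixels_in_triangle(v0, v1, v2, width, height):
    """Return the pixels whose centres lie inside or on the projected 2D triangle."""
    xs = (v0[0], v1[0], v2[0])
    ys = (v0[1], v1[1], v2[1])
    # Clip the triangle's bounding box to the image.
    x_min = max(math.floor(min(xs)), 0)
    x_max = min(math.ceil(max(xs)), width - 1)
    y_min = max(math.floor(min(ys)), 0)
    y_max = min(math.ceil(max(ys)), height - 1)
    covered = []
    for y in range(y_min, y_max + 1):
        for x in range(x_min, x_max + 1):
            p = (x + 0.5, y + 0.5)  # pixel centre
            w0, w1, w2 = edge(v1, v2, p), edge(v2, v0, p), edge(v0, v1, p)
            # Accept either vertex winding: all three signs must agree.
            if (w0 >= 0 and w1 >= 0 and w2 >= 0) or (w0 <= 0 and w1 <= 0 and w2 <= 0):
                covered.append((x, y))
    return covered

covered = pixels_in_triangle((0, 0), (4, 0), (0, 4), width=4, height=4)
print(len(covered))
```

Accepting both windings keeps the sketch independent of how the mesh orders its vertices; a production rasterizer would normally also apply a tie-breaking rule for pixels on shared edges.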
The depth $d_i$ of the $i$-th pixel is assigned as the depth of the nearest corresponding triangle, as expressed in the following equation:

$$d_i = \min \{ d_i^{1}, d_i^{2}, \cdots, d_i^{n} \}. \tag{4}$$

Here, $n$ indicates the number of 3D triangles that correspond with pixel $i$, and $d_i^{j}$ ($j = 1, 2, \cdots, n$) denotes the distance from the $j$-th triangle to the camera center. Fig. 2 (a) presents a depth map, in which light gray identifies objects nearer to the camera center, while objects that are farther away are dark. While estimating the depth map, we also record the label of the nearest corresponding triangle to indicate which object the pixel is associated with. This can be regarded as a process of segmentation in which each object is separated from the others. Fig. 2 (b) demonstrates the result of segmentation, in which pixels with the same color intensity correspond to the same object.

D. Billboard Model Reconstruction

In a billboard free-viewpoint video, each object is represented as a planar image with texture, while the 3D visual effect is produced by placing the planar images in the proper positions in a virtual stadium. In our study, we create a billboard model in the three steps described below.

Fig. 3: Individual object extraction. (a) Source image: a cropped image where object 1 overlaps with object 2, and object 3 is isolated. (b), (c), and (d) are the extracted individual objects 1, 2, and 3, respectively, where gray indicates an occluded region. We manually blocked the uniform numbers with black rectangles to avoid copyright issues; the same applies to the following figures.

Fig. 4: Texture extraction. (a) object 1; (b) object 2; (c) object 3.

1) Individual Object Extraction: We successively project the segmented mesh models onto a specific camera plane to extract an individual 2D region for each object and determine its state, visible or not.
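A minimal sketch of Eq. 4 and of the label comparison that this subsection uses to decide visibility. The input format (one `(x, y, depth, label)` tuple per triangle covering a pixel) and the function names are assumptions for illustration; the paper does not specify its data structures.

```python
import math

def depth_and_label_maps(fragments, width, height):
    """Eq. 4 as a z-buffer: keep, per pixel, the nearest depth and its object label.

    fragments: iterable of (x, y, depth, label), one per triangle covering pixel (x, y).
    """
    depth = [[math.inf] * width for _ in range(height)]
    label = [[None] * width for _ in range(height)]
    for x, y, d, obj in fragments:
        if d < depth[y][x]:
            depth[y][x] = d
            label[y][x] = obj
    return depth, label

def visibility_mask(object_pixels, obj, label_map):
    """A pixel of an object's projected region is visible when the 2D
    segmentation map stores that same object's label there."""
    return {(x, y): label_map[y][x] == obj for (x, y) in object_pixels}

# Object 1 (depth 10) covers pixels 0-1; object 2 (depth 20) covers pixels 1-2.
# Object 1 also contributes a back-face fragment at pixel 0 (depth 12).
fragments = [(0, 0, 10.0, 1), (0, 0, 12.0, 1), (1, 0, 10.0, 1),
             (1, 0, 20.0, 2), (2, 0, 20.0, 2)]
depth_map, label_map = depth_and_label_maps(fragments, width=3, height=1)
print(depth_map, label_map)
print(visibility_mask([(1, 0), (2, 0)], 2, label_map))
```

Because the front and back surfaces of one object carry the same label, the comparison needs no depth threshold, which is consistent with the claim that the test is parameter-free and robust against self-occlusion.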
The regions that map to a single object are certainly visible to the camera, while those associated with two or more objects are ambiguous. To judge the visibility of an ambiguous region, we compare the label of the projecting polygons with the label stored in the 2D segmentation map. The region is visible when the two labels are the same; otherwise, it is blocked by other objects. Fig. 3 shows a demonstration in which the visible and invisible regions are expressed with white and gray, respectively. Compared with the visibility detection method using a ray-casting algorithm [5], which introduces a threshold that is difficult to tune, our proposed method runs without parameters and is robust against self-occlusion.

2) Texture Extraction: For the visible pixels in an individual object region, surface textures can be reproduced directly by extracting the color of the same pixels from the input image. The invisible pixels are rendered from the neighboring cameras by using the depth map and the corresponding polygons. Fig. 4 presents the rendering result of the objects in Fig. 3. In the case of objects 1 and 3, our method produces a good appearance because their textures come from the facing camera without a blending process. For object 2, which is partially occluded, the method introduces small but acceptable visual artifacts.

Fig. 5: Location determination. (a) mesh model; (b) billboard model.

Fig. 6: The selection of a reference camera.

3) Location Determination: To accurately locate billboard models on the ground, we calculate the 2D barycenter of each object region and associate it with the 3D barycenter of its mesh model, as shown in Fig. 5. The red marks present the 2D and 3D barycenters, respectively, while the red rectangle indicates the 3D area occupied by the mesh model.

E. Free-viewpoint Video Rendering

The free-viewpoint video is rendered on the client side, where the 3D coordinate and direction of a virtual viewpoint can be obtained from the user's operation. We identify the reference camera for rendering as the nearest camera by calculating the Euclidean distance between the virtual viewpoint and each recording camera. Fig. 6 shows an example of the selection of a reference camera. The first camera is nearer to virtual view 1 than the second camera, so the billboards of camera 1 are used to render its virtual image, and vice versa. In the rendering process, the billboards of the reference camera are placed in a virtual stadium and rotated according to the user-selected viewpoint.

IV. EXPERIMENTAL RESULTS

To demonstrate the performance of our method, we compare it to the following methods:

- RB [5] as the more recent representative of the billboard-based free-viewpoint video production approach, which extracts object regions in each camera by reconstructing a rough 3D model.
- FFVV [7] as a more recent and fast representative of a full-model free-viewpoint video generation method, which can produce a free-viewpoint video in real time.
- CVH [9] as a conventional full-model production method.

Fig. 7: Camera configuration. (a) the first content; (b) the second content.

We applied the proposed and comparison methods to two types of soccer contents to validate their usability under different shooting conditions. The cameras of the first content cover half of the pitch, while those of the second content target the penalty area. Both contents were captured with five synchronized cameras. The resolution of each camera was 3840 × 2160, and the frame rate was 30 fps. Fig. 7 shows the camera configurations for the two contents, in which black and red symbols show the positions of recording cameras and virtual cameras, respectively.
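For a configuration like the ones in Fig. 7, the reference-camera rule of Section III-E can be sketched as follows. The camera names and coordinates are hypothetical values invented for the example, and `select_reference_camera` is an illustrative helper rather than the authors' code.

```python
import math

def select_reference_camera(viewpoint, cameras):
    """Return the name of the recording camera with the smallest
    Euclidean distance to the virtual viewpoint."""
    return min(cameras, key=lambda name: math.dist(viewpoint, cameras[name]))

# Hypothetical camera centres in stadium coordinates (metres).
cameras = {
    "cam01": (0.0, 10.0, -30.0),
    "cam02": (40.0, 10.0, 0.0),
}

print(select_reference_camera((5.0, 2.0, -20.0), cameras))
print(select_reference_camera((30.0, 2.0, 0.0), cameras))
```

Since the billboards shown to the user switch whenever the nearest camera changes, evenly spaced recording cameras keep those switches less abrupt, a point the discussion section returns to.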
For the first content, we define the 3D space for reconstruction as 68 meters wide, 4 meters high, and 55.5 meters deep. The camera threshold of RB and CVH for the construction of a rough 3D shape was 4, which remains the same in the production of the second content. The voxel size for shape approximation in all the methods was 1 cm × 1 cm × 1 cm. The thresholds $T_{\min}$ and $T_{\max}$ for noise filtering were $3 \times 10^4$ and $3 \times 10^5$, respectively. Fig. 8 (a) shows the cropped input image of each recording camera to highlight the region covered by the virtual cameras. Fig. 8 (b) presents comparisons of three virtual viewpoint images produced by the proposed and reference methods. Fig. 8 (c) and (d) present the surface texture of two selected objects.

First, let us focus on the reproduced images from the first virtual viewpoint, shown in the first row of Fig. 8 (b), (c), and (d). The viewpoint was set in the same direction as "cam01", so the methods employing view-dependent rendering techniques (the proposed method, RB, and FFVV) can produce a high-quality texture. In contrast, CVH, which utilizes global rendering techniques, fails to give a proper appearance due to the inaccurate 3D shape approximation. Next, let us look at the images constructed from the second virtual viewpoint, shown in the second row of Fig. 8 (b), (c), and (d). The virtual camera was set as a bird's-eye view from above, whose nearest reference camera is "cam02". It can be seen that the proposed method successfully recovers the color appearance of an occluded object, whereas the other techniques introduce severe artifacts or leave some important parts unrendered. Finally, let us observe the images (last row of Fig. 8 (b), (c), and (d)) rendered by a virtual camera that was placed on the opposite side of "cam01". Since the input images did not provide sufficient information for interpolation, the full-model methods, FFVV and CVH, were incapable of offering a suitable chromatic appearance. However, the billboard methods have the potential to handle situations like this because they represent objects using planar billboard models that are obtained from the nearest camera.

Fig. 8: Free-viewpoint video of the first content. (a) Cropped input images (cameras 01 to 05). We manually blurred the commercial billboards to avoid copyright issues; the same applies to the other experiments. (b) Synthesized free-viewpoint video viewed from three virtual viewpoints (proposed method, RB, FFVV, CVH). (c) Close-up view of a selected player (input, RB, FFVV, CVH, proposed method). (d) Close-up view of another selected player (input, RB, FFVV, CVH, proposed method).

Fig. 9: Projections of billboard models of the first content on capturing viewpoints (cameras 01 to 05; proposed method and RB). (a) player 1; (b) player 2. The red and blue masking respectively indicate the visible and occluded regions.

Fig. 10: Projections of the 3D model of the first content on the XY-plane. (a) proposed method; (b) RB. The red and yellow circles respectively highlight the noise and segmentation faults.

To illustrate the differences between our method and RB, we projected the billboard models back to the capturing viewpoints. The region mapped with a billboard model is marked with orange or blue. Orange means that the region is visible, while blue indicates an overlapped area. Fig. 9 presents examples of projections of two objects.
The comparison shows that the billboard models of our method are reliable and accurate, while RB tends to expand the individual object region and misjudge occlusion. We also projected the 3D models used in our method and RB onto the XY-plane to reveal the difference between the 3D models, as shown in Fig. 10. It can be seen that the model reconstructed by RB contains considerable noise, highlighted by red circles in the figure. Moreover, RB mistakenly recognizes two separate objects as one object, as demonstrated by the yellow circle in Fig. 10.

For the second content, the 3D space for production and the voxel size for shape approximation in all the methods were defined as 55 m × 4 m × 23 m and 0.5 cm × 0.5 cm × 0.5 cm, respectively. The thresholds $T_{\min}$ and $T_{\max}$ for noise filtering were $2.4 \times 10^5$ and $2.4 \times 10^7$, respectively. Fig. 11 demonstrates the input images, synthesized images from three virtual viewpoints, and the highlighted surface texture of two selected objects. All the virtual viewpoints were set as bird's-eye views from above to evaluate the texture quality when the virtual facing directions are far from the recording directions. From the results in the figure, it can be observed that the billboard-based methods, ours and RB, outperform the full-model representation approaches in all the tests. The reason is that the shape approximated from five wide-baseline cameras is quite inaccurate. The horizontal slice of a reconstructed model tends to be a pentagon rather than a circle or ellipse with a smooth edge. Thus the rendering quality is far from satisfactory. Next, let us focus on the difference between the proposed method and RB. Besides the misalignment in rendering an occluded area, it can be seen that there are several artifacts or noise in the result of RB (the second row of Fig. 11 (c) and the third row of Fig. 11 (d)).
The relaxed shape-from-silhouette approach is likely to introduce noise of irregular shape and size, as shown in Fig. 13. Consequently, parts of the visible region in some cameras are judged to be occluded, as demonstrated in Fig. 12. Even though RB includes some noise-filtering steps, removing all of the noise is challenging, especially when its shape resembles a ball.

Fig. 11: Free-viewpoint video of the second content. (a) Cropped input images (cameras 01 to 05). (b) Synthesized free-viewpoint video viewed from three virtual viewpoints (proposed method, RB, FFVV, CVH). (c) Close-up view of a selected player (input, RB, FFVV, CVH, proposed method). (d) Close-up view of another selected player (input, RB, FFVV, CVH, proposed method).

Fig. 12: Projections of billboard models of the second content on capturing viewpoints (proposed method and RB, cameras 01 to 05). (a) Player 1. (b) Player 2.

Fig. 13: Projections of 3D model of the second content on the XY-plane. (a) Proposed method. (b) RB.

V. DISCUSSION

In this section, we discuss some factors that may affect the visual quality of a reconstructed free-viewpoint video.

First, camera calibration plays a vital role in free-viewpoint video creation. Most of the reported approaches assume that a sports field, such as a soccer field or rugby field, matches its design drawing exactly. However, this assumption fails in most cases. One reason is human error when marking an actual sports field. Moreover, sports associations usually specify only a permitted range rather than a single precise value. For example, soccer field dimensions are within the range found optimal by FIFA: 110–120 yards (100–110 m) long by 70–80 yards (64–73 m) wide. A calibration that relies on nominal field dimensions is therefore not accurate enough, which leads to errors in 3D shape reconstruction and texture rendering.

Second, a camera network should be laid out as carefully as possible to create a high-quality free-viewpoint video. The primary requirement is that the cameras be distributed uniformly around the stadium. Such a setup is more likely to capture well-rounded texture information, which enhances the quality of the reproduced surface appearance. In addition, it provides continuous changes when the viewpoint is switched, because a virtual view is represented by the billboard model of its nearest recording camera.

A third factor is the number of cameras. There is no doubt that the more cameras are installed, the better. However, when the number of cameras is effectively unlimited, we recommend a full-model representation instead. The proposed method should receive top priority when only a small number of cameras is available. In our experience, five cameras are sufficient to create a high-fidelity free-viewpoint video.

Finally, our method is appropriate for scenes involving many players, such as soccer, rugby, and basketball, but it is not suitable for simple scenarios with few players, such as judo, taekwondo, and wrestling. The proposed method creates a stereoscopic visual effect by placing 2D billboard models at different positions in a virtual stadium. Scenarios with fewer players produce fewer billboard models in each camera. In particular, when the players grapple with each other, the proposed method constructs only one billboard model in each camera. When all the players are represented by a single billboard model, the spatial relationships among them are lost, which weakens the 3D visual effect.

VI. CONCLUSION

In this paper, we presented a novel billboard-based synthesis approach suitable for free-viewpoint video production for sports scenes. It converts 2D images captured by a synchronized camera network into a high-quality 3D video. Our approach is highly flexible because only a few cameras are required. It can therefore be applied to challenging shooting conditions in which the cameras are sparsely placed around a wide area. We approximate the 3D models of objects using a conventional shape-from-silhouette technique and then project them onto each image plane to extract individual object regions and detect occlusions. Each object region is rendered by a view-dependent approach in which the textures of non-occluded portions are taken from the nearest camera, while several cameras are used to reproduce the appearance of occluded portions. Experimental results on soccer content show that the surface texture of each object, including occluded ones, can be reproduced more naturally than by the other state-of-the-art methods. In the future, we will parallelize our method and combine it with efficient data compression and streaming methods to deliver real-time free-viewpoint video.
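The view-dependent rule mentioned above, in which a virtual view takes the billboard of its nearest recording camera, can be illustrated with a short sketch. This is not the authors' implementation. It assumes that "nearest" means the smallest angle between the viewing directions toward the object's barycenter; the function name `nearest_camera` and the camera layout are hypothetical and chosen only for illustration.

```python
import math


def nearest_camera(virtual_pos, camera_positions, barycenter):
    """Return the index of the recording camera whose viewing direction
    toward the object barycenter is angularly closest to the direction
    from the virtual viewpoint (assumed meaning of "nearest")."""

    def direction(src):
        # Unit vector pointing from a viewpoint to the barycenter.
        d = [b - s for s, b in zip(src, barycenter)]
        norm = math.sqrt(sum(c * c for c in d))
        if norm == 0.0:
            raise ValueError("viewpoint coincides with the barycenter")
        return [c / norm for c in d]

    v = direction(virtual_pos)
    best_index, best_cos = -1, -2.0
    for i, cam in enumerate(camera_positions):
        # A larger cosine means a smaller angle between the two directions.
        cos_angle = sum(a * b for a, b in zip(v, direction(cam)))
        if cos_angle > best_cos:
            best_index, best_cos = i, cos_angle
    return best_index


# Hypothetical layout: five cameras around a pitch, coordinates in metres.
cameras = [
    (-60.0, 0.0, 15.0),
    (-30.0, -50.0, 15.0),
    (30.0, -50.0, 15.0),
    (60.0, 0.0, 15.0),
    (0.0, 50.0, 15.0),
]
player = (0.0, 0.0, 1.0)       # barycenter of one object's 3D model
virtual = (40.0, -40.0, 10.0)  # virtual viewpoint chosen by the viewer

print(nearest_camera(virtual, cameras, player))
```

With a uniformly distributed camera network, the angle between the virtual view and the selected camera stays small for every viewpoint, which is consistent with the discussion's argument that uniform placement gives smoother viewpoint transitions.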
[5] Hiroshi Sank oh, Sei Naito, Keisuke Nonaka, Houari Sabirin, and Jun Chen, “Robust billboard-based, free-viewpoint video synthesis algorithm to ov ercome occlusions under challenging outdoor sport scenes, ” in Pr oceedings of the 26th ACM International Conference on Multimedia . 2018, MM ’18, pp. 1724–1732, ACM. [6] Keisuke Nonaka, Ryosuke W atanabe, Jun Chen, Houari Sabirin, and Sei Naito, “Fast plane-based free-viewpoint synthesis for real-time liv e streaming, ” in 2018 IEEE V isual Communications and Image Processing (VCIP) . IEEE, 2018, pp. 1–4. [7] Jun Chen, Ryosuke W atanabe, Keisuke Nonaka, T omoaki Konno, Hi- roshi Sankoh, and Sei Naito, “ A fast free-viewpoint video synthesis algorithm for sports scenes, ” in 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems . IEEE, 2019, (accepted). [8] Christian Theobalt, Gernot Ziegler , Marcus Magnor , and Hans-Peter Seidel, “Model-based free-viewpoint video: Acquisition, rendering, and encoding, ” in Pr oceedings of Picture Coding Symposium, San F rancisco, USA , 2004, pp. 1–6. [9] J. Kilner, J. Starck, A. Hilton, and O. Grau, “Dual-mode deformable models for free-viewpoint video of sports e vents, ” in Sixth International Confer ence on 3-D Digital Imaging and Modeling (3DIM 2007) , 2007, pp. 177–184. [10] Y ebin Liu, Qionghai Dai, and W enli Xu, “ A point-cloud-based multivie w stereo algorithm for free-viewpoint video, ” IEEE transactions on visualization and computer gr aphics , v ol. 16, no. 3, pp. 407–418, 2010. JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2015 10 [11] Alvaro Collet, Ming Chuang, Pat Sweene y , Don Gillett, Dennis Evsee v , David Calabrese, Hugues Hoppe, Adam Kirk, and Stev e Sulliv an, “High-quality streamable free-viewpoint video, ” ACM T ransactions on Graphics (T oG) , vol. 34, no. 4, pp. 69, 2015. [12] “Intel T rue V iew, ” https://www .intel.com/content/www/us/en/sports/ technology/true- view .html?wapkw=true+vie w/. 
[13] Marcel Germann, Alexander Hornung, Richard Keiser , Remo Ziegler , Stephan W ¨ urmlin, and Markus Gross, “ Articulated billboards for video- based rendering, ” in Computer Graphics F orum . W iley Online Library , 2010, vol. 29, pp. 585–594. [14] Naho Inamoto and Hideo Saito, “V irtual viewpoint replay for a soccer match by view interpolation from multiple cameras, ” IEEE T ransactions on Multimedia , vol. 9, no. 6, pp. 1155–1166, 2007. [15] Houari Sabirin, Qiang Y ao, Keisuk e Nonaka, Hiroshi Sankoh, and Sei Naito, “T oward real-time deliv ery of immersi ve sports content, ” IEEE MultiMedia , vol. 25, no. 2, pp. 61–70, 2018. [16] Marcel Germann, Tiberiu Popa, Richard Keiser , Remo Ziegler , and Markus Gross, “Novel-view synthesis of outdoor sport e vents using an adapti ve view-dependent geometry , ” in Computer Graphics F orum . W iley Online Library , 2012, v ol. 31, pp. 325–333. [17] Kaiming He, Georgia Gkioxari, Piotr Doll ´ ar , and Ross Girshick, “Mask r-cnn, ” in Computer V ision (ICCV), 2017 IEEE International Confer ence on . IEEE, 2017, pp. 2980–2988. [18] Shih-En W ei, V arun Ramakrishna, T akeo Kanade, and Y aser Sheikh, “Con volutional pose machines, ” in Proceedings of the IEEE Confer ence on Computer V ision and P attern Recognition , 2016, pp. 4724–4732. [19] Konstantinos Rematas, Ira Kemelmacher-Shlizerman, Brian Curless, and Stev e Seitz, “Soccer on your tabletop, ” in Proceedings of the IEEE Confer ence on Computer V ision and P attern Recognition , 2018, pp. 4738–4747. [20] Armin Mustafa and Adrian Hilton, “Semantically coherent co- segmentation and reconstruction of dynamic scenes, ” in Pr oceedings of the IEEE Confer ence on Computer V ision and P attern Recognition , 2017, pp. 422–431. [21] Aldo Laurentini, “The visual hull concept for silhouette-based image understanding, ” IEEE T ransactions on pattern analysis and machine intelligence , vol. 16, no. 2, pp. 150–162, 1994. 
[22] German KM Cheung, T akeo Kanade, J-Y Bouguet, and Mark Holler, “ A real time system for robust 3d vox el reconstruction of human motions, ” in Computer V ision and P attern Recognition, 2000. Pr oceedings. IEEE Confer ence on . IEEE, 2000, vol. 2, pp. 714–720. [23] Alexander Ladikos, Selim Benhimane, and Nassir Nav ab, “Efficient visual hull computation for real-time 3d reconstruction using cuda, ” in Computer V ision and P attern Reco gnition W orkshops, 2008. CVPRW’08. IEEE Computer Society Confer ence on . IEEE, 2008, pp. 1–8. [24] William E Lorensen and Harve y E Cline, “Marching cubes: A high res- olution 3d surface construction algorithm, ” in A CM siggraph computer graphics . A CM, 1987, v ol. 21, pp. 163–169. [25] Timothy S Newman and Hong Y i, “ A survey of the marching cubes algorithm, ” Computers & Graphics , vol. 30, no. 5, pp. 854–879, 2006. [26] Chen Liang and K-YK W ong, “Complex 3d shape recov ery using a dual-space approach, ” in Computer V ision and P attern Recognition, 2005. CVPR 2005. IEEE Computer Society Confer ence on . IEEE, 2005, vol. 2, pp. 878–884. [27] Jean-S ´ ebastien Franco and Edmond Boyer , “Efficient polyhedral mod- eling from silhouettes, ” IEEE Tr ansactions on P attern Analysis and Machine Intelligence , vol. 31, no. 3, pp. 414–427, 2009. [28] Greg Slabaugh, Ron Schafer, and Mat Hans, “Image-based photo hulls, ” in Proceedings. F irst International Symposium on 3D Data Pr ocessing V isualization and T ransmission . IEEE, 2002, pp. 704–862. [29] Shufei Fan and Frank P Ferrie, “Photo hull regularized stereo, ” Imag e and V ision Computing , vol. 28, no. 4, pp. 724–730, 2010. [30] Gregory G Slabaugh, Ronald W Schafer , et al., “Image-based photo hulls, ” Dec. 13 2005, US Patent 6,975,756. [31] Y asutaka Furukawa and Jean Ponce, “Carved visual hulls for image- based modeling, ” International Journal of Computer V ision , vol. 81, no. 1, pp. 53–67, 2009. [32] V . Leroy, J. Franco, and E. 
Boyer, “Multi-view dynamic shape refinement using local temporal integration, ” in 2017 IEEE International Confer ence on Computer V ision (ICCV) , Oct 2017, pp. 3113–3122. [33] Jonathan Starck and Adrian Hilton, “Surface capture for performance- based animation, ” IEEE computer graphics and applications , vol. 27, no. 3, 2007. [34] Nadia Robertini, Dan Casas, Helge Rhodin, Hans-Peter Seidel, and Christian Theobalt, “Model-based outdoor performance capture, ” in 2016 F ourth International Conference on 3D V ision (3DV) . IEEE, 2016, pp. 166–175. [35] Matthew Loper , Naureen Mahmood, and Michael J Black, “Mosh: Motion and shape capture from sparse markers, ” A CM T ransactions on Graphics (TOG) , vol. 33, no. 6, pp. 220, 2014. [36] W enfeng Li, Jin Zhou, Baoxin Li, and M Ibrahim Sezan, “V irtual view specification and synthesis for free viewpoint television, ” IEEE T ransactions on Circuits and Systems for V ideo T echnology , vol. 19, no. 4, pp. 533–546, 2009. [37] C. V erleysen, T . Maugey, P . Frossard, and C. De Vleeschouwer, “Wide- baseline foreground object interpolation using silhouette shape prior, ” IEEE T ransactions on Image Processing , vol. 26, no. 11, pp. 5477– 5490, Nov 2017. [38] Christian Lipski, Felix Klose, and Marcus Magnor, “Correspondence and depth-image based rendering a hybrid approach for free-viewpoint video, ” IEEE Tr ansactions on Cir cuits and Systems for V ideo T echnol- ogy , vol. 24, no. 6, pp. 942–951, 2014. [39] T omoyuki T ezuka Mehrdad Panahpour T ehrani Keita T akahashi T oshi- aki Fujii Ryo Suenaga, Kazuyoshi Suzuki, “ A practical implementation of free viewpoint video system for soccer games, ” 2015. [40] K. Nonaka, Q. Y ao, H. Sabirin, J. Chen, H. Sankoh, and S. Naito, “Billboard deformation via 3d vox el by using optimization for free- viewpoint system, ” in 2017 25th Eur opean Signal Processing Confer - ence (EUSIPCO) , Aug 2017, pp. 1500–1504. 
[41] Keisuke Nonaka, Houari Sabirin, Jun Chen, Hiroshi Sankoh, and Sei Naito, “Optimal billboard deformation via 3d voxel for free-viewpoint system, ” IEICE TRANSA CTIONS on Information and Systems , vol. 101, no. 9, pp. 2381–2391, 2018. [42] Qiang Y ao, Akira Kubota, Kaoru Kawakita, Keisuke Nonaka, Hiroshi Sankoh, and Sei Naito, “Fast camera self-calibration for synthesizing free viewpoint soccer video, ” in 2017 IEEE International Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2017, pp. 1612–1616. [43] Qiang Y ao, Hiroshi Sankoh, Keisuk e Nonaka, and Sei Naito, “ Automatic camera self-calibration for immersi ve navigation of free viewpoint sports video, ” in 2016 IEEE 18th International W orkshop on Multimedia Signal Pr ocessing (MMSP) . IEEE, 2016, pp. 1–6. [44] Dirk Farin, Susanne Krabbe, W olfgang Effelsber g, et al., “Robust camera calibration for sport videos using court models, ” in Storag e and Retrieval Methods and Applications for Multimedia 2004 . International Society for Optics and Photonics, 2003, vol. 5307, pp. 80–92. [45] Qiang Y ao, Keisuke Nonaka, Hiroshi Sankoh, and Sei Naito, “Robust moving camera calibration for synthesizing free vie wpoint soccer video, ” in 2016 IEEE International Conference on Image Pr ocessing (ICIP) . IEEE, 2016, pp. 1185–1189. [46] Jonathan Long, Evan Shelhamer , and Tre vor Darrell, “Fully con volu- tional networks for semantic segmentation, ” in Proceedings of the IEEE confer ence on computer vision and pattern recognition , 2015, pp. 3431– 3440. [47] Liang-Chieh Chen, George Papandreou, Florian Schrof f, and Hartwig Adam, “Rethinking atrous con volution for semantic image segmenta- tion, ” arXiv preprint , 2017. [48] Huan Fu, Mingming Gong, Chaohui W ang, Kayhan Batmanghelich, and Dacheng T ao, “Deep ordinal regression network for monocular depth estimation, ” in Proceedings of the IEEE Conference on Computer V ision and P attern Recognition , 2018, pp. 2002–2011. 
[49] Dan Xu, Elisa Ricci, W anli Ouyang, Xiaogang W ang, and Nicu Sebe, “Monocular depth estimation using multi-scale continuous crfs as se- quential deep networks, ” IEEE transactions on pattern analysis and machine intelligence , 2018. [50] Amlaan Bhoi, “Monocular depth estimation: A surve y , ” arXiv pr eprint arXiv:1901.09402 , 2019. [51] Georgios Pavlakos, Xiaowei Zhou, K onstantinos G. Derpanis, and K ostas Daniilidis, “Coarse-to-fine volumetric prediction for single-image 3d human pose, ” 2017 IEEE Conference on Computer V ision and P attern Recognition (CVPR) , Jul 2017. [52] Denis T ome, Chris Russell, and Lourdes Agapito, “Lifting from the deep: Con volutional 3d pose estimation from a single image, ” 2017 IEEE Conference on Computer V ision and P attern Reco gnition (CVPR) , Jul 2017. [53] Xingyi Zhou, Qixing Huang, Xiao Sun, Xiangyang Xue, and Y ichen W ei, “T owards 3d human pose estimation in the wild: A weakly- supervised approach, ” 2017 IEEE International Confer ence on Com- puter V ision (ICCV) , Oct 2017. [54] Qiang Y ao, Hiroshi Sankoh, Houari Sabirin, and Sei Naito, “ Accurate silhouette extraction of multiple moving objects for free viewpoint sports video synthesis, ” in 2015 IEEE 17th International W orkshop on Multimedia Signal Pr ocessing (MMSP) . IEEE, 2015, pp. 1–6. JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2015 11 [55] Jun Chen, Keisuke Nonaka, Hiroshi Sankoh, Ryosuke W atanabe, Houari Sabirin, and Sei Naito, “Efficient parallel connected component labeling with a coarse-to-fine strategy , ” IEEE Access , vol. 6, pp. 55731–55740, 2018.
