Optimal Number of Choices in Rating Contexts

In many settings people must give numerical scores to entities from a small discrete set. For instance, rating physical attractiveness from 1--5 on dating sites, or papers from 1--10 for conference reviewing. We study the problem of understanding whe…

Authors: Sam Ganzfried, Farzana Yusuf

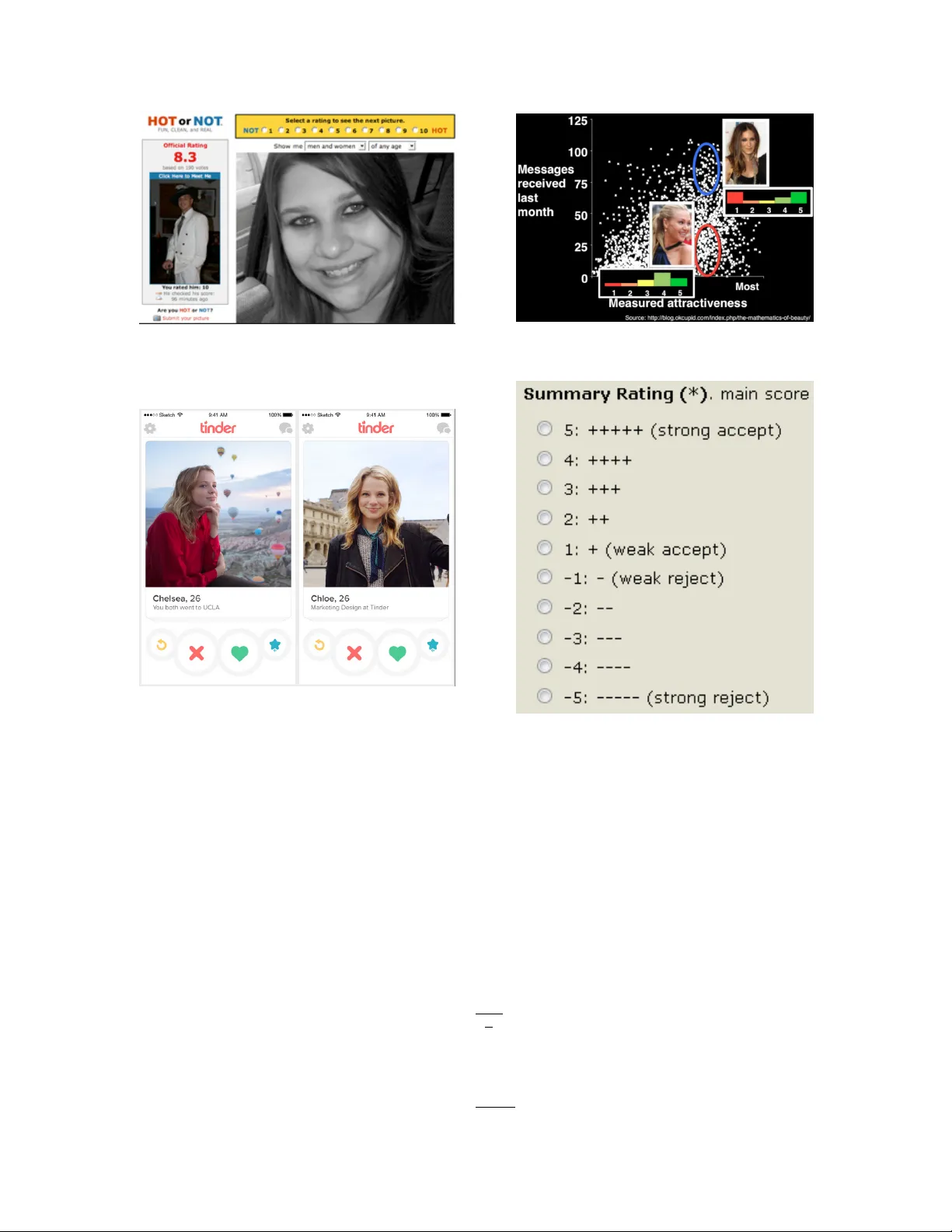

Sam Ganzfried, Ganzfried Research, sam@ganzfriedresearch.com
Farzana Yusuf, Florida International University, fyusu003@fiu.edu

Abstract

In many settings people must give numerical scores to entities from a small discrete set. For instance, rating physical attractiveness from 1–5 on dating sites, or papers from 1–10 for conference reviewing. We study the problem of understanding when using a different number of options is optimal. We consider the case when scores are uniform random and Gaussian. We study computationally when using 2, 3, 4, 5, and 10 options out of a total of 100 is optimal in these models (though our theoretical analysis is for a more general setting with k choices from n total options as well as a continuous underlying space). One may expect that using more options would always improve performance in this model, but we show that this is not necessarily the case, and that using fewer choices, even just two, can surprisingly be optimal in certain situations. While in theory for this setting it would be optimal to use all 100 options, in practice this is prohibitive, and it is preferable to utilize a smaller number of options due to humans' limited computational resources. Our results could have many potential applications, as settings requiring entities to be ranked by humans are ubiquitous. There could also be applications to other fields such as signal or image processing where input values from a large set must be mapped to output values in a smaller set.

1 Introduction

Humans rate items or entities in many important settings. For example, users of dating websites and mobile applications rate other users' physical attractiveness, teachers rate scholarly work of students, and reviewers rate the quality of academic conference submissions. In these settings, the users assign a numerical (integral) score to each item from a small discrete set.
However, the number of options in this set can vary significantly between applications, and even within different instantiations of the same application. For instance, for rating attractiveness, three popular sites all use a different number of options. On “Hot or Not,” users rate the attractiveness of photographs submitted voluntarily by other users on a scale of 1–10 (Figure 1).¹ These scores are aggregated and the average is assigned as the overall “score” for a photograph. On the dating website OkCupid, users rate other users on a scale of 1–5 (if a user rates another user 4 or 5 then the rated user receives a notification)² (Figure 2).³ And on the mobile application Tinder users “swipe right” (green heart) or “swipe left” (red X) to express interest in other users (two users are allowed to message each other if they mutually swipe right), which is essentially equivalent to using a binary $\{1, 2\}$ scale (Figure 3).⁴ Education is another important application area requiring human ratings. For the 2016 International Joint Conference on Artificial Intelligence, reviewers assigned a “Summary Rating” score from −5 to 5 (equivalent to 1–10) for each submitted paper (Figure 4).⁵ The papers are then discussed and scores aggregated to produce an acceptance or rejection decision based on the average of the scores.

¹ http://blog.mrmeyer.com/2007/are-you-hot-or-not/
² The likelihood of receiving an initial message is actually much more highly correlated with the variance (and particularly the number of “5” ratings) than with the average rating [10].
³ http://blog.okcupid.com/index.php/the-mathematics-of-beauty/
⁴ https://tctechcrunch2011.files.wordpress.com/2015/11/tinder-two.jpg
⁵ https://easychair.org/conferences/?conf=ijcai16

Figure 1: Hot or Not users rate attractiveness 1–10.
Figure 2: OkCupid users rate attractiveness 1–5.
Figure 3: Tinder users rate attractiveness 1–2.
Figure 4: IJCAI reviewers rate papers −5 to 5.

Despite the importance and ubiquity of the problem, there has been little fundamental research done on the problem of determining the optimal number of options to allow in such settings. We study a model in which users have an underlying integral ground truth score for each item in $\{1, \ldots, n\}$ and are required to submit an integral rating in $\{1, \ldots, k\}$, for $k \ll n$. (For ease of presentation we use the equivalent formulation $\{0, \ldots, n-1\}$, $\{0, \ldots, k-1\}$.) We use two generative models for the ground truth scores: a uniform random model in which the fraction of scores for each value from 0 to $n-1$ is chosen uniformly at random (by choosing a random value for each and then normalizing), and a model where scores are chosen according to a Gaussian distribution with a given mean and variance. We then compute a “compressed” score distribution by mapping each full score $s$ from $\{0, \ldots, n-1\}$ to $\{0, \ldots, k-1\}$ by applying

$$s \leftarrow \left\lfloor \frac{s}{n/k} \right\rfloor. \tag{1}$$

We then compute the average “compressed” score $a_k$, and compute its error $e_k$ according to

$$e_k = \left| a_f - \frac{n-1}{k-1} \cdot a_k \right|, \tag{2}$$

where $a_f$ is the ground truth average. The goal is to pick $\arg\min_k e_k$ (in our simulations we also consider a metric of the frequency at which each value of $k$ produces lowest error over all the items that are rated). While there are many possible generative models and cost functions, these seem to be the most natural, and we plan to study alternative choices in future work.

We derive a closed-form expression for $e_k$ that depends on only a small number ($k$) of parameters of the underlying distribution for an arbitrary distribution.⁶ This allows us to exactly characterize the performance of using each number of choices. In simulations we repeatedly compute $e_k$ and compare the average values. We focus on $n = 100$ and $k = 2, 3, 4, 5, 10$, which we believe are the most natural and interesting choices for initial study.

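
As a minimal sketch of Equations 1 and 2 in the discrete setting, the following Python computes the floor compression and the resulting error for a distribution given as a probability mass function. The function names and the two-point example distribution (half the mass at 30, half at 60) are illustrative assumptions, not code from the paper.

```python
def floor_compress(s, n, k):
    """Equation 1: map a full score s in {0,...,n-1} to {0,...,k-1}."""
    # For non-negative integers, floor(s / (n/k)) equals (s * k) // n.
    return (s * k) // n


def floor_error(pmf, k):
    """Equation 2 for a distribution given as pmf[s] = P(score = s)."""
    n = len(pmf)
    a_f = sum(s * p for s, p in enumerate(pmf))                        # ground truth average
    a_k = sum(floor_compress(s, n, k) * p for s, p in enumerate(pmf))  # compressed average
    return abs(a_f - (n - 1) / (k - 1) * a_k)


# Hypothetical example: half the mass at score 30, half at score 60, n = 100.
pmf = [0.0] * 100
pmf[30] = 0.5
pmf[60] = 0.5

e2 = floor_error(pmf, 2)
e3 = floor_error(pmf, 3)
print(e2, e3)
```

With the discrete normalization factor $(n-1)/(k-1)$, this example gives a smaller error for $k = 2$ than for $k = 3$, since both compressions yield the same compressed average of 0.5 but are rescaled by 99 and 49.5 respectively.
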
One could argue that this model is somewhat “trivial” in the sense that it would be optimal to set $k = n$ to permit all the possible scores, as this would result in the “compressed” scores agreeing exactly with the full scores. However, there are several reasons that would lead us to prefer to select $k \ll n$ in practice (as all of the examples previously described have done), thus making this analysis worthwhile. It is much easier for a human to assign a score from a small set than from a large set, particularly when rating many items under time constraints. We could have included an additional term in the cost function $e_k$ that explicitly penalizes larger values of $k$, which would have a significant effect on the optimal value of $k$ (favoring smaller values). However, the selection of this function would be somewhat arbitrary and would make the model more complex, and we leave this for future study. Given that we do not include such a penalty term, one may expect that increasing $k$ will always decrease $e_k$ in our setting. While the simulations show a clear negative relationship, we show that smaller values of $k$ actually lead to smaller $e_k$ surprisingly often. These smaller values would receive further preference with a penalty term.

One line of related theoretical research that also has applications to the education domain studies the impact of using finely grained numerical grades (100, 99, 98) vs. coarse letter grades (A, B, C) [7]. They conclude that if students care primarily about their rank relative to the other students, they are often better motivated to work by being assigned coarse categories rather than exact numerical scores. In a setting of “disparate” student abilities they show that the optimal absolute grading scheme is always coarse. Their model is game-theoretic; each player (student) selects an effort level, seeking to optimize a utility function that depends on both the relative score and effort level.
Their setting is quite different from ours in many ways. For one, they study a setting where it is assumed that the underlying “ground truth” score is known, yet may be disguised for strategic reasons. In our setting the goal is to approximate the ground truth score as closely as possible.

While we are not aware of prior theoretical study of our exact problem, there have been experimental studies on the optimal number of options on a “Likert scale” [17, 19, 26, 6, 9]. The general conclusion is that “the optimal number of scale categories is content specific and a function of the conditions of measurement.” [11] There has been study of whether including a “mid-point” option (i.e., the middle choice from an odd number) is beneficial. One experiment demonstrated that the use of the mid-point category decreases as the number of choices increases: 20% of respondents choose the mid-point for 3 and 5 options while only 7% did for 7, 9, ..., 19 [20]. They conclude that it is preferable to either not include a mid-point at all or use a large number of options. Subsequent experiments demonstrated that eliminating a mid-point can reduce social desirability bias which results from respondents' desires to please the interviewer or not give a perceived socially unacceptable answer [11]. There has also been significant research on questionnaire design and the concept of “feeling thermometers,” particularly from the fields of psychology and sociology [25, 21, 16, 4, 18, 23]. One study concludes from experimental data: “in the measurement of satisfaction with various domains of life, 11-point scales clearly are more reliable than comparable 7-point scales” [1]. Another study shows that “people are more likely to purchase gourmet jams or chocolates or to undertake optional class essay assignments when offered a limited array of 6 choices rather than a more extensive array of 24 or 30 choices” [24]. Since the experimental conclusions are dependent on the specific datasets and seem to vary from domain to domain, we choose to focus on formulating theoretical models and computational simulations, though we also include results and discussion from several datasets.

⁶ For simplicity, our theoretical analysis studies a continuous version where scores are chosen according to a distribution over $(0, n)$ (though the simulations are for the discrete version) and the compressed scores are over $\{0, \ldots, k-1\}$. In this setting we use a normalization factor of $\frac{n}{k-1}$ instead of $\frac{n-1}{k-1}$ for the $e_k$ term. Continuous approximations for large discrete spaces have been studied in other settings; for instance, they have led to simplified analysis and insight in poker games with continuous distributions of private information [2].

We note that we are not necessarily claiming that our model or analysis perfectly models reality or the psychological phenomena behind how humans actually behave. We are simply proposing simple and natural models that to the best of our knowledge have not been studied before. The simulation results seem somewhat counterintuitive and merit study on their own. We admit that further study is needed to determine how realistic our assumptions are for modeling human behavior. For example, some psychology research suggests that human users may not actually have an underlying integral ground truth value [8].

Research from the recommender systems community indicates that while using a coarser granularity for rating scales provides less absolute predictive value to users, it can be viewed as providing more value if viewed from an alternative perspective of preference bits per second [14]. Some work considers the setting where ratings over $\{1, \ldots, 5\}$ are mapped into a binary “thumbs up” / “thumbs down” (analogously to the swipe right/left example for Tinder above) [5].
Generally users mapped original ratings of 1 and 2 to “thumbs down” and original ratings of 3, 4, and 5 to “thumbs up,” which can be viewed as being similar to the floor compression procedure described above. We consider a more generalized setting where ratings over $\{1, \ldots, n\}$ are mapped down to a smaller space (which could be binary but may have more options). We also consider a rounding compression technique in addition to the flooring compression.

Some prior work has presented an approach for mapping continuous prediction scores to ordinal preferences with heterogeneous thresholds that is also applicable to mapping continuous-valued ‘true preference’ scores [15]. We note that our setting can apply straightforwardly to provide continuous-to-ordinal mapping in the same way as it performs ordinal-to-ordinal mapping initially. (In fact for our theoretical analysis and for the Jester dataset we study our mapping is continuous-to-ordinal.) An alternative model assumes that users compare items with pairwise comparisons which form a weak ordering, meaning that some items are given the same “mental rating,” while for our setting the ratings would be much more likely to be unique in the fine-grained space of ground-truth scores [3, 13].

In comparison to prior work, the main takeaway from our work is the closed-form expression for simple natural models, and the new simulation results which show precisely for the first time how often each number of choices is optimal using several metrics (number of times it produces lowest error and the lowest average error). We include experiments on datasets from several domains for completeness, though as prior work has shown results can vary significantly between datasets, and further research from psychology and social science is needed to make more accurate predictions of how humans actually behave in practice.
We note that our results could also have impact outside of human user systems, for example to the problems of “quantization” and data compression in signal processing.

2 Theoretical characterization

Suppose scores are given by continuous pdf $f$ (with cdf $F$) on $(0, 100)$, and we wish to compress them to two options, $\{0, 1\}$. Scores below 50 are mapped to 0, and above 50 to 1. The average of the full distribution is $a_f = E[X] = \int_{x=0}^{100} x f(x)\,dx$. The average of the compressed version is

$$a_2 = \int_{x=0}^{50} 0 \cdot f(x)\,dx + \int_{x=50}^{100} 1 \cdot f(x)\,dx = 1 - F(50).$$

So $e_2 = |a_f - 100(1 - F(50))| = |E[X] - 100 + 100F(50)|$.

For three options,

$$a_3 = \int_{x=0}^{100/3} 0 \cdot f(x)\,dx + \int_{x=100/3}^{200/3} 1 \cdot f(x)\,dx + \int_{x=200/3}^{100} 2 \cdot f(x)\,dx = 2 - F(100/3) - F(200/3),$$

$$e_3 = |a_f - 50(2 - F(100/3) - F(200/3))| = |E[X] - 100 + 50F(100/3) + 50F(200/3)|.$$

In general for $n$ total and $k$ compressed options,

$$a_k = \sum_{i=0}^{k-1} \int_{x=\frac{ni}{k}}^{\frac{n(i+1)}{k}} i f(x)\,dx = \sum_{i=0}^{k-1} i \left[ F\left(\frac{n(i+1)}{k}\right) - F\left(\frac{ni}{k}\right) \right] = (k-1)F(n) - \sum_{i=1}^{k-1} F\left(\frac{ni}{k}\right) = (k-1) - \sum_{i=1}^{k-1} F\left(\frac{ni}{k}\right),$$

$$e_k = \left| a_f - \frac{n}{k-1} \left( (k-1) - \sum_{i=1}^{k-1} F\left(\frac{ni}{k}\right) \right) \right| = \left| E[X] - n + \frac{n}{k-1} \sum_{i=1}^{k-1} F\left(\frac{ni}{k}\right) \right|. \tag{3}$$

Equation 3 allows us to characterize the relative performance of choices of $k$ for a given distribution $f$. For each $k$ it requires only knowing $k$ statistics of $f$ (the $k-1$ values of $F\left(\frac{ni}{k}\right)$ plus $E[X]$). In practice these could likely be closely approximated from historical data for small $k$ values (though prior work has pointed out that there may be some challenges in closely approximating the cdf values of the ratings from historical data, due to the historical data not being sampled at random from the true rating distribution [22]).

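
Equation 3 can be evaluated directly once a cdf and a mean are available. The sketch below is one possible Python rendering; the function names and the two example distributions (a uniform density on $(0, 100)$, and a density skewed toward high scores with $F(x) = (x/100)^2$ and $E[X] = 200/3$) are assumptions chosen for illustration and do not come from the paper.

```python
def floor_error_closed_form(cdf, mean, n, k):
    """Equation 3: |E[X] - n + n/(k-1) * sum_{i=1}^{k-1} F(n*i/k)|."""
    cdf_sum = sum(cdf(n * i / k) for i in range(1, k))
    return abs(mean - n + n / (k - 1) * cdf_sum)


# Assumed example 1: uniform density on (0, 100), F(x) = x/100, E[X] = 50.
def uniform_cdf(x):
    return x / 100.0


# Assumed example 2: density f(x) = 2x/100^2 on (0, 100),
# so F(x) = (x/100)^2 and E[X] = 200/3.
def skewed_cdf(x):
    return (x / 100.0) ** 2


uniform_errors = {k: floor_error_closed_form(uniform_cdf, 50.0, 100, k)
                  for k in (2, 3, 4, 5, 10)}
skewed_errors = {k: floor_error_closed_form(skewed_cdf, 200.0 / 3.0, 100, k)
                 for k in (2, 3, 4, 5, 10)}
print(uniform_errors)
print(skewed_errors)
```

For the uniform density every $k$ gives zero error up to floating-point rounding, because $\sum_{i=1}^{k-1} i/k = (k-1)/2$. For the skewed density the error depends on $k$, which is the situation Equation 3 is meant to characterize.
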
As an example we see that $e_2 < e_3$ iff

$$|E[X] - 100 + 100F(50)| < \left| E[X] - 100 + 50F\left(\frac{100}{3}\right) + 50F\left(\frac{200}{3}\right) \right|.$$

Consider a full distribution that has half its mass right around 30 and half its mass right around 60 (Figure 5). Then $a_f = E[X] = 0.5 \cdot 30 + 0.5 \cdot 60 = 45$. If we use $k = 2$, then the mass at 30 will be mapped down to 0 (since $30 < 50$) and the mass at 60 will be mapped up to 1 (since $60 > 50$) (Figure 6). So $a_2 = 0.5 \cdot 0 + 0.5 \cdot 1 = 0.5$. Using normalization of $\frac{n}{k-1} = 100$, $e_2 = |45 - 100(0.5)| = |45 - 50| = 5$. If we use $k = 3$, then the mass at 30 will also be mapped down to 0 (since $30 < \frac{100}{3}$); but the mass at 60 will be mapped to 1 (not the maximum possible value of 2 in this case), since $\frac{100}{3} < 60 < \frac{200}{3}$ (Figure 6). So again $a_3 = 0.5 \cdot 0 + 0.5 \cdot 1 = 0.5$, but now using normalization of $\frac{n}{k-1} = 50$ we have $e_3 = |45 - 50(0.5)| = |45 - 25| = 20$. So, surprisingly, in this example allowing more ranking choices actually significantly increases error.

Figure 5: Example distribution for which compressing with $k = 2$ produces lower error than $k = 3$.
Figure 6: Compressed distributions using $k = 2$ and $k = 3$ for example from Figure 5.

If we happened to be in the case where both $a_2 \le a_f$ and $a_3 \le a_f$, then we could remove the absolute values and reduce the expression to see that $e_2 < e_3$ iff $\int_{x=100/3}^{50} f(x)\,dx < \int_{x=50}^{200/3} f(x)\,dx$. One could perform more comprehensive analysis considering all cases to obtain better characterization and intuition for the optimal value of $k$ for distributions with different properties.

3 Rounding compression

An alternative model we could have considered is to use rounding to produce the compressed scores as opposed to using the floor function from Equation 1. For instance, for the case $n = 100$, $k = 2$, instead of dividing $s$ by 50 and taking the floor, we could instead partition the points according to whether they are closest to $t_1 = 25$ or $t_2 = 75$.
In the example above, the mass at 30 would be mapped to $t_1$ and the mass at 60 would be mapped to $t_2$. This would produce a compressed average score of $a_2 = \frac{1}{2} \cdot 25 + \frac{1}{2} \cdot 75 = 50$. No normalization would be necessary, and this would produce error of $e_2 = |a_f - a_2| = |45 - 50| = 5$, as the floor approach did as well. Similarly, for $k = 3$ the region midpoints will be $q_1 = \frac{100}{6}$, $q_2 = 50$, $q_3 = \frac{500}{6}$. The mass at 30 will be mapped to $q_1 = \frac{100}{6}$ and the mass at 60 will be mapped to $q_2 = 50$. This produces a compressed average score of $a_3 = \frac{1}{2} \cdot \frac{100}{6} + \frac{1}{2} \cdot 50 = \frac{100}{3}$. This produces an error of $e_3 = |a_f - a_3| = \left| 45 - \frac{100}{3} \right| = \frac{35}{3} \approx 11.67$. Although the error for $k = 3$ is smaller than for the floor case, it is still significantly larger than that for $k = 2$, and using two options still outperforms using three for the example in this new model.

In general, this approach would create $k$ “midpoints” $\{m_i^k\}$:

$$m_i^k = \frac{n(2i-1)}{2k}.$$

For $k = 2$ we have

$$a_2 = \int_{x=0}^{50} 25 f(x)\,dx + \int_{x=50}^{100} 75 f(x)\,dx = 75 - 50F(50),$$

$$e_2 = |a_f - (75 - 50F(50))| = |E[X] - 75 + 50F(50)|.$$

One might wonder whether the floor approach would ever outperform the rounding approach (in the example above the rounding approach produced lower error for $k = 3$ and the same error for $k = 2$). As a simple example to see that it can, consider the distribution with all mass on 0. The floor approach would produce $a_2 = 0$ giving an error of 0, while the rounding approach would produce $a_2 = 25$ giving an error of 25. Thus, the superiority of the approach is dependent on the distribution. We explore this further in the experiments.

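
The rounding examples above can be checked numerically. The following Python is a small sketch for distributions made of point masses; the function names and the dictionary representation of the distribution are illustrative assumptions. Because the midpoints sit at the centers of $k$ equal-width regions, mapping a score to its nearest midpoint is the same as mapping it to the midpoint of the region that contains it.

```python
def midpoint(i, n, k):
    """Midpoint m_i^k = n(2i - 1) / (2k) of the i-th region, for i = 1..k."""
    return n * (2 * i - 1) / (2 * k)


def rounding_error(masses, n, k):
    """Error |a_f - a_k| under rounding compression.

    masses: dict mapping a score in [0, n) to its probability.
    """
    a_f = sum(x * p for x, p in masses.items())
    a_k = 0.0
    for x, p in masses.items():
        # 1-based index of the equal-width region that contains x.
        region = min(int(x * k // n), k - 1) + 1
        a_k += midpoint(region, n, k) * p
    return abs(a_f - a_k)


two_point = {30: 0.5, 60: 0.5}   # half the mass near 30, half near 60
all_at_zero = {0: 1.0}           # all mass on 0

print(rounding_error(two_point, 100, 2))
print(rounding_error(two_point, 100, 3))
print(rounding_error(all_at_zero, 100, 2))
```

No normalization factor appears here because the midpoints already live on the original $(0, n)$ scale.
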
For three options,

$$a_3 = \int_{0}^{100/3} \frac{100}{6} f(x)\,dx + \int_{100/3}^{200/3} 50 f(x)\,dx + \int_{200/3}^{100} \frac{500}{6} f(x)\,dx = \frac{500}{6} - \frac{100}{3} F\left(\frac{100}{3}\right) - \frac{100}{3} F\left(\frac{200}{3}\right),$$

$$e_3 = \left| E[X] - \frac{500}{6} + \frac{100}{3} F\left(\frac{100}{3}\right) + \frac{100}{3} F\left(\frac{200}{3}\right) \right|.$$

For general $n$ and $k$, analysis as above yields

$$a_k = \sum_{i=0}^{k-1} \int_{x=\frac{ni}{k}}^{\frac{n(i+1)}{k}} m_{i+1}^k f(x)\,dx = \frac{n(2k-1)}{2k} - \frac{n}{k} \sum_{i=1}^{k-1} F\left(\frac{ni}{k}\right),$$

$$e_k = \left| a_f - \left[ \frac{n(2k-1)}{2k} - \frac{n}{k} \sum_{i=1}^{k-1} F\left(\frac{ni}{k}\right) \right] \right| \tag{4}$$

$$= \left| E[X] - \frac{n(2k-1)}{2k} + \frac{n}{k} \sum_{i=1}^{k-1} F\left(\frac{ni}{k}\right) \right|. \tag{5}$$

As in the floor model, $e_k$ requires only knowing $k$ statistics of $f$. The rounding model has an advantage over the floor model that there is no need to convert scores between different scales and perform normalization. One drawback is that it requires knowing $n$ (the expression for $m_i^k$ is dependent on $n$), while the floor model does not. In our experiments we assume $n = 100$, but in practice it may not be clear what the agents' ground truth granularity is and it may be easier to just deal with scores from 1 to $k$. Furthermore, it may seem unnatural to essentially ask people to rate items as “$\frac{100}{6}, 50, \frac{500}{6}$” rather than “1, 2, 3” (though the conversion between the score and $m_i^k$ could be done behind the scenes, essentially circumventing the potential practical complication). One can generalize both the floor and rounding model by using a score of $s(n, k)_i$ for the $i$'th region. With regions indexed from $i = 0$, for the floor setting we set $s(n, k)_i = i$, and for the rounding setting $s(n, k)_i = m_{i+1}^k = \frac{n(2i+1)}{2k}$.

4 Computational simulations

The above analysis leads to the immediate question of whether the example for which $e_2 < e_3$ was a fluke or whether using fewer choices can actually reduce error under reasonable assumptions on the generative model. We study this question using simulations with what we believe are the two most natural models. While we have studied the continuous setting where the full set of options is continuous over $(0, n)$ and the compressed set is discrete $\{0, \ldots, k-1\}$, we now consider the perhaps more realistic setting where the full set is the discrete set $\{0, \ldots, n-1\}$ and the compressed set is the same (though it should be noted that the two settings are likely quite similar qualitatively). The first generative model we consider is a uniform model in which the values of the pmf for each of the $n$ possible values are chosen independently and uniformly at random. The second is a Gaussian model in which the values are generated according to a normal distribution with specified mean $\mu$ and standard deviation $\sigma$ (values below 0 are set to 0 and above $n-1$ to $n-1$).

Algorithm 1 Procedure for generating full scores in uniform model
Inputs: Number of scores n
    scoreSum ← 0
    for i = 0 : n do
        r ← random(0,1)
        scores[i] ← r
        scoreSum ← scoreSum + r
    for i = 0 : n do
        scores[i] ← scores[i] / scoreSum

Algorithm 2 Procedure for generating scores in Gaussian model
Inputs: Number of scores n, number of samples s, mean µ, standard deviation σ
    for i = 0 : s do
        r ← randomGaussian(µ, σ)
        if r < 0 then
            r ← 0
        else if r > n − 1 then
            r ← n − 1
        ++scores[round(r)]
    for i = 0 : n do
        scores[i] ← scores[i] / s

For our simulations we used $n = 100$, and considered $k = 2, 3, 4, 5, 10$, which are popular and natural values. For the Gaussian model we used $s = 1000$, $\mu = 50$, $\sigma = \frac{50}{3}$. For each set of simulations we computed the errors for all considered values of $k$ for $m = 100{,}000$ “items” (each corresponding to a different distribution generated according to the specified model). The main quantities we are interested in computing are the number of times that each value of $k$ produces the lowest error over the $m$ items, and the average value of the errors over all items for each $k$ value.

The simulation procedure is specified in Algorithm 3. Note that this procedure could take as input any generative model $M$ (not just the two we considered), as well as the parameters for the model, which we designate as $\rho$.
It takes a set $\{k_1, \ldots, k_C\}$ of $C$ different compressed scores, and returns the number of times that each one produces the lowest error over the $m$ items. Note that we can easily also compute other quantities of interest with this procedure, such as the average value of the errors which we also report in some of the experiments (though we note that certain quantities could be overly dependent on the specific parameter values chosen).

Algorithm 3 Simulation procedure
Inputs: generative model M, parameters ρ, number of items m, number of total scores n, set of compressed scores {k_1, ..., k_C}
    scores[][] ← array of dimension m × n
    averages[] ← array of dimension m
    for i = 0 : m do
        scores[i] ← M(n, ρ)
        averages[i] ← average(scores[i])
    averagesCompressed[][] ← array of dimension m × C
    for i = 0 : C do
        for j = 0 : m do
            scoresCompressed ← Compress(scores[j], k_i)
            averagesCompressed[j][i] ← average(scoresCompressed)
    numVictories[] ← array of dimension C, initialized to 0
    for j = 0 : m do
        min ← MAX-VALUE
        minIndex ← −1
        for i = 0 : C do
            e_i ← | averages[j] − (n − 1)/(k_i − 1) · averagesCompressed[j][i] |
            if e_i < min then
                min ← e_i
                minIndex ← i
        ++numVictories[minIndex]
    return numVictories

In the first set of experiments, we compared performance between using $k = 2, 3, 4, 5, 10$ to see for how many of the trials each value of $k$ produced the minimal error (Table 1). Not surprisingly, we see that the number of victories increases monotonically with the value of $k$, while the average error decreased monotonically (recall that we would have zero error if we set $k = 100$). However, what is perhaps surprising is that using a smaller number of compressed scores produced the optimal error in a far from negligible number of the trials. For the uniform model, using 10 scores minimized error only around 53% of the time, while using 5 scores minimized error 17% of the time, and even using 2 scores minimized it 5.6% of the time.
The results were similar for the Gaussian model, though a bit more in favor of larger values of $k$, which is what we would expect because the Gaussian model is less likely to generate “fluke” distributions that could favor the smaller values.

| | k = 2 | 3 | 4 | 5 | 10 |
|---|---|---|---|---|---|
| Uniform # victories | 5564 | 9265 | 14870 | 16974 | 53327 |
| Uniform average error | 1.32 | 0.86 | 0.53 | 0.41 | 0.19 |
| Gaussian # victories | 3025 | 7336 | 14435 | 17800 | 57404 |
| Gaussian average error | 1.14 | 0.59 | 0.30 | 0.22 | 0.10 |

Table 1: Number of times each value of $k$ in {2, 3, 4, 5, 10} produces minimal error and average error values, over 100,000 items generated according to both models.

We next explored the number of victories between just $k = 2$ and $k = 3$, with results in Table 2. Again we observed that using a larger value of $k$ generally reduces error, as expected. However, we find it extremely surprising that using $k = 2$ produces a lower error 37% of the time. As before, the larger $k$ value performs relatively better in the Gaussian model. We also looked at results for the most extreme comparison, $k = 2$ vs. $k = 10$ (Table 3). Using 2 scores outperformed 10 8.3% of the time in the uniform setting, which was larger than we expected. In Figures 7–8, we present a distribution for which $k = 2$ particularly outperformed $k = 10$. The full distribution has mean 54.188, while the $k = 2$ compression has mean 0.548 (54.253 after normalization) and $k = 10$ has mean 5.009 (55.009 after normalization). The normalized errors between the means were 0.906 for $k = 10$ and 0.048 for $k = 2$, yielding a difference of 0.859 in favor of $k = 2$.

| | k = 2 | 3 |
|---|---|---|
| Uniform number of victories | 36805 | 63195 |
| Uniform average error | 1.31 | 0.86 |
| Gaussian number of victories | 30454 | 69546 |
| Gaussian average error | 1.13 | 0.58 |

Table 2: Results for $k = 2$ vs. 3.

| | k = 2 | 10 |
|---|---|---|
| Uniform number of victories | 8253 | 91747 |
| Uniform average error | 1.32 | 0.19 |
| Gaussian number of victories | 4369 | 95631 |
| Gaussian average error | 1.13 | 0.10 |

Table 3: Results for $k = 2$ vs. 10.

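
The victory counts and average errors for the uniform model can be approximated with a short simulation in the spirit of Algorithms 1 and 3. The Python below is only a sketch under stated assumptions: it uses floor compression, a much smaller number of items than the 100,000 used in the paper, an arbitrary seed, and helper names that are hypothetical. It will not reproduce the table entries exactly.

```python
import random


def uniform_model(n, rng):
    """Algorithm 1: random pmf over {0,...,n-1}, normalized to sum to 1."""
    raw = [rng.random() for _ in range(n)]
    total = sum(raw)
    return [r / total for r in raw]


def floor_error(pmf, k):
    """Equation 2 with the floor compression of Equation 1."""
    n = len(pmf)
    a_f = sum(s * p for s, p in enumerate(pmf))
    a_k = sum(((s * k) // n) * p for s, p in enumerate(pmf))
    return abs(a_f - (n - 1) / (k - 1) * a_k)


def simulate(m, n, ks, seed=0):
    """Count how often each k gives the lowest error, and average the errors."""
    rng = random.Random(seed)
    victories = {k: 0 for k in ks}
    total_error = {k: 0.0 for k in ks}
    for _ in range(m):
        pmf = uniform_model(n, rng)
        errors = {k: floor_error(pmf, k) for k in ks}
        best = min(ks, key=lambda k: errors[k])  # ties go to the first k listed
        victories[best] += 1
        for k in ks:
            total_error[k] += errors[k]
    avg_error = {k: total_error[k] / m for k in ks}
    return victories, avg_error


# m = 2000 is an assumption made to keep the run short.
victories, avg_error = simulate(m=2000, n=100, ks=(2, 3, 4, 5, 10))
print(victories)
print(avg_error)
```

Swapping `uniform_model` for a Gaussian generator along the lines of Algorithm 2, or `floor_error` for a rounding-based error, would give the other experimental configurations.
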
Figure 7: Example distribution where compressing with $k = 2$ produces significantly lower error than $k = 10$. The full distribution has mean 54.188, while the $k = 2$ compression has mean 0.548 (54.253 after normalization) and the $k = 10$ compression has mean 5.009 (55.009 after normalization). The normalized errors between the means were 0.906 for $k = 10$ and 0.048 for $k = 2$, yielding a difference of 0.859 in favor of $k = 2$.

We next repeated the extreme $k = 2$ vs. 10 comparison, but we imposed a restriction that the $k = 10$ option could not give a score below 3 or above 6 (Table 4). (If it selected a score below 3 then we set it to 3, and if above 6 we set it to 6.) For some settings, for instance paper reviewing, extreme scores are very uncommon, and we strongly suspect that the vast majority of scores are in this middle range. Some possible explanations are that reviewers who give extreme scores may be required to put in additional work to justify their scores and are more likely to be involved in arguments with other reviewers (or with the authors in the rebuttal). Reviewers could also experience higher regret or embarrassment for being “wrong” and possibly off-base in the review by missing an important nuance. In this setting using $k = 2$ outperforms $k = 10$ nearly one third of the time in the uniform model.

Figure 8: Compressed distribution for $k = 2$ vs. 10 for example from Figure 7.

We also considered the situation where we restricted the $k = 10$ scores to fall between 3 and 7 (as opposed to 3 and 6). Note that the possible scores range from 0–9, so this restriction is asymmetric in that the lowest three possible scores are eliminated while only the highest two are. This is motivated by the intuition that raters may be less inclined to give extremely low scores which may hurt the feelings of an author (for the case of paper reviewing).
In this setting, which is seemingly quite similar to the 3–6 setting, $k = 2$ produced lower error 93% of the time in the uniform model!

| | k = 2 | 10 |
|---|---|---|
| Uniform number of victories | 32250 | 67750 |
| Uniform average error | 1.31 | 0.74 |
| Gaussian number of victories | 10859 | 89141 |
| Gaussian average error | 1.13 | 0.20 |

Table 4: Number of times each value of $k$ in {2, 10} produces minimal error and average error values, over 100,000 items generated according to both models. For $k = 10$, we only permitted scores between 3 and 6 (inclusive). If a score was below 3 we set it to be 3, and above 6 to 6.

| | k = 2 | 10 |
|---|---|---|
| Uniform number of victories | 93226 | 6774 |
| Uniform average error | 1.31 | 0.74 |
| Gaussian number of victories | 54459 | 45541 |
| Gaussian average error | 1.13 | 1.09 |

Table 5: Number of times each value of $k$ in {2, 10} produces minimal error and average error values, over 100,000 items generated according to both generative models. For $k = 10$, we only permitted scores between 3 and 7 (inclusive). If a score was below 3 we set it to be 3, and above 7 to 7.

We next repeated these experiments for the rounding compression function. There are several interesting observations from Table 6. In this setting, $k = 3$ is the clear choice, performing best in both models (by a large margin for the Gaussian model). The smaller values of $k$ perform significantly better with rounding than flooring (as indicated by lower errors) while the larger values perform significantly worse, and their errors seem to approach 0.5 for both models. Taking both compressions into account, the optimal overall approach would still be to use flooring with $k = 10$, which produced the smallest average errors of 0.19 and 0.10 in the two models, while using $k = 3$ with rounding produced errors of 0.47 and 0.24. The 2 vs. 3 experiments produced very similar results for the two compressions (Table 7). The 2 vs. 10 results were quite different, with 2 performing better almost 40% of the time with rounding, vs. less than 10% with flooring (Table 8). In the 2 vs. 10 truncated 3–6 experiments 2 performed relatively better with rounding for both models (Table 9), and for the 2 vs. 10 truncated 3–7 experiments $k = 2$ performed better nearly all the time (Table 10).

| | k = 2 | 3 | 4 | 5 | 10 |
|---|---|---|---|---|---|
| Uniform # victories | 15766 | 33175 | 21386 | 19995 | 9678 |
| Uniform average error | 0.78 | 0.47 | 0.55 | 0.52 | 0.50 |
| Gaussian # victories | 13262 | 64870 | 10331 | 9689 | 1848 |
| Gaussian average error | 0.67 | 0.24 | 0.50 | 0.50 | 0.50 |

Table 6: Number of times each value of $k$ produces minimal error and average error values, over 100,000 items generated according to both models with rounding compression.

| | k = 2 | 3 |
|---|---|---|
| Uniform number of victories | 33585 | 66415 |
| Uniform average error | 0.78 | 0.47 |
| Gaussian number of victories | 18307 | 81693 |
| Gaussian average error | 0.67 | 0.24 |

Table 7: $k = 2$ vs. 3 with rounding compression.

| | k = 2 | 10 |
|---|---|---|
| Uniform number of victories | 37225 | 62775 |
| Uniform average error | 0.78 | 0.50 |
| Gaussian number of victories | 37897 | 62103 |
| Gaussian average error | 0.67 | 0.50 |

Table 8: $k = 2$ vs. 10 with rounding compression.

| | k = 2 | 10 |
|---|---|---|
| Uniform number of victories | 55676 | 44324 |
| Uniform average error | 0.79 | 0.89 |
| Gaussian number of victories | 24128 | 75872 |
| Gaussian average error | 0.67 | 0.34 |

Table 9: $k = 2$ vs. 10 with rounding compression. For $k = 10$ only scores permitted between 3 and 6.

| | k = 2 | 10 |
|---|---|---|
| Uniform number of victories | 99586 | 414 |
| Uniform average error | 0.78 | 3.50 |
| Gaussian number of victories | 95692 | 4308 |
| Gaussian average error | 0.67 | 1.45 |

Table 10: $k = 2$ vs. 10 with rounding compression. For $k = 10$ only scores permitted between 3 and 7.

5 Experiments

The empirical analysis of ranking-based datasets depends on the availability of large amounts of data depicting different types of real scenarios. For our experimental setup we used two different datasets from “Rating and Combinatorial Preference Data” of http://www.preflib.org/data/. One of these datasets contains 675,069 ratings on scale 1-5 of 1,842 hotels from the TripAdvisor website.
The other consists of 398 approval ballots and subjective ratings on a 20-point scale collected over 15 potential candidates for the 2002 French Presidential election. The ratings were provided by students at Institut d'Etudes Politiques de Paris. The main quantities we are interested in computing are the number of times that each value of k produces the lowest error over the items, and the average value of the errors over all items for each k value. We also provide experimental results from the Jester Online Recommender System on joke ratings.

5.1 TripAdvisor hotel rating

The dataset for the first set of experiments contains several types of ratings (price, quality of rooms, proximity of location, etc.) as well as an overall rating provided by users, scraped from TripAdvisor. We compared performance between using k = 2, 3, 4, 5 to see for how many of the trials each value of k produced the minimal error using the floor approach (Tables 11 and 12). Surprisingly, we see that the number of victories sometimes decreases as k increases, while the average error decreases monotonically (recall that we would have zero error if we set k to the actual maximum rating point). In some cases the number of victories is higher for k = 2 vs. 3 than for 2 vs. 4 (Table 13).

We next explored rounding to generate the ratings (Tables 14–17). For each value of k, all ratings provided by users were compressed with the computed k midpoints and the average score was calculated. Table 14 shows the average error induced by this compression, which performs better than the floor approach for this dataset. An interesting observation for rounding is that using k = n = 5 was outperformed by using k = 4 for several ratings, under both the average error and number of victories metrics, as shown in Table 17.
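The two compression rules and the two metrics discussed above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the authors' code: scores are assumed to be integers in 0..n−1 (so 1–5 hotel ratings would first be shifted to 0–4), flooring is assumed to map a score s to floor(s·k/n) and rescale the compressed average by (n−1)/(k−1), and rounding is assumed to map each score to the midpoint of its width-n/k interval. The function names and the tie-breaking rule are hypothetical.

```python
from statistics import mean


def floor_error(scores, n, k):
    """Error of flooring compression for one item (assumed definition).

    scores: integer ratings in 0..n-1; k: number of options, k >= 2.
    """
    full_avg = mean(scores)
    # Assumed flooring rule: bucket index floor(s * k / n), in 0..k-1.
    compressed_avg = mean([(s * k) // n for s in scores])
    # Assumed normalization back to the 0..n-1 scale.
    return abs(full_avg - (n - 1) / (k - 1) * compressed_avg)


def round_error(scores, n, k):
    """Error of rounding compression for one item (assumed definition).

    Each score is replaced by the midpoint of its width-n/k interval.
    """
    width = n / k
    full_avg = mean(scores)
    midpoint_avg = mean([((s * k) // n + 0.5) * width for s in scores])
    return abs(full_avg - midpoint_avg)


def count_victories(items, n, ks, error_fn):
    """Count, for each k in ks, how many items it gives the lowest error on.

    Ties go to the k listed first (an assumption; the text does not say).
    """
    wins = {k: 0 for k in ks}
    for scores in items:
        errors = {k: error_fn(scores, n, k) for k in ks}
        best = min(ks, key=lambda k: errors[k])
        wins[best] += 1
    return wins
```

Under these assumed definitions, rounding with k = n shifts every score by exactly half a unit, which would be consistent with the rounding errors for large k approaching 0.5 in the simulations. Calling `count_victories(items, n, [2, 3, 4], floor_error)` on a list of per-item score lists would give the kind of tally reported in the victory tables.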
| Average error | k = 2 | k = 3 | k = 4 |
|---|---|---|---|
| Overall | 1.04 | 0.31 | 0.15 |
| Price | 1.07 | 0.27 | 0.14 |
| Rooms | 1.06 | 0.32 | 0.16 |
| Location | 1.47 | 0.42 | 0.16 |
| Cleanliness | 1.43 | 0.40 | 0.16 |
| Front Desk | 1.34 | 0.33 | 0.14 |
| Service | 1.24 | 0.32 | 0.14 |
| Business Service | 0.96 | 0.28 | 0.18 |

Table 11: Average flooring error for hotel ratings.

| Minimal error | k = 2 | k = 3 | k = 4 |
|---|---|---|---|
| Overall | 235 | 450 | 1157 |
| Price | 181 | 518 | 1143 |
| Rooms | 254 | 406 | 1182 |
| Location | 111 | 231 | 1500 |
| Cleanliness | 122 | 302 | 1418 |
| Front Desk | 120 | 387 | 1335 |
| Service | 140 | 403 | 1299 |
| Business Service | 316 | 499 | 1027 |

Table 12: Number of times each k minimizes flooring error.

| # of victories | k = 2 vs. 3 | k = 2 vs. 4 | k = 3 vs. 4 |
|---|---|---|---|
| Overall | 243, 1599 | 277, 1565 | 5, 1837 |
| Price | 187, 1655 | 211, 1631 | 4, 1838 |
| Rooms | 275, 1567 | 283, 1559 | 10, 1832 |
| Location | 126, 1716 | 122, 1720 | 11, 1831 |
| Cleanliness | 126, 1716 | 141, 1701 | 5, 1837 |
| Front Desk | 130, 1712 | 133, 1709 | 8, 1834 |
| Service | 153, 1689 | 152, 1690 | 11, 1831 |
| Business Service | 368, 1474 | 329, 1513 | 22, 1820 |

Table 13: Number of times k minimizes flooring error.

| Average error | k = 2 | k = 3 | k = 4 |
|---|---|---|---|
| Overall | 0.50 | 0.28 | 0.15 |
| Price | 0.48 | 0.31 | 0.15 |
| Rooms | 0.48 | 0.30 | 0.16 |
| Location | 0.63 | 0.41 | 0.22 |
| Cleanliness | 0.6 | 0.4 | 0.21 |
| Front Desk | 0.55 | 0.39 | 0.21 |
| Service | 0.52 | 0.36 | 0.18 |
| Business Service | 0.39 | 0.36 | 0.18 |

Table 14: Average error using rounding approach.

| Minimal error | k = 2 | k = 3 | k = 4 |
|---|---|---|---|
| Overall | 82 | 132 | 1628 |
| Price | 92 | 74 | 1676 |
| Rooms | 152 | 81 | 1609 |
| Location | 93 | 52 | 1697 |
| Cleanliness | 79 | 44 | 1719 |
| Front Desk | 89 | 50 | 1703 |
| Service | 102 | 29 | 1711 |
| Business Service | 246 | 123 | 1473 |

Table 15: Number of times k minimizes error with rounding.

| # of victories | k = 2 vs. 3 | k = 2 vs. 4 | k = 3 vs. 4 |
|---|---|---|---|
| Overall | 161, 1681 | 113, 1729 | 486, 1356 |
| Price | 270, 1572 | 101, 1741 | 385, 1457 |
| Rooms | 344, 1498 | 173, 1669 | 575, 1267 |
| Location | 275, 1567 | 109, 1733 | 344, 1498 |
| Cleanliness | 210, 1632 | 90, 1752 | 289, 1553 |
| Front Desk | 380, 1462 | 95, 1747 | 332, 1510 |
| Service | 358, 1484 | 109, 1733 | 399, 1443 |
| Business Service | 870, 972 | 278, 1564 | 853, 989 |

Table 16: Number of times k minimizes error with rounding.

| Rating | Average error (k = 4, k = 5) | # of victories (k = 4, k = 5) |
|---|---|---|
| Overall | 0.15, 0.21 | 1007, 835 |
| Price | 0.15, 0.17 | 955, 887 |
| Rooms | 0.15, 0.23 | 1076, 766 |
| Location | 0.22, 0.22 | 694, 1148 |
| Cleanliness | 0.21, 0.19 | 653, 1189 |
| Front Desk | 0.21, 0.17 | 662, 1180 |
| Service | 0.18, 0.18 | 827, 1015 |
| Business Service | 0.18, 0.31 | 1233, 609 |

Table 17: # victories and average rounding error, k in {4, 5}.

5.2 French presidential election

We next experimented on data from the 2002 French Presidential Election. This dataset had both approval ballots and subjective ratings of the candidates by each voter. Voters rated the candidates on a scale of 20 where 0.0 is the lowest possible rating and -1.0 indicates a missing value (our experiments ignored the candidates with -1). The number of victories and minimal flooring error were consistent for all comparisons, with higher error achieved for lower k values for each candidate. On the other hand, with rounding compression the minimal error was achieved for k = 2 for one candidate, while it was achieved for the two highest values k = 8 or 10 for the others.

5.3 Joke recommender system

We also experimented on anonymous ratings data from the Jester Online Joke Recommender System [12]. Data was collected between April 1999 and May 2003 from 73,421 anonymous users who rated 36 or more jokes, with real-valued ratings ranging from −10.00 to +10.00. We included data from 24,983 users in our experiment. Each row of the dataset represents the ratings from a single user. The first column contains the number of jokes rated by a user and the next 100 columns give the ratings for jokes 1–100.
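To work with a subset of jokes, one might rank the joke columns by how many users actually rated them. The sketch below assumes the file has already been parsed into rows of numbers laid out as described (a count followed by one rating per joke) and that unrated jokes carry a sentinel value. The sentinel 99, the function name, and breaking ties by joke index are assumptions for illustration, not details given in the text.

```python
MISSING = 99  # assumed sentinel marking a joke the user did not rate


def densest_columns(rows, top=10):
    """Return the 1-based indices of the `top` most frequently rated jokes.

    rows: list of per-user rows; row[0] is the number of jokes rated,
    row[1:] holds one rating (or MISSING) per joke.
    """
    n_jokes = len(rows[0]) - 1
    counts = [0] * n_jokes
    for row in rows:
        for j, rating in enumerate(row[1:]):
            if rating != MISSING:
                counts[j] += 1
    # Sort by descending rating count, breaking ties by joke index.
    ranked = sorted(range(n_jokes), key=lambda j: (-counts[j], j))
    return sorted(j + 1 for j in ranked[:top])
```

For example, `densest_columns(rows, top=10)` on the parsed user rows would return the ten joke numbers with the fewest missing ratings.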
Due to space limitations we only experimented on a subset of columns (the ten most densely populated). The results are shown in Tables 20 and 21. For the TripAdvisor and French election data, the errors decrease, as one would expect, as the number of choices increases. But surprisingly, for the Jester dataset we observe that the average errors are very close for all of the options (k = 2, 3, 4, 5, 10) with rounding compression (though with flooring they decrease monotonically with increasing k). These results suggest that while using more options seems to generally be better on real data using our models and metrics, this is not always the case. In the future we would like to explore this more deeply and understand what properties of the distribution and dataset determine when a smaller value of k can outperform the larger ones.

| Average error | k = 2 | k = 3 | k = 4 | k = 5 | k = 8 | k = 10 |
|---|---|---|---|---|---|---|
| Francois Bayrou | 3.18 | 1.5 | 0.94 | 0.66 | 0.3 | 0.2 |
| Olivier Besancenot | 1.7 | 0.8 | 0.5 | 0.35 | 0.16 | 0.1 |
| Christine Boutin | 1.15 | 0.54 | 0.34 | 0.24 | 0.11 | 0.07 |
| Jacques Cheminade | 0.64 | 0.3 | 0.19 | 0.13 | 0.06 | 0.04 |
| Jean-Pierre Chevenement | 3.69 | 1.74 | 1.09 | 0.77 | 0.35 | 0.23 |
| Jacques Chirac | 3.48 | 1.64 | 1.03 | 0.72 | 0.33 | 0.21 |
| Robert Hue | 2.39 | 1.12 | 0.7 | 0.49 | 0.22 | 0.14 |
| Lionel Jospin | 5.45 | 2.57 | 1.61 | 1.13 | 0.52 | 0.33 |
| Arlette Laguiller | 2.2 | 1.04 | 0.65 | 0.46 | 0.21 | 0.13 |
| Brice Lalonde | 1.53 | 0.72 | 0.45 | 0.32 | 0.14 | 0.09 |
| Corine Lepage | 2.24 | 1.06 | 0.67 | 0.47 | 0.22 | 0.14 |
| Jean-Marie Le Pen | 0.4 | 0.19 | 0.12 | 0.08 | 0.04 | 0.02 |
| Alain Madelin | 1.93 | 0.91 | 0.57 | 0.4 | 0.18 | 0.12 |
| Noel Mamere | 3.68 | 1.74 | 1.09 | 0.77 | 0.35 | 0.23 |
| Bruno Maigret | 0.31 | 0.15 | 0.09 | 0.06 | 0.03 | 0.02 |

Table 18: Average flooring error for French election.

| Average error | k = 2 | k = 3 | k = 4 | k = 5 | k = 8 | k = 10 |
|---|---|---|---|---|---|---|
| Francois Bayrou | 1.65 | 0.73 | 0.91 | 0.75 | 0.48 | 0.62 |
| Olivier Besancenot | 3.88 | 2.39 | 2.14 | 1.7 | 1.31 | 1.25 |
| Christine Boutin | 3.87 | 2.39 | 1.84 | 1.5 | 0.9 | 0.86 |
| Jacques Cheminade | 4.34 | 2.72 | 2.07 | 1.65 | 1.02 | 0.88 |
| Jean-Pierre Chevenement | 1.47 | 0.65 | 1.2 | 0.82 | 0.55 | 0.61 |
| Jacques Chirac | 1.64 | 1.0 | 1.13 | 0.88 | 0.55 | 0.64 |
| Robert Hue | 2.51 | 1.27 | 1.14 | 1.09 | 0.67 | 0.77 |
| Lionel Jospin | 0.33 | 0.49 | 0.87 | 0.67 | 0.51 | 0.63 |
| Arlette Laguiller | 2.62 | 1.34 | 1.34 | 1.02 | 0.6 | 0.63 |
| Brice Lalonde | 3.45 | 1.9 | 1.55 | 1.21 | 0.66 | 0.78 |
| Corine Lepage | 2.89 | 1.59 | 1.56 | 1.16 | 0.79 | 0.87 |
| Jean-Marie Le Pen | 4.92 | 3.26 | 2.55 | 2.06 | 1.39 | 1.2 |
| Alain Madelin | 3.18 | 1.8 | 1.52 | 1.17 | 0.72 | 0.7 |
| Noel Mamere | 2.02 | 1.55 | 1.77 | 1.44 | 1.29 | 1.41 |
| Bruno Maigret | 4.88 | 3.23 | 2.46 | 1.99 | 1.28 | 1.1 |

Table 19: Average rounding error for French election.

| Average error | k = 2 | k = 3 | k = 4 | k = 5 | k = 10 |
|---|---|---|---|---|---|
| Joke 5 | 0.57 | 0.53 | 0.52 | 0.51 | 0.5 |
| Joke 7 | 1.32 | 0.88 | 0.74 | 0.66 | 0.54 |
| Joke 8 | 1.51 | 0.97 | 0.8 | 0.71 | 0.56 |
| Joke 13 | 2.52 | 1.45 | 1.09 | 0.91 | 0.61 |
| Joke 15 | 2.48 | 1.43 | 1.08 | 0.91 | 0.62 |
| Joke 16 | 3.72 | 2.01 | 1.44 | 1.16 | 0.69 |
| Joke 17 | 1.94 | 1.18 | 0.92 | 0.8 | 0.58 |
| Joke 18 | 1.51 | 0.97 | 0.79 | 0.71 | 0.56 |
| Joke 19 | 0.8 | 0.64 | 0.58 | 0.56 | 0.51 |
| Joke 20 | 1.77 | 1.1 | 0.87 | 0.76 | 0.57 |

Table 20: Average flooring error for Jester dataset.

| Average error | k = 2 | k = 3 | k = 4 | k = 5 | k = 10 |
|---|---|---|---|---|---|
| Joke 5 | 0.48 | 0.47 | 0.48 | 0.47 | 0.48 |
| Joke 7 | 1.2 | 1.2 | 1.2 | 1.2 | 1.2 |
| Joke 8 | 1.44 | 1.43 | 1.42 | 1.43 | 1.42 |
| Joke 13 | 2.43 | 2.43 | 2.43 | 2.42 | 2.42 |
| Joke 15 | 2.34 | 2.34 | 2.33 | 2.33 | 2.33 |
| Joke 16 | 3.59 | 3.58 | 3.57 | 3.57 | 3.57 |
| Joke 17 | 1.84 | 1.82 | 1.82 | 1.81 | 1.81 |
| Joke 18 | 1.45 | 1.44 | 1.44 | 1.44 | 1.44 |
| Joke 19 | 0.72 | 0.72 | 0.71 | 0.71 | 0.71 |
| Joke 20 | 1.65 | 1.63 | 1.63 | 1.63 | 1.63 |

Table 21: Average rounding error for Jester dataset.

6 Conclusion

Settings in which humans must rate items or entities from a small discrete set of options are ubiquitous. We have singled out several important applications: rating attractiveness for dating websites, assigning grades to students, and reviewing academic papers. The number of available options can vary considerably, even within different instantiations of the same application. For instance, we saw that three popular sites for attractiveness rating use completely different systems: Hot or Not uses a 1–10 system, OkCupid uses a 1–5 "star" system, and Tinder uses a binary 1–2 "swipe" system.

Despite the problem's importance, we have not seen it studied theoretically previously. Our goal is to select k to minimize the average (normalized) error between the compressed average score and the ground truth average. We studied two natural models for generating the scores. The first is a uniform model where the scores are selected independently and uniformly at random, and the second is a Gaussian model where they are selected according to a more structured procedure that gives preference to the options near the center. We provided a closed-form solution for continuous distributions with arbitrary cdf. This allows us to characterize the relative performance of choices of k for a given distribution. We saw that, counterintuitively, using a smaller value of k can actually produce lower error for some distributions (even though we know that as k approaches n the error approaches 0): we presented specific distributions for which using k = 2 outperforms 3 and 10.

We performed numerous simulations comparing the performance between different values of k for different generative models and metrics. The main metric was the absolute number of times for which values of k produced the minimal error. We also considered the average error over all simulated items. Not surprisingly, we observed that performance generally improves monotonically with k, and more so for the Gaussian model than uniform.
However, we observe that small k values can be optimal a non-negligible amount of the time, which is perhaps counterintuitive. In fact, using k = 2 outperformed k = 3, 4, 5, and 10 on 5.6% of the trials in the uniform setting. Just comparing 2 vs. 3, k = 2 performed better around 37% of the time. Using k = 2 outperformed k = 10 on 8.3% of the trials, and when we restricted k = 10 to only assign values between 3 and 7 inclusive, k = 2 actually produced lower error 93% of the time! This could correspond to a setting where raters are ashamed to assign extreme scores (particularly extreme low scores). We compared two different natural compression rules, one based on the floor function and one based on rounding, and weighed the pros and cons of each. For smaller k rounding leads to significantly lower error than flooring, with k = 3 the clear optimal choice, while for larger k rounding leads to much larger error.

A future avenue is to extend our analysis to better understand specific distributions for which different k values are optimal, whereas our simulations are in aggregate over many distributions. Application domains will have distributions with different properties, and improved understanding will allow us to determine which k is optimal for the types of distributions we expect to encounter. This improved understanding can be coupled with further data exploration.

References

[1] Duane F. Alwin. Feeling thermometers versus 7-point scales: Which are better? Sociological Methods and Research, 25:318–340, February 1997.

[2] Jerrod Ankenman and Bill Chen. The Mathematics of Poker. ConJelCo LLC, Pittsburgh, PA, USA, 2006.

[3] Laura Blédaité and Francesco Ricci. Pairwise preferences elicitation and exploitation for conversational collaborative filtering. In Proceedings of the 26th ACM Conference on Hypertext & Social Media, HT '15, pages 231–236, New York, NY, USA, 2015. ACM.

[4] Carolyn C. Preston and Andrew Colman. Optimal number of response categories in rating scales: Reliability, validity, discriminating power, and respondent preferences. Acta Psychologica, 104:1–15, 04 2000.

[5] Dan Cosley, Shyong K. Lam, Istvan Albert, Joseph A. Konstan, and John Riedl. Is seeing believing?: How recommender system interfaces affect users' opinions. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, CHI '03, pages 585–592, New York, NY, USA, 2003. ACM.

[6] Eli P. Cox III. The optimal number of response alternatives for a scale: A review. Journal of Marketing Research, 17:407–442, 1980.

[7] Pradeep Dubey and John Geanakoplos. Grading exams: 100, 99, 98, . . . or A, B, C? Games and Economic Behavior, 69:72–94, May 2010.

[8] Baruch Fischhoff. Value elicitation: Is there anything in there? American Psychologist, 46:835–847, 1991. 10.1037/0003-066X.46.8.835.

[9] Hershey H. Friedman, Yonah Wilamowsky, and Linda W. Friedman. A comparison of balanced and unbalanced rating scales. The Mid-Atlantic Journal of Business, 19(2):1–7, 1981.

[10] Hannah Fry. The Mathematics of Love. TED Books, New York, NY, 2015.

[11] Ron Garland. The mid-point on a rating scale: is it desirable? Marketing Bulletin, 2:66–70, 1991. Research Note 3.

[12] Ken Goldberg, Theresa Roeder, Dhruv Gupta, and Chris Perkins. Eigentaste: A constant time collaborative filtering algorithm. Information Retrieval, 4(2):133–151, 2001. http://eigentaste.berkeley.edu.

[13] Saikishore Kalloori. Pairwise preferences and recommender systems. In Proceedings of the 22nd International Conference on Intelligent User Interfaces Companion, IUI '17 Companion, pages 169–172, New York, NY, USA, 2017. ACM.

[14] Daniel Kluver, Tien T. Nguyen, Michael Ekstrand, Shilad Sen, and John Riedl. How many bits per rating? In Proceedings of the Sixth ACM Conference on Recommender Systems, RecSys '12, pages 99–106, New York, NY, USA, 2012. ACM.

[15] Yehuda Koren and Joe Sill. Ordrec: An ordinal model for predicting personalized item rating distributions. In Proceedings of the Fifth ACM Conference on Recommender Systems, RecSys '11, pages 117–124, New York, NY, USA, 2011. ACM.

[16] James R. Lewis and Oğuzhan Erdinç. User experience rating scales with 7, 11, or 101 points: Does it matter? J. Usability Studies, 12(2):73–91, Feb 2017.

[17] Rensis A. Likert. A technique for measurement of attitudes. Archives of Psychology, 22:1–, 01 1932.

[18] Luis Lozano, Eduardo García-Cueto, and José Muñiz. Effect of the number of response categories on the reliability and validity of rating scales. Methodology, 4:73–79, 05 2008.

[19] Michael S. Matell and Jacob Jacoby. Is there an optimal number of alternatives for Likert scale items? Study 1: reliability and validity. Educational and Psychological Measurements, 31:657–674, 1971.

[20] Michael S. Matell and Jacob Jacoby. Is there an optimal number of alternatives for Likert scale items? Effects of testing time and scale properties. Journal of Applied Psychology, 56(6):506–509, 1972.

[21] Aubrey C. McKennell. Surveying attitude structures: A discussion of principles and procedures. Quality and Quantity, 7(2):203–294, Sep 1974.

[22] Bruno Pradel, Nicolas Usunier, and Patrick Gallinari. Ranking with non-random missing ratings: Influence of popularity and positivity on evaluation metrics. In Proceedings of the Sixth ACM Conference on Recommender Systems, RecSys '12, pages 147–154, New York, NY, USA, 2012. ACM.

[23] Donald R. Lehmann and James Hulbert. Are three-point scales always good enough? Journal of Marketing Research, 9:444, 11 1972.

[24] Sheena S. Iyengar and Mark Lepper. When choice is demotivating: Can one desire too much of a good thing? Journal of Personality and Social Psychology, 79:995–1006, 01 2001.

[25] Seymour Sudman, Norman M. Bradburn, and Norbert Schwartz. Thinking About Answers: The Application of Cognitive Processes to Survey Methodology. Jossey-Bass Publishers, San Francisco, California, 1996.

[26] Albert R. Wildt and Michael B. Mazis. Determinants of scale response: label versus position. Journal of Marketing Research, 15:261–267, 1978.
