Automated Generation of Test Models from Semi-Structured Requirements

[Context:] Model-based testing is an instrument for automated generation of test cases. It requires identifying requirements in documents, understanding them syntactically and semantically, and then translating them into a test model. One light-weigh…

Authors: Jannik Fischbach, Maximilian Junker, Andreas Vogelsang

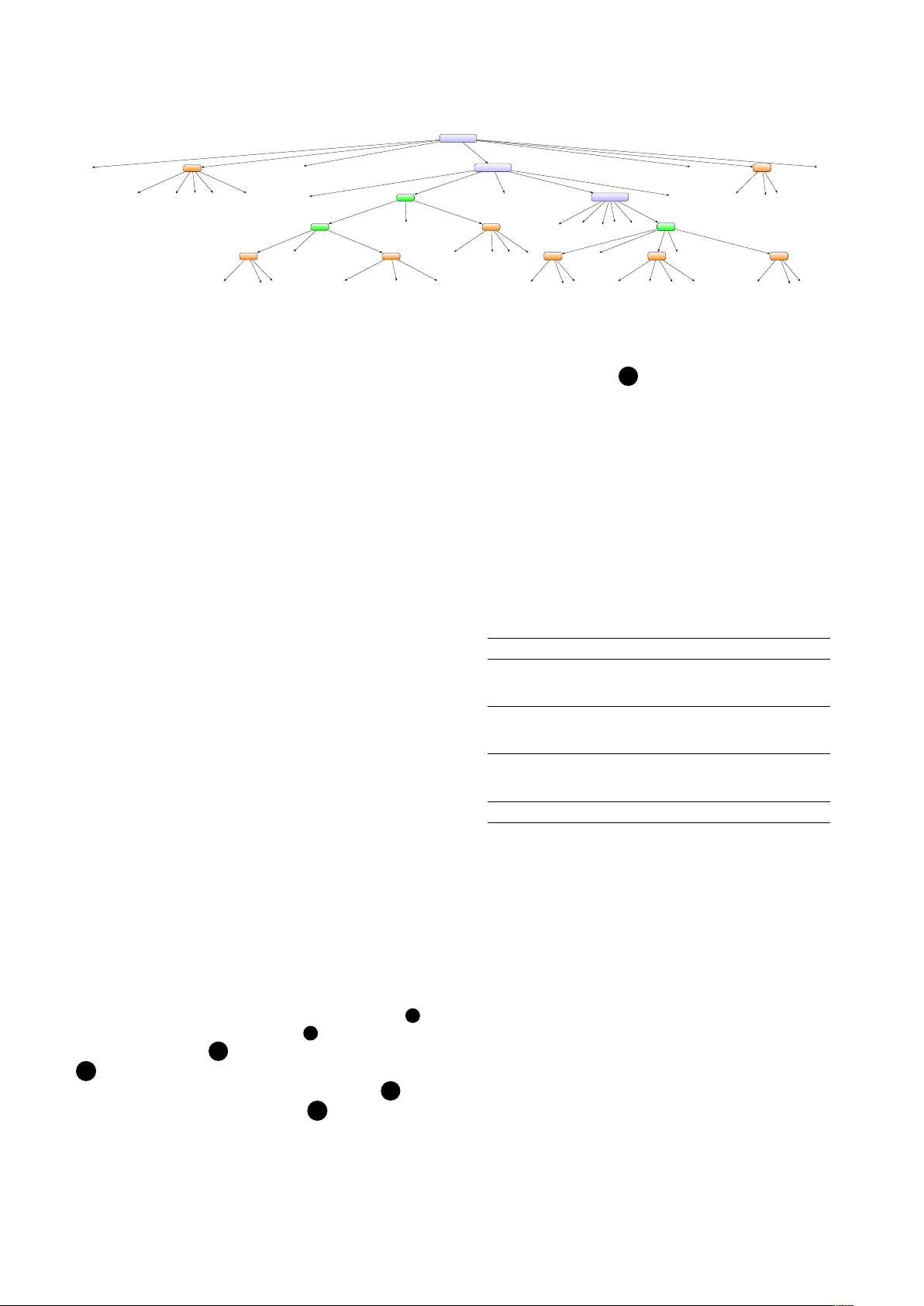

Jannik Fischbach, Maximilian Junker
Qualicen GmbH, Germany
{firstname.lastname}@qualicen.de

Andreas Vogelsang
TU Berlin, Germany
andreas.vogelsang@tu-berlin.de

Dietmar Freudenstein
Allianz Deutschland AG, Germany
dietmar.freudenstein@allianz.de

Abstract: [Context:] Model-based testing is an instrument for automated generation of test cases. It requires identifying requirements in documents, understanding them syntactically and semantically, and then translating them into a test model. One lightweight language for these test models is the Cause-Effect-Graph (CEG), which can be used to derive test cases. [Problem:] The creation of test models is laborious, and we lack an automated solution that covers the entire process from requirement detection to test model creation. In addition, the majority of requirements are expressed in natural language (NL), which is hard to translate to test models automatically. [Principal Idea:] We build on the fact that not all NL requirements are equally unstructured. We found that 14% of the lines in requirements documents of our industry partner contain "pseudo-code"-like descriptions of business rules. We apply Machine Learning to identify such semi-structured requirements descriptions and propose a rule-based approach for their translation into CEGs. [Contribution:] We make three contributions: (1) an algorithm for the automatic detection of semi-structured requirements descriptions in documents, (2) an algorithm for the automatic translation of the identified requirements into a CEG, and (3) a study demonstrating that our proposed solution leads to 86% time savings for test model creation without loss of quality.

Index Terms: machine learning, model-based testing, natural language requirements

I. INTRODUCTION

Testing is crucial in modern development projects and contributes significantly to the success of IT projects. It ensures both the quality of the developed system and its compliance with customer requirements. For complete test coverage, all specified requirements need to be covered by corresponding test cases. Driven by the increasing development speed in agile IT projects, test cases must be created instantaneously in order to receive immediate feedback on the current status of the system. This requires increasing automation in test case creation.

A substantial contribution towards a higher degree of automation is Model-Based Testing [1]. The desired system behavior is represented by test models and subsequently converted into test cases automatically. Test models not only serve as an instrument for the automated generation of test cases but also enable early detection of issues in requirement specifications. Furthermore, they allow teams to transparently discuss the desired system setup. In the past, the generation of test cases from test models has been widely automated [2], while practitioners today encounter a variety of problems when creating and maintaining these models [3].

The creation of test models requires a number of tasks, which are currently mostly performed manually. Test designers need to identify and extract relevant information from requirements specifications mostly written in natural language (NL) [4], [5], fully understand them, and finally transform them into a suitable test model. Particularly in large software projects, the extraction of these requirements proves to be challenging because the documents quickly become complex and contain a range of other information in addition to the actual requirements (parameter definitions, context information, examples, etc.) [6].
Furthermore, the complexity of the requirements increases simultaneously with the growing complexity of IT systems, so that a number of different inputs and outputs have to be considered when creating test models. This results in a time-consuming and error-prone test model creation process because the quality of the test model strongly depends on the capabilities of the test designer. The lack of automation of the process also leads to additional maintenance costs: any change in a requirement requires a revision of the test model.

The notation of requirements significantly influences the creation of a test model. Informal and unstructured descriptions are usually imprecise and ambiguous, which is a barrier to their automated transfer into test models. In some domains, such as embedded software, we see an increasing trend towards more structured and model-based specifications of requirements. In other domains, such as business information systems, natural language is still the dominant way to specify requirements. We build on the fact that not all NL requirements are equally unstructured. In fact, in many NL requirements specifications, we see descriptions that clearly exhibit a certain structure. Examples include user stories created along one of the proposed templates (As a …, I want …, so that …), requirements created along the lines of a constrained natural language (CNL) notation such as EARS [7], or textual descriptions of business rules. Sometimes, even logical constructs such as If, Then, Else are embodied in natural language constructs as a way of describing the expected system behavior more precisely [8]. While analyzing 11 requirements documents from our industry partner, we found that an average of 14% of the lines in the documents contained "pseudo-code"-like descriptions of business rules.
These requirement descriptions represent a good starting point for the automation of test model creation due to their structured nature. In the remainder of the paper, we will call these parts of requirements specifications semi-structured requirements: requirements expressed in a structured form of natural language text. Ideally, we can identify and interpret these requirements within the documents and then convert them into a test model. Drawing on the described problems and our observations gathered in practice, we derive two main research challenges (RC):

RC 1: We need to automatically detect and extract semi-structured requirements within requirement documents.
RC 2: We need to automatically transform semi-structured requirements into test models.

© 2019 IEEE. Personal use of this material is permitted. Permission from IEEE must be obtained for all other uses, in any current or future media, including reprinting/republishing this material for advertising or promotional purposes, creating new collective works, for resale or redistribution to servers or lists, or reuse of any copyrighted component of this work in other works.

In this paper, we address both research challenges and present an approach that supports practitioners in creating test models. Our approach consists of two steps: (1) automatically identify semi-structured requirements in requirements specifications and (2) automatically transform the identified semi-structured requirements into Cause-Effect-Graphs (CEG), which are well suited to derive test cases according to our preliminary study [9]. An automated transfer of requirements into a CEG would thus complement the test case creation process. Our work makes the following key contributions (C):

C 1: We present a Machine Learning based approach for the detection of semi-structured requirements.
Our proposed method is evaluated on a dataset of 11 requirement specifications provided by Allianz Deutschland.
C 2: We introduce a method that allows us to analyze the identified semi-structured requirements both syntactically and semantically and to convert them into CEGs.
C 3: We present a case study evaluating our algorithm in practice. It leads to an 86% time saving compared to manual creation without loss of quality in the model.

II. BACKGROUND AND RELATED WORK

In the following, we describe fundamentals and related work in the field of extracting test models from requirement specifications.

A. Notation of Requirements

Requirements can be specified in different styles, which can be divided into three classes: informal, semi-formal, and formal techniques [10], [11], [12]. System behaviour can be described in a narrative form by using unrestricted natural language (informal technique). Informal descriptions do not follow a special syntax or semantics. Semi-formal requirements are expressed in a predefined and fixed syntax that does not have formally defined semantics. Examples of semi-formal requirements descriptions include unrestricted UML models or controlled natural language [7]. Formal notations follow a precise syntax and semantics. An example of such a specification language is Z, which is based on mathematical notations.

We focus on descriptions that are somewhere between informal and semi-formal descriptions and call them semi-structured requirements. Semi-structured requirements are similar to controlled natural language. Using control structures such as When, If, Then, While and Where, requirements are fed into a specific structure to prevent common issues such as vagueness.
An example is the Unwanted Behaviour Pattern in EARS [7], which enforces the formulation "IF …, THEN the … shall …". Hence, EARS requirements follow a clear syntax and the values between the control structures consist of a limited vocabulary. Although control structures are used, the requirements we consider do not fit seamlessly into the three described notation categories because they lack both a rigid syntax and a limited vocabulary. We therefore consider them to be between informal and semi-formal requirements.

[Fig. 1: Exemplary requirements document containing semi-structured requirements (see orange dotted frame, which encloses the pseudo code in Section 1.3 of the document). This is not an original document of Allianz Deutschland. The document reads as follows.]

BR: Deactivate Legacy Objects
Author: John Doe
Created on: 27.08.15

1.1. Parameters

| Name | Description |
|---|---|
| VERTR# | Primary Key |

1.2. General Description of the Business Rule
This section outlines why the business rule should be implemented and how it relates to existing rules. The description is only informal and does not describe any technical processes.

1.3. Business Rule Workflow
The detailed procedure is described within this section in a technical manner. It is enriched with logical constructs to make it easier to read ("pseudo-code"-like requirements). In some cases the pseudo code is extended by comments or other language descriptions. The pseudo code can occur at different places in the document.

IF OBJ.TCONTRACT.NUM# not included in IN.LIST_CONTRACT# THEN
    IF .«DEBT» = [A] /*comment*/ AND .«BALANCE» SMALLER VAR_ALLOWANCE OR .«STATE» IS reversal [R] THEN
        IF = [Y] THEN
            OUT_KZ_PAYMENT = [N] AND Deactivate OBJ.TPROD to product / detail
        ELSE
            OUT_KZ_PAYMENT = [J]
        IF-END
    IF-END
ELSE
    OBJ.TCONTRACT = [Y]
IF-END

1.4. History of Changes

| Date | Changed by | # | Content and reason for the change |
|---|---|---|---|
| 27.08.15 | John Doe | 1 | First creation |
| 14.05.18 | John Doe | 2 | Extension of the business rule |

B. Cause-Effect-Graphing

Cause-Effect-Graphing originates from the idea of Elmendorf and Myers [13], [14]. It aims at illustrating the desired system behavior in the form of input and output combinations. The inputs represent the causes and the outputs the respective effects. A Cause-Effect-Graph can thus be interpreted as a combinatorial logic network, which describes the interaction of causes and effects by Boolean logic. Causes and effects are associated by four different relationships: conjunction ∧, disjunction ∨, negation ¬ and implication ⇒. Let $G = (E, C)$ be the CEG shown in Figure 2 with effect set $E$ and cause set $C$. In the example, $|C| = 5$ with $c_1, c_2, c_3, c_4$ and $c_5$, while $|E| = 4$ with $e_1, e_2, e_3$ and $e_4$. Examining the requirement specification in Fig. 1, it emerges that several If clauses are nested within each other. This is illustrated by inserting intermediate nodes for each If clause, i.e., each "business rule" (BR).

[Fig. 2: Exemplary Cause-Effect-Graph for Fig. 1. The five conditions of the pseudo code form the cause nodes c1 to c5, the four assignments and actions form the effect nodes e1 to e4, and the intermediate nodes BR 1, BR 2 and BR 3 (plus one AND node) represent the nested business rules.]

To derive test cases from the CEG, the graph is traversed back from the effects to the causes and test cases are created according to specific decision rules, which can be found in [13]. We integrated these rules into the open-source tool Specmate¹ and demonstrated that the tool creates a minimum number of test cases instead of the $2^n$ possible test cases ($n$ is the number of causes) [9]. Thus, CEG models are well suited for balancing sufficient test coverage against the lowest possible number of test cases. This is also indicated by Table I, which contains the test cases generated by Specmate for $G$.
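The Boolean reading of the graph can be cross-checked with a few lines of Python. The sketch below is an illustration, not part of Specmate: the assignment of conditions to $c_1$ through $c_5$ and of effects to $e_1$ through $e_4$, as well as the exact gating of the business rules, are assumptions inferred so that the outputs agree with the six test cases of Table I.

```python
from typing import Tuple


def evaluate_ceg(c1: int, c2: int, c3: int, c4: int, c5: int) -> Tuple[int, int, int, int]:
    """Evaluate one Boolean reading of the CEG in Fig. 2 for a single input vector.

    Assumed mapping (inferred from Table I, not stated in the text):
    c1 = outer IF condition, c2 AND c3 OR c4 = second IF, c5 = innermost IF,
    e1 = ELSE effect of BR 1, e2/e3 = THEN effects of BR 3, e4 = ELSE effect of BR 3.
    """
    c1, c2, c3, c4, c5 = (bool(v) for v in (c1, c2, c3, c4, c5))
    br1 = c1                             # BR 1: single cause (implication)
    br2 = br1 and ((c2 and c3) or c4)    # BR 2: gated by BR 1; AND binds before OR
    br3 = br2 and c5                     # BR 3: gated by BR 2
    e1 = not br1                         # Else block of BR 1 (negation)
    e2 = br3
    e3 = br3
    e4 = not br3                         # Else block of BR 3 (negation)
    return tuple(int(v) for v in (e1, e2, e3, e4))


# Input and expected output vectors copied from Table I.
TABLE_I = {
    "TC 1": ((1, 0, 0, 1, 0), (0, 0, 0, 1)),
    "TC 2": ((1, 0, 0, 1, 1), (0, 1, 1, 0)),
    "TC 3": ((0, 0, 0, 1, 1), (1, 0, 0, 1)),
    "TC 4": ((1, 1, 1, 0, 1), (0, 1, 1, 0)),
    "TC 5": ((1, 0, 1, 0, 1), (0, 0, 0, 1)),
    "TC 6": ((1, 1, 0, 0, 1), (0, 0, 0, 1)),
}

for name, (inputs, expected) in TABLE_I.items():
    assert evaluate_ceg(*inputs) == expected, name

print(f"{len(TABLE_I)} test cases instead of {2 ** 5} possible input vectors")
```

With five causes, exhaustive testing would need $2^5 = 32$ input vectors, whereas the decision rules yield six.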
TABLE I: Generated test cases from Fig. 2 (inputs c1 to c5, outputs e1 to e4)

| Test Case | c1 | c2 | c3 | c4 | c5 | e1 | e2 | e3 | e4 |
|---|---|---|---|---|---|---|---|---|---|
| TC 1 | 1 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 1 |
| TC 2 | 1 | 0 | 0 | 1 | 1 | 0 | 1 | 1 | 0 |
| TC 3 | 0 | 0 | 0 | 1 | 1 | 1 | 0 | 0 | 1 |
| TC 4 | 1 | 1 | 1 | 0 | 1 | 0 | 1 | 1 | 0 |
| TC 5 | 1 | 0 | 1 | 0 | 1 | 0 | 0 | 0 | 1 |
| TC 6 | 1 | 1 | 0 | 0 | 1 | 0 | 0 | 0 | 1 |

C. State-of-the-Art

To our knowledge, there is no work that covers the entire process from identifying requirements in documents to the actual translation into test models. A first promising approach for the recognition of pseudocode-like content is provided by Tuarob et al. [15]. Their method is aimed at the recognition of mathematical algorithms and is therefore not suitable for the detection of requirements. We follow the idea of using Machine Learning for the recognition of pseudo code and design new features that target the characteristics of semi-structured requirements.

The translation of functional requirements into test models is an active area of research. There are a number of papers dealing with the translation into State Diagrams [16], [17], [18], Use Case Diagrams [19] and other types of UML Diagrams. So far there is no approach to automatically translate requirements into CEGs. Though Mogyorodi outlines how to manually generate the CEG, there is no automation of the process [20]. We address this research gap and provide a suitable method.

¹ https://specmate.io/

III. APPROACH: OVERVIEW

Our approach aims at two goals:
1) The recognition of semi-structured requirements in requirement documents.
2) The translation of semi-structured requirements into CEGs.

For extracting specific content from documents, rule-based methods are usually applied. However, these are not suitable for achieving our first objective. Covering the different pseudo code spellings (other logical constructs, languages, etc.) would require a disproportionately large set of rules.
The maintenance of these rules is time-consuming and error-prone, and it makes the recognition inflexible. In practice, writing conventions are usually not respected. Throughout the analysis of the requirement documents we observed that parts of the pseudo code are often missing (e.g., the delimiters). Rule-based approaches tend to be too rigid in this respect and therefore lead to wrong results. Our observations coincide with the findings of Tuarob et al., who discovered a similar problem in mathematical pseudo code [15]. We therefore use Machine Learning to meet Goal 1. Instead of focusing on the exact spelling of the pseudo code, we differentiate its general syntax from that of natural language.

We address Goal 2 by identifying cause-effect-patterns within the identified requirements. First, we translate the requirement into a syntax tree in order to synthesize the effects and causes. Subsequently, we traverse the tree using a specific visitor pattern, analyze its semantics and translate it into a CEG.

IV. AUTOMATIC DETECTION OF SEMI-STRUCTURED REQUIREMENTS

We argue that a requirements specification represents a collection of coherent lines that contain either natural language or pseudo code (PC). The detection of PC can therefore be understood as a binary problem classifying lines as follows:

$C_0$: Line does not contain any pseudo code
$C_1$: Line contains pseudo code

In order to solve this classification problem, we implement an algorithm that assigns the lines to one of the two categories. It first splits the specification into individual lines, then classifies each line based on four different criteria, and lastly returns all lines classified as PC. Our detection algorithm therefore implements a function $f: \mathbb{R}^4 \to \{C_0, C_1\}$, where each vector represents a single line.
The criteria originate from an analysis of requirement specifications provided by Allianz Deutschland and represent the following properties of PC:

1) Number of special characters: Whereas natural language sentences only use dots, commas or colons to structure the content, pseudo code contains a variety of other special characters. These are usually applied for declaring variables or performing logical queries.
2) Number of words: Pseudo code lines are often significantly shorter than natural language lines. This can be seen in logical constructs like If and Then, which are placed on separate lines for better readability.
3) Degree of indentation: In contrast to natural language, pseudo code often does not start at the beginning of a line, but is rather positioned in the middle of a line due to indentations. To cover this property, we count the number of whitespaces between the beginning of the line and the first letter per line.
4) Capital letters to letters ratio: A further distinctive characteristic of pseudo code is the intensive use of capital letters to denote variables and logical constructs. To capture this property, we calculate the ratio between capital letters used and the total number of letters for each line.

A. Dataset

Since no scientific work has yet been carried out on the recognition of semi-structured requirements on the basis of ML, there is no data set available for this purpose. Hence, we have created our own data set representing 11 requirements specifications from our industry partner. For this purpose, we used a Python script that splits the original documents into single lines, calculates the corresponding feature values for each line, and generates a .csv file.
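The four criteria and the export to a .csv file can be sketched as follows. This is a minimal illustration and not the script used in the study: the set of characters treated as "special" (everything except letters, digits, whitespace, dots, commas and colons), the function names and the column names are assumptions.

```python
import csv
import io
from typing import List

# Assumption: dots, commas and colons count as ordinary natural language punctuation.
NATURAL_PUNCTUATION = set(".,:")
# Hypothetical column names for the generated .csv file.
HEADER = ["line_no", "special_chars", "words", "indentation", "capital_ratio"]


def extract_features(line: str) -> List[float]:
    """Map one document line to the four feature values described above."""
    special_chars = sum(
        1 for ch in line
        if not ch.isalnum() and not ch.isspace() and ch not in NATURAL_PUNCTUATION
    )
    words = len(line.split())
    # Whitespace characters between the beginning of the line and the first letter.
    indentation = 0
    for ch in line:
        if ch.isalpha():
            break
        if ch.isspace():
            indentation += 1
    letters = [ch for ch in line if ch.isalpha()]
    capital_ratio = sum(ch.isupper() for ch in letters) / len(letters) if letters else 0.0
    return [special_chars, words, indentation, capital_ratio]


def document_to_csv(document: str) -> str:
    """Split a document into lines and return the feature table as CSV text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(HEADER)
    for line_no, line in enumerate(document.splitlines(), start=1):
        writer.writerow([line_no] + extract_features(line))
    return buffer.getvalue()


natural_language = "This section outlines why the business rule should be implemented."
pseudo_code = "    IF OBJ.TCONTRACT.NUM# not included in IN.LIST_CONTRACT# THEN"
print(extract_features(natural_language))
print(extract_features(pseudo_code))
print(document_to_csv(natural_language + "\n" + pseudo_code))
```

On these two lines taken from Fig. 1, the pseudo code line has more special characters, a larger indentation and a much higher share of capital letters than the natural language line, which is the contrast the classifier is meant to exploit.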
Each line was assigned a number and manually labeled by the first author with either 0 or 1 to distinguish natural language lines from pseudo code lines, which enables Supervised Learning. To ensure the validity of the labeling, the second author randomly inspected the data. Our generated data set contains all 11 requirement documents and consists of 4450 lines, of which 613 are pseudo code.

B. Balancing the Training Data

The generated data set was imbalanced: only 13.8% of the lines are positive samples (i.e., lines with pseudo code). To avoid the class imbalance problem, we apply different balancing techniques and compare their impact on the performance of the classifiers. We applied the following methods:
1) Sampling-based Methods: Random Over Sampling (ROS), Random Under Sampling (RUS), Tomek Links (TL), Synthetic Minority Oversampling Technique (SMOTE).
2) Cost-sensitive Methods: Class Weighting (CL).

C. Classification Algorithms

We benchmarked 6 different algorithms, which are widely employed for binary classification in research and practice: Decision Tree, Logistic Regression, Support Vector Machines, Random Forest, Naive Bayes and K-Nearest Neighbor.

D. Evaluation Strategy and Metrics

We follow the idea of Cross Validation and divide the data set into two segments: a training and a validation set. While the training set is used for fitting the algorithm, the validation set is used for its evaluation based on real-world data. We opt for 10-fold Cross Validation since a number of studies have indicated that a model trained this way demonstrates low bias and variance [21]. Selecting a metric requires considering which misclassification (False Negative (FN) or False Positive (FP)) matters most, i.e., causes the highest costs. Every pseudo code line has to be identified in order to create the CEG entirely. The costs of FN are therefore significantly higher than those of FP, because a missed pseudo code line means that parts of the CEG are missing and the generated test cases are incorrect. As a result, in the tradeoff between Precision and Recall we clearly opt for the latter.

TABLE II: Evaluation of the classifiers. Class Weighting (CL) is not supported by K-Nearest Neighbor and Naive Bayes.

| Classifier | Balancing | Recall | Precision |
|---|---|---|---|
| Random Forest | ROS | 0.834 | 0.710 |
| Random Forest | RUS | 0.910 | 0.628 |
| Random Forest | SMOTE | 0.876 | 0.597 |
| Random Forest | TL | 0.834 | 0.621 |
| Random Forest | CL | 0.824 | 0.608 |
| Logistic Regression | ROS | 0.674 | 0.592 |
| Logistic Regression | RUS | 0.636 | 0.572 |
| Logistic Regression | SMOTE | 0.688 | 0.583 |
| Logistic Regression | TL | 0.152 | 0.392 |
| Logistic Regression | CL | 0.671 | 0.579 |
| Support Vector Machines | ROS | 0.621 | 0.539 |
| Support Vector Machines | RUS | 0.613 | 0.567 |
| Support Vector Machines | SMOTE | 0.607 | 0.563 |
| Support Vector Machines | TL | 0.149 | 0.291 |
| Support Vector Machines | CL | 0.622 | 0.559 |
| K-Nearest Neighbor | ROS | 0.829 | 0.631 |
| K-Nearest Neighbor | RUS | 0.880 | 0.532 |
| K-Nearest Neighbor | SMOTE | 0.841 | 0.642 |
| K-Nearest Neighbor | TL | 0.758 | 0.687 |
| K-Nearest Neighbor | CL | - | - |
| Decision Tree | ROS | 0.812 | 0.681 |
| Decision Tree | RUS | 0.837 | 0.617 |
| Decision Tree | SMOTE | 0.794 | 0.657 |
| Decision Tree | TL | 0.784 | 0.672 |
| Decision Tree | CL | 0.795 | 0.674 |
| Naive Bayes | ROS | 0.684 | 0.577 |
| Naive Bayes | RUS | 0.726 | 0.554 |
| Naive Bayes | SMOTE | 0.703 | 0.593 |
| Naive Bayes | TL | 0.257 | 0.548 |
| Naive Bayes | CL | - | - |

E. Results

Table II presents the performance of the individual classifiers. Analyzing the metrics reveals that Random Forest and K-Nearest Neighbor are particularly suitable for our use case. They demonstrate the highest Recall values and are therefore capable of recognizing about 90% of the pseudo code lines. Here, however, the choice of the respective balancing method is of crucial importance, as can be observed in the example of K-Nearest Neighbor. Applying Tomek Links leads to a Recall value of about 76%, whereas the use of Random Under Sampling results in a Recall value of 88%. Similarly, Random Forest achieves very different values depending on the balancing method. Considering the respective Precision values, it is striking that the algorithms produce a high number of FP.
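To make the balancing step and the two metrics concrete, the following sketch implements Random Under Sampling and the computation of Recall and Precision in plain Python. It is an illustration under assumptions, not the evaluation code of the study: the function names and the fixed seed are hypothetical, and in a cross-validation setup the sampling would typically be applied to the training folds only.

```python
import random
from typing import List, Sequence, Tuple


def random_under_sample(
    samples: Sequence, labels: Sequence[int], seed: int = 0
) -> Tuple[List, List[int]]:
    """Randomly drop majority-class samples until both classes have the same size."""
    rng = random.Random(seed)
    positives = [i for i, label in enumerate(labels) if label == 1]
    negatives = [i for i, label in enumerate(labels) if label == 0]
    minority, majority = sorted((positives, negatives), key=len)
    kept = minority + rng.sample(majority, len(minority))
    rng.shuffle(kept)
    return [samples[i] for i in kept], [labels[i] for i in kept]


def recall_precision(y_true: Sequence[int], y_pred: Sequence[int]) -> Tuple[float, float]:
    """Recall = TP / (TP + FN), Precision = TP / (TP + FP) for the pseudo code class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    recall = tp / (tp + fn) if tp + fn else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    return recall, precision


# Class distribution of the data set: 613 pseudo code lines out of 4450 lines.
labels = [1] * 613 + [0] * (4450 - 613)
line_ids = list(range(len(labels)))
balanced_ids, balanced_labels = random_under_sample(line_ids, labels, seed=42)
print(len(balanced_labels), sum(balanced_labels))

# Toy prediction: one missed pseudo code line (FN) and one false alarm (FP).
print(recall_precision([1, 1, 0, 0, 1], [1, 0, 1, 0, 1]))
```

A missed pseudo code line lowers Recall, while an excess line lowers Precision, which is why the tradeoff discussed above favors Recall.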
The K-Nearest Neighbor algorithm, for instance, recognizes 88% of the pseudo code lines when the training set has been balanced using Random Under Sampling, but does so at the expense of Precision. It classifies too many lines as pseudo code, of which only 53% are actually pseudo code. Ultimately, this leads to an unclean output containing almost all the pseudo code, but also many other lines that are not needed to create the CEG models. These excess lines have to be removed manually before the CEG can be created automatically. As Random Forest exhibits a greater Precision value and consequently a better explanatory power, we select it as a suitable algorithm. The remaining algorithms perform worse than Random Forest with regard to the main decision criterion and are therefore not suitable for capturing pseudo code comprehensively, so we exclude them from further consideration.

V. TRANSLATION OF SEMI-STRUCTURED REQUIREMENTS INTO TEST MODELS

After detecting the pseudo code, the next step is to convert it into a corresponding CEG. This requires the determination of cause-effect-patterns within the pseudo code (syntactic analysis) and the subsequent comprehension of the relationships between the identified causes and effects (semantic analysis). In the following, we outline how we implemented these two analysis steps.

A. Syntactic Analysis

When synthesizing the cause-effect-patterns, it must be noted that the pseudo code does not follow a rigid grammar. The formulation of the causes and effects conforms to no particular form of notation such as <variable name> <operator> …. Our approach is to identify the position of the respective causes and effects based on the logical constructs. We apply a loosely defined grammar², which we implemented using ANTLR, and create an Abstract Syntax Tree (AST) for each pseudo code section (see Fig. 3).
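The idea of locating causes and effects purely by the logical constructs can be illustrated with a small hand-written recursive-descent parser. This is a stand-in for illustration only and not the ANTLR grammar mentioned above: the token set, the dictionary layout of the tree, and the simplified variable names DEBT, BALANCE, STATE and FLAG in the example are assumptions.

```python
import re
from typing import Any, Dict, List, Tuple

KEYWORDS = {"IF", "THEN", "ELSE", "AND", "OR", "IF-END", "END-IF"}


def tokenize(pseudo_code: str) -> List[str]:
    """Drop /*...*/ comments; merge the words between keywords into one phrase token."""
    text = re.sub(r"/\*.*?\*/", " ", pseudo_code, flags=re.DOTALL)
    tokens: List[str] = []
    phrase: List[str] = []
    for word in text.split():
        if word in KEYWORDS:
            if phrase:
                tokens.append(" ".join(phrase))
                phrase = []
            tokens.append("END" if word in ("IF-END", "END-IF") else word)
        else:
            phrase.append(word)
    if phrase:
        tokens.append(" ".join(phrase))
    return tokens


def _peek(tokens: List[str], pos: int) -> str:
    if pos >= len(tokens):
        raise ValueError("unexpected end of pseudo code")
    return tokens[pos]


def parse_rule(tokens: List[str], pos: int = 0) -> Tuple[Dict[str, Any], int]:
    """Parse IF <causes> THEN <block> [ELSE <block>] END into a businessRule node."""
    if _peek(tokens, pos) != "IF":
        raise ValueError("business rule must start with IF")
    pos += 1
    causes: List[str] = []          # cause phrases and AND/OR connectors, in order
    while _peek(tokens, pos) != "THEN":
        causes.append(tokens[pos])
        pos += 1
    then_block, pos = parse_block(tokens, pos + 1)
    else_block: List[Dict[str, Any]] = []
    if _peek(tokens, pos) == "ELSE":
        else_block, pos = parse_block(tokens, pos + 1)
    if _peek(tokens, pos) != "END":
        raise ValueError("business rule must be closed by IF-END or END-IF")
    node = {"type": "businessRule", "causes": causes, "then": then_block, "else": else_block}
    return node, pos + 1


def parse_block(tokens: List[str], pos: int) -> Tuple[List[Dict[str, Any]], int]:
    """Parse effects and nested business rules until ELSE or END is reached."""
    items: List[Dict[str, Any]] = []
    while _peek(tokens, pos) not in ("ELSE", "END"):
        token = tokens[pos]
        if token == "IF":
            node, pos = parse_rule(tokens, pos)
            items.append(node)
        elif token == "AND":
            pos += 1                # effects are joined by AND
        elif token == "OR":
            raise ValueError("OR between effects is not handled in this sketch")
        else:
            items.append({"type": "effect", "text": token})
            pos += 1
    return items, pos


EXAMPLE = """
IF OBJ.TCONTRACT.NUM# not included in IN.LIST_CONTRACT# THEN
    IF DEBT = [A] /*comment*/ AND BALANCE SMALLER VAR_ALLOWANCE OR STATE IS reversal [R] THEN
        IF FLAG = [Y] THEN
            OUT_KZ_PAYMENT = [N] AND Deactivate OBJ.TPROD to product / detail
        ELSE
            OUT_KZ_PAYMENT = [J]
        IF-END
    IF-END
ELSE
    OBJ.TCONTRACT = [Y]
IF-END
"""

ast, next_pos = parse_rule(tokenize(EXAMPLE))
print(ast["causes"], [item["type"] for item in ast["then"]])
```

Because the parser only relies on the keywords, the wording of the individual causes and effects is free, which mirrors the loosely defined grammar described above. Misspelled keywords make parsing fail with a `ValueError`.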
The AST provides an overview of both the inputs to be processed by the system (causes) and the expected system behavior (effects).

B. Semantic Analysis - Terminology

To reflect the relation between causes and effects in the CEG, we semantically analyze the AST. We traverse the tree from bottom to top (i.e., post-order), create corresponding CEG nodes and link them according to predefined decision rules. For a precise description of these rules we introduce the following terminology.

1) Parse-Tree-View: Within the AST, we distinguish between two different types of nodes.

Assignment Nodes: Assignment-nodes are labeled with "cause" or "effect" (see green nodes in Fig. 3). They do not contain information about the cause or effect itself but serve as a link between them. Assignment-nodes have three children, of which the middle child indicates the type of connection. If this child contains the value "AND", it specifies a conjunction and the node can be considered an Assignment-node_con. If the value equals "OR", the connection is a disjunction, meaning that the node represents an Assignment-node_dis.

Content Nodes: Like Assignment-nodes, Content-nodes are also labeled as "cause" or "effect" (see orange nodes in Fig. 3). In contrast to the Assignment-nodes, their children contain information about the content of causes or effects. The children of Content-nodes are leaves in the parse tree. We differentiate between Content-node_cause and Content-node_effect.

Business Rule: We define each If, Then, Else clause as a Business-rule. Nested clauses are therefore indicated by nodes with the label "businessRule" (see blue nodes in Fig. 3).

2) CEG-Model-View: The CEG model consists of the following elements.

CEG Nodes: CEG-nodes represent Content-nodes and Business-rules. We use the following notation to refer to the CEG-nodes: CEG-node_type with type ∈ {effect, cause, BR}.
CEG Connection: All connection types presented in Section II are modeled by CEG-connections. We use the following syntax: CEG-connection_type with type ∈ {implic, neg, dis, con}.

C. Semantic Analysis - Procedure

The transformation of the AST into a CEG comprises the following three steps. We welcome fellow researchers to inspect, reuse and adapt our source code on GitHub².

C.1 Translate Content-nodes and Business-rules to CEG-nodes

In the first step, we create a CEG-node for each identified Content-node and Business-rule. In the example of Fig. 3, one CEG-node_cause each is created for the cause of the first and of the third business rule, and three CEG-nodes_cause are created for the second business rule. Since the pseudo code contains four different effects, another four separate CEG-nodes_effect must be created. Additionally, three CEG-nodes_BR are created.

C.2 Relate Causes to Business Rules

For each business rule, the respective causes have to be linked to each other. Four cases can be distinguished here:
1) The business rule covers only one cause: Create a CEG-connection_implic and link the cause to the corresponding business rule.
2) The causes are connected only by Assignment-nodes_con: Create a CEG-connection_con and associate all causes directly with the corresponding business rule.
3) The causes are connected only by Assignment-nodes_dis: Create a CEG-connection_dis and associate all causes directly with the corresponding business rule.
4) The causes are connected by a combination of Assignment-nodes_con and Assignment-nodes_dis: To detect related and isolated causes, iterate over the parse tree and seek the longest sequence of Assignment-nodes_con that is not interrupted by Assignment-nodes_dis.
Insert an intermediate node for the Assignment-nodes_con on the lowest depth and connect related causes to this node by a CEG-connection_con to ensure the minimum number of intermediate nodes. Afterward, connect the intermediate node and isolated causes to the business rule using CEG-connection_dis.

² The algorithm as well as the applied grammar can be found at https://github.com/fischJan/Automated_CEG_Creation.git

[Fig. 3: Abstract Syntax Tree representing the requirement specification in Figure 1, with three nested businessRule nodes. Assignment-nodes are marked in green, Content-nodes in orange and Business-rules in blue.]

C.3 Relate Business Rules to Effects

Each effect needs to be associated with its respective business rule. This process is more complex than the previous step, as some exceptions have to be covered due to the nesting of the business rules and their Else blocks. In the example of Fig. 3, three business rules are nested within each other. For the effects of a business rule to occur, the causes of the superior business rule must be fulfilled. A business rule is therefore always a condition for all effects of its subordinate business rules. Here, both the first and the second business rule are required so that the effects of the third business rule can arise. In general, a CEG-node_BR must be linked to every effect that is part of a business rule with a higher depth. The degree of depth thus reflects the degree of nesting.
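Step C.2 (relating causes to business rules) can be sketched as follows. The sketch is a simplification and not the published implementation: it works on a flat sequence of causes and AND/OR connectors instead of the parse tree, assumes that AND binds more strongly than OR, and uses hypothetical names for the intermediate nodes.

```python
from typing import List, Tuple

Edge = Tuple[str, str, str]  # (source node, target node, connection type)


def relate_causes(sequence: List[str], rule: str) -> List[Edge]:
    """Link the causes of one business rule according to the four cases of step C.2.

    `sequence` alternates cause texts and connectors, e.g. ["c2", "AND", "c3", "OR", "c4"].
    """
    causes = sequence[0::2]
    connectors = sequence[1::2]
    if any(connector not in ("AND", "OR") for connector in connectors):
        raise ValueError("connectors must be AND or OR")
    if len(causes) == 1:                                  # case 1: single cause
        return [(causes[0], rule, "implic")]
    if all(connector == "AND" for connector in connectors):   # case 2: only conjunctions
        return [(cause, rule, "con") for cause in causes]
    if all(connector == "OR" for connector in connectors):    # case 3: only disjunctions
        return [(cause, rule, "dis") for cause in causes]
    # Case 4: split at every OR, so each group is a maximal run of AND-connected causes.
    groups: List[List[str]] = [[causes[0]]]
    for connector, cause in zip(connectors, causes[1:]):
        if connector == "AND":
            groups[-1].append(cause)
        else:
            groups.append([cause])
    edges: List[Edge] = []
    intermediate_count = 0
    for group in groups:
        if len(group) == 1:                               # isolated cause
            edges.append((group[0], rule, "dis"))
        else:                                             # related causes share one node
            intermediate_count += 1
            intermediate = f"{rule}_AND_{intermediate_count}"
            edges.extend((cause, intermediate, "con") for cause in group)
            edges.append((intermediate, rule, "dis"))
    return edges


for edge in relate_causes(["c2", "AND", "c3", "OR", "c4"], "BR2"):
    print(edge)
```

For the second business rule of the running example, the two AND-connected causes are grouped under one intermediate node, which is then joined with the remaining cause by a disjunction.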
The algorithm therefore distinguishes between two categories of effects for each business rule: its individual effects and the effects of subordinate BRs. It links a business rule to the effects of subordinate BRs by CEG-connections_implic. When connecting a business rule to its individual effects, two cases have to be considered:
1) Effects that are not located in the Else block are linked to the business rule by CEG-connections_implic.
2) Effects that are located in the Else block are linked to the business rule by CEG-connections_neg.

VI. CASE STUDY

Our study aims to examine whether our approach can speed up the process of CEG creation in practice. We want to answer the following research question: How much time can be saved by using the algorithm compared to a manual CEG creation?

A. Study Setup

We conducted the case study with 3 test designers who are experienced in the creation of CEGs. We use three additional documents provided by our industry partner and complete the procedure of CEG creation with each participant. We ask each study participant to translate the pseudo code sections of each document into a CEG, first manually (stage 1) and then by applying the algorithm (stage 2). The manual creation proceeds as follows: (1a) search for the pseudo code section, (1b) translate the pseudo code into a CEG. The application of the algorithm includes the following sub-steps: (2a) execute the detection algorithm (automated), (2b) compare the output with the pseudo code in the document, including additions/deletions (manual), and (2c) execute the translation algorithm (automated). The difference between the two required time efforts illustrates the impact of the algorithm on the CEG generation process. Here, it is important that the three documents differ in their complexity. The first document (201 lines) contains only a single short pseudo code section (5 lines).
The second document (327 lines) includes 2 pseudo code sections, which are significantly longer (11 and 17 lines). The third document (437 lines) shows the greatest complexity, as it consists of two pseudo code sections that are nested multiple times (19 and 23 lines). This difference in complexity permits a particularly detailed insight into usability and reveals potential weaknesses in handling complex documents.

TABLE III: CEG creation times in the case study (in min.)

| Participant | Document # | Manual | Tool-Supported | Time Saving |
|---|---|---|---|---|
| Participant 1 | 1 | 02:04 | 00:33 | 73.4% |
| Participant 1 | 2 | 11:31 | 01:07 | 90.3% |
| Participant 1 | 3 | 16:21 | 02:44 | 83.3% |
| Participant 2 | 1 | 01:54 | 00:36 | 68.4% |
| Participant 2 | 2 | 10:05 | 01:20 | 86.8% |
| Participant 2 | 3 | 13:40 | 01:18 | 90.5% |
| Participant 3 | 1 | 01:21 | 00:35 | 56.8% |
| Participant 3 | 2 | 05:36 | 00:48 | 85.7% |
| Participant 3 | 3 | 08:42 | 00:57 | 89.1% |
| Mean | | 07:55 | 01:06 | 86.1% |

B. Study Results

The use of our semi-automated approach results in an average time saving of 86% (see Table III). Although the process has not yet been fully automated, it already leads to a significant improvement in the day-to-day business of test designers. The manual effort required to compare the identified pseudo code lines with the actual existing ones was within reasonable time limits. All participants consider the significant time saving the main reason to apply the algorithm in practice. A comparison of the manually created CEGs with the CEGs created by our approach reveals that their content is the same. Hence, the quality of the created test models does not suffer from the use of the algorithms, and the approach is consequently suitable as a replacement for manual creation. The algorithm is particularly suitable for handling complex requirement documents. Multiple nested pseudo code sections contain a number of different causes and effects as well as logical constructs. As a result, they are difficult to read and therefore opaque, which may lead to mistakes during manual creation.
According to all study participants, this represents the largest field of application for the algorithm, as it helps not only to recognize the pseudo code but also to interpret it. This was particularly evident in the translation of the third test document. Initially, all participants had to understand the structure of the pseudo code before they could translate it. During this process some causes and effects were overlooked, which required several adjustments with negative effects on the creation time. We hypothesize that this became considerably easier by using the algorithm and that our approach leads to fewer errors when processing requirements documents.

VII. LIMITATIONS AND THREATS TO VALIDITY

The detection of semi-structured requirements defined in requirements documents could not be fully automated. The majority of the pseudo code lines were detected during the evaluation, though approx. 10% had to be inserted manually afterwards. Due to the low Precision value, additional adjustments are required to remove the lines that are not relevant for CEG creation. Regarding the translation into the CEG, our approach depends on a consistent syntax of the pseudo code. In case of spelling and grammar errors, the translation fails. Future research should employ compensation techniques such as calculating the Levenshtein distance to make the translation more robust. A threat to internal validity is social pressure in the execution of group experiments, especially when recording times. To prevent the test designers from competing with each other in the creation of the CEG, we inspected the three test documents individually with the study participants. Furthermore, none of the study participants was informed of the time spent by the others. The translation of semi-structured requirements into a CEG is subject to potential maturation effects.
However, we argue that this effect can be neglected since the actual creation of the CEG (step 2c) was performed automatically and very little of the second phase of the study was performed manually (step 2b). With regard to external validity, some parts of our approach need to be adapted for use across other domains. This requires extending the detection algorithm with additional features and making small modifications to the ANTLR grammar to handle further notation styles.

VIII. CONCLUSIONS AND OUTLOOK

Test models have proven successful for describing the desired system behavior and deriving suitable test cases. They therefore constitute an integral part of modern development projects, but their creation and maintenance are very time-consuming, error-prone, and usually performed manually. We present an approach that generates Cause-Effect-Graphs from semi-structured requirements. Specifically, we extract relevant requirements from requirements documents using Machine Learning and analyze them both syntactically and semantically to create the corresponding CEG. Our study with 3 experts on 3 exemplary cases shows that the process could not yet be fully automated but that the application of our algorithm results in 86% time savings. In future work, we see potential to transfer our approach to user stories and their acceptance criteria. Specifically, we want to translate acceptance criteria expressed in the Gherkin format into CEGs and thus tailor the test model generation even more to the agile context.
