A Sharp Threshold Phenomenon for the Distributed Complexity of the Lovász Local Lemma

The Lovász Local Lemma (LLL) says that, given a set of bad events that depend on the values of some random variables and where each event happens with probability at most $p$ and depends on at most $d$ other events, there is an assignment of the variables that avoids all bad events if the LLL criterion $ep(d+1)<1$ is satisfied. In this paper, we study the dependency of the distributed complexity of the LLL problem on the chosen LLL criterion. We show that for the fundamental case of each random variable of the considered LLL instance being associated with an edge of the input graph, that is, each random variable influences at most two events, a sharp threshold phenomenon occurs at $p = 2^{-d}$: we provide a simple deterministic (!) algorithm that matches a known $\Omega(\log^* n)$ lower bound in bounded degree graphs, if $p < 2^{-d}$, whereas for $p \geq 2^{-d}$, a known $\Omega(\log \log n)$ randomized and a known $\Omega(\log n)$ deterministic lower bounds hold. In many applications variables affect more than two events; our main contribution is to extend our algorithm to the case where random variables influence at most three different bad events. We show that, surprisingly, the sharp threshold occurs at the exact same spot, providing evidence for our conjecture that this phenomenon always occurs at $p = 2^{-d}$, independent of the number $r$ of events that are affected by a variable. Almost all steps of the proof framework we provide for the case $r=3$ extend directly to the case of arbitrary $r$; consequently, our approach serves as a step towards characterizing the complexity of the LLL under different exponential criteria.

💡 Research Summary

The paper investigates how the distributed complexity of the Lovász Local Lemma (LLL) depends on the strength of the LLL criterion, focusing on the symmetric setting where each bad event occurs with probability at most p and depends on at most d other events. The authors discover a sharp threshold at p = 2⁻ᵈ.

Main contributions

- Deterministic algorithm for r = 2 (variables affect at most two events). Interpreting each variable as an edge of the dependency graph, the algorithm processes the edges one by one, fixing each associated random variable to a value that increases the conditional probability of each incident bad event by at most a factor of 2. Since each event has at most d incident edges, its conditional probability after all edges are fixed is at most p·2ᵈ < 1. Once every variable is fixed, each event has probability either 0 or 1, so the event does not occur. The process is deterministic and local; using a proper edge‑coloring it can be parallelized, yielding a distributed runtime of O(d + log* n) rounds.

-

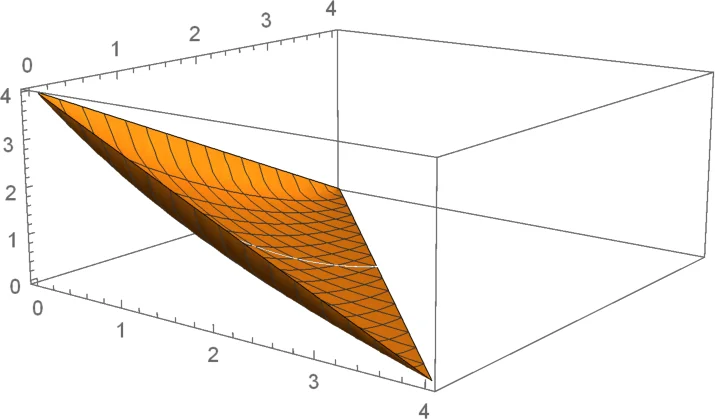

Extension to r = 3 (variables affect up to three events). The naïve extension would require a criterion p < 3⁻ᵈ, because fixing a variable could multiply probabilities by up to 3. The authors develop a more sophisticated “book‑keeping” technique that tracks how much each event’s probability has grown. When fixing a variable, they choose a value that keeps the increase of any single event bounded by a factor of 2, while allowing larger growth on neighboring events that are already unlikely. The existence of such a choice is reduced to the convexity of a certain function; for r = 3 the function can be expressed in closed form and shown to be convex. Consequently, the same threshold p < 2⁻ᵈ suffices. By first computing a 2‑hop coloring (requiring O(d²) colors) the algorithm can be parallelized, achieving a deterministic distributed runtime of O(d² + log* n) rounds.

- Sharp threshold phenomenon. For both r = 2 and r = 3, if p ≥ 2⁻ᵈ the known lower bounds apply: any randomized algorithm needs Ω(log log n) rounds and any deterministic algorithm needs Ω(log n) rounds. Thus the threshold p = 2⁻ᵈ exactly separates a regime where the problem can be solved in O(log* n) rounds on bounded‑degree graphs from a regime where Ω(log log n) randomized and Ω(log n) deterministic rounds are unavoidable.

- Discussion of general r. The authors argue that most parts of their framework extend to arbitrary r, but the key analytic step, showing convexity of the probability‑growth function, remains open for r > 3. They conjecture that the same threshold p = 2⁻ᵈ holds universally, independent of r.

- Applications. The results immediately improve deterministic algorithms for classic distributed problems that are reducible to LLL, such as sinkless orientation, edge‑coloring, and certain weak‑splitting problems. Notably, the deterministic algorithm works under the much weaker condition p < 2⁻ᵈ instead of the far stricter p < 1/n required by previous deterministic approaches for low‑degree graphs.
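The sequential version of the r = 2 fixing procedure can be illustrated with a minimal Python sketch. Everything concrete here is an assumption made for illustration and is not taken from the paper: the helper names `cond_prob` and `greedy_fix`, uniformly distributed variables, the brute‑force computation of conditional probabilities, the toy instance (3‑coloring the edges of K4 so that no node sees three edges of one color, which gives p = 1/9 < 2⁻³ with d = 3), and the selection rule itself, which picks the value minimizing the summed growth factors of the two affected events. That rule is one way to obtain the factor‑2 guarantee and may differ from the authors' exact rule.

```python
from fractions import Fraction
from itertools import product


def cond_prob(event, fixed, domains):
    """Exact Pr[event | fixed], enumerating the event's unfixed (uniform) variables."""
    scope, is_bad = event
    free = [v for v in scope if v not in fixed]
    total = bad = 0
    for values in product(*(domains[v] for v in free)):
        assignment = {v: fixed[v] for v in scope if v in fixed}
        assignment.update(zip(free, values))
        total += 1
        bad += is_bad(assignment)
    return Fraction(bad, total)


def greedy_fix(variables, domains, events):
    """Fix variables one by one; each variable may touch at most two events (r = 2)."""
    fixed = {}
    for var in variables:
        touched = [e for e in events if var in e[0]]
        assert len(touched) <= 2, "sketch only covers r = 2"
        before = [cond_prob(e, fixed, domains) for e in touched]

        def growth(x):
            # Sum of growth factors Pr[E | fixed, var=x] / Pr[E | fixed].
            # Events that are already impossible (probability 0) stay impossible.
            trial = {**fixed, var: x}
            return sum(cond_prob(e, trial, domains) / b
                       for e, b in zip(touched, before) if b > 0)

        # Averaging over x gives an expected sum of at most 2, so the minimum
        # is at most 2 and hence every single growth factor is at most 2.
        fixed[var] = min(domains[var], key=growth)
    return fixed


# Hypothetical toy instance: edges of K4 get one of 3 colors uniformly at random;
# the bad event at a node is "all 3 incident edges have the same color".
nodes = range(4)
edges = [(u, v) for u in nodes for v in nodes if u < v]
domains = {e: (0, 1, 2) for e in edges}


def monochromatic(assignment):
    return len(set(assignment.values())) == 1


events = [(tuple(e for e in edges if v in e), monochromatic) for v in nodes]
solution = greedy_fix(edges, domains, events)
```

The sketch never revisits a variable, and exact `Fraction` arithmetic avoids rounding issues when comparing growth factors. It is sequential; the distributed algorithm in the summary instead fixes the edges of one color class of a proper edge‑coloring at a time.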

Technical highlights

- The algorithm’s core is a greedy, one‑pass fixing of variables that never revisits a variable.

- For r = 2, the guarantee that each edge contributes at most a factor‑2 increase follows from a simple counting argument.

- For r = 3, the authors introduce a potential function that captures the “danger” of each event; they prove that a suitable assignment exists by solving a small convex optimization problem per variable.

- Parallelization relies on standard edge‑coloring (for r = 2) and 2‑hop coloring (for r = 3), both computable in O(log* n) rounds on bounded‑degree graphs in the LOCAL model.
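The factor‑2 guarantee for r = 2 can be seen from a short averaging argument. The following is a sketch under assumed notation (not taken from the paper): X is the variable being fixed, A and B are the two events it affects, and all probabilities are conditioned on the variables fixed so far, with Pr[A], Pr[B] > 0. By the law of total probability, each growth factor averages to 1 over the values of X, so

$$
\sum_{x} \Pr[X = x]\left(\frac{\Pr[A \mid X = x]}{\Pr[A]} + \frac{\Pr[B \mid X = x]}{\Pr[B]}\right) = 1 + 1 = 2 .
$$

Hence some value $x$ satisfies

$$
\frac{\Pr[A \mid X = x]}{\Pr[A]} + \frac{\Pr[B \mid X = x]}{\Pr[B]} \le 2 ,
$$

and since both terms are nonnegative, each is at most 2. Applying this to the at most $d$ variables of an event bounds its final conditional probability by $p \cdot 2^{d} < 1$ whenever $p < 2^{-d}$; as a fully determined event has probability 0 or 1, it cannot occur.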

Implications

The discovery of a clean threshold that is identical for r = 2 and r = 3 reshapes our understanding of the distributed LLL landscape. It shows that, for r ≤ 3, once the probability of bad events is below 2⁻ᵈ, randomness is unnecessary: a deterministic algorithm achieves the optimal O(log* n) complexity on bounded‑degree graphs. Conversely, for p ≥ 2⁻ᵈ the known lower bounds of Ω(log log n) rounds for randomized and Ω(log n) rounds for deterministic algorithms apply. This dichotomy mirrors phase‑transition phenomena in statistical physics and suggests a universal principle governing the tractability of local constraint satisfaction problems in distributed settings.

Future work includes extending the convexity analysis to arbitrary r, reducing the polynomial dependence on d for higher‑r cases, and exploring whether similar thresholds exist for asymmetric LLL criteria or for other distributed models (e.g., CONGEST). The paper thus opens a promising line of research toward a complete classification of distributed LLL complexity.
