Investigating kernel shapes and skip connections for deep learning-based harmonic-percussive separation

In this paper we propose an efficient deep learning encoder-decoder network for performing Harmonic-Percussive Source Separation (HPSS). It is shown that we are able to greatly reduce the number of model trainable parameters by using a dense arrangement of skip connections between the model layers. We also explore the utilisation of different kernel sizes for the 2D filters of the convolutional layers with the objective of allowing the network to learn the different time-frequency patterns associated with percussive and harmonic sources more efficiently. The training and evaluation of the separation has been done using the training and test sets of the MUSDB18 dataset. Results show that the proposed deep network achieves automatic learning of high-level features and maintains HPSS performance at a state-of-the-art level while reducing the number of parameters and training time.

💡 Research Summary

**

This paper presents a novel deep‑learning architecture for Harmonic‑Percussive Source Separation (HPSS) that combines two key ideas: dense skip connections inspired by DenseNet (as used in the Multi‑scale DenseNet, MDenseNet) and a multi‑branch design employing convolutional kernels of three distinct shapes. The authors argue that HPSS exhibits characteristic patterns in the time‑frequency domain—percussive components appear as vertical structures (short in time, broad in frequency) while harmonic components appear as horizontal structures (long in time, narrow in frequency). Traditional HPSS methods based on median filtering exploit these patterns with handcrafted filters, but they are limited by rigid assumptions. Recent deep‑learning approaches, especially encoder‑decoder networks such as U‑Net, have shown superior performance because they can learn features directly from data. However, U‑Net’s exponential growth of channel numbers with depth leads to a large number of parameters, which hampers efficiency and scalability.

To address this, the authors adopt the MDenseNet architecture, which replaces the conventional widening of channels with dense blocks where each layer receives the concatenated outputs of all preceding layers. This dense connectivity preserves information flow, mitigates vanishing gradients, and dramatically reduces the total parameter count while maintaining expressive power. Building on MDenseNet, the paper introduces the “Three‑Way MDenseNet” (3W‑MDenseNet). Instead of a single encoder‑decoder path, three parallel MDenseNet branches are employed. One branch uses the standard 3 × 3 square kernels; the second uses tall, narrow kernels of size 13 × 1 to capture vertical (percussive) patterns; the third uses wide, short kernels of size 1 × 13 to capture horizontal (harmonic) patterns. After independent processing, the three branches are concatenated and fed into a final dense block. Two 1 × 1 convolutional layers with sigmoid activation then produce soft masks for the percussive and harmonic sources, which are applied to the input magnitude spectrogram and combined with the original phase via inverse STFT to reconstruct time‑domain signals.

The experimental setup uses the MUSDB18 dataset, the standard benchmark for music source separation. Audio is converted to mono, transformed with a 1024‑point STFT (50 % overlap) yielding magnitude spectrograms of size 512 × 128 (≈1.5 s). Log1p normalization is applied globally across the training set. Supervised training uses the drums stem as the ground‑truth percussive source and the mixture minus drums as the harmonic source. The loss is a weighted sum of mean‑squared errors between the estimated and true masks (λ = 0.5 for each source). Training employs the Adam optimizer with learning‑rate reduction on plateau and early stopping after 15 epochs without validation improvement. The model contains roughly 555 k trainable parameters, far fewer than the 8.7 M parameters of a conventional U‑Net and comparable to other state‑of‑the‑art models that often exceed several million parameters.

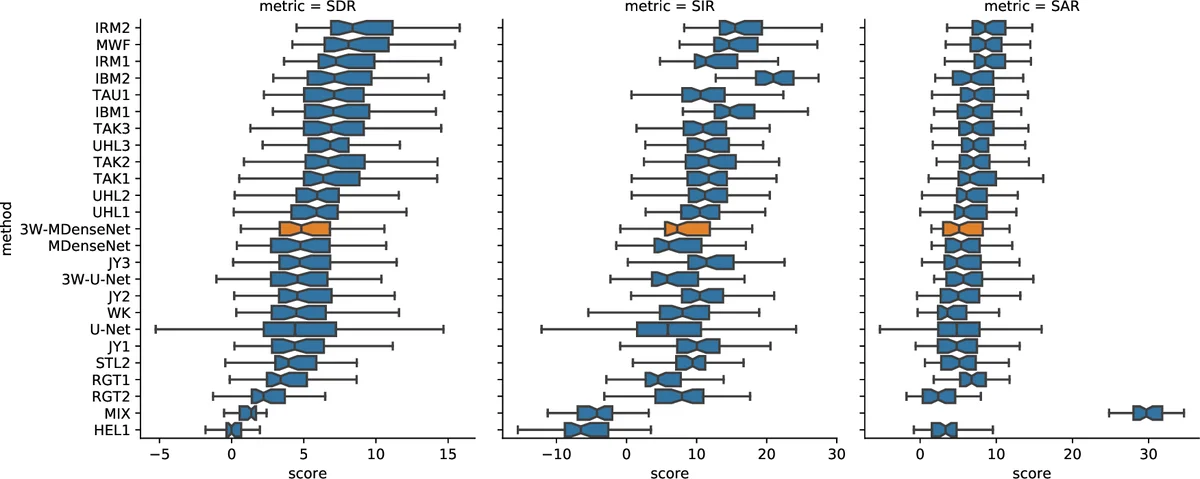

Four models are compared: (1) a baseline U‑Net with 3 × 3 kernels and exponential channel growth; (2) a “3W‑U‑Net” that shares the three‑branch, multi‑kernel design but lacks dense blocks; (3) the original MDenseNet with only 3 × 3 kernels; and (4) the proposed 3W‑MDenseNet. All models are constrained to a similar parameter budget (≈550–610 k). Performance is measured with the museval toolkit, reporting Signal‑to‑Distortion Ratio (SDR), Signal‑to‑Interference Ratio (SIR), and Signal‑to‑Artifact Ratio (SAR) for both sources across the 50‑song test set.

Results (Table 1) show that 3W‑MDenseNet achieves the highest scores on every metric: for the percussive source, SDR = 3.70 dB, SIR = 5.84 dB, SAR = 5.35 dB; for the harmonic source, SDR = 9.71 dB, SIR = 10.48 dB, SAR = 13.32 dB. These figures surpass the baseline U‑Net (percussive SDR = 3.16 dB, harmonic SDR = 12.15 dB) and the MDenseNet (percussive SDR = 3.48 dB, harmonic SDR = 12.46 dB). The improvements are attributed to two complementary effects: dense skip connections enable efficient feature reuse and stable gradient propagation, while the specialized kernel shapes directly target the anisotropic structures of percussive and harmonic components, reducing the burden on the network to discover such patterns from generic square filters.

The authors conclude that tailoring network architecture to the intrinsic time‑frequency geometry of HPSS yields both parameter efficiency and performance gains. The 3W‑MDenseNet’s modest size (≈0.55 M parameters) makes it suitable for real‑time or embedded applications where computational resources are limited. Moreover, the design principles—dense connectivity and multi‑shape convolutions—are readily extensible to other audio separation tasks (e.g., vocal‑instrument separation, multi‑instrument extraction) and potentially to other domains where directional patterns dominate (e.g., speech enhancement, biomedical signal analysis). Future work may explore adaptive kernel selection, deeper multi‑scale dense hierarchies, or integration with phase‑aware loss functions to further close the gap toward ideal source separation.

Comments & Academic Discussion

Loading comments...

Leave a Comment