A comprehensive study of speech separation: spectrogram vs waveform separation

Speech separation has been studied widely for single-channel close-talk microphone recordings over the past few years, and most of the solutions developed so far operate in the frequency domain. Recently, a raw-audio waveform separation network (TasNet) was introduced for single-channel data. It achieves high Si-SNR (scale-invariant source-to-noise ratio) and SDR (source-to-distortion ratio) compared with the state-of-the-art frequency-domain solution. In this study, we incorporate effective components of TasNet into a frequency-domain separation method and compare the two approaches under alternative scenarios. We introduce a solution for directly optimizing the separation criterion in frequency-domain networks. In addition to objective and subjective speech separation measurements, we also evaluate separation performance on a speech recognition task. We study the speech separation problem for far-field data, which more closely resembles naturalistic audio streams. For this setting we develop multi-channel solutions for both frequency- and time-domain separators that use spectral, spatial and speaker location information. For our experiments, we simulated a multi-channel, spatialized, reverberant version of the WSJ0-2mix dataset. Our experimental results show that spectrogram separation can achieve competitive performance with better network design. The multi-channel framework is also shown to improve on single-channel performance by up to 35.5% and 46% relative in terms of WER and SDR, respectively.

💡 Research Summary

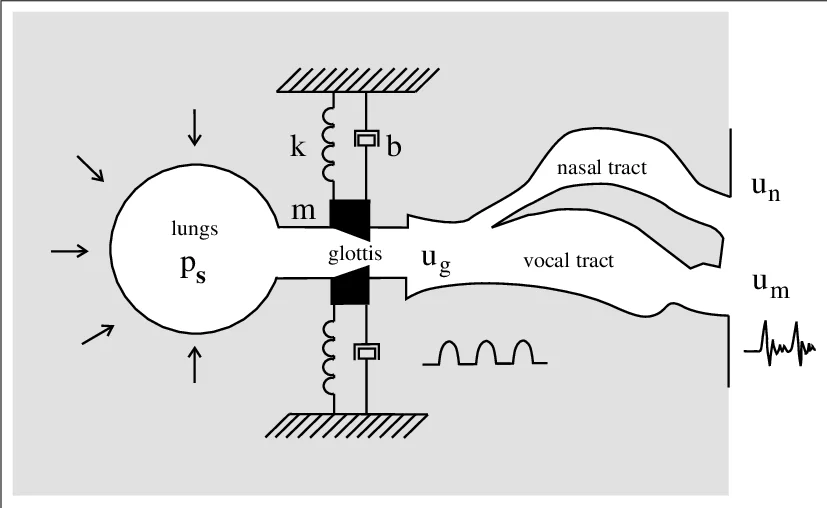

This paper presents a comprehensive comparative study of two dominant paradigms for single‑channel speech separation, frequency‑domain (spectrogram) masking and time‑domain (waveform) processing, and also extends both approaches to multi‑channel scenarios. The authors first revisit the classic spectrogram pipeline. It relies on a fixed short‑time Fourier transform (STFT) and inverse STFT (ISTFT) to decompose the mixture into magnitude and phase, and a separation network (typically BLSTM‑based) then estimates a mask for each source. In contrast, TasNet operates entirely in the time domain: a learnable 1‑D convolutional encoder maps raw samples to a latent representation, a deep convolutional separator predicts source masks, and a transposed‑convolution decoder reconstructs the waveforms. TasNet’s key advantage is the use of a scale‑invariant signal‑to‑noise ratio (Si‑SNR) loss that directly optimizes the evaluation metric.
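The following is a minimal NumPy sketch of Si‑SNR, using the definition commonly given in the TasNet papers. Both signals are made zero‑mean. The estimate is then projected onto the reference to obtain the scaled target, and the residual is treated as noise. The function name `si_snr` and the `eps` guard are our own illustrative choices. A network trained on this criterion would minimize its negative.

```python
import numpy as np


def si_snr(estimate, reference, eps=1e-8):
    """Scale-invariant SNR (dB) between two 1-D signals (illustrative sketch)."""
    # Remove the DC offset so the measure ignores constant shifts.
    estimate = estimate - estimate.mean()
    reference = reference - reference.mean()
    # Scaled target: orthogonal projection of the estimate onto the reference.
    s_target = (estimate @ reference) / (reference @ reference + eps) * reference
    # Everything not explained by the reference counts as noise.
    e_noise = estimate - s_target
    return 10 * np.log10((s_target @ s_target + eps) / (e_noise @ e_noise + eps))


def si_snr_loss(estimate, reference):
    """Training objective: maximize Si-SNR by minimizing its negative."""
    return -si_snr(estimate, reference)
```

The projection rescales the target to match the estimate. As a result, multiplying the estimate by any nonzero constant leaves the score unchanged, which is why the metric is called scale‑invariant.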

The authors integrate two critical components of TasNet into the spectrogram framework. The first is the deep convolutional network architecture, which replaces the conventional BLSTM. The second is the Si‑SNR loss function, implemented by expressing STFT and ISTFT as fixed Conv‑1D and ConvTranspose‑1D layers with a Hann window kernel. This enables end‑to‑end back‑propagation of the Si‑SNR objective through the frequency‑domain pipeline. The experiments use a simulated multi‑channel, reverberant version of the WSJ0‑2mix dataset (six‑mic circular array, T₆₀ of 0.05–0.5 s, random speaker and array positions). They show that the Si‑SNR‑trained spectrogram model achieves performance comparable to TasNet, and that replacing the BLSTM with the CNN separator greatly reduces the parameter count.
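The idea of writing STFT/ISTFT as fixed convolutions can be sketched in NumPy so that the kernels are visible as plain matrices. This sketch makes several assumptions that are not taken from the paper: `n_fft=256`, `hop=64`, a periodic Hann window, no padding, and the hypothetical function names below. The forward transform is a stride‑`hop` convolution with one windowed cosine and one windowed sine kernel per frequency bin. The inverse is a transposed convolution (overlap‑add) with matching synthesis kernels, followed by division by the squared‑window envelope.

```python
import numpy as np


def hann_dft_kernels(n_fft):
    """Periodic Hann window and DFT phase grid for the one-sided (rfft) bins."""
    n = np.arange(n_fft)
    k = np.arange(n_fft // 2 + 1)[:, None]              # one-sided bins
    window = 0.5 - 0.5 * np.cos(2 * np.pi * n / n_fft)  # periodic Hann
    angle = 2 * np.pi * k * n / n_fft                   # (bins, n_fft)
    return window, angle


def conv_stft(x, n_fft=256, hop=64):
    """STFT as a stride-`hop` 1-D convolution with fixed kernels (no padding)."""
    window, angle = hann_dft_kernels(n_fft)
    kernel_re = window * np.cos(angle)                  # real-part kernels
    kernel_im = -window * np.sin(angle)                 # imaginary-part kernels
    n_frames = 1 + (len(x) - n_fft) // hop
    # Gather strided frames; the matrix product is exactly the convolution.
    idx = hop * np.arange(n_frames)[:, None] + np.arange(n_fft)[None, :]
    frames = x[idx]                                     # (frames, n_fft)
    return frames @ kernel_re.T, frames @ kernel_im.T   # (frames, bins) each


def conv_transpose_istft(real, imag, n_fft=256, hop=64):
    """ISTFT as a transposed convolution (overlap-add) with fixed kernels."""
    window, angle = hann_dft_kernels(n_fft)
    # Inverse real-DFT weights: DC and Nyquist bins count once, others twice.
    weight = np.full((n_fft // 2 + 1, 1), 2.0)
    weight[0] = weight[-1] = 1.0
    synth_re = weight * np.cos(angle) * window / n_fft
    synth_im = -weight * np.sin(angle) * window / n_fft
    # Each output frame equals window**2 times the corresponding input frame.
    frames = real @ synth_re + imag @ synth_im          # (frames, n_fft)
    n_frames = frames.shape[0]
    length = n_fft + hop * (n_frames - 1)
    out = np.zeros(length)
    envelope = np.zeros(length)
    for t in range(n_frames):
        out[t * hop : t * hop + n_fft] += frames[t]
        envelope[t * hop : t * hop + n_fft] += window ** 2
    # Undo the squared-window gain; samples with zero envelope stay at 0.
    return out / np.maximum(envelope, 1e-8)
```

A real‑valued mask multiplied onto both `real` and `imag` between the two calls is equivalent to masking the magnitude and reusing the mixture phase. Because every step is linear, a loss such as Si‑SNR computed on the output could be back‑propagated to the mask. In a deep‑learning framework the same kernels would serve as frozen weights of, for example, `torch.nn.Conv1d` and `torch.nn.ConvTranspose1d`. With `hop = n_fft // 4`, the squared periodic‑Hann envelope is the constant 1.5 away from the edges, so the division could be folded into the synthesis kernels.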

To exploit spatial information, the paper introduces inter‑microphone phase difference (IPD) features and speaker‑direction angle features. IPD is computed as the cosine and sine of the phase difference for six microphone pairs and concatenated with the magnitude of the reference channel. Angle features are derived from steering vectors and encode the presumed direction of arrival of each target speaker. In the spectrogram case, these features are appended to the magnitude input. In the waveform case, they are computed after a Conv‑1D STFT step and concatenated with the encoder output. Both single‑channel and multi‑channel configurations are evaluated with Si‑SNR, SDR, PESQ, and word error rate (WER). WER comes from a Kaldi ASR system trained on clean speech (WER on the unprocessed mixture ≈ 89.9%).
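The spatial features can be sketched in NumPy under the following assumptions, none of which are specified above: a 6‑mic circular array of radius 5 cm, a speed of sound of 343 m/s, a hypothetical list of six microphone pairs, and the steering‑vector convention `exp(+j 2π f p·u / c)`. The IPD part follows the description in the text: the reference magnitude stacked with the cosine and sine of each pair's phase difference. For the angle feature we use one formulation that is common in location‑guided separation work: the cosine of the difference between the steering‑vector phase difference and the observed IPD, summed over pairs. This may differ from the paper's exact definition.

```python
import numpy as np

SPEED_OF_SOUND = 343.0  # m/s (assumed)


def circular_array(n_mics=6, radius=0.05):
    """2-D mic coordinates (metres) of a uniform circular array (hypothetical)."""
    phi = 2 * np.pi * np.arange(n_mics) / n_mics
    return radius * np.stack([np.cos(phi), np.sin(phi)], axis=1)  # (mics, 2)


def ipd_features(stft, pairs, ref=0):
    """Reference magnitude stacked with cos/sin of each pair's IPD.

    stft: complex array of shape (mics, freq, frames).
    Returns features of shape (1 + 2 * len(pairs), freq, frames) and raw IPDs.
    """
    phase = np.angle(stft)
    ipd = np.stack([phase[i] - phase[j] for i, j in pairs])  # (pairs, freq, frames)
    magnitude = np.abs(stft[ref])[None]                     # (1, freq, frames)
    return np.concatenate([magnitude, np.cos(ipd), np.sin(ipd)], axis=0), ipd


def angle_feature(ipd, pairs, mic_pos, doa, freqs):
    """Sum over pairs of cos(steering-vector phase difference - observed IPD)."""
    direction = np.array([np.cos(doa), np.sin(doa)])
    # Far-field plane wave: path-length difference per pair, in seconds.
    delay = np.array([(mic_pos[i] - mic_pos[j]) @ direction for i, j in pairs])
    delay = delay / SPEED_OF_SOUND
    theta = 2 * np.pi * freqs[None, :] * delay[:, None]     # (pairs, freq)
    return np.cos(theta[:, :, None] - ipd).sum(axis=0)      # (freq, frames)
```

When the assumed direction matches the true one, every pair contributes 1 at every time‑frequency bin. The feature therefore peaks at the number of pairs, and it drops for bins dominated by a speaker from another direction.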

Results demonstrate the following:

(i) Si‑SNR loss consistently outperforms MSE loss for spectrogram models (average Si‑SNR gain ≈ 0.9 dB, SDR gain ≈ 0.7 dB).
(ii) The CNN separator, while slightly behind the BLSTM on single‑channel data, offers a dramatic reduction in model size (≈ 8M vs. 67M parameters).
(iii) Incorporating IPD yields an additional 3–5 dB Si‑SNR improvement over the baseline.
(iv) Adding angle features boosts performance further, achieving the best scores among all tested systems.

Multi‑channel models reduce WER by up to 35.5% relative and improve SDR by up to 46% relative compared to single‑channel baselines. Target‑speaker extraction with a TGT‑SiSNR loss, which requires only the reference of the desired speaker, shows even larger WER reductions for the target while still suppressing interferers.
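The relative figures can be read with the single‑channel system as the reference. This assumes the percentages are taken directly on the reported metric values. The subscripts 1ch and mc are our own notation for single‑ and multi‑channel systems.

```latex
\Delta_{\mathrm{WER}} = \frac{\mathrm{WER}_{\mathrm{1ch}} - \mathrm{WER}_{\mathrm{mc}}}{\mathrm{WER}_{\mathrm{1ch}}} \times 100\%,
\qquad
\Delta_{\mathrm{SDR}} = \frac{\mathrm{SDR}_{\mathrm{mc}} - \mathrm{SDR}_{\mathrm{1ch}}}{\mathrm{SDR}_{\mathrm{1ch}}} \times 100\%
```

Under this reading, a 35.5% relative WER reduction means the multi‑channel WER is 0.645 times the single‑channel WER.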

The paper’s contributions are threefold: (1) a successful transfer of TasNet’s architectural and loss‑function innovations to the frequency domain, showing that spectrogram‑based systems can perform competitively with waveform‑based ones; (2) a unified multi‑channel framework that leverages IPD and directional cues for both spectrogram and waveform separators; (3) extensive empirical validation on a realistic reverberant, multi‑mic dataset, including objective separation metrics and downstream ASR impact. The findings suggest that future speech separation systems for real‑world applications (e.g., smart assistants, conference devices) should combine efficient convolutional separators, Si‑SNR‑based training, and spatial features to achieve both high separation quality and downstream recognition benefits. Future work may address real‑time implementation, adaptive handling of varying numbers of speakers, and integration with end‑to‑end speech recognition pipelines.
