VS-Net: Variable splitting network for accelerated parallel MRI reconstruction

In this work, we propose a deep learning approach for parallel magnetic resonance imaging (MRI) reconstruction, termed a variable splitting network (VS-Net), for an efficient, high-quality reconstruction of undersampled multi-coil MR data. We formula…

Authors: Jinming Duan, Jo Schlemper, Chen Qin

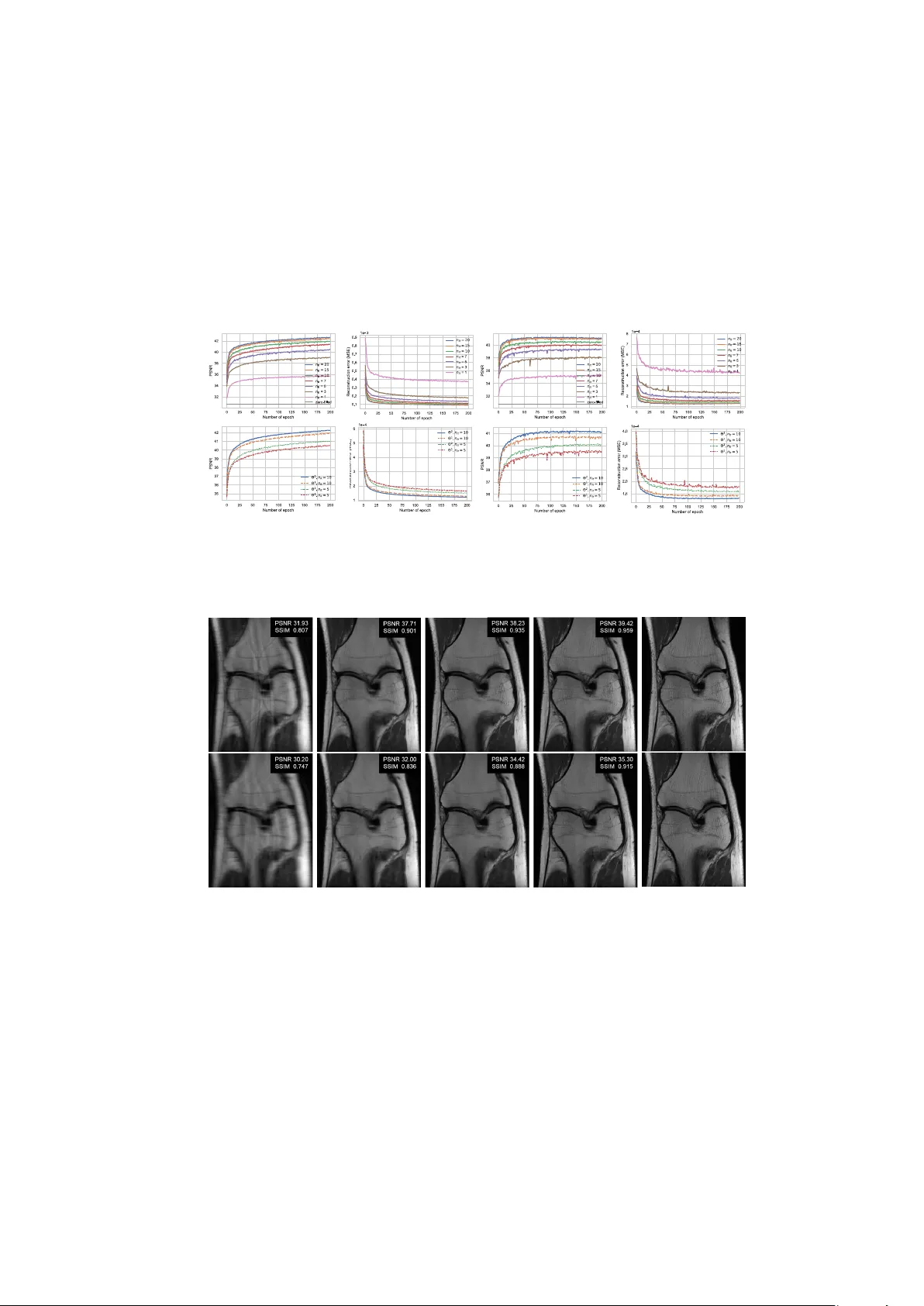

VS-Net: V ariable splitting net w ork for accelerated parallel MRI reconstruction Jinming Duan † 1 , 2 ( ) , Jo Sc hlemp er † 2 , Chen Qin 2 , Cheng Ouy ang 2 , W enjia Bai 2 , Carlo Biffi 2 , Ghalib Bello 3 , Ben Statton 3 , Declan P O’Regan 3 , Daniel Ruec kert 2 1 Sc ho ol of Computer Science, Universit y of Birmingham, Birmingham, UK j.duan@cs.bham.ac.uk 2 Biomedical Image Analysis Group, Imp erial College London, London, UK 3 MR C London Institute of Medical Sciences, Imp erial College London, London, UK Abstract. In this w ork, we prop ose a deep learning approac h for paral- lel magnetic resonance imaging (MRI) reconstruction, termed a v ariable splitting net work (VS-Net), for an efficien t, high-quality reconstruction of undersampled multi-coil MR data. W e formulate the generalized parallel compressed sensing reconstruction as an energy minimization problem, for which a v ariable splitting optimization metho d is derived. Based on this formulation we prop ose a no vel, end-to-end trainable deep neural net work architecture b y unrolling the resulting iterative process of such v ariable splitting scheme. VS-Net is ev aluated on complex v alued m ulti- coil knee images for 4-fold and 6-fold acceleration factors. W e show that VS-Net outperforms state-of-the-art deep learning reconstruction algo- rithms, in terms of reconstruction accuracy and p erceptual qualit y . Our co de is publicly a v ailable at https://gith ub.com/j-duan/VS-Net. 1 In tro duction Magnetic resonance imaging (MRI) is an imp ortan t diagnostic and research tool in many clinical scenarios. How ever, its inheren tly slo w data acquisition pro cess is often problematic. T o accelerate the scanning pro cess, parallel MRI (p-MRI) and compressed sensing MRI (CS-MRI) are often emplo yed. These metho ds are designed to facilitate fast reconstruction of high-quality , artifact-free images from minimal k -space data. Recently , deep learning approaches for MRI reconstruc- tion [ 1 , 2 , 3 , 4 , 5 , 6 , 7 , 8 , 9 , 10 ] hav e demonstrated great promises for further accelera- tion of MRI acquisition. How ev er, not all of these techniques [ 1 , 2 , 5 , 7 , 8 ] are able to exploit parallel image acquisitions whic h are common in clinical practice. In this pap er, w e in vestigate accelerated p-MRI reconstruction using deep learning. W e prop ose a nov el, end-to-end trainable approach for this task which w e refer to as a v ariable splitting netw ork (VS-Net). VS-Net builds on a gen- eral parallel CS-MRI concept, whic h is formulated as a m ulti-v ariable energy minimization pro cess in a deep learning framework. It has three computational † con tributed equally . 2 J Duan, et al. blo c ks: a denoiser blo c k, a data consistency blo c k and a weigh ted av erage blo c k. The first one is a denoising conv olutional neural netw ork (CNN), while the latter t wo hav e p oin t-wise closed-form solutions. As such, VS-Net is computationally efficien t yet simple to implement. VS-Net accepts complex-v alued multi-c hannel MRI data and learns all parameters automatically during offline training. In a series of exp erimen ts, we monitor reconstruction accuracies obtained from v ary- ing the num b er of stages in VS-Net. W e studied differen t parameterizations for w eight parameters in VS-Net and analyzed their numerical b eha viour. W e also ev aluated VS-Net performance on a multi-coil knee image dataset for different acceleration factors using the Cartesian undersampling patterns, and show ed impro ved image quality and preserv ation of tissue textures. T o this end, we p oin t out the differences b et ween our metho d and related w orks in [ 1 , 2 , 3 , 4 , 8 ], as w ell as highlight our no vel con tributions to this area. First, data consistency (DC) la yer introduced in [ 1 ] w as designed for single- coil images. MoDL [ 4 ] extended the cascade idea of [ 1 ] to a m ulti-coil setting. Ho wev er, its DC la yer implemen tation was through iteratively solving a linear system using the conjugate gradien t in their net work, whic h can b e very com- plicated. In contrast, DC lay er in VS-Net naturally applies to multi-coil images, and is also a p oin t-wise, analytical solution, making VS-Net b oth computation- ally efficien t and numerically accurate. V ariational netw ork (VN) [ 3 ] and [ 8 ] w ere applicable to multi-coil images. Ho wev er, they were based gradien t-descent op- timization and pro ximal metho ds resp ectiv ely , whic h do es not imp ose the exact DC. Compared ADMM-net [ 2 ] to VS-Net, the former w as also only applied to single-coil images. Moreov er, ADMM-Net w as deriv ed from the augmen ted La- grangian metho d (ALM), while VS-Net uses a penalty function method, whic h results in a simpler netw ork arc hitecture. ALM in tro duces Lagrange multipli- ers to w eaken the dep endence on penalty w eight selection. While these weigh ts can b e learned automatically in netw ork training, the need for a net work with a more complicated ALM is not clear. In ADMM-Net and VN, the regular- ization term w as defined via a set of explicit learnable linear filter kernels. In con trast, VS-Net treats regularization implicitly in a CNN-denoising pro cess. Consequen tly , VS-Net has the flexibility of using v arying adv anced denoising CNN architectures while av oiding exp ensiv e dense matrix inv ersion - a encoun- tered problem in ADMM-Net. A final distinction is that the effect of different w eight parameterizations is studied in VS-Net, while this w as not inv estigated in the aforemen tioned works. 2 VS-Net for accelerated p-MRI reconstruction General CS p-MRI mo del: Let m ∈ C N b e a complex-v alued MR image stac ked as a column v ector and y i ∈ C M ( M < N ) b e the under-sampled k - space data measured from the i th MR receiv er coil. Recov ering m from y i is an ill-p osed in v erse problem. According to compressed sensing (CS) theory , one can estimate the reconstructed image m by minimizing the following unconstrained VS-Net: V ariable splitting deep neural net work 3 optimisation problem: min m ( λ 2 n c X i =1 kD F S i m − y i k 2 2 + R ( m ) ) , (1) In the data fidelity term (first term), n c denotes the num b er of receiver coils, D ∈ R M × N is the sampling matrix that zeros out entries that hav e not b een acquired, F ∈ C N × N is the F ourier transform matrix, S i ∈ C N × N is the i th coil sensitivit y , and λ is a mo del weigh t that balances the t w o terms. Note that the coil sensitivit y S i is a diagonal matrix, which can be pre-computed from the fully sampled k -space center using the E-SPIRiT algorithm [ 11 ]. The second term is a general sparse regularization term, e.g. (nonlo cal) total v ariation [ 12 , 13 ], total generalized v ariation [ 12 , 14 ] or the ` 1 penalty on the discrete wa v elet transform of m [ 15 ]. V ariable splitting: In order to optimize ( 1 ) efficien tly , we dev elop a v ariable splitting metho d. Sp ecifically , we introduce the auxiliary splitting v ariables u ∈ C N and { x i ∈ C N } n c i =1 , con verting ( 1 ) into the following equiv alent form min m,u,x i λ 2 n c X i =1 kD F x i − y i k 2 2 + R ( u ) s.t. m = u, S i m = x i , ∀ i ∈ { 1 , 2 , ..., n c } . The introduction of the first constraint m = u decouples m in the regularization from that in the data fidelity term so that a denoising problem can b e explicitly form ulated (see Eq. ( 3 ) top). The introduction of the second constraint S i m = x i is also crucial as it allo ws decomp osition of S i m from DF S i m in the data fidelit y term suc h that no dense matrix inv ersion is inv olv ed in subsequent calculations (see Eq. ( 4 ) middle and bottom). Using the p enalt y function method, we then add these constrain ts back into the mo del and minimize the single problem min m,u,x i λ 2 n c X i =1 kD F x i − y i k 2 2 + R ( u ) + α 2 n c X i =1 k x i − S i m k 2 2 + β 2 k u − m k 2 2 , (2) where α and β are in tro duced p enalt y w eigh ts. T o minimize ( 2 ), which is a multi- v ariable optimization problem, one needs to alternativ ely optimize m , u and x i b y solving the following three subproblems: u k +1 = arg min u β 2 k u − m k k 2 2 + R ( u ) x k +1 i = arg min x i λ P n c i =1 kD F x i − y i k 2 2 + α 2 P n c i =1 k x i − S i m k k 2 2 m k +1 = arg min m α 2 P n c i =1 k x k +1 i − S i m k 2 2 + β 2 k u k +1 − m k 2 2 , (3) Here k ∈ { 1 , 2 , ..., n it } denotes the k th iteration. An optimal solution ( m ∗ ) ma y b e found b y iterating ov er u k +1 , x k +1 i and m k +1 un til conv ergence is ac hieved or the n umber of iterations reaches n it . An initial solution to these subproblems 4 J Duan, et al. can b e derived as follows u k +1 = denoiser ( m k ) x k +1 i = F − 1 (( λ D T D + αI ) − 1 ( α F S i m k + λ D T y i )) ∀ i ∈ { 1 , 2 , ..., n c } m k +1 = ( β I + α P n c i =1 S H i S i ) − 1 ( β u k +1 + α P n c i =1 S H i x k +1 i ) . (4) Here S H i is the conjugate transp ose of S i and I is the identit y matrix of size N b y N . D T D is a diagonal matrix of size N by N , whose diagonal entries are either zero or one corresp onding to a binary sampling mask. D T y i is an N -length v ector, representing the k -space measurements ( i th coil) with the unsampled p ositions filled with zeros. In this step we ha ve turned the original problem ( 1 ) in to a denoising problem (denoted b y denoiser ) and tw o other equations, b oth of which ha v e closed-form solutions that can be computed point-wise due to the nature of diagonal matrix inv ersion. W e also note that the middle equation efficien tly imp oses the consistency betw een k -space data and image space data coil-wisely , and the b ottom equation simply computes a weigh ted av erage of the results obtained from the first tw o equations. Next, we will show an appropriate net work architecture can b e deriv ed by unfolding the iterations of ( 4 ). DB WA B DCB DB WA B DCB Input … DB WA B DCB Stage 1 Stage n it Output Stage 2 Sensitivities Undersampled k ‐ space Mask Fig. 1: Ov erall architecture of the prop osed v ariable splitting netw ork (VS-Net). Net work architecture: W e propose a deep cascade netw ork that naturally in tegrates the iterativ e pro cedures in Eq. ( 4 ). Fig. 1 depicts the netw ork ar- c hitecture. Specifically , one iteration of an iterativ e reconstruction is related to one stage in the netw ork. In each stage, there are three blo c ks: denoiser block (DB), data consistency blo c k (DCB) and weigh ted av erage blo c k (W AB), which resp ectiv ely corresp ond to the three equations in ( 4 ). The netw ork takes four in- puts: 1) the single sensitivity-w eigh ted undersampled image which is computed using P n c i S H i F − 1 D T y i ; 2) the pre-computed coil sensitivity maps { S i } n c i =1 ; 3) the binary sampling mask D T D ; 4) the undersampled k -space data {D T y i } n c i =1 . Note that the sensitivity-w eigh ted undersampled image is only used once for DB and DCB in Stage 1. In contrast, {D T y i } n c i =1 , { S i } n c i =1 and the mask are required for W AB and DCB at each stage (see Fig. 1 and 2 ). As the netw ork is guided VS-Net: V ariable splitting deep neural net work 5 b y the iterative pro cess resulting from the v ariable splitting metho d, we refer to it as a V ariable Splitting Net work (VS-Net). DB ReLU 3x3 Conv ReLU 3x3 Conv ReLU 3x3 Conv ReLU 3x3 Conv 3x3 Conv n f =64 n f =64 n f =64 n f =64 n f =2 Denoiser Block (via CNN) DCB WA B m k , S i , k 0 , mask Eq. 4 middle … m k u k+1 Eq. 4 middle Eq. 4 middle Input i =1 i =2 i = n c … .. .. .. Output Input Output Data Consistency Block , , , … u k+1 Eq. 4 bottom m k+1 Input Output Wei gh t Avera ge Block Fig. 2: Detailed structure of each blo c k in VS-net. DB, DCB and W AB stand for Denoiser Blo c k, Data Consistency Block and W eighted Av erage Blo c k, resp ec- tiv ely . In Fig. 2 , w e illustrate the detailed structures of k ey building blo c ks of the net work (DB, DCB and W AB) at Stage k in VS-Net. In DB, we intend to denoise a complex-v alued image with a con volutional neural netw ork (CNN). T o handle complex v alues, we stack real and imaginary parts of the undersampled input in to a real-v alued t wo-c hannel image. ReLU’s are used to add nonlinearities to the denoiser to increase its denoising capability . Note that while we use a simple CNN here, our setup allows for incorp oration of more adv anced denoising CNN arc hitectures. In DCB, m k from the upstream blo c k, { S i } n c i =1 , { k i } n c i =1 (i.e. the undersampled k -space data of all coils) and the mask are tak en as inputs, passing through the middle equation of ( 4 ) and outputting { x k +1 i } n c i =1 . The outputs u k +1 and { x k +1 i } n c i =1 from DCB and W AB, concurren tly with the coil sensitivit y maps, are fed to W AB pro ducing m k +1 , whic h is then used as the input to DB and DCB in the next stage in VS-Net. Due to the existence of analytical solutions, no iteration is required in W AB and DCB. F urther, all the computational op er- ations in W AB and DCB are p oin t-wise. These features make the calculations in the tw o blo c ks simple and efficient. The pro cess pro ceeds in the same manner un til Stage n it is reac hed. Net work loss and parameterizations: T raining the prop osed VS-Net is an- other optimization process, for whic h a loss function m ust be explicitly formu- lated. In MR reconstruction, the loss function often defines the similarity betw een the reconstructed image and a clean, artifact-free reference image. F or example, a common choice for the loss function used in this w ork is the mean squared error (MSE), giv en by L ( Θ ) = min Θ 1 2 n i n i X i =1 k m n it i ( Θ ) − g i k 2 2 , (5) 6 J Duan, et al. where n i is the num b er of training images, and g is the reference image, which is a sensitivity-w eighted fully sampled image computed by P n c j S H j F − 1 f j . Here f j represen ts the fully sampled raw data of the j th coil. Θ abov e are the netw ork parameters Θ to b e learned. In this work we study tw o parameterizations for Θ , i.e., Θ 1 = { W l } n it l =1 , λ, α, β and Θ 2 = { W l , λ l , α l , β l } n it l =1 . Here { W l } n it l =1 are learnable parameters for all ( n it ) CNN denoising blocks in VS-Net. Moreo v er, in b oth cases we also mak e the data fidelity weigh t λ and the penalty weigh ts α and β learnable parameters. In contrast, for Θ 1 w e let the w eigh ts λ , α and β b e shared by the W ABs and DCBs across VS-Net, while for Θ 2 eac h W AB and DCB hav e their own learnable w eigh ts. Since all the blocks are differen tiable, bac kpropagation (BP) can b e effectiv ely employ ed to minimize the loss with resp ect to the netw ork parameters Θ in an end-to-end fashion. 3 Exp erimen ts results Datasets and training details: W e used a publicly av ailable clinical knee dataset 1 in [ 3 ], whic h has the following 5 image acquisition proto cols: coronal proton-densit y (PD), coronal fat-saturated PD, axial fat-saturated T 2 , sagittal fat-saturated T 2 and sagittal PD. F or eac h acquisition proto col, the same 20 sub jects w ere scanned using an off-the-shelf 15-element knee coil. The scan of eac h sub ject cov er appro ximately 40 slices and eac h slice has 15 c hannels ( n c = 15). Coil sensitivit y maps pro vided in the dataset w ere precomputed from a data blo c k of size 24 × 24 at the center of fully sampled k -space using BAR T [ 16 ]. F or training, we retrosp ectiv ely undersampled the original k -space data for 4-fold and 6-fold acceleration factors (AF) with Cartesian undersampling, sampling 24 lines at the cen tral region. F or each acquisition proto col, w e split the sub jects into training and testing subsets (eac h with sample size of 10), and trained VS-Net to reconstruct eac h slice in a 2D fashion. The net work parameters was optimized for 200 epo c hs, using Adam with learning rate 10 − 3 and batch size 1. W e used PSNR and SSIM as quan titative p erformance metrics. P arameter b eha viour: T o show the impact of the stage n umber n it (see Fig 1 ), we first exp erimen t on the sub jects under the coronal PD proto col with 4-fold AF. W e set n it = { 1 , 3 , 5 , 7 , 10 , 15 , 20 } and plotted the training and testing quan titative curves versus the n um b er of ep ochs in the upp er p ortion of Fig 3 . As the plots show, increasing the stage num b er improv es netw ork p erformance. This is ob vious for tw o reasons: 1) as num b er of parame ters increases, so do es the netw ork’s learning capability; 2) the embedded v ariable splitting minimiza- tion is an iterativ e pro cess, for which sufficien t iterations (stages) are required to con verge to an ideal solution. W e also found that: i) the p erformance difference b et w een n it = 15 and n it = 20 is negligible as the netw ork gradually conv erges after n it = 15; ii) there is no o verfitting during netw ork training despite the use of a relatively small training set. Second, we examine the netw ork perfor- mance when using tw o different parameterizations: Θ 1 = { W l } n it l =1 , λ, α, β and 1 h ttps://github.com/VLOGroup/mri-v ariationalnetw ork VS-Net: V ariable splitting deep neural net work 7 Θ 2 = { W l , λ l , α l , β l } n it l =1 . F or a fair comparison, w e used the same initializa- tion for b oth parameterizations and exp erimen ted with tw o cases n it = { 5 , 10 } . As shown in the b ottom p ortion of Fig 3 , in b oth cases the netw ork with Θ 1 p erforms slightly w orse than the one with Θ 2 . In p enalt y function metho ds, a p enalt y weigh t is usually shared (fixed) across iterations. Ho wev er, our exp er- imen ts indicated improv ed performance if the mo del weigh ts ( λ , α and β ) are non-shared or adaptiv e at each stage in the netw ork. Fig. 3: Quantitativ e measures versus num b er of epo c hs at training (first t wo columns) and testing (last t w o columns). 1st ro w shows the net w ork performance using differen t stage num bers. 2nd column shows the net w ork p erformance using differen t parameterizations of Θ in the loss ( 5 ). Fig. 4: Visual comparison of results obtained by different metho ds for Cartesian undersampling with AF 4 (top) and 6 (bottom). F rom left to right: zero-filling results, ` 1-SPIRiT results, VN results, VS-Net results, and ground truth. Click here for more visual comparison. Numerical comparison: W e compared our VS-Net with the iterative ` 1- SPIRiT [ 17 ] and the v ariational net work (VN) [ 3 ], with the zero-filling recon- struction as a reference. F or VS-Net, we used n it (10) and Θ 2 , although the 8 J Duan, et al. net work’s performance can be further b oosted with a larger n it . F or VN, we carried out training using mostly default hyper-parameters from [ 3 ], except for the batch size, which (using original image size) w as set to 1 to b etter fit GPU memory . F or b oth VS-Net and VN, we trained a separate mo del for eac h proto- col, resulting in a total of 20 mo dels for the 4-fold and 6-fold AFs. In T able 1 , w e summarize the quan titative results obtained b y these metho ds. As is evident, learning-based methods VS-Net and VN outp erformed the iterative ` 1-SPIRiT. VN pro duced comparable SSIMs to VS-Net in some scenarios. The resulting PNSRs were how ever lo wer than that of VS-Net for all acquisition proto cols and AFs, indicating the sup erior numerical p erformance of VS-Net. In Fig 4 , w e presen t a visual comparison on a coronal PD image reconstruction for both AFs. Apart from zero-filling, all metho ds remov ed aliasing artifacts successfully . Among ` 1-SPIRiT, VN and VS-Net, VS-Net recov ered more small, textural de- tails and thus achiev ed the b est visual results, relative to the ground truth. The quan titative metrics in Fig 4 further show that VS-Net is the b est. T able 1: Quantitativ e results obtained by differen t metho ds on the test set includ- ing ∼ 2000 image slices across 5 acquisition proto cols. Eac h metric was calculated on ∼ 400 image slices, and mean ± standard deviation are rep orted. 4-fold AF 6-fold AF Proto col Metho d PSNR SSIM PSNR SSIM Coronal fat-sat. PD Zero-filling 32.34 ± 2.83 0.80 ± 0.11 30.47 ± 2.71 0.74 ± 0.14 ` 1-SPIRiT 34.57 ± 3.32 0.81 ± 0.11 31.51 ± 2.21 0.78 ± 0.08 VN 35.83 ± 4.43 0.84 ± 0.13 32.90 ± 4.66 0.78 ± 0.15 VS-Net 36.00 ± 3.83 0.84 ± 0.13 33.24 ± 3.44 0.78 ± 0.15 Coronal PD Zero-filling 31.35 ± 3.84 0.87 ± 0.11 29.39 ± 3.81 0.84 ± 0.13 ` 1-SPIRiT 39.38 ± 2.16 0.93 ± 0.03 34.06 ± 2.41 0.88 ± 0.04 VN 40.14 ± 4.97 0.94 ± 0.12 36.01 ± 4.63 0.90 ± 0.13 VS-Net 41.27 ± 5.25 0.95 ± 0.12 36.77 ± 4.84 0.92 ± 0.14 Axial fat-sat. T 2 Zero-filling 36.47 ± 2.34 0.94 ± 0.02 34.90 ± 2.39 0.92 ± 0.02 ` 1-SPIRiT 39.38 ± 2.70 0.94 ± 0.03 35.44 ± 2.87 0.91 ± 0.03 VN 42.10 ± 1.97 0.97 ± 0.01 37.94 ± 2.29 0.94 ± 0.02 VS-Net 42.34 ± 2.06 0.96 ± 0.01 39.40 ± 2.10 0.94 ± 0.02 Sagittal fat-sat. T 2 Zero-filling 37.35 ± 2.69 0.93 ± 0.07 35.25 ± 2.68 0.90 ± 0.09 ` 1-SPIRiT 41.27 ± 2.95 0.94 ± 0.06 36.00 ± 2.67 0.92 ± 0.05 VN 42.84 ± 3.47 0.95 ± 0.07 38.92 ± 3.23 0.93 ± 0.09 VS-Net 43.10 ± 3.44 0.95 ± 0.07 39.07 ± 3.33 0.92 ± 0.09 Sagittal PD Zero-filling 37.12 ± 2.58 0.96 ± 0.04 35.96 ± 2.57 0.94 ± 0.05 ` 1-SPIRiT 44.52 ± 1.94 0.97 ± 0.02 39.14 ± 2.12 0.96 ± 0.02 VN 46.34 ± 2.75 0.98 ± 0.05 39.71 ± 2.58 0.96 ± 0.05 VS-Net 47.22 ± 2.89 0.98 ± 0.04 40.11 ± 2.46 0.96 ± 0.05 4 Conclusion In this pap er, we proposed the v ariable spitting netw ork (VS-Net) for acceler- ated reconstruction of parallel MR images. W e ha ve detailed how to formulate VS-Net: V ariable splitting deep neural net work 9 VS-Net as an iterativ e energy minimization pro cess embedded in a deep learn- ing framework, where eac h stage essentially corresp onds to one iteration of an iterativ e reconstruction. In exp eriments, we hav e shown that the p erformance of VS-Net gradually plateaued as the netw ork stage num b er increased, and that setting parameters in each stage as learnable improv ed the quan titative results. F urther, we hav e ev aluated VS-Net on a multi -coil knee image dataset for 4-fold and 6-fold acceleration factors under Cartesian undersampling and show ed its sup eriorit y ov er tw o state-of-the-art metho ds. 5 Ac kno wledgements This w ork was supp orted by the EPSR C Programme Grant (EP/P001009/1) and the British Heart F oundation (NH/17/1/32725). The TIT AN Xp GPU used for this researc h was kindly donated by the NVIDIA Corp oration. References 1. Sc hlemp er, J., et al.: A deep cascade of conv olutional neural netw orks for dynamic MR image reconstruction. IEEE T rans. Med. Imag. 37 (2) (2018) 491–503 2. Y an, Y., et al.: Deep ADMM-Net for compressive sensing MRI. In: NIPS. (2016) 10–18 3. Hammernik, K., et al.: Learning a v ariational netw ork for reconstruction of accel- erated MRI data. Magn. Reson. Med. 79 (6) (2018) 3055–3071 4. Aggarw al, H.K., et al.: MoDL: Mo del-based deep learning architecture for inv erse problems. IEEE T rans. Med. Imag. 38 (2) (2019) 394–405 5. Han, Y., et al.: k-space deep learning for accelerated MRI. arXiv:1805.03779 (2018) 6. Ak cak ay a, M., et al.: Scan-sp ecific robust artificial-neural-netw orks for k-space in terp olation (RAKI) reconstruction: Database-free deep learning for fast imaging. Magn. Reson. Med. 81 (1) (2018) 439–453 7. Jin, K., et al.: Self-sup ervised deep active accelerated MRI. (2019) 8. Mardani, M., et al.: Deep generativ e adversarial neural netw orks for compressive sensing MRI. IEEE T rans. Med. Imag. 38 (1) (2019) 167–179 9. T ezcan, K., et al.: MR image reconstruction using deep density priors. IEEE T rans. Med. Imag. (2018) 10. Zhang, P ., et al.: Multi-c hannel generative adversarial net work for parallel magnetic resonance image reconstruction in k-space. In: MICCAI. (2018) 180–188 11. Uec ker, M., et al.: Espirit an eigenv alue approach to auto calibrating parallel MRI: where sense meets grappa. Magn. Reson. Med. 71 (3) (2014) 990–1001 12. Lu, W., et al.: Implemen tation of high-order v ariational mo dels made easy for image pro cessing. Math. Metho d Appl. Sci. 39 (14) (2016) 4208–4233 13. Lu, W., et al.: Graph-and finite elemen t-based total v ariation mo dels for the in verse problem in diffuse optical tomography . Biomed. Opt. Express 10 (6) (2019) 2684–2707 14. Duan, J., et al.: Denoising optical coherence tomography using second order total generalized v ariation decomp osition. Biomed. Signal Pro cess. Con trol 24 (2016) 120–127 10 J Duan, et al. 15. Liu, R.W., et al.: Undersampled cs image reconstruction using nonconv ex nons- mo oth mixed constraints. Multimed. T ools Appl. 78 (10) (2019) 12749–12782 16. Uec ker, M., et al.: Soft ware to olbox and programming library for compressed sensing and parallel imaging, Citeseer 17. Murph y , M., et al.: F ast l1-spirit compressed sensing parallel imaging MRI: Scalable parallel implementation and clinically feasible runtime. IEEE T rans. Med. Imag. 31 (6) (2012) 1250–1262

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment