Image Super Resolution via Bilinear Pooling: Application to Confocal Endomicroscopy

Recent developments in image acquisition literature have miniaturized the confocal laser endomicroscopes to improve usability and flexibility of the apparatus in actual clinical settings. However, miniaturized devices collect less light and have fewe…

Authors: Saeed Izadi, Darren Sutton, Ghassan Hamarneh

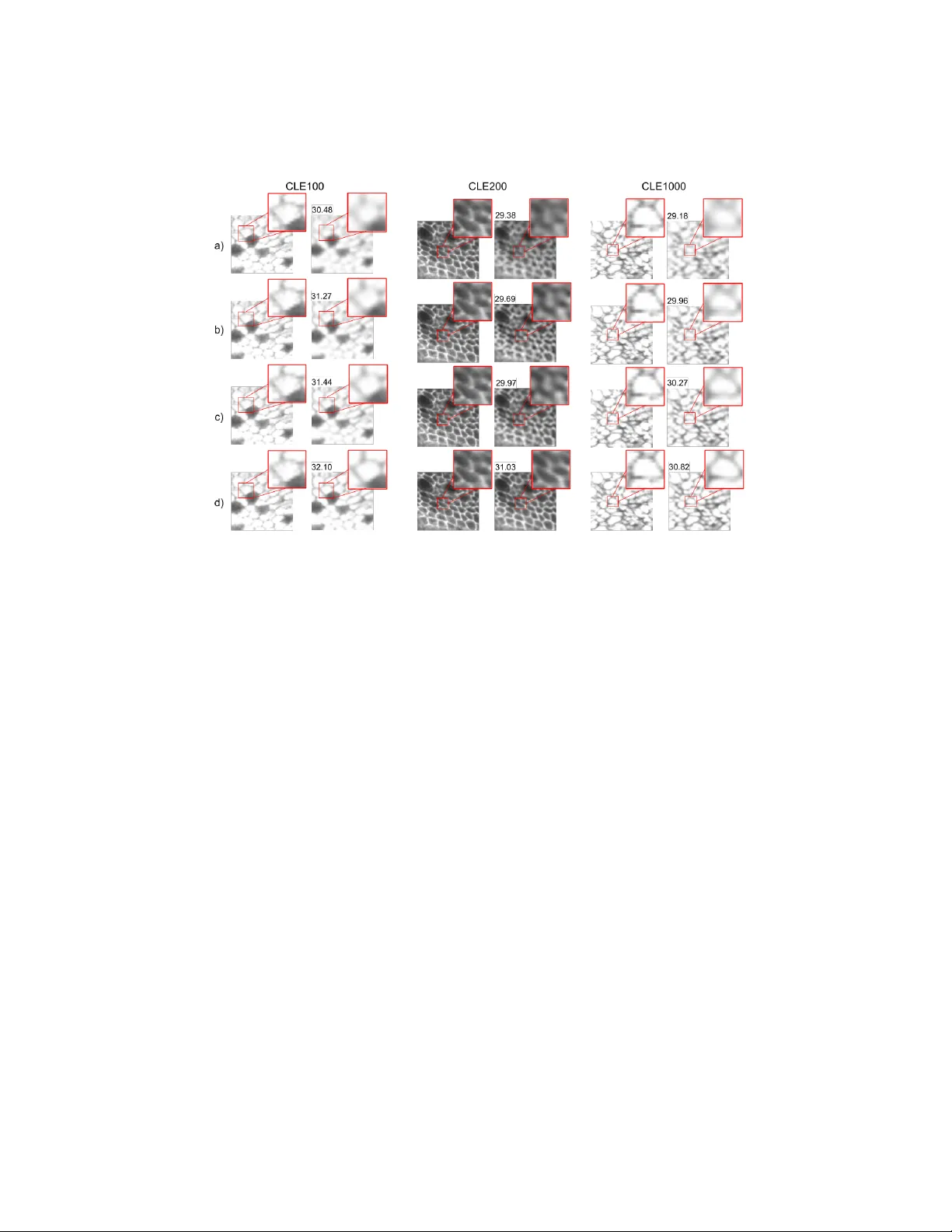

Image Sup er Resolution via Bilinear P o oling: Application to Confo cal Endomicroscop y Saeed Izadi, Darren Sutton, and Ghassan Hamarneh Sc ho ol of Computing Scienc e, Simon F raser Universit y , Canada { saeedi, darrens, hamarneh } @sfu.ca Abstract. Recen t developmen ts in image acquisition literature hav e miniaturized the confo cal laser endomicroscopes to improv e usabilit y and flexibilit y of the apparatus in actual clinical settings. How ever, miniatur- ized devices collect less ligh t and hav e few er optical comp onen ts, resulting in pixelation artifacts and lo w resolution images. Owing to the strength of deep net works, many supervised metho ds known as sup er resolution ha ve achiev ed considerable success in restoring low resolution images b y generating the missing high frequency details. In this work, w e prop ose a nov el attention mechanism that, for the first time, com bines 1st- and 2nd-order statistics for p o oling op eration, in the spatial and channel-wise dimensions. W e compare the efficacy of our method to 10 other exist- ing single image sup er resolution techniques that compensate for the reduction in image qualit y caused by the necessity of endomicroscop e miniaturization. All ev aluations are carried out on three publicly av ail- able datasets. Exp erimen tal results show that our metho d can pro duce sup erior results against state-of-the-art in terms of PSNR, and SSIM metrics. Additionally , our prop osed metho d is ligh tw eight and suitable for real-time inference. 1 In tro duction Colorectal cancer is known as the fourth most-common cancer and remains one of the leading causes of cancer related mortality in the world. In 2018, more than 1 million p eople were affected b y colorectal cancer worldwide, resulting in an estimated 550,000 deaths [2]. Rapid histopathologic assessment is an important to ol that may improv e disease prognosis b y detecting early-stage cancer and pre- cancerous conditions. Although biopsy and ex-vivo tissue examination are widely accepted as the diagnostic gold standard, such pro cedures tak e time and may limit the ability of the endoscopist to rapidly gauge disease sev erity . Confo cal laser endomicroscop y (CLE), on the other hand, has substantially impro ved real- time in-vivo visualization of the subsurface of living cells, v ascular structures, and tissue patterns during endoscopic examination [10]. 2 F or in-vivo histological examination, the large size of the microscop e com- plicates navigation of the in terior of the b ody in a clinical setting. Therefore, it is necessary to reduce the size of the microscop e to completely and safely access the organ(s) of in terest. Ho wev er, miniaturization reduces the n umber of optical elements in the microscop e prob e, introducing pixelation artifacts in the acquired images. One strategy to remov e image artifacts and enhance image qualit y is to directly p ost-pro cess degraded images. An emerging process in the field of image pro cessing, referred to as single image super-resolution (SR), aims to reconstruct an accurate high-resolution (HR) image giv en its low-resolution (LR) counterpart. Thus, SR is a promising softw are metho d to mitigate image degradation due to hardw are miniaturization. Among traditional SR algorithms, Huang et al. [8] prop osed leveraging self- similarit y mo dulo affine transformations to accommodate natural deformation of recurring statistical priors within and across scales of an image. Timofte et al. [18,19] used a combination of neighbour embedding and sparse dictionary learning ov er an external database and proposed anc hored neigh b orhoo d regres- sion in the dictionary atom space. Recently , CNNs hav e adv anced the SR research field b y directly learning the mapping b et ween LR and HR images [4,11,12,13,1]. Dong et al. [4] demonstrated that a fully conv olutional netw ork trained end- to-end can p erform LR-to-HR nonlinear mapping. Kim et al. [11] suggested a trained net work to predict additive details in the form of a residual image, whic h is summed with the interpolated image. Kim et al. [12] addressed model o ver- fitting b y reducing the num b er of parameters via recursiv e con volutional la yers. Lai et al. [13] designed a net work whic h progressiv ely reconstructs the s ub-band residuals of high-resolution images at multiple pyramid levels. Ahn et al. [1] impro ved sp eed and efficiency of SR mo dels by designing a cascade mechanism o ver residual net works. Lastly , Cheng et al. [3] exploited recursiv e squeeze and excitation mo dules in a netw ork to exploit relationships betw een c hannels. Izadi et al. [9] reported the first attempt to deplo y CNNs on CLE images. They used a densely connected CNN to transform syn thetic LR images in to HR ones. Ra vi et al. [15] emplo yed a CNN to restore missing details into LR images. They collected a set of consecutive LR frames and generated synthetic HR images using a video registration technique. In a more recen t study [16], Ravi et al. trained a CNN for unsup ervised SR on CLE images using a cycle consistency regularization, designed to imp ose acquisition prop erties on the sup er-resolv ed images. In this pap er, w e present a ligh tw eight con volutional neural net work (CNN) that is appropriate for frame-wise SR by incorporating a nov el attention mec h- anism. In contrast to SESR [3], which leverages atten tion mo dules from the Squeeze-and-Excitation netw ork (SENet) [7] to re-weigh t c hannels, we in tro- duce a no vel w eighting sc heme to recalibrate learned features based on pairwise relationships. Our attention mo dules compromise b oth 1 st -order p ooling and 2 nd -order po oling (a.k.a. bilinear po oling), impro ving the quality of learned fea- tures in the netw ork by considering pairwise correlations along feature channels 3 Fig. 1: (a) The o verall arc hitecture of our prop osed net work. (b) RBAM arc hitecture. (c) c hannel-wise and (d) spatial attention architectures. and spatial regions [5]. The compactness and computational speed of our net- w ork lends well to real-tim e implementation during in-vivo examination. W e demonstrate that stacking atten tion mo dules in the middle of a low-lev el feature extraction head and a feature in tegration tail quantitativ ely and qualitatively pro duces sup erior results against existing SR methods and generalizes w ell ov er unseen microscopic datasets. 2 Metho d Net work Ov erview. Fig. 1-a depicts the ov erall architecture of our prop osed LR-to-SR netw ork. Let L LR ∈ R 1 × W × H , I SR ∈ R 1 × rH × rW , and r denote the lo w resolution input and super-res olv ed output, and the downsample factor, resp ectiv ely . W e use a conv olution la yer, denoted by F ( · ), with a 3 × 3 kernel and C output channels to extract initial features H 0 ∈ R C × H × W , i.e. H 0 = F c (I LR ; θ 0 ) , (1) where θ refers to the learnable parameters. In our proposed netw ork, the initial features H 0 are up dated by sequential residual atten tion mo dules, denoted as G ( · ) and a skip connection. The entire high-lev el feature extraction stage is denoted as B ( · ): H B = B (H 0 ) = G b ( G b − 1 ( ... ( G 1 (H 0 )) ... )) + H 0 . (2) T o upsample the feature maps, w e use sub-pixel con volutions, denoted as U ( · ), follo wed b y a single channel 1 × 1 conv olution for SR reconstruction: I SR = F 1 ( U (H B ; θ up ); θ rec ) . (3) Residual Bilinear Atten tion Module. In our proposed RBAM, w e com bine 1 st - and 2 nd -order po oling op erations spatially and channel-wise to recalibrate learned features for efficien t netw ork training. Fig. 1-b illustrates the structure 4 of our prop osed RBAM. Mathematically , we form ulate RBAM as: H b = G b (H b − 1 ) = Q b (H b − 1 ) + H b − 1 , (4) where Q ( · ) denotes the atten tion mo dules before the skip connection. Giv en the input feature maps H b ∈ R C × H × W , tw o con volutions with 3 × 3 k ernel size in terleav ed with a ReLU activ ation function are p erformed to pro duce high-lev el feature maps H b conv ∈ R C × H × W as input to the atten tion branches: H b conv = F c ( F c (H b − 1 ; θ b 1 ); θ b 2 ) . (5) Channel-wise Atten tion (CA) Branc h. CA leverages the in ter-channel cor- resp ondence b et ween feature resp onses (Fig. 1-c). 1 st - and 2 nd -order p ooling mec hanisms op erate on H b conv , producing t wo vectors F 1st ca , F 2nd ca ∈ R C × 1 × 1 . F 1st ca is the 1 st -order CA obtained b y spatial av erage po oling to squeeze the feature map of eac h c hannel [7]. T o obtain 2 nd -order CA, pairwise channel correlations are computed in the form of a cov ariance matrix Σ ∈ R C × C b y spatial flatten- ing, dimension p erm utation, and matrix multiplication. Each ro w in Σ enco des the statistical dep endency of a channel with respect to every other channel [5]. Giv en the cov ariance matrix Σ, we adopt a row-wise con volution with 1 × C k ernel size to produce the 2 nd -order CA v ector F 2nd ca . Finally , t wo successive 1-D con volutions interlea ved with a ReLU activ ation function op erate on a v ector formed b y the sum of F 1st ca + F 2nd ca . The output of the conv olution op eration is fed in to a sigmoid function σ , follo w ed b y elemen t-wise multiplication ⊗ to pro duce the b th up dated features maps H b ca : H b ca = H b conv ⊗ σ ( F c ( F c 4 (F 1st ca + F 2nd ca ; θ b 3 ); θ b 4 )) . (6) Spatial A ttention (SA) Branch. SA indicates shared corresp ondence b et ween spatial regions across all feature maps (Fig. 1-d). Given H b conv as the input, the 1 st -order spatial attention matrix, F 1st sa ∈ R 1 × H × W , is computed by the a verage p ooling operation along channel dimension to aggregate information for eac h spatial lo cation across all features. T o compute 2 nd -order spatial atten tion matrix, F 2nd sa ∈ R 1 × H × W , we first reduce the spatial size of feature maps to H 0 × W 0 (8 × 8 in our implemen tation) b y applying a verage po oling. Then, appropriate reshaping, dimension p erm utation and matrix multiplication is adopted to obtain the co v ariance matrix Σ ∈ R H 0 W 0 × H 0 W 0 . Similar to channel-wise atten tion, a ro w-wise conv olution with 1 × H 0 W 0 k ernel size is applied on Σ. Even tually , dimension p erm utation and nearest neighbor interpolation pro duce F 2nd sa . W e add these t wo matrices together element-wise and apply a conv olution with 1 × 1 kernel size that feeds a sigmoid function. Spatial atten tion is realised b y elemen t-wise multiplication o ver all feature maps, form ulated as: H b sa = H b conv ⊗ σ ( F c (F 1st sa + F 2nd sa ; θ b 5 )) (7) 5 Fig. 2: Qualitativ e results and their PSNR scores at × 4 SR. Eac h ro w sho ws the side- b y-side comparison of HR with a) bicubic, b) GR, c) SESR, and d) RBAM across three datasets. HR images are sho wn for each pair for ease of visual comparison. A ttention F usion. The up dated features are concatenated (+ +) and aggregated via a con volution with kernel 1 × 1 kernel. Lastly , H b is added via skip connection: H b = F c (H b ca + + H b sa ; θ b 6 ) + H b − 1 . (8) 3 Results and Discussion Data . W e ev aluate existing state-of-the-art SR metho ds, as w ell as our prop osed RBAM, on three publicly a v ailable CLE datasets (T able 1). W e select images rich in texture by assessing the SR performance of bicubic in terp olation on the unseen test set. As depicted in Fig. 3, images with PSNR scores b elo w the mean PSNR score of the bicubic method ev alulated on the test set are deemed ’texture rich’, and are used for ev aluation, whereas images associated with scores abov e the mean are deemed ’texture p o or’. In other words, images which can be effectively restored using bicubic interpolation are rejected for ev aluation, as they con tain little information on whic h to assess the p erformance of state-of-the-art metho ds. Ev aluation assesses the metho ds’ ability to reconstruct 1024 × 1024 HR image from a syn thesized LR counterpart obtained via bicubic do wnsampling with the appropriate factor ( × 2 or × 4). T raining Settings . W e train all metho ds on a random partition (80%) of CLE100, and ev aluate them on the remaining 20% as w ell as CLE200, and 6 T able 1: Details of the datasets used in our ev aluation. dataset pro vided b y #patien ts #images anatomical site image size CLE100 Leong e t al. [14] 30 181 small in testine 1024 × 1024 CLE200 Grisan et al. [6] 32 262 esophagus 1024 × 1024 CLE1000 S ¸ tef˘ anescu et al. [17] 11 1025 colorectal mucosa 1024 × 1024 Fig. 3: (a) Examples of images from the partitioned test set. Images b elonging to the ’texture rich’ partition are used for ev aluation. CLE1000. F or DL-based metho ds, we replicated the rep orted training settings, and used public code for traditional algorithms. F or our model, we use B = 5 RBAMs and set the n umber of features to C = 64 to create a ligh tw eight net- w ork. In each training batch, 16 LR patches of size 48 × 48 are randomly extracted as inputs, and augmen ted by random 90 ◦ rotations and horizon tal/vertical flip. W e use Adam optimizer and L1 loss to train our netw ork for 300 ep o c hs. Initial learning rate is set to 10 − 4 and is halv ed every 50 epo c hs. Ablation In vestigation . W e discern the effectiveness of the individual com- p onen ts in our netw ork mo dules b y ablating atten tion blo c ks and ev aluating p erformance after 50 epo c hs. Our in vestigation shows that, for CLE100 at × 2 SR, attention-based v ariants outperform the baseline, demonstrating the mer- its of incorp orating spatial and c hannel-wise contextual information. W e also observ ed that using b oth 1 st and 2 nd -order p ooling operations sim ultaneously outp erform using either 1 st or 2 nd -order channel-wise p o oling individually . W e similarly note that using both spatial and c hannel-wise attention outp erforms either one alone. Comparison to State-of-the-art. W e compare the p erformance of traditional algorithms including ANR [18], GR [18] and A+ [19], as w ell as DL-based tech- niques including SR CNN [4], VDSR [11], DR CN [12], LapSRN [13], SESR [3] and our proposed RBAM. T able 2 summarizes the quantitativ e comparisons in terms of p eak signal-to-noise-ratio (PSNR-SEM), structural similarit y (SSIM), and in- ference time at × 2, and × 4 SR. F rom the table, one can see that most DL-based metho ds consistently outperform traditional SR algorithms in PSNR and SSIM metrics. P articularly , RBAM significan tly outp erforms the mean PSNR ov er all datasets b y 0.18dB and 0.13dB for × 2 and × 4 SR, resp ectiv ely . F urthermore, RBAM is a practical compromise b et w een inference time, and generalization. Our results show a mo derate quantitativ e increase in PSNR score and a consid- 7 T able 2: Quan titative results of SR mo dels at × 2 and × 4 factors. Bold indicates the b est result. D and = denote traditional and DL-based metho ds, resp ectiv ely . PSNR scores are rep orted with the standard error of the mean (SEM) for eac h method. Methods CLE100 CLE200 CLE1000 time Scale × 2 PSNR SSIM PSNR SSIM PSNR SSIM Bicubic 33.69 ± 0.06 0.8693 35.53 ± 0.01 0.9029 34.45 ± 0.01 0.8920 0.02 A+ D [19] 34.22 ± 0.07 0.8928 36.14 ± 0.01 0.9218 35.04 ± 0.01 0.9114 6.72 ANR D [18] 36.44 ± 0.13 0.9226 39.10 ± 0.01 0.9559 37.64 ± 0.01 0.9559 6.07 GR D [18] 36.56 ± 0.13 0.9243 39.26 ± 0.01 0.9579 37.79 ± 0.01 0.9448 4.47 SRCNN = [4] 35.75 ± 0.11 0.9181 38.25 ± 0.01 0.9494 36.87 ± 0.01 0.9380 0.06 VDSR = [11] 36.72 ± 0.13 0.9276 39.31 ± 0.01 0.9578 37.89 ± 0.01 0.9462 0.25 DRCN = [12] 36.65 ± 0.13 0.9257 39.29 ± 0.01 0.9575 37.83 ± 0.01 0.9452 0.48 LapSRN = [13] 36.71 ± 0.13 0.9264 39.25 ± 0.01 0.9583 37.91 ± 0.01 0.9462 0.07 SESR = [3] 36.76 ± 0.13 0.9282 39.36 ± 0.01 0.9583 37.91 ± 0.01 0.9462 0.27 RBAM (Ours) = 36.91 ± 0.12 0.9321 39.45 ± 0.01 0.9590 38.22 ± 0.01 0.9501 0.18 Scale × 4 Bicubic 31.29 ± 0.04 0.6673 32.45 ± 0.01 0.7318 31.78 ± 0.01 0.7278 0.02 A+ [19] 31.57 ± 0.04 0.7042 32.76 ± 0.01 0.7607 32.06 ± 0.01 0.7517 3.03 ANR [18] 31.68 ± 0.04 0.7160 32.93 ± 0.01 0.7736 32.23 ± 0.01 0.7671 2.88 GR [18] 31.70 ± 0.04 0.7201 32.95 ± 0.01 0.7736 32.25 ± 0.01 0.7703 2.31 SRCNN [4] 31.59 ± 0.04 0.7073 32.76 ± 0.01 0.7617 32.07 ± 0.01 0.7566 0.06 VDSR [11] 31.66 ± 0.04 0.7144 32.86 ± 0.01 0.7804 32.16 ± 0.01 0.7635 0.25 DRCN [12] 31.70 ± 0.04 0.7214 32.92 ± 0.01 0.7750 32.21 ± 0.01 0.7635 0.48 LapSRN [13] 31.68 ± 0.04 0.7190 32.76 ± 0.01 0.7617 32.29 ± 0.01 0.7737 0.08 SESR [3] 31.76 ± 0.04 0.7249 32.99 ± 0.01 0.7804 32.29 ± 0.01 0.7737 0.33 RBAM (Ours) 31.84 ± 0.04 0.7315 33.11 ± 0.01 0.7852 32.47 ± 0.01 0.7874 0.07 erable increase in qualitativ e p erformance - this is similar to previous works in single image sup er resolution [20]. Fig. 2 sho ws selected image patches from eac h dataset for qualitativ e assessment. RBAM can delicately restore high-frequency cues, such as gran ular textures and sudden changes in gra yscale pixel in tensity . This manifests qualitatively in the form of improv ed restoration of high frequency details suc h as cell membranes (CLE200, CLE1000 examples) and intracellular spaces (CLE100 example). Motiv ation for Bilinear P o oling. W e combine 1 st -order and 2 nd -order po ol- ing to recalibrate learned features based on channels that activ ate often or corre- sp ond to feature rich inputs, resp ectiv ely . Channels that activ ate often are likely resp onding to common, low frequency image features. Conv ersely , channels that are highly correlated may b e resp onding to feature rich instances in the image space that activ ate m ultiple filters simultaneously . High frequency features tend to b e complex, and not as common semantically compared to low frequency im- age details. Therefore, c hannels that learn complex image features ma y not b e emphasized b y first order p o oling operations alone. Combining first and second order po oling in an attention mo dule assures that hard w orking channels are rew arded without diminishing the optimization of c hannels that learn complex features in the lo w to high resolution image mapping space. 8 4 Conclusion W e prop osed the first net work that sim ultaneously leverages both first and sec- ond order statistics for p ooling in spatial and channel-wise atten tion mechanisms, resulting in a light weigh t and fast mo del that restores high frequency image de- tails. W e compared our prop osed mo del with v arious traditional and DL-based SR techniques on three CLE datasets in terms of image quality assessment met- rics and inference time. Our RBAM netw ork outperforms existing light weigh t metho ds across differen t datasets, downsampling factors, and SR performance ev aluation criteria. Exp erimen tal results also highlight the p oten tial applica- bilit y of inexp ensiv e soft ware-based p ost-processing SR mo dules that impro ve degraded images in miniaturized CLE devices in real-time. Ac knowledgmen ts . Thanks to the NVIDIA Corp oration for the donation of Titan X GPUs used in this researc h and to the Collaborative Health Research Pro jects (CHRP) for funding. References 1. N. Ahn et al. F ast, accurate, and light weigh t super-resolution with cascading residual n etw ork. In ECCV , 2018. 2. F. Bray et al. Global cancer statistics 2018: GLOBOCAN estimates of incidence and mortality worldwide for 36 cancers in 185 coun tries. CA: a c anc er journal for clinicians , 68(6 ):394–424, 2018. 3. X. Cheng et al. Sesr: Single image super resolution with recursive squeeze and excitation n etw orks. In IEEE ICPR , pages 147–152, 2018. 4. C. Dong et al. Image sup er-resolution using deep conv olutional netw orks. IEEE TP AMI , 38(2):295–307, 2016. 5. Gao et al. Global second-order p ooling neural netw orks. , 2018. 6. E. Grisan et al. 239 computer aided diagnosis of barrett’s esophagus using confocal laser end omicroscopy: Preliminary data. Gast. Endosc. , 75(4,):AB126, 2012. 7. J. Hu et al. Squeeze-and-excitation netw orks. In IEEE CVPR , 2018. 8. J. Huang et al. Single image sup er-resolution from transformed self-exemplars. In IEEE CVPR , pages 5197–5206, 2015. 9. S. Izadi et al. Can deep learning relax endomicroscopy hardw are miniaturization requiremen ts? In MICCAI 2018 , pages 57–64, 2018. 10. R. Kiesslic h et al. Confocal laser endoscopy for diagnosing in traepithelial neoplasias and colo rectal cancer in viv o. Gastro enter ology , 127(3):706–713, 2004. 11. J. Kim et al. Accurate image super-resolution using v ery deep con volutional net- w orks. In IEEE CVPR , pages 1646–1654, 2016. 12. J. Kim et al. Deeply-recursive conv olutional netw ork for image sup er-resolution. In IEEE CVPR , pages 1637–1645, 2016. 13. W. Lai et al. F ast and accurate image sup er-resolution with deep laplacian pyramid net works. IEEE TP AMI , pages 1–1, 2018. 14. R. W. Leong et al. In viv o confocal endomicroscopy in the diagnosis and ev aluation of celiac disease. Gastr o enter olo gy , 135(6):1870 – 1876, 2008. 15. D. Rav ` ı et al. Effective deep learning training for single-image sup er-resolution in endomicroscop y exploiting video-registration-based reconstruction. International Journal of Computer Assiste d R adiolo gy and Sur gery , 13:917–924, 2018. 9 16. D. Ra v et al. Adversarial training with cycle consistency for unsupervised sup er- resolution in endomicroscop y . Medic al Image A nalysis , 53:123 – 131, 2019. 17. D. S ¸ tef˘ anescu et al. Computer aided diagnosis for confo cal laser endomicroscopy in adv anced colorectal adenocarcinoma. PloS ONE , 11(5):e0154863, 2016. 18. R. Timofte et al. Anc hored neighborho od regression for fast example-based sup er- resolution. In IEEE ICCV , pages 1920–1927, 2013. 19. R. Timofte et al. A+: Adjusted anc hored neighborho od regression for fast sup er- resolution. In A CCV , pages 111–126, 2015. 20. W. Y ang, X. Zhang, Y. Tian, W. W ang, J.-H. Xue, and Q. Liao. Deep learning for single im age super-resolution: A brief review. IEEE T r ans. on Multime dia , 2019.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment