Using Automatic Differentiation as a General Framework for Ptychographic Reconstruction

Coherent diffraction imaging methods enable imaging beyond lens-imposed resolution limits. In these methods, the object can be recovered by minimizing an error metric that quantifies the difference between diffraction patterns as observed, and those …

Authors: Saugat K, el, S. Maddali

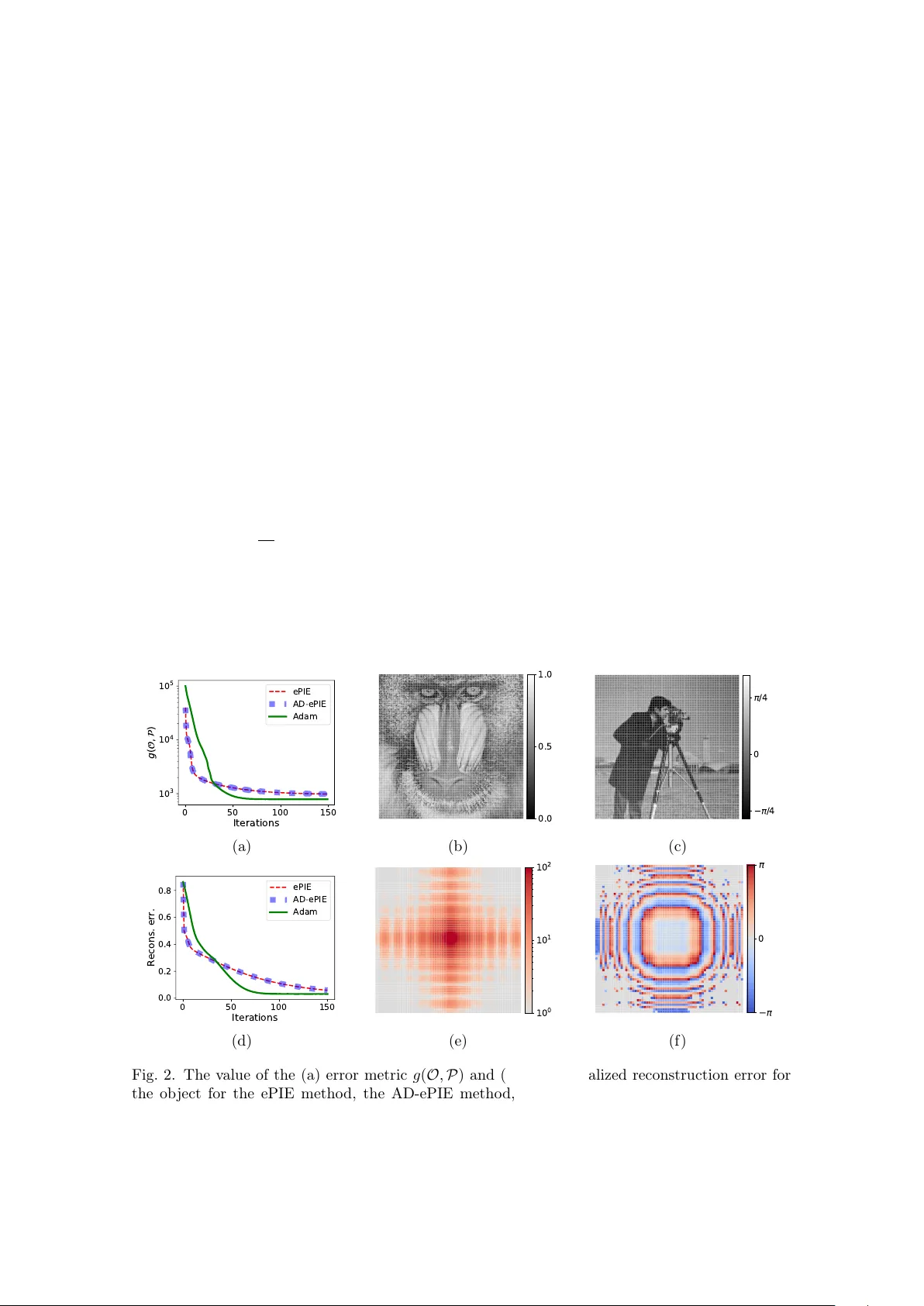

Using automatic differen tiation as a general framew ork for pt yc hographic reconstruction Saugat Kandel 1 , S. Maddali 2 , Marc Allain 3 , Stephan O Hruszk ewycz 2 , Chris Jacobsen 4,5,6 , and Y oussef S G Nashed 7,* 1 Applied Ph ysics, Northw estern Univ ersit y , Ev anston, Illinois 60208, USA 2 Materials Science Division, Argonne National Lab oratory , Lemont, IL 60439, USA 3 Aix Marseille Univ, CNRS, Cen trale Marseille, Institut F resnel, Marseille, F rance 4 A dv anced Photon Source, Argonne National Lab oratory , Lemont, Illinois 60439, USA 5 Departmen t of Physics & Astronomy , Northw estern Univ ersity , Ev anston, Illinois 60208, USA 6 Chemistry of Life Pro cesses Institute, Northw estern Univ ersity , Ev anston, Illinois 60208, USA 7 Mathematics and Computer Science Division, Argonne National Lab oratory , Lemon t, Illinois 60439, USA * Corresp onding author: ynashed@anl.go v Abstract Coheren t diffraction imaging metho ds enable imaging b eyond lens-imp osed resolution lim- its. In these methods, the ob ject can be recov ered by minimizing an error metric that quan- tifies the difference betw een diffraction patterns as observ ed, and those calculated from a presen t guess of the ob ject. Efficien t minimization metho ds require analytical calculation of the deriv ativ es of the error metric, which is not alwa ys straightforw ard. This limits our abilit y to explore v ariations of basic imaging approaches. In this paper, we propose to substi- tute analytical deriv ative expressions with the automatic differentiation metho d, whereb y we can achiev e ob ject reconstruction by sp ecifying only the physics-based exp erimental forw ard mo del. W e demonstrate the generalit y of the proposed metho d through straigh tforward ob ject reconstruction for a v ariety of complex ptyc hographic exp erimental mo dels. 1 In tro duction Pt ychograph y is a coherent diffraction imaging (CDI) technique that acquires a series of intensit y diffraction patterns through spatial shifts of the illumination (prob e) across the sample (ob ject) in a set of o verlapping b eam p ositions. Given a large num b er of o verlapping b eam p ositions, the pt ychograph y exp eriment yields sufficient redundant information with which we can reconstruct the ob ject structure to sub-b eam-size spatial resolution, and ev en determine additional exp erimental parameters such as the structure of the prob e itself. First prop osed by Hopp e in 1969 [1], the pt ychographic technique was realized exp erimen tally and rapidly developed algorithmically in the 2000s [2, 3, 4, 5, 6]. By removing the typical CDI limitation that the prob e size has to b e larger than the sample, ptyc hograph y has enabled high resolution imaging of extended ob jects, making it a p ow erful imaging tec hnique. As suc h, the ptyc hographic technique has found application not only as a 2D far-field diffraction imaging metho d but also as 2D near-field diffraction imaging metho d [7], a 3D Bragg imaging metho d [8], a 3D m ultislice imaging metho d [9], and a part of the 3D tomographic imaging metho d [10] including for ob jects b eyond the depth of fo cus limit [11]. Imaging with pt yc hography inv olv es solving the c hallenging phase retriev al problem, where one attempts to reconstruct an ob ject from only the magnitude of its F ourier transform. In general, the phase retriev al problem is ill-p osed [12]; solving it requires the use of ov ersampling and supp ort constraints. These are t ypically used in an iterative pro jection framew ork that up dates 1 the ob ject guess b y applying a F ourier magnitude pro jection and a real-space constraint pro jection [13, 14, 15, 16]. Alternativ ely , w e can also frame phase retriev al as a nonlinear minimization problem, where we minimize an error metric using a gradient-based approach. The gradient-based approac h is flexible and can include in the forw ard model a large v ariety of the physical phenomena related to the probing light (suc h as partial coherence [17], source fluctuations [18], and errors in p ositions [19, 20]), or the detection pro cess (such as the measuremen t noise [21, 22] and the finite size of the pixel [23]). As such, this metho d has b een the fo cus of m uch recen t literature, leading to the dev elopment of steep est descen t methods [16, 24, 25], conjugate gradient metho ds [4, 16, 26], Gauss-Newton metho ds [27], and quasi-Newton metho ds [28]. These algorithms hav e found application in the far-field ptyc hographic problem not only to solve for the ob ject alone [24, 25, 29, 30, 31, 32] but also to additionally solve for the prob e [32, 33] as well. Gradien t-based phase retriev al metho ds in the literature tend to rely on the av ailabilit y of a closed-form expression for the gradient calculation. This closed-form expression is t ypically obtained by writing down an explicit expression for the error metric to minimize, then sym b olically differen tiating the error metric with resp ect to the individual input parameters [23]. Calculating the gradient in this fashion is lab orious; a sligh t mo dification of the forw ard mo del usually requires a complete rederiv ation and algorithmic reimplementation of the gradien t expressions. This b ecomes esp ecially limiting if we desire to explore v ariations of, or introduce new capabilities to, our basic exp erimen tal metho dology . As suc h, it is more than desirable to ha ve an approach b eyond symbolic differen tiation in order to easily explore a v ariety of algorithms and approaches. A utomatic differ entiation [34], or algorithmic differ entiation , provides such an alternative to sym b olic differentiation. This approach is based up on the observ ation that v ector-v alued functions can, in general, b e interpreted as comp osites of basic arithmetic op erations and a limited num b er of elementary functions (including exponentials and trigonometric operations). Differentiation of functions can then b e understo o d as a recursive application of the chain rule of differentiation, wherein we repeatedly differentiate the same elementary functions (with kno wn deriv ativ es), only with different input parameters. This is a mechanistic pro cess and can hence b e p erformed en- tirely in softw are. Given a set of input numeric parameters, the automatic differen tiation metho d computes the exact deriv ativ e b y accum ulating the numerical v alues of the elemen tary functions and their deriv atives, without ever calculating the closed form deriv ativ e expression (Section 2). While the field of automatic differen tiation has a long and storied history [34], it is only recently that the emergence of deep neural netw ork metho ds has driv en its widespread adoption in the optimization and machine learning communities. Sp ecifically , there has no w arisen a need to p erform gradient-based minimization to optimize state-of-the-art neural netw orks, which can b e comp ositions of thousands or ev en millions of individual elementary functions. Since calculating closed-form deriv ativ es for these is not feasible, automatic differentiation has become the to ol of c hoice, th us leading to the recent rapid adoption and adv ancemen t of such soft ware. In 2014, when Jurling and Fien up first prop osed an automatic differentiation framew ork for phase retriev al [23], they commented on the lack of suitable existing softw are pack ages. Since then, w e hav e seen the rise of multiple p ow erful, easy-to-use, and computationally efficien t automatic differentiation framew orks such as T ensorFlow [35], PyT orc h [36], and Autograd [37]. More recently , we hav e ev en seen pro of-of-concept demonstrations [38, 39] that successfully adapt these softw are pack ages to solve the phase retriev al problem. In this pap er, we first pro vide an ov erview of the r everse-mo de automatic differentiation algo- rithm (also referred to as the b ackpr op agation algorithm) for gradient calculations (Section 2), and then mathematically justify the application of this algorithm as a general framework for ptyc ho- graphic phase retriev al. W e demonstrate the n umerical correctness of the reverse-mode automatic differen tiation framework through a comparison with the p opular symbolic-gradient-based ePIE metho d (Section 3). Finally , w e demonstrate the generalizability of this framework through success- ful phase retriev al for increasingly complex ptyc hographic forward mo dels–near-field ptyc hography and 3D Bragg pro jection ptyc hography–emphasizing the flexibility and p otential capacity of the approac h for non-standard ptyc hography exp erimen ts (Section 4). This shows that the reverse- mo de automatic differen tiation framew ork allo ws the practitioner to up date and change the forward mo del as necessary to b etter reflect the physics of the problem, without prior consideration tow ards ho w to symbolically differentiate the error metric. 2 2 Ov erview of automatic differen tiation In this section, we pro vide a limited ov erview of the automatic differen tiation pro cedure, fo cusing singularly on the r everse-mo de automatic differ entiation (or r everse-mo de AD ) framew ork, finally motiv ating the application of this framew ork to the phase retriev al problem. Detailed and rigorous examinations of the automatic differentiation pro cedure–the v arious mo des and the algorithmic framew orks–are av ailable [34], as is a detailed exp osition of the reverse-mode AD pro cedure in application to the phase retriev al problem [23]. T o demonstrate the idea of automatic differentiation, w e first consider differen tiable functions f , φ 1 , φ 2 , φ 3 : R → R with the function comp osition f = φ 3 ◦ φ 2 ◦ φ 1 , with the assumption that φ 1 , φ 2 , and φ 3 are elemen tary functions with priorly av ailable individual function deriv atives d φ 1 d x , d φ 2 d x , and d φ 3 d x (for an y x ∈ R ). At a giv en p oint x = c , we can compute the v alue of f through the sequence of successive ev aluations sho wn in T able 1. W e refer to this sequence of computations as the forwar d p ass . T able 1: Schema for reverse-mode automatic differentiation. F orw ard Pass Bac kward Pass Computational graph v 0 = c v 1 = φ 1 ( v 0 ) v 2 = φ 2 ( v 1 ) v 3 = φ 3 ( v 2 ) f ( c ) = v 3 ¯ v 3 = 1 ¯ v 2 = ¯ v 3 d v 3 d v 2 ¯ v 1 = ¯ v 2 d v 2 d v 1 ¯ v 0 = ¯ v 1 d v 1 d v 0 ¯ c = ¯ v 0 v 0 v 1 v 2 v 3 ¯ v 0 ¯ v 1 ¯ v 2 ¯ v 3 φ 1 φ 2 φ 3 d v 3 d v 2 d v 2 d v 1 d v 1 d v 0 F orward pass Bac kward pass The gradient is ev aluated through an accumulation of in termediate v alues calculated for the individual elementary functions and their deriv atives. In the ev aluation trace shown in T able 1, w e follo w the notation in the literature [34, 40, 41] and index the v ariables stored in the memory as v i , with i ≤ 0 for the input v ariables, and i > 0 for the intermediate computed v ariables. T o calculate the deriv ative of f at x = c , we use the c hain rule of differentiation: d f d x x = c = d φ 3 d x x = φ 2 ( φ 1 ( c )) · d φ 2 d x x = φ 1 ( c ) · d φ 1 d x x = c . T o ev aluate this deriv ative, w e p erform a backw ard pass ( i.e. , a reversed sequence of computations) during whic h w e asso ciate eac h intermediate v ariable from the forw ard pass, v i , with a new adjoint v ariable ¯ v i = d f d v i ; we then ev aluate ¯ v i from i = 3 to i = 0 . This is sho wn in T able 1. Noting that d v i +1 d v i = d φ i +1 d x x = v i , (1) w e can see that each step in the backw ard pass requires as input not only the deriv ative v alues calculated in the previous steps, but also the intermediate v ariables calculated during the forward pass. This is illustrated in the computational graph in T able 1. Thus, to calculate the final deriv ative v alue, we need to: 1. iden tify the deriv atives of the elementary functions φ 1 , φ 2 , and φ 3 , 2. p erform a forward pass ev aluation of the function and store the intermediate v alues calculated, and 3. p erform a backw ard pass to accum ulate the final deriv ative. This rev erse-mo de automatic differentiation scheme calculates the exact deriv ative v alue (up to floating p oin t errors) without relying on the closed form expression of the deriv ative; instead, it relies only on the structure of the computational graph. 3 F rom T able 1, w e can see that for the reverse-mode AD gradien t calculation, the v alues of the intermediate v ariables calculated during the forw ard pass are shared with the backw ard pass; eac h elemen tary expression is only computed once but reused multiple times. This ensures that the gradien t calculation is v ery e fficien t computationally . In fact, for functions of the form f : R n → R , if the function ev aluation (forward pass) requires ops ( f ) = N floating point operations, then the num b er of floating p oin t op erations required for the gradient ev aluation (backw ard pass) is alwa ys given by ops ( ∇ f ) = k · ops ( f ) = kN , with 0 < k < 6 a constant , suc h that k typically satisfies k ∈ [2 , 3] , no matter the v alue of n [34, 40]. In other words, for rev erse-mo de AD, barring memory limitations, the time required to calculate the gradient ∇ f is always within an order of magnitude of the time required to calculate the function v alue itself. This is known as the che ap gr adient principle . As such, the reverse-mode AD pro cedure is ideally suited to provide gradien t-based iterations aiming at solving of optimization problems. A quintessen tial case in p oint is machine learning with neural net works–the ‘training step’ in large neural netw orks boils do wn to the numerical optimization of an error metric inv olving a large num b er of functional comp ositions and up to millions of input parameters. In such a situation, the deriv ation of the closed-form gradient with p en and pap er is simply out of reach. Thus, the implementation of gradient descent pro cedures that use the AD framework has b een key to the recent meteoric rise in machine learning applications. In contrast to the neural netw ork case, a typical error metric for the phase retriev al problem only inv olves a limited num b er of functional comp ositions, and the closed form gradien ts can b e calculated man ually . This has b een demonstrated in prior phase retriev al literature: the Wirtinger flo w metho d and its v arian ts [24, 25, 42], the PIE family of metho ds [32], and n umerous other metho ds prop osed in the literature [14, 16, 43, 22, 29] are examples of gradient-based descent pro cedures that rely on such closed-form gradient expressions. How ever, the impressiv ely flexible AD framework not only simplifies these selfsame gradient calculations, but also allows us to modify the forward mo del; this emp ow ers us to address the full range of problems that are related to phase retriev al. Consequently , w e expect that the AD framew ork should also greatly b enefit the phase retriev al communit y . As we demonstrate in Section 3 for the ptyc hographic problem, the error metric for the phase retriev al problem is a scalar-v alued multiv ariate ob jective function of complex v ariables, f : C n → R [44, 16]. T o minimize such a function using a gradient descen t approach, we adopt the Wirtinger gradien t descen t formalism [45, 46, 47]. F or some z ∈ C n , the Wirtinger gradien t op erator is defined as ∇ f ( z ) = ∂ f ∂ z ∗ = 1 2 ∂ f ∂ R [ z ] + i ∂ f ∂ I [ z ] , (2) where i = √ − 1 , z ∗ is the element-wise complex conjugate of z , R [ z ] and I [ z ] are resp ectively the real and imaginary parts of z , and ∂ f ∂ R [ z ] and ∂ f ∂ I [ z ] the comp onent wise partial deriv atives with resp ect to the real and imaginary parts of z . Since the partial deriv atives ∂ f ∂ R [ z ] and ∂ f ∂ I [ z ] are b oth individually real-v alued, w e can calculate them by separately using the reverse-mode AD framew ork in the same fashion as shown in T able 1. This ensures that the gradient calculation pro cedure is v ery efficient, with the time cost once again comparable to that for the ob jective function itself. 3 V alidation: F ar-field transmission ptyc hograph y In this section, we first establish a forward mo del for far-field transmission ptyc hography . W e then use rev erse-mo de AD to set up a gradient descent procedure that is, b y construction, equiv alent to the ePIE reconstruction metho d [32] which uses a closed-form gradien t expression. W e compare these frameworks numerically and establish that the automatic differentiation framework calcu- lates gradient v alues that are iden tical to those calculated via the closed-form gradien t expressions. This comparison to the w ell-known ePIE metho d serv es to establish the v alidity of the AD frame- w ork for phase retriev al. The ePIE metho d, how ev er, cannot b e used out-of-the-b ox for ob ject 4 reconstruction once we mo dify the ptyc hographic forw ard mo del–as suc h, it is not well-suited for use with the AD framework. Instead, we demonstrate that w e can use the flexible and easy-to-use state-of-the-art accelerated adaptive gradient descent algorithms (like the Adaptiv e Momen t Esti- mation or A dam algorithm [48]) that are commonly av ailable out-of-the-b ox with AD softw are to efficien tly solve the ptyc hographic reconstruction problem. 3.1 F orward mo del for far-field transmission ptyc hograph y In far-field transmission ptyc hography , an unkno wn ob ject is illuminated with a coherent b eam, called the prob e, whic h is lo calized to a small area on the ob ject. The intensit y of the w av efront transmitted through the ob ject is then measured at the far field b y a pixel-arra y detector. The b eam is used to raster scan the ob ject in a grid of J spatially ov erlapping illumination spots, generating a set of J diffraction patterns at the detector plane. The forw ard mo del for far-field pt ychograph y consists of the following steps: 1. The complex v alued ob ject transmission function O is appro ximated b y a total of N pixels in the ob ject plane. F or the illumination p osition r j , a shift op erator S j , with an M × N matrix represen tation, extracts the M ob ject pixels illuminated by the prob e b eam P con taining M pixels in the ob ject plane. The thus transmitted wa ve function is represen ted by the exit w av e ψ j ∈ C M : ψ j = P ( S j O ) for j = 1 , 2 , ..., J ; (3) where is the elemen t-wise Hadamard pro duct op erator. 2. Eac h transmitted exit wa ve ψ j propagates to the far field detector plane, where the detec- tor then records a real-v alued intensit y pattern containing M pixels. If there is no noise fluctuation, the exp ected intensit y of this j th wa ve-field at the detector plane reads h j = | F ψ j | 2 + ν j (4) where ν j is the exp ected level of background even ts, F is the matrix represen tation of the t wo-dimensional digital F ourier transform, and | · | extracts the mo dulus element-wise for a complex-v alued vector. 3. The mo del in Eq. (4) describ es the b ehavior of the detected intensit y in av erage. During an exp eriment, eac h diffraction pattern is sub ject to random fluctuations pro duced by the instrumen tal and the Poisson coun ting fluctuations (shot-noise). If one further assumes that the instrumen tal (thermal) noise is negligible, a Gaussian additive p erturbation is usually accurate enough to describ e ho w the counting fluctuation plagues the square-root of the measured intensit y (see, for instance, [22] and references therein): y 1 / 2 j = h 1 / 2 j + ε j (5) where ε j is a centered Gaussian random vector. The ab o ve relations are the forward (observ ation) mo del that predicts how the observ ations { y 1 / 2 j } J j =1 b eha ve when the sample and the prob e are join tly given. The inv ersion/reconstruction step simply aims at retrieving b oth these quantities from the observ ations. F or that purp ose, the maxim um likelihoo d estimator defines the solution of this reconstruction step via a minimization problem that can b e easily derived from the fluctuation mo del of Eq. (5). In our case it leads to ( O ? , P ? ) ∈ argmin O , P g ( O , P ) (6) where g is a separable fitting-function that reads g = J X j =1 g j with g j ( O , P ) := || y 1 / 2 j − h 1 / 2 j ( O , P ) || 2 (7) where k·k denotes the usual Euclidian norm in C N , and h 1 / 2 j and y 1 / 2 j denote the comp onent wise square ro ots of the vectors h j and y j resp ectiv ely . While this fitting-function has b een used in 5 the phase retriev al literature for decades, the re exist some alternativ e noise mo dels that would in turn provide alternative functionals to minimize [22, 4]. In an y case, the optimization step derived from the noise mo del in volv es a large-scale phase retriev al problem that is rather c hallenging. Phase retriev al problems are NP-hard problems b ecause of their inherent non-conv ex structure and the search for go o d and computationally efficient heuristics is still an op en problem. The o verlapping b etw een successive scanning prob e is how ever recognized as a key factor that helps in prev enting stagnation for standard, deriv ative-based iterations. Thanks to AD, these deriv atives can b e efficien tly computed and further used by one of the iterative solvers that will b e discussed in the next section. 3.2 Iterativ e ptyc hographic reconstruction with rev erse-mo de AD: ePIE and A dam W e first consider the extende d Ptycho gr aphic al Iter ative Engine or ePIE algorithm [5], which can b e summarized in a generalized form in Algorithm 1. Algorithm 1 Generalized ePIE gradient descent iteration Require: Probe and ob ject initial guesses P 0 and O 0 . Require: Minibatc h size b . F or the original ePIE pro cedure, b = 1 . 1: Randomly shuffle the prob e p ositions J = { 1 , 2 , ..., J } shuffle − − − − → J 0 . 2: for k = 1 to J /b do 3: With the prob e p ositions indexed as j ∈ J 0 , calculate the partial deriv atives: ∂ O g k := bk X j = b ( k − 1)+1 ∂ O g ( O k − 1 j ) and ∂ P g k := bk X j = b ( k − 1)+1 ∂ P g ( P k − 1 j ) (8) 4: Calculate the step sizes α k O := 1 / P k − 1 2 max and α k P := 1 / bk X j = b ( k − 1)+1 S ∗ j O k − 1 2 max . (9) where k·k max denotes the maximum element of the enclosed v ector. 5: Set O k = O k − 1 − α k O ∂ O g k and P k = P k − 1 − α k P ∂ P g k (10) 6: end for With b = 1 , Algorithm 1 exhibits one iteration of the ePIE pro cedure, during which it uses the information in single diffraction patterns to cyclically up date the current prob e and ob ject estimates. The core (or “Engine”) of this up date is the correction (Eq. (10)) computed from the considered prob e p osition j ∈ J 0 using the deriv ative of g with resp ect to the sample O and the prob e P . In its original formulation, the ePIE pro cedure uses the well-kno wn closed form expression of these deriv atives (see Eqs. (6) and (7) in [5]) to compute these up dates. Alternativ ely , we can use the reverse-mode AD pro cedure for the numerical computation of these deriv atives b y relying on the Wirtinger formalism (Eq. (2)) for complex deriv atives. The deriv ative with respect to the real and imaginary parts of the complex v ariable are ev aluated separately , and they are then accumulated according to the equations ∂ O g k := 1 2 ∂ ∂ g R [ O ] O k − 1 + i ∂ g ∂ I [ O ] O = O k − 1 ! (11) and ∂ P g k := 1 2 ∂ g ∂ R [ P ] P = P k − 1 + i ∂ g ∂ I [ P ] P = P k − 1 ! . (12) The AD version of ePIE is mathematically equiv alent to the original ePIE [5]; thus, b oth the 6 algorithms should generate the same sequence of iterates. W e refer to the AD version of the ePIE solv er as AD-ePIE. In Fig. 1 we present a simplified representation of the computational graph structure that generates the AD-ePIE iterates (see also T able 1). W e can see from the figure that the mo dular rev erse-mo de AD framew ork is agnostic as to the choice of the error metric, the update scheme, and even to the forw ard mo del itself; it generalizes straightforw ardly to any v ariations in these. W e demonstrate this in Section 4, where we v ary b oth the forward mo del and the up date scheme. Ob ject( O ) ψ j = P ( S j O ) h j = k F ψ j k 2 + ν j g ( O ) = J X j =1 √ y j − p h j 2 Prob e( P ) In tensities( y j ) F ar-field detection ∂ g ∂ O ∗ = ∂ ψ j ∂ O ∗ ∂ g ∂ ψ j ∂ g ∂ ψ j = ∂ y j ∂ ψ j ∂ g ∂ h j ∂ g ∂ y j Illumination p osition j Error function Fig. 1. Simplified representation of the forw ard and backw ard passes for the ob ject up date for far-field ptyc hography . The solid blue arrows indicate the forward pass direction. The dashed orange arrows indicate the backw ard pass direction. A natural extension to the ePIE/AD-ePIE algorithm is to use several prob e p ositions to com- pute a single update: that is, to increase the minibatc h size b ≥ 1 in Algorithm 1. In this p ersp ective, Algorithm 1 belongs to a larger family of iterates that takes adv an tage of the natural partitioning of the dataset [22]. Similar or iden tical optimization strategies are indeed well known under different names in sev eral comm unities, such as ‘ordered-subset’ in image pro cessing [49], ‘incremen tal gradient’ [50, Chapter 1] in the optimization literature, or ‘sto chastic gradient’ for neural-net work learning algorithms [51]. A salient feature of ePIE/AD-ePIE is that the chosen step sizes α k O and α k P (Eq. (9)) for the ob ject and probe up dates respectively equate to the in verse of the Lipschitz c onstant of the partial gradients ∂ O g k and ∂ P g k [33]. This choice of step sizes is well-kno wn in the optimization literature, and is particularly useful in a batc h gradien t descent setting where the deriv atives in the up date (Eq. (10)) are calculated using al l av ailable prob e p ositions at once (setting b = J ). As an example, with a steep est-descent iteration algorithm [50] these step sizes would then ensure global con vergence (tow ard a lo cal minimizer). How ever, the Lipsc hitz constants are not usually known a priori ; they generally hav e to b e carefully derived using the closed-form gradien t expressions [33]. Changes to the forw ard mo del, or the inclusion of additional terms in the error metric (e.g. regularizers), can thus require a re-deriving of b oth the closed form gradient expression and the Lipsc hitz constan t. As such, using the Lipschitz constan ts to calculate the step sizes would negate the flexibility that is the hallmark of the AD pro cedure. T o circumv ent this limitation and enable phase retriev al within the AD framework, we can substitute the ePIE/AD-ePIE method with a c hoice of state-of-the-art adaptiv e gradien t descent algorithms that are widely used in the machine learning literature [52], such as Momentum, Nes- tero v Momen tum, Adagrad, Adadelta, RMSProp, or A dam. The performance of these exotic metho ds sp ecifically in phase retriev al applications is a new but promising area of researc h, with the recent literature [32, 53, 54] demonstrating that momentum-based accelerated gradien t descen t metho ds con verge to a solution faster than standard gradien t descen t metho ds. In this w ork, we use the A dam (Adaptiv e Moment Estimation) gradient descent procedure [48] that uses a momentum- lik e acceleration and, crucially , do es not rely on the Lipsc hitz constant for the gradien t descent. As suc h, the Adam metho d is robust to changes in the error metric and therefore well-suited to phase retriev al applications. The A dam optimization metho d is av ailable out-of-the-b ox in the commonly used AD to olsets T ensorFlow [35] and PyT orch [36], so that it can be used directly without first implemen ting the underlying algorithm. Nevertheless, for the sake of clarity , w e present in App endix A the parameter initialization step and the v ariable update step that mak e up the A dam optimizer 7 for the ob ject v ariable (the probe up dates are similarly calculated). T o solve the pty chograph y problem, we incorp orate the A dam optimizer in a fashion iden tical to Algorithm 1, but with steps 4 and 5 substituted with the up date computed from the A dam optimizer. In Section 3.3, w e pro vide a minimal comparison of p erformance of the Adam method to the ePIE/AD-ePIE metho d b y iterating through the individual prob e p ositions and calculating the ob ject and prob e up dates separately p er prob e p osition. In Section 3.4, w e presen t the search strategy used for the choice of the Adam hyp erp ar ameters (viz., the initial prob e up date step size, the initial ob ject up date step size, and the minibatch size) adopted in this work, and provide heuristic guidelines for the choice of h yp erparameters to ac hieve computationally efficient ptyc hographic reconstruction. Beyond our heuristic approach, a more detailed ev aluation of the application of the A dam algorithm for phase retriev al is an imp ortant research question but is b ey ond the scop e of this pap er. 3.3 Numerical results F or a numerical v alidation of the rev erse-mo de AD pro cedure, w e sim ulated a far-field transmis- sion ptyc hography exp eriment with an incident x-ra y of energy 8 . 7 ke V. W e used the ‘Mandrill’ and ‘Cameraman’ images, eac h 128 × 128 pixels in size, as the test ob ject magnitude and phase resp ectiv ely , and embedded the test ob ject at the cen ter of a simulation b ox of size 190 × 190 pixels (with tight supp ort). W e illuminated the test ob ject with a complex-v alued prob e function appro ximated using an arra y of size 64 × 64 pixels and with a total integrated intensit y of 10 6 photons at the ob ject plane. The prob e function w as obtained by propagating the exit wa v e from a square ap erture of width 7 µ m to a distance of ζ = 15 cm so that it contained diffraction fringes c haracterized by √ λζ = 4 . 6 µ m. The raster grid for the ob ject scan was obtained b y translating the prob e latitudinally and longitudinally in steps of 3.5 µ m (6 pixels). The real-space pixel grid was obtained by assuming exact Nyquist sampling with respect to a detector with a pixel pitch of 55 µ m placed at a distance of 14 . 6 m from the ob ject. W e thereby generated 484 far-field diffraction patterns at the detector p osition, then incorp orated P oisson noise into these diffraction patterns to get the simulated far-field data p oints. 0 50 100 150 Iterations 1 0 3 1 0 4 1 0 5 g ( , ) ePIE AD-ePIE Adam 0.0 0.5 1.0 / 4 0 / 4 (a) (b) (c) 0 50 100 150 Iterations 0.0 0.2 0.4 0.6 0.8 Recons. err. ePIE AD-ePIE Adam 1 0 0 1 0 1 1 0 2 0 (d) (e) (f ) Fig. 2. The v alue of the (a) error metric g ( O , P ) and (d) the normalized reconstruction error for the ob ject for the ePIE metho d, the AD-ePIE metho d, and the Adam metho d for the far-field pt ychograph y exp eriment. Adam reconstructions for the (b) ob ject magnitude, (c) ob ject phase, (e) prob e magnitude, and (f ) probe phase. The normalized ro ot-mean-squared reconstruction error (NRMSE) was 0.03 for the ob ject and 0.02 for the prob e. Our implemen tation of the AD-based pt ychographic reconstruction framework follows previous w ork [38, 39] and uses the T ensorFlow [35] machine learning library for the gradien t calculation. 8 F or the reconstruction, we used a 7 µ m (12 pixels) square aperture as the initial prob e guess, and a random complex arra y as the initial ob ject guess. Using the same starting parameters, we p erformed 150 iterations through the data set (with the prob e v alue held constant for the first iteration) for the ePIE, AD-ePIE, and the Adam metho ds, with the same randomized sequence of diffraction patterns for all three algorithms. F or the Adam metho d, we used initial up date step sizes 0 . 1 and 0 . 001 for the prob e and ob ject resp ectively . As we demonstrate in Fig. 2, the AD-ePIE metho d follo wed the same reconstruction path as the ePIE metho d for b oth the error metric g ( O , P ) , and the normalized ob ject reconstruction error calculated p er pixel from the ground truth [55]. This demonstrates that the reverse-mode AD framework described in this pap er calculates gradient v alues n umerically identical to those calculated using the closed form symbolic deriv atives [5]. Additionally , reconstruction using the A dam algorithm also conv erges to the same final prob e and ob ject structures as the ePIE algo- rithm: the final Adam ob ject and prob e structures only differ by 5% and 4% resp ectiv ely from the corresp onding final ePIE structures. The reconstruction algorithms implemen ted in this work do not use any adv anced domain decomp osition tec hniques to parallelize the ptyc hographic reconstruction [56, 38]; they iterate sequen tially through minibatches of diffraction patterns. When these algorithms were implemen ted to run on a single 3.00 GHz Intel Xeon processor with a minibatch b = 1 , the runtime for eac h minibatc h up date for the forward mo del and the ePIE algorithm (implemented with the numpy library in Python) w ere found to be ≈ 1 ms, and ≈ 1 . 2 ms respectively . The corresp onding run time for the AD-ePIE and Adam algorithms (implem en ted with T ensorFlow) were found to b e ≈ 4 ms, and ≈ 5 . 8 ms respectively . Disregarding back end discrepancies, this is in agreement with the exp ected computational costs describ ed in Section 2—the ePIE algorithm implements the sym b olic gradient expressions directly and has a runtime comparable to the forward mo del itself. The AD-ePIE metho d has a runtime that is within a small scaling factor of that for the forw ard model, and the A dam algorithm requires some additional computation for the necessary momen t up dates (Algorithm 2). In practice, the A dam algorithm conv erges to the final solution in 80 iterations ( ≈ 225 s) while the ePIE algorithm requires 150 iterations ( ≈ 87 s) to achiev e con vergence. Once we use larger minibatch sizes, how ev er, as w e demonstrate in Section 3.4, the A dam algorithm can conv erge to a solution muc h faster than the basic ePIE approach. 3.4 Cho osing Adam hyperparameters for efficien t pt ychographic recon- struction In this work, for reconstructions with the Adam algorithm (Algorithms 1 and 2), w e man ually supply three k ey h yp erparameters: the minibatch size b , and the initial A dam ob ject and prob e up date step sizes α A O and α A P . F or optimal p erformance of these reconstruction algorithms, we need to choose hyperparameter v alues that simultaneously optimize the hardware utilization, the time cost p er minibatch up date, and the total n umber of minibatch up dates required to conv erge to a solution—this makes for a difficult research problem [57]. As suc h, in this work, w e take a primarily heuristic tw o-step approach: 1) w e pic k a minibatch size that optimizes the hardw are utilization and time cost per minibatc h up date, and then, with this n umber size fixed, 2) w e p erform a gridsearch to identify the v alues for α A O and α A P that reduce the num b er of iterations required for the reconstruction. Pt ychographic reconstruction with b = 1 , as used in Section 3.3, has optimally low time cost p er minibatch up date, but is not suitable for implementation on parallel computing hardware. In fact, when implemen ted for use in a n VIDIA K40 GPU, this reconstruction procedure has minimal GPU utilization ( < 10% ), and shows no noticeable impro vemen t ov er the CPU version in the time cost p er minibatch up date. This suggests the use of larger minibatc hes for optimal GPU utilization. As we increase the minibatc h size, how ever, the num b er of intermediate v alues stored in the memory also increases prop ortionally . As such, we need to c ho ose a minibatch size that ensures that the resulting computational graph, including all the intermediate v alues calculated in the forward and backw ard passes, fits within the GPU memory . F or the ptyc hographic dataset sim ulated in Section 3.3, w e can set b = 100 ; this choice combines muc h greater GPU utilization ( > 50% ) with minimal time cost p er minibatch up date ( ≈ 5 . 2 ms), and also maintains sto chasticit y in the gradient directions computed p er up date. With the minibatc h size fixed, we can then p erform a gridsearch to identify the α A O and α A P v alues that lead to fast pt ychographic reconstruction. While there exist sophisticated metho ds to 9 mo dify these parameters within the descent pro cedure [48], our algorithms use the more common approac h that uses fixed step sizes. Similarly , while there exist e arly stopping metho ds to identify the conv ergence of the reconstruction pro cedure [58], w e simply monitor the error metric g ( O , P ) [Eq. (7)] for a fixed, large, n umber of iterations and c ho ose the initial step sizes that give us the lo west v alues for the error metric. T o examine ho w the choice of these hyperparameters affects the reconstruction process at differen t experimental conditions, w e follow ed the pro cedure in Section 3.3, but with the in tegrated prob e intensit y set to 10 3 and 10 9 photons resp ectively to generate tw o additional ptyc hographic datasets. T o reconstruct the ob ject and prob e from each dataset, we used the Adam algorithm with a minibatch b = 100 , and p erformed a gridsearch on a logarithmic grid of initial prob e up date step sizes [100 , 10 , 1 , 0 . 1 , 0 . 01] and initial ob ject up date step sizes of [10 , 1 , 0 . 1 , 0 . 01 , 0 . 001] . (a) (b) (c) Fig. 3. (a) Reconstruction errors (NRMSE) obtained after 1500 iterations of the Adam algorithm (with b = 100 ) as a function of initial ob ject and probe up date step sizes, for incident prob e in tegrated in tensities of 10 3 (left), 10 6 (mid), and 10 9 (righ t) respectively . F or clarity , the NRMSEs plotted are capp ed at 0.6. (b) Final ob ject magnitudes and (c) phases obtained for α A O = 0 . 01 and α P = 1 . 0 . F or the low photon count of 10 3 (left), the reconstructed structures are deteriorated due to the raster grid artifact. In Fig. 3, we show the ob ject NRMSE obtained for eac h of these hyperparameter sets after 1500 iterations (a runtime of ≈ 30 s). W e can see that α A O = 0 . 01 remains an optimal ob ject up date step size for all three exp eriments. The prob e up date step size requires more careful tuning, with α A P = 1 . 0 a go o d starting choice for the tuning procedure. F or ( α A O , α A P ) = (0 . 01 , 1 . 0) , after 1500 iterations, w e obtained ob ject and prob e [ ( O , P ) ] reconstruction errors of (0 . 28 , 0 . 51) , (0 . 03 , 0 . 02) , and (0 . 004 , 0 . 0004) respectively for integrated prob e intensities of 10 3 , 10 6 , and 10 9 . In comparison, when we stop the ePIE algorithm ( b = 1 ) after 150 iterations ( ≈ 87 s) without ensuring conv ergence, w e get the corresp onding reconstructions errors of (0 . 28 , 0 . 50) , (0 . 06 , 0 . 04) , and (0 . 05 , 0 . 008) . This 10 demonstrates that the Adam algorithm, with the provided h yp erparameter search strategy , is a fast and robust choice for ptyc hographic reconstruction within the AD framework. 4 Applications to other pt yc hographic forw ard mo dels In this section, we apply the reverse-mode AD framew ork developed in Section 3 for the far-field transmission ptyc hography exp eriment to the more complex exp erimental regimes of near-field pt ychograph y and multi-angle Bragg ptyc hography . 4.1 Near-field pt yc hography Just as in the far-field case, the near-field ptyc hography e xp eriment uses a prob e beam to raster scan the ob ject in a grid of spatially ov erlapping illumination sp ots, generating a set of J exit w av es after the prob e-ob ject interaction. The detector, how ever, is placed at a distance z from the ob ject so that the F resnel num b er satisfies the condition W 2 /λz 1 , where λ is the wa v elength of the incident prob e, and W is the lateral extent of the illuminated area [7] at any given prob e p osition. This is the high F resnel num b er condition for the near-field regime. In this regime, given a probe P , an ob ject O , a lateral shift op erator S j , and an exit w av e ψ j = P ( S j O ) , the exp ected in tensity at the detector plane is given by h j = k D z { ψ j }k 2 + ν j (13) where ν j is the exp ected background, and D z is the F resnel free-space propagator for the distance z defined by the expression D z { ψ j } = F − 1 ( F ψ j ) exp − iz λ 4 π q 2 x + q 2 y (14) where ( q x , q y ) the recipro cal space co ordinates [59]. The experimentally measured intensit y pat- terns are again denoted as y j . Detailed characterizations of this near-field pt ychograph y exp erimen t can b e found in [7], [60], [61], and [62]. W e sim ulated a near-field pt ychograph y experiment using an incident 8.7 k e V probe beam appro ximated using an array of size 512 × 512 pixels at the ob ject plane, with the pixel pitch set to 0 . 6 µ m. The probe was initialized as a Gaussian with a FWHM of 19 µ m (50 pixels), then passed through a diffuser and modeled as a speckle pattern with an a verage flux of 10 4 photons/pixel. The sim ulated ob ject consists of the ‘Mandrill’ and ‘Cameraman’ images of size 192 × 192 pixels, mo deled as the ob ject magnitude and phase resp ectively . The raster grid w as obtained by translating the ob ject in the horizontal and vertical in steps of 10 µ m (44 pixels), generating a total of 25 diffraction patterns at a detector placed 4 . 7 cm from the ob ject plane. W e added Poisson noise to the diffraction patterns to obtain our simulated dataset. F or the near-field reconstruction, we obtained an initial probe estimate b y bac kpropagating the a verage measured intensit y , y avg = ( P J j =1 y j ) /J , to giv e P 0 = F − 1 ( F √ y avg ) exp iz λ 4 π ( q 2 x + q 2 y ) . (15) The ob ject was initialized as a 192 × 192 arra y of random complex n umbers. T o solv e for the prob e and ob ject structures, w e again used the A dam optimizer in the approac h outlined in Algorithm 1, but considering 5 prob e p ositions p er iteration ( i.e. with a minibatc h size of 5). The initial Adam up date step sizes w ere set to α A O = 0 . 01 and α A P = 10 for the ob ject and prob e resp ectively . The minibatc h size and initial step size were chosen as describ ed in Section 3.4. After 10,000 iterations of A dam gradien t descen t, we obtained the ob ject and prob e reconstruc- tions shown in Fig. 4. The ob ject w as reconstructed with an NRMSE of 0.01, and the prob e with an NRMSE of 0.12. The discrepancies in the reconstructed probe were contained at the edges of the full-field prob e, in regions which did not in teract with the ob ject and were th us unconstrained. These reconstructions demonstrate that the straigh tforward generalization of the rev erse-mo de AD reconstruction framework from the far-field ptyc hography case ( Fig. 1) to the near-field ptyc hog- raph y case successfully solves the near-field ptyc hograph y phase retriev al problem. 11 0.0 0.5 1.0 0 / 4 (a) (b) 1 0 1 1 0 2 1 0 3 0 (c) (d) Fig. 4. Near-field ptyc hographic reconstruction with reverse-mode AD. Shown here are the suc- cessfully reconstructed (a) ob ject magnitude, (b) ob ject phase, (c) probe magnitude, and (d) prob e phase. The ob ject and prob e w ere reconstructed with ov erall NRMSEs of 0.01 and 0.12 resp ectively . 4.2 Multi-angle Bragg pt ychograph y Multi-angle Bragg pro jection ptyc hography (maBPP) [63] is a pt ychographic exp eriment that al- lo ws for tw o degrees of freedom in the scan parameters: 1) the choice of the planar scan p ositions for the usual tw o-dimensional pt ychographic scan, and 2) the c hoice of angular scan p ositions cor- resp onding to small ob ject rotations of a crystalline ob ject oriented to satisfy a Bragg diffraction condition. The maBPP exp eriment uses the far-field ptyc hography setup, but with the detector placed to measure a crystalline Bragg peak, typically displaced from the direct b eam by tens of degrees. The dete ctor records a set of tw o-dimensional (2D) coheren t diffraction patterns at ov er- lapping scan p ositions at one or more angles near the Bragg diffraction condition. The diffraction patterns are then used to reconstruct a three-dimensional (3D) strain-sensitive image of an ex- tended crystalline sample [64]. An example exp erimental geometry with the exit beam at a 60 ◦ angle to the incident b eam direction is sho wn in fig. 5. T o develop the maBPP forw ard mo del, we consider a 3D diffracting crystal volume O and an illumination volume P . The incident prob e direction k i and the exit b eam direction k f satisfy the Bragg diffraction condition when the scattering vector q = k f − k i coincides with some recipro cal lattice v ector G hkl for the crystal. At the Bragg condition, if the prob e volume interacts with the slice of the ob ject indicated b y the lateral shift op erator S j , then the 2D exit wa ve ψ j is calculated as [64] ψ j = R P ( S j O ) (16) where R is the matrix op erator p erforming the pro jection along the exit b eam direction. When the ob ject is rotated by a small angle ∆ θ j , the scattering v ector deviates from the Bragg condition b y Q j = q j − G hkl . The corresp onding change in the ob ject-prob e interaction can be enco ded in terms of a phase shift operator defined as Q j = exp ( i r · Q j ) . The 2D exit wa ve is then giv en by [63] ψ j = R Q j ( P ( S j O )) , (17) and the exp ected intensit y at the detector plane is then h j = k F ψ j k 2 + ν j = k F R Q j ( P ( S j O )) k 2 + ν j , (18) 12 z x y q z q x q y 2 θ =6 0° k i k f I n c o m i n g s p e c k l e d x - r a y b e a m E x i t w a v e D e t e c t o r S a m p l e (a) y z (b) y z Δθ (c) Fig. 5. (a) Sim ulated exp erimen tal geometry for multi-angle Bragg pt ychograph y . The incident ( k i ) and exit ( k f ) beams define a scanning angle. (b) At each scanning angle, the incident b eam is shifted along an o verlapping raster grid in the y z plane to generate a set of 2D coheren t diffraction patterns. (c) F or angular sampling around the Bragg p eak (say with 2 θ = 60 ◦ ), the sample is rotated through small angles ∆ θ , and the incident and exit b eams are mo dulated corresp ondingly . where ν j accoun ts for the background even ts. The exp erimentally measured in tensities are denoted b y y j . W e can again adapt the forward mode l of the far-field pt ychograph y exp erimen t to the multi- angle Bragg exp eriment by generalizing the prob e and ob ject mo dels to 3D, and applying the angle-dep enden t phase shifts Q j along with the pro jection op erator R . This 3D generalization comes at the cost of a dramatic increase in the parameter space, and the reconstruction b ecomes corresp ondingly more difficult. With this in mind, for ease of reconstruction we use a sp eckle pattern, which was found to help in solving the phase retriev al problem for 2D CDI [65, 66], as a highly diverse structured prob e illumination. W e also impose the commonly applied [64] additional constraints on the reconstruction problem: we assume that the probe structure and the ob ject thickness (along the x − direction) are known in adv ance. F or the multi-angle Bragg ptyc hography experiments, we simulated the ob ject as a compact crystal represented by set of grid p oints inside a faceted volume. W e set the interior p oints to ha ve magnitude 0.5 and a spatially v arying phase structure em ulating a strain field. W e defined the orthonormal real space axes ( x, y , z ) such that eac h ob ject v oxel is of size 66 × 66 × 66 nm 3 , and such that the p olyhedral crystal is situated obliquely within (with ≈ 32% of the volume of ) a cub oid of size 26 × 56 × 50 v oxels ( ≈ 25 µ m 3 ). The cub oid w as itself placed at the cen ter of a sim ulation b ox of total size 64 × 162 × 112 v oxels ( ≈ 334 µ m 3 ). A 64 × 64 pixel detector with a pixel pitch of 55 µ m was placed at a distance of 1.5 m from the ob ject, normal to the real space y -axis. The probe w as initialized as Gaussian b eam of energy 8.7 ke V with an FWHM of 396 nm (6 pixels) contained entirely within a 64 × 64 pixel array , then passed through a diffuser and mo dele d as a sp eckle pattern. The prob e was then interpolated to approximate the incident b eam at a Bragg condition of 2 θ = 60 ◦ (fig. 5) such that k i ⊥ z and k f k y . The b eam profile was assumed to b e constant along the propagation direction during its propagation through the simulation b o x. T o obtain the ptyc hographic data set, w e applied phase mo dulations corresp onding to an angular shift b etw een ± 0 . 14 ◦ around the Bragg condition using an angular step size of ∆ θ = 0 . 02 ◦ , for a total of 15 angles. At eac h such angle, we translated the prob e along the y and z directions with a step size of 132 nm (2 pixels) in a raster grid of 41 × 24 scan p ositions, i.e. with an ov erlap of ≈ 86% p er step in the y direction and ≈ 83% p er step in the z direction. This generated a total of 14760 diffraction patterns, to eac h of whic h w e added Poisson noise, whic h ga ve us a data set 13 with an ov erall intensit y maximum of 13560 photons/pixel. (a) z y 660 nm 1 0 1 1 0 0 1 0 1 (b) y x | O t r u e | | O r e c o n s | 330 nm 0.2 0.0 0.2 (c) (d) z y 660 nm 0 (e) y x [ O t r u e ] [ O r e c o n s ] 330 nm 0 (f ) Fig. 6. Multi-angle Bragg pt ychographic reconstruction with reverse-mode AD. (a) T rue and (d) reconstructed ob ject structure with surface phase v ariation. Y Z cross-section of the (b) prob e magnitude and (e) phase at x = 32 . (c) X Y cross-section of the error in the reconstructions of the ob ject (c) magnitude and (f ) phase at z = 41 . F or the sake of clarity , the error in the phase is set to 0 wherev er kO recons k < 0 . 01 . The normalized error (NRMSE) for the 3D reconstruction is 0.09. During the reconstruction, we solv ed for a volume of size 30 × 78 × 82 v oxels, initialized as a random complex array , and placed centrally in the simulation b ox. The ob ject supp ort along the x − direction is set to b e sligh tly ( ≈ 15% ) larger than the actual ob ject thickness. This allows for the exp ected spreading of the ob ject induced b y resolution effects due to the lo w photon count [67]. T o solve for the ob ject structure, we again adapted the approac h outlined in Algorithm 1 to use the A dam optimizer with the initial up date step size 0 . 01 , and with minibatches each containing 400 diffraction patterns selected in a stochastic fashion irrespective of either prob e position or ob ject rotation. The minibatch size and initial up date step size were chosen as describ ed in Section 3.4. After 70 iterations of A dam gradien t descen t, we obtained a reconstruction of the crystal (and the lo ose supp ort) shown in Fig. 6 The ov erall normalized reconstruction error, calculated using a 3D adaptation of the sub-pixel registration algorithm presented in [55], was found to b e 0.09, which is m uch larger than the NRMSE observed for the 2D ob jects in Sections 3.3 and 4.1. As we can see in Fig. 6, the error in the reconstruction is primarily due to discrepancies at the ob ject edges—this is a physical effect that can b e attributed to the presence of shot noise that corrupts the diffraction data [67]. This results demonstrates that the reconstruction accurately captures the physics of the exp erimen t. 5 Conclusion Our results in this pap er demonstrate that the automatic differentiation technique can b e used to construct a general gradient-based inv ersion framew ork for ptyc hographic phase retriev al. Sp ecifi- cally , w e hav e shown that we can minimize a ptyc hographic error metric by first using reverse-mode AD to numerically calculate the gradients of the error metric, then using these within a state-of- the-art adaptive gradient descent algorithm. W e can thereby solve the ptyc hographic problem without ever p erforming an analytical deriv ation for any closed-form gradient expression. This in version framework is robust to changes in the forward mo del and can b e used iden tically for phase retriev al in suc h v arying experimental configurations as far-field transmission ptyc hography , near-field ptyc hography , and multi-angle Bragg ptyc hography . The inv ersion framework show cased in this work do es not rely on any particular choice of the error metric or the exp erimental configuration. W e can change the error metric either to accommo- 14 date alternate noise models, or to incorporate a priori knowledge ab out the exp eriment through the c hoice of a regularizer. W e can tailor the exp erimen tal setup and introduce new degrees of freedom when necessary , then simply change the forwar d mo del corresp ondingly . A dditionally , by using the state-of-the-art T ensorFlow library for the gradient calculations, w e gain in-built scalabilit y and parallelization (multi-CPU/GPU architectures), th us allowing for con venien t phase retriev al for exp erimental mo dels large and small. In the future, we aim to leverage these flexibilities to in- corp orate a v ariety of physical phenomena–suc h as prob e fluctuations, p ositional inaccuracies, and limitations in the detection process–into the phase retriev al framew ork. This would enable high- resolution phase retriev al from near-field/far-field 3D (or 2D) Bragg and non-Bragg exp eriments, all within a single consistent platform. The flexible AD-based inv ersion framework is exp ected to pro vide a unified approach to phase retriev al for general (ptyc hographic and non-ptyc hographic) CDI exp eriments with x-ra ys, elec- trons, or optical wa ves, even as we mov e tow ards increasingly complex imaging regimes. This w ould p otentially giv e researchers the ability to explore v ariations of basic CDI metho dologies in a con venien t and straightforw ard manner, and could thereb y pro ve a p ow erful addition to the phase retreiv er’s to olbox. A P arameter up date with the A dam optimizer T o examine how the Adam optimizer p erforms the parameter up dates, we consider the far-field transmission ptyc hography setting from Section 3.1, with the error metric g ( O , P ) which is to b e minimized with respect to the ob ject parameter O . The optimization is p erformed in the minibatch setting describ ed in Algorithm 1, wherein the partial deriv ativ e at the k th step, ∂ O g k , is calculated using Eq. (8). The Adam optimization step consists of a parameter initialization step and a v ariable up date step. The initialization step, whic h precedes the gradient descent iterations, is outlined in Al- gorithm 2a. The parameter update step in Algorithm 2b replaces Steps 4 and 5 in the iterativ e descen t setting outlined in Algorithm 1. Typical out-of-the-b ox implementations of the Adam opti- mizer only support gradient descen t for real-v alued v ariables, in which case the real and imaginary comp onen ts of the ob ject O are separately up dated. T o formalize this up date step, we can separate the real and imaginary comp onents of ∂ O g k as ∂ R [ O ] g k = 1 2 ∂ g k ∂ R [ O ] and ∂ I [ O ] g k = 1 2 ∂ g k ∂ I [ O ] (19) suc h that ∂ O g k = ∂ R [ O ] g k + i ∂ I [ O ] g k . W e can no w see that the first and second moment parameters created during the initialization step for R [ O ] (and similarly for I [ O ] ) are subsequently up dated alongside the ob ject v ariable throughout the optimization pro cess. These updates dep end on b oth the current gradien t v alue and an exp onentially w eighted accumulation of all past gradien t v alues, thereby adapting the magnitude of direction of the parameter up date at every step. F or an appropriately chosen initial step size α A O , up dating the optimization parameter in this fashion leads to fast and stable conv ergence [48]. 15 Algorithm 2a Adam ob ject initialization Require: Initial Adam step size α A O . 1: Initialize the moment vectors m 0 R [ O ] ← − 0 m 0 I [ O ] ← − 0 ) First moments, m R [ O ] , m I [ O ] ∈ R N v 0 R [ O ] ← − 0 v 0 I [ O ] ← − 0 ) Second moments, v R [ O ] , v I [ O ] ∈ R N 2: Initialize the exp onential decay rates with their recommended default v alues [48]: β 1 = 0 . 9 ( Deca y rate for m R [ O ] , m I [ O ] ) β 2 = 0 . 999 ( Deca y rate for v R [ O ] , v I [ O ] ) = 10 − 8 ( Constan t used to av oid division by zero ) Algorithm 2b Adam ob ject up date (at step k ) Require: Ob ject estimate O k − 1 and the partial deriv ative ∂ O g k . 1: Update the first and second moments: m k R [ O ] = β 1 m k − 1 R [ O ] + (1 − β 1 ) ∂ R [ O ] g k 1 − ( β 1 ) k v k R [ O ] = β 2 v k − 1 R [ O ] + (1 − β 2 ) ∂ R [ O ] g k 2 1 − ( β 2 ) k (and similarly for m k I [ O ] and v k I [ O ] ). 2: Update the ob ject estimate: R [ O ] k = R [ O ] k − 1 − α A O m k R [ O ] q v k R [ O ] + I [ O ] k = I [ O ] k − 1 − α A O m k I [ O ] q v k I [ O ] + F unding W e thank the following for funding of this work: Lab oratory Directed Research and Developmen t (LDRD) and the Adv anced Photon Source, Argonne National Lab oratory , pro vided by the Director, Office of Science, of the U.S. Department of Energy under Contract No. DE-AC02-06CH11357; National Institutes of Health under R01 MH115265; and the Department of Energy , Office of Science, Basic Energy Sciences, Materials Science and Engineering Division. 16 References [1] W. Hopp e, “ Beugung im inhomogenen Primarstrahlwellenfeld. I I I. Amplituden-und Phasen b estimmung b ei unperio dischen Ob jekten,” A cta Crystal lo gr. A , vol. 25, no. 4, pp. 508– 514, 1969. [2] J. M. Ro denburg and H. M. F aulkner, “A phase retriev al algorithm for shifting illumination,” Appl. Phys. L ett ., vol. 85, no. 20, pp. 4795–4797, 2004. [3] H. M. L. F aulkner and J. Ro denburg, “Mov able ap erture lensless transmission microscopy: A no vel phase retriev al algorithm,” Phys. R ev. L ett ., vol. 93, p. 023903, July 2004. [4] M. Guizar-Sicairos and J. Fienup, “Phase retriev al with transverse translation diversit y: a nonlinear optimization approach,” Opt. Expr ess , vol. 16, pp. 7264–7278, May 2008. [5] A. M. Maiden and J. M. Ro denburg, “An improv ed ptyc hographical phase retriev al algorithm for diffractive imaging,” Ultr amicr osc opy , v ol. 109, pp. 1256–1262, Aug. 2009. [6] P . Thibault, M. Dierolf, O. Bunk, A. Menzel, and F. Pfeiffer, “Prob e retriev al in ptyc hographic coheren t diffractive imaging,” Ultr amicr osc opy , vol. 109, pp. 338–343, Mar. 2009. [7] M. Sto ckmar, P . Clo etens, I. Zanette, B. Enders, M. Dierolf, F. Pfeiffer, and P . Thibault, “Near-field ptyc hograph y: phase retriev al for inline holography using a structured illumina- tion,” Sci. R ep orts , vol. 3, p. 1927, 2013. [8] P . Go dard, G. Carbone, M. Allain, F. Mastropietro, G. Chen, L. Cap ello, A. Diaz, T. Metzger, J. Stangl, and V. Chamard, “Three-dimensional high-resolution quantitativ e microscopy of extended crystals,” Nat. Commun ., vol. 2, p. 568, 2011. [9] A. M. Maiden, M. J. Humphry , and J. M. Rodenburg, “Pt ychographic transmission microscop y in three dimensions using a multi-slice approach,” J. Opt. So c. Am. A , v ol. 29, pp. 1606–1614, Aug. 2012. [10] M. Dierolf, A. Menzel, P . Thibault, P . Sc hneider, C. M. Kewish, R. W epf, O. Bunk, and F. Pfeiffer, “Ptyc hographic x-ra y computed tomography at the nanoscale,” Nat ., vol. 467, pp. 436–439, Sept. 2010. [11] M. A. Gilles, Y. S. G. Nashed, M. Du, C. Jacobsen, and S. M. Wild, “ 3D x-ra y imaging of con tinuous ob jects b eyond the depth of fo cus limit,” Opt ., v ol. 5, pp. 1078–1085, 2018. [12] R. H. T. Bates, “F ourier phase problems are uniquely solv able in more than one dimension. I. Underlying theory ,” Optik , v ol. 61, pp. 247–262, 1982. [13] R. W. Gerc hberg and W. O. Saxton, “A practical algorithm for the determination of phase from image and diffraction plane pictures,” Optik , vol. 35, no. 2, pp. 237–246, 1972. [14] J. R. Fienup, “Phase retriev al algorithms: a comparison,” Appl. Opt ., v ol. 21, no. 5, pp. 2758– 2769, 1982. [15] V. Elser, “Phase retriev al by iterated pro jections,” J. Opt. So c. A m. A , v ol. 20, no. 1, pp. 40–55, 2003. [16] S. Marchesini, “A unified ev aluation of iterativ e pro jection algorithms for phase retriev al,” R ev. Sci. Instruments , v ol. 78, p. 011301, Jan. 2007. [17] J. Clark, X. Huang, R. Harder, and I. Robinson, “High-resolution three-dimensional partially coheren t diffraction imaging,” Nat. Commun ., vol. 3, p. 993, 2012. [18] P . Thibault and A. Menzel, “Reconstructing state mixtures from diffraction m easuremen ts,” Nat ., vol. 494, pp. 68–71, F eb. 2013. [19] A. T ripathi, I. McNult y , and O. G. Shpyrk o, “Ptyc hographic ov erlap constraint errors and the limits of their n umerical reco very using conjugate gradient de scen t metho ds,” Opt. Expr ess , v ol. 22, no. 2, pp. 1452–1466, 2014. 17 [20] P . Dwivedi, A. Konijnen b erg, S. Pereira, and H. Urbach, “Lateral p osition correction in pty- c hography using the gradien t of intensit y patterns,” Ultr amicr osc opy , vol. 192, pp. 29–36, 2018. [21] P . Thibault and M. Guizar-Sicairos, “Maximum-lik eliho o d refinement for coherent diffractiv e imaging,” New J. Phys ., vol. 14, p. 063004, June 2012. [22] P . Go dard, M. Allain, V. Chamard, and J. Ro denburg, “Noise mo dels for lo w coun ting rate coheren t diffraction imaging,” Opt. Expr ess , vol. 20, pp. 25914–25934, Nov 2012. [23] A. S. Jurling and J. R. Fienup, “Applications of algorithmic differentiation to phase retriev al algorithms,” JOSA A , vol. 31, no. 7, pp. 1348–1359, 2014. [24] E. J. Candes, X. Li, and M. Soltanolkotabi, “ Phase retriev al via Wirtinger flow: theory and algorithms,” IEEE T r ansactions on Inf. The ory , vol. 61, pp. 1985–2007, apr 2015. [25] H. Zhang and Y. Liang, “Reshap ed wirtinger flow for solving quadratic system of equations,” A dv. Neur al Inf. Pr o c ess. Syst ., v ol. 29, pp. 2622–2630, 2016. [26] Z. W ei, W. Chen, C.-W. Qiu, and X. Chen, “ Conjugate gradien t method for phase retriev al based on the Wirtinger deriv ative,” J. Opt. So c. Am. A , vol. 34, p. 708, may 2017. [27] J. Zhong, L. Tian, P . V arma, and L. W aller, “Nonlinear optimization algorithm for partially coheren t phase retriev al and source reco very ,” IEEE T r ansactions on Comput. Imaging , vol. 2, no. 3, pp. 310–322, 2016. [28] J. Li and T. Zhou, “Numerical optimization algorithms for w av efront phase retriev al from m ultiple measurements,” Inverse Pr obl. & Imaging , v ol. 11, no. 4, pp. 721–743, 2017. [29] J. Qian, C. Y ang, A. Schirotzek, F. Maia, and S. Marchesini, “Efficien t algorithms for ptyc ho- graphic phase retriev al,” Inverse Pr obl. Appl. Contemp. Math , v ol. 615, pp. 261–280, 2014. [30] A. W. Y an, A. J. D’A lfonso, A. J. Morgan, C. T. Putkunz, and L. J. Allen, “F ast deterministic pt ychographic imaging using x-rays,” Micr osc. Micr o anal ., v ol. 20, no. 4, pp. 1090–1099, 2014. [31] L.-H. Y eh, “Analysis and comparison of fourier ptyc hographic phase retriev al algorithms,” tec h. rep., T echnical Rep ort No. UCB/EECS-2016-86 (Universit y of California, 2016), 2016. [32] A. M. Maiden, D. Johnson, and P . Li, “F urther impro vemen ts to the pt ychographical iterative engine,” Opt ., vol. 4, no. 7, pp. 736–745, 2017. [33] R. Hesse, D. R. Luke, S. Sabach, and M. K. T am, “Proximal heterogeneous blo ck implicit- explicit method and application to blind ptyc hographic diffraction imaging,” SIAM J. on Imaging Sci ., vol. 8, no. 1, pp. 426–457, 2015. [34] A. Griew ank and A. W alther, Evaluating derivatives: principles and te chniques of algorithmic differ entiation , v ol. 105. SIAM, 2008. [35] M. Abadi, A. Agarwal, P . Barham, E. Brevdo, Z. Chen, C. Citro, G. S. Corrado, A. Davis, J. Dean, M. Devin, S. Ghemaw at, I. J. Go o dfellow, A. Harp, G. Irving, M. Isard, Y. Jia, R. Józefowicz, L. Kaiser, M. Kudlur, J. Leven b erg, D. Mané, R. Monga, S. Mo ore, D. G. Murra y , C. Olah, M. Sch uster, J. Shlens, B. Steiner, I. Sutskev er, K. T alwar, P . A. T uck er, V. V anhouck e, V. V asudev an, F. B. Viégas, O. Vin yals, P . W arden, M. W attenberg, M. Wick e, Y. Y u, and X. Zheng, “T ensorflow: Large-scale machine learning on heterogeneous distributed systems,” CoRR , vol. abs/1603.04467, 2016. [36] A. Paszk e, S. Gross, S. Chintala, G. Chanan, E. Y ang, Z. De Vito, Z. Lin, A. Desmaison, L. Antiga, and A. Lerer, “Automatic differentiation in pytorc h,” in NIPS-W , 2017. [37] D. Maclaurin, Mo deling, infer enc e and optimization with c omp osable differ entiable pr o c e dur es . PhD thesis, Harv ard Universit y , 2016. [38] Y. S. G. Nashed, T. Peterk a, J. Deng, and C. Jacobsen, “Distributed automatic differentiation for ptyc hography ,” Pr o c e dia Comput. Sci ., vol. 108, pp. 404–414, 2017. 18 [39] S. Ghosh, Y. S. Nashed, O. Cossairt, and A. Katsaggelos, “ ADP : Automatic differentiation pt ychograph y,” 2018 IEEE Int. Conf. on Comput. Photo gr. (ICCP) , 2018. [40] A. G. Baydin, B. A. Pearlm utter, A. A. Radul, and J. M. Siskind, “ Automatic differentiation in machine learning: a survey,” J. Mach. L e arn. R es ., vol. 18, pp. 1–43, 2015. [41] P . H. Hoffmann, “A hitchhik er’s guide to automatic differentiation,” Numer. Algorithms , v ol. 72, no. 3, pp. 775–811, 2016. [42] L. Bian, J. Suo, G. Zheng, K. Guo, F. Chen, and Q. Dai, “F ourier pt ychographic reconstruction using wirtinger flow optimization,” Opt. Expr ess , vol. 23, no. 4, pp. 4856–4866, 2015. [43] Z. W en, C. Y ang, X. Liu, and S. Marchesini, “Alternating direction metho ds for classical and pt ychographic phase retriev al,” Inverse Pr obl ., vol. 28, no. 11, p. 115010, 2012. [44] J. R. Fienup, T. R. Crimmins, and W. Holszt ynski, “Reconstruction of the supp ort of an ob ject from the supp ort of its autocorrelation,” J. Opt. So c. Am ., vol. 72, no. 5, pp. 610–624, 1982. [45] D. Brandw o o d, “A complex gradien t op erator and its application in adaptiv e arra y theory ,” IEE Pr o c. F-Communic ations, R adar Signal Pr o c ess ., v ol. 130, no. 1, pp. 11–16, 1983. [46] K. Kreutz-Delgado, “The complex gradient op erator and the cr-calculus,” arXiv pr eprint arXiv:0906.4835 , 2009. [47] L. Sorb er, M. V. Barel, and L. D. Lathau wer, “Unconstrained optimization of real functions in complex v ariables,” SIAM J. on Optim ., vol. 22, no. 3, pp. 879–898, 2012. [48] D. P . Kingma and J. Ba, “Adam: A metho d for sto chastic optimization,” CoRR , v ol. abs/1412.6980, 2014. [49] S. Ahn and J. A. F essler, “Globally con vergen t image reconstruction for emission tomography using relaxed ordered subsets algorithms,” IEEE T r ansactions on Me d. Imaging , v ol. 22, no. 5, pp. 613–626, 2003. [50] D. P . Bertsek as, Nonline ar pr o gr amming . Athena Scientific, 1999. [51] Y. A. LeCun, L. Bottou, G. B. Orr, and K.-R. Müller, “Efficient backprop,” in Neur al networks: T ricks of the tr ade , pp. 9–48, Springer, 2012. [52] S. Ruder, “An ov erview of gradient descent optimization algorithms,” arXiv pr eprint arXiv:1609.04747 , 2016. [53] E. J. R. P auw els, A. Beck, Y. C. Eldar, and S. Sabach, “On fien up metho ds for sparse phase retriev al,” IEEE T r ansactions on Signal Pr o c ess ., vol. 66, no. 4, pp. 982–991, 2018. [54] R. Xu, M. Soltanolk otabi, J. P . Haldar, W. Unglaub, J. Zusman, A. F. Levi, and R. M. Leah y , “Accelerated wirtinger flow: A fast algorithm for ptyc hography ,” arXiv pr eprint arXiv:1806.05546 , 2018. [55] M. Guizar-Sicairos, S. T. Thurman, and J. R. Fienup, “Efficien t subpixel image registration algorithms,” Opt. L ett ., v ol. 33, pp. 156–158, Jan 2008. [56] Y. S. Nashed, D. J. Vine, T. Peterk a, J. Deng, R. Ross, and C. Jacobsen, “Parallel ptyc ho- graphic reconstruction,” Opt. Expr ess , vol. 22, no. 26, p. 223015, 2014. [57] J. Bergstra and Y. Bengio, “Random searc h for hyper-parameter optimization,” J. Mach. L e arn. R es ., vol. 13, no. F eb, pp. 281–305, 2012. [58] Y. Y ao, L. Rosasco, and A. Cap onnetto, “On early stopping in gradient descen t learning,” Constr. Appr ox ., vol. 26, no. 2, pp. 289–315, 2007. [59] J. W. Go o dman, Intr o duction to F ourier Optics . W.H. F reeman, 2017. [60] M. Sto ckmar, I. Zanette, M. Dierolf, B. Enders, R. Clare, F. Pfeiffer, P . Cloetens, A. Bonnin, and P . Thibault, “ X-ray near-field ptyc hography for optically thick sp ecimens,” Phys. R ev. Appl ., vol. 3, no. 1, pp. 1–6, 2015. 19 [61] R. M. Clare, M. Sto c kmar, M. Dierolf, I. Zanette, and F. Pfeiffer, “ Characterization of near- field ptyc hography,” Opt. Expr ess , vol. 23, no. 15, p. 19728, 2015. [62] A. L. Robisch, K. Kröger, A. Rack, and T. Salditt, “ Near-field ptyc hography using lateral and longitudinal shifts,” New J. Phys ., vol. 17, no. 7, 2015. [63] M. O. Hill, I. Calvo-Almazan, M. Allain, M. V. Holt, A. Ulv estad, J. T reu, G. Koblm uller, C. Huang, X. Huang, H. Y an, et al. , “Measuring three-dimensional strain and structural defects in a single InGaAs nano wire using coheren t x-ray multiangle Bragg pro jection ptyc hography ,” Nano L ett ., vol. 18, no. 2, pp. 811–819, 2018. [64] S. O. Hruszk ewycz, M. Allain, M. V. Holt, C. E. Murray , J. R. Holt, P . H. F uoss, and V. Chamard, “High-resolution three-dimensional structural microscopy by single-angle Bragg pt ychograph y ,” Nat. Mater ., v ol. 16, pp. 244–251, F eb. 2017. [65] A. F annjiang, “Absolute uniqueness of phase retriev al with random illumination,” Inverse Pr obl ., v ol. 28, no. 7, p. 075008, 2012. [66] T. B. Edo, D. J. Batey , A. M. Maiden, C. Rau, U. W agner, Z. D. Pešić, T. A. W aigh, and J. M. Ro denburg, “Sampling in x-ra y ptyc hography ,” Phys. R ev. A , vol. 87, p. 053850, Ma y 2013. [67] V. Chamard, M. Allain, P . Go dard, A. T alneau, G. P atriarche, and M. Burghammer, “Strain in a silicon-on-insulator nanostructure revealed by 3D x-ra y Bragg pt ychograph y ,” Sci. R ep orts , v ol. 5, p. 9827, 2015. 20

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment