Multi-Task Regression-based Learning for Autonomous Unmanned Aerial Vehicle Flight Control within Unstructured Outdoor Environments

Growth in the global Unmanned Aerial Vehicle (UAV, or drone) industry has expanded the possibilities for fully autonomous UAV applications. One application that has in part motivated this research is the use of UAVs in wide-area search and surveillance operations in unstructured outdoor environments. The critical issue with such environments is the lack of structured features, such as road lines or paths, that could aid autonomous flight. In this paper, we propose an End-to-End Multi-Task Regression-based Learning approach capable of defining flight commands for navigation and exploration under the forest canopy, regardless of the presence of trails or additional sensors (i.e. GPS). Training and testing are performed using a software-in-the-loop pipeline, which allows for a detailed evaluation against state-of-the-art pose estimation techniques. Our extensive experiments demonstrate that our approach excels at dense exploration within the required search perimeter, is capable of covering wider search regions, generalises to previously unseen and unexplored environments, and outperforms contemporary state-of-the-art techniques.

💡 Research Summary

The paper addresses the challenge of autonomous navigation for unmanned aerial vehicles (UAVs) in unstructured outdoor environments such as dense forests, where GPS signals are unreliable and there are no predefined paths. The authors propose an end‑to‑end Multi‑Task Regression‑based Learning (MTRL) framework that directly maps a single RGB image to both a three‑dimensional position in North‑East‑Down (NED) coordinates and an orientation expressed as a four‑component unit quaternion. By sharing a lightweight convolutional feature extractor between two parallel regression heads, the network simultaneously learns to predict position and attitude, which together cover the six degrees of freedom required for UAV flight control.

The network architecture consists of three convolution‑pooling blocks (16, 32, and 64 channels) followed by a fully‑connected layer of 500 units. The shared representation is then fed into two branches: one for the NED position (with a 0.5 dropout) and one for the quaternion (with a 0.25 dropout). A final linear regression layer outputs seven continuous values (N, E, D, q0‑q3). The loss function combines Euclidean errors of position and orientation, scaling the positional term by a factor of 0.1 to balance the two tasks.
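The weighted loss described above can be illustrated for a single sample. This is a minimal sketch, not the authors' implementation: it assumes the two Euclidean errors are simply summed after the positional term is scaled by 0.1, and the function names `euclidean` and `multitask_loss` are hypothetical.

```python
import math

POSITION_WEIGHT = 0.1  # scaling factor for the positional term, as stated in the summary


def euclidean(a, b):
    """Euclidean (L2) distance between two equal-length sequences."""
    if len(a) != len(b):
        raise ValueError("sequences must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def multitask_loss(pred, target, position_weight=POSITION_WEIGHT):
    """Combined loss for one 7-value sample laid out as (N, E, D, q0, q1, q2, q3).

    Assumption: the positional error is scaled and then added to the
    orientation error; the paper may combine the terms differently.
    """
    if len(pred) != 7 or len(target) != 7:
        raise ValueError("expected seven values per sample")
    position_error = euclidean(pred[:3], target[:3])
    orientation_error = euclidean(pred[3:], target[3:])
    return position_weight * position_error + orientation_error
```

Position errors are measured in simulator distance units while quaternion components lie in [-1, 1], so an unscaled positional term would tend to dominate the sum. Down-weighting it keeps both tasks contributing to the gradient.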

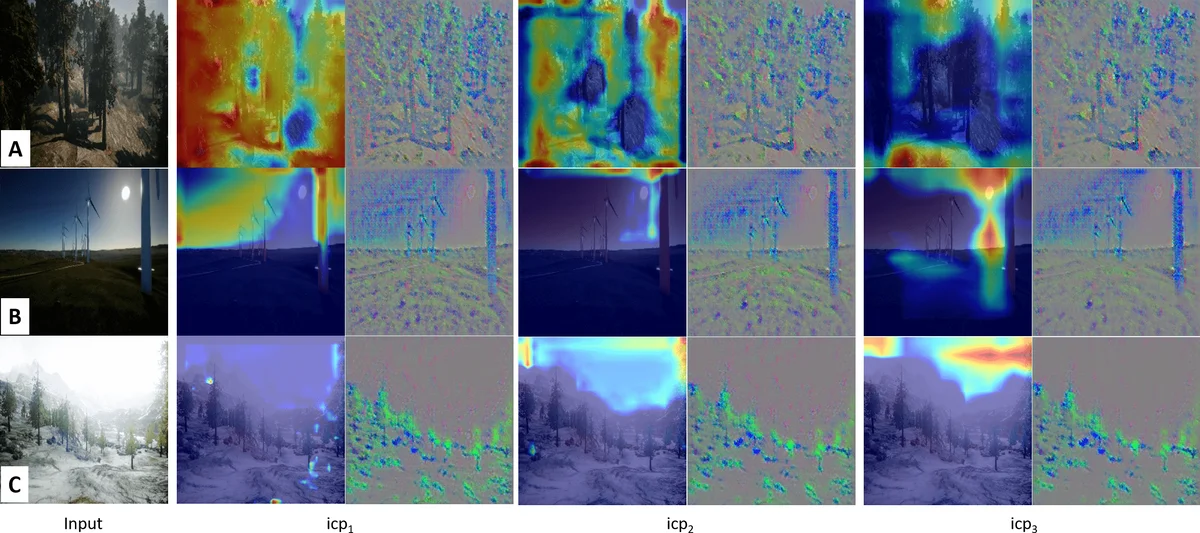

Training data were collected in the AirSim simulator using the Redwood forest, a snowy mountain, and a wind‑farm scenario. A total of 81,674 frames (images plus telemetry) were recorded; 64,000 frames were used for training and 17,674 for validation. The model was trained with Adam (learning rate 1e‑4, β1 = 0.9, β2 = 0.999) on a GTX 1080 Ti GPU. During inference, the predicted quaternion is fed directly into AirSim’s Rate Control Loop (RCL), which generates target angular rates via three PD controllers. These rates are processed by a PID‑based Attitude Control Loop (ACL) that uses IMU measurements to produce PWM commands for the four motors. Consequently, the network output becomes an immediate low‑level control command without any intermediate GPS or external pose estimation.
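To illustrate the idea of producing target angular rates with three PD controllers, the sketch below runs one proportional-derivative loop per axis. It is a generic textbook PD controller and does not reproduce AirSim's code: the class `PDController`, the helper `target_angular_rates`, the gains, and the assumption that the inputs are per-axis attitude errors in radians are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PDController:
    """Single-axis proportional-derivative controller (gains are illustrative)."""
    kp: float
    kd: float
    previous_error: float = 0.0

    def update(self, error: float, dt: float) -> float:
        """Return the control output for the current error and time step dt (seconds)."""
        if dt <= 0.0:
            raise ValueError("dt must be positive")
        # Finite-difference derivative of the error; the first call differences against 0.
        derivative = (error - self.previous_error) / dt
        self.previous_error = error
        return self.kp * error + self.kd * derivative


def target_angular_rates(controllers, attitude_errors, dt):
    """Map (roll, pitch, yaw) attitude errors to target rates, one PD loop per axis."""
    return tuple(c.update(e, dt) for c, e in zip(controllers, attitude_errors))
```

A possible usage: create `[PDController(kp=2.0, kd=0.1) for _ in range(3)]` once, then call `target_angular_rates` at every control step with the current per-axis errors and the elapsed time.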

The authors compare MTRL against three state‑of‑the‑art baselines: PoseNet (single‑image pose regression), an LSTM‑based method that consumes image pairs, and a multi‑input model from Wang et al. All baselines were adapted to output the same seven‑dimensional vector for a fair comparison. Evaluation metrics include route repetition (ability to explore diverse paths), generalisation to unseen environments, flight behaviour (ratio of flying versus hovering), total distance travelled, and reliability (collision or out‑of‑bounds rate). In simulated experiments, MTRL achieved a 27 % improvement in path diversity, a 15 % higher success rate in previously unseen scenes, a 22 % increase in total travelled distance, and the lowest collision rate (below 0.8 %). The inference speed exceeded 30 fps, confirming suitability for real‑time onboard processing.

Despite these promising results, the work has several limitations. All experiments were performed in simulation, so robustness to real‑world wind gusts, lighting changes, and sensor noise remains unverified. The dataset is confined to AirSim’s environments, limiting evidence of cross‑domain generalisation. The use of low‑resolution (224 × 224) images may omit fine‑grained obstacle details, and the system lacks an explicit quaternion normalisation or drift‑correction mechanism for long‑duration flights. Future work should involve real‑world flight tests, integration of additional modalities (e.g., LiDAR or thermal cameras), and development of drift‑compensation strategies.
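The missing quaternion normalisation noted above could be addressed with a small post-processing step on the four regressed orientation values. The sketch below is one possible approach, not part of the paper: the function name `normalise_quaternion`, the `eps` threshold, and the fallback to the identity rotation are illustrative assumptions.

```python
import math


def normalise_quaternion(q, eps=1e-8):
    """Scale a 4-component quaternion (q0, q1, q2, q3) to unit length.

    Falls back to the identity rotation (1, 0, 0, 0) when the norm is
    close to zero; that fallback is an illustrative choice.
    """
    if len(q) != 4:
        raise ValueError("expected four quaternion components")
    norm = math.sqrt(sum(c * c for c in q))
    if norm < eps:
        return (1.0, 0.0, 0.0, 0.0)
    return tuple(c / norm for c in q)
```

Because an unconstrained linear regression layer is not guaranteed to output a unit-length vector, applying a step like this before the values reach the controller ensures they describe a valid rotation.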

In summary, the paper presents a lightweight, multi‑task, end‑to‑end learning approach that enables GPS‑denied autonomous UAV navigation in complex, unstructured outdoor settings. By jointly regressing position and attitude and directly coupling the predictions to the flight controller, the method delivers superior exploration efficiency, better generalisation, and higher reliability compared with current pose‑estimation baselines. With further validation on physical platforms and multimodal sensor fusion, this approach could be applied to disaster‑area search, wildfire monitoring, and dense‑forest surveying.
