ASVspoof 2019: Future Horizons in Spoofed and Fake Audio Detection

ASVspoof, now in its third edition, is a series of community-led challenges which promote the development of countermeasures to protect automatic speaker verification (ASV) from the threat of spoofing. Advances in the 2019 edition include: (i) a cons…

Authors: Massimiliano Todisco, Xin Wang, Ville Vestman

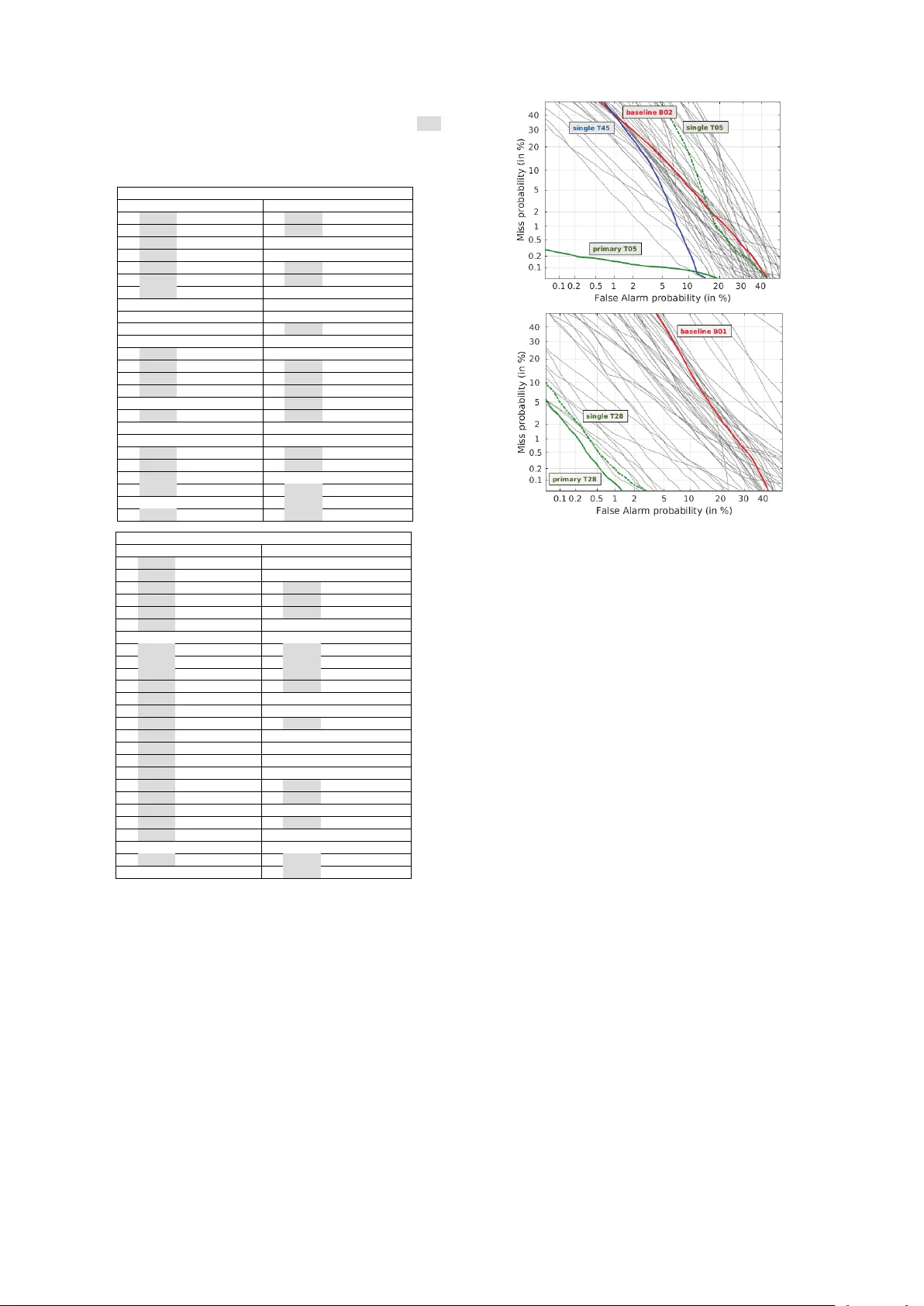

Massimiliano Todisco¹, Xin Wang², Ville Vestman³,⁶, Md Sahidullah⁴, Héctor Delgado¹, Andreas Nautsch¹, Junichi Yamagishi²,⁵, Nicholas Evans¹, Tomi Kinnunen³ and Kong Aik Lee⁶

¹EURECOM, France – ²National Institute of Informatics, Japan – ³University of Eastern Finland, Finland – ⁴Inria, France – ⁵University of Edinburgh, UK – ⁶NEC Corp., Japan

info@asvspoof.org

Abstract

ASVspoof, now in its third edition, is a series of community-led challenges which promote the development of countermeasures to protect automatic speaker verification (ASV) from the threat of spoofing. Advances in the 2019 edition include: (i) a consideration of both logical access (LA) and physical access (PA) scenarios and the three major forms of spoofing attack, namely synthetic, converted and replayed speech; (ii) spoofing attacks generated with state-of-the-art neural acoustic and waveform models; (iii) an improved, controlled simulation of replay attacks; (iv) use of the tandem detection cost function (t-DCF) that reflects the impact of both spoofing and countermeasures upon ASV reliability. Even if ASV remains the core focus, in retaining the equal error rate (EER) as a secondary metric, ASVspoof also embraces the growing importance of fake audio detection. ASVspoof 2019 attracted the participation of 63 research teams, with more than half of these reporting systems that improve upon the performance of two baseline spoofing countermeasures. This paper describes the 2019 database, protocols and challenge results. It also outlines major findings which demonstrate the real progress made in protecting against the threat of spoofing and fake audio.

Index Terms: spoofing, automatic speaker verification, ASVspoof, presentation attack detection, fake audio.

1. Introduction

The ASVspoof initiative [1, 2, 3] spearheads research in anti-spoofing for automatic speaker verification (ASV). Previous ASVspoof editions focused on the design of spoofing countermeasures for synthetic and converted speech (2015) and replayed speech (2017). ASVspoof 2019 [4], the first edition to focus on all three major spoofing attack types, extends previous challenges in several directions, not least in terms of addressing two different use case scenarios: logical access (LA) and physical access (PA).

The LA scenario involves spoofing attacks that are injected directly into the ASV system. Attacks in the LA scenario are generated using the latest text-to-speech synthesis (TTS) and voice conversion (VC) technologies. The best of these algorithms produce speech which is perceptually indistinguishable from bona fide speech. ASVspoof 2019 thus aims to determine whether the advances in TTS and VC technology pose a greater threat to the reliability of ASV systems, as well as spoofing countermeasures.

For the PA scenario, speech data is assumed to be captured by a microphone in a physical, reverberant space. Replay spoofing attacks are recordings of bona fide speech which are assumed to be captured, possibly surreptitiously, and then re-presented to the microphone of an ASV system using a replay device. In contrast to the 2017 edition of ASVspoof, the 2019 edition PA database is constructed from a far more controlled simulation of replay spoofing attacks that is also relevant to the study of fake audio detection in the case of, e.g., smart home devices.

While the equal error rate (EER) metric of previous editions is retained as a secondary metric, ASVspoof 2019 migrates to a new primary metric in the form of the ASV-centric tandem detection cost function (t-DCF) [5].
While the challenge is still a stand-alone spoofing detection task which does not require expertise in ASV, adoption of the t-DCF ensures that scoring and ranking reflect the comparative impact of both spoofing and countermeasures upon an ASV system.

This paper describes the ASVspoof 2019 challenge, the LA and PA scenarios, the evaluation rules and protocols, the t-DCF metric, the common ASV system, baseline countermeasures and challenge results.

2. Database

The ASVspoof 2019 database encompasses two partitions for the assessment of LA and PA scenarios. Both are derived from the VCTK base corpus¹ which includes speech data captured from 107 speakers (46 males, 61 females). Both LA and PA databases are themselves partitioned into three datasets, namely training, development and evaluation, which comprise the speech from 20 (8 male, 12 female), 10 (4 male, 6 female) and 48 (21 male, 27 female) speakers respectively. The three partitions are disjoint in terms of speakers and the recording conditions for all source data are identical. While the training and development sets contain spoofing attacks generated with the same algorithms/conditions (designated as known attacks), the evaluation set also contains attacks generated with different algorithms/conditions (designated as unknown attacks). Reliable spoofing detection performance therefore calls for systems that generalise well to previously-unseen spoofing attacks. With full descriptions available in the ASVspoof 2019 evaluation plan [4], the following presents a summary of the specific characteristics of the LA and PA databases.

2.1. Logical access

The LA database contains bona fide speech and spoofed speech data generated using 17 different TTS and VC systems. Data used for the training of TTS and VC systems also comes from the VCTK database but there is no overlap with the data contained in the 2019 database.
Six of these systems are designated as known attacks, with the other 11 being designated as unknown attacks. The training and development sets contain known attacks only whereas the evaluation set contains 2 known and 11 unknown spoofing attacks. Among the 6 known attacks there are 2 VC systems and 4 TTS systems. VC systems use neural-network-based and spectral-filtering-based approaches [6]. TTS systems use either waveform concatenation or neural-network-based speech synthesis using a conventional source-filter vocoder [7] or a WaveNet-based vocoder [8]. The 11 unknown systems comprise 2 VC, 6 TTS and 3 hybrid TTS-VC systems and were implemented with various waveform generation methods including classical vocoding, Griffin-Lim [9], generative adversarial networks [10], neural waveform models [8, 11], waveform concatenation, waveform filtering [12], spectral filtering, and their combination.

¹ http://dx.doi.org/10.7488/ds/1994

2.2. Physical access

Inspired by work to analyse and improve ASV reliability in reverberant conditions [13, 14] and a similar approach used in the study of replay reported in [15], both bona fide data and spoofed data contained in the PA database are generated according to a simulation [16, 17, 18] of their presentation to the microphone of an ASV system within a reverberant acoustic environment. Replayed speech is assumed first to be captured with a recording device before being replayed using a non-linear replay device. Training and development data is created according to 27 different acoustic and 9 different replay configurations. Acoustic configurations comprise an exhaustive combination of 3 categories of room sizes, 3 categories of reverberation and 3 categories of speaker/talker²-to-ASV microphone distances. Replay configurations comprise 3 categories of attacker-to-talker recording distances, and 3 categories of loudspeaker quality.
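The configuration counts quoted above follow from plain enumeration of the category combinations. The sketch below illustrates this under stated assumptions: the lowercase labels `a`, `b`, `c` for the three acoustic factors are hypothetical, while the uppercase `A`, `B`, `C` labels mirror the two-letter replay attack identifiers that appear in the results. It does not reproduce the database protocol files.

```python
from itertools import product

# Hypothetical category labels for the three acoustic factors
# (room size, reverberation, talker-to-ASV microphone distance).
ACOUSTIC_LABELS = ("a", "b", "c")

# Labels for the two replay factors (attacker-to-talker recording
# distance, loudspeaker quality), mirroring the AA..CC attack identifiers.
REPLAY_LABELS = ("A", "B", "C")

# Exhaustive combination of 3 x 3 x 3 acoustic categories.
ACOUSTIC_CONFIGS = ["".join(c) for c in product(ACOUSTIC_LABELS, repeat=3)]

# Exhaustive combination of 3 x 3 replay categories.
REPLAY_CONFIGS = ["".join(c) for c in product(REPLAY_LABELS, repeat=2)]

if __name__ == "__main__":
    print(len(ACOUSTIC_CONFIGS), "acoustic configurations")
    print(len(REPLAY_CONFIGS), "replay configurations:", REPLAY_CONFIGS)
```

Each identifier names a category combination only; the concrete room, distance and device values are drawn at random within each category.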
Replay attacks are simulated with a random attacker-to-talker recording distance and a random loudspeaker quality corresponding to the given configuration category. Both bona fide and replay spoofing access attempts are made with a random room size, reverberation level and talker-to-ASV microphone distance.

Evaluation data is generated in the same manner as training and development data, albeit with different, random acoustic and replay configurations. The set of room sizes, levels of reverberation, talker-to-ASV microphone distances, attacker-to-talker recording distances and loudspeaker qualities, while drawn from the same configuration categories, are different to those for the training and development set. Accordingly, while the categories are the same and known a priori, the specific impulse responses and replay devices used to simulate bona fide and replay spoofing access attempts are different or unknown. It is expected that reliable performance will only be obtained by countermeasures that generalise well to these conditions, i.e. countermeasures that are not over-fitted to the specific acoustic and replay configurations observed in training and development data.

3. Performance measures and baselines

ASVspoof 2019 focuses on assessment of tandem systems consisting of both a spoofing countermeasure (CM) (designed by the participant) and an ASV system (provided by the organisers). The performance of the two combined systems is evaluated via the minimum normalized tandem detection cost function (t-DCF, for the sake of easier tractability) [5] of the form:

$$\text{t-DCF}^{\min}_{\text{norm}} = \min_{s} \left\{ \beta\, P^{\text{cm}}_{\text{miss}}(s) + P^{\text{cm}}_{\text{fa}}(s) \right\}, \qquad (1)$$

where β depends on application parameters (priors, costs) and ASV performance (miss, false alarm, and spoof miss rates), while $P^{\text{cm}}_{\text{miss}}(s)$ and $P^{\text{cm}}_{\text{fa}}(s)$ are the CM miss and false alarm rates at threshold $s$.
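As a rough illustration of (1), the sketch below computes the CM miss and false alarm rates at every candidate threshold and takes the minimum of β·P_miss + P_fa. The same error-rate curves also give the EER that is retained as the secondary metric. This is not the official scoring tool: the function names, the score convention (a higher score means more bona fide-like) and the treatment of β as a given input are assumptions made for illustration.

```python
import numpy as np


def cm_error_rates(bonafide_scores, spoof_scores):
    """Return candidate thresholds with CM miss and false alarm rates.

    Assumed convention: a trial is accepted as bona fide when its score
    is greater than or equal to the threshold s.
    """
    bona = np.asarray(bonafide_scores, dtype=float)
    spoof = np.asarray(spoof_scores, dtype=float)
    # Every observed score is a candidate threshold; +inf rejects everything.
    observed = np.unique(np.concatenate([bona, spoof]))
    thresholds = np.concatenate([observed, [np.inf]])
    # Miss: bona fide trial rejected. False alarm: spoofed trial accepted.
    p_miss = np.array([(bona < s).mean() for s in thresholds])
    p_fa = np.array([(spoof >= s).mean() for s in thresholds])
    return thresholds, p_miss, p_fa


def min_tdcf(bonafide_scores, spoof_scores, beta):
    """Minimum of beta * P_miss(s) + P_fa(s) over all thresholds, as in (1).

    beta is taken as given here; deriving it from costs, priors and ASV
    error rates is outside the scope of this sketch.
    """
    _, p_miss, p_fa = cm_error_rates(bonafide_scores, spoof_scores)
    return float(np.min(beta * p_miss + p_fa))


def eer(bonafide_scores, spoof_scores):
    """Approximate EER: the point where miss and false alarm rates are closest."""
    _, p_miss, p_fa = cm_error_rates(bonafide_scores, spoof_scores)
    i = int(np.argmin(np.abs(p_miss - p_fa)))
    return float((p_miss[i] + p_fa[i]) / 2.0)
```

Because accepting every trial gives P_miss = 0 and P_fa = 1, the value returned by `min_tdcf` never exceeds 1 under this normalization. The per-threshold loop is quadratic in the number of trials; a sorted, cumulative-count implementation would be preferable for large score files.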
The minimum in (1) is taken over all thresholds on given data (development or evaluation) with a known key, corresponding to oracle calibration. While the challenge rankings are based on pooled performance in either scenario (LA or PA), results are also presented when decomposed by attack. In this case, β depends on the effectiveness of each attack. In particular, with everything else being constant, β is inversely proportional to the ASV false accept rate for a specific attack: the penalty when a CM falsely rejects bona fide speech is higher in the case of less effective attacks. Likewise, the relative penalty when a CM falsely accepts spoofs is higher for more effective attacks. Thus, while (1) appears to be deceptively similar to the NIST DCF, β (hence, the cost function itself) is automatically adjusted according to the effectiveness of each attack. Full details of the t-DCF metric and specific configuration parameters as concerns ASVspoof 2019 are presented in [4].

² From hereon we refer to talkers in order to avoid potential confusion with loudspeakers used to mount replay spoofing attacks.

The EER serves as a secondary metric. The EER corresponds to a CM operating point with equal miss and false alarm rates and was the primary metric for previous editions of ASVspoof. Without an explicit link to the impact of CMs upon the reliability of an ASV system, the EER may be more appropriate as a metric for fake audio detection, i.e. where there is no ASV system.

The common ASV system uses x-vector speaker embeddings [14] together with a probabilistic linear discriminant analysis (PLDA) [19] backend. The x-vector model used to compute ASV scores required to compute the t-DCF is pre-trained³ with the Kaldi [20] VoxCeleb [21] recipe. The original recipe is modified to include PLDA adaptation using disjoint, bona fide, in-domain data.
Adaptation was performed separately for LA and PA scenarios since bona fide recordings for the latter contain additional simulated acoustic and recording effects. The ASV operating point, needed in computing β in (1), is set to the EER point based on target and nontarget scores.

ASVspoof 2019 adopted two CM baseline systems. They use a common Gaussian mixture model (GMM) back-end classifier with either constant Q cepstral coefficient (CQCC) features [22, 23] (B01) or linear frequency cepstral coefficient (LFCC) features [24] (B02).

4. Challenge results

Table 1 shows results⁴ in terms of the t-DCF and EER for primary systems, pooled over all attacks. For the LA scenario, 27 of the 48 participating teams produced systems that outperformed the best baseline B02. For the PA scenario, the performance of B01 was bettered by 32 of the 50 participating teams. There is substantial variation in minimum t-DCF and EER for both LA and PA scenarios. The top-performing system for the LA scenario, T05, achieved a t-DCF of 0.0069 and EER of 0.22%. The top-performing system for the PA scenario, T28, achieved a t-DCF of 0.0096 and EER of 0.39%. Confirming observations reported in [5], monotonic increases in the t-DCF that are not always mirrored by monotonic increases in the EER show the importance of considering the performance of the ASV and CM systems in tandem. Table 1 also shows that the top 7 (LA) and 6 (PA) systems used neural networks whereas 9 (LA) and 10 (PA) systems used an ensemble of classifiers.

Table 1: Primary system results. Results shown in terms of minimum t-DCF and the CM EER [%]. IDs highlighted in grey signify systems that used neural networks in either the front- or back-end. IDs highlighted in bold font signify systems that use an ensemble of classifiers.

ASVspoof 2019 LA scenario

| # | ID | t-DCF | EER | # | ID | t-DCF | EER |
|---|----|-------|-----|---|----|-------|-----|
| 1 | T05 | 0.0069 | 0.22 | 26 | T57 | 0.2059 | 10.65 |
| 2 | T45 | 0.0510 | 1.86 | 27 | T42 | 0.2080 | 8.01 |
| 3 | T60 | 0.0755 | 2.64 | 28 | B02 | 0.2116 | 8.09 |
| 4 | T24 | 0.0953 | 3.45 | 29 | T17 | 0.2129 | 7.63 |
| 5 | T50 | 0.1118 | 3.56 | 30 | T23 | 0.2180 | 8.27 |
| 6 | T41 | 0.1131 | 4.50 | 31 | T53 | 0.2252 | 8.20 |
| 7 | T39 | 0.1203 | 7.42 | 32 | T59 | 0.2298 | 7.95 |
| 8 | T32 | 0.1239 | 4.92 | 33 | B01 | 0.2366 | 9.57 |
| 9 | T58 | 0.1333 | 6.14 | 34 | T52 | 0.2366 | 9.25 |
| 10 | T04 | 0.1404 | 5.74 | 35 | T40 | 0.2417 | 8.82 |
| 11 | T01 | 0.1409 | 6.01 | 36 | T55 | 0.2681 | 10.88 |
| 12 | T22 | 0.1545 | 6.20 | 37 | T43 | 0.2720 | 13.35 |
| 13 | T02 | 0.1552 | 6.34 | 38 | T31 | 0.2788 | 15.11 |
| 14 | T44 | 0.1554 | 6.70 | 39 | T25 | 0.3025 | 23.21 |
| 15 | T16 | 0.1569 | 6.02 | 40 | T26 | 0.3036 | 15.09 |
| 16 | T08 | 0.1583 | 6.38 | 41 | T47 | 0.3049 | 18.34 |
| 17 | T62 | 0.1628 | 6.74 | 42 | T46 | 0.3214 | 12.59 |
| 18 | T27 | 0.1648 | 6.84 | 43 | T21 | 0.3393 | 19.01 |
| 19 | T29 | 0.1677 | 6.76 | 44 | T61 | 0.3437 | 15.66 |
| 20 | T13 | 0.1778 | 6.57 | 45 | T11 | 0.3742 | 18.15 |
| 21 | T48 | 0.1791 | 9.08 | 46 | T56 | 0.3856 | 15.32 |
| 22 | T10 | 0.1829 | 6.81 | 47 | T12 | 0.4088 | 18.27 |
| 23 | T54 | 0.1852 | 7.71 | 48 | T14 | 0.4143 | 20.60 |
| 24 | T38 | 0.1940 | 7.51 | 49 | T20 | 1.0000 | 92.36 |
| 25 | T33 | 0.1960 | 8.93 | 50 | T30 | 1.0000 | 49.60 |

ASVspoof 2019 PA scenario

| # | ID | t-DCF | EER | # | ID | t-DCF | EER |
|---|----|-------|-----|---|----|-------|-----|
| 1 | T28 | 0.0096 | 0.39 | 27 | T29 | 0.2129 | 8.48 |
| 2 | T45 | 0.0122 | 0.54 | 28 | T01 | 0.2129 | 9.07 |
| 3 | T44 | 0.0161 | 0.59 | 29 | T54 | 0.2130 | 11.93 |
| 4 | T10 | 0.0168 | 0.66 | 30 | T35 | 0.2286 | 7.77 |
| 5 | T24 | 0.0215 | 0.77 | 31 | T46 | 0.2372 | 8.82 |
| 6 | T53 | 0.0219 | 0.88 | 32 | T34 | 0.2402 | 10.35 |
| 7 | T17 | 0.0266 | 0.96 | 33 | B01 | 0.2454 | 11.04 |
| 8 | T50 | 0.0350 | 1.16 | 34 | T38 | 0.2460 | 9.12 |
| 9 | T42 | 0.0372 | 1.51 | 35 | T59 | 0.2490 | 10.53 |
| 10 | T07 | 0.0570 | 2.45 | 36 | T03 | 0.2593 | 11.26 |
| 11 | T02 | 0.0614 | 2.23 | 37 | T51 | 0.2617 | 11.92 |
| 12 | T05 | 0.0672 | 2.66 | 38 | T08 | 0.2635 | 10.97 |
| 13 | T25 | 0.0749 | 3.01 | 39 | T58 | 0.2767 | 11.28 |
| 14 | T48 | 0.1133 | 4.48 | 40 | T47 | 0.2785 | 10.60 |
| 15 | T57 | 0.1297 | 4.57 | 41 | T09 | 0.2793 | 12.09 |
| 16 | T31 | 0.1299 | 5.20 | 42 | T32 | 0.2810 | 12.20 |
| 17 | T56 | 0.1309 | 4.87 | 43 | T61 | 0.2958 | 12.53 |
| 18 | T49 | 0.1351 | 5.74 | 44 | B02 | 0.3017 | 13.54 |
| 19 | T40 | 0.1381 | 5.95 | 45 | T62 | 0.3641 | 13.85 |
| 20 | T60 | 0.1492 | 6.11 | 46 | T19 | 0.4269 | 21.25 |
| 21 | T14 | 0.1712 | 6.50 | 47 | T36 | 0.4537 | 18.99 |
| 22 | T23 | 0.1728 | 7.19 | 48 | T41 | 0.5452 | 28.98 |
| 23 | T13 | 0.1765 | 7.61 | 49 | T21 | 0.6368 | 27.50 |
| 24 | T27 | 0.1819 | 7.98 | 50 | T15 | 0.9948 | 42.28 |
| 25 | T22 | 0.1859 | 7.44 | 51 | T30 | 0.9998 | 50.19 |
| 26 | T55 | 0.1979 | 8.19 | 52 | T20 | 1.0000 | 92.64 |

³ http://kaldi-asr.org/models/m7

⁴ As for previous editions of ASVspoof, results are anonymised, with individual teams being able to identify their position in the evaluation rankings via an identifier communicated separately to each of them.

4.1. CM analysis

Corresponding CM detection error trade-off (DET) plots (no combination with ASV) are illustrated for LA and PA scenarios in Fig. 1. Highlighted in both plots are profiles for the two baseline systems B01 and B02, the best performing primary systems for teams T05 and T28, and the same teams' single systems. Also shown are profiles for the overall best performing single system for the LA and PA scenarios submitted by teams T45 and, again, T28 respectively.

For the LA scenario, very few systems deliver EERs below 5%. A dense concentration of systems deliver EERs between 5% and 10%. Of interest is the especially low EER delivered by the primary T05 system, which delivers a substantial improvement over the same team's best performing single system. Even the overall best performing single system of T45 is some way behind, suggesting that reliable performance for the LA scenario depends upon the fusion of complementary sub-systems. This is likely due to the diversity in attack families, namely TTS, VC and hybrid TTS-VC systems.

Figure 1: CM DET profiles for (a) LA and (b) PA scenarios.

Observations are different for the PA scenario. There is a greater spread in EERs and the difference between the best performing primary and single systems (both from T28) is much narrower. That a low EER can be obtained with a single system suggests that reliable performance is less dependent upon effective fusion strategies. This might be due to lesser variability (as compared to that for the LA) in replay spoofing attacks; there is only one family of attack which exhibits differences only in the level of convolutional channel noise.

4.2. Tandem analysis

Fig.
2 illustrates boxplots of the t-DCF when pooled (left-most) and when decomposed separately for each of the spoofing attacks in the evaluation set. Results are shown individually for the best performing baseline, primary and single systems whereas the boxes illustrate the variation in performance for the top-10 performing systems. Illustrated to the top of each boxplot are the EER of the common ASV system (when subjected to each attack) and the median CM EER across all primary systems. The ASV system delivers baseline EERs (without spoofing attacks) of 2.48% and 6.47% for LA and PA scenarios respectively.

As shown in Fig. 2(a) for the LA scenario, attacks A10, A13 and, to a lesser extent, A18, degrade ASV performance while being challenging to detect. They are end-to-end TTS with WaveRNN and a speaker encoder pretrained for ASV [25], VC using moment matching networks [26] and waveform filtering [12], and i-vector/PLDA based VC [27] using a DNN glottal vocoder [28], respectively. Although A08, A12, and A15 also use neural waveform models and threaten ASV, they are easier to detect than A10. One reason may be that A08, A12 and A15 are pipeline TTS and VC systems while A10 is optimized in an end-to-end manner. Another reason may be that A10 transfers ASV knowledge into TTS, implying that advances in ASV also improve the LA attacks. A17, a VAE-based VC [29] with waveform filtering, poses little threat to the ASV system, but it is the most difficult to detect and leads to the highest t-DCF. All the
All the pooled A07 A08 A09 A10 A11 A12 A13 A14 A15 A16 A17 A18 A19 CM ASV t-DCF 7.83 44.66 0.02 59.68 0.09 40.39 0.06 8.38 12.21 57.73 0.59 59.64 3.75 46.18 12.41 46.78 2.88 64.01 3.22 58.85 0.02 64.52 15.93 3.92 5.59 7.35 0.06 14.58 primary T05 B01 B02 single T45 10 -4 10 -3 10 -2 10 -1 10 0 EER [%] pooled AA AB AC BA BB BC CA CB CC 8.09 40.48 17.01 45.03 5.64 43.98 2.21 41.01 14.32 40.56 4.40 39.96 1.67 37.85 12.99 38.83 4.28 38.16 1.96 36.42 primary T28 B01 B02 single T28 (a) (b) Figure 2: Boxplots of the top-10 performing LA (a) and P A (b) ASVspoof 2019 submissions. Results illustrated in terms of t-DCF decomposed for the 13 (LA) and 9 (P A) attacks in the evaluation partion. ASV under attack and the median CM EER [%] of all the submitted systems ar e shown above the boxplots. A16 and A19 are known attac ks. abov e attacks are new attacks not included in ASVspoof 2015. More consistent trends can be observed for the P A sce- nario. Fig. 2(b) shows the t-DCF when pooled and decom- posed for each of the 9 replay configurations. Each attack is a combination of different attacker -to-talker recording dis- tances { A,B,C } X, and replay device qualities X { A,B,C } [4]. When subjected to replay attacks, the EER of the ASV sys- tem increases more when the attacker -to-talker distance is low (near-field effect) and when the attack is performed with higher quality replay de vices (fe wer channel ef fects). There are similar observations for CM performance and the t-DCF; lo wer quality replay attacks can be detected reliably whereas higher quality replay attacks present more of a challenge. 5. Discussion Care must be ex ercised in order that t-DCF results are inter - preted correctly . The reader may find it curious, for instance, that LA attack A17 corresponds to the highest t-DCF while, with an ASV EER of 3.92%, the attack is the least ef fective . Con versely , attack A16 provok es an ASV EER of almost 65% 5 , yet the median t-DCF is among the lowest. 
So, does A17, a weak attack, really pose a problem? The answer is affirmative: A17 is problematic, as far as the t-DCF is concerned. Further insight can be obtained from the attack-specific weights β of (1). For A17, a value of β ≈ 26 indicates that the induced cost function provides a 26 times higher penalty for rejecting bona fide users than it does for missed spoofing attacks passed to the ASV system. The behavior of primary system T05 in Fig. 1(a), with an aggressively tilted slope towards the low false alarm region, may explain why the t-DCF is near an order of magnitude better than the second best system.

⁵ Scores produced by spoofing attacks are higher than those of genuine trials.

6. Conclusions

ASVspoof 2019 addressed two different spoofing scenarios, namely LA and PA, and also the three major forms of spoofing attack: synthetic, converted and replayed speech. The LA scenario aimed to determine whether advances in countermeasure design have kept pace with progress in TTS and VC technologies and whether, as a result, today's state-of-the-art systems pose a threat to the reliability of ASV. While findings show that the most recent techniques, e.g. those using neural waveform models and waveform filtering, in addition to those resulting from transfer learning (TTS and VC systems borrowing ASV techniques) do indeed provoke greater degradations in ASV performance, there is potential for their detection using countermeasures that combine multiple classifiers. The PA scenario aimed to assess the spoofing threat and countermeasure performance via simulation with which factors influencing replay spoofing attacks could be carefully controlled and studied. Simulations consider variation in room size and reverberation time, replay device quality and the physical separation between both talkers and attackers (making surreptitious recordings) and talkers and the ASV system microphone.
Irrespective of the replay configuration, all replay attacks degrade ASV performance, yet, reassuringly, there is promising potential for their detection.

Also new to ASVspoof 2019, and with the objective of assessing the impact of both spoofing and countermeasures upon ASV reliability, is adoption of the ASV-centric t-DCF metric. This strategy marks a departure from the independent assessment of countermeasure performance in isolation from ASV and a shift towards cost-based evaluation. Much of the spoofing attack research across different biometric modalities revolves around the premise that spoofing attacks are harmful and should be detected at any cost. That spoofing attacks have potential for harm is not in dispute. It does not necessarily follow, however, that every attack must be detected. Depending on the application, spoofing attempts could be extremely rare or, in some cases, ineffective. Preparing for a worst case scenario, when that worst case is unlikely in practice, incurs costs of its own, i.e. degraded user convenience. The t-DCF framework enables one to encode explicitly the relevant statistical assumptions in terms of a well-defined cost function that generalises the classic NIST DCF. A key benefit is that the t-DCF disentangles the roles of ASV and CM developers as the error rates of the two systems are still treated independently. As a result, ASVspoof 2019 followed the same, familiar format as previous editions, involving a low entry barrier: participation still requires no ASV expertise and participants need submit countermeasure scores only, since the ASV system is provided by the organisers and is common to the assessment of all submissions. With the highest number of submissions in ASVspoof's history, this strategy appears to have been a resounding success.
Acknowledgements: The authors express their profound gratitude to the 27 persons from 14 organisations who contributed to creation of the LA database. The work was partially supported by: JST CREST Grant No. JPMJCR18A6 (VoicePersonae project), Japan; MEXT KAKENHI Grant Nos. (16H06302, 16K16096, 17H04687, 18H04120, 18H04112, 18KT0051), Japan; the VoicePersonae and RESPECT projects funded by the French Agence Nationale de la Recherche (ANR); the Academy of Finland (NOTCH project no. 309629); Region Grand Est, France. The authors at the University of Eastern Finland also gratefully acknowledge the use of computational infrastructures at CSC – IT Center for Science, and the support of NVIDIA Corporation with the donation of a Titan V GPU used in this research.

7. References

[1] N. Evans, T. Kinnunen, and J. Yamagishi, "Spoofing and countermeasures for automatic speaker verification," in Proc. Interspeech, Annual Conf. of the Int. Speech Comm. Assoc., Lyon, France, August 2013, pp. 925–929.
[2] Z. Wu, T. Kinnunen, N. Evans, J. Yamagishi, C. Hanilçi, M. Sahidullah, and A. Sizov, "ASVspoof 2015: the first automatic speaker verification spoofing and countermeasures challenge," in Proc. Interspeech, Annual Conf. of the Int. Speech Comm. Assoc., Dresden, Germany, September 2015, pp. 2037–2041.
[3] T. Kinnunen, M. Sahidullah, H. Delgado, M. Todisco, N. Evans, J. Yamagishi, and K. Lee, "The ASVspoof 2017 challenge: Assessing the limits of replay spoofing attack detection," in Proc. Interspeech, Annual Conf. of the Int. Speech Comm. Assoc., 2017, pp. 2–6.
[4] ASVspoof 2019: the automatic speaker verification spoofing and countermeasures challenge evaluation plan. [Online]. Available: http://www.asvspoof.org/asvspoof2019/asvspoof2019_evaluation_plan.pdf
[5] T. Kinnunen, K. Lee, H. Delgado, N. Evans, M. Todisco, M. Sahidullah, J. Yamagishi, and D. A. Reynolds, "t-DCF: a detection cost function for the tandem assessment of spoofing countermeasures and automatic speaker verification," in Proc. Odyssey, Les Sables d'Olonne, France, June 2018.
[6] D. Matrouf, J.-F. Bonastre, and C. Fredouille, "Effect of speech transformation on impostor acceptance," in Proc. ICASSP, vol. 1. IEEE, 2006, pp. 933–936.
[7] M. Morise, F. Yokomori, and K. Ozawa, "WORLD: A vocoder-based high-quality speech synthesis system for real-time applications," IEICE Trans. on Information and Systems, vol. 99, no. 7, pp. 1877–1884, 2016.
[8] A. v. d. Oord, S. Dieleman, H. Zen, K. Simonyan, O. Vinyals, A. Graves, N. Kalchbrenner, A. Senior, and K. Kavukcuoglu, "Wavenet: A generative model for raw audio," arXiv preprint arXiv:1609.03499, 2016.
[9] D. Griffin and J. Lim, "Signal estimation from modified short-time Fourier transform," IEEE Trans. ASSP, vol. 32, no. 2, pp. 236–243, 1984.
[10] K. Tanaka, T. Kaneko, N. Hojo, and H. Kameoka, "Synthetic-to-natural speech waveform conversion using cycle-consistent adversarial networks," in Proc. SLT. IEEE, 2018, pp. 632–639.
[11] X. Wang, S. Takaki, and J. Yamagishi, "Neural source-filter-based waveform model for statistical parametric speech synthesis," in Proc. ICASSP, 2019, p. (to appear).
[12] K. Kobayashi, T. Toda, G. Neubig, S. Sakti, and S. Nakamura, "Statistical singing voice conversion with direct waveform modification based on the spectrum differential," in Proc. Interspeech, 2014, pp. 2514–2518.
[13] T. Ko, V. Peddinti, D. Povey, M. L. Seltzer, and S. Khudanpur, "A study on data augmentation of reverberant speech for robust speech recognition," in Proc. IEEE Int. Conf. on Acoustics, Speech and Signal Processing (ICASSP), 2017, pp. 5220–5224.
[14] D. Snyder, D. Garcia-Romero, G. Sell, D. Povey, and S. Khudanpur, "X-vectors: Robust DNN embeddings for speaker recognition," in Proc. IEEE Int. Conf. on Acoustics, Speech and Signal Processing (ICASSP), 2018, pp. 5329–5333.
[15] A. Janicki, F. Alegre, and N. Evans, "An assessment of automatic speaker verification vulnerabilities to replay spoofing attacks," Security and Communication Networks, vol. 9, no. 15, pp. 3030–3044, 2016.
[16] D. R. Campbell, K. J. Palomäki, and G. Brown, "A MATLAB simulation of "shoebox" room acoustics for use in research and teaching," Computing and Information Systems Journal, ISSN 1352-9404, vol. 9, no. 3, 2005.
[17] E. Vincent. (2008) Roomsimove. [Online]. Available: http://homepages.loria.fr/evincent/software/Roomsimove_1.4.zip
[18] A. Novak, P. Lotton, and L. Simon, "Synchronized swept-sine: Theory, application, and implementation," J. Audio Eng. Soc, vol. 63, no. 10, pp. 786–798, 2015. [Online]. Available: http://www.aes.org/e-lib/browse.cfm?elib=18042
[19] S. J. Prince and J. H. Elder, "Probabilistic linear discriminant analysis for inferences about identity," in 2007 IEEE 11th International Conference on Computer Vision. IEEE, 2007, pp. 1–8.
[20] D. Povey, A. Ghoshal, G. Boulianne, L. Burget, O. Glembek, N. Goel, M. Hannemann, P. Motlicek, Y. Qian, P. Schwarz et al., "The Kaldi speech recognition toolkit," IEEE Signal Processing Society, Tech. Rep., 2011.
[21] A. Nagrani, J. S. Chung, and A. Zisserman, "Voxceleb: a large-scale speaker identification dataset," arXiv preprint arXiv:1706.08612, 2017.
[22] M. Todisco, H. Delgado, and N. Evans, "A new feature for automatic speaker verification anti-spoofing: Constant Q cepstral coefficients," in Proc. Odyssey, Bilbao, Spain, June 2016.
[23] M. Todisco, H. Delgado, and N. Evans, "Constant Q cepstral coefficients: A spoofing countermeasure for automatic speaker verification," Computer Speech & Language, vol. 45, pp. 516–535, 2017.
[24] M. Sahidullah, T. Kinnunen, and C. Hanilçi, "A comparison of features for synthetic speech detection," in Proc. Interspeech, Annual Conf. of the Int. Speech Comm. Assoc., Dresden, Germany, 2015, pp. 2087–2091.
[25] Y. Jia, Y. Zhang, R. J. Weiss, Q. Wang, J. Shen, F. Ren, Z. Chen, P. Nguyen, R. Pang, I. Lopez-Moreno, and Y. Wu, "Transfer learning from speaker verification to multispeaker text-to-speech synthesis," CoRR, vol. abs/1806.04558, 2018.
[26] Y. Li, K. Swersky, and R. Zemel, "Generative moment matching networks," in International Conference on Machine Learning, 2015, pp. 1718–1727.
[27] T. Kinnunen, L. Juvela, P. Alku, and J. Yamagishi, "Non-parallel voice conversion using i-vector PLDA: Towards unifying speaker verification and transformation," in 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2017, pp. 5535–5539.
[28] L. Juvela, B. Bollepalli, X. Wang, H. Kameoka, M. Airaksinen, J. Yamagishi, and P. Alku, "Speech waveform synthesis from MFCC sequences with generative adversarial networks," in 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2018, pp. 5679–5683.
[29] C.-C. Hsu, H.-T. Hwang, Y.-C. Wu, Y. Tsao, and H.-M. Wang, "Voice conversion from unaligned corpora using variational autoencoding Wasserstein generative adversarial networks," in Proc. Interspeech 2017, 2017, pp. 3364–3368.
