Feature exploration for almost zero-resource ASR-free keyword spotting using a multilingual bottleneck extractor and correspondence autoencoders

We compare features for dynamic time warping (DTW) when used to bootstrap keyword spotting (KWS) in an almost zero-resource setting. Such quickly-deployable systems aim to support United Nations (UN) humanitarian relief efforts in parts of Africa wit…

Authors: Raghav Menon, Herman Kamper, Ewald van der Westhuizen, John Quinn, Thomas Niesler

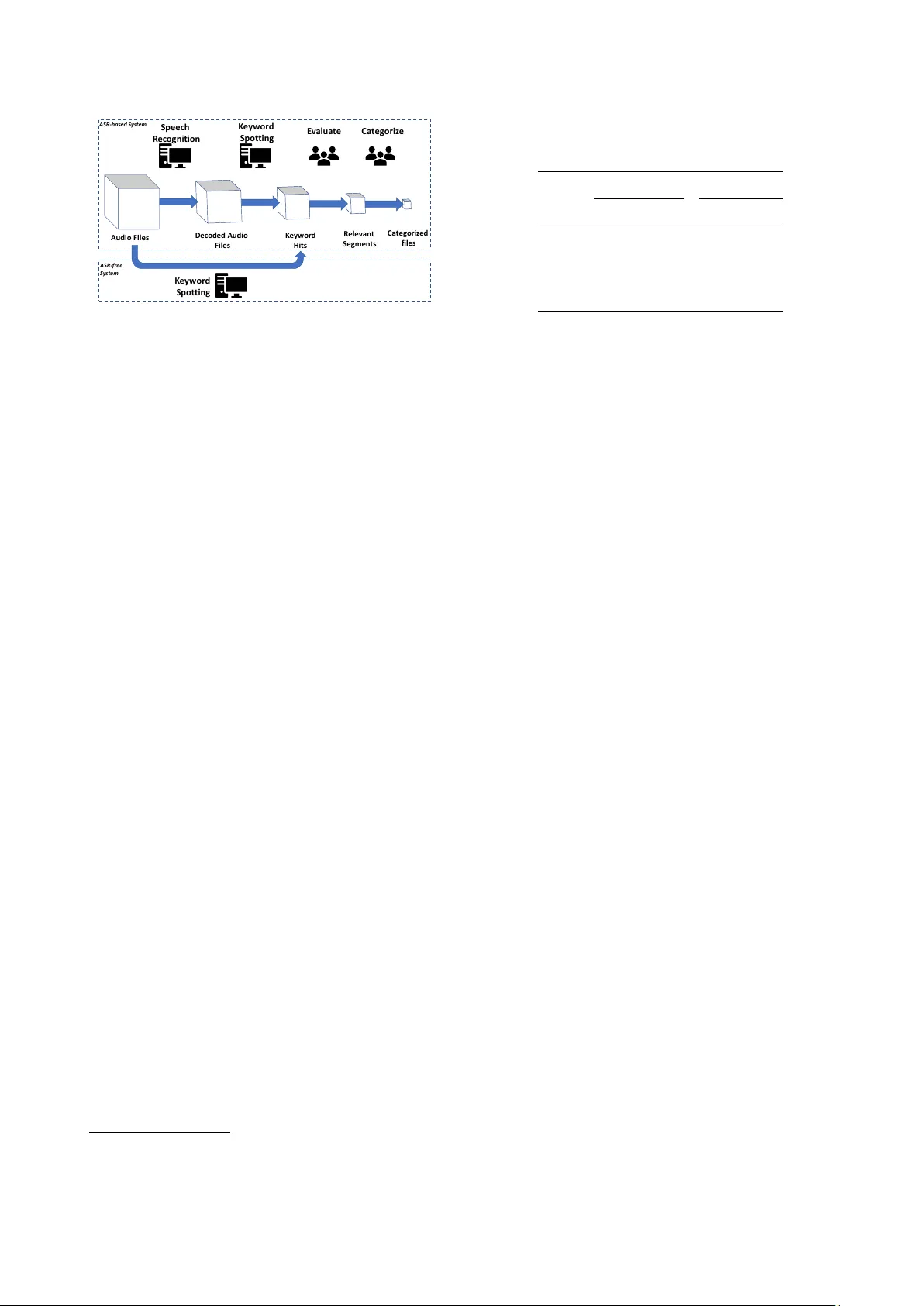

Raghav Menon (1), Herman Kamper (1), Ewald van der Westhuizen (1), John Quinn (2, 3, 4), Thomas Niesler (1)

(1) Department of Electrical and Electronic Engineering, Stellenbosch University, South Africa

(2) UN Global Pulse, Kampala, Uganda

(3) Department of Computer Science, Makerere University, Uganda

(4) School of Informatics, University of Edinburgh, UK

rmenon@sun.ac.za, kamperh@sun.ac.za, ewaldvdw@sun.ac.za, trn@sun.ac.za

Abstract

We compare features for dynamic time warping (DTW) when used to bootstrap keyword spotting (KWS) in an almost zero-resource setting. Such quickly-deployable systems aim to support United Nations (UN) humanitarian relief efforts in parts of Africa with severely under-resourced languages. Our objective is to identify acoustic features that provide acceptable KWS performance in such environments. As supervised resource, we restrict ourselves to a small, easily acquired and independently compiled set of isolated keywords. For feature extraction, a multilingual bottleneck feature (BNF) extractor, trained on well-resourced out-of-domain languages, is integrated with a correspondence autoencoder (CAE) trained on extremely sparse in-domain data. On their own, BNFs and CAE features are shown to achieve a more than 2% absolute performance improvement over baseline MFCCs. However, by using BNFs as input to the CAE, even better performance is achieved, with a more than 11% absolute improvement in ROC AUC over MFCCs and more than twice as many top-10 retrievals for two evaluated languages, English and Luganda. We conclude that integrating BNFs with the CAE allows both large out-of-domain and sparse in-domain resources to be exploited for improved ASR-free keyword spotting.

Index Terms: keyword spotting, low-resource speech processing, multilingual features, correspondence autoencoder, zero-resource speech technology

1. Introduction

In Uganda, internet infrastructure is often poorly developed, precluding the use of social media to gauge sentiment. Instead, community radio phone-in talk shows are used to voice views and concerns. In a project piloted by the United Nations (UN), radio browsing systems have been developed to monitor such radio shows [1, 2]. Currently, these systems are actively and successfully supporting relief and developmental programmes by the organisation. However, the deployed radio browsing systems use automatic speech recognition (ASR) and are therefore highly dependent on the availability of substantial transcribed speech corpora in the target language. This has proved to be a serious impediment when quick intervention is required, since the development of such a corpus is always time-consuming.

In a conventional keyword spotting system, where a speech database is searched for a set of keywords, ASR is used to generate lattices which are in turn searched for the presence or absence of keywords [3, 4]. In resource-constrained settings where ASR is not available and cannot be developed, ASR-free keyword spotting approaches become attractive, because these are developed without substantial labelled data [5–10]. One approach to ASR-free keyword spotting is to extend query-by-example search (QbE), where the search query is provided as audio rather than a written keyword. QbE can be performed by using dynamic time warping (DTW) to perform a direct match between a search query and utterances in the search collection [11–14]. This approach uses a number of labelled spoken keyword instances as templates. Each template is used as a query for the DTW-based QbE.
Since the class of each template is known, the individual per-exemplar QbE results can be aggregated to determine whether a certain keyword occurs in a particular utterance. The advantage of this approach is that only a small set of labelled keywords is required and not a large transcribed corpus as used for ASR-based keyword spotting [6, 7].

Recent interest in zero-resource QbE has led researchers to consider the use of various features [15–21]. Among these, multilingual bottleneck feature (BNF) extractors, trained on well-resourced but out-of-domain languages, have been shown to improve on the performance of MFCCs [7, 22–30].

Our goal is to improve DTW-based keyword spotting by combining the advantages of using labelled resources from well-resourced languages for learning features, with the advantage of fine-tuning on extremely sparse labelled data in the low-resource target language. For fine-tuning on target data, we use the correspondence autoencoder (CAE), a model originally developed for the zero-resource setting where only unlabelled data is available [21, 31]. As target language data, we use a small number of labelled isolated keywords that can be easily and quickly gathered. These keyword instances do not form part of the radio talk show training and evaluation data and can thus be considered out-of-corpus augmentation data. By learning a mapping between all possible combinations of alternative utterances of the same keyword type, the CAE can learn to disregard aspects not common to the keywords, such as speaker, gender and channel, while capturing aspects that are, such as word identity.

Our work builds on the ideas established in [22, 23], where a CAE trained on BNFs using a large set of in-corpus, ground truth word pairs outperformed other methods in intrinsic evaluations.
This improvement, however, did not hold consistently when automatically discovered word segments were used, in which case the CAE training was completely unsupervised. In contrast, we show here that consistent improvements can be obtained by combining BNFs with a CAE when fine-tuning on a small number of out-of-corpus gathered keyword instances, i.e. lightly supervised.

We benchmark CAE features against MFCCs and BNFs and show that, when a CAE is trained on top of the BNFs, best keyword spotting results are achieved. This indicates that multilingual feature extraction and target language fine-tuning can be complementary. We evaluate our approach for two languages: English, which is a proxy language for experimentation; and Luganda, which is a low-resource language of current interest for humanitarian relief efforts.

Figure 1: The United Nations (UN) radio browsing system. (Diagram labels: Audio Files, Speech Recognition, Decoded Audio Files, Keyword Spotting, Keyword Hits, Evaluate, Relevant Segments, Categorize, Categorized Files; ASR-based System, ASR-free System.)

2. Radio browsing system

The existing UN radio browsing system, shown in the top half of Figure 1, uses ASR to decode the audio and produces lattices that are searched for keywords. Human analysts filter the detected keywords and their metadata is compiled into a structured, categorised and searchable format. The ASR-free system (bottom half) bypasses the ASR and lattice search by detecting occurrences of the keywords directly in the incoming audio [6, 7]. High false positive keyword spotting rates can be accommodated due to the presence of the human analysts, and the output of the system as a whole has been in continuous successful operation for several months.
A more detailed discussion on the role of human analysts and the detected topics of interest has been presented in [2] (examples are available at http://radio.unglobalpulse.net).

3. Data

We used a 23-hour English corpus of South African Broadcast News (SABN) [32] and a 9.6-hour corpus of Luganda phone-in talk radio speech as search data in two separate experiments. Transcriptions are available for these data sets, which allows system performance to be evaluated experimentally. However, in all other respects we consider the data as untranscribed. English is used as a proxy on which we can perform extensive evaluation, while the implementation in Luganda is a practical application of the system in a truly low-resource language. Table 1 shows how the corpora have been split into training, development and test sets.

Table 1: The South African English Broadcast News (SABN) and Luganda datasets. (#utts: Number of utterances; dur: Speech duration in hours; Dev: Development set.)

| Set | English #utts | English dur | Luganda #utts | Luganda dur |
|-------|--------|-------|-------|------|
| Train | 5 231 | 7.94 | 6 052 | 5.57 |
| Dev | 2 740 | 5.37 | 1 786 | 2.04 |
| Test | 5 005 | 10.33 | 1 420 | 1.99 |
| Total | 12 976 | 23.64 | 9 258 | 9.60 |

To train the English keyword spotter, we use a small independent corpus of 40 isolated keywords, each uttered at least once by 24 South African speakers (12 male, 12 female). The resulting set of 1160 isolated keyword utterances represents the only labelled in-domain data the English keyword spotter uses for training. There is no speaker overlap with the SABN dataset, which is treated exclusively as search data.

To train the Luganda keyword spotter, we use a small independent corpus of 18 isolated keywords uttered by various male and female speakers in varying recording conditions. Approximately 32 utterances per keyword type were retained after performing quality control on the recordings. The resulting set of 603 isolated keyword utterances represents the only labelled in-domain data our keyword spotter uses for training. There is no speaker overlap with the Luganda talk radio dataset, which is treated exclusively as search data.

Seven keyword types that occurred more than 10 times in the development data were retained for evaluation against the development set. This was done to avoid errors in calculating the metrics caused by very low and zero frequency keywords. For the test set, the full set of keywords was used for evaluation. The mismatch between the query and search datasets for both languages is intentional as it reflects the operational setting of the radio browsing systems.

4. Dynamic time warping-based keyword spotting

Dynamic time warping (DTW) is an appropriate approach to keyword detection when only a few isolated exemplars of keywords are available, because it requires as little as a single audio template. DTW aligns two time series, represented as feature vector sequences, by warping the relative time axes iteratively until an optimal match is found.

For DTW-based keyword spotting, features are extracted for both the keyword exemplar and the search utterance in which the keyword is to be detected. In our straightforward implementation, the keyword exemplar is slid progressively over the search utterance and at each step DTW computes the alignment cost between the keyword and the portion of the utterance under alignment. Using a step of 3 frames, the overall best alignment for each search utterance is determined and taken as a score indicating how likely it is that the search utterance contains the keyword. Since we have more than one exemplar of the same keyword type, the best score across all templates of the same keyword type is used.
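
The sliding-window search described in Section 4 can be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: the Euclidean frame distance, the length normalisation, the choice of a window as long as the exemplar, and the function names `dtw_cost` and `utterance_score` are all assumptions made for the example. Only the 3-frame step and the "best score over all exemplars" rule come from the text.

```python
import numpy as np


def dtw_cost(query, segment):
    """Length-normalised DTW alignment cost between two (frames x dims) arrays."""
    n, m = len(query), len(segment)
    # Frame-to-frame Euclidean distances (assumed metric), shape (n, m).
    dist = np.linalg.norm(query[:, None, :] - segment[None, :, :], axis=-1)
    acc = np.full((n + 1, m + 1), np.inf)
    acc[0, 0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            acc[i, j] = dist[i - 1, j - 1] + min(
                acc[i - 1, j], acc[i, j - 1], acc[i - 1, j - 1]
            )
    # Normalise by n + m so exemplars of different lengths are comparable (assumed).
    return acc[n, m] / (n + m)


def utterance_score(exemplars, utterance, step=3):
    """Lowest DTW cost over all exemplars and window positions (lower = more likely)."""
    best = np.inf
    for query in exemplars:
        width = len(query)  # assumed: window length equals exemplar length
        last_start = max(len(utterance) - width, 0)
        for start in range(0, last_start + 1, step):
            window = utterance[start:start + width]
            best = min(best, dtw_cost(query, window))
    return best
```

Here `exemplars` would hold the feature sequences of all templates of one keyword type, and the returned cost would be compared against a threshold to decide whether the keyword is present.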
By applying an appropriate threshold to this score, a decision can be taken regarding the presence or absence of the keyword in each search utterance. More refined DTW-based search approaches have been proposed [11–14], mainly to improve efficiency, but here we restrict ourselves to this straightforward implementation. Future work will consider more advanced matching approaches.

5. Neural network feature extraction

We investigate different types of input features for our DTW-based keyword spotter. While transcribed in-domain data is difficult, time-consuming and expensive to compile, untranscribed in-domain speech audio data is much easier to obtain in substantial quantities. We investigate the use of autoencoders and correspondence autoencoders as a means of taking advantage of such untranscribed data. The latter requires a sparse set of labelled examples in the target language. In addition, although large amounts of transcribed in-domain speech data may not be available, large annotated speech resources do exist for several well-resourced languages. These datasets can be used to train multilingual bottleneck feature extractors.

5.1. Autoencoder features

An autoencoder (AE) is a feedforward neural network trained to reconstruct its input at its output. A single-layer AE consists of an input layer, a hidden layer and an output layer. The AE takes input $x \in \mathbb{R}^D$ and maps it to a hidden representation $h = \sigma(W^{(0)} x + b^{(0)})$, with $\sigma$ denoting a non-linear activation (we use tanh). The output of the AE is obtained by decoding the hidden representation: $y = \sigma(W^{(1)} h + b^{(1)})$. The network is trained to reconstruct the input using the loss $\|x - y\|^2$. A stacked AE [33] is obtained by stacking several AEs, each AE-layer taking as input the encoding from the previous layer. The stacked network is trained one layer at a time, each layer minimizing the loss of its output with respect to its input.
A number of studies have shown that hidden representations from an intermediate layer in such a stacked AE are useful as features in speech applications [31, 33–38].

We train an 8-layer stacked AE feature extractor on the training set shown in Table 1, disregarding the transcriptions. 39-dimensional MFCCs consisting of 13 cepstra, delta and delta-delta coefficients are used as input. All layers have 100 hidden units, apart from the last hidden layer, which has 39 units. This layer provides the features used in the AE_MFCC and AE_BNF experiments. This last hidden layer feeds into a linear output layer, producing the predicted MFCC vector.

5.2. Correspondence autoencoder features

While an AE is trained using the same speech frames as input and output, a correspondence autoencoder (CAE) uses frames from different instances of the same keyword type as input and output. Using the set of isolated keywords, we consider all possible pairs of words of the same type. For each pair, DTW is used to find the minimum-cost frame-level alignment between the two words, as illustrated in Figure 2. Individual aligned frame pairs are then used as input-output pairs to the CAE. The CAE is therefore trained on pairs of speech features $(x^{(a)}, x^{(b)})$, where $x^{(a)}$ is a frame from one keyword, and $x^{(b)}$ the corresponding aligned frame from another keyword of the same type. Given input $x^{(a)}$, the output of the network $y$ is then trained to minimise the CAE loss $\|y - x^{(b)}\|^2$, as shown in Figure 2.

To obtain useful features, it is essential to pretrain the CAE as a conventional AE [31]. Our CAE has the same structure as the AE described in Section 5.1 and pretraining follows the same procedure described there. The pretrained network is then fine-tuned on the set of isolated keywords using the CAE loss described above.
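
As a rough illustration of how the CAE training pairs in Section 5.2 could be assembled, the sketch below aligns every pair of same-type keyword instances with DTW and collects the aligned frames in both input-output directions. It is an assumption-laden example rather than the authors' code: the Euclidean frame distance, the three-way DTW step pattern, and the names `dtw_path` and `cae_pairs` are hypothetical choices.

```python
import itertools

import numpy as np


def dtw_path(a, b):
    """Minimum-cost DTW alignment path between two (frames x dims) arrays."""
    n, m = len(a), len(b)
    # Frame-to-frame Euclidean distances (assumed metric).
    dist = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=-1)
    acc = np.full((n + 1, m + 1), np.inf)
    acc[0, 0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            acc[i, j] = dist[i - 1, j - 1] + min(
                acc[i - 1, j - 1], acc[i - 1, j], acc[i, j - 1]
            )
    # Backtrack from the end of both words to the start.
    i, j, path = n, m, []
    while i > 0 and j > 0:
        path.append((i - 1, j - 1))
        moves = [
            (acc[i - 1, j - 1], i - 1, j - 1),
            (acc[i - 1, j], i - 1, j),
            (acc[i, j - 1], i, j - 1),
        ]
        _, i, j = min(moves, key=lambda t: t[0])
    path.reverse()
    return path


def cae_pairs(words_by_type):
    """Aligned (input, target) frames for all same-type word pairs, both directions."""
    inputs, targets = [], []
    for instances in words_by_type.values():
        for a, b in itertools.combinations(instances, 2):
            for i, j in dtw_path(a, b):
                inputs.extend([a[i], b[j]])   # x(a) -> x(b) and x(b) -> x(a)
                targets.extend([b[j], a[i]])
    return np.array(inputs), np.array(targets)
```

`words_by_type` is assumed to map each keyword type to a list of feature arrays, one per spoken instance. The returned arrays could then be used to fine-tune a pretrained AE with a squared-error loss between the network output and the target frame.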
Hence, the CAE takes advantage of a large amount of untranscribed data for initialisation, and then combines this with a weak form of supervision on a small amount of labelled keyword data. Output features are extracted from the last 39-dimensional hidden layer.

The intention is to use the CAE to obtain features that are insensitive to factors not common to keyword pairs, such as speaker, gender and channel, while remaining dependent on factors that are, such as the word identity. Furthermore, the number of input-output pairs on which the CAE is fine-tuned is much larger than the total number of frames in the keyword segments themselves, because all pairwise combinations of different instances of a keyword type are considered. For example, for the SABN dataset, the keywords contain approximately 120k frames in total, while the pairwise combinations yield approximately two million unique aligned frame pairs. Furthermore, frame pairs are presented to the CAE in both input-output directions, thereby doubling the number of training instances to four million.

Figure 2: The correspondence autoencoder (CAE) is trained to reconstruct a frame in one word from a frame in another. (Diagram labels: $x^{(a)}$, $x^{(b)}$, DTW, word (a), word (b).)

5.3. Bottleneck features

Multilingual bottleneck feature (BNF) extractors trained on a set of well-resourced languages have been shown to perform well in a number of studies [7, 22–30], and can be applied directly in an almost zero-resource setting. BNFs are obtained by training a deep neural network jointly on transcribed data from multiple languages. The lower layers of the network are shared among all languages. The output layer has phone or HMM state labels as targets and may either be shared by or be separate for each language.
The layer directly preceding the output layer often has a lower dimensionality than the preceding layers, because it should capture aspects that are common to all the languages, hence the term "bottleneck."

Different neural network architectures can be used to obtain BNFs. We used the 6-layer time-delay neural network (TDNN) trained on 10 languages from the GlobalPhone corpus described in [22]. The network uses ReLU activations and batch normalisation, with a 39-dimensional bottleneck layer. 40-dimensional high resolution MFCCs appended with 100-dimensional i-vectors for speaker adaptation are used as inputs to the network.

6. Experimental setup

In addition to MFCCs, we use each of the neural networks described above as feature extractors, using features from the intermediate/bottleneck layers of the CAE, AE and BNF as input to our DTW-based keyword spotter. All the neural networks take MFCCs as input. Each takes advantage of resources in a particular way: the AE is trained on untranscribed target language data; the CAE is initialised on untranscribed data and then fine-tuned on a small amount of labelled target language data; and the BNFs use larger amounts of labelled non-target language data. The complementary effect of these approaches is also investigated by performing experiments in which the AE and CAE are trained with BNFs rather than MFCCs as input.

Hyperparameters for the CAE were taken directly from [31], i.e., no further tuning was performed on the development set; hence, it can be considered a second test set.

Keyword spotting performance is assessed using a number of standard metrics. The receiver operating characteristic (ROC) is obtained by plotting the false positive rate against the true positive rate as the keyword detection threshold is varied. The area under this curve (AUC) is used as a single metric across all operating points. The equal error rate (EER) is the point at which the false positive rate equals the false negative rate; a lower EER indicates better system performance. Precision at 10 (P@10) and precision at N (P@N) are the proportion of correct keyword detections among the top 10 and top N hits, respectively.

Table 2: English and Luganda keyword spotting performance on development and test data using the different feature representations. Subscripts in the column headings distinguish whether MFCCs or BNFs were used as inputs to the AE and CAE. All values are percentages.

| Language | Metric | Dev: MFCC | Dev: AE_MFCC | Dev: CAE_MFCC | Dev: BNF | Dev: AE_BNF | Dev: CAE_BNF | Test: MFCC | Test: CAE_MFCC | Test: BNF | Test: CAE_BNF |
|---|---|---|---|---|---|---|---|---|---|---|---|
| English | AUC | 73.32 | 73.01 | 77.14 | 77.81 | 78.38 | 86.98 | 74.10 | 76.86 | 76.99 | 86.39 |
| English | EER | 32.34 | 33.51 | 28.91 | 28.72 | 28.23 | 19.24 | 32.19 | 30.05 | 30.12 | 20.12 |
| English | P@10 | 15.75 | 16.50 | 25.25 | 17.00 | 17.75 | 42.25 | 17.00 | 30.25 | 22.75 | 45.75 |
| English | P@N | 9.43 | 9.68 | 14.66 | 13.99 | 13.64 | 30.88 | 9.75 | 16.45 | 12.85 | 29.99 |
| Luganda | AUC | 66.51 | 67.52 | 69.62 | 71.24 | 72.73 | 78.09 | 69.57 | 69.74 | 73.33 | 80.59 |
| Luganda | EER | 38.68 | 38.57 | 37.20 | 33.26 | 31.20 | 29.33 | 37.20 | 37.24 | 33.73 | 29.00 |
| Luganda | P@10 | 11.43 | 14.29 | 28.57 | 11.43 | 10.00 | 45.71 | 18.89 | 27.78 | 26.11 | 41.67 |
| Luganda | P@N | 10.72 | 10.13 | 13.95 | 9.99 | 11.61 | 26.11 | 13.87 | 18.77 | 18.21 | 28.80 |

7. Results

The keyword spotting results for both languages are presented in Table 2. The 'MFCC' and 'BNF' subscripts in the column headings distinguish between networks trained using MFCCs and BNFs as input features. The results for MFCC, AE_MFCC and CAE_MFCC features show that the CAE consistently outperforms the MFCC baseline, while the AE does not provide any benefit in this case. The BNF and CAE_MFCC results are comparable in the case of SABN English, while BNFs outperform CAE_MFCC for Luganda. Using a small amount of labelled data in a target language can therefore be just as beneficial as using large amounts of labelled data from several non-target languages for feature learning. This may be important in situations where large out-of-domain datasets are not available.
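
For readers who want to reproduce the kind of figures reported in Table 2, the metrics defined in Section 6 can be computed per keyword roughly as below. This is a hedged sketch: the function names are hypothetical, tied scores are not treated specially, the EER is approximated by the ROC point where the two error rates are closest, and N in P@N is assumed to be the number of utterances that truly contain the keyword (the text does not define N). Scores are assumed to be "higher is better", so a DTW cost would be negated first.

```python
import numpy as np


def roc_curve_points(scores, labels):
    """False and true positive rates as the detection threshold is lowered."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=float)  # 1 = keyword present, 0 = absent
    ranked = labels[np.argsort(-scores, kind="stable")]
    tpr = np.concatenate([[0.0], np.cumsum(ranked) / ranked.sum()])
    fpr = np.concatenate([[0.0], np.cumsum(1.0 - ranked) / (1.0 - ranked).sum()])
    return fpr, tpr


def auc(fpr, tpr):
    """Area under the ROC curve by the trapezoidal rule."""
    return float(np.sum(np.diff(fpr) * (tpr[1:] + tpr[:-1]) / 2.0))


def equal_error_rate(fpr, tpr):
    """Approximate EER: the ROC point where FPR and FNR are closest."""
    fnr = 1.0 - tpr
    idx = int(np.argmin(np.abs(fpr - fnr)))
    return float((fpr[idx] + fnr[idx]) / 2.0)


def precision_at_k(scores, labels, k):
    """Fraction of correct detections among the k highest-scoring utterances."""
    order = np.argsort(-np.asarray(scores, dtype=float), kind="stable")
    return float(np.asarray(labels, dtype=float)[order[:k]].mean())
```

With `costs` holding one DTW cost per search utterance, one would call `roc_curve_points(-costs, labels)`, and obtain P@N as `precision_at_k(-costs, labels, int(sum(labels)))`. Each keyword needs at least one positive and one negative utterance for the rates to be defined.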
Our best overall model on both the development and test data is the CAE_BNF. It achieves precision values approximately 1.7 times better than the closest competitor, while the AUC and EER are approximately 7–9% and 4–10% better than standard BNFs, respectively. Compared to the baseline MFCCs, AUC and EER improve by 8–12% when using the CAE_BNF features. The AE_BNF can also achieve improvements over its MFCC counterpart, but not to the same degree as CAE_BNF. The CAE_BNF shows the benefits of incorporating features learned from well-resourced non-target languages with fine-tuning on a small amount of labelled target language data after pretraining on untranscribed in-domain speech. We show this directly in an extrinsic keyword spotting task that uses features obtained from a lightly supervised neural network model. In contrast to the work of [22, 23], where discovered word pairs were used for unsupervised CAE training and the benefit of CAE training on top of BNFs was inconclusive, we obtain consistent improvements in our setting.

8. Conclusion

We investigated the use of different neural network features for improving ASR-free DTW-based keyword spotting in an almost zero-resource setting. The only labelled data used were a small number of isolated keyword utterances. Features were extracted using a multilingual bottleneck network (BNF), a stacked autoencoder (AE) and a correspondence autoencoder (CAE). We also considered combining these, feeding the AE and CAE with BNFs instead of MFCCs. The best performance was achieved with a CAE trained on BNFs. This model combines the benefit of labelled data in well-resourced out-of-domain languages with a technique that can be used on extremely sparse in-domain data. Another interesting finding is that, in the absence of multilingual resources to train a BNF extractor, features from a CAE trained on MFCCs can yield comparable performance.
Future work includes integrating this model into our larger keyword spotting framework [6] and applying it to languages such as Somali, Rutooro and Lugbara, which are spoken in areas where the system will be deployed next.

9. Acknowledgements

We thank NVIDIA Corporation for donating GPU equipment used for this work, and acknowledge the support of Telkom South Africa. HK is supported by a Google Faculty Award. We would also like to thank Enno Hermann for assisting with bottleneck feature extraction.

10. References

[1] R. Menon et al., "Radio-browsing for developmental monitoring in Uganda," in Proc. ICASSP, 2017.
[2] A. Saeb et al., "Very low resource radio browsing for agile developmental and humanitarian monitoring," in Proc. Interspeech, 2017.
[3] M. Larson and G. J. F. Jones, "Spoken content retrieval: A survey of techniques and technologies," Found. Trends Inform. Retrieval, vol. 5, no. 4-5, pp. 235–422, 2012.
[4] A. Mandal, K. P. Kumar, and P. Mitra, "Recent developments in spoken term detection: a survey," Int. J. of Speech Technol., vol. 17, no. 2, pp. 183–198, 2014.
[5] K. Audhkhasi, A. Rosenberg, A. Sethy, B. Ramabhadran, and B. Kingsbury, "End-to-end ASR-free keyword search from speech," in Proc. ICASSP, 2017.
[6] R. Menon, H. Kamper, J. Quinn, and T. Niesler, "Fast ASR-free and almost zero-resource keyword spotting using DTW and CNNs for humanitarian monitoring," in Proc. Interspeech, 2018.
[7] ——, "ASR-free CNN-DTW keyword spotting using multilingual bottleneck features for almost zero-resource languages," in Proc. SLTU, 2018.
[8] T. N. Sainath and C. Parada, "Convolutional neural networks for small-footprint keyword spotting," in Proc. Interspeech, 2015.
[9] G. Chen, C. Parada, and G. Heigold, "Small-footprint keyword spotting using deep neural networks," in Proc. ICASSP, 2014.
[10] R. Tang and J. Lin, "Deep residual learning for small-footprint keyword spotting," in Proc. ICASSP, 2018.
[11] T. J. Hazen, W. Shen, and C. White, "Query-by-example spoken term detection using phonetic posteriorgram templates," in Proc. ASRU, 2009.
[12] Y. Zhang and J. R. Glass, "Unsupervised spoken keyword spotting via segmental DTW on Gaussian posteriorgrams," in Proc. ASRU, 2009.
[13] A. S. Park and J. R. Glass, "Unsupervised pattern discovery in speech," IEEE Trans. Audio, Speech, Language Process., vol. 16, no. 1, pp. 186–197, 2008.
[14] A. Jansen and B. Van Durme, "Indexing raw acoustic features for scalable zero resource search," in Proc. Interspeech, 2012.
[15] J. Vavrek, M. Pleva, and J. Juhár, "TUKE MediaEval 2012: Spoken web search using DTW and unsupervised SVM," in MediaEval, 2012.
[16] M. A. Carlin, S. Thomas, A. Jansen, and H. Hermansky, "Rapid evaluation of speech representations for spoken term discovery," in Proc. Interspeech, 2011.
[17] A. Jansen, B. Van Durme, and P. Clark, "The JHU-HLTCOE spoken web search system for MediaEval 2012," in MediaEval, 2012.
[18] P. Lopez-Otero, L. Docio-Fernandez, and C. Garcia-Mateo, "Finding relevant features for zero-resource query-by-example search on speech," Speech Commun., vol. 84, pp. 24–35, 2016.
[19] E. Dunbar et al., "The Zero Resource Speech Challenge 2017," in Proc. ASRU, 2017.
[20] M. Versteegh, X. Anguera, A. Jansen, and E. Dupoux, "The Zero Resource Speech Challenge 2015: Proposed approaches and results," in Proc. SLTU, 2016.
[21] D. Renshaw, H. Kamper, A. Jansen, and S. J. Goldwater, "A comparison of neural network methods for unsupervised representation learning on the Zero Resource Speech Challenge," in Proc. Interspeech, 2015.
[22] E. Hermann and S. J. Goldwater, "Multilingual bottleneck features for subword modeling in zero-resource languages," in Proc. Interspeech, 2018.
[23] E. Hermann, H. Kamper, and S. J. Goldwater, "Multilingual and unsupervised subword modeling for zero resource languages," In Submission, 2018.
[24] K. Veselý, M. Karafiát, F. Grézl, M. Janda, and E. Egorova, "The language-independent bottleneck features," in Proc. SLT, 2012.
[25] N. T. Vu, W. Breiter, F. Metze, and T. Schultz, "An investigation on initialization schemes for multilayer perceptron training using multilingual data and their effect on ASR performance," in Proc. Interspeech, 2012.
[26] J. Cui et al., "Multilingual representations for low resource speech recognition and keyword search," in Proc. ASRU, 2015.
[27] T. Alumäe, S. Tsakalidis, and R. M. Schwartz, "Improved multilingual training of stacked neural network acoustic models for low resource languages," in Proc. Interspeech, 2016.
[28] S. Thomas, S. Ganapathy, and H. Hermansky, "Multilingual MLP features for low-resource LVCSR systems," in Proc. ICASSP, 2012.
[29] H. Chen, C.-C. Leung, L. Xie, B. Ma, and H. Li, "Multilingual bottle-neck feature learning from untranscribed speech," in Proc. ASRU, 2017.
[30] Y. Yuan et al., "Extracting bottleneck features and word-like pairs from untranscribed speech for feature representation," in Proc. ASRU, 2017.
[31] H. Kamper, M. Elsner, A. Jansen, and S. Goldwater, "Unsupervised neural network based feature extraction using weak top-down constraints," in Proc. ICASSP, 2015.
[32] H. Kamper, F. De Wet, T. Hain, and T. Niesler, "Capitalising on North American speech resources for the development of a South African English large vocabulary speech recognition system," Comput. Speech Language, vol. 28, no. 6, pp. 1255–1268, 2014.
[33] J. Gehring, Y. Miao, F. Metze, and A. Waibel, "Extracting deep bottleneck features using stacked auto-encoders," in Proc. ICASSP, 2013.
[34] M. D. Zeiler et al., "On rectified linear units for speech processing," in Proc. ICASSP, 2013.
[35] L. Badino, C. Canevari, L. Fadiga, and G. Metta, "An auto-encoder based approach to unsupervised learning of subword units," in Proc. ICASSP, 2014.
[36] G. Hinton et al., "Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups," IEEE Signal Process. Mag., vol. 29, no. 6, pp. 82–97, 2012.
[37] L. Deng, M. L. Seltzer, D. Yu, A. Acero, A. R. Mohamed, and G. Hinton, "Binary coding of speech spectrograms using a deep auto-encoder," in Proc. Interspeech, 2010.
[38] T. N. Sainath, B. Kingsbury, and B. Ramabhadran, "Auto-encoder bottleneck features using deep belief networks," in Proc. ICASSP, 2012.
