Framework Code Samples: How Are They Maintained and Used by Developers?

Background: Modern software systems are commonly built on top of frameworks. To accelerate the learning of framework features, code samples are made available to assist developers. However, we know little about how code samples are actually developed. Aims: In this paper, we aim to fill this gap by assessing the characteristics of framework code samples. We provide insights on how code samples are maintained and used by developers. Method: We analyze 233 code samples of Android and SpringBoot, and assess aspects related to their source code, evolution, popularity, and client usage. Results: We find that most code samples are small and simple, provide a working environment to the clients, and rely on automated build tools. They change frequently over time, for example, to adapt to new framework versions. We also find that clients commonly fork the code samples but rarely modify them. Conclusions: We provide a set of lessons learned and implications to creators and clients of code samples to improve maintenance and usage activities.

💡 Research Summary

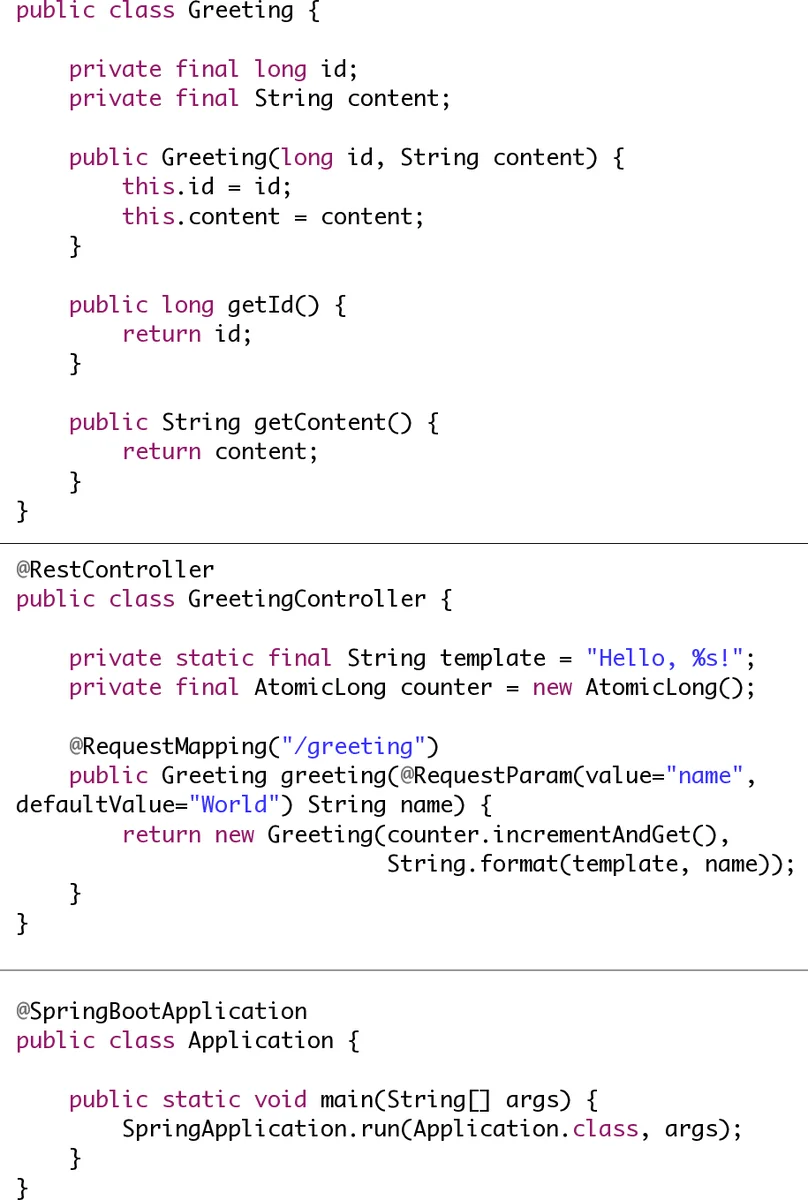

The paper investigates the often‑overlooked lifecycle of framework code samples, focusing on how they are created, evolved, and consumed by developers. The authors selected two widely used Java‑based ecosystems—Android and SpringBoot—and collected a total of 233 publicly available samples (176 Android, 57 SpringBoot) hosted on GitHub. Four research questions guided the empirical study: (1) What are the source‑code characteristics of framework samples? (2) How do these samples evolve over time? (3) Which attributes differentiate popular from unpopular samples? (4) How are the samples used by developers?

For RQ1, the authors measured basic source‑code metrics (number of files, lines of code, cyclomatic complexity, comment density) using the Understand tool. The median Android sample had 47 files, 24 commits, and 95 stars; the median SpringBoot sample had 27 files, 137 commits, and 45 stars. Most samples were small, with low complexity, and contained extensive comments. File‑extension analysis revealed a rich mix of Java source files together with configuration artifacts such as XML, JSON, YAML, Dockerfiles, and build scripts (Maven pom.xml, Gradle build.gradle). This composition indicates that the samples provide a ready‑to‑run environment rather than a bare‑bones code snippet.
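The file‑extension analysis described above can be approximated with a simple histogram over a repository's file paths. The following is a minimal sketch, not the authors' tooling: the function name `extension_histogram` and the file listing are hypothetical, and the choice to key extensionless files (such as a Dockerfile) by their full name is an assumption made for illustration.

```python
from collections import Counter
from pathlib import PurePosixPath


def extension_histogram(paths):
    """Count files per extension.

    Assumption: files without an extension (e.g. "Dockerfile") are keyed
    by their file name so that they remain visible in the histogram.
    """
    counts = Counter()
    for p in paths:
        path = PurePosixPath(p)
        key = path.suffix.lower() or path.name
        counts[key] += 1
    return counts


# Hypothetical file listing for a single code sample repository.
sample_files = [
    "app/src/main/java/com/example/MainActivity.java",
    "app/src/main/AndroidManifest.xml",
    "app/src/main/res/layout/activity_main.xml",
    "app/build.gradle",
    "build.gradle",
    "Dockerfile",
    "README.md",
]

hist = extension_histogram(sample_files)
for ext, n in hist.most_common():
    print(ext, n)
```

A histogram dominated by configuration and build files alongside source files would be consistent with the "ready‑to‑run environment" observation.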

RQ2 examined the evolutionary dimension by extracting the full commit history of each repository. Frequency of commits and project lifetime (days between first and last commit) were computed. Android samples showed a median of 24 commits, while SpringBoot samples exhibited a median of 137 commits, reflecting more active maintenance in the web‑framework domain. The authors also tracked changes in file extensions and configuration files across versions, confirming that updates frequently touch both source code and build‑tool descriptors. Migration delay—defined as the time elapsed between a new framework release and the corresponding sample update—averaged around 45 days, demonstrating that sample maintainers strive to keep examples aligned with the latest APIs.
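The two time‑based measures in this paragraph, project lifetime and migration delay, reduce to date arithmetic. The sketch below assumes that the dates of commits which update the framework version are already known (for example, extracted from changes to a build file); how the authors identified such commits is not stated here, and all function names and dates are hypothetical.

```python
from datetime import date


def project_lifetime_days(commit_dates):
    """Days between the first and last commit of a repository."""
    return (max(commit_dates) - min(commit_dates)).days


def migration_delay_days(release_date, version_update_dates):
    """Days from a framework release to the first sample update after it.

    Assumption: version_update_dates holds only the dates of commits that
    move the sample to a newer framework version. Returns None when no
    such commit follows the release.
    """
    later = [d for d in version_update_dates if d >= release_date]
    if not later:
        return None
    return (min(later) - release_date).days


# Hypothetical commit history and framework release date.
commits = [date(2018, 1, 10), date(2018, 3, 1), date(2018, 6, 15)]
release = date(2018, 4, 20)
version_updates = [date(2018, 6, 15)]

lifetime = project_lifetime_days(commits)
delay = migration_delay_days(release, version_updates)
print(lifetime, delay)
```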

In RQ3, popularity was operationalized via GitHub stars. Samples were split into the top 50 % (popular) and bottom 50 % (unpopular). Statistical comparison using the Mann‑Whitney test (α = 0.05) and effect‑size measurement (Cliff’s Δ) showed that popular samples have significantly more files (≈1.8×), more commits (≈2.3×), and longer lifetimes than unpopular ones. The effect sizes were in the medium range, indicating that richer file structures and more frequent updates are associated with higher community interest.
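To make the comparison concrete, the sketch below splits samples into a top and bottom half by stars and computes Cliff's Δ for one attribute (file count). It uses the standard pairwise definition of Cliff's Δ; the data, the dictionary layout, and the function names are hypothetical, and the significance test itself is left out (a statistics library such as SciPy provides `scipy.stats.mannwhitneyu` for that step).

```python
def split_by_popularity(samples):
    """Rank samples by stars and split into top half and bottom half."""
    ranked = sorted(samples, key=lambda s: s["stars"], reverse=True)
    half = len(ranked) // 2
    return ranked[:half], ranked[half:]


def cliffs_delta(xs, ys):
    """Cliff's delta: (#pairs with x > y - #pairs with x < y) / (|xs| * |ys|)."""
    greater = sum(1 for x in xs for y in ys if x > y)
    less = sum(1 for x in xs for y in ys if x < y)
    return (greater - less) / (len(xs) * len(ys))


# Hypothetical samples; values are illustrative only.
samples = [
    {"stars": 300, "files": 80},
    {"stars": 150, "files": 60},
    {"stars": 90, "files": 40},
    {"stars": 40, "files": 45},
    {"stars": 10, "files": 20},
    {"stars": 5, "files": 30},
]

popular, unpopular = split_by_popularity(samples)
delta = cliffs_delta(
    [s["files"] for s in popular],
    [s["files"] for s in unpopular],
)
print(round(delta, 3))
```

Cliff's Δ ranges from -1 to 1; a positive value here means that popular samples tend to have more files than unpopular ones.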

RQ4 explored actual developer usage through the fork metric. While 68 % of the samples were forked at least once, 82 % of those forks showed little to no subsequent activity (≤2 commits), suggesting that most developers fork samples for reference or learning rather than for extensive modification. A minority (≈18 %) of forks involved genuine development work, such as adding features or adapting the sample to a specific context.
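The fork classification can be illustrated with a small helper that applies the ≤2‑commit threshold mentioned above. This is a sketch under the assumption that the number of commits made in each fork after forking is already available; the function name and the counts are hypothetical.

```python
def fork_activity_shares(fork_commit_counts, threshold=2):
    """Share of forks with little or no activity versus active forks.

    A fork counts as inactive when it has at most `threshold` commits
    after forking (the <=2 commits criterion from the text).
    """
    total = len(fork_commit_counts)
    if total == 0:
        return {"inactive": 0.0, "active": 0.0}
    inactive = sum(1 for c in fork_commit_counts if c <= threshold)
    return {
        "inactive": inactive / total,
        "active": (total - inactive) / total,
    }


# Hypothetical post-fork commit counts for ten forks of one sample.
counts = [0, 0, 1, 2, 5, 0, 12, 0, 1, 0]
shares = fork_activity_shares(counts)
print(shares)
```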

Based on these findings, the authors propose actionable recommendations. Sample creators should adopt automated build tools, maintain comprehensive configuration files, and establish continuous‑integration pipelines to ensure regular updates, especially after framework releases. Providing clear documentation and comments further enhances learnability. For developers who fork samples, the authors advise regularly syncing with upstream changes to benefit from security patches and API updates, thereby keeping their derived projects current.

Overall, the study fills a gap in software‑engineering literature by quantifying the maintenance practices of framework code samples and revealing how popularity correlates with upkeep. The insights are valuable for framework vendors, open‑source maintainers, and developers who rely on sample code to accelerate learning and prototype development.
