End-to-End Speech Recognition with High-Frame-Rate Features Extraction

State-of-the-art end-to-end automatic speech recognition (ASR) extracts acoustic features from input speech signal every 10 ms which corresponds to a frame rate of 100 frames/second. In this report, we investigate the use of high-frame-rate features extraction in end-to-end ASR. High frame rates of 200 and 400 frames/second are used in the features extraction and provide additional information for end-to-end ASR. The effectiveness of high-frame-rate features extraction is evaluated independently and in combination with speed perturbation based data augmentation. Experiments performed on two speech corpora, Wall Street Journal (WSJ) and CHiME-5, show that using high-frame-rate features extraction yields improved performance for end-to-end ASR, both independently and in combination with speed perturbation. On WSJ corpus, the relative reduction of word error rate (WER) yielded by high-frame-rate features extraction independently and in combination with speed perturbation are up to 21.3% and 24.1%, respectively. On CHiME-5 corpus, the corresponding relative WER reductions are up to 2.8% and 7.9%, respectively, on the test data recorded by microphone arrays and up to 11.8% and 21.2%, respectively, on the test data recorded by binaural microphones.

💡 Research Summary

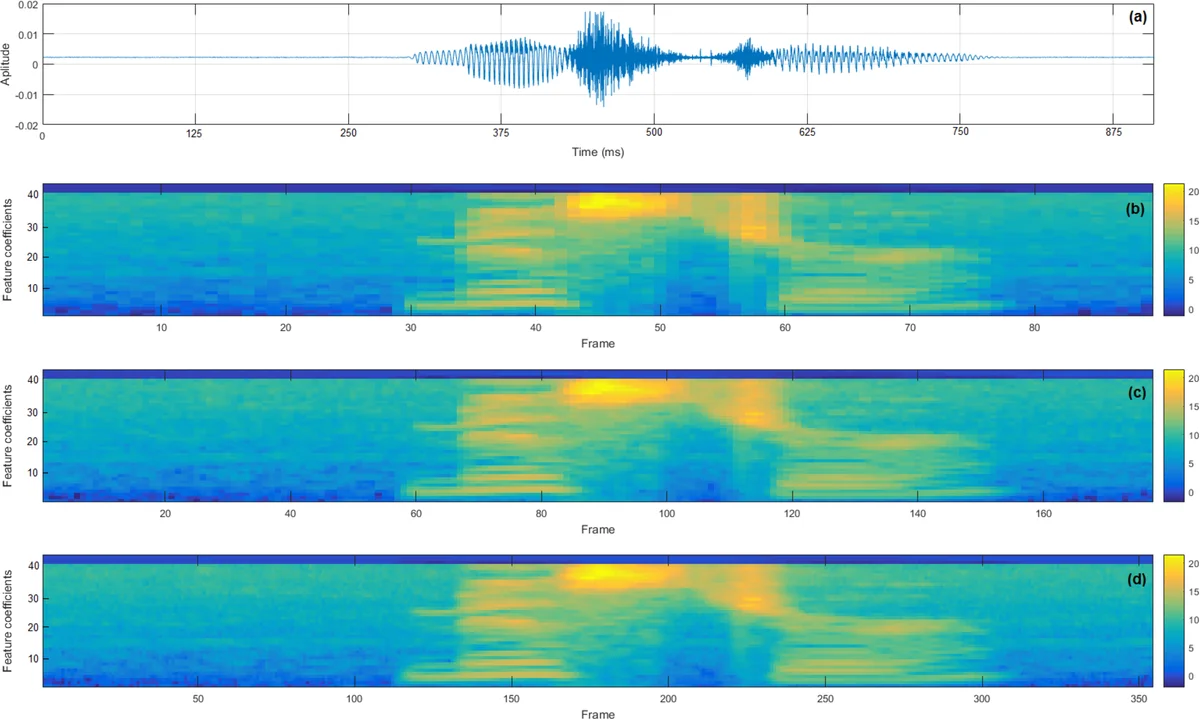

This paper investigates whether extracting acoustic features at a higher frame rate than the conventional 100 frames‑per‑second (fps) can improve end‑to‑end automatic speech recognition (ASR). State‑of‑the‑art end‑to‑end ASR systems typically compute 40‑dimensional Mel‑filterbank (FBANK) features together with three pitch‑related dimensions (pitch, delta‑pitch, voicing probability) every 10 ms, yielding a 100 fps feature matrix. The authors reduce the hop size to 5 ms and 2.5 ms, thereby generating feature streams at 200 fps and 400 fps respectively. The hypothesis is that a finer temporal resolution provides more detailed acoustic cues, which can be exploited by the encoder networks—particularly convolutional neural networks (CNN) and pyramid bidirectional LSTM (pBLSTM) layers that rely on temporal context.

Two corpora are used for evaluation: the Wall Street Journal (WSJ) read‑speech dataset (clean, 37 k training utterances) and the CHiME‑5 conversational speech dataset (real‑world multi‑microphone recordings, ~167 h training). Both corpora are processed with the same front‑end pipeline: pre‑emphasis, 25 ms analysis windows, FBANK extraction, pitch augmentation, and utterance‑level mean normalization. For CHiME‑5, development data recorded by a reference microphone array are beam‑formed with BeamformIt before feature extraction.

The ASR architecture follows the hybrid CTC/attention model implemented in ESPnet. Two encoder variants are examined: (1) a VGG‑net (six convolutional layers with 3×3 kernels and 3×3 max‑pooling, stride 2) followed by a 4‑layer pBLSTM (320 cells per direction, subsampling factor 4), and (2) a pure 4‑layer pBLSTM. The decoder is a single‑layer LSTM (300 cells). Training combines CTC and attention losses with λ = 0.2 for WSJ and λ = 0.1 for CHiME‑5, using AdaDelta and gradient clipping over 15 epochs. An external 1‑layer LSTM language model (RNN‑LM) is incorporated during joint beam search (beam width 20, LM weight 0.1).

Data augmentation is performed via speed perturbation: each training set is tripled by resampling the audio to 0.9× and 1.1× of the original speed, which changes both the duration and the number of frames. Speed perturbation is applied only to training data, whereas high‑frame‑rate feature extraction is applied to training, development, and test data.

Results are presented in four tables. On WSJ, high‑frame‑rate features alone reduce word error rate (WER) by up to 21.3 % relative on the Dev93 set and 12.1 % on Eval92 when using the VGG‑net + pBLSTM encoder. When combined with speed perturbation, the relative reductions improve to 24.1 % and 15.1 % respectively. The pBLSTM‑only encoder also benefits, though gains are slightly smaller. On CHiME‑5, the improvements are more modest for the microphone‑array recordings (up to 2.8 % reduction with high‑frame‑rate alone, 7.9 % when combined with speed perturbation) but considerably larger for the binaural recordings (up to 11.8 % alone, 21.2 % combined). Across both corpora, the VGG‑net + pBLSTM encoder consistently outperforms the pBLSTM‑only encoder, indicating that convolutional front‑ends can better exploit the richer temporal information provided by higher frame rates.

The analysis shows that increasing the frame rate from 200 fps to 400 fps yields additional gains on the clean WSJ data but not consistently on the noisy CHiME‑5 data, suggesting that the benefit of finer temporal resolution may be limited by the presence of background noise and reverberation. Moreover, high‑frame‑rate extraction and speed perturbation are complementary: the former adds more frames per utterance without altering the acoustic content, while the latter creates new utterances with altered spectral characteristics. Their combination leads to the largest WER reductions.

In conclusion, extracting acoustic features at higher frame rates is a simple yet effective way to improve end‑to‑end ASR performance, especially when paired with modern CNN‑BLSTM encoders and conventional data augmentation techniques. The paper opens several avenues for future work, including adaptive or variable frame‑rate strategies, multi‑scale feature fusion, and real‑time deployment considerations.

Comments & Academic Discussion

Loading comments...

Leave a Comment