End-to-end Text-to-speech for Low-resource Languages by Cross-Lingual Transfer Learning

Authors: Tao Tu, Yuan-Jui Chen, Cheng-chieh Yeh

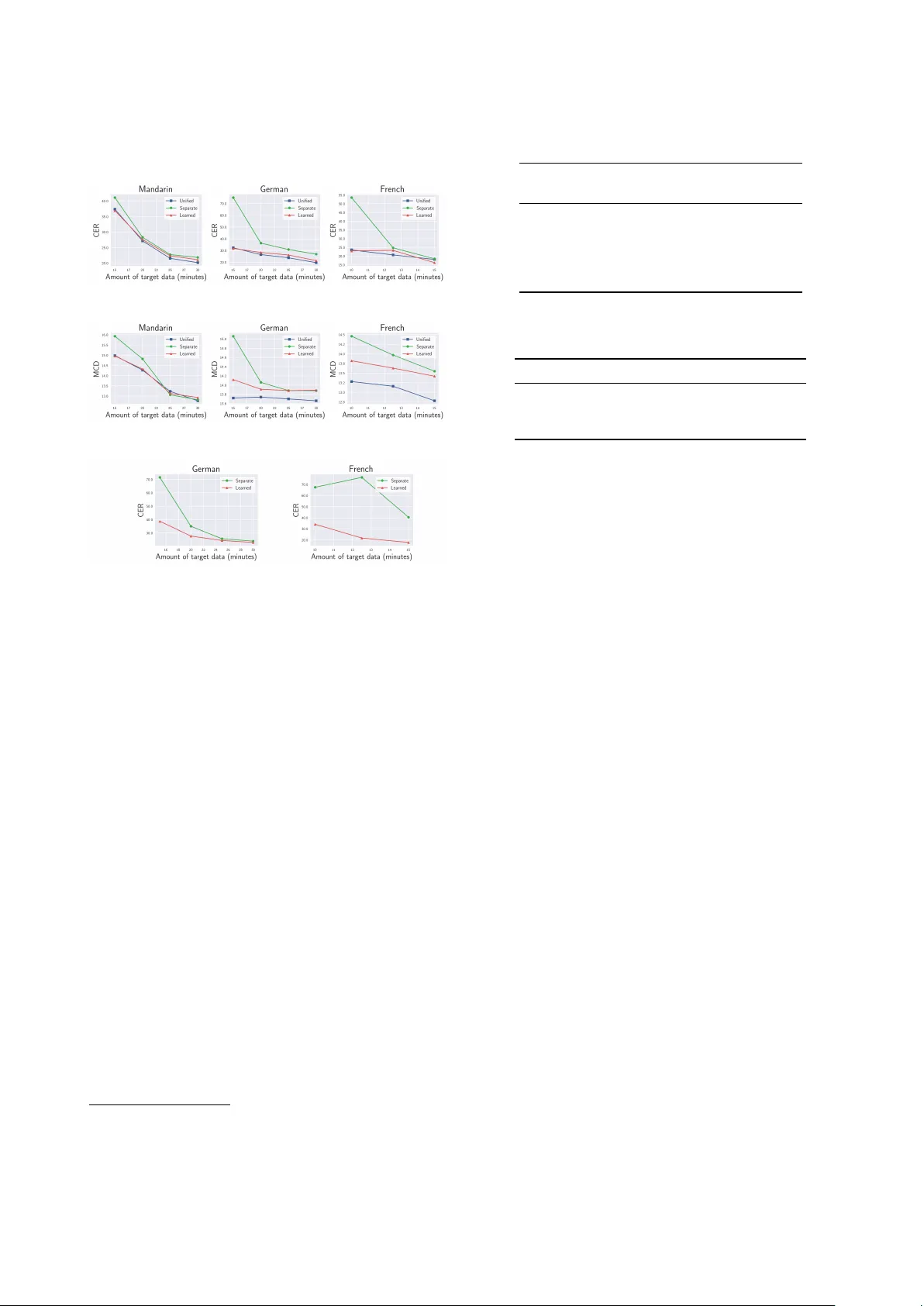

Yuan-Jui Chen*¹, Tao Tu*¹, Cheng-chieh Yeh¹, Hung-yi Lee¹
¹College of Electrical Engineering and Computer Science, National Taiwan University
{r07922070, r07922022, r06942067, hungyilee}@ntu.edu.tw

Abstract
End-to-end text-to-speech (TTS) has shown great success on large quantities of paired text plus speech data. However, laborious data collection remains difficult for at least 95% of the languages in the world, which hinders the development of TTS in different languages. In this paper, we aim to build TTS systems for such low-resource (target) languages, where only very limited paired data are available. We show that such TTS systems can be effectively constructed by transferring knowledge from a high-resource (source) language. A model trained on the source language cannot be directly applied to a target language because of input space mismatch, so we propose a method to learn a mapping between source and target linguistic symbols. Benefiting from this learned mapping, pronunciation information can be preserved throughout the transferring procedure. Preliminary experiments show that we only need around 15 minutes of paired data to obtain a relatively good TTS system. Furthermore, analytic studies demonstrate that the automatically discovered mapping correlates well with phonetic expertise.

Index Terms: end-to-end, speech synthesis, transfer learning, cross-lingual, low-resource

1. Introduction
Recent research on end-to-end text-to-speech (TTS) [1, 2, 3, 4, 5, 6] has achieved success in generating human-like, high-quality speech. Moreover, end-to-end TTS systems also demonstrate a powerful capability for cloning prosody style or speaker characteristics [7, 8, 9, 10, 11]. However, training end-to-end TTS systems requires large quantities of text-audio paired data.
To improve data efficiency, a semi-supervised training framework that leverages large-scale non-parallel text and speech resources has been proposed for Tacotron [1, 12]. Nevertheless, there is little discussion of end-to-end TTS for low-resource languages, where only very limited paired data are available.

Previous research on multi-lingual multi-speaker (MLMS) statistical parametric speech synthesis (SPSS) has discussed using high-resource languages to help construct TTS systems for low-resource languages. Some research shows that a model trained on multiple languages can benefit from cross-lingual information and aid adaptation to new languages using only a small amount of data [13, 14]. In these methods, the linguistic inputs of each language are converted internally into language-independent representations. In contrast, another work [15] maps the inputs to the International Phonetic Alphabet (IPA) [16], which is a unified canonical representation. The authors propose a language-agnostic model and also show that a model trained on many languages is sometimes better than a monolingual system built from small amounts of data. Likewise, another work indicates that the training data needed to build a new TTS system can be reduced by pooling phonologically close languages, using a special phoneme inventory designed to share as many regularities across languages as possible [17]. Although previous work demonstrates that utilizing cross-lingual information benefits TTS, this idea has not yet been widely studied for end-to-end TTS.

*Equal contribution

In this paper, we introduce cross-lingual transfer learning for low-resource languages to end-to-end TTS. We first pretrain a TTS model on data from a high-resource (source) language and then adapt it to low-resource (target) languages.
To tackle the input space mismatch across languages, we propose a Phonetic Transformation Network (PTN) model that discovers a mapping between source and target linguistic symbols according to their pronunciation. The idea is similar to the probabilistic phoneme mapping model [18, 19], but our approach is purely deep-learning based and uses connectionist temporal classification (CTC) loss [20] as the training objective. Benefiting from the learned mapping, pronunciation information can be preserved throughout the transferring procedure. Objective and subjective tests show that our transfer learning approach needs only a small amount of paired data in the target language to generate intelligible speech¹. When the input linguistic symbols of both the source and target languages are phonemes, our approach is competitive with the transfer learning method that uses a handcrafted mapping based on IPA. Furthermore, even when lexicons of the target languages are not accessible, our symbol mapping remains applicable. It enables TTS to transfer from a source language with phoneme input to target languages with character input. Finally, analytic studies demonstrate that the automatically discovered mapping correlates well with phonetic expertise.

2. Proposed approach
Given an input symbol sequence, an end-to-end TTS system first transforms each symbol into a symbol embedding using an embedding matrix. A generative model² then outputs the spectrogram or raw waveform from the symbol embeddings. We can formulate end-to-end text-to-speech as

$$f_{\theta, W}: \mathcal{X}_{\mathcal{L}} \rightarrow \mathcal{Y} \tag{1}$$

where $\theta$ denotes the parameters of the generative model, $W$ denotes the learnable symbol embeddings, and $\mathcal{Y}$ denotes the space of human speech. $\mathcal{X}_{\mathcal{L}}$ is the text space for a specific language,

$$\mathcal{X}_{\mathcal{L}} = \left\{ \{s_t\}_{t=1}^{T} \;\middle|\; \forall t,\ s_t \in \mathcal{L},\ T \in \mathbb{N} \right\} \tag{2}$$

where $\mathcal{L}$ is the linguistic symbol set for this language, and $T$ is the length of the input symbol sequence.
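
Equations (1) and (2) split the model into two parts. The first is the embedding table $W$, whose rows are indexed by the symbol set $\mathcal{L}$. The second is the generative model $\theta$, which only ever sees embedding vectors. The toy Python sketch below shows why this split matters for transfer. The names `tts_forward` and `toy_theta` and the two-row embedding table are hypothetical stand-ins, not Tacotron. In the sketch, $\theta$ accepts embeddings from any language, but the lookup into $W$ fails once an input symbol lies outside the set that $W$ was built for.

```python
from typing import Callable, Dict, List, Sequence

Vector = List[float]
# W: learnable symbol embeddings, one row per symbol in the set L
Embeddings = Dict[str, Vector]
# theta: a generative model from an embedding sequence to speech features
GenerativeModel = Callable[[List[Vector]], List[Vector]]


def tts_forward(symbols: Sequence[str], w: Embeddings,
                theta: GenerativeModel) -> List[Vector]:
    """Toy f_{theta,W}: embed each s_t with W, then run the generative model."""
    unknown = [s for s in symbols if s not in w]
    if unknown:
        # Eq. (2): every s_t must belong to L, the symbol set W was built for
        raise KeyError(f"symbols outside the embedding table: {unknown}")
    return theta([w[s] for s in symbols])


def toy_theta(embeddings: List[Vector]) -> List[Vector]:
    """Hypothetical stand-in for a Tacotron-like decoder: one frame per symbol."""
    return [[2.0 * x for x in e] for e in embeddings]


if __name__ == "__main__":
    w_src = {"SH": [0.1, 0.2], "IH": [0.3, 0.4]}  # tiny hypothetical source table
    print(tts_forward(["SH", "IH"], w_src, toy_theta))
    try:
        tts_forward(["ʂ", "ɪ"], w_src, toy_theta)  # target-language symbols
    except KeyError as err:
        print("input space mismatch:", err)
```

The generative model never sees symbols, only vectors. This is why $\theta_{src}$ can initialize $\theta_{tgt}$ directly, while $W$ needs one of the transfer strategies of Sections 2.1 to 2.3.
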
Our goal is to construct TTS systems for low-resource (target) languages by transferring knowledge from a high-resource (source) language.

¹ Sound demos can be found at https://henryhenrychen.github.io/CL-transfer-demo
² For example, a sequence-to-sequence model as in Tacotron [1].

Figure 1: Approaches to transferring the TTS model from the source language to the target language: (a) separate symbol space, (b) unified symbol space, and (c) learned symbol space. (d) The training scheme of the phonetic transformation network (PTN) for obtaining the learned symbol space. In stage 1 (high-resource), an ASR system is trained on source audio. In stage 2 (low-resource), the fixed ASR system is followed by PTN, which maps the source symbol distribution $p_{src}$ to the target symbol distribution $p_{tgt}$ on target audio.

We can directly use $\theta_{src}$ learned from the source language to initialize the training of $\theta_{tgt}$ on the target language, because both $\theta_{src}$ and $\theta_{tgt}$ take embeddings as input and generate speech³. However, the same idea cannot be directly applied to $W_{src}$ and $W_{tgt}$. The obvious problem is that $s_{src}$ and $s_{tgt}$ come from different symbol sets, i.e., $\mathcal{L}_{src} \neq \mathcal{L}_{tgt}$. To deal with this input space mismatch during the transferring procedure, we present two naive baselines and propose a novel transfer learning approach that uses a learned mapping between $s_{src}$ and $s_{tgt}$.

2.1. Separate symbol space
The first approach simply treats the linguistic symbol sets of the source and target languages as two different symbol sets. In this approach, $\theta_{tgt}$ is derived by finetuning $\theta_{src}$, but the target symbol embeddings $W_{tgt}$ are learned from scratch.

2.2. Unified symbol space
However, some sound units are shared by different languages. If we discard $W_{src}$ and train a new $W_{tgt}$, some useful pronunciation information learned previously may be lost.
This can be resolved by mapping $\mathcal{L}_{src}$ and $\mathcal{L}_{tgt}$ to a unified symbol set $\mathcal{L}_{uni}$, where the mapping is handcrafted and relies on linguistic expertise. In this way, we can use $W_{src}$ to initialize $W_{tgt}$, because both have the same set of input symbols $\mathcal{L}_{uni}$. Note that this method requires experts to design the symbol mapping for the source and target languages. Such a mapping is not always available, especially when the symbol set of one language consists of phonemes while the other consists of characters.

³ Here src and tgt stand for source and target, respectively.

2.3. Learned symbol space
To preserve pronunciation information during transferring without relying on linguistic expertise, we propose the Phonetic Transformation Network (PTN). This model automatically learns how to map source symbols to target symbols according to their sounds.

2.3.1. Phonetic transformation network
First, we pretrain an automatic speech recognition (ASR) system on the source language, as illustrated in stage 1 of Figure 1(d). The ASR system learns to output the symbol (phoneme) sequence of the source language using CTC loss. Afterward, we fix the pretrained ASR system and concatenate our proposed PTN model with it. PTN can be formulated as

$$h: p_{src} \mapsto p_{tgt} \tag{3}$$

where $p_{src}$ and $p_{tgt}$ are probability distributions over $\mathcal{L}_{src}$ and $\mathcal{L}_{tgt}$ for a specific timestep. In our case, $p_{src}$ is also the ASR output symbol posteriorgram. The concatenation of the pretrained ASR system and PTN is then further trained on target-language data. Training maximizes the log-likelihood of the target symbol labellings (phonemes or characters of the target language) using CTC loss, as illustrated in stage 2 of Figure 1(d). In stage 2, the parameters of the ASR system are fixed. PTN therefore learns to find the most probable target symbols given the ASR output, which is expressed over source symbols.
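
Stage 2 trains PTN with the CTC objective [20]. The loss is the negative log-probability of the target label sequence, summed over every frame-level alignment (with repeats and blanks) that collapses to that sequence. The pure-Python sketch below evaluates this quantity with the standard forward (alpha) recursion, as a minimal illustration only. Its input is a list of per-frame target-symbol distributions, such as the PTN outputs $p_{tgt}$. The function name `ctc_neg_log_likelihood`, the use of index 0 as the CTC blank, and the probability-space arithmetic are assumptions of this sketch. It computes only the loss value; actual training would rely on a deep-learning framework's log-space CTC implementation to obtain gradients for PTN.

```python
import math
from typing import List, Sequence


def ctc_neg_log_likelihood(probs: Sequence[Sequence[float]],
                           labels: Sequence[int],
                           blank: int = 0) -> float:
    """Return -log p(labels | probs) under CTC.

    probs[t][k]: probability of target symbol k at frame t (e.g., the PTN
    output p_tgt for frame t). labels: target symbol indices, no blanks.
    Works in probability space for readability, so long inputs underflow;
    practical implementations work in log space.
    """
    # Extended sequence with blanks: [blank, l1, blank, l2, ..., lN, blank]
    ext: List[int] = [blank]
    for symbol in labels:
        ext += [symbol, blank]
    n = len(ext)

    # alpha[s]: total probability of all alignment prefixes ending in state s
    alpha = [0.0] * n
    alpha[0] = probs[0][ext[0]]
    if n > 1:
        alpha[1] = probs[0][ext[1]]

    for frame in probs[1:]:
        new_alpha = [0.0] * n
        for s in range(n):
            total = alpha[s]                  # repeat the current state
            if s >= 1:
                total += alpha[s - 1]         # move to the next state
            # Jump over a blank, unless that would merge two equal labels
            if s >= 2 and ext[s] != blank and ext[s] != ext[s - 2]:
                total += alpha[s - 2]
            new_alpha[s] = total * frame[ext[s]]
        alpha = new_alpha

    # Complete alignments end on the last label or on the final blank
    likelihood = alpha[-1] + (alpha[-2] if n > 1 else 0.0)
    return math.inf if likelihood <= 0.0 else -math.log(likelihood)


if __name__ == "__main__":
    # Two frames, symbols {0: blank, 1: one target symbol}, uniform outputs.
    # Alignments "1 1", "blank 1" and "1 blank" each have probability 0.25.
    print(ctc_neg_log_likelihood([[0.5, 0.5], [0.5, 0.5]], [1]))  # ≈ 0.2877 = -log(0.75)
```

The `math.inf` return covers label sequences that cannot fit in the available frames. For example, two identical labels need a blank between them and therefore at least three frames.
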
Since the pretrained ASR system can transcribe an audio frame in the target language into a posteriorgram of source symbols, training in stage 2 enables PTN to learn how to convert the symbols (phonemes) of the source language into the symbols (phonemes or characters) of the target language.

2.3.2. Symbol mapping discovery
With PTN, we can derive the target symbol that sounds most similar to a given source symbol. Given the $i$-th source symbol $s^i_{src}$, we simply pass to PTN a one-hot vector $o^i$ whose $i$-th dimension is set to 1. If the sound of $s^i_{src}$ is shared between the source and target languages, PTN will convert $o^i$ to a target symbol with high probability. Accordingly, we can map each source symbol to a target symbol by the following formulation:

$$\mathrm{map}(s^i_{src}) = \begin{cases} s^j_{tgt} & \text{if } h_j(o^i) > \xi, \quad j = \arg\max_k h_k(o^i) \\ \text{None} & \text{otherwise} \end{cases} \tag{4}$$

where $h_k(\cdot)$ denotes the $k$-th output dimension of PTN $h(\cdot)$, $s^j_{tgt}$ denotes the $j$-th symbol in the target language, and $\xi$ is the transformation threshold. Once the mapping is obtained, we can transfer the embedding weight of a source symbol to its corresponding target symbol. If multiple source symbols map to the same target symbol, we transfer the embedding weight of the one with the highest probability. Target-language symbols that no source symbol maps to still have their embedding weights learned from scratch.

3. Implementation
3.1. TTS model
In this work, we adopt the original Tacotron architecture [1] as our end-to-end TTS model, which has an encoder-decoder architecture with an attention mechanism. The spectral analysis settings also follow the Tacotron paper [1]. Since our goal is to study transfer learning in the small-data regime, we simply use Griffin-Lim [21] as the waveform synthesizer and leave the exploration of other architectures [3, 8] to future work.

3.2. ASR and PTN model
Our ASR system is a pure-CNN model, modified from previous work [22]. We also experimented with a pyramidal recurrent neural network (RNN) model [23], but it did not perform as expected in preliminary studies. We conjecture that a multi-layer RNN learns a strong language model of the source language, which constrains the model's outputs and hinders the training of the subsequent PTN. PTN is composed of three fully connected layers with ReLU activation functions. Dropout with a rate of 0.4 is applied to each layer.

4. Experiments
To verify whether the TTS model can benefit from cross-lingual transfer learning and generate clear speech from small amounts of data, we conduct both objective and subjective tests. For the objective tests, we use Google's Cloud Speech-to-Text API to recognize the generated speech and use the character error rate (CER) as the measure of clarity. We also use mel-cepstral distortion (MCD) [24] for evaluation. MCD measures the distance between synthesized and ground-truth speech in the mel-frequency cepstral space; lower is better. For the subjective measurements, we run mean opinion score (MOS) tests to assess naturalness.

For simplicity, "phn2phn" denotes the setting that uses phonemes as input in both the source and target languages. "phn2char" denotes the setting that uses phoneme input in the source language but character input in the target languages. Likewise, "Separate", "Unified", and "Learned" denote the models that transfer with the separate, unified, and learned symbol spaces, respectively.

4.1. Data setup
4.1.1. Source language
In our experiments, English was selected as the high-resource language. The initial TTS model is pretrained on the LJ Speech Dataset [25], a public-domain speech dataset consisting of around 24 hours of text-speech paired data.
For the ASR training in Section 2.3, we use the LibriSpeech Dataset [26], an ASR corpus based on public-domain audiobooks. The 100-hour clean training set is used for training and the clean development set for early stopping.

4.1.2. Target language
Mandarin, German, and French are chosen as the target languages. The Mandarin experiments use an internal corpus recorded by a female speaker. The German data comes from the German LibriVox corpus organized by M-AILABS [27]; we use data from the female speaker Eva K. For French, we use data from the female speaker FR010 in the GlobalPhone collection [28], which consists of only about 18 minutes of paired data. We split the data into training and testing sets as shown in Table 1⁴.

Table 1: Data statistics of target languages

| Language | Train (minutes) | Test (utterances) |
|---|---|---|
| Mandarin | 30 | 250 |
| German | 30 | 120 |
| French | 15 | 100 |

4.2. Experimental setup
The initial TTS model is obtained by pretraining on the source language for 10k parameter updates. For all transfer learning methods, training continues on the target-language paired data from the same initial TTS model parameters.

In "Separate" (Section 2.1), the embedding matrices for the target languages are randomly initialized from a normal distribution with zero mean and a standard deviation of 0.3. In "Unified" (Section 2.2), all symbols are mapped to IPA. Each target-language symbol's embedding weight is then initialized from the source symbol that shares its IPA representation. The embeddings of the remaining symbols are randomly initialized as in "Separate". In "Learned" (Section 2.3), the stage-1 ASR model is pretrained on the source language for 300k parameter updates, and the best model is selected on the development set. PTN is trained on the same data that is used to finetune the TTS model on the target languages.
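
The three target-embedding initializations of this section, together with the mapping rule of Eq. (4), can be sketched in plain Python as follows. Everything here is illustrative:

- `learned_mapping` and `init_target_embeddings` are hypothetical helper names.
- `ptn` is any callable that returns $h(o^i)$ as a list of target-symbol probabilities, standing in for a trained PTN.
- Embeddings are plain lists of floats rather than framework tensors.

The defaults follow the reported settings ($\xi = 0.4$, standard deviation 0.3). The tie-break, which keeps the most probable source symbol when several map to the same target, follows Section 2.3.2.

```python
import random
from typing import Callable, Dict, List, Sequence, Tuple

Vector = List[float]


def learned_mapping(ptn: Callable[[Vector], Vector],
                    num_src: int,
                    xi: float = 0.4) -> Dict[int, Tuple[int, float]]:
    """Apply Eq. (4) to every source symbol.

    Feeds the one-hot vector o^i to the (hypothetical) PTN callable and keeps
    target j = argmax_k h_k(o^i) only if h_j(o^i) exceeds xi. Returns
    {target index j: (source index i, h_j(o^i))}; when several source
    symbols map to the same target, the most probable one is kept.
    """
    best: Dict[int, Tuple[int, float]] = {}
    for i in range(num_src):
        one_hot = [0.0] * num_src
        one_hot[i] = 1.0
        out = ptn(one_hot)  # h(o^i): a distribution over the target symbols
        j = max(range(len(out)), key=out.__getitem__)  # argmax_k h_k(o^i)
        if out[j] <= xi:
            continue  # map(s^i_src) = None
        if j not in best or out[j] > best[j][1]:
            best[j] = (i, out[j])
    return best


def init_target_embeddings(w_src: Sequence[Vector],
                           num_tgt: int,
                           tgt_to_src: Dict[int, int],
                           std: float = 0.3,
                           seed: int = 0) -> List[Vector]:
    """Build W_tgt row by row.

    Rows of target symbols listed in tgt_to_src are copied from W_src; all
    other rows are drawn from N(0, std^2), as in "Separate".
    """
    rng = random.Random(seed)
    dim = len(w_src[0])
    w_tgt: List[Vector] = []
    for j in range(num_tgt):
        if j in tgt_to_src:
            w_tgt.append(list(w_src[tgt_to_src[j]]))  # transferred row
        else:
            w_tgt.append([rng.gauss(0.0, std) for _ in range(dim)])
    return w_tgt


if __name__ == "__main__":
    # Toy stand-in for a trained PTN with 3 source and 3 target symbols.
    table = [[0.7, 0.2, 0.1],    # source 0 -> target 0
             [0.9, 0.05, 0.05],  # source 1 -> target 0, higher probability
             [0.3, 0.35, 0.35]]  # best is 0.35 <= 0.4 -> unmapped

    def toy_ptn(one_hot: Vector) -> Vector:
        return table[one_hot.index(1.0)]

    found = learned_mapping(toy_ptn, num_src=3)
    print(found)  # {0: (1, 0.9)}
    w_src = [[1.0, 1.0], [2.0, 2.0], [3.0, 3.0]]
    w_tgt = init_target_embeddings(w_src, 3, {j: i for j, (i, _) in found.items()})
    print(w_tgt[0])  # [2.0, 2.0]
```

In this sketch the three baselines differ only in how the `tgt_to_src` dictionary is produced:

- "Separate" leaves it empty.
- "Unified" would build it by hand from shared IPA symbols.
- "Learned" takes it from `learned_mapping`.
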
The transformation threshold $\xi$ is set to 0.4 for all target languages. Finally, the target symbol embeddings are initialized in the same way as in "Unified", except that the mapping is now learned automatically.

4.3. Experiment results
4.3.1. Objective tests
First, we show the results in the "phn2phn" setting, where lexicons for the target languages are accessible. Figure 2 shows the CER results. For every language and every amount of target data used, "Unified" and "Learned" consistently outperform "Separate". This implies that transferring knowledge through the symbol (phoneme) mapping is beneficial. As the size of the target data decreases, "Separate" deteriorates the most, while "Learned" stays close to "Unified". This indicates that the mapping information is especially effective when data are very scarce, and that our learned mapping is competitive with the IPA-based one. Figure 3 shows that the MCD results agree with the CER results. We also evaluated a model trained from scratch, with all network weights randomly initialized. Even with all training data, it cannot produce understandable speech, and its CER exceeds 80% for every language. Thus, we do not plot its results.

⁴ Mandarin and German use the same test sets for both CER and MCD measurements. However, since there is very little French data and the MCD test needs ground-truth audio, we randomly select the needed training data and leave the rest for testing. This procedure is run three times, and the average score is reported.

In addition, we investigate the "phn2char" setting. Because the input symbols of the target languages are characters in this setting, the "Unified" approach is not applicable. Figure 4 shows a large gap between "Learned" and "Separate" on German and French⁵.
This shows that our proposed method performs well even when the source symbols are phoneme-level and the target symbols are character-level.

⁵ Because Mandarin characters correspond to syllables instead of phonemes, "phn2char" is not reasonable for Mandarin, so its performance is not presented here.

Figure 2: Results of CER under the "phn2phn" scenario.
Figure 3: Results of MCD tests under the "phn2phn" scenario.
Figure 4: Results of CER under the "phn2char" scenario.

4.3.2. Subjective tests
To further examine the impact of target data size on the quality of generated speech, we conduct a series of MOS tests. We use 25 minutes and 15 minutes of Mandarin paired data in the "phn2phn" setting. The model trained from scratch (denoted "Scratch") is also evaluated for comparison. In the MOS tests, 40 subjects rated the naturalness of the given speech audio, using 80 audio samples of unseen utterances. Each utterance received at least 5 ratings. After listening to each stimulus with headphones, the subjects rated its naturalness on a five-point Likert scale (1: Bad, 2: Poor, 3: Fair, 4: Good, 5: Excellent). Table 2 shows that with 25 minutes of paired data, the three transfer learning methods "Separate", "Unified", and "Learned" perform almost the same, and all outperform "Scratch". When the training data is reduced to 15 minutes, "Separate" degrades noticeably, which is consistent with the objective tests. The results show that all three transfer learning approaches benefit from pretraining and generate intelligible speech, given a small but sufficient amount of target-language paired data (25 min). With less paired data (15 min), our proposed approach "Learned" is comparable to "Unified" and gives promising results without using linguistic expertise.

Table 2: Mean Opinion Score (MOS) ratings with 95% confidence intervals for naturalness.

| Method | MOS, 25 minutes | MOS, 15 minutes |
|---|---|---|
| Ground Truth | 4.89 ± 0.045 | |
| Separate | 3.94 ± 0.085 | 2.90 ± 0.176 |
| Unified | 4.01 ± 0.085 | 3.48 ± 0.119 |
| Learned | 3.99 ± 0.086 | 3.46 ± 0.117 |
| Scratch | 1.39 ± 0.153 | 1.26 ± 0.094 |

4.4. Symbol mapping studies
In this part, we show that our learned symbol mappings are reasonable, evaluating them against IPA in the "phn2phn" setting. We regard a learned mapping as correct if the source phoneme and its learned target phoneme share the same IPA representation. To calculate the recall score, we derive the total number of correct mappings from the overlap of the two languages' phoneme sets after both are mapped to IPA. For comparison, we also report the recall obtained when each source phoneme is randomly mapped to a target phoneme in the overlap. Table 3 shows that our method retrieves highly informative mappings and is far better than random guessing. Our method also performs better on German and French than on Mandarin, possibly because German and French are more similar to the source language, English. Despite its relatively low recall on Mandarin, our method still discovers some mappings between similar-sounding phonemes that have different IPA representations. For example, the symbols <ʂ> and <ʃ> are mapped to each other. Although they are not identical according to IPA, their pronunciations are quite alike and similar to "sh" in English.

Table 3: Precision and recall of the found mapping on 15-minute target data.

| Mapping | Precision | Recall | Recall (random) |
|---|---|---|---|
| EN → DE | 82.6% | 63.3% | 3.4% |
| EN → FR | 73.7% | 56.0% | 4.0% |
| EN → ZH | 64.7% | 47.8% | 4.5% |
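
The evaluation behind Table 3 can be sketched as follows. The sketch rests on assumptions that the text does not spell out:

- Precision is the fraction of discovered (non-None) mappings whose two phonemes share an IPA symbol.
- The recall denominator is the number of source phonemes whose IPA symbol also occurs in the target inventory.

The function name and the toy inventories are hypothetical, not the paper's data. The inventories use standard IPA values for four English ARPAbet phonemes, plus invented target labels. The toy mapping pairs SH with a retroflex target phoneme. As in the Mandarin example above, this pair counts as incorrect under a strict IPA comparison even though the sounds are similar.

```python
from typing import Dict, Optional, Tuple


def mapping_precision_recall(mapping: Dict[str, Optional[str]],
                             src_ipa: Dict[str, str],
                             tgt_ipa: Dict[str, str]) -> Tuple[float, float]:
    """Score a learned source->target phoneme mapping against IPA.

    mapping: source phoneme -> learned target phoneme, or None when Eq. (4)
             returned None. src_ipa / tgt_ipa: phoneme -> IPA symbol.
    Precision: correct pairs / pairs found.
    Recall:    correct pairs / source phonemes whose IPA symbol also
               occurs in the target inventory (the IPA overlap).
    """
    found = {s: t for s, t in mapping.items() if t is not None}
    correct = sum(
        1 for s, t in found.items()
        if s in src_ipa and src_ipa[s] == tgt_ipa.get(t)
    )
    tgt_symbols = set(tgt_ipa.values())
    possible = sum(1 for ipa in src_ipa.values() if ipa in tgt_symbols)
    precision = correct / len(found) if found else 0.0
    recall = correct / possible if possible else 0.0
    return precision, recall


if __name__ == "__main__":
    # Hypothetical toy inventories, not the paper's phoneme sets.
    src_ipa = {"SH": "ʃ", "NG": "ŋ", "IH": "ɪ", "AO": "ɔ"}
    tgt_ipa = {"x": "ʂ", "ng": "ŋ", "i": "ɪ", "o": "ɔ"}
    learned = {"SH": "x", "NG": "ng", "IH": None, "AO": None}
    print(mapping_precision_recall(learned, src_ipa, tgt_ipa))  # (0.5, 0.3333333333333333)
```
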
For more details about the learned mapping, please refer to the demo page.

5. Conclusion
In this paper, we explored cross-lingual transfer learning in end-to-end TTS for low-resource languages. We proposed an approach that discovers a cross-lingual symbol mapping, which helps the model better transfer knowledge learned from abundant source data. Experimental results show that our method enables the model to produce far more natural-sounding speech than a model trained only on target data. It also achieves promising results compared with a method that relies on strong linguistic expertise.

6. References
[1] Y. Wang, R. Skerry-Ryan, D. Stanton, Y. Wu, R. J. Weiss, N. Jaitly, Z. Yang, Y. Xiao, Z. Chen, S. Bengio et al., “Tacotron: Towards end-to-end speech synthesis,” Interspeech, pp. 4006–4010, 2017.
[2] A. Van Den Oord, S. Dieleman, H. Zen, K. Simonyan, O. Vinyals, A. Graves, N. Kalchbrenner, A. W. Senior, and K. Kavukcuoglu, “Wavenet: A generative model for raw audio.”
[3] J. Shen, R. Pang, R. J. Weiss, M. Schuster, N. Jaitly, Z. Yang, Z. Chen, Y. Zhang, Y. Wang, R. Skerry-Ryan et al., “Natural tts synthesis by conditioning wavenet on mel spectrogram predictions,” in International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2018, pp. 4779–4783.
[4] W. Ping, K. Peng, A. Gibiansky, S. O. Arik, A. Kannan, S. Narang, J. Raiman, and J. Miller, “Deep voice 3: Scaling text-to-speech with convolutional sequence learning,” arXiv preprint arXiv:1710.07654, 2017.
[5] Y. Taigman, L. Wolf, A. Polyak, and E. Nachmani, “Voiceloop: Voice fitting and synthesis via a phonological loop,” arXiv preprint arXiv:1707.06588, 2017.
[6] J. Sotelo, S. Mehri, K. Kumar, J. F. Santos, K. Kastner, A. Courville, and Y. Bengio, “Char2wav: End-to-end speech synthesis,” 2017.
[7] R. Skerry-Ryan, E. Battenberg, Y. Xiao, Y. Wang, D. Stanton, J. Shor, R. J. Weiss, R. Clark, and R. A. Saurous, “Towards end-to-end prosody transfer for expressive speech synthesis with tacotron,” arXiv preprint, 2018.
[8] Y. Wang, D. Stanton, Y. Zhang, R. Skerry-Ryan, E. Battenberg, J. Shor, Y. Xiao, F. Ren, Y. Jia, and R. A. Saurous, “Style tokens: Unsupervised style modeling, control and transfer in end-to-end speech synthesis,” arXiv preprint, 2018.
[9] Y. Jia, Y. Zhang, R. Weiss, Q. Wang, J. Shen, F. Ren, P. Nguyen, R. Pang, I. L. Moreno, Y. Wu et al., “Transfer learning from speaker verification to multispeaker text-to-speech synthesis,” in Advances in Neural Information Processing Systems, 2018, pp. 4480–4490.
[10] J. Lorenzo-Trueba, F. Fang, X. Wang, I. Echizen, J. Yamagishi, and T. Kinnunen, “Can we steal your vocal identity from the internet?: Initial investigation of cloning obama's voice using gan, wavenet and low-quality found data,” arXiv preprint arXiv:1803.00860, 2018.
[11] S. Arik, J. Chen, K. Peng, W. Ping, and Y. Zhou, “Neural voice cloning with a few samples,” in Advances in Neural Information Processing Systems, 2018, pp. 10019–10029.
[12] Y.-A. Chung, Y. Wang, W.-N. Hsu, Y. Zhang, and R. Skerry-Ryan, “Semi-supervised training for improving data efficiency in end-to-end speech synthesis,” in International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2019, pp. 6940–6944.
[13] Q. Yu, P. Liu, Z. Wu, S. K. Ang, H. Meng, and L. Cai, “Learning cross-lingual information with multilingual blstm for speech synthesis of low-resource languages,” in International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2016, pp. 5545–5549.
[14] A. Gutkin, “Uniform multilingual multi-speaker acoustic model for statistical parametric speech synthesis of low-resourced languages,” 2017.
[15] B. Li and H. Zen, “Multi-language multi-speaker acoustic modeling for lstm-rnn based statistical parametric speech synthesis,” 2016.
[16] I. P. Association, I. P. A. Staff et al., Handbook of the International Phonetic Association: A guide to the use of the International Phonetic Alphabet. Cambridge University Press, 1999.
[17] I. Demirsahin, M. Jansche, and A. Gutkin, “A unified phonological representation of south asian languages for multilingual text-to-speech,” 2018.
[18] K. C. Sim and H. Li, “Context-sensitive probabilistic phone mapping model for cross-lingual speech recognition,” in Ninth Annual Conference of the International Speech Communication Association, 2008.
[19] K. C. Sim, “Discriminative product-of-expert acoustic mapping for cross-lingual phone recognition,” in 2009 IEEE Workshop on Automatic Speech Recognition & Understanding. IEEE, 2009, pp. 546–551.
[20] A. Graves, S. Fernández, F. Gomez, and J. Schmidhuber, “Connectionist temporal classification: labelling unsegmented sequence data with recurrent neural networks,” in Proceedings of the 23rd international conference on Machine learning. ACM, 2006, pp. 369–376.
[21] D. Griffin and J. Lim, “Signal estimation from modified short-time fourier transform,” IEEE Transactions on Acoustics, Speech, and Signal Processing, vol. 32, no. 2, pp. 236–243, 1984.
[22] K. Krishna, L. Lu, K. Gimpel, and K. Livescu, “A study of all-convolutional encoders for connectionist temporal classification,” in International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2018, pp. 5814–5818.
[23] W. Chan, N. Jaitly, Q. Le, and O. Vinyals, “Listen, attend and spell: A neural network for large vocabulary conversational speech recognition,” in International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2016, pp. 4960–4964.
[24] J. Kominek, T. Schultz, and A. W. Black, “Synthesizer voice quality of new languages calibrated with mean mel cepstral distortion,” in Spoken Languages Technologies for Under-Resourced Languages, 2008.
[25] K. Ito et al., “The lj speech dataset,” 2017.
[26] V. Panayotov, G. Chen, D. Povey, and S. Khudanpur, “Librispeech: an asr corpus based on public domain audio books,” in International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2015, pp. 5206–5210.
[27] M. A. I. Laboratories, “The m-ailabs speech dataset,” https://www.caito.de/2019/01/the-m-ailabs-speech-dataset/, 2019.
[28] T. Schultz, “Globalphone: a multilingual speech and text database developed at karlsruhe university,” in Seventh International Conference on Spoken Language Processing, 2002.
