A Fusion Adversarial Underwater Image Enhancement Network with a Public Test Dataset

Underwater image enhancement algorithms have attracted much attention in underwater vision tasks. However, these algorithms are mainly evaluated on different datasets and with different metrics. In this paper, we set up an effective and public underwater t…

Authors: Hanyu Li, Jingjing Li, Wei Wang

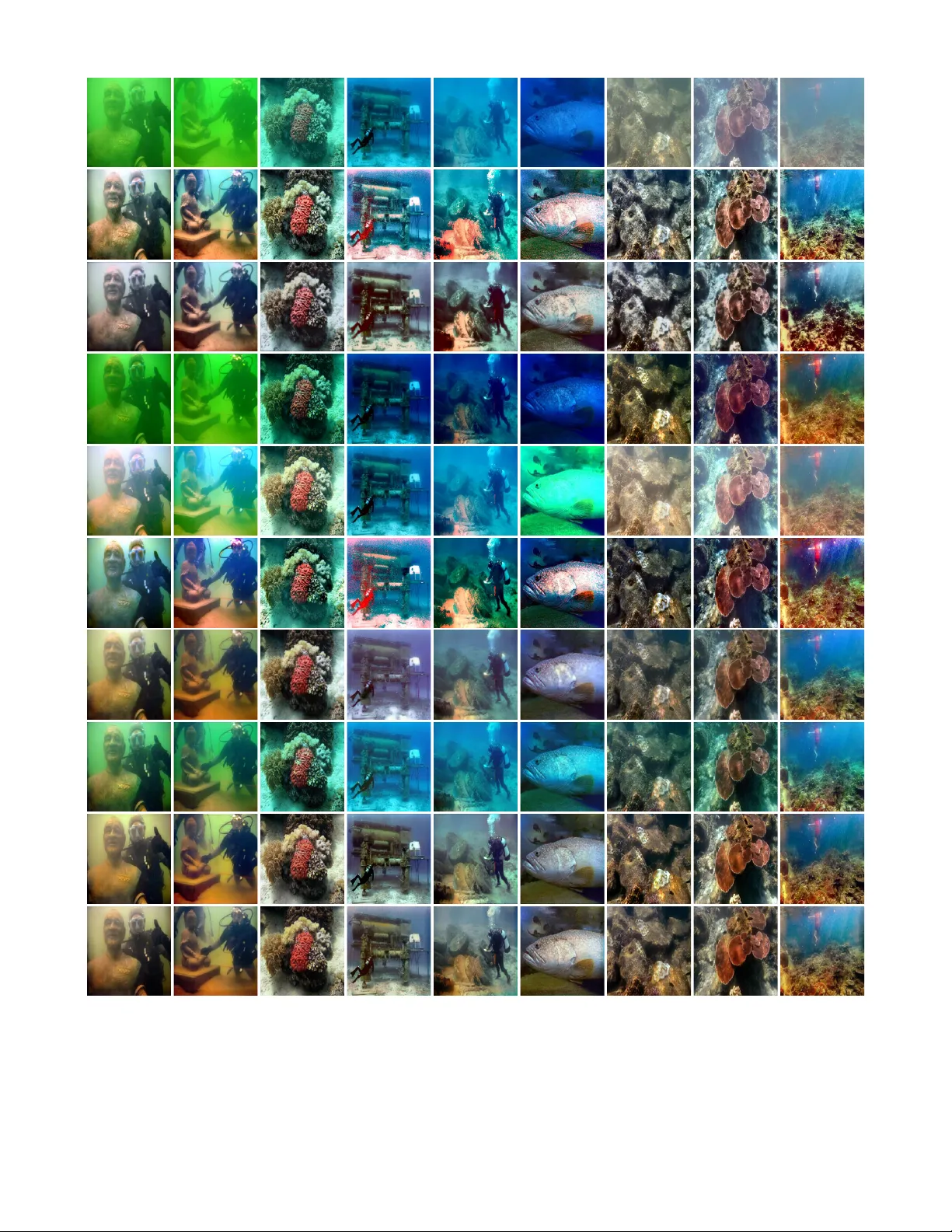

Abstract: Underwater image enhancement algorithms have attracted much attention in underwater vision tasks. However, these algorithms are mainly evaluated on different datasets and with different metrics. In this paper, we set up an effective and public underwater test dataset named U45, which covers the color casts, low contrast and haze-like effects of underwater degradation, and propose a fusion adversarial network for enhancing underwater images. Meanwhile, a well-designed adversarial loss including an $L_{gt}$ loss and an $L_{fe}$ loss is presented to focus on the image features of the ground truth and of the image enhanced by the fusion enhance method, respectively. The proposed network corrects color casts effectively and offers faster testing time with fewer parameters. Experimental results on the U45 dataset demonstrate that the proposed method achieves better or comparable performance relative to other state-of-the-art methods in both qualitative and quantitative evaluations. Moreover, an ablation study demonstrates the contribution of each component, and an application test further shows the effectiveness of the enhanced images.

Index Terms: Underwater image enhancement, generative adversarial network, fusion, underwater image test dataset.

I. INTRODUCTION

The quality of underwater optical imaging is of great significance to the exploration and utilization of the deep ocean [1]. However, raw underwater images seldom fulfill the requirements of low-level and high-level vision tasks because of the serious underwater degradation depicted in Fig. 1. Underwater images are rapidly degraded by two major factors. Due to the depth, light conditions, water type, and different light wavelengths, the color tones of underwater images are often distorted.
For example, in clean water, red light disappears first, at a water depth of about 5 m. As the depth increases, orange, yellow, and green light disappear in turn. Blue light has the shortest wavelength and travels the furthest in water [2]. In addition, both suspended particles and water affect the scene contrast and produce haze-like effects by absorbing and scattering light on its way to the camera lens [3].

Fig. 1: The simplified underwater optical imaging model.

Corresponding author: Hanyu Li (email: lihanyu1204@gmail.com). H. Li is with Nanjing University of Information Science and Technology, Nanjing, China. J. Li and W. Wang are engineers who graduated from Nanjing University of Information Science and Technology, Nanjing, China.

Existing underwater image enhancement methods that address this problem can be divided into three categories: non-model-based methods [4]–[6], model-based methods [2], [7]–[11] and deep learning-based methods [12]–[19]. The non-model-based methods focus on adjusting image pixel values to produce a subjectively and visually appealing image without modeling the underwater image formation process. Ancuti et al. [4] proposed a multi-scale fusion method that blends a white-balanced version and a filtered version of the input. The model-based methods recover underwater images by constructing a degradation model and then estimating the model parameters from prior assumptions. Peng et al. [10] presented an underwater depth estimation prior based on image blurriness and light absorption, which can be employed in the underwater optical imaging model to improve underwater image quality. Due to the lack of abundant training data, pixel-value adjustment and physical priors cannot perform well across various underwater scenes.
Deep learning [20] has achieved convincing success on low-level vision tasks such as image defogging [21], image deraining [22], and image deblurring [23], and some researchers have applied deep learning to underwater image processing. Li et al. [12] proposed a weakly supervised underwater color transfer model based on the cycle-consistent generative adversarial network (CycleGAN) [24] and a multi-term loss function. In contrast to that previous application, our model blends two inputs to correct color effectively and offers faster testing time with fewer parameters.

Fig. 2: Architecture of the generator network. "conv" denotes a convolution layer and "deconv" denotes a deconvolution layer. The red arrow represents the fusion enhance method, and "BN" represents batch normalization. In the layer labels, 3 × 3 denotes the convolution kernel size, 128 the number of convolution kernels, and 1 the convolution stride.

In this paper, we propose a novel fusion generative adversarial network (FGAN). The main contributions of this paper are summarized as follows:
• To the best of our knowledge, this is the first attempt to consider a multiple-input fusion GAN for the underwater image enhancement task. Besides, the simple and effective FGAN offers fast processing speed and fewer parameters without manually adjusting the image pixel values or designing prior assumptions.
• A well-designed objective function is leveraged to preserve image content, and spectral normalization is utilized to improve image quality. In addition, an ablation study shows the effect of each component in the proposed network.
• To evaluate different algorithms quantitatively, we set up an effective and public underwater test dataset (U45) that covers the color casts, low contrast and haze-like effects of underwater degradation. The enhanced images on the U45 dataset and the enhanced videos demonstrate the superiority of the proposed method in both qualitative and quantitative evaluations. Furthermore, the results enhanced by the proposed method could benefit low-level and high-level vision tasks, such as Canny edge detection and object detection.

II. METHODOLOGY

To address color casts, low contrast and haze-like effects, we combine multiple inputs with the generative adversarial network [25]. As shown in Fig. 2, the generator network takes two inputs in a fully convolutional network and combines two simple basic blocks. The $L_{gt}$ loss preserves image features of the ground truth $x$, and the $L_{fe}$ loss preserves image features of the enhanced image $x_{fe}$ produced by the fusion enhance method [4].

A. Network architecture

As discussed in the literature [18], the fusion enhance method [4] performs relatively well in most cases; thus we take the image enhanced by the fusion enhance method as another input of the network. In this paper, the outputs of the simple network fed by $x_{fe}$ are added to the outputs of the network fed by raw underwater images, which uses fewer computing resources than the element-wise matrix product employed by DUIENet [18]. Fig. 2 depicts the detailed structure of our basic block, which combines the inception architecture [26] with a shortcut connection [27] and uses different kernel sizes to detect feature maps at different scales. Each layer in the block uses convolutional kernels with stride 1 to facilitate the concatenation operation.
Besides, the last 1 × 1 convolution of the basic block reduces the output feature maps to the number of input feature maps of the basic block, which improves computational efficiency and facilitates residual learning. The generator network, which employs two basic blocks, achieves comparable performance without introducing too many blocks.

The discriminator network utilizes five convolutional layers with spectral normalization [28], similar to the 70 × 70 PatchGAN, which was first used in pix2pix [29] and later applied in CycleGAN [24]. Furthermore, spectral normalization is computationally light and easy to implement. In the ablation study, we notice that the discriminator with spectral normalization achieves a better objective quality score than the discriminator without it.

TABLE I: Underwater image quality evaluation of different enhancement methods on U45 dataset.

| Dataset | Metric | Raws | FE | RB | UDCP | UIBLA | DPATN | CycleGAN | WSCT | UGAN | FGAN |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Green | UCIQE | 0.5036 | 0.6444 | 0.6029 | 0.5896 | 0.5732 | 0.6369 | 0.5972 | 0.5717 | 0.6039 | 0.5935 |
| Green | UIQM | 1.4536 | 3.4962 | 4.7391 | 2.8791 | 1.9330 | 4.0728 | 4.0209 | 2.4742 | 4.7144 | 4.8362 |
| Green | UICM | -111.2208 | -46.5145 | -2.9019 | -71.3505 | -76.6133 | -53.4647 | -22.7221 | -82.3234 | -3.6953 | -0.0883 |
| Green | UIConM | 0.6789 | 0.7422 | 0.7600 | 0.7976 | 0.5733 | 0.9792 | 0.7285 | 0.7504 | 0.7557 | 0.7693 |
| Green | UISM | 7.3238 | 7.2951 | 7.1235 | 6.9063 | 6.9213 | 7.0420 | 6.9660 | 7.1552 | 7.1685 | 7.0718 |
| Blue | UCIQE | 0.4905 | 0.6568 | 0.6131 | 0.6002 | 0.5569 | 0.6693 | 0.5749 | 0.5663 | 0.6151 | 0.5885 |
| Blue | UIQM | 2.5669 | 4.2666 | 4.9896 | 4.3057 | 2.8483 | 4.3456 | 4.5665 | 3.3357 | 5.0442 | 5.2583 |
| Blue | UICM | -67.3196 | -30.7335 | -2.2822 | -25.1365 | -72.2416 | -18.4767 | -18.0013 | -62.9050 | -4.9056 | 1.7935 |
| Blue | UIConM | 0.6466 | 0.8275 | 0.8125 | 0.8272 | 0.7813 | 0.7650 | 0.8384 | 0.8338 | 0.8533 | 0.8689 |
| Blue | UISM | 7.2921 | 7.3641 | 7.2776 | 6.9665 | 7.0846 | 7.0846 | 7.0321 | 7.2082 | 7.2186 | 7.1148 |
| Haze-like | UCIQE | 0.4502 | 0.6336 | 0.6022 | 0.5719 | 0.5668 | 0.6473 | 0.5785 | 0.5844 | 0.6141 | 0.5925 |
| Haze-like | UIQM | 3.0212 | 4.5659 | 5.0887 | 4.4425 | 4.0316 | 5.2218 | 4.6104 | 4.0479 | 5.1675 | 5.2106 |
| Haze-like | UICM | -47.8476 | -21.6721 | -1.6179 | -22.6640 | -30.3830 | -1.0538 | -7.0986 | -32.7013 | 7.3280 | 8.7317 |
| Haze-like | UIConM | 0.6176 | 0.8509 | 0.8409 | 0.8197 | 0.7807 | 0.8658 | 0.7674 | 0.7984 | 0.7949 | 0.8042 |
| Haze-like | UISM | 7.3227 | 7.2289 | 7.2058 | 7.2836 | 7.1020 | 7.3007 | 6.9999 | 7.1645 | 7.1749 | 7.0750 |
| Total | UCIQE | 0.4814 | 0.6449 | 0.6061 | 0.5872 | 0.5656 | 0.6512 | 0.5835 | 0.5741 | 0.6110 | 0.5915 |
| Total | UIQM | 2.3472 | 4.1096 | 4.9391 | 3.8758 | 2.9376 | 4.5467 | 4.3993 | 3.2859 | 4.9754 | 5.1017 |
| Total | UICM | -75.4627 | -32.9734 | -2.2673 | -39.7170 | -59.7460 | -24.3317 | -15.9406 | -59.3099 | -0.4243 | 3.4790 |
| Total | UIConM | 0.6477 | 0.8069 | 0.8045 | 0.8148 | 0.7118 | 0.8700 | 0.7781 | 0.7942 | 0.8013 | 0.8141 |
| Total | UISM | 7.3129 | 7.2960 | 7.2023 | 7.0521 | 7.0359 | 7.1871 | 6.9993 | 7.1760 | 7.1874 | 7.0872 |

B. GAN objective function

The proposed loss function includes the RaGAN loss [30], the $L_{gt}$ loss and the $L_{fe}$ loss:

$$L_{FGAN} = L_D^{RaSGAN} + L_G^{RaSGAN} + \lambda_{gt} L_{gt}(G) + \lambda_{fe} L_{fe}(G) \tag{1}$$

The RaGAN loss can be expressed as:

$$L_D^{RaSGAN} = -\mathbb{E}_{x_r}[\log(\tilde{D}(x_r))] - \mathbb{E}_{x_f}[\log(1 - \tilde{D}(x_f))] \tag{2}$$

$$L_G^{RaSGAN} = -\mathbb{E}_{x_f}[\log(\tilde{D}(x_f))] - \mathbb{E}_{x_r}[\log(1 - \tilde{D}(x_r))] \tag{3}$$

where $\tilde{D}(x_r) = \mathrm{sigmoid}(C(x_r) - \mathbb{E}_{x_f} C(x_f))$ and $\tilde{D}(x_f) = \mathrm{sigmoid}(C(x_f) - \mathbb{E}_{x_r} C(x_r))$.
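As an illustration of Eqs. (2) and (3), the following is a minimal pure-Python sketch of the relativistic average losses for one batch, with the expectations approximated by batch means. The function name `ragan_losses`, the list-based inputs (raw discriminator outputs for real and generated images) and the small `eps` stabilizer are assumptions made for this example; they are not taken from the authors' TensorFlow implementation.

```python
import math


def sigmoid(z):
    """Logistic function for a single scalar score."""
    return 1.0 / (1.0 + math.exp(-z))


def mean(values):
    """Batch mean, used here in place of the expectation operator."""
    return sum(values) / len(values)


def ragan_losses(c_real, c_fake, eps=1e-12):
    """Return (discriminator loss, generator loss) following Eqs. (2)-(3).

    c_real: raw (non-transformed) discriminator outputs for real images.
    c_fake: raw discriminator outputs for generated images.
    eps:    hypothetical stabilizer that keeps log() away from zero.
    """
    mean_real = mean(c_real)
    mean_fake = mean(c_fake)
    # Relativistic outputs: each score is compared with the mean score
    # of the opposite set before the sigmoid is applied.
    d_real = [sigmoid(c - mean_fake) for c in c_real]
    d_fake = [sigmoid(c - mean_real) for c in c_fake]
    loss_d = (-mean([math.log(d + eps) for d in d_real])
              - mean([math.log(1.0 - d + eps) for d in d_fake]))
    loss_g = (-mean([math.log(d + eps) for d in d_fake])
              - mean([math.log(1.0 - d + eps) for d in d_real]))
    return loss_d, loss_g


if __name__ == "__main__":
    # Real images scored clearly higher than generated ones.
    print(ragan_losses([3.0, 2.0], [-2.0, -3.0]))
```

In this sketch the two losses are mirror images of each other: when real images are scored well above generated ones, the discriminator loss is small and the generator loss is large, and when both sets receive identical scores each loss equals 2 ln 2.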
$C(x)$ denotes the non-transformed discriminator output. The relativistic discriminator aims to predict the probability that a real image $x_r$ is relatively more realistic than a fake one $x_f$ [30]. $\lambda_{gt}$ and $\lambda_{fe}$ are the weights of the $L_{gt}$ loss and the $L_{fe}$ loss, respectively. Considering that the proposed network has two inputs, we use both the $L_{gt}$ loss and the $L_{fe}$ loss:

$$L_{gt}(G) = \mathbb{E}[\,\|x - G(y)\|_1\,] \tag{4}$$

$$L_{fe}(G) = \mathbb{E}[\,\|x_{fe} - G(y)\|_1\,] \tag{5}$$

where $x_{fe}$ represents the image enhanced by the fusion enhance method, $x$ denotes the ground truth, and $G(y)$ denotes the result produced by the generator.

III. EXPERIMENTS

In this section, we first discuss the detailed setup of the proposed method and the U45 test dataset. We then show the performance of the network by comparing it with other state-of-the-art methods tested on the U45 dataset. Finally, we conduct an ablation study to demonstrate the effect of each component and carry out application tests on low-level and high-level vision tasks to further demonstrate the effectiveness of the proposed method.

A. Setup

1) Data Set: Our proposed method is trained in a paired setting using the 6128 image pairs from the underwater GAN (UGAN) [14], where one set contains underwater images with no distortion and the other set contains underwater images with distortion. Furthermore, we gather 240 real underwater images from the related papers [3]–[5], [9], [14], [18], ImageNet [31], SUN [32], and the sea bed close to Zhangzi Island in the Yellow Sea, China. Because the underwater enhancement task does not have a public and effective test dataset like Set5 [33] or Set14 [34] in the image super-resolution task, we carefully select 45 real underwater images, named U45, from the above-mentioned images.
U45 is sorted into three subsets, the green, blue, and haze-like categories, which correspond to the color casts, low contrast and haze-like effects of underwater degradation. We do not set up a separate low-contrast subset because the three subsets already contain various low-contrast underwater images.

2) Hyperparameter Setting: In our training process, the training and test images have dimensions 256 × 256 × 3, and we set $\lambda_{gt} = 10$ and $\lambda_{fe} = 0.5$. We use Leaky ReLU [35] with a slope of 0.2 and the Adam algorithm [36] with a learning rate of 0.0001. The discriminator is updated 5 times per generator update. The batch size is set to 16 due to our limited GPU memory. The entire network was trained on the TensorFlow [37] backend for 60 epochs.

3) Compared Methods: The competing methods include Fusion Enhance (FE) [4], Retinex-Based (RB) [5], UDCP [8], UIBLA [10], DPATN [11], CycleGAN [24], Weakly Supervised Color Transfer (WSCT) [12], and UGAN [14]. For fair comparison, all evaluations are carried out on 256 × 256 images, and the deep learning-based methods are trained on the same training data from the literature [14].

B. Subjective and objective assessment

The enhanced results of the different methods tested on U45 are shown in Fig. 3. The complete results and scores, together with U45, are available at https://github.com/IPNUISTlegal/underwater-test-dataset-U45-.

Fig. 3: Subjective comparisons on U45. From top to bottom: Raws, FE, RB, UDCP, UIBLA, DPATN, CycleGAN, WSCT, UGAN, and FGAN. Best viewed with zoom-in.

FE corrects both green and haze-like underwater images well. However, the inaccurate color correction algorithm used by the fusion enhance method causes an obvious reddish color shift in bluish scenes. RB fails to handle image brightness well in most underwater scenes because the same color correction is applied to all three RGB channels, which is inappropriate for underwater scenes.
We notice that UDCP aggravates the blue-green effect but effectively reduces haze effects. The results of UIBLA are unnatural, especially in the blue scene, because the prior of the background light and the medium transmission estimate is suboptimal. The prior-aggregated DPATN is a better choice for haze-like images than for green and blue images. For example, blue underwater images enhanced by DPATN exhibit unpleasant artifacts and color casts. In this paper, CycleGAN and WSCT are trained on image pairs rather than in the weakly supervised manner. WSCT tends to generate inauthentic results in some underwater scenarios because it retains the cycle consistency loss, which is more suitable for image-to-image translation, and abandons the L1 loss that gives results some sense of the ground truth; as a result, the WSCT results are visually less appealing than those of CycleGAN. A general visual inspection shows that the proposed method corrects color distortion and preserves image details better than UGAN in green and blue scenes. For example, the coral in the green scene appears bright red, and the fish in the blue scene appears in a visually appealing natural color. In addition, the raw underwater video and the video enhanced by the proposed method are presented at https://youtu.be/JZTFnBHNGr0.

TABLE II: Testing time and parameters of generator of different enhancement methods.

| | FE | RB | UDCP | UIBLA | DPATN | CycleGAN | WSCT | UGAN | FGAN |
|---|---|---|---|---|---|---|---|---|---|
| Testing time (s) | 0.1600 | 0.2776 | 3.6275 | 6.2115 | 1.1068 | 0.1460 | 0.1960 | 0.0297 | 0.0286 |
| Parameters | - | - | - | - | - | 2.85M | 2.85M | 38.67M | 1.11M |

To evaluate the underwater images, we choose the underwater color image quality evaluation (UCIQE) [38] and the underwater image quality measure (UIQM) [39]. UCIQE utilizes a linear combination of chroma, saturation and contrast to quantify the nonuniform color cast, blurring, and low contrast, respectively.
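TABLE I lists UIQM [39] alongside UICM, UISM and UIConM. As a sanity check on how these scores relate, the sketch below recombines the components of the "Total" rows linearly. The weights 0.0282, 0.2953 and 3.5753 are an assumption taken from the commonly used UIQM formulation and are not stated in this paper; the helper names `uiqm` and `max_abs_deviation` are hypothetical.

```python
# Linear weights assumed from the commonly cited UIQM formulation;
# they are NOT given in this paper.
W_UICM, W_UISM, W_UICONM = 0.0282, 0.2953, 3.5753


def uiqm(uicm, uism, uiconm):
    """Combine the three component measures into a single UIQM score."""
    return W_UICM * uicm + W_UISM * uism + W_UICONM * uiconm


# "Total" rows copied from TABLE I: (UICM, UISM, UIConM, reported UIQM).
TABLE_I_TOTAL = {
    "Raws": (-75.4627, 7.3129, 0.6477, 2.3472),
    "FE": (-32.9734, 7.2960, 0.8069, 4.1096),
    "UGAN": (-0.4243, 7.1874, 0.8013, 4.9754),
    "FGAN": (3.4790, 7.0872, 0.8141, 5.1017),
}


def max_abs_deviation(rows):
    """Largest gap between the recomputed and the reported UIQM."""
    return max(abs(uiqm(c, s, n) - reported)
               for c, s, n, reported in rows.values())


if __name__ == "__main__":
    for name, (c, s, n, reported) in TABLE_I_TOTAL.items():
        print(f"{name}: recomputed {uiqm(c, s, n):.4f}, reported {reported:.4f}")
```

Under these assumed weights, the recomputed totals agree with the reported UIQM values to within rounding of the tabulated components. The check also makes visible how strongly the large negative UICM of the raw images lowers their UIQM.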
UIQM comprises three properties of underwater images: the underwater image colorfulness measure (UICM), the underwater image sharpness measure (UISM), and the underwater image contrast measure (UIConM). Higher values of UCIQE and UIQM denote better image quality.

TABLE I gives the quantitative scores of the compared methods averaged on the green underwater images, the blue underwater images, the haze-like underwater images, and the entire U45. The best and second-best results of UCIQE and UIQM are denoted by red font and blue font, respectively. FE yields inconsistent results under the UCIQE and UIQM assessments and ranks low under UIQM because its inaccurate color correction algorithm decreases the value of UICM. DPATN, which aggregates both prior (i.e., domain knowledge) and data (i.e., haze distribution) information, boosts the UIConM scores of all categories and the UCIQE scores of haze-like images, but these results are visually relatively poor. The proposed method has advantages in correcting color casts and stably obtains high UIQM values in all subsets and in U45. At the same time, FGAN obtains better subjective perception at the cost of a partial decrease in UCIQE. Last but not least, the novel dark channel prior loss [13] can remove the distinctive difference between a hazy image and its clear version in the dark channel. However, tuning its hyperparameters still requires much time to improve the performance. Thus, we leave this part to our future work.

We note that there are discrepancies between the subjective and objective assessments of the same underwater scene. Moreover, UCIQE and UIQM may yield inconsistent assessments on the same dataset, as has been found in the literature [3]. Recently, Wang et al.
[40] proposed an imaging-inspired no-reference underwater color image quality assessment metric, dubbed CCF, which shows limitations in balancing human visual perception and objective assessment because CCF ranks the visually poor UDCP results extremely high. Compared with state-of-the-art opinion-aware blind image quality assessment methods, the IL-NIQE method [41] has been shown to deliver superior quality-prediction performance without the need for any distorted sample images or subjective quality scores for training. However, it is hard to find the high-quality underwater images needed to learn the pristine MVG model on which IL-NIQE is built. Besides, researchers have also applied deep learning to image quality assessment [42]–[44], which obtains remarkable performance and could be applied to underwater scenes in future work. In summary, the evaluation of underwater image quality needs a more appropriate and effective metric related to human visual perception.

C. Testing time and Parameters

TABLE II compares the average testing time of different methods on the same computer with an Intel(R) i5-8400 CPU and 16 GB RAM. Even though it combines two inputs, the proposed FGAN is very fast, processing a 256 × 256 image in 0.0286 s. At the same time, the generator of FGAN contains fewer parameters than the generators of the other deep learning methods. UGAN employs many convolution layers with 512 kernels, resulting in too many network parameters. Besides, the network of WSCT is based on the CycleGAN with 9 blocks for 256 × 256 training images, so the two have exactly the same number of parameters.

D. Ablation Study and Application tests

The ablation study aims to reveal the effect of each component. We test the following variants on the U45 dataset.
1) FGAN has different $\lambda_{fe}$ values, 2) FGAN removes spectral normalization (-SN), 3) FGAN replaces all basic blocks with four layers of 3 × 3 kernel size, 256 convolution kernels, and stride 1 (-BB). As shown in Fig. 4, the $L_{fe}$ loss converges more rapidly as the weight $\lambda_{fe}$ increases. At the same time, the total G loss decreases as the weight $\lambda_{fe}$ decreases. As depicted in Fig. 5, when $\lambda_{fe}$ increases, the $L_{fe}$ loss plays a more important role in the training phase and the enhanced result is trained to be close to that of the fusion enhance method. Fig. 6 depicts the average UCIQE and UIQM of the different variants on the U45 test dataset. For better subjective and objective assessment, the proposed network takes $\lambda_{fe} = 0.5$ as the final version. Furthermore, we observe that both the basic blocks and spectral normalization improve the performance.

Fig. 4: Plots of the $L_{fe}$ loss (FE_loss) and the total G loss (G_loss) against training iterations. Blue lines denote $\lambda_{fe} = 0.5$, red lines denote $\lambda_{fe} = 5$, and green lines denote $\lambda_{fe} = 10$.

Fig. 5: The enhanced results of different variants and FE: (a) $\lambda_{fe} = 0$, (b) $\lambda_{fe} = 0.5$, (c) $\lambda_{fe} = 5$, (d) $\lambda_{fe} = 10$, (e) FE.

Fig. 6: Plots of UCIQE and UIQM for the different variants. Numbers 1 to 6 are $\lambda_{fe} = 0$, $\lambda_{fe} = 0.5$, $\lambda_{fe} = 5$, $\lambda_{fe} = 10$, -SN and -BB, respectively.

Fig. 7: Application tests. From left to right and top to bottom: raw image, Canny edge detection of raw image, object detection of raw image, enhanced image, Canny edge detection of enhanced image and object detection of enhanced image.

Some application tests, including Canny edge detection [45] and object detection, are employed to further demonstrate the effectiveness of the proposed method. As shown in Fig.
7, the pre-trained YOLO-V3 model [46] fails to capture objects in the raw image. Detection performance on the enhanced image improves obviously, for objects such as the sculpture and the diver, and more edge features are rendered.

IV. CONCLUSION

This paper sets up an effective and public U45 test dataset that covers the color casts, low contrast and haze-like effects of underwater degradation, and presents a fusion adversarial network for underwater image enhancement. The proposed network, which combines basic blocks with a multi-term loss function, corrects color effectively and produces visually pleasing enhanced results; it is the first attempt to blend two inputs in a generative adversarial network for underwater tasks. Numerous experiments on U45 and an ablation study are conducted to demonstrate the superiority of the proposed method. Besides, the low-level and high-level vision tasks further demonstrate the effectiveness of the proposed method.

REFERENCES

[1] J. S. Jaffe, "Underwater optical imaging: the past, the present, and the prospects," IEEE Journal of Oceanic Engineering, vol. 40, no. 3, pp. 683–700, 2014.
[2] D. Berman, T. Treibitz, and S. Avidan, "Diving into haze-lines: Color restoration of underwater images," in Proc. British Machine Vision Conference (BMVC), vol. 1, no. 2, 2017.
[3] R. Liu, M. Hou, X. Fan, and Z. Luo, "Real-world underwater enhancement: Challenging, benchmark and efficient solutions," arXiv preprint arXiv:1901.05320, 2019.
[4] C. Ancuti, C. O. Ancuti, T. Haber, and P. Bekaert, "Enhancing underwater images and videos by fusion," in 2012 IEEE Conference on Computer Vision and Pattern Recognition. IEEE, 2012, pp. 81–88.
[5] X. Fu, P. Zhuang, Y. Huang, Y. Liao, X.-P. Zhang, and X. Ding, "A retinex-based enhancing approach for single underwater image," in 2014 IEEE International Conference on Image Processing (ICIP). IEEE, 2014, pp. 4572–4576.
[6] X. Fu, Z. Fan, M. Ling, Y. Huang, and X. Ding, "Two-step approach for single underwater image enhancement," in 2017 International Symposium on Intelligent Signal Processing and Communication Systems (ISPACS). IEEE, 2017, pp. 789–794.
[7] C.-Y. Li, J.-C. Guo, R.-M. Cong, Y.-W. Pang, and B. Wang, "Underwater image enhancement by dehazing with minimum information loss and histogram distribution prior," IEEE Transactions on Image Processing, vol. 25, no. 12, pp. 5664–5677, 2016.
[8] P. L. Drews, E. R. Nascimento, S. S. Botelho, and M. F. M. Campos, "Underwater depth estimation and image restoration based on single images," IEEE Computer Graphics and Applications, vol. 36, no. 2, pp. 24–35, 2016.
[9] A. Galdran, D. Pardo, A. Picón, and A. Alvarez-Gila, "Automatic red-channel underwater image restoration," Journal of Visual Communication and Image Representation, vol. 26, pp. 132–145, 2015.
[10] Y.-T. Peng and P. C. Cosman, "Underwater image restoration based on image blurriness and light absorption," IEEE Transactions on Image Processing, vol. 26, no. 4, pp. 1579–1594, 2017.
[11] R. Liu, X. Fan, M. Hou, Z. Jiang, Z. Luo, and L. Zhang, "Learning aggregated transmission propagation networks for haze removal and beyond," IEEE Transactions on Neural Networks and Learning Systems, no. 99, pp. 1–14, 2018.
[12] C. Li, J. Guo, and C. Guo, "Emerging from water: Underwater image color correction based on weakly supervised color transfer," IEEE Signal Processing Letters, vol. 25, no. 3, pp. 323–327, 2018.
[13] X. Chen, J. Yu, S. Kong, Z. Wu, X. Fang, and L. Wen, "Towards real-time advancement of underwater visual quality with GAN," IEEE Transactions on Industrial Electronics, 2019.
[14] C. Fabbri, M. J. Islam, and J. Sattar, "Enhancing underwater imagery using generative adversarial networks," in 2018 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 2018, pp. 7159–7165.
[15] M. Hou, R. Liu, X. Fan, and Z. Luo, "Joint residual learning for underwater image enhancement," in 2018 25th IEEE International Conference on Image Processing (ICIP). IEEE, 2018, pp. 4043–4047.
[16] S. Anwar, C. Li, and F. Porikli, "Deep underwater image enhancement," arXiv preprint arXiv:1807.03528, 2018.
[17] X. Yu, Y. Qu, and M. Hong, "Underwater-GAN: Underwater image restoration via conditional generative adversarial network," in International Conference on Pattern Recognition. Springer, 2018, pp. 66–75.
[18] C. Li, C. Guo, W. Ren, R. Cong, J. Hou, S. Kwong, and D. Tao, "An underwater image enhancement benchmark dataset and beyond," arXiv preprint arXiv:1901.05495, 2019.
[19] Y. Hu, K. Wang, X. Zhao, H. Wang, and Y. Li, "Underwater image restoration based on convolutional neural network," in Asian Conference on Machine Learning, 2018, pp. 296–311.
[20] Y. LeCun, Y. Bengio, and G. Hinton, "Deep learning," Nature, vol. 521, no. 7553, p. 436, 2015.
[21] B. Cai, X. Xu, K. Jia, C. Qing, and D. Tao, "DehazeNet: An end-to-end system for single image haze removal," IEEE Transactions on Image Processing, vol. 25, no. 11, pp. 5187–5198, 2016.
[22] Z. Fan, H. Wu, X. Fu, Y. Huang, and X. Ding, "Residual-guide network for single image deraining," in 2018 ACM Multimedia Conference on Multimedia Conference. ACM, 2018, pp. 1751–1759.
[23] O. Kupyn, V. Budzan, M. Mykhailych, D. Mishkin, and J. Matas, "DeblurGAN: Blind motion deblurring using conditional adversarial networks," in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018, pp. 8183–8192.
[24] J.-Y. Zhu, T. Park, P. Isola, and A. A. Efros, "Unpaired image-to-image translation using cycle-consistent adversarial networks," in Proceedings of the IEEE International Conference on Computer Vision, 2017, pp. 2223–2232.
[25] I. Goodfellow, J. Pouget-Abadie, M. Mirza, B. Xu, D. Warde-Farley, S. Ozair, A. Courville, and Y. Bengio, "Generative adversarial nets," in Advances in Neural Information Processing Systems, 2014, pp. 2672–2680.
[26] C. Szegedy, W. Liu, Y. Jia, P. Sermanet, S. Reed, D. Anguelov, D. Erhan, V. Vanhoucke, and A. Rabinovich, "Going deeper with convolutions," in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2015, pp. 1–9.
[27] K. He, X. Zhang, S. Ren, and J. Sun, "Deep residual learning for image recognition," in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp. 770–778.
[28] T. Miyato, T. Kataoka, M. Koyama, and Y. Yoshida, "Spectral normalization for generative adversarial networks," arXiv preprint arXiv:1802.05957, 2018.
[29] P. Isola, J.-Y. Zhu, T. Zhou, and A. A. Efros, "Image-to-image translation with conditional adversarial networks," in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017, pp. 1125–1134.
[30] A. Jolicoeur-Martineau, "The relativistic discriminator: a key element missing from standard GAN," arXiv preprint, 2018.
[31] O. Russakovsky, J. Deng, H. Su, J. Krause, S. Satheesh, S. Ma, Z. Huang, A. Karpathy, A. Khosla, M. Bernstein et al., "ImageNet large scale visual recognition challenge," International Journal of Computer Vision, vol. 115, no. 3, pp. 211–252, 2015.
[32] J. Xiao, J. Hays, K. A. Ehinger, A. Oliva, and A. Torralba, "SUN database: Large-scale scene recognition from abbey to zoo," in 2010 IEEE Computer Society Conference on Computer Vision and Pattern Recognition. IEEE, 2010, pp. 3485–3492.
[33] M. Bevilacqua, A. Roumy, C. Guillemot, and M. L. Alberi-Morel, "Low-complexity single-image super-resolution based on nonnegative neighbor embedding," 2012.
[34] R. Zeyde, M. Elad, and M. Protter, "On single image scale-up using sparse-representations," in International Conference on Curves and Surfaces. Springer, 2010, pp. 711–730.
[35] A. L. Maas, A. Y. Hannun, and A. Y. Ng, "Rectifier nonlinearities improve neural network acoustic models," in Proc. ICML, vol. 30, no. 1, 2013, p. 3.
[36] D. P. Kingma and J. Ba, "Adam: A method for stochastic optimization," arXiv preprint arXiv:1412.6980, 2014.
[37] M. Abadi, P. Barham, J. Chen, Z. Chen, A. Davis, J. Dean, M. Devin, S. Ghemawat, G. Irving, M. Isard et al., "TensorFlow: A system for large-scale machine learning," in 12th USENIX Symposium on Operating Systems Design and Implementation (OSDI 16), 2016, pp. 265–283.
[38] M. Yang and A. Sowmya, "An underwater color image quality evaluation metric," IEEE Transactions on Image Processing, vol. 24, no. 12, pp. 6062–6071, 2015.
[39] K. Panetta, C. Gao, and S. Agaian, "Human-visual-system-inspired underwater image quality measures," IEEE Journal of Oceanic Engineering, vol. 41, no. 3, pp. 541–551, 2015.
[40] Y. Wang, N. Li, Z. Li, Z. Gu, H. Zheng, B. Zheng, and M. Sun, "An imaging-inspired no-reference underwater color image quality assessment metric," Computers & Electrical Engineering, vol. 70, pp. 904–913, 2018.
[41] L. Zhang, L. Zhang, and A. C. Bovik, "A feature-enriched completely blind image quality evaluator," IEEE Transactions on Image Processing, vol. 24, no. 8, pp. 2579–2591, 2015.
[42] K.-Y. Lin and G. Wang, "Hallucinated-IQA: No-reference image quality assessment via adversarial learning," in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018, pp. 732–741.
[43] H. Ren, D. Chen, and Y. Wang, "RAN4IQA: Restorative adversarial nets for no-reference image quality assessment," in Thirty-Second AAAI Conference on Artificial Intelligence, 2018.
[44] H.-T. Lim, H. G. Kim, and Y. M. Ra, "VR IQA NET: Deep virtual reality image quality assessment using adversarial learning," in 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2018, pp. 6737–6741.
[45] J. Canny, "A computational approach to edge detection," in Readings in Computer Vision. Elsevier, 1987, pp. 184–203.
[46] J. Redmon and A. Farhadi, "YOLOv3: An incremental improvement," arXiv preprint arXiv:1804.02767, 2018.
