Asymptotic near-efficiency of the Gibbs-energy (GE) and empirical-variance estimating functions for fitting Matérn models -- II: Accounting for measurement errors via conditional GE mean

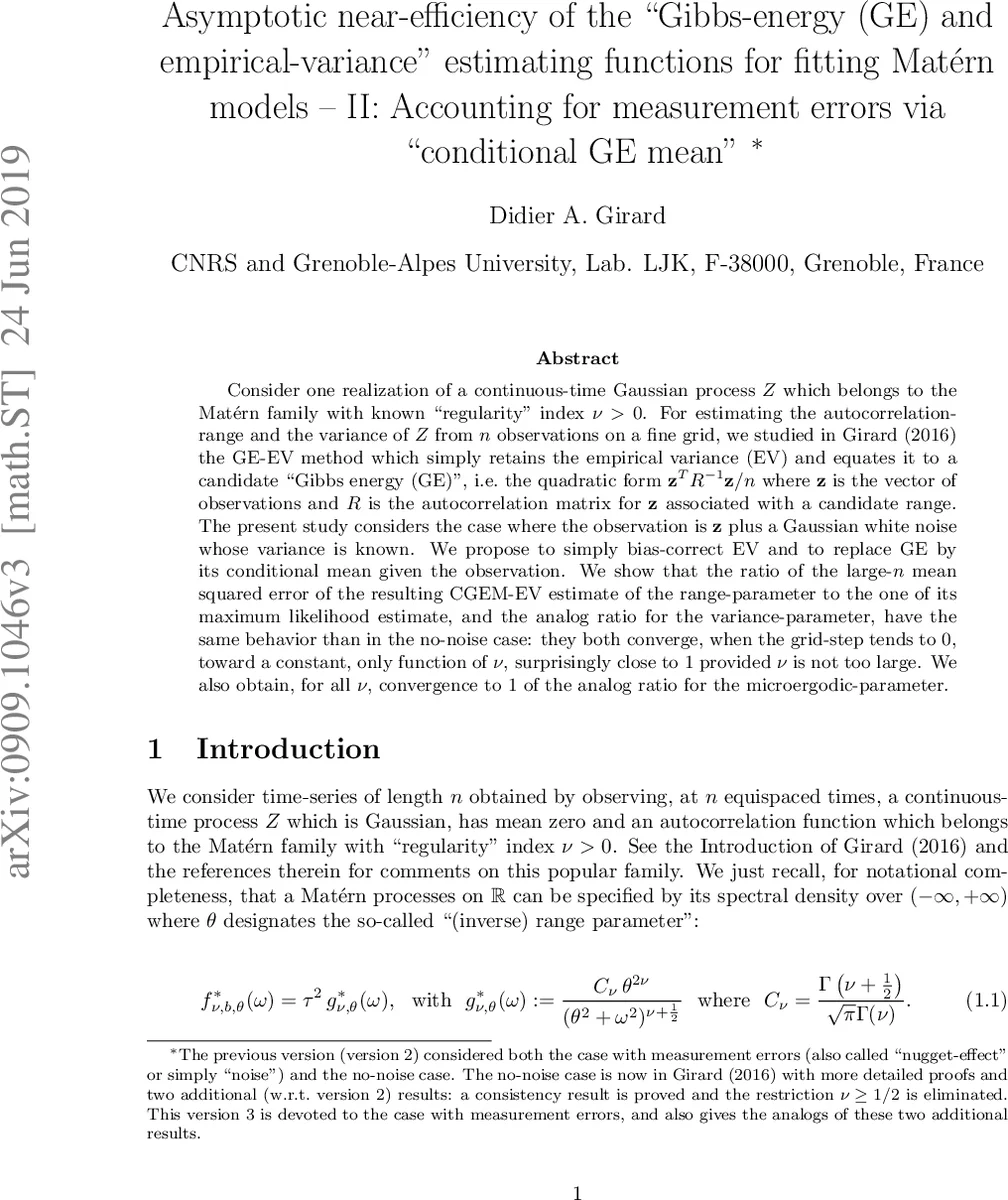

Consider one realization of a continuous-time Gaussian process $Z$ which belongs to the Matérn family with known "regularity" index $\nu >0$. For estimating the autocorrelation-range and the variance of $Z$ from $n$ observations on a fine grid, we studied in Girard (2016) the GE-EV method which simply retains the empirical variance (EV) and equates it to a candidate "Gibbs energy (GE)", i.e. the quadratic form ${\bf z}^T R^{-1} {\bf z}/n$ where ${\bf z}$ is the vector of observations and $R$ is the autocorrelation matrix for ${\bf z}$ associated with a candidate range. The present study considers the case where the observation is ${\bf z}$ plus a Gaussian white noise whose variance is known. We propose to simply bias-correct EV and to replace GE by its conditional mean given the observation. We show that the ratio of the large-$n$ mean squared error of the resulting CGEM-EV estimate of the range-parameter to that of its maximum likelihood estimate, and the analogous ratio for the variance-parameter, have the same behavior as in the no-noise case: they both converge, when the grid-step tends to $0$, toward a constant which is a function of $\nu$ only and is surprisingly close to $1$ provided $\nu$ is not too large. We also obtain, for all $\nu$, convergence to $1$ of the analogous ratio for the microergodic-parameter.

💡 Research Summary

This paper addresses the problem of estimating the range (θ) and variance (τ²) parameters of a continuous‑time Gaussian process Z that follows a Matérn covariance structure, when the observations are contaminated by additive Gaussian white noise (the so‑called nugget effect) of known variance σ_N². The smoothness parameter ν is assumed to be known. In the noise‑free setting, Girard (2016) introduced the GE‑EV method, which equates the empirical variance (EV) to a candidate Gibbs energy (GE) defined as the quadratic form zᵀR_θ⁻¹z/n, where R_θ is the autocorrelation matrix for a given range. The present work extends this idea to the noisy case by proposing the CGEM‑EV (Conditional Gibbs‑Energy Mean + Empirical Variance) procedure.

The CGEM‑EV algorithm proceeds in two steps. First, the empirical variance of the observed vector y is bias‑corrected: τ̂²_EV|σ_N = (1/n) yᵀy – σ_N², which yields the signal‑to‑noise ratio estimate b̂ = τ̂²_EV|σ_N / σ_N². Second, for a candidate pair (b,θ), a “signal extraction” matrix A_{b,θ} = bR_θ(I_n+bR_θ)⁻¹ is defined, and the conditional Gibbs energy mean is CGEM(b,θ) = (1/n) yᵀA_{b,θ}R_θ⁻¹A_{b,θ}y + (bσ_N²/n) tr(I_n – A_{b,θ}). When τ² = bσ_N², the signal z given y is Gaussian with mean A_{b,θ}y and covariance σ_N²A_{b,θ}, so this quantity is the conditional expectation of the original Gibbs energy zᵀR_θ⁻¹z/n given the noisy data. The range estimator θ̂_GEV|σ_N is obtained by solving the estimating equation CGEM(b̂,θ) = b̂σ_N² for θ (the smallest positive root is chosen when multiple solutions exist).
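The two steps above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the paper's implementation: it fixes ν = 1/2 (exponential autocorrelation exp(−θ|t_i − t_j|), with θ acting as an inverse range), it uses the identity A R⁻¹ A = b²(I_n+bR)⁻¹R(I_n+bR)⁻¹ so that R is never inverted, and it locates the smallest root by a grid scan followed by plain bisection. The function names `exp_autocorr`, `cgem` and `cgem_ev`, the simulation settings and the θ grid are all hypothetical choices made for this example.

```python
import numpy as np

def exp_autocorr(t, theta):
    """Matérn autocorrelation for nu = 1/2 (assumed here): exp(-theta * |t_i - t_j|)."""
    return np.exp(-theta * np.abs(t[:, None] - t[None, :]))

def cgem(y, R, b, sigma_n2):
    """Conditional mean of z^T R^{-1} z / n given y = z + noise, with tau^2 = b * sigma_n2."""
    n = y.size
    M = np.eye(n) + b * R
    w = np.linalg.solve(M, y)                  # w = (I + bR)^{-1} y
    quad = b**2 * (w @ R @ w)                  # equals y^T A R^{-1} A y
    trace_term = np.trace(np.linalg.inv(M))    # tr(I - A) = tr((I + bR)^{-1})
    return (quad + b * sigma_n2 * trace_term) / n

def cgem_ev(y, t, sigma_n2, theta_grid):
    """Return (tau2_hat, theta_hat); theta_hat is None if no root is bracketed on the grid."""
    tau2_hat = y @ y / y.size - sigma_n2       # bias-corrected empirical variance
    if tau2_hat <= 0:
        return tau2_hat, None
    b_hat = tau2_hat / sigma_n2
    f = lambda th: cgem(y, exp_autocorr(t, th), b_hat, sigma_n2) - tau2_hat
    vals = [f(th) for th in theta_grid]
    for lo, hi, f_lo, f_hi in zip(theta_grid[:-1], theta_grid[1:], vals[:-1], vals[1:]):
        if f_lo * f_hi <= 0:                   # first bracket = smallest root on the grid
            for _ in range(60):                # plain bisection inside the bracket
                mid = 0.5 * (lo + hi)
                f_mid = f(mid)
                if f_lo * f_mid <= 0:
                    hi = mid
                else:
                    lo, f_lo = mid, f_mid
            return tau2_hat, 0.5 * (lo + hi)
    return tau2_hat, None

# Usage on simulated data (all values are illustrative).
rng = np.random.default_rng(0)
n, delta, theta0, tau2, sigma_n2 = 400, 0.05, 1.0, 2.0, 0.5
t = delta * np.arange(n)
L = np.linalg.cholesky(tau2 * exp_autocorr(t, theta0))
y = L @ rng.standard_normal(n) + np.sqrt(sigma_n2) * rng.standard_normal(n)
print(cgem_ev(y, t, sigma_n2, np.geomspace(0.05, 20.0, 30)))
```

With a single realization the estimates can sit far from the simulated values; the asymptotic statements in the summary concern the large‑n, small‑δ regime.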

The theoretical contribution consists of several asymptotic results under a “dense‑grid” regime where the sampling interval δ→0 while the number of observations n→∞. Lemmas 2.1–2.3 provide small‑δ expansions for the spectral density of the noisy series and for the filter a_{δ,b,θ} that appears in the quadratic forms. Theorem 3.1 establishes three key convergences: (i) the normalized estimating function CGEM(b,θ)–bσ_N² converges in probability to a deterministic limit φ(δ,b,θ,b₀,θ₀); (ii) as δ→0 this limit simplifies to 2ν(2ν+1)⁻¹ (b₀θ₀^{2ν})/(bθ^{2ν}); (iii) the derivative ∂_θ CGEM(b,θ) converges to a strictly negative value for sufficiently small δ, guaranteeing local well‑posedness of the estimating equation. Theorem 3.3, using the general theory of estimating equations (Kessler et al., 2012), shows that if the signal‑to‑noise estimate b̂ derived from the bias‑corrected empirical variance is consistent for b₀, then the CGEM‑EV range estimator is consistent for θ₀.

Efficiency is assessed by comparing the asymptotic mean‑squared error (MSE) of the CGEM‑EV estimators with that of the maximum‑likelihood estimators (MLE). As the grid step tends to 0, the ratios MSE(θ̂_CGEM)/MSE(θ̂_MLE) and MSE(τ̂²_CGEM)/MSE(τ̂²_MLE) converge to constants c_θ(ν) and c_τ(ν) that depend only on the smoothness ν. For ν in the practically relevant range (0.5 ≤ ν ≤ 3), these constants lie between 1 and about 1.33, meaning that the MSE of CGEM‑EV exceeds that of MLE by at most about 33 % and is often much closer to it. Remarkably, for the microergodic parameter c₀=τ²θ^{2ν}, the analogous ratio converges to 1 for any ν>0, indicating that CGEM‑EV attains the same asymptotic precision as MLE for this quantity.

From a computational standpoint, CGEM‑EV avoids the repeated evaluation of log‑determinants and matrix inverses required by MLE. The estimating equation only involves the matrix A_{b,θ}, which can be applied by solving a linear system with matrix (I_n+bR_θ); the condition number of this matrix, rather than that of R_θ itself, governs numerical stability. Adding a small nugget (even when the true noise variance is zero) improves conditioning, a well‑known practice in kriging and spatial statistics.
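The conditioning remark can be checked numerically with a short sketch. It assumes a Matérn autocorrelation with ν = 3/2, written as (1 + θd)·exp(−θd) with θ an inverse‑range parameter, and arbitrary illustrative values for n, δ, θ and b; the helper name `matern32_autocorr` is hypothetical. Since the eigenvalues of I_n+bR_θ are 1 + bλ for each eigenvalue λ of R_θ, its condition number is always smaller than that of R_θ whenever R_θ is not a multiple of the identity.

```python
import numpy as np

def matern32_autocorr(t, theta):
    """Matérn autocorrelation for nu = 3/2 (assumed): (1 + theta*d) * exp(-theta*d)."""
    d = theta * np.abs(t[:, None] - t[None, :])
    return (1.0 + d) * np.exp(-d)

n, delta, theta, b = 200, 0.02, 1.0, 4.0       # illustrative values only
t = delta * np.arange(n)
R = matern32_autocorr(t, theta)
M = np.eye(n) + b * R                          # matrix actually used by the estimating equation

cond_R = np.linalg.cond(R)
cond_M = np.linalg.cond(M)
print(f"cond(R) = {cond_R:.3e}, cond(I + bR) = {cond_M:.3e}")

# Apply A = bR (I + bR)^{-1} to a vector with one linear solve, never inverting R.
y = np.sin(t)
Ay = b * (R @ np.linalg.solve(M, y))
```

Because I_n+bR_θ is symmetric positive definite, a Cholesky factorization (for example `scipy.linalg.cho_factor` followed by `cho_solve`) could replace the generic solve when many right‑hand sides share the same (b,θ).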

In summary, the paper provides a rigorous asymptotic justification for the CGEM‑EV method in the presence of known measurement error. It demonstrates near‑efficiency relative to MLE, exact efficiency for the microergodic parameter, and superior numerical stability. The authors suggest extensions to multivariate fields, irregular sampling designs, and joint estimation of ν, as well as integration with Bayesian frameworks, as promising directions for future work.
