HPLFlowNet: Hierarchical Permutohedral Lattice FlowNet for Scene Flow Estimation on Large-scale Point Clouds

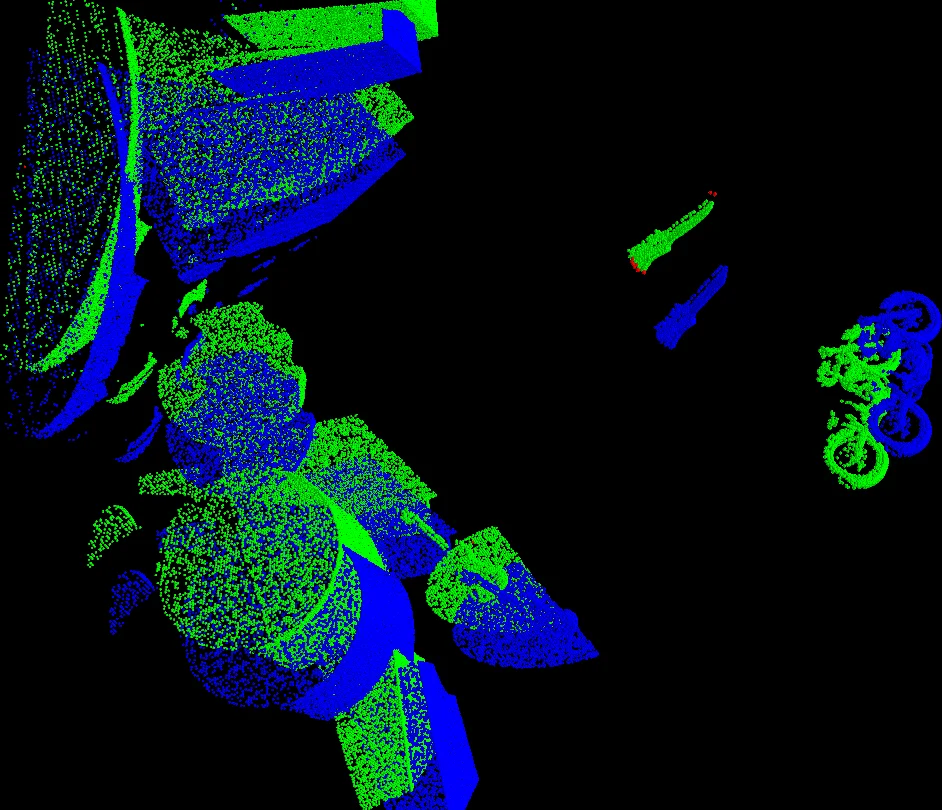

We present a novel deep neural network architecture for end-to-end scene flow estimation that directly operates on large-scale 3D point clouds. Inspired by Bilateral Convolutional Layers (BCL), we propose novel DownBCL, UpBCL, and CorrBCL operations that restore structural information from unstructured point clouds, and fuse information from two consecutive point clouds. Operating on discrete and sparse permutohedral lattice points, our architectural design is parsimonious in computational cost. Our model can efficiently process a pair of point cloud frames at once with a maximum of 86K points per frame. Our approach achieves state-of-the-art performance on the FlyingThings3D and KITTI Scene Flow 2015 datasets. Moreover, trained on synthetic data, our approach shows great generalization ability on real-world data and on different point densities without fine-tuning.

💡 Research Summary

HPLFlowNet introduces an end-to-end deep neural network for estimating dense 3D scene flow directly from large-scale point clouds. The authors build on the Bilateral Convolutional Layer (BCL), which maps unordered point features onto a high-dimensional lattice (Splat), performs sparse convolution on that lattice (Conv), and interpolates the results back to the original points (Slice). BCL operates on the permutohedral lattice, a tessellation of d-dimensional space into simplices in which the barycentric weights of a point can be computed in O(d²) time and only occupied lattice sites need to be stored. Because running the full Splat-Conv-Slice pipeline at every layer would still be prohibitively expensive in memory and computation on large point clouds, the paper restructures these steps into a hierarchy of lattice operators.
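To make the O(d²) barycentric-weight claim concrete, here is a minimal pure-Python sketch of the standard permutohedral-lattice lookup (elevate the point onto the hyperplane where coordinates sum to zero, round, rank the residuals, read off the weights). It is not taken from the HPLFlowNet code: the function names `elevate` and `enclosing_simplex` and the per-axis normalisation are assumptions made for illustration.

```python
import math

def elevate(position):
    """Embed a d-dim position into the hyperplane sum(x) == 0 of R^(d+1)."""
    d = len(position)
    # Assumed per-axis normalisation; real implementations also fold in a scale.
    scaled = [position[i] / math.sqrt((i + 1) * (i + 2)) for i in range(d)]
    elevated = []
    for k in range(d + 1):
        value = sum(scaled[k:])             # entries j >= k contribute +1
        if k >= 1:
            value -= k * scaled[k - 1]      # entry j == k-1 contributes -k
        elevated.append(value)
    return elevated

def enclosing_simplex(elevated):
    """Return (vertices, weights) of the lattice simplex containing `elevated`."""
    n = len(elevated)                       # n = d + 1
    d = n - 1
    # 1. Round every coordinate to the nearest multiple of d+1.
    greedy = []
    for e in elevated:
        up = math.ceil(e / n) * n
        down = math.floor(e / n) * n
        greedy.append(up if up - e < e - down else down)
    excess = sum(greedy) // n               # how far rounding left the hyperplane
    # 2. Rank coordinates by residual (rank 0 = largest residual): O(d^2).
    residual = [e - g for e, g in zip(elevated, greedy)]
    rank = [0] * n
    for i in range(n):
        for j in range(i + 1, n):
            if residual[i] < residual[j]:
                rank[i] += 1
            else:
                rank[j] += 1
    # 3. Move back onto the hyperplane by shifting the worst-rounded coordinates.
    for i in range(n):
        if excess > 0 and rank[i] >= n - excess:
            greedy[i] -= n
            rank[i] += excess - n
        elif excess < 0 and rank[i] < -excess:
            greedy[i] += n
            rank[i] += n + excess
        else:
            rank[i] += excess
    # 4. Barycentric weights from the sorted residuals.
    bary = [0.0] * (n + 1)
    for i in range(n):
        delta = (elevated[i] - greedy[i]) / n
        bary[d - rank[i]] += delta
        bary[d + 1 - rank[i]] -= delta
    bary[0] += 1.0 + bary[d + 1]
    # 5. The d+1 simplex vertices, one per remainder class k.
    vertices = []
    for k in range(n):
        canonical = [k] * (n - k) + [k - n] * k
        vertices.append(tuple(greedy[i] + canonical[rank[i]] for i in range(n)))
    return vertices, bary[:n]

position = (0.3, -1.2, 2.5)                 # a 3D point, so d = 3
vertices, weights = enclosing_simplex(elevate(position))
```

Splatting a point then amounts to adding its feature, scaled by each weight, to the hash-table entry of the corresponding vertex; slicing reads the same entries back with the same weights.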

Three new operators are proposed: DownBCL, UpBCL, and CorrBCL. DownBCL replaces the full Splat‑Conv‑Slice pipeline with a two‑step Splat‑Conv operation, progressively downsampling the point cloud by splatting features onto coarser lattice resolutions. Because each subsequent DownBCL receives only the non‑empty lattice points from the previous layer, the data size becomes independent of the original point count and depends only on the occupied volume. UpBCL mirrors this process in reverse, using Conv‑Slice to upsample from coarse to fine lattices, ultimately restoring per‑point predictions. This hierarchical down‑up architecture forms an hourglass‑shaped network that can be made arbitrarily deep without exploding memory usage.
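The following sketch illustrates why the data size after the first DownBCL depends on occupied volume rather than point count. It deliberately swaps in a simpler structure: a cubic voxel grid with nearest-cell averaging stands in for the permutohedral lattice with barycentric weights, features are scalars, and the box-filter `kernel` stands in for learned weights. The names `splat`, `sparse_conv`, `down_bcl`, `up_bcl`, and `encode` are hypothetical.

```python
from collections import defaultdict
from itertools import product

def cell_of(pos, cell_size):
    return tuple(int(c // cell_size) for c in pos)

def splat(positions, features, cell_size):
    """Average features per occupied cell (stand-in for barycentric splatting)."""
    sums, counts = defaultdict(float), defaultdict(int)
    for pos, feat in zip(positions, features):
        key = cell_of(pos, cell_size)
        sums[key] += feat
        counts[key] += 1
    return {key: sums[key] / counts[key] for key in sums}

def sparse_conv(cells, kernel):
    """Convolve only at occupied cells; empty neighbours contribute zero."""
    return {
        key: sum(w * cells.get(tuple(k + o for k, o in zip(key, off)), 0.0)
                 for off, w in kernel.items())
        for key in cells
    }

def down_bcl(positions, features, cell_size, kernel):
    """Splat then Conv; the occupied cells become the next layer's input."""
    cells = sparse_conv(splat(positions, features, cell_size), kernel)
    centers = [tuple((k + 0.5) * cell_size for k in key) for key in cells]
    return centers, list(cells.values()), cells

def up_bcl(cells, kernel, target_positions, cell_size):
    """Conv then Slice: read filtered coarse values back at finer positions."""
    filtered = sparse_conv(cells, kernel)
    return [filtered.get(cell_of(p, cell_size), 0.0) for p in target_positions]

# Hypothetical fixed box filter; a real network would learn these weights.
kernel = {off: 1.0 / 27 for off in product((-1, 0, 1), repeat=3)}

def encode(points, cell_sizes=(1.0, 2.0)):
    positions, features, levels = points, [1.0] * len(points), []
    for size in cell_sizes:
        positions, features, cells = down_bcl(positions, features, size, kernel)
        levels.append(cells)
    return levels

# Two clouds covering the same segment at different densities.
sparse_cloud = [(i / 10, 0.0, 0.0) for i in range(40)]
dense_cloud = [(i / 20, 0.0, 0.0) for i in range(80)]
levels_sparse, levels_dense = encode(sparse_cloud), encode(dense_cloud)
per_point = up_bcl(levels_sparse[-1], kernel, sparse_cloud, 2.0)
```

Both clouds occupy the same cells at every level, so every layer after the first sees the same amount of data even though one input has twice as many points.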

CorrBCL is designed to fuse information from two consecutive frames. Since both frames are splatted onto the same permutohedral lattice, CorrBCL first computes a patch‑wise correlation by concatenating the feature vectors of corresponding lattice neighborhoods and feeding them through a small convolutional network (instead of simple element‑wise multiplication). Then, a displacement‑filtering step restricts the search to a local motion window, aggregating correlations across plausible displacements with another convolutional filter. This mechanism is analogous to cost‑volume construction and warping in stereo or optical flow, but it operates directly on the sparse lattice, keeping computational cost low while preserving fine‑grained matching cues.
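The two stages of CorrBCL can be sketched on a toy 1D lattice as follows. This is an illustration under stated assumptions, not the paper's layer: a plain dot product summed over the patch replaces the learned network that consumes concatenated features, fixed `disp_weights` replace the learned displacement filter, and all function names are hypothetical.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def shift(key, offset):
    return tuple(k + o for k, o in zip(key, offset))

def patch_correlation(feat1, feat2, key, disp, patch):
    """Compare the patch around `key` in frame 1 with the patch around
    `key + disp` in frame 2; cells missing in either frame are skipped."""
    total = 0.0
    for off in patch:
        a = feat1.get(shift(key, off))
        b = feat2.get(shift(shift(key, disp), off))
        if a is not None and b is not None:
            total += dot(a, b)
    return total

def corr_bcl(feat1, feat2, patch, displacements, disp_weights):
    """Return {cell: (cost per displacement, fused value)} for frame-1 cells."""
    out = {}
    for key in feat1:
        costs = {d: patch_correlation(feat1, feat2, key, d, patch)
                 for d in displacements}
        fused = sum(disp_weights[d] * c for d, c in costs.items())
        out[key] = (costs, fused)
    return out

# Frame 2 is frame 1 moved by one lattice cell along the single axis.
frame1 = {(0,): (1.0, 0.0), (1,): (0.0, 1.0), (2,): (1.0, 1.0)}
frame2 = {shift(k, (1,)): v for k, v in frame1.items()}

window = [(-1,), (0,), (1,)]                # local motion window
weights = {d: 1.0 / len(window) for d in window}
result = corr_bcl(frame1, frame2, patch=window,
                  displacements=window, disp_weights=weights)
costs, fused = result[(1,)]
best = max(costs, key=costs.get)            # displacement with highest cost
```

Restricting `displacements` to a small window is what keeps the cost linear in the number of occupied cells rather than quadratic in the number of cell pairs.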

The network is trained end-to-end on the synthetic FlyingThings3D dataset and evaluated on both FlyingThings3D and the real-world KITTI Scene Flow 2015 benchmark. HPLFlowNet reports state-of-the-art 3D End-Point Error (EPE) and accuracy on both, outperforming prior point-cloud methods such as FlowNet3D. Notably, a model trained only on synthetic data generalizes well to KITTI without any fine-tuning, demonstrating robustness to sensor noise, varying point densities, and domain shift.
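For reference, the two kinds of metric mentioned above can be computed as in this short sketch. EPE is the mean Euclidean distance between predicted and ground-truth flow vectors; the accuracy shown counts a point as correct when its error is below an absolute or a relative threshold. The function name and the default thresholds (0.05 in scene units, 5% of the ground-truth magnitude) are illustrative assumptions, not values quoted from the summary.

```python
import math

def scene_flow_metrics(pred, gt, abs_thresh=0.05, rel_thresh=0.05):
    """Return (EPE, accuracy) for lists of predicted and true 3D flow vectors."""
    errors = [math.dist(p, g) for p, g in zip(pred, gt)]
    norms = [math.hypot(*g) for g in gt]
    epe = sum(errors) / len(errors)
    # A point counts as accurate if either threshold is met; the relative
    # test is written as a product so zero-length ground truth is safe.
    hits = sum(1 for e, n in zip(errors, norms)
               if e < abs_thresh or e < rel_thresh * n)
    return epe, hits / len(errors)

pred = [(1.0, 0.0, 0.0), (0.0, 0.0, 0.0)]
gt = [(1.0, 0.0, 0.0), (0.0, 3.0, 4.0)]
epe, acc = scene_flow_metrics(pred, gt)
```

In this toy case the first vector is exact and the second is off by 5 units, so the mean error is 2.5 and half of the points pass the accuracy test.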

Efficiency is a central claim: the model processes a pair of KITTI frames containing up to 86,000 points each in a single pass on one GPU (12 GB) in roughly 120 ms, whereas previous point-cloud networks need chunking or aggressive subsampling at that scale. The reduction in memory footprint stems from (1) the permutohedral lattice, whose cost scales with occupied space rather than point count, (2) the two-step DownBCL and UpBCL operators, which eliminate redundant splatting and slicing, and (3) the sparse hash-table implementation of convolutions.

In summary, HPLFlowNet contributes (i) an efficient lattice-based representation for unordered 3D data, (ii) hierarchical down-sampling and up-sampling layers that decouple computational cost from point cloud size, (iii) a novel correlation layer that merges two frames in a learnable, locally constrained manner, and (iv) a practical system that reports state-of-the-art results on both synthetic and real benchmarks. The approach opens the door to large-scale 3D motion understanding in autonomous driving, robotics, and augmented reality applications.
