PAN: Projective Adversarial Network for Medical Image Segmentation

Adversarial learning has been proven to be effective for capturing long-range and high-level label consistencies in semantic segmentation. Unique to medical imaging, capturing 3D semantics in an effective yet computationally efficient way remains an …

Authors: Naji Khosravan, Aliasghar Mortazi, Michael Wallace

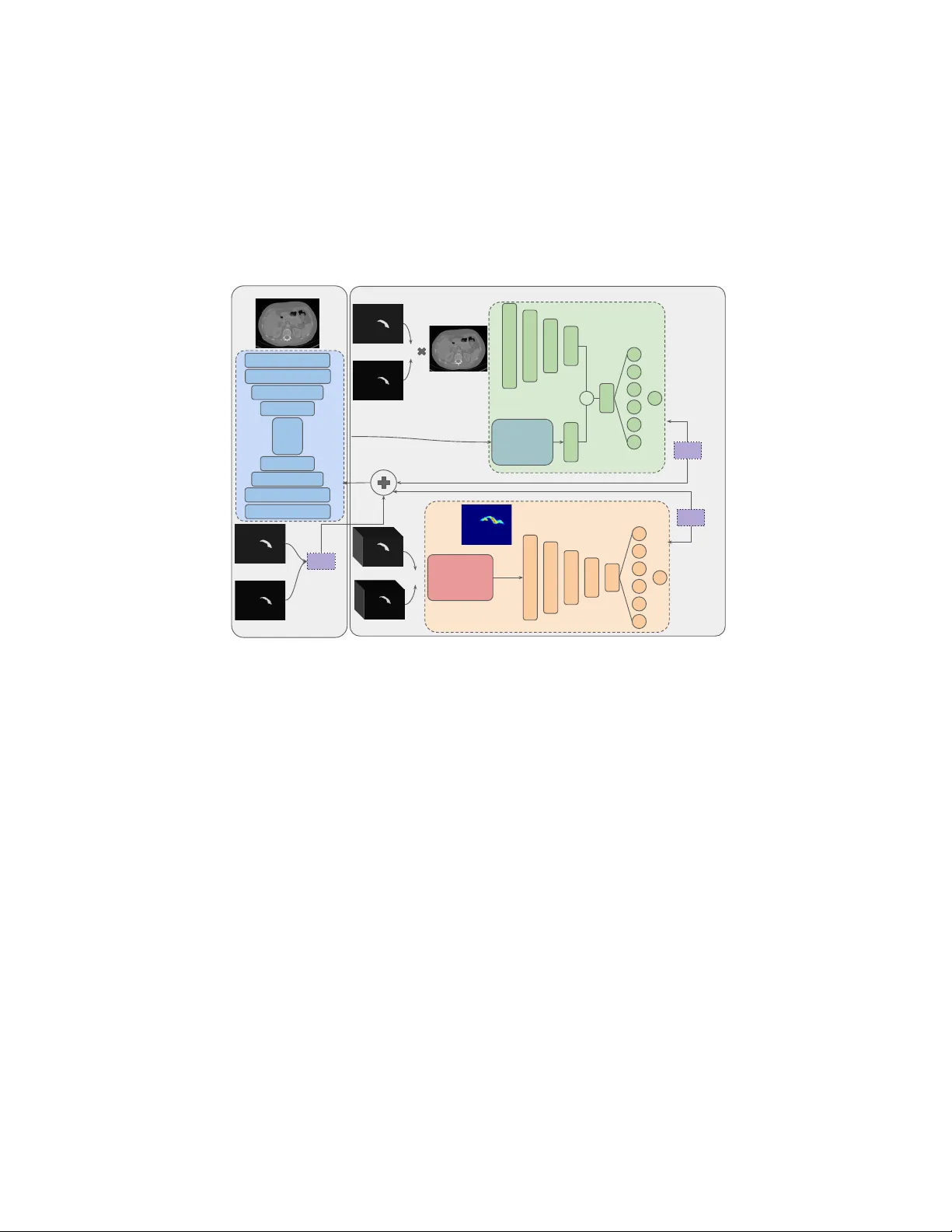

P AN: Pro jectiv e Adv ersarial Net w ork for Medical Image Segmen tation Na ji Khosra v an 2 , Aliasghar Mortazi 2 , Mic hael W allace 1 , Ulas Bagci 2 1 Ma yo Clinic Cancer Center, Jac ksonville, FL. 2 Cen ter for Research in Computer Vision (CRCV), Sc ho ol of Computer Science, Universit y of Central Florida, Orlando, FL. Abstract. Adv ersarial learning has b een prov en to b e effectiv e for cap- turing long-range and high-level lab el consistencies in semantic segmen- tation. Unique to medical imaging, capturing 3D seman tics in an effectiv e y et computationally efficien t wa y remains an open problem. In this study , w e address this computational burden b y prop osing a no vel pro jective adv ersarial net work, called P AN, whic h incorp orates high-level 3D infor- mation through 2D pro jections. F urthermore, w e in tro duce an atten tion mo dule in to our framew ork that helps for a selective integration of global information directly from our se gmentor to our adv ersarial net work. F or the clinical application we c hose pancreas segmentation from CT scans. Our prop osed framew ork achiev ed state-of-the-art p erformance without adding to the complexity of the segmentor. Keyw ords: Ob ject Segmen tation · Deep Learning · Adv ersarial Learn- ing · Atten tion · Pro jective · Pancreas. 1 In tro duction Segmen tation has b een a ma jor area of in terest within the fields of computer vision and medical imaging for y ears. Owing to their success, deep learning based algorithms hav e b ecome the standard choice for semantic segmen tation in the literature. Most state-of-the-art studies mo del segmentation as a pixel-level classification problem [2 – 4]. Pixel-lev el loss is a promising direction but, it fails to incorp orate global seman tics and relations. T o address this issue researchers hav e prop osed a v ariet y of strategies. A great deal of previous research uses a post- pro cessing step to capture pairwise or higher level relations. Conditional Random Field (CRF) w as used in [2] as an offline post-pro cessing step to mo dify edges of ob jects and remov e false p ositives in CNN output. In other studies, to av oid offline p ost-pro cessing and provide an end-to-end framework for segmen tation, mean-field appro ximate inference for CRF with Gaussian pairwise p oten tials w as mo deled through Recurren t Neural Netw ork (RNN) [17]. In parallel to post processing attempts, another branch of researc h tried to capture this global context through m ulti-scale or pyramid framew orks. In [2 – 4], sev eral spatial p yramid po oling at differen t scales with b oth conv entional con- v olution lay ers and Atr ous con volution lay ers were used to keep b oth con textual 2 N. Khosrav an et al. and pixel-lev el information. Despite such efforts, combining lo cal and global in- formation in an optimal manner is not a solved problem, yet. F ollowing b y the seminal w ork b y Goo dfellow et.al. in [7] a great deal of researc h has b een done on adversarial learning [8, 10, 14, 15]. Sp ecific to segmen- tation, for the first time, Luc et. al. [8] prop osed the use of a discriminator along with a segmentor in an adversarial min-max game to capture long-range lab el consistencies. In another study Se gAN was introduced, in which the segmentor pla ys the role of generator b eing in a min-max game with a discriminator with a m ulti-scale L1 loss [14]. A similar approach w as tak en for structure correction in c hest X-rays segmen tation in [5]. A conditional GAN approach w as tak en in [10] for brain tumor segmen tation. In this pap er, we fo cused on the challenging problem of pancreas segmenta- tion from CT images, although our framework is generic and can b e applied to an y 3D ob ject segmentgation problem. This particular application has unique c hallenges due to the complex shape and orientation of pancreas, having low con- trast with neighbouring tissues and relatively small and v arying size. Pancreas segmen tation were studied widely in the literature. Y u et al. introduced a recur- rence saliency transformation net work, which uses the information from previous iteration as a spatial w eight for curren t iteration [16]. In another attempt, U- Net with an attention gate was prop osed in [9]. Similarly , a tw o-cascaded-stage based metho d was used to lo calize and segment pancreas from CT scans in [13]. A prediction-segmen tation mask w as used in [18] for constraining the segmen tation with a coarse-to-fine strategy . F urthermore, a segmen tation netw ork with RNN w as proposed in [1] to capture the spatial information among slices. The unique c hallenges of pancreas segmentation (complex shap e and small organ) shifted the literature tow ards metho ds with coarse-to-fine and multi-stage frameworks, promising but computationally exp ensiv e. Summary of our contributions: The current literature on segmentation fails to capture 3D high-level shap e and seman tics with a low-computation and effectiv e framew ork. In this pap er, for the fist time in the literature, we prop ose a pro jective adversarial net w ork (P AN) for segmen tation to fill this researc h gap. Our metho d is able to capture 3D relations through 2D pro jections of ob jects, without relying on 3D images or adding to the complexit y of the segmen tor. F ur- thermore, we introduce an attention mo dule to selectively integrate high-lev el, whole-image features from the se gmentor in to our adversarial netw ork. With comprehensiv e ev aluations, we sho wed that our prop osed framew ork ac hieves the state-of-the-art p erformance on publicly a v ailable CT pancreas segmen tation dataset [11] even when a simple enco der-deco der net w ork w as used as se gmentor . 2 Metho d Our prop osed metho d is built up on the adversarial netw orks. The prop osed framew ork’s ov erview is illustrated in Figure 1. W e ha ve three netw orks: a seg- men tor ( S in Figure 1), which is our main netw ork and was used during the test phase, and tw o adversarial netw orks ( D s and D p in Figure 1), each with a P AN 3 sp ecific task. The first adversarial net work ( D s ) captures high-level sp atial lab el con tiguity while the second adversarial netw ork ( D p ) enforces the 3D seman- tics through a 2D pro jection learning strategy . The adversarial netw orks were used only during the training phase to b o ost the p erformance of the segmentor without adding to its complexit y . CT image(I n ) D P Bottleneck features f(I n ) Groundtruth (y n ) Probability map S(I n ) L bce Groundtruth (y n ) Probability map S(I n ) Or 0 Or 1 prediction L Ds Stack of y n Stack of S(I n ) Projection Module (P) 0 Or 1 prediction 2D Projection P(I n ) Or L Dp S Auxiliary networks D S Attention Module (A) C 9x9@16 7x7@16 4(3x3@32) 4(3x3@64) 4(3x3 @128) 3(3x3@64) 3(3x3@32) 3(3x3@16) 3x3@1 3x3@16 3x3@32 3x3@64 3x3@128 3x3@128 3x3@256 3x3@16 3x3@32 3x3@64 3x3@128 3x3@256 @512 @1 @512 @1 Fig. 1. The prop osed framework consists of a segmen tor S and tw o adversarial net- w orks, D s and D p . S w as trained with a hybrid loss from D s , D p and the ground-truth. 2.1 Segmen tor (S) Our base netw ork is a simple fully conv olutoinal net work with an enco der- deco der architecture. The input to the segmentor is a 2D grey-scale image and the output is a pixel-level probability map. The probabilit y map shows prob- abilit y of presence of the ob ject at eac h pixel. W e use a h ybrid loss function (explained in details in Section 2.3) to update the parameters our segmen tor ( S ). This loss function is comp osed of three terms enforcing: (1) pixel-lev el high- resolution details, (2) spatial and high-range lab el con tinuit y , (3) 3D shap e and seman tics, through our nov el pro jective learning strategy . As can b e seen in Figure 1, the segmentor contains 10 conv lay ers in the enco der, 10 conv lay ers in the decoder and 4 conv lay ers as the b ottleneck. The last conv lay er is a 1 × 1 conv lay er with the channel output of 1, com bining c hannel-wise information in the highest scale. This lay er is follow ed b y a sigmoid function to create the notion of probabilit y . 4 N. Khosrav an et al. 2.2 Adv ersarial Net works Our adversarial netw orks are designed with the goal of comp ensating for the missing global relations and correcting higher-order inconsistencies, produced b y a single pixel-lev el loss. Each of these netw orks pro duces an adv ersarial signal and apply it to the segmen tor as a term in the o v erall loss function (Equation 2). The details of eac h netw ork is describ ed b elow: Spatial semantics net work (D s ): This net work is designed to capture spa- tial consistencies within each frame. The input to this net work is either the segmen ted ob ject by the ground-truth or by the segmen tor’s prediction. The Spatial seman tics net work ( D s ) is trained to discriminate b etw een these tw o inputs with a binary cross-entrop y loss, formulated as in Equation 4. The ad- v ersarial signal pro duced by the negative loss of D s to S forces S to produce predictions closer to ground-truth in terms of spatial semantics. As illustrated in Figure 1 top right, D s has a tw o-branc h architecture with a late fusion. The top branc h processes the segmented ob jects by ground-truth or segmen tor’s prediction. W e prop ose an extra branch of pro cessing, getting the b ottlenec k features corresp onding to the original gray-scale input image, and passing them to an atten tion mo dule for an information selection. The pro cessed features are then concatenated with the first branc h and passed through the shared la yers. W e b elieve that ha ving the high-lev el features of whole image along with the segmen tations improv es the p erformance of D s . Our attention mo dule learns where to attend in the feature space to hav e a more discriminative information selection and pro cessing. The details of the atten tion mo dule are describ ed in the following. H × W × C f(I n ) 1 × 1 Conv softmax weight Attention Module H × W × 1 H × W × C f(I n ) Fig. 2. Atten tion mo dule assigns a w eight to each feature allowing for a soft selection of information. A ttention mo dule (A): W e feed the high- lev el features form the segmen tor’s b ottle- nec k to D s . These features contain global in- formation ab out the whole frame. W e use a soft-atten tion mechanism, in which our at- ten tion mo dule assigns a weigh t to each fea- ture based on its imp ortance for discrimina- tion. The attention mo dule gets the features with shap e w × h × c , as input, and outputs a weigh t set with a shap e of w × h × 1. A is comp osed of tw o 1 × 1 conv olution lay ers follow ed by a softmax lay er (Figure 2). The softmax la yer in tro duces the notion of soft sele ction to this mo dule. The output of A is then m ultiplied to the features b efore b eing passed to the rest of the net work. Pro jective net w ork (D p ): An y 3D ob ject can be pro jected into 2D planes from sp ecific viewpoints, resulting in multiple 2D images. The 2D pro jection con tains 3D semantics information, to b e retrieved. In this section, we in tro duce our pro jective netw ork ( D p ). The main task of D p is to capture 3D seman tics P AN 5 without relying on 3D data and from the 2D pro jections. Inducing 3D shap es form 2D images has previously b een done for 3D shape generation [6]. Unlik e existing notions, ho wev er, in this pap er we propose 3D semantics induction from 2D pro jections, to b enefit segmen tation for the first time in the literature. The pro jection mo dule ( P ) pro jects a 3D volume (V) on a 2D plane as: P (( i, j ) , V ) = 1 − exp − P k V ( i,j,k ) , (1) where each pixel in the 2D pro jection P (( i, j ) , V ) gets a v alue in the range of [0 , 1] based on the n um b er of v oxel o ccupancy in the third dimension of corresponding 3 D v olume ( V ). F or the sak e of simplicit y , we refer to the pro jection of a 3D v olume V as P ( V ). W e pass each 3D image through our segmen tor ( S ) slice by slice and stac k the corresp onding prediction maps. Then, these maps are fed to the pro jection mo dule ( P ) and are pro jected in the axial view. The input to D p is either the pro jected ground-truth or pro jected prediction map pro duced by S . D p is trained to discriminate these inputs using the loss function defined in Equation 5. The adv ersarial term pro duced by D p in Equa- tion 2 forces S to create predictions whic h are closer to ground-truth in terms of 3D semantics. Incorp orating D p as an adversarial netw ork to our segmenta- tion framew ork helps S to capture 3D information through a v ery simple 2D arc hitecture and without adding to its complexity in the test time. 2.3 Adv ersarial training T o train our framework, we use a hybrid loss function, which is a weigh ted sum of three terms. F or a dataset of N training samples of images and ground truths ( I n , y n ), w e define our hybrid loss function as: l hy br id = N X n =1 l bce ( S ( I n ) , y n ) − λl D s − β l D p , (2) where l D s and l D p are the losses corresp onding to D s and D p and S ( I n ) is the segmentor’s prediction. The first term in Equation 2 is a w eighted binary cross-en tropy loss. This loss is the state-of-the-art loss function for seman tic segmen tation and for a grey-scale image I with size H × W × 1 is defined as: l bce ( ˆ y , y ) = − H × W X i =1 ( w y i log ˆ y i + (1 − y i ) log (1 − ˆ y i )) , (3) where w is the weigh t for p ositive samples, y is the ground-truth lab el and ˆ y is the netw ork’s prediction. Equation 3 encourages S to pro duce predictions similar to ground-truth and penalizes eac h pixel indep endently . High-order relations and seman tics cannot b e captured by this term. T o account for this drawbac k, the second and third terms are added to train our auxiliary net works. l D s and l D p are defined b elo w, resp ectively: 6 N. Khosrav an et al. l D s = l bce ( D s ( I n , y n ) , 1) + l bce ( D s ( I n , S ( I n )) , 0) , (4) l D p = l bce ( D p ( P I n , P y n ) , 1) + l bce ( D p ( P I n , P S ( I n ) ) , 0) . (5) Here P is the pro jection module, l bce is the binary cross-en tropy loss with w = 1 in Equation 3 corresp onding to a single num ber (0 or 1) as the output. 3 Exp erimen ts and Results W e ev aluated the efficacy of our prop osed system with the challenging problem of pancreas segmen tation. This particular problem w as selected due to the complex and v arying shap e of pancreas and relatively more difficult nature of the seg- men tation problem compared to other ab dominal organs. In our exp eriments w e sho w that our prop osed framework outp erforms other state-of-the-art metho ds and captures the complex 3D seman tics with a simple enco der-deco der. F ur- thermore, w e hav e created an extensiv e comparison to some baselines, designed sp ecifically to sho w the effects of each blo ck of our framew ork. Data and ev aluation: W e used the publicly av ailable TCIA CT dataset from NIH [11]. This dataset contains a total of 82 CT scans. The resolution of scans is 512 × 512 × Z , Z ∈ [181 , 466] is the num b er of slices in the axial plane. The v oxels spacing ranges from 0 . 5 mm to 1 . 0 mm . W e used a randomly selected set of 62 images for training and 20 for testing to perform a 4-fold cross-v alidation. Dice Similarit y Co efficient (DSC) is used as the metric of ev aluation. Comparison to baselines: Mo del DSC% Enco der-deco der (S) 57.7 A trous pyramid 48.2 S + D s 85.0 S + D s + A 85.9 1-fold S + D s + A + D p 86.8 T able 1. Comparison with base- lines. T o sho w the effect of eac h building block of our framew ork w e designed an extensive set of experiments. In our exp erimen ts we start from only training a single segmentor (S) and go to our final prop osed framework. F ur- thermore, we show comparison of enco der- deco der architecture with other state-of-the- art seman tic segmentation architectures. T able 1 sho ws the results adding of eac h building blo c k of our framew ork. The ecco der-deco der arc hitecture is the one sho wed in Figure 1 as S , while the Atrous p yramid architecture is similar to the recen t work of [4]. This arc hitecture is cur- ren tly state-of-the-art for semantic segmentation. In whic h an A trous p yramid is used to capture global context. W e added an A trous p yramid with 5 different scales: 4 Atrous conv olutions at rates of 1 , 2 , 6 , 12, with the global image p ooling. W e also replaced the deco der with 2 simple upsampling and conv lay ers similar to the main pap er [4]. W e refer the readers to the main pap er for more details ab out this architecture due to space limitations [4]. W e found out having an extensiv e pro cessing in the deco der improv es the results compared to the A trous p yramid arc hitecture (p ossibly a b etter choice for segmentation of ob jects at m ultiple scales). This is b ecause our ob ject of interest is relatively small. P AN 7 Moreo ver, we show ed that adding a spatial adversarial notw ork ( D s ) can b o ost the p erformance of S dramatically , in our task. Introducing attention ( A ) helps for a better information selection (as describ ed in section 2.2) and b o osts the p erformance further. Finally , our b est results is achiev ed by adding the pro jective adv ersarial net work ( D p ), which adds integration of 3D semantics in to the framework. This supports our hypothesis that our segmen tor has enough capacit y in terms of parameters to capture all this information and with proper and explicit sup ervision can ac hieve state-of-the-art results. Comparison to the state-of-the-art: W e provide the comparison of our method’s p erformance with current state-of-the-art literature on the same TCIA CT dataset for pancreas segmen tation. As can b e seen from exp erimental v alidation, our metho d outp erforms the state-of-the-art with dice scores, provides b etter effi- ciency (less computational burden). Of a note, the proposed algorithm’s least ac hievemen t is consistently higher than the state of the art metho ds. Approac h Av erage DSC% Max DSC% Min DSC% Roth et al. [11] 71 . 42 ± 10 . 11 86.29 23.99 Roth et al. [12] 78 . 01 ± 8 . 20 88.65 34.11 Roth et al. [13] 81 . 27 ± 6 . 27 88.96 50.69 Zhou et al. [18] 82 . 37 ± 5 . 68 90.85 62.43 Cai et al. [1] 82 . 40 ± 6 . 70 90.10 60.00 Y u et al. [16] 84 . 50 ± 4 . 97 91.02 62.81 4-fold cross validation Ours 85.53 ± 1.23 88.71 83.20 T able 2. Comparison with state-of-the-art on TCIA dataset. 4 Conclusion In this pap er we prop osed a nov el adv ersarial framework for 3D ob ject segmen- tation. W e introduced a nov el pro jective adv ersarial netw ork, inferring 3D shap e and semantics form 2D pro jections. The motiv ation b ehind our idea is that inte- gration of 3D information through a fully 3D netw ork, having all slices as input, is computationally infeasible. P ossible w ork arounds are: 1)down-sampling the data or 2)sacrificing n umber of parameters, which are sacrificing information or computational capacity , respectively . W e also in tro duced an attention mo dule to selectively pass whole-frame high-lev el feature from the segmen tor’s b ottle- nec k to the adversarial netw ork, for a b etter information pro cessing. W e show ed that with proper and guided sup ervision through adv ersarial signals a simple enco der-deco der architecture, with enough parameters, achiev es state-of-the-art p erformance on the challenging problem of pancreas segmentation. W e achiev ed a dice score of 85.53% , which is state-of-the art p erformance on pancreas seg- men tation task, outperforming previous metho ds. F urthermore, w e argue that our framework is general and can b e applied to any 3D ob ject segmen tation problem and is not sp ecific to a single application. 8 N. Khosrav an et al. References 1. Cai, J., Lu, L., Xie, Y., Xing, F., Y ang, L.: Impro ving deep pancreas segmen ta- tion in ct and mri images via recurrent neural contextual learning and direct loss function. arXiv preprint arXiv:1707.04912 (2017) 2. Chen, L.C., P apandreou, G., Kokkinos, I., Murphy , K., Y uille, A.L.: Deeplab: Se- man tic image segmen tation with deep conv olutional nets, atrous con volution, and fully connected crfs. IEEE transactions on pattern analysis and machine intelli- gence 40(4), 834–848 (2018) 3. Chen, L.C., Papandreou, G., Schroff, F., Adam, H.: Rethinking atrous conv olution for semantic image segmen tation. arXiv preprin t arXiv:1706.05587 (2017) 4. Chen, L.C., Zh u, Y., Papandreou, G., Sc hroff, F., Adam, H.: Enco der-deco der with atrous separable con volution for semantic image segmentation. In: Proceedings of the Europ ean Conference on Computer Vision (ECCV). pp. 801–818 (2018) 5. Dai, W., Dong, N., W ang, Z., Liang, X., Zhang, H., Xing, E.P .: Scan: Structure correcting adversarial net work for organ segmentation in chest x-ra ys. In: Deep Learning in Medical Image Analysis and Multimodal Learning for Clinical Decision Supp ort, pp. 263–273. Springer (2018) 6. Gadelha, M., Ma ji, S., W ang, R.: 3d shap e induction from 2d views of multiple ob jects. In: 2017 International Conference on 3D Vision (3D V). pp. 402–411. IEEE (2017) 7. Go odfellow, I., Pouget-Abadie, J., Mirza, M., Xu, B., W arde-F arley , D., Ozair, S., Courville, A., Bengio, Y.: Generative adversarial nets. In: Adv ances in neural information pro cessing systems. pp. 2672–2680 (2014) 8. Luc, P ., Couprie, C., Chintala, S., V erb eek, J.: Semantic segmentation using ad- v ersarial net works. arXiv preprint arXiv:1611.08408 (2016) 9. Okta y , O., Schlemper, J., F olgo c, L.L., Lee, M., Heinrich, M., Misaw a, K., Mori, K., McDonagh, S., Hammerla, N.Y., Kainz, B., et al.: Atten tion u-net: learning where to lo ok for the pancreas. arXiv preprint arXiv:1804.03999 (2018) 10. Rezaei, M., Harm uth, K., Gierke, W., Kellermeier, T., Fischer, M., Y ang, H., Meinel, C.: A conditional adv ersarial netw ork for semantic segmentation of brain tumor. In: International MICCAI Brainlesion W orkshop. pp. 241–252. Springer (2017) 11. Roth, H.R., Lu, L., F arag, A., Shin, H.C., Liu, J., T urkbey , E.B., Summers, R.M.: Deep organ: Multi-level deep conv olutional netw orks for automated pancreas seg- men tation. In: In ternational conference on medical image computing and computer- assisted interv en tion. pp. 556–564. Springer (2015) 12. Roth, H.R., Lu, L., F arag, A., Sohn, A., Summers, R.M.: Spatial aggregation of holistically-nested net works for automated pancreas segmentation. In: Inter- national Conference on Medical Image Computing and Computer-Assisted Inter- v ention. pp. 451–459. Springer (2016) 13. Roth, H.R., Lu, L., Lay , N., Harrison, A.P ., F arag, A., Sohn, A., Summers, R.M.: Spatial aggregation of holistically-nested conv olutional neural netw orks for auto- mated pancreas localization and segmentation. Medical image analysis 45, 94–107 (2018) 14. Xue, Y., Xu, T., Zhang, H., Long, L.R., Huang, X.: Segan: Adv ersarial netw ork with m ulti-scale l 1 loss for medical image segmen tation. Neuroinformatics 16(3-4), 383–392 (2018) 15. Yi, X., W alia, E., Babyn, P .: Generative adv ersarial netw ork in medical imaging: A review. arXiv preprint arXiv:1809.07294 (2018) P AN 9 16. Y u, Q., Xie, L., W ang, Y., Zhou, Y., Fishman, E.K., Y uille, A.L.: Recurren t saliency transformation netw ork: Incorporating m ulti-stage visual cues for small organ seg- men tation. In: Pro ceedings of the IEEE Conference on Computer Vision and P at- tern Recognition. pp. 8280–8289 (2018) 17. Zheng, S., Ja yasumana, S., Romera-P aredes, B., Vineet, V., Su, Z., Du, D., Huang, C., T orr, P .H.: Conditional random fields as recurrent neural net works. In: Pro- ceedings of the IEEE in ternational conference on computer vision. pp. 1529–1537 (2015) 18. Zhou, Y., Xie, L., Shen, W., W ang, Y., Fishman, E.K., Y uille, A.L.: A fixed- p oin t mo del for pancreas segmentation in abdominal ct scans. In: International Conference on Medical Image Computing and Computer-Assisted Interv en tion. pp. 693–701. Springer (2017)

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment