Adversarial Mahalanobis Distance-based Attentive Song Recommender for Automatic Playlist Continuation

In this paper, we aim to solve the automatic playlist continuation (APC) problem by modeling complex interactions among users, playlists, and songs using only their interaction data. Prior methods mainly rely on dot product to account for similaritie…

Authors: Thanh Tran, Renee Sweeney, Kyumin Lee

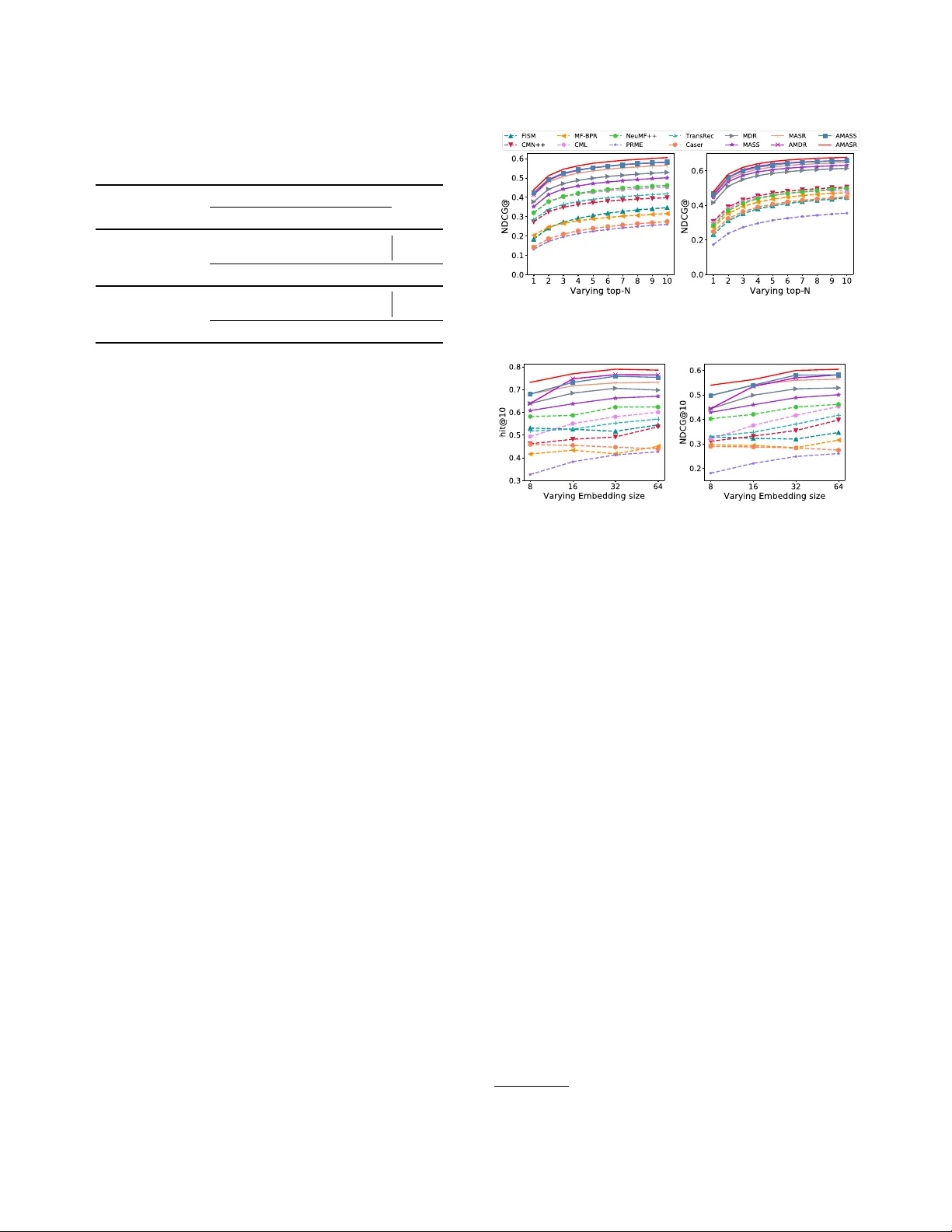

Adv ersarial Mahalanobis Distance-based Aentive Song Recommender for A utomatic Playlist Continuation Thanh Tran, Renee Sw eeney , K yumin Lee Department of Computer Science W or cester Polytechnic Institute Massachusetts, USA {tdtran, rasweeney , kmlee}@wpi.edu ABSTRA CT In this paper , we aim to solve the automatic playlist continuation (APC) problem by modeling complex interactions among users, playlists, and songs using only their interaction data. Prior meth- ods mainly rely on dot pr oduct to account for similarities, which is not ideal as dot product is not metric learning, so it do es not convey the important inequality pr operty . Base d on this observa- tion, we propose three novel deep learning approaches that utilize Mahalanobis distance. Our rst appr oach uses user-playlist-song interactions, and combines Mahalanobis distance scores between (i) a target user and a target song, and (ii) b etween a target playlist and the target song to account for both the user’s preference and the playlist’s theme. Our se cond approach measures song-song similarities by considering Mahalanobis distance scores between the target song and each member song (i.e., existing song) in the target playlist. The contribution of each distance score is measured by our proposed memory metric-based attention me chanism . In the third approach, we fuse the two previous models into a unied model to further enhance their p erformance . In addition, we adopt and customize Adversarial Personalized Ranking ( APR) for our three approaches to further improve their r obustness and predictive capa- bilities. Through extensive experiments, we show that our proposed models outperform eight state-of-the-art models in two large-scale real-world datasets. A CM Reference Format: Thanh Tran, Renee Sweeney , K yumin Lee. 2019. Adversarial Mahalanobis Distance-based Attentive Song Recommender for A utomatic Playlist Con- tinuation. In Proceedings of the 42nd International ACM SIGIR Conference on Research and Development in Information Retrieval (SIGIR ’19), July 21–25, 2019, Paris, France. ACM, New Y ork, NY , USA, 10 pages. https://doi.org/10. 1145/3331184.3331234 1 IN TRODUCTION The automatic playlist continuation ( APC ) problem has received in- creased attention among researchers following the growth of online music streaming services such as Spotify , Apple Music, SoundCloud, etc . Given a user-created playlist of songs, APC aims to recommend Permission to make digital or hard copies of all or part of this work for personal or classroom use is granted without fee provided that copies are not made or distributed for prot or commercial advantage and that copies bear this notice and the full citation on the rst page. Copyrights for components of this work owned by others than ACM must be honored. Abstracting with credit is permitted. T o cop y otherwise, or republish, to post on servers or to redistribute to lists, requires prior specic permission and /or a fee. Request permissions from permissions@acm.org. SIGIR ’19, July 21–25, 2019, Paris, France © 2019 Association for Computing Machinery . ACM ISBN 978-1-4503-6172-9/19/07. . . $15.00 https://doi.org/10.1145/3331184.3331234 one or more songs that t the user’s preference and match the playlist’s theme. Due to the inconsistency of available side information in public music playlist datasets, we rst attempt to solve the APC problem using only interaction data. Collaborative ltering (CF) methods, which enco de users, playlists, and songs in lower-dimensional latent spaces, have been widely used [ 14 , 15 , 18 , 24 ]. T o account for the extra playlist dimension in this work, the term item in the context of APC will refer to a song, and the term user will refer to either a user or playlist, depending on the model. W e will also use the term member song to denote an existing song within a target playlist. CF solutions to the APC problem can be classied into the following three groups: Group 1: Recommending songs that are directly relevant to user/playlist taste. Methods in this group aim to measure the conditional probability of a target item given a target user using user-item interactions. In APC , this is either P ( s | u ) – the conditional probability of a target song s given a target user u by taking users and songs within their playlists as implicit fee dback input –, or P ( s | p ) – the conditional probability of a target song s given a target playlist p by utilizing playlist-song interactions. Most of the works in this group measure P ( s | u ) 1 by taking the dot product of the user and song latent vectors [ 5 , 15 , 18 ], denote d by P ( s | u ) ∝ − → u T · − → s . With the recent success of deep learning appr oaches, researchers proposed neural network-based models [14, 27, 58, 62]. Despite their high performance, Group 1 is limited for the APC task. First, although a target song can be v ery similar to the ones in a target playlist [ 9 , 38 ], this association information is ignored. Sec- ond, these approaches only measure either P ( s | u ) or P ( s | p ) , which is sub-optimal. P ( s | u ) omits the playlist theme, causing the model to recommend the same songs for dierent playlists, and P ( s | p ) is not personalized for each user . Group 2: Re commending songs that are similar to existing songs in a playlist. Methods in this group are based on a principle that similar users prefer the same items (user neighborhoo d design), or users themselves prefer similar items (item neighborhood design). ItemKNN [ 42 ], SLIM [ 35 ], and FISM [ 21 ] solve the APC problem by identifying similar users/playlists or similar songs. These works are limited in that they give equal weight to each song in a playlist when calculating their similarity to a candidate song. In reality , certain aspects of a memb er song, such as the genre or artist, may be more important to a user when deciding whether or not to add 1 Measuring P ( s | p ) is easily obtained by replacing the user latent vector u with the playlist latent vector p . u1 p1 s1 u1 p1 s2 u2 p2 s1 u2 p2 s2 u2 p2 s3 s1 s2 s3 u1 ✔ ✔ u2 ✔ ✔ ✔ s1 s2 s3 p1 ✔ ✔ p2 ✔ ✔ ✔ 1 1 -1 u 1 u 2 s 1 s 2 s 3 1 1 -1 p 1 p 2 s 1 s 2 s 3 1 1 -1 u 1 u 2 s 1 s 2 s 3 1 1 -1 s 1 s 2 s 3 p 1 p 2 dot product metric learning input interactions Figure 1: Learning with dot product vs. metric learning. another song into the playlist. This calls for diering song weights, which are produced by attentive neighborhood-based mo dels. Recently , [ 7 ] proposed a Collaborative Memory Network (CMN) that considers both the target user preference on a target item as well as similar users’ preferences on that item (i.e., user neigh- borhood hybrid design). It utilizes a memor y network to assign dierent attentive contributions of neighb oring users. However , this approach still does not work well with APC datasets due to sparsity issues and less associations among users. Group 3: Recommending next songs as transitions from the previous songs. Methods in this group are called sequential rec- ommendation mo dels, which rely on Markov Chains to capture sequential patterns [ 41 , 54 ]. In the APC domain, these methods make recommendations based on the order of songs added to a playlist. Deep learning-based se quential methods are able to model even more complex transitional patterns using convolutional neu- ral networks (CNNs) [ 45 ] or recurrent neural networks (RNNs) [ 6 , 16 , 20 ]. Howev er , Sequential recommenders have restrictions in the APC domain, namely that playlists are often listened to on shue. It means that users typically add songs based on an overar- ching playlist theme, rather than song transition quality . In addition, added song timestamps may not be available in music datasets. Motivation. A common drawback of the works listed in the three groups above is that they rely on the dot pr oduct to measure simi- larity . Dot product is not metric learning, so it does not convey the crucial inequality property [ 17 , 39 ], and does not handle dierently scaled input variables well. W e illustrate the drawback of dot prod- uct in a toy example shown in Figure 1 2 , where the latent dimension is size d = 2 . Assume we hav e two users u 1 , u 2 , two playlists p 1 , p 2 , and three songs s 1 , s 2 , s 3 . W e can see that p 1 and p 2 (or u 1 and u 2 ) are similar (i.e., both liked s 1 , and s 2 ), suggesting that s 3 would be relevant to the playlist p 1 . Learning with dot product can lead to the following result: p 1 = ( 0 , 1 ) , p 2 = ( 1 , 0 ) , s 1 = ( 1 , 1 ) , s 2 = ( 1 , 1 ) , s 3 = ( 1 , − 1 ) , b ecause p T 1 s 1 = 1 , p T 1 s 2 = 1 , p T 2 s 1 = 1 , p T 2 s 2 = 1 , p T 2 s 3 =1 (same for users u 1 , u 2 ). Howev er , the dot product between p 1 and s 3 is -1, so s 3 would not b e recommended to p 1 . Howev er , if we use metric learning, it will pull similar users/playlists/songs closer together by using the inequality property . In the example, the dis- tance between p 1 and s 3 is rescaled to 0, and s 3 is now correctly portrayed as a good t for p 1 . There exist several works that adopt metric learning for rec- ommendation. [ 17 ] proposed Collaborative Metric Learning (CML) 2 This Figure is inspired by [17] which use d Euclidean distance to pull positive items closer to a user and push negative items further away . [ 4 , 8 , 11 ] also use d Euclidean distance but for modeling transitional patterns. Howev er , these metric-based models still fall into either Group 1 or Group 3 , inher- iting the limitations that we described previously . Furthermore, as Euclidean distance is the primary metric, these models are highly sensitive to the scales of (latent) dimensions/variables. Our appr oaches and main contributions. According to the lit- erature, Mahalanobis distance 3 [ 57 , 59 ] overcomes the drawback (i.e., high sensitivity) of Euclidean distance. How ever , Mahalanobis distance has not yet been applied to recommendation with neural network designs. By ov ercoming the limitations of existing recommendation mod- els, we propose three novel deep learning approaches in this paper that utilize Mahalanobis distance. Our rst approach, Mahalanobis Distance Base d Recommender (MDR), belongs to Group 1 . Instead of modeling either P ( s | p ) or P ( s | u ) , it measures P ( s | u , p ) . T o com- bine both a user’ preference and a playlist’ theme , MDR measures and combines the Mahalanobis distance between the target user and target song, and between the target playlist and target song. Our second approach, Mahalanobis distance-based Attentive Song Similarity recommender (MASS), falls into Group 2 . Unlike the prior works, MASS uses Mahalanobis distance to measure similarities between a target song and member songs in the playlist. MASS in- corporates our pr oposed memory metric-based attention mechanism that assigns attentive weights to each distance score between the target song and each member song in order to capture dierent inuence levels. Our third approach, Mahalanobis distance based Attentive Song Recommender (MASR), combines MDR and MASS to merge their capabilities. In addition, we incorporate customize d Ad- versarial Personalized Ranking [ 13 ] into our thr ee models to further improve their r obustness. W e summarize our contributions as follows: • W e propose three deep learning approaches ( MDR , MASS , and MASR ) that fully exploit Mahalanobis distance to tackle the APC task. As a part of MASS , we propose the memory metric-based attention mechanism. • W e improv e the robustness of our models by applying adver- sarial personalized ranking and customizing it with a exible noise magnitude. • W e conduct extensive experiments on two large-scale APC datasets to show the ee ctiv eness and eciency of our ap- proaches. 2 OTHER RELA TED WORK Music recommendation literature has frequently made use of avail- able metadata such as: lyrics [ 33 ], tags [ 19 , 28 , 33 , 50 , 51 ], au- dio features [ 28 , 33 , 50 , 51 , 56 ], audio spectrograms [ 36 , 52 , 55 ], song/artist/playlist names [1, 20, 22, 37, 46], and T witter data [19]. Deep learning and hybrid approaches have made signicant progress against traditional collaborative ltering music recommenders [ 36 , 50 , 51 , 55 ]. [ 56 ] uses multi-arm bandit reinfor cement learning for interactive music recommendation by leveraging novelty and music audio content. [ 28 ] and [ 52 ] perform weighted matrix factorization 3 https://en.wikipedia.org/wiki/Mahalanobis_distance using latent features pre-trained on a CNN, with song tags and Mel-frequency cepstral coecients (MFCCs) as input, respe ctiv ely . Unlike these works, our propose d approaches do not require or incorporate side information. Recently , attention mechanisms have shown their eectiveness in various machine learning tasks including document classication [61], machine translation [3, 29], recommendation [30, 31], etc . So far , several attention mechanisms are proposed such as: general at- tention [ 29 ], dot attention [ 29 ], concat attention [ 3 , 29 ], hierar chical attention [ 61 ], scaled dot attention and multi-head attention [ 53 ], etc. . However , to our best of knowledge, most of previously pro- posed attention mechanisms leveraged dot product for measuring similarities which is not optimal in our Mahalanobis distance-based recommendation approaches b ecause of the dierence between dot product space and metric space. Therefor e, we propose a memory metric-based attention mechanism for our models’ designs. 3 PROBLEM DEFINI TION Let U = { u 1 , u 2 , u 3 , .. ., u m } denote the set of all users, P = { p 1 , p 2 , p 3 , ..., p n } denote the set of all playlists, S = { s 1 , s 2 , s 3 , ..., s v } denote the set of all songs. Bolded versions of these variables, which we will introduce in the following sections, denote their respective embeddings. m, n, v are the number of users, playlists, and songs in a dataset, respectively . Each user u i ∈ U has created a set of playlists T ( u i ) ={ p 1 , p 2 , ..., p | T ( u i ) | }, where each playlist p j ∈ T ( u i ) contains a list of songs T ( p j ) ={ s 1 , s 2 , ..., s | T ( p j ) | }. Note that T ( u 1 ) ∪ T ( u 2 ) ∪ . . . ∪ T ( u m ) = { p 1 , p 2 , p 3 , .. ., p n }, T ( p 1 ) ∪ T ( p 2 ) ∪ . . . ∪ T ( p n ) = { s 1 , s 2 , s 3 , .. ., s v }, and the song order within each playlist is often not available in the dataset. The Automatic Playlist Continuity ( APC) problem can then be dened as recommending new songs s k < T ( p j ) for each playlist p j ∈ T ( u i ) created by user u i . 4 MAHALANOBIS DIST ANCE PRELIMINARY Given two points x ∈ R d and y ∈ R d , the Mahalanobis distance between x and y is dened as: d M ( x , y ) = ∥ x − y ∥ M = q ( x − y ) T M ( x − y ) (1) where M ∈ R d × d parameterizes the Mahalanobis distance metric to be learned during model training. T o ensure that Eq. (1) produces a mathematical metric 4 , M must be symmetric positive semi-denite ( M ⪰ 0 ). This constraint on M makes the model training process more complicated, so to ease this condition, we r ewrite M = A T A ( A ∈ R d × d ) since M ⪰ 0 . The Mahalanobis distance between two points d M ( x , y ) now becomes: d M ( x , y ) = ∥ x − y ∥ A = q ( x − y ) T A T A ( x − y ) = q A ( x − y ) T A ( x − y ) = ∥ A ( x − y ) ∥ 2 = ∥ A x − A y ∥ 2 (2) where ∥ · ∥ 2 refers to the Euclidean distance . By rewriting Eq. (1) into Eq. (2), the Mahalanobis distance can now be computed by measuring the Euclidean distance b etween two linearly transformed points x → A x and y → A y . This transforme d space encourages the mo del to learn a more accurate similarity between x and y . 4 https://en.wikipedia.org/wiki/Metric_(mathematics) d M ( x , y ) is generalized to basic Euclidean distance d ( x , y ) when A is the identity matrix. If A in Eq. (2) is a diagonal matrix, the objective becomes learning metric A such that dierent dimensions are assigned dierent weights. Our experiments show that learning diagonal matrix A generalizes well and produces slightly b etter performance than if A were a full matrix. Therefore in this pap er we focus on only the diagonal case. Also note that when A is diagonal, we can rewrite Eq. (2) as: d M ( x , y ) = ∥ A ( x − y ) ∥ 2 = ∥ d i a д ( A ) ⊙ ( x − y ) ∥ 2 (3) where d ia д ( A ) ∈ R n returns the diagonal of matrix A , and ⊙ de- notes the element-wise product. Therefore, we can parameterize B = d i a д ( A ) ∈ R n and learn the Mahalanobis distance by simply computing ∥ B ⊙ ( x − y ) ∥ 2 . In our models’ calculations, we will adopt squared Mahalanobis distance, since quadratic form promotes faster learning. 5 OUR PROPOSED MODELS In this section, we delve into design elements and parameter esti- mation of our three proposed models: Mahalanobis Distance based Recommender (MDR) , Mahalanobis distance-base d Attentive Song Similarity recommender (MASS) , and the combine d model Maha- lanobis distance based Attentive Song Recommender (MASR) . 5.1 Mahalanobis Distance based Recommender (MDR) As mentioned in Section 1, MDR belongs to the Group 1 . MDR takes a target user , a target playlist, and a target song as inputs, and outputs a distance score reecting the dir ect relevance of the target song to the target user’s music taste and to the target playlist’s theme. W e will rst describ e how to measure each of the conditional probabilities – P ( s k | u i ), P ( s k | p j ), and nally P ( s k | u i , p j ) – using Mahalanobis distance. Then we will go ov er MDR ’s design. 5.1.1 Measuring P( s k | u i ). Given a target user u i , a target playlist p j , a target song s k , and the Mahalanobis distance d M ( u i , s k ) between u i and s k , P ( s k | u i ) is measured by: P ( s k | u i ) = exp (−( d 2 M ( u i , s k ) + β s k )) Í l exp (−( d 2 M ( u i , s l ) + β s l )) (4) where β s k , β s l are bias terms to capture their respective song’s overall popularity [23]. User bias is not included in Eq.(4) because it is independent of P ( s k | u i ) when varying candidate song s k . The denominator Í l exp (− d M ( u i , s l ) + β s l ) is a normalization term shared among all candidate songs. Thus, P ( s k | u i ) is measured as: P ( s k | u i ) ∝ − d 2 M ( u i , s k ) + β s k (5) Note that training with Bayesian Personalized Ranking (BPR) will only require calculating Eq. (5), since for every pair of observed song k + and unobserved song k − , we model the pairwise ranking P ( s k + | u i ) > P ( s k − | u i ) . Using Eq. (4), this inequality is satise d only if d 2 M ( u i , s k + ) + β s k + < d 2 M ( u i , s k − ) + β s k − , which leads to Eq. (5). 5.1.2 Measuring P( s k | p j ). Given a target playlist p j , a target song s k , and the Mahalanobis distance d M ( p j , s k ) between p j and s k , P( s k | p j ) is measured by: P ( s k | p j ) = exp (−( d 2 M ( p j , s k ) + γ s k )) Í l exp (−( d 2 M ( p j , s l ) + γ s l )) (6) target user target song song embedding element-wise subtraction user-song distance user embedding target playlist playlist embedding playlist-song distance — B 1 — + element-wise summation T ranspose + element-wise multiplication x x — B 2 x user-playlist-song distance o (MDR) Embedding layer Mahalanobis Distance Module T T T Figure 2: Architecture of our MDR. where γ s k and γ s l are song bias terms. Similar to P ( s k | u i ) , we shortly measure P ( s k | p j ) by: P ( s k | p j ) ∝ −( d 2 M ( p j , s k ) + γ s k ) (7) 5.1.3 Measuring P( s k | u i , p j ). P ( s k | u i , p j ) is computed using the Bayesian rule under the assumption that u i and p j are conditionally independent given s k : P ( s k | u i , p j ) ∝ P ( u i | s k ) P ( p j | s k ) P ( s k ) = P ( s k | u i ) P ( u i ) P ( s k ) P ( s k | p j ) P ( p j ) P ( s k ) P ( s k ) ∝ P ( s k | u i ) P ( s k | p j ) 1 P ( s k ) (8) In Eq. (8), P ( s k ) represents the popularity of target song s k among the song pool. For simplicity in this paper , we assume that selecting a random candidate song follows a uniform distribution instead of modeling this p opularity information. P ( s k | u i , p j ) then becomes proportional to: P ( s k | u i , p j ) ∝ P ( s k | u i ) P ( s k | p j ) . Using Eq. (4, 6), we can approximate P ( s k | u i , p j ) as follows: P ( s k | u i , p j ) ∝ exp − ( d 2 M ( u i , s k ) + β s k ) Í l exp − ( d 2 M ( u i , s l ) + β s l ) × exp − ( d 2 M ( p j , s k ) + γ s k ) Í l exp − ( d 2 M ( p j , s l ) + γ s l ) = exp − ( d 2 M ( u i , s k ) + β s k ) − ( d 2 M ( p j , s k ) + γ s k ) Í l exp − ( d 2 M ( u i , s l ) + β s l ) Í l ′ exp − ( d 2 M ( p j , s l ′ ) + γ s l ′ ) (9) Since the denominator of Eq. (9) is shared by all candidate songs (i.e., normalization term), we can shortly measure P ( s k | u i , p j ) by: P ( s k | u i , p j ) ∝ − d 2 M ( u i , s k ) + d 2 M ( p j , s k ) − β s k + γ s k = − d 2 M ( u i , s k ) + d 2 M ( p j , s k ) + θ s k (10) With P ( s k | u i , p j ) now established in Eq. (10), we can move on to our MDR model. 5.1.4 MDR Design. The MDR architecture is depicted in Figure 2. It has an Input, Embedding Layer , and Mahalanobis Distance Module. Input: MDR takes a target user u i (user ID), a target playlist p j (playlist ID), and a target song s k (song ID) as input. Embedding Layer: MDR maintains three embedding matrices of users, playlists, and songs. By passing user u i , playlist p j , and song s k through the embedding layer , we obtain their respe ctiv e em- bedding vectors u i ∈ R d , p j ∈ R d , and s k ∈ R d , where d is the embedding size. Mahalanobis Distance Module : As depicted in Figure 2, this mod- ule outputs a distance score o ( M D R ) that indicates the relevance of candidate song s k to both user u i ’s music preference and playlist p j ’s theme. Intuitively , the lower the distance score is, the more relevant the song is. o ( M D R ) ( u i , p j , s k ) is computed as follows: . . . song embedding matrix S user embedding matrix U . . . . . . ∑ song embedding matrix S (a) user embedding matrix U (a) . . . . . . input Embedding layer Processing layer Output Memory metric-based Attention Distance scores Distance scores Attentive scores Target user Target song Target song Target user \ member songs member songs . . . . . . q ik q ik (a) o (MASS) softmin Target playlist Target playlist Figure 3: Architecture of our MASS. o ( M D R ) = o ( u i , s k ) + o ( p j , s k ) + θ s k (11) where θ s k is song s k ’s bias, and o ( u i , s k ) , o ( p j , s k ) are quadratic Mahalanobis distance scores between user u i and song s k , and be- tween playlist p j and song s k , shown in the following two equations. B 1 ∈ R d and B 2 ∈ R d are two metric learning vectors. And, o ( u i , s k ) = B 1 ⊙ ( u i − s k ) T B 1 ⊙ ( u i − s k ) o ( p j , s k ) = B 2 ⊙ ( p j − s k ) T B 2 ⊙ ( p j − s k ) 5.2 Mahalanobis distance-based Attentive Song Similarity recommender (MASS) As stated in the Section 1, MASS b elongs to Group 2 , where it measures attentive similarities between the target song and member songs in the target playlist. An overview of MASS ’s architecture is depicted in Figure 3. MASS has ve components: Input, Embedding Layer , Processing Layer , Attention Layer , and Output. 5.2.1 Input: The inputs to our MASS mo del include a target user u i , a candidate song s k for a target playlist p j , and a list of l member songs within the playlist, where l is the number of songs in the largest playlist (i.e., containing the largest number of songs) in the dataset. If a playlist contains less than l songs, we pad the list with zeroes until it reaches length l . 5.2.2 Embedding Layer: This layer holds two embedding matrices: a user embedding matrix U ∈ R m × d and a song embe dding matrix S ∈ R v × d . By passing the input target user u i and target song s k through these two respective matrices, we obtain their embedding vectors u i ∈ R d and s k ∈ R d . Similarly , we acquire the embedding vectors for all l member songs in p j , denoted by s 1 , s 2 , .. ., s l . 5.2.3 Processing Layer: W e rst ne ed to consolidate u i and s k . Following widely adopted deep multimodal network designs [ 43 ], we concatenate the tw o embeddings, and then transform them into a new vector q i k ∈ R d via a fully connected layer with weight matrix W 1 ∈ R 2 d × d , bias term b ∈ R , and activation function ReLU . W e formulate this process as follows: q i k = ReLU W 1 u i s k + b 1 (12) Note that q i k can be interpreted as a search query in QA systems [ 2 , 60 ]. Since we combined the target user u i with the query song s k (to add to the user’s target playlist), the search quer y q i k is personalized. The ReLU activation function mo dels a non-linear combination between these two target entities, and was chosen over sigmoid or tanh due to its encouragement of sparse activations and proven non-saturation [10], which helps pr event overtting. Next, given the embedding vectors s 1 , s 2 , .. ., s l of the l member songs in target playlist p j , we appro ximate the conditional proba- bility P ( s k | u i , s 1 , s 2 , .. ., s l ) by: P ( s k | u i , s 1 , s 2 , .. ., s l ) ∝ − l Õ t = 1 α i k t d 2 M ( q i k , s t ) + b s k (13) where d M (·) returns the Mahalanobis distance between two vectors, b s k is the song bias reecting its overall popularity , and α i k t is the attention score to weight the contribution of the partial distance between search query q i k and member song s t . W e will show how to calculate d 2 M ( q i k , s t ) below , and α i k t in Attention Layer at 5.2.4. As indicated in Eq. (3), we parameterize B 3 ∈ R d , which will be learne d during the training phase. The Mahalanobis distance between the search query q i k and each member song s t , treating B 3 as an edge-weight vector , is measured by: d 2 M ( q i k , s t ) = e T i k t e i k t 2 2 where e i k t = B 3 ⊙ ( q i k − s t ) (14) Calculating Eq. (14) for every memb er song s t yields the follow- ing l -dimensional vector: d 2 M ( q i k , s 1 ) d 2 M ( q i k , s 2 ) . . . d 2 M ( q i k , s l ) = e T i k 1 e i k 1 2 2 e T i k 2 e i k 2 2 2 . . . e T i k l e i k l 2 2 (15) Note that B 3 is shared across all Mahalanobis measur ement pairs. Now we go into detail of how to calculate the attention weights α i k t using our proposed Attention Layer . 5.2.4 Aention Layer: With l distance scores obtained in Eq. (15), we need to combine them into one distance value to reect how relevant the target song is w .r .t the target playlist’s memb er songs. The simplest approach is to follow the well-known item similarity design [ 21 , 35 ] where the same weights are assigne d for all l dis- tance scores. This is sub-optimal in our domain because dierent member song can relate to the target song dierently . For example, given a country playlist and a target song of the same genre, the member songs that share the same artist with the target song would be more similar to the target song than the other memb er songs in the playlist. T o address this concern, w e propose a novel mem- ory metric-based attention me chanism to properly allo cate dier ent attentive scores to the distance values in Eq. (15). Compared to existing attention mechanisms, our attention mechanism maintains its own emb edding memory of users and songs (i.e., memory-based property), which can function as an external memory . It also com- putes attentive scores using Mahalanobis distance (i.e., metric-based property) instead of traditional dot product. Note that the memory- based property is also commonly applied to question-answering in NLP, wher e memory networks have utilized external memory [ 44 ] for better memorization of context information [ 25 , 34 ]. Our attention mechanism has one external memor y containing user and song embedding matrices. When the user and song embedding matrices of our attention mechanism are identical to those in the embedding layer , it is the same as looking up the embedding vectors of target users, target songs, and member songs in the emb edding layer (Section 5.2.2). Therefore , using external memory will make room for more exibility in our models. The attention layer features an external user embedding matrix U ( a ) ∈ R m × d and external song emb edding matrix S ( a ) ∈ R v × d . Given the following inputs – a target user u i , a target song s k , and all l member songs in playlist p j – by passing them through the corresponding embedding matrices, we obtain the embedding vectors of u i , s k , and all the memb er songs, denoted as u ( a ) i , s ( a ) k , and s ( a ) 1 , s ( a ) 2 , .. ., s ( a ) l , respectively . W e then forge a personalize d sear ch quer y q ( a ) i k by combining u ( a ) i and s ( a ) k in a multimodal design as follows: q ( a ) i k = ReLU W 2 " u ( a ) i s ( a ) k # + b 2 (16) where W 2 ∈ R 2 d × d is a weight matrix and b 2 is a bias term. Next, we measure the Mahalanobis distance (with an edge weight vector B 4 ∈ R d ) from q ( a ) i k to a member song’s embedding vector s ( a ) t where t ∈ 1 , l : d 2 M ( q ( a ) i k , s ( a ) t ) = e ( a ) i k t T e ( a ) i k t 2 2 where e ( a ) i k t = B 4 ⊙ q ( a ) i k − s ( a ) t (17) Using Eq. (17), we generate l distance scores between each of l member songs and the candidate song. Then we apply softmin on l distance scores in order to obtain the memb er songs’ attentive scores 5 . Intuitively , the lower the distance between a search query and a member song vector , the higher its contribution level is w .r .t the candidate song. α i k t = exp − e ( a ) i k t T e ( a ) i k t 2 2 Í l t ′ = 1 exp − e ( a ) i k t ′ T e ( a ) i k t ′ 2 2 (18) 5.2.5 Output: W e output the total attentive distances o ( M AS S ) from the target song s k to target playlist p j ’s existing songs by: o ( MASS ) = − l Õ t = 1 α i k t d 2 M ( q i k , s t ) + b s k (19) where α i k t is the attentive score from Eq. (18), d M ( q i k , s t ) is the personalized Mahalanobis distance between target song s k and a member song s t in user u i ’s playlist (Eq. (15)), b s k is the song bias. 5.3 Mahalanobis distance based Attentive Song Recommender (MASR = MDR + MASS) W e enhance our performance on the APC problem by combining our MDR and MASS into a Mahalanobis distance based Attentive Song Recommender (MASR) mo del. MASR outputs a cumulative distance score from the outputs of MDR and MASS as follows: o ( MASR ) = α o ( MDR ) + ( 1 − α ) o ( MASS ) (20) where o ( MDR ) is from Eq. (11), o ( MASS ) is from Eq. (19), and α ∈ [ 0 , 1 ] is a hyperparameter to adjust the contribution lev els of MDR and MASS . α can b e tuned using a development dataset. However , in the following experiments, we set α = 0 . 5 to r eceive equal contribution from MDR and MASS . W e pretrain MDR and MASS rst, then x MDR and MASS ’s parameters in MASR . There are two benets of this design. First, if MASR is learnable with pretrained MDR and 5 Note that attentive scores of padded items are 0. MASS initialization, MASR would have too high a computational cost to train. Se cond, by making MASR non-learnable, MDR and MASS in MASR can be traine d separately and in parallel, which is more practical and ecient for real-world systems. 5.4 Parameter Estimation 5.4.1 Learning with Bayesian Personalized Ranking (BPR) loss. W e apply BPR loss as an obje ctiv e function to train our MDR, MASS, MASR as follows: L (D | Θ ) = argmin Θ − Í ( i , j , k + , k − ) log σ ( o i j k − − o i j k + ) + λ Θ ∥ Θ ∥ 2 (21) where ( i , j , k + , k − ) is a quartet of a target user , a target playlist, a positive song, and a negativ e song which is randomly sampled. σ (·) is the sigmoid function; D denotes all training instances; Θ are the model’s parameters (for instance , Θ = { U , S , U ( a ) , S ( a ) , W 1 , W 2 , B 3 , B 4 , b } in the MASS model); λ Θ is a regularization hyper-parameter; and o ijk is the output of either MDR , MASS , or MASR , which is measured in Eq. (11), (19), and (20), respectively . 5.4.2 Learning with Adversarial Personalized Ranking (APR) loss. It has been shown in [ 13 ] that BPR loss is vulnerable to adversarial noise, and APR was proposed to enhance the robustness of a simple matrix factorization model. In this work, we apply APR to further improve the robustness of our MDR , MASS , and MASR . W e name our MDR , MASS , and MASR trained with APR loss as AMDR , AMASS , AMASR by adding an “adversarial ( A)” term, respectively . Denote δ as adversarial noise on the model’s parameters Θ . The BPR loss from adding adversarial noise δ to Θ is dened by: L (D | ˆ Θ + δ ) = argmax Θ = ˆ Θ + δ − Õ ( i , j , k + , k − ) log σ ( o i j k − − o i j k + ) (22) where ˆ Θ is optimized in Eq. (21) and xed as constants in Eq. (22). Then, training with APR aims to play a minimax game as follows: arg min Θ max δ , ∥ δ ∥ ≤ ϵ s ( ˆ Θ ) L (D | Θ ) + λ δ L (D | ˆ Θ + δ ) (23) where ϵ is a hyper-parameter to contr ol the magnitude of perturba- tions δ . In [ 13 ], the authors xed ϵ for all the model’s parameters, which is not ideal b ecause dierent parameters can endure dierent levels of perturbation. If we add too large adversarial noise, the model’s performance will downgrade, while adding too small noise does not guarantee more robust models. Hence , we multiply ϵ with the standar d deviation s ( ˆ Θ ) of the targeting parameter ˆ Θ to pr ovide a more exible noise magnitude. For instance , the adversarial noise magnitude on parameter B 3 in AMASS model is ϵ × s ( B 3 ) . If the val- ues in B 3 are widely dispersed, they are more vulnerable to attack, so the adversarial noise applied during training must be higher in order to improve r obustness. Whereas if the values are centralized, they are already robust, so only a small noise magnitude is neede d. Learning with APR follows 4 steps: Step 1 : unlike [ 13 ] where parameters are saved at the last training epo ch, which can be over- tted parameter values (e.g. some thousands of epoches for matrix factorization in [ 13 ]), we rst learn our models’ parameters by min- imizing Eq. (21) and save the best checkpoint base d on e valuating on a development dataset. Step 2: with optimal ˆ Θ learned in Step 1 , in Eq. (22), we set Θ = ˆ Θ and x Θ to learn δ . Step 3: with optimal ˆ δ learned in Eq. (22), in Eq. (23) we set δ = ˆ δ and x δ to learn new values for Θ . Step 4 : W e repeat Step 2 and Step 3 until a maximum T able 1: Statistics of datasets. Statistics 30Music AO TM # of users 12,336 15,835 # of playlists 32,140 99,903 # of songs 276,142 504,283 # of interactions 666,788 1,966,795 avg. # of playlists per user 2.6 6.3 avg. & max # of songs per playlist 18.75 & 63 17.69 & 58 Density 0.008% 0.004% number of epochs is r eached and sav e the b est checkp oint based on evaluation on a development dataset. Following [ 13 , 26 ], the up date rule for δ is obtained by using the fast gradient metho d as follo ws: δ = ϵ × s ( ˆ Θ ) × ` δ (L ( D | ˆ Θ + δ )) ` δ (L ( D | ˆ Θ + δ )) 2 (24) Note that update rules of parameters in Θ can be easily obtained by computing the partial derivative w .r .t each parameter in Θ . 5.5 Time Complexity Let Ω denote the total number of training instances (= Í j N ( p j ) where N ( p j ) refers to the number of songs in training playlist p j ). ω = ma x ( N ( p j )) , ∀ j = 1 , n denotes the maximum numb er of songs in all playlists. For each forward pass, MDR takes O ( d ) to measure o ( M D R ) (in Eq. (11)) for a positive training instance, and another forward pass with O ( d ) to calculate o ( M D R ) for a negative instance. The backpropagation for updating parameters take the same complexity . Therefore, the time comple xity of MDR is O ( Ω d ) . Similarly , for each p ositiv e training instance, MASS takes (i) O ( 2 d 2 ) to make each query in Eq. (12) and Eq. (16); (ii) O ( ω d ) to calculate ω distance scores from ω member songs to the target song in Eq. (15); and (iii) O ( ω d ) to measure attention scores in Eq. (18). Since embedding size d is often small, O ( ω d ) is a dominant term and MASS ’s time complexity is O ( Ω ω d ) . Hence, both MDR and MASS scale linearly to the numb er of training instances and can run very fast, especially with sparse datasets. When training with APR , updating δ in Eq. (24) with xe d ˆ Θ needs one forward and one backward pass. Learning Θ in Eq. (23) requires one forward pass to measure L (D | Θ ) in Eq. (21), one forward pass to measure L (D | ˆ Θ + δ ) in Eq. (22), and one backward pass to up date Θ in Eq. (23). Hence, time complexity when training with APR is h times higher ( h is small) compared to training with BPR loss. 6 EMPIRICAL ST UD Y 6.1 Datasets T o evaluate our proposed models and existing baselines, we used two publicly accessible real-world datasets that contain user , playlist, and song information. They are described as follows: • 30Music [ 49 ]: This is a collection of playlists data retrieved from Internet radio stations through Last.fm 6 . It consists of 57K playlists and 466K songs from 15K users. • A OTM [ 32 ]: This dataset was collected from the Art of the Mix 7 playlist database. It consists of 101K playlists and 504K songs from 16K users, spanning from Jan 1998 to June 2011. 6 https://www.last.fm 7 http://www.artofthemix.org/ For data preprocessing, we removed duplicate songs in playlists. Then we adopted a widely used k-core prepr ocessing step [ 12 , 47 ] (with k-core = 5), ltering out playlists with less than 5 songs. W e also removed users with an extremely large numb er of playlists, and extremely large playlists (i.e ., containing thousands of songs). Since the datasets did not have song order information for playlists (i.e., which song was added to a playlist rst, then next, and so on), we randomly shued the song order of each playlist and used it in the sequential recommendation baseline models to compare with our models. The two datasets are implicit feedback datasets. The statistics of the preprocessed datasets are presented in T able 1. 6.2 Baselines W e compared our propose d models with eight strong state-of-the- art models in the APC task. The baselines were trained by using BPR loss for a fair comparison: • Bayesian Personalize d Ranking (MF-BPR) [ 40 ]: It is a pair- wise matrix factorization method for implicit feedback datasets. • Collaborative Metric Learning (CML) [ 17 ]: It is a collabo- rative metric-based method. It adopted Euclidean distance to measure a user’s preference on items. • Neural Collab orativ e Filtering (NeuMF++) [ 14 ]: It is a neu- ral network based method that models non-linear user-item interactions. W e pretrained two components of NeuMF to ob- tain its best performance (i.e., NeuMF++). • Factored Item Similarity Methods (FISM) [ 21 ]: It is a item neighborhood-base d method. It ranks a candidate song based on its similarity with member songs using dot product. • Collaborative Memory Network (CMN++) [ 7 ]: It is a user- neighborhood base d model using a memory network to assign attentive scores for similar users. • Personalized Ranking Metric Embedding (PRME) [ 8 ]: It is a sequential recommender that models a personalized rst- order Markov behavior using Euclidean distance. • Translation-based Recommendation (Transrec) [ 11 ]: It is one of the best se quential recommendation methods. It models the third order between the user , the previous song, and the next song where the user acts as a translator . • Convolutional Sequence Embedding Recommendation (Caser) [ 45 ]: It is a CNN based sequential recommendation. It embeds a se quence of recent songs into an “image ” in time and latent spaces, then learns sequential patterns as local features of the image using dierent horizontal and vertical lters. W e did not compare our models with baselines that performed worse than above listed baselines like item-KNN [ 42 ], SLIM [ 35 ], etc. MF-BPR, CML, and NeuMF++ used only user/playlist-song inter- action data to model either users’ preferences over songs P ( s | u ) or playlists’ tastes over songs P ( s | p ). W e ran the baselines b oth ways, and report the best results. Tw o neighborho od-based baselines utilized neighbor users/playlists (i.e., CMN++) or member songs (i.e., FISM) to recommend the next song based on user/playlist sim- ilarities or song similarities (i.e., measure P ( s | u , s 1 , s 2 , .. ., s l ) and P ( s | p , s 1 , s 2 , .. ., s l ), of which we report the best results). T able 2: Performance of the baselines, and our models. The last two lines show the relativ e improvement of MASR and AMASR compar ed to the best baseline. Methods 30Music AO TM hit@10 ndcg@10 hit@10 ndcg@10 (a) MF-BPR 0.450 0.315 0.699 0.473 (b) CML 0.600 0.452 0.735 0.481 (c) NeuMF++ 0.623 0.461 0.741 0.498 (d) FISM 0.544 0.346 0.686 0.446 (e) CMN++ 0.536 0.397 0.722 0.505 (f ) PRME 0.426 0.260 0.570 0.354 (g) Transrec 0.570 0.417 0.710 0.450 (h) Caser 0.458 0.289 0.681 0.448 Ours MDR 0.705 0.524 0.820 0.631 MASS 0.670 0.500 0.834 0.639 MASR 0.731 0.564 0.854 0.654 AMDR 0.764 0.581 0.850 0.658 AMASS 0.753 0.581 0.856 0.659 AMASR 0.785 0.604 0.874 0.677 Imprv . (%) MASR +17.34 +22.34 +13.36 +28.24 AMASR +26.00 +31.02 +17.95 +34.19 6.3 Experimental Settings Protocol: W e use the widely adopted leave-one-out evaluation set- ting [ 14 ]. Since both the 30Music and AO TM datasets do not contain timestamps of added songs for each playlist, we randomly sample two songs per playlist–one for a positive test sample, and one for a development set to tune hyp er-parameters–while the remaining songs in each playlist make up the training set. W e follow [ 14 , 48 ] and uniformly random sample 100 non-member songs as negative songs, and rank the test song against those negative songs. Evaluation metrics: W e evaluate the performance of the mo dels with two widely used metrics: Hit Ratio ( hit@N ), and Normalized Discounted Cumulative Gain ( NDCG@N ). The hit@N measures whether the test item is in the recommended list or not, while the NDCG@N takes into account the p osition of the hit and assigns higher scores to hits at top-rank positions. For the test set, we measure both metrics and report the average scores. Hyper-parameters settings: Models are trained with the A dam optimizer with learning rates from {0.001, 0.0001}, regularization terms λ Θ from {0, 0.1, 0.01, 0.001, 0.0001}, and embedding sizes from {8, 16, 32, 64}. The maximum number of epochs is 50, and the batch size is 256. The number of hops in CMN++ are selected from {1, 2, 3, 4}. In NeuMF++ , the number of MLP layers are selected from {1, 2, 3}. The number of negative samples per one positive instance is 4, similar to [ 14 ]. The Markov or der L in Caser is selected from {4, 5, 6, 7, 8, 9, 10}. For APR training, the numb er of APR training epo chs is 50, the noise magnitude ϵ is selected from {0.5, 1.0}, and the adversarial regularization λ δ is set to 1, as suggested in [ 13 ]. Adversarial noise is adde d only in training process, and are initialized as zero . All hyper-parameters are tuned by using the development set. Our source code is available at https://github.com/thanhdtran/MASR.git . 6.4 Experimental Results 6.4.1 Performance comparison. T able 2 shows the performance of our proposed models and baselines on each dataset. MDR and base- lines (a)-( c) are in Group 1 , but MDR shows much better performance T able 3: Performance of variants of our MDR and MASS. RI indicates relative average improvement over the corre- sponding method. Methods 30Music AO TM RI(%) hit@10 ndcg@10 hit@10 ndcg@10 MDR_us 0.684 0.500 0.815 0.594 +3.68 MDR_ps 0.654 0.476 0.746 0.547 +10.79 MDR_ups (i.e., MDR) 0.705 0.524 0.818 0.613 MASS_ups 0.651 0.479 0.789 0.581 +4.12 MASS_ps 0.621 0.450 0.764 0.523 +10.82 MASS_us (i.e., MASS) 0.670 0.500 0.820 0.631 compared to the (a)-(c) baselines, improving at least 11.14% hit@10 and 18.81% NDCG@10 on average. CML simply adopts Euclidean distance between users/playlists and p ositiv e songs, but has nearly equal performance with NeuMF++, which utilizes a neural network to learn non-linear relationships b etween users/playlists and songs. This result shows the eectiveness of using metric learning over dot product in recommendation. MDR outp erforms CML by 19.04% on average. This conrms the ee ctiv eness of Mahalanobis distance over Euclidian distance for recommendation. MASS outperforms both FISM and CMN++ , improving hit@10 by 18.4%, and NDCG@10 by 25.5% on av erage. This is b ecause FISM does not consider the attentive contribution of dierent neigh- bors. Even though CMN++ can assign attention scores for dierent user/playlist neighbors, it bears the aws of Group 1 by considering only either neighbor users or neighbor playlists. More importantly , MASS uses a novel attentive metric design, while dot product is utilized in FISM and CMN++ . Se quential mo dels, (f )-( h) baselines, do not work well. In particular , MASS outperforms the (f )-(h) baselines, improving 24.6% on average compared to the best model in (f )-( h). MASR outperforms b oth MDR and MASS , indicating the eective- ness of fusing them into one model. Particularly , MASR improves MDR by 5.0%, and MASS by 6.7% on average. Performances of MDR, MASS, MASR are booste d when adopting APR loss with a exible noise magnitude. AMDR improves MDR by 7.7%, AMASS improves MASS by 9.4%, and AMASR improves MASR by 5.8%. W e also com- pare our exible noise magnitude with a xed noise magnitude used in [ 13 ] by varying the xed noise magnitude in {0.5, 1.0} and setting λ δ = 1 . W e observe that APR with a exible noise magnitude performs better with an average improvement of 7.53%. Next, we build variants of our MDR and MASS models by re- moving either playlist or user embeddings, or using both of them. T able 3 presents an ablation study of exploiting playlist embed- dings. MDR_us is the MDR that uses only user-song interactions (i.e., ignore playlist-song distance o ( p j , s k ) in Eq. (11)). MDR_ps is the MDR that uses only playlist-song interactions (i.e., ignores user- song distance o ( u i , s k ) in Eq. (11)). MDR_ups is our proposed MDR model. Similarly , MASS_ups is the MASS model but considers both user-song distances and playlist-song distances in its design. The Embedding Layer and Attention Layer of MASS_ups have additional playlist emb edding matrices P ∈ R n × d and P ( a ) ∈ R n × d , respec- tively . MASS_ps is the MASS model that replaces user embeddings with playlist embeddings. MASS_us is our proposed MASS model. MDR (i.e., MDR_ups ) outperforms its derived forms ( MDR_us and MDR_ps ), improving by 3.7 ∼ 10.8% on average. This r esult shows N (a) 30Music. N (b) AOTM. Figure 4: Performance of our models and the baselines when varying N (or top-N recommendation list) from [1, 10]. Figure 5: Performance of all models when varying the em- bedding size d from {8, 16, 32, 64} in 30Music dataset. the eectiveness of modeling b oth users’ preferences and playlists’ themes in MDR design. MASS (i.e., MASS_us ) outperforms its two variants ( MASS_ups and MASS_ps ), improving MASS_ups by 3.7%, and MASS_ps by 10.8% on average. It makes sense that using ad- ditional playlist embeddings in MASS_ups is redundant since the member songs have already conveyed the playlist’s theme, and ignoring user embeddings in MASS_ps neglects user preferences. 6.4.2 V ar ying top-N recommendation list and embedding size. Fig- ure 4 shows performances of all models when var ying top-N recom- mendation from 1 to 10. W e see that all models gain higher results when increasing top-N , and all our proposed models outperform all baselines across all top-N values. On average, MASR improves 26.3%, and AMASR improves 33.9% o ver the best baseline’s performance. Figure 5 8 shows all models’ performances when var ying the embedding size d from {8, 16, 32, 64} for the 30Music dataset. Note that the AO TM dataset also shows similar results but is omitted due to the space limitations. W e observe that most mo dels tend to have increased p erformance when increasing embedding size. AMDR does not improve MDR when d = 8 but does so when increasing d . This phenomenon was also reported in [ 13 ] because when d = 8 , MDR is too simple and has a small numb er of parameters, which is far from overtting the data and not very vulnerable to adversarial noise. However , for more complicated models like MASS and MASR , even with a small embedding size d = 8 , APR shows its eectiveness in making the models more robust, and leads to an improv ement of AMASS by 12.0% over MASS , and an improvement of AMASR by 7.5% over MASR . The improvements of AMDR, AMASS, AMASR over their corr esponding base models are higher for larger d due to the increase of model complexity . 8 Figure 5 shares the same legend with Figure 4 for saving space. T able 4: Performance of MASS using various attention mech- anisms. Attention T ypes 30Music AO TM RI(%) hit@10 ndcg@10 hit@10 ndcg@10 non-mem + dot 0.630 0.454 0.785 0.574 +8.51 non-mem + metric 0.660 0.490 0.803 0.601 +3.43 mem + dot 0.659 0.475 0.791 0.585 +5.40 mem + metric 0.670 0.500 0.834 0.639 (a) ρ =0.153 (b) ρ =0.215 (c) ρ =0.171 (d) ρ =0.254 Figure 6: Scatter plots of PMI attention scores vs. attention weights learne d by various attention mechanisms, showing corresponding Pearson correlation score ρ ). (a)non-mem + dot, (b)non-mem + metric, (c)mem + dot, (d)mem + metric. 6.4.3 Is our memory metric-based aention helpful? T o answer this question, we evaluate how MASS ’s performance change d when varying its attention mechanism as follows: • non-memory + dot product ( non-mem + dot ): It is the popular dot attention introduced in [29]. • non-memory + metric ( non-mem + metric ): It is our propose d attention with Mahalanobis distance but no external memory . • memory + dot product ( mem + dot ): It is the dot attention but exploiting external memory . • memory + metric ( mem + metric ): It is our pr oposed attention mechanism. W e do not compare with the no-attention case because literature has already proved the eectiveness of the attention mechanism [ 53 ]. T able 4 shows the performance of MASS under the variations of our proposed attention mechanism. W e have some key observa- tions. First, non-mem + metric attention outperforms non-mem + dot attention with an impr ovement of 4.9% on average. Similarly , mem + metric attention improves the mem + dot attention design by 5.4% on av erage. This enhancement comes from dierent nature of metric space and dot product space. Moreover , these results con- rm that metric-based attention designs t better into our proposed Mahalanobis distance based model. Second, memory based atten- tion works better than non-mem attention. Particularly , on average, mem + dot improves non-mem + dot by 2.98%, and mem + metric improves non-mem + metric by 3.43%. Overall, the performance order is mem + metric > non-mem + metric > mem + dot > non-mem + dot , which conrms that our proposed attention p erforms the best and improves 3.43 ∼ 8.51% compared to its variations. 6.4.4 Deep analysis on aention. T o further understand how at- tention mechanisms work, we connect attentive scor es generated by attention mechanisms with Pointwise Mutual Information scores. Given a target song k and a member song t , the PMI score between them is dened as: P M I ( k , t ) = lo д P ( k , t ) P ( k )× P ( t ) . Here, PMI ( k,t) scor e indicates how likely two songs k and t co-occur together , or how likely a target song k will be added into song t ’s playlist. Figure 7: Runtime of all models in 30Music and AO TM. Given a playlist that has a set of l member songs, we measure PMI scores between the target song k and each of l member songs. Then, we apply so f t max to those PMI scores to obtain PMI atten- tive scores . Intuitively , the member song t that has a higher PMI score with candidate song k (i.e., co-occurs more with song k ) will have a higher PMI attentive score . W e draw scatter plots between PMI attentive scores and attentive scores generated by our propose d attention mechanism and its variations. Figure 6 shows the experi- mental results. W e obser ve that the Pearson correlation ρ between the PMI attentive scores and the attentiv e scores generated by our attention mechanism is the highest (0.254). This result shows that our proposed attention tends to give higher scores to co-occurred songs, which is what we desire. The Pearson correlation results ar e also consistent with what was reported in T able 4. 6.4.5 Runtime comparison. T o compare model runtimes, we use d a Nvidia GeForce GTX 1080 Ti with a batch size of 256 and embedding size of 64. W e do not report MASR and AMASR ’s runtimes because their components are pretrained and xed (i.e., there is no learning process/time). Figure 7 shows the runtimes (seconds per epoch) of our models and the baselines for each dataset. MDR only took 39 and 173 seconds per epoch in 30Music and A OTM , respectively , while MASS took 88 and 375 se conds. MDR , one of the fastest models, was also competitive with CML and MF-BPR . 7 CONCLUSION In this work, w e pr oposed three novel recommendation approaches based on Mahalanobis distance. Our MDR mo del use d Mahalanobis distance to account for both users’ preferences and playlists’ themes over songs. Our MASS model measured attentive similarities be- tween a candidate song and member songs in a target playlist through our proposed memory metric-based attention me chanism. Our MASR model combine d the capabilities of MDR and MASR . W e also adopted and customize d Adversarial Personalized Rank- ing (APR) loss with proposed exible noise magnitude to further enhance the robustness of our three models. Through extensive ex- periments against eight baselines in tw o r eal-world large-scale APC datasets, we show ed that our MASR improved 20.3%, and AMASR using APR loss improv ed 27.3% on average over the best baseline. Our runtime experiments also showed that our models were not only competitive, but fast as well. A CKNO WLEDGMEN T This work was supporte d in part by NSF grant CNS-1755536, Google Faculty Research A ward, Microsoft Azure Research A ward, A WS Cloud Credits for Research, and Google Cloud. Any opinions, nd- ings and conclusions or recommendations expressed in this material are the author(s) and do not necessarily reect those of the sp onsors. REFERENCES [1] Natalie Aizenberg, Y ehuda Kor en, and Oren Somekh. 2012. Build your own music recommender by modeling internet radio streams. In WW W . 1–10. [2] Stanislaw Antol, Aishwarya Agrawal, Jiasen Lu, Margaret Mitchell, Dhruv Batra, C Lawrence Zitnick, and Devi Parikh. 2015. V qa: Visual question answering. In ICCV . 2425–2433. [3] Dzmitry Bahdanau, K yunghyun Cho, and Y oshua Bengio. 2014. Neural ma- chine translation by jointly learning to align and translate. arXiv preprint arXiv:1409.0473 (2014). [4] Shuo Chen, Josh L Moore, Douglas Turnbull, and Thorsten Joachims. 2012. Playlist prediction via metric embedding. In SIGKDD . 714–722. [5] Robin Devooght, Nicolas Kourtellis, and Amin Mantrach. 2015. Dynamic matrix factorization with priors on unknown values. In SIGKDD . 189–198. [6] Tim Donkers, Benedikt Loepp, and Jürgen Ziegler . 2017. Se quential user-based recurrent neural network recommendations. In RecSys . 152–160. [7] Travis Ebesu, Bin Shen, and Yi Fang. 2018. Collaborative Memory Network for Recommendation Systems. arXiv preprint arXiv:1804.10862 (2018). [8] Shanshan Feng, Xutao Li, Yifeng Zeng, Gao Cong, Y eow Meng Chee, and Quan Y uan. 2015. Personalized Ranking Metric Embedding for Next New POI Recom- mendation.. In IJCAI . 2069–2075. [9] Arthur F lexer , Dominik Schnitzer, Martin Gasser, and Gerhard Widmer. 2008. Playlist Generation using Start and End Songs.. In ISMIR , V ol. 8. 173–178. [10] Xavier Glorot, Antoine Bor des, and Y oshua Bengio. 2011. Deep sparse rectier neural networks. In AIST ATS . 315–323. [11] Ruining He, W ang-Cheng Kang, and Julian McA uley . 2017. Translation-based recommendation. In RecSys . 161–169. [12] Ruining He and Julian McAule y . 2016. Ups and downs: Mo deling the visual evolution of fashion trends with one-class collaborative ltering. In W WW . 507– 517. [13] Xiangnan He, Zhankui He, Xiaoyu Du, and Tat-Seng Chua. 2018. Adversarial personalized ranking for recommendation. In SIGIR . 355–364. [14] Xiangnan He, Lizi Liao, Hanwang Zhang, Liqiang Nie, Xia Hu, and T at-Seng Chua. 2017. Neural collaborative ltering. In W WW . 173–182. [15] Xiangnan He, Hanwang Zhang, Min-Y en Kan, and Tat-Seng Chua. 2016. Fast matrix factorization for online recommendation with implicit fee dback. In SIGIR . 549–558. [16] Balázs Hidasi and Alexandros Karatzoglou. 2018. Re curr ent neural networks with top-k gains for session-based recommendations. In CIKM . 843–852. [17] Cheng-Kang Hsieh, Longqi Y ang, Yin Cui, T sung-Yi Lin, Serge Belongie, and Deborah Estrin. 2017. Collaborative metric learning. In W WW . 193–201. [18] Yifan Hu, Y ehuda Koren, and Chris V olinsky . 2008. Collaborative ltering for implicit feedback datasets. In ICDM . 263–272. [19] Dietmar Jannach, Iman Kamehkhosh, and Lukas Lerche. 2017. Leveraging multi- dimensional user models for personalized next-track music recommendation. In SIGAPP . 1635–1642. [20] How Jing and Alexander J Smola. 2017. Neural survival recommender . In WSDM . 515–524. [21] Santosh Kabbur , Xia Ning, and George Karypis. 2013. Fism: factored item simi- larity models for top-n recommender systems. In SIGKDD . 659–667. [22] Iman Kamehkhosh and Dietmar Jannach. 2017. User perception of next-track music recommendations. In UMAP . 113–121. [23] Y ehuda Koren. 2009. Collaborative ltering with temp oral dynamics. In SIGKDD . 447–456. [24] Y ehuda Koren, Robert Bell, and Chris V olinsky . 2009. Matrix factorization te ch- niques for recommender systems. Computer 8 (2009), 30–37. [25] Ankit Kumar , Ozan Irsoy, Peter Ondruska, Mohit Iyyer , James Bradbury, Ishaan Gulrajani, Victor Zhong, Romain Paulus, and Richard Socher . 2016. Ask me anything: Dynamic memory networks for natural language processing. In ICML . 1378–1387. [26] Alexey Kurakin, Ian Goodfellow , and Samy Bengio. 2016. Adversarial examples in the physical world. arXiv preprint arXiv:1607.02533 (2016). [27] Dawen Liang, Rahul G Krishnan, Matthew D Homan, and T ony Jebara. 2018. V ariational Autoencoders for Collaborative Filtering. arXiv preprint arXiv:1802.05814 (2018). [28] Dawen Liang, Minshu Zhan, and Daniel PW Ellis. 2015. Content- A ware Collab- orative Music Recommendation Using Pre-trained Neural Networks.. In ISMIR . 295–301. [29] Minh- Thang Luong, Hieu Pham, and Christopher D Manning. 2015. Eec- tive approaches to attention-based neural machine translation. arXiv preprint arXiv:1508.04025 (2015). [30] Chen Ma, Peng Kang, Bin Wu, Qinglong W ang, and Xue Liu. 2018. Gated Attentive- Autoencoder for Content-A ware Recommendation. arXiv preprint arXiv:1812.02869 (2018). [31] Chen Ma, Yingxue Zhang, Qinglong W ang, and Xue Liu. 2018. Point-of-Interest Recommendation: Exploiting Self-Attentive A utoencoders with Neighbor-A ware Inuence. In CIKM . 697–706. [32] B. McFee and G. R. G. Lanckriet. 2012. Hypergraph models of playlist dialects. In ISMIR . [33] Brian McFee and Gert RG Lanckriet. 2012. Hypergraph Models of Playlist Di- alects.. In ISMIR , V ol. 12. 343–348. [34] Alexander Miller , Adam Fisch, Jesse Dodge, Amir-Hossein Karimi, Antoine Bor- des, and Jason W eston. 2016. Key-value memory networks for dir ectly reading documents. arXiv preprint arXiv:1606.03126 (2016). [35] Xia Ning and George Karypis. 2011. Slim: Sparse linear methods for top-n recommender systems. In ICDM . 497–506. [36] Sergio Oramas, Oriol Nieto, Mohamed Sordo, and Xavier Serra. 2017. A deep multimodal approach for cold-start music recommendation. arXiv preprint arXiv:1706.09739 (2017). [37] Martin Pichl, Eva Zangerle, and Günther Specht. 2017. Improving context-aware music recommender systems: beyond the pre-ltering approach. In ICMR . 201– 208. [38] Tim Pohle, Elias Pampalk, and Gerhard Widmer . 2005. Generating similarity- based playlists using traveling salesman algorithms. In DAFx . 220–225. [39] Parikshit Ram and Alexander G Gray . 2012. Maximum inner-product search using cone trees. In SIGKDD . 931–939. [40] Steen Rendle, Christoph Freudenthaler , Zeno Gantner, and Lars Schmidt- Thieme. 2009. BPR: Bayesian personalized ranking from implicit feedback. In U AI . 452– 461. [41] Steen Rendle, Christoph Freudenthaler , and Lars Schmidt-Thieme . 2010. Factor- izing personalized markov chains for next-basket recommendation. In WWW . 811–820. [42] Badrul Sar war , George Karypis, Joseph Konstan, and John Rie dl. 2001. Item-base d collaborative ltering recommendation algorithms. In WW W . 285–295. [43] Nitish Srivastava and Ruslan R Salakhutdinov . 2012. Multimodal learning with deep boltzmann machines. In NIPS . 2222–2230. [44] Sainbayar Sukhbaatar , Jason W eston, Rob Fergus, et al . 2015. End-to-end memory networks. In NIPS . 2440–2448. [45] Jiaxi T ang and Ke W ang. 2018. Personalized top-n sequential recommendation via convolutional sequence embedding. In WSDM . 565–573. [46] Irene T einemaa, Niek T ax, and Carlos Bentes. 2018. A utomatic P laylist Con- tinuation through a Composition of Collaborative Filters. arXiv preprint arXiv:1808.04288 (2018). [47] Thanh Tran, K yumin Lee, Yiming Liao, and Dongwon Lee. 2018. Regularizing Matrix Factorization with User and Item Embeddings for Recommendation. In CIKM . 687–696. [48] Thanh Tran, Xinyue Liu, Kyumin Lee, and Xiangnan K ong. 2019. Signed Distance- based Deep Memory Re commender . In W WW . 1841–1852. [49] Roberto Turrin, Massimo Quadrana, Andrea Condorelli, Roberto Pagano, and Paolo Cremonesi. 2015. 30Music Listening and Playlists Dataset. [50] Andreu Vall, Matthias Dorfer, Markus Schedl, and Gerhard Widmer . 2018. A Hybrid Approach to Music Playlist Continuation Based on Playlist-Song Mem- bership. arXiv preprint arXiv:1805.09557 (2018). [51] Andreu V all, Hamid Eghbal-Zadeh, Matthias Dorfer , Markus Schedl, and Gerhard Widmer . 2017. Music playlist continuation by learning from hand-curated exam- ples and song features: Alleviating the cold-start problem for rare and out-of-set songs. In DLRS . 46–54. [52] Aaron V an den Oord, Sander Dieleman, and Benjamin Schrauw en. 2013. Deep content-based music recommendation. In NIPS . 2643–2651. [53] Ashish V aswani, Noam Shazeer , Niki Parmar , Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser , and Illia Polosukhin. 2017. Attention is all you need. In NIPS . 5998–6008. [54] Pengfei W ang, Jiafeng Guo, Yanyan Lan, Jun Xu, Shengxian W an, and Xueqi Cheng. 2015. Learning hierarchical representation model for nextbasket recom- mendation. In SIGIR . 403–412. [55] Xinxi W ang and Y e W ang. 2014. Improving content-based and hybrid music recommendation using deep learning. In SIGMM . 627–636. [56] Xinxi W ang, Yi W ang, David Hsu, and Y e W ang. 2014. Exploration in interactive personalized music recommendation: a reinforcement learning approach. TOMM 11, 1 (2014), 7. [57] Kilian Q W einberger , John Blitzer , and Lawrence K Saul. 2006. Distance metric learning for large margin nearest neighbor classication. In NIPS . 1473–1480. [58] Y ao Wu, Christopher DuBois, Alice X Zheng, and Martin Ester. 2016. Collab- orative denoising auto-encoders for top-n recommender systems. In WSDM . 153–162. [59] Eric P Xing, Michael I Jordan, Stuart J Russell, and Andrew Y Ng. 2003. Distance metric learning with application to clustering with side-information. In NIPS . 521–528. [60] Caiming Xiong, Stephen Merity , and Richard Socher . 2016. Dynamic memory networks for visual and textual question answering. In ICML . 2397–2406. [61] Zichao Y ang, Diyi Y ang, Chris Dyer , Xiaodong He, Alex Smola, and Eduard Hovy . 2016. Hierarchical attention networks for do cument classication. In NAACL HLT . 1480–1489. [62] Ziwei Zhu, Jianling W ang, and James Caverlee. 2019. Improving T op-K Recom- mendation via Joint Collaborative Autoencoders. In WWW .

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment