MPI+X: task-based parallelization and dynamic load balance of finite element assembly

The main computing tasks of a finite element (FE) code for solving partial differential equations (PDEs) are the assembly of the algebraic system and the iterative solver. This work focuses on the first task, in the context of a hybrid MPI+X paradigm. Although we describe the algorithms in the FE context, a similar strategy can be straightforwardly applied to other discretization methods, such as the finite volume method. The matrix assembly consists of a loop over the elements of the MPI partition that computes the element matrices and right-hand sides and assembles them into the local system of each MPI partition. In an MPI+X hybrid parallelism context, X has traditionally consisted of loop parallelism using OpenMP. Several strategies have been proposed in the literature to implement this loop parallelism, such as coloring or substructuring techniques, to circumvent the race condition that appears when assembling the element system into the local system. The main drawback of the first technique is the decrease in IPC (instructions per cycle) due to poor spatial locality. The second technique avoids this issue but requires extensive changes in the implementation, which can be cumbersome when several element loops must be treated. We propose an alternative based on task parallelism of the element loop, using some extensions to the OpenMP programming model. Taskifying the assembly solves both of the aforementioned problems. In addition, dynamic load balance is applied using the DLB library, which is especially efficient in the presence of hybrid meshes, where the relative costs of the different elements are impossible to estimate a priori. This paper presents the proposed methodology, its implementation and its validation through the solution of large computational mechanics problems on up to 16k cores.
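To make the race condition concrete, here is a minimal Python sketch of a serial element loop. It assumes a 1D mesh of linear two-node elements for -u'' = f with constant f, and uses a dense list of lists in place of the sparse local system of one MPI partition; the function names are hypothetical. Node `i` belongs to both element `i-1` and element `i`, so two threads assembling neighbouring elements at the same time would both update `K[i][i]` and `b[i]`.

```python
def element_system(x0: float, x1: float, f: float):
    """Stiffness matrix and load vector of a linear element on [x0, x1]."""
    h = x1 - x0
    ke = [[1.0 / h, -1.0 / h], [-1.0 / h, 1.0 / h]]
    fe = [f * h / 2.0, f * h / 2.0]
    return ke, fe


def assemble(coords, connectivity, f=1.0):
    """Serial element loop: compute each element system, then scatter-add it."""
    n = len(coords)
    K = [[0.0] * n for _ in range(n)]
    b = [0.0] * n
    for nodes in connectivity:  # loop over the elements of the partition
        ke, fe = element_system(coords[nodes[0]], coords[nodes[1]], f)
        for a, i in enumerate(nodes):  # local index a -> global row i
            b[i] += fe[a]
            for c, j in enumerate(nodes):
                # row i is shared with the neighbouring element: a race if
                # both elements were assembled by different threads at once
                K[i][j] += ke[a][c]
    return K, b


coords = [0.0, 0.25, 0.5, 0.75, 1.0]
connectivity = [(e, e + 1) for e in range(len(coords) - 1)]
K, b = assemble(coords, connectivity)
```

With four elements of length 0.25, each element contributes 4.0 to the diagonal entries of its two nodes, so every interior diagonal entry ends up at 8.0.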

💡 Research Summary

This paper addresses the performance bottlenecks that arise during the matrix assembly phase of finite‑element (FE) simulations on modern high‑performance computing (HPC) platforms. The authors focus on the “MPI+X” hybrid programming model, where MPI handles distributed‑memory parallelism and the “X” component has traditionally been OpenMP loop parallelism. In the assembly loop, each element’s matrix and right‑hand side vector are computed and then added into the local system of the MPI partition, which all threads of that partition share. Because neighbouring elements share nodes, a direct OpenMP parallelisation of this loop leads to race conditions on the shared matrix and vector entries. Common work‑arounds are (i) graph coloring, which groups elements so that no two elements of the same color share a node, allowing each color to be assembled in parallel while the colors are processed one after another, and (ii) sub‑structuring, which reorganises data structures to eliminate conflicts. Coloring reduces the number of instructions per cycle (IPC) because the elements of one color are scattered in memory, which destroys spatial locality, while sub‑structuring requires extensive code refactoring and becomes cumbersome when many element loops must be treated.
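Coloring can be sketched with a greedy algorithm. The Python below is an illustration under stated assumptions, not the paper's implementation: elements are tuples of node ids, two elements conflict when they share a node, and each element receives the smallest color not already used by a conflicting element. All elements of one color could then be assembled concurrently without atomics, with a synchronisation point between colors.

```python
from collections import defaultdict


def color_elements(connectivity):
    """Greedy coloring: elements that share a node get different colors."""
    node_to_elems = defaultdict(list)
    for e, nodes in enumerate(connectivity):
        for n in nodes:
            node_to_elems[n].append(e)
    colors = [-1] * len(connectivity)  # -1 means "not colored yet"
    for e, nodes in enumerate(connectivity):
        # colors already taken by elements that share a node with e
        taken = {colors[o] for n in nodes for o in node_to_elems[n] if colors[o] >= 0}
        c = 0
        while c in taken:  # smallest free color
            c += 1
        colors[e] = c
    return colors


def elements_by_color(colors):
    """Group element ids by color; each group can be assembled in parallel."""
    groups = defaultdict(list)
    for e, c in enumerate(colors):
        groups[c].append(e)
    return [groups[c] for c in sorted(groups)]


# 1D chain of six two-node elements
chain = [(e, e + 1) for e in range(6)]
colors = color_elements(chain)
groups = elements_by_color(colors)
```

On the 1D chain the two colors are the even and the odd elements, so each thread already walks through the element arrays with stride 2; on unstructured 3D meshes the elements of one color are scattered far more widely, which is the locality loss behind the lower IPC.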

The authors propose a different approach: they “taskify” the element loop using OmpSs, a task‑based programming model that extends OpenMP. Each element’s compute‑and‑assemble sequence is expressed as a task, and the runtime uses the data dependencies declared on the tasks to schedule them so that no two tasks update the same entries of the local system at the same time. This eliminates the need for coloring, preserves cache‑friendly memory access patterns, and requires only modest source‑code changes. Because tasks are fine‑grained, the runtime can dynamically balance work among threads, which is especially valuable for hybrid meshes (prisms, pyramids, tetrahedra), where element‑wise computational costs differ dramatically and are hard to predict a priori.
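The task‑based alternative can be mimicked in Python, with the caveat that this is not OmpSs code and all names are hypothetical. The sketch assumes elements are grouped into fixed‑size chunks (to amortise per‑task overhead) and that each task first computes its element systems privately, then assembles them while holding a lock on every block of rows it touches. Tasks may therefore run in any order but never update the same rows concurrently, which is the kind of constraint OmpSs can express with its `commutative` clause and OpenMP 5.0 with `depend(mutexinoutset: ...)`. No coloring is needed, and each task traverses contiguous elements.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


def element_system(x0, x1, f=1.0):
    """Stiffness matrix and load vector of a linear element on [x0, x1]."""
    h = x1 - x0
    return [[1 / h, -1 / h], [-1 / h, 1 / h]], [f * h / 2, f * h / 2]


def assemble_tasks(coords, conn, chunk=4, block=8, workers=4):
    """Assemble with one task per chunk of elements (hypothetical scheme)."""
    n = len(coords)
    K = [[0.0] * n for _ in range(n)]
    b = [0.0] * n
    locks = [threading.Lock() for _ in range((n + block - 1) // block)]

    def task(elems):
        # 1) element computations touch only task-private data: no locking
        local = [(conn[e], *element_system(coords[conn[e][0]], coords[conn[e][1]]))
                 for e in elems]
        # 2) lock every row block this task writes, in ascending order so that
        #    two tasks can never wait on each other (no deadlock)
        blocks = sorted({i // block for nodes, _, _ in local for i in nodes})
        for blk in blocks:
            locks[blk].acquire()
        try:
            for nodes, ke, fe in local:
                for a, i in enumerate(nodes):
                    b[i] += fe[a]
                    for c, j in enumerate(nodes):
                        K[i][j] += ke[a][c]
        finally:
            for blk in reversed(blocks):
                locks[blk].release()

    chunks = [range(s, min(s + chunk, len(conn))) for s in range(0, len(conn), chunk)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        list(pool.map(task, chunks))  # wait for all tasks; re-raises errors
    return K, b


coords = [0.25 * i for i in range(17)]  # 16 elements of length 0.25
conn = [(e, e + 1) for e in range(16)]
K, b = assemble_tasks(coords, conn)
```

Because the element length 0.25 and its inverse are exact in binary floating point, the result here is identical to serial assembly whatever order the tasks run in; with general coordinates, results may differ from the serial loop by round‑off.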

To further address load imbalance that originates from the static mesh partitioning (e.g., with METIS), the paper integrates the Dynamic Load Balance (DLB) library. At runtime, DLB detects MPI ranks that are idle (for example, waiting in an MPI call) and lends their cores to busier ranks on the same node, without moving any mesh data. This is a lightweight alternative to full mesh repartitioning, which would incur significant communication overhead and could not react to transient load spikes caused by the physics (e.g., moving particles, dam break). The combination of task‑based assembly and DLB therefore targets imbalance at both levels: task scheduling balances work among the threads of one MPI rank (shared memory), and DLB balances work among the MPI ranks that share a node.
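The effect of lending cores can be estimated with a toy model. The sketch below does not use the DLB API; it is a hypothetical event‑driven model in the spirit of DLB's LeWI (Lend When Idle) policy, assuming that all ranks share one node, that a rank's work divides perfectly over the cores it holds (its threads), and that lending is instantaneous. When a rank finishes, which stands in for reaching an MPI synchronisation point, its cores go to the rank with the most remaining work.

```python
def makespan_static(work, cores):
    """Without lending: each rank keeps its own cores until it finishes."""
    return max(w / c for w, c in zip(work, cores))


def makespan_lending(work, cores):
    """With lending: a finished rank hands its cores to the most loaded rank."""
    remaining = [float(w) for w in work]
    held = list(cores)
    active = {r for r, w in enumerate(remaining) if w > 0}
    t = 0.0
    while active:
        # time each active rank still needs with its current cores
        finish = {r: remaining[r] / held[r] for r in active}
        dt = min(finish.values())
        t += dt
        done = {r for r in active if finish[r] <= dt * (1 + 1e-12)}
        for r in active:
            remaining[r] = 0.0 if r in done else remaining[r] - dt * held[r]
        active -= done
        for r in done:
            if active:
                target = max(active, key=lambda a: remaining[a])
                held[target] += held[r]  # lend the idle cores
            held[r] = 0
    return t


# Hypothetical node: two ranks with 2 cores each and unequal assembly work
static = makespan_static([8, 4], [2, 2])
lending = makespan_lending([8, 4], [2, 2])
```

Here the static makespan is max(8/2, 4/2) = 4, while lending yields 3, the ideal value (8 + 4)/4. In practice the gain depends on how malleable each rank's threading is and on the cost of lending.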

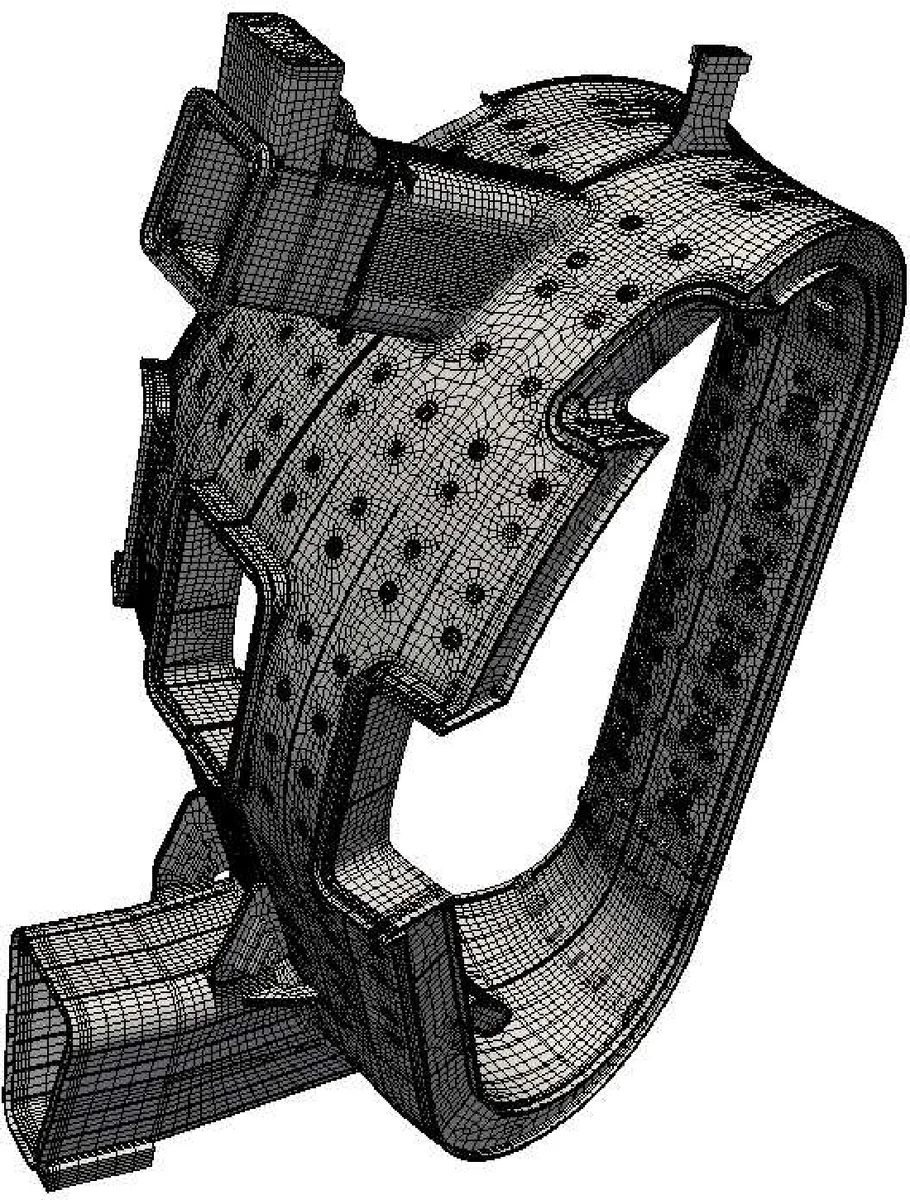

The methodology is validated on two large‑scale applications: (1) a respiratory‑system fluid dynamics case with 17.7 million hybrid elements (prisms for boundary layers, tetrahedra for the core flow, pyramids for transitions) and (2) a structural mechanics model of a fusion‑reactor vacuum vessel containing 31.5 million elements of mixed types (hexahedra, prisms, pyramids, tetrahedra). Both problems are solved with the Alya code, which uses a variational multiscale formulation for the incompressible Navier‑Stokes equations and a Newton‑Raphson scheme for structural dynamics.

The authors compare three configurations: (a) classic OpenMP loop parallelism with coloring, (b) the proposed OmpSs task‑based version, and (c) the task‑based version augmented with DLB. The taskified assembly achieves, on average, 1.4× higher scaling efficiency than the colored OpenMP version. Enabling DLB reduces the total runtime by up to a further 20%, because DLB lends cores from under‑utilised MPI ranks to those with heavier element loads. Strong scaling tests up to 16 384 cores show that the combined MPI+X+DLB approach maintains high efficiency even when element costs are strongly heterogeneous.
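The scaling figures above can be read with the usual definitions, which are assumed here because the summary does not state the exact metrics used: strong‑scaling efficiency relative to a baseline run on p_ref cores, E(p) = (T_ref · p_ref) / (T_p · p), and load balance LB = mean(t_i) / max(t_i) over the per‑rank assembly times t_i. All timings in the example are hypothetical.

```python
from statistics import mean


def strong_scaling_efficiency(t_ref: float, p_ref: int, t_p: float, p: int) -> float:
    """E(p) = (T_ref * p_ref) / (T_p * p); 1.0 means perfect strong scaling."""
    return (t_ref * p_ref) / (t_p * p)


def load_balance(per_rank_times) -> float:
    """LB = mean / max of per-rank times; 1.0 means perfectly balanced."""
    return mean(per_rank_times) / max(per_rank_times)


# Hypothetical timings: 100 s on 1024 cores, 8 s on 16 384 cores
eff = strong_scaling_efficiency(100.0, 1024, 8.0, 16384)
# Hypothetical per-rank assembly times (s) on one node
lb = load_balance([2.0, 4.0, 6.0, 8.0])
```

If every rank holds the same number of cores, an LB of 0.625 means those cores are busy only 62.5% of the time until the slowest rank finishes; this slack is what core lending tries to recover.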

Beyond raw performance, the paper emphasizes implementation practicality. The task‑based transformation is achieved by inserting OmpSs pragmas around the element loop, preserving code readability and avoiding massive rewrites. DLB operates as an external library that can be linked without modifying the core application logic. Consequently, the proposed strategy can be adopted by existing FE codes with relatively low engineering effort.

In conclusion, the study shows that task‑based parallelism, when coupled with a lightweight dynamic load‑balancing library, overcomes the limitations of traditional coloring and sub‑structuring techniques. It delivers superior scalability for finite‑element assembly on hybrid meshes at scales of up to 16 384 cores, while keeping the development burden modest. The authors suggest future work extending the approach to other discretisation methods (finite volume, spectral elements) and to heterogeneous architectures such as GPUs, where task granularity and runtime‑driven load balancing could provide similar benefits.
