Efficient Plane-Based Optimization of Geometry and Texture for Indoor RGB-D Reconstruction

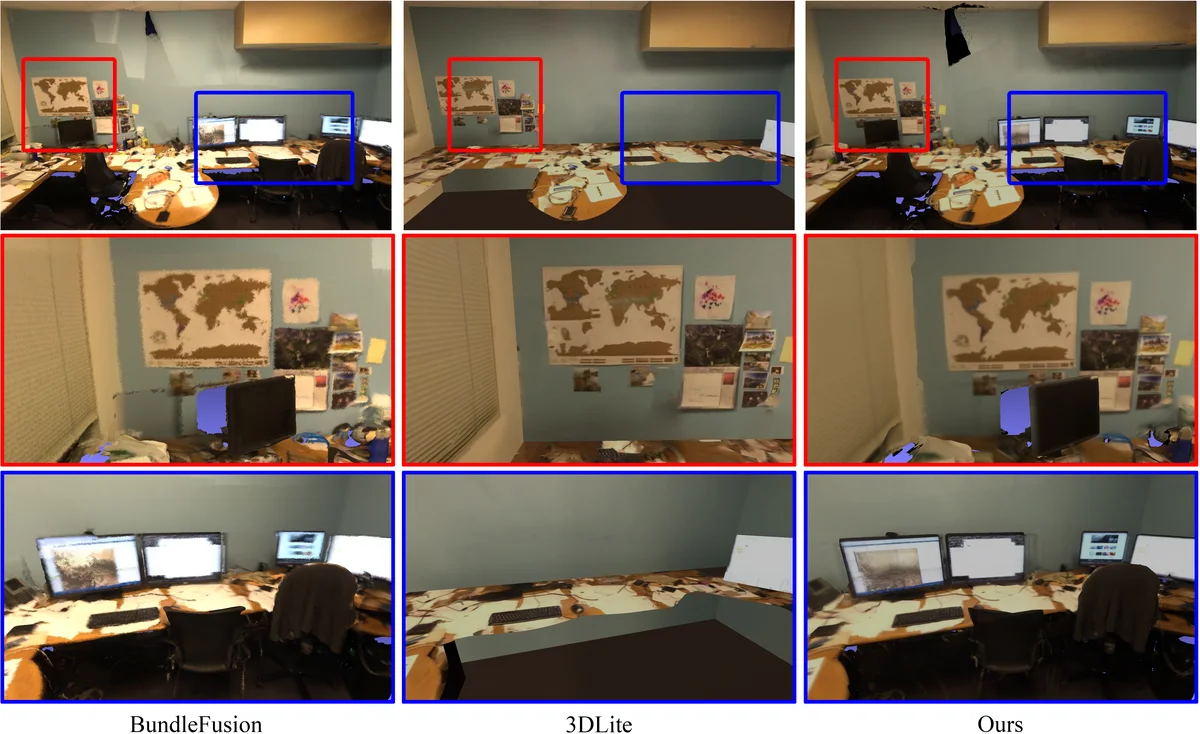

We propose a novel approach to reconstructing indoor scenes from RGB-D data based on plane primitives. Our approach takes as input an RGB-D sequence and a dense coarse mesh reconstructed from it, and generates a lightweight, low-polygon mesh with clear face textures and sharp features without losing geometric details from the original scene. Compared to existing methods, which cover only large planar regions in the scene, our method builds the entire scene from adaptive planes, preserving both geometric details and sharp features in the mesh. Experiments show that our method generates textured meshes from RGB-D data more efficiently than state-of-the-art methods.

💡 Research Summary

This paper presents a novel and efficient pipeline for reconstructing high-quality, lightweight textured mesh models from RGB-D scans of indoor scenes. The method takes as input an RGB-D frame sequence and a dense, coarse mesh generated by an online reconstruction system like BundleFusion. Its core innovation lies in using adaptive plane primitives to represent the entire scene (not just large structural planes), thereby preserving geometric details while achieving significant simplification.

The pipeline operates in four main stages. First, in the Mesh Planar Partition stage, the input dense mesh is segmented into numerous planar clusters using a PCA-based energy minimization algorithm. Adjacent similar planes are then merged. This step is crucial as it converts the entire scene, including free-form surfaces like furniture, into a plane-based representation.
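
The PCA step at the heart of such a partition can be illustrated with a small sketch. It fits one plane to one cluster of 3D points and reports the mean squared point-to-plane distance, which is the kind of quantity a planar-fitting energy would sum over clusters. This is not the paper's implementation: the function name `fit_plane_pca`, the `iterations` parameter, and the use of shifted power iteration (chosen only to avoid dependencies; with NumPy one would typically call `numpy.linalg.eigh` on the covariance matrix) are all assumptions made for illustration.

```python
import math

def fit_plane_pca(points, iterations=200):
    """Return (centroid, unit_normal, mean_squared_distance) for 3D points."""
    n = len(points)
    centroid = [sum(p[k] for p in points) / n for k in range(3)]
    centered = [[p[k] - centroid[k] for k in range(3)] for p in points]
    # 3x3 covariance matrix of the centered points.
    cov = [[sum(d[i] * d[j] for d in centered) / n for j in range(3)]
           for i in range(3)]
    # The PCA plane normal is the covariance eigenvector with the smallest
    # eigenvalue. Power iteration on (trace * I - cov) finds it, because the
    # shift turns the smallest eigenvalue into the largest one.
    trace = cov[0][0] + cov[1][1] + cov[2][2]
    shifted = [[(trace if i == j else 0.0) - cov[i][j] for j in range(3)]
               for i in range(3)]

    def energy(normal):
        # Mean squared point-to-plane distance (normal^T * cov * normal).
        return sum(sum(d[k] * normal[k] for k in range(3)) ** 2
                   for d in centered) / n

    best = None
    # Start from each coordinate axis so that at least one start vector is
    # not orthogonal to the true normal; keep the lowest-energy result.
    for axis in range(3):
        normal = [1.0 if k == axis else 0.0 for k in range(3)]
        for _ in range(iterations):
            nxt = [sum(shifted[i][j] * normal[j] for j in range(3))
                   for i in range(3)]
            length = math.sqrt(sum(c * c for c in nxt))
            if length == 0.0:
                break
            normal = [c / length for c in nxt]
        if best is None or energy(normal) < energy(best):
            best = normal
    return centroid, best, energy(best)

# Example: a 4x4 grid of points lying on the tilted plane z = x.
grid = [(float(x), float(y), float(x)) for x in range(4) for y in range(4)]
centroid, normal, fit_energy = fit_plane_pca(grid)
```

For points that lie exactly on a plane the returned energy is close to zero; a partitioning algorithm could compare this value against a threshold when deciding whether a vertex belongs to a cluster or whether two adjacent clusters are similar enough to merge.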

Second, the Mesh Simplification stage applies Quadric Error Metrics (QEM) simplification within each cluster independently, allowing for parallel processing to create a lightweight base mesh.
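
As a rough illustration of the error measure behind QEM, the sketch below builds the 4x4 quadric of a plane, sums quadrics, and evaluates the cost of placing a vertex at a candidate position. It follows the standard Garland-Heckbert formulation rather than the paper's code; the helper names are hypothetical, and the collapse target is chosen only among the two endpoints and the midpoint (the usual fallback) instead of solving for the optimal position. Restricting collapses to one cluster at a time, as the summary describes, is not shown.

```python
def plane_quadric(a, b, c, d):
    """Quadric K = p p^T for the plane ax + by + cz + d = 0 (unit normal)."""
    p = (a, b, c, d)
    return [[p[i] * p[j] for j in range(4)] for i in range(4)]

def add_quadrics(q1, q2):
    """Element-wise sum of two 4x4 quadrics."""
    return [[q1[i][j] + q2[i][j] for j in range(4)] for i in range(4)]

def quadric_error(q, vertex):
    """v^T Q v with v = (x, y, z, 1): summed squared distance to the planes."""
    v = (vertex[0], vertex[1], vertex[2], 1.0)
    return sum(v[i] * q[i][j] * v[j] for i in range(4) for j in range(4))

def best_collapse_target(q1, v1, q2, v2):
    """Pick the cheapest of both endpoints and the midpoint for an edge collapse."""
    q = add_quadrics(q1, q2)
    midpoint = tuple((s + t) / 2.0 for s, t in zip(v1, v2))
    target = min((v1, v2, midpoint), key=lambda v: quadric_error(q, v))
    return target, quadric_error(q, target)

# Example: v1 lies on the plane z = 0 and v2 on the plane x = 0.
q_v1 = plane_quadric(0.0, 0.0, 1.0, 0.0)
q_v2 = plane_quadric(1.0, 0.0, 0.0, 0.0)
target, cost = best_collapse_target(q_v1, (2.0, 0.0, 0.0), q_v2, (0.0, 0.0, 2.0))
```

In a full simplifier, each vertex would accumulate the quadrics of its incident faces and candidate edges would be processed in order of increasing cost. Because the clusters are simplified independently, one such priority queue per cluster could run in parallel.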

The third and most critical stage is the Plane, Camera Pose, and Texture Optimization. Texture patches are created for each plane cluster, and texel points are sampled. A global optimization problem is formulated to maximize the photometric consistency of these texels across selected keyframes. The objective function simultaneously optimizes camera poses (T), plane parameters (Φ), and texture colors (C). A key contribution over prior work
