A Game-Theoretic Taxonomy and Survey of Defensive Deception for Cybersecurity and Privacy

Cyberattacks on both databases and critical infrastructure have threatened public and private sectors. Ubiquitous tracking and wearable computing have infringed upon privacy. Advocates and engineers have recently proposed using defensive deception as a means to leverage the information asymmetry typically enjoyed by attackers as a tool for defenders. The term deception, however, has been employed broadly and with a variety of meanings. In this paper, we survey 24 articles from 2008-2018 that use game theory to model defensive deception for cybersecurity and privacy. Then we propose a taxonomy that defines six types of deception: perturbation, moving target defense, obfuscation, mixing, honey-x, and attacker engagement. These types are delineated by their information structures, agents, actions, and duration: precisely concepts captured by game theory. Our aims are to rigorously define types of defensive deception, to capture a snapshot of the state of the literature, to provide a menu of models which can be used for applied research, and to identify promising areas for future work. Our taxonomy provides a systematic foundation for understanding different types of defensive deception commonly encountered in cybersecurity and privacy.

💡 Research Summary

The paper “A Game-Theoretic Taxonomy and Survey of Defensive Deception for Cybersecurity and Privacy” presents a systematic review and classification of defensive deception techniques that have been modeled using game theory between 2008 and 2018. The authors begin by highlighting the growing threat landscape—massive data breaches, attacks on critical infrastructure, and pervasive tracking that erodes personal privacy. They argue that defenders can exploit the information asymmetry that attackers typically enjoy by deliberately misleading them, a concept they term “defensive deception.”

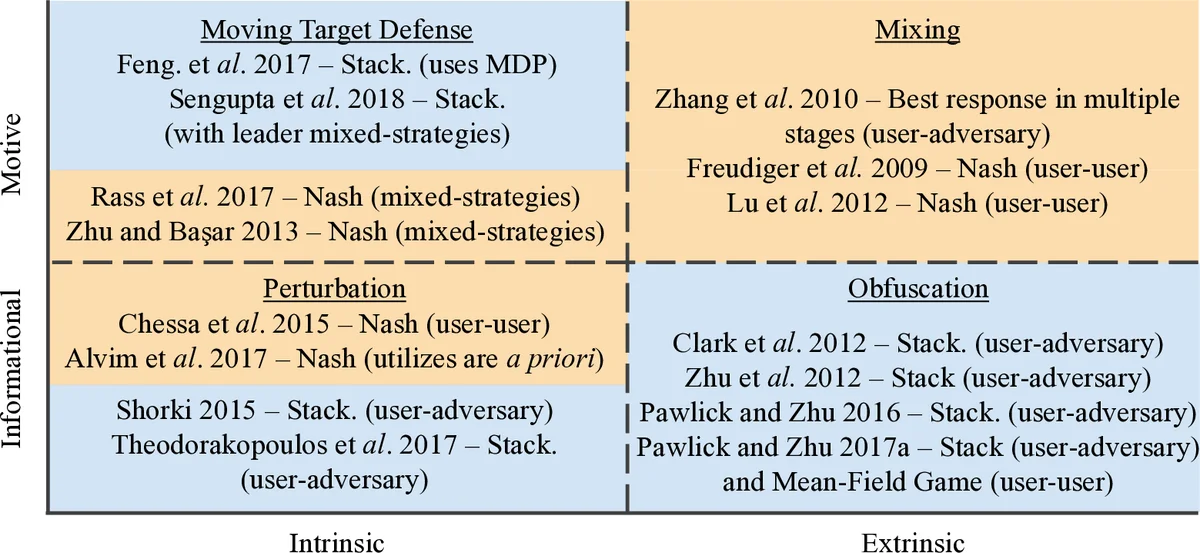

To bring rigor to a field where the term “deception” is used loosely across disciplines (military, psychology, economics, etc.), the authors propose a taxonomy grounded in four game‑theoretic dimensions: private information (who knows what), players (defender vs. attacker), action sets (what moves are available), and interaction duration (one‑shot vs. repeated). They survey 24 peer‑reviewed papers that satisfy three criteria: (i) focus on cybersecurity or privacy, (ii) employ a defensive deception mechanism, and (iii) use non‑cooperative game theory (Stackelberg, Nash, or signaling games).

The survey reveals that most prior work adopts Stackelberg models, where the defender (leader) commits to a strategy—such as moving target defense (MTD) or honeypot deployment—and the attacker (follower) best‑responds after observing the commitment. Signaling games are used for perturbation and privacy‑preserving noise injection, while simultaneous‑move (Nash) games capture static obfuscation scenarios. The authors tabulate each paper’s game structure, the deception type addressed, the assumed information structure, and the primary findings.
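The leader-follower logic described above can be sketched in a few lines. This is a minimal illustration rather than a model taken from any surveyed paper: the two-target setup, the payoff numbers, and the function names (`follower_best_response`, `solve_by_grid`) are all assumptions chosen for the example. The defender commits to a probability of protecting each target, the attacker observes that commitment and attacks the target with the highest expected payoff, and a coarse grid search looks for the defender's best commitment.

```python
from typing import List, Tuple

# Hypothetical payoffs for illustration only; not taken from the paper.
TARGET_VALUES = [10.0, 4.0]  # attacker gain (and defender loss) on an unprotected hit
CAUGHT_PENALTY = 2.0         # attacker loss when the attacked target is protected


def attacker_utilities(coverage: List[float]) -> List[float]:
    """Expected attacker payoff for each target, given coverage probabilities."""
    return [(1 - c) * v - c * CAUGHT_PENALTY for c, v in zip(coverage, TARGET_VALUES)]


def follower_best_response(coverage: List[float]) -> int:
    """Index of the target the attacker prefers after observing the commitment.

    Ties go to the lowest index, a simplification of the usual tie-breaking rule.
    """
    utils = attacker_utilities(coverage)
    return max(range(len(utils)), key=lambda i: utils[i])


def defender_utility(coverage: List[float], target: int) -> float:
    """Expected defender payoff when the given target is attacked."""
    return -(1 - coverage[target]) * TARGET_VALUES[target]


def solve_by_grid(steps: int = 1000) -> Tuple[List[float], float, int]:
    """Grid-search the leader's commitment with one defensive resource, two targets."""
    best = None
    for k in range(steps + 1):
        c = k / steps
        coverage = [c, 1 - c]
        target = follower_best_response(coverage)
        utility = defender_utility(coverage, target)
        if best is None or utility > best[1]:
            best = (coverage, utility, target)
    return best


if __name__ == "__main__":
    coverage, utility, target = solve_by_grid()
    print(f"coverage={coverage}, defender utility={utility:.3f}, attacked target={target}")
```

With these assumed payoffs, the search settles near a coverage of two thirds on the more valuable target, which does better for the defender than always protecting either target. That is the usual argument for randomized commitment in Stackelberg models.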

From this analysis they extract six distinct categories of defensive deception:

- Perturbation – Adding random noise to data or measurements to degrade attacker inference. Typically modeled as a signaling game where the defender sends a noisy signal and the attacker updates beliefs.
- Moving Target Defense (MTD) – Dynamically changing system configurations (e.g., IP addresses, ports, software stacks) to invalidate attacker reconnaissance. Most often captured by Stackelberg games because the defender’s policy is announced first.
- Obfuscation – Deliberately complicating logs, code, or network traffic to raise the cost of analysis. Usually represented by simultaneous‑move games where both parties choose strategies without observing each other’s moves.
- Mixing – Using mix networks, multi‑path routing, or similar techniques to blend traffic and hide user identities. This blends aspects of signaling (noise) and simultaneous moves (multiple paths).
- Honey‑X – Deploying deceptive assets such as honeypots, honey‑nets, or honey‑files to lure attackers, whether to observe them, gather intelligence, or waste their resources. Stackelberg frameworks are natural here because the defender creates a credible fake environment.
- Attacker Engagement – Actively interacting with an attacker over multiple rounds to learn their tactics, drain their resources, or steer them into traps. This category calls for repeated‑interaction or Markov decision process models, which are under‑explored in the surveyed literature.
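For the perturbation category, the attacker's belief update can be illustrated with a small Bayes-rule sketch. It assumes a binary secret and a randomized-response style of noise, in which the defender reports the truth with probability `truth_prob` and flips it otherwise. Both the noise mechanism and the function name `posterior_true` are assumptions made for this example and are not drawn from the surveyed papers.

```python
def posterior_true(prior: float, truth_prob: float, observed: bool) -> float:
    """Attacker's posterior that the secret is True after seeing a noisy report.

    prior      -- attacker's prior probability that the secret is True
    truth_prob -- probability that the defender reports the secret unchanged
    observed   -- the (possibly flipped) report the attacker sees
    """
    # Likelihood of the observed report under each possible value of the secret.
    like_if_true = truth_prob if observed else 1 - truth_prob
    like_if_false = 1 - truth_prob if observed else truth_prob

    evidence = prior * like_if_true + (1 - prior) * like_if_false
    if evidence == 0:
        raise ValueError("observed report has zero probability under this model")
    return prior * like_if_true / evidence


if __name__ == "__main__":
    for p in (1.0, 0.75, 0.5):
        print(p, posterior_true(prior=0.3, truth_prob=p, observed=True))
```

When `truth_prob` is 0.5 the report carries no information and the posterior equals the prior. As `truth_prob` approaches 1 the attacker learns the secret almost exactly. The defender's trade-off in a perturbation game sits between those two extremes.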

The taxonomy clarifies the subtle differences between, for example, perturbation (injecting false data) and obfuscation (making true data harder to interpret), and between static deception (one‑shot) and dynamic deception (repeated). It also maps each type to the appropriate game‑theoretic formalism, providing a “menu” for researchers to select a model that matches their defensive objective.
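One way to make the "menu" concrete is a small lookup table. The sketch below encodes only the pairings stated in this summary. The names `Deception`, `MODEL_MENU`, and `types_for_model` are hypothetical, and the paper's own tables may assign models differently.

```python
from enum import Enum
from typing import Dict, List, Tuple


class Deception(Enum):
    PERTURBATION = "perturbation"
    MOVING_TARGET_DEFENSE = "moving target defense"
    OBFUSCATION = "obfuscation"
    MIXING = "mixing"
    HONEY_X = "honey-x"
    ATTACKER_ENGAGEMENT = "attacker engagement"


# Pairings as described in this summary; an illustrative encoding, not the paper's table.
MODEL_MENU: Dict[Deception, Tuple[str, ...]] = {
    Deception.PERTURBATION: ("signaling game",),
    Deception.MOVING_TARGET_DEFENSE: ("Stackelberg game",),
    Deception.OBFUSCATION: ("simultaneous-move game",),
    Deception.MIXING: ("signaling game", "simultaneous-move game"),
    Deception.HONEY_X: ("Stackelberg game",),
    Deception.ATTACKER_ENGAGEMENT: ("repeated game", "Markov decision process"),
}


def types_for_model(model: str) -> List[Deception]:
    """Return the deception types that the summary associates with a given model."""
    return [d for d, models in MODEL_MENU.items() if model in models]


if __name__ == "__main__":
    print([d.value for d in types_for_model("Stackelberg game")])
```

Looking the table up in the other direction (from model to deception type) shows which formalisms are shared across categories, for example Stackelberg games for both moving target defense and honey-x.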

Key insights from the analysis include:

- The dominance of Stackelberg models reflects a design bias toward defender‑led commitments, but many real‑world attacks are multi‑stage and adaptive, suggesting a need for richer signaling or repeated‑game models.
- Several papers conflate perturbation and obfuscation, indicating a gap in precise definitions that the taxonomy seeks to fill.
- Attacker engagement is still nascent; few works model the attacker’s learning dynamics or the defender’s budget constraints over time.
- Most existing studies assume rational, utility‑maximizing attackers, overlooking behavioral economics findings such as lying aversion or bounded rationality.

The authors conclude by outlining promising research directions: (a) Mimetic deception, where defenders imitate legitimate behavior to blend in; (b) Theoretical extensions involving multi‑player, multi‑stage, and Bayesian equilibrium concepts; (c) Practical implementations and experimental validation on real networks or IoT platforms; and (d) Interdisciplinary integration of psychology, economics, and sociology to capture human factors in attacker decision‑making.

Overall, the paper provides a rigorous, game‑theoretic foundation for classifying defensive deception techniques, highlights current methodological trends and gaps, and offers a clear roadmap for future work that could bridge theory and practice in securing cyber‑physical systems and protecting user privacy.
