Disparity-based HDR imaging

High-dynamic range imaging extends the dynamic range of intensity values to get close to what the human eye is able to perceive. Although there has been huge progress in the range capacity of digital camera sensors, the need of capturing seve…

Authors: Jennifer Bonnard (CRESTIC), Gilles Valette (CRESTIC), Celine Loscos (CRESTIC)

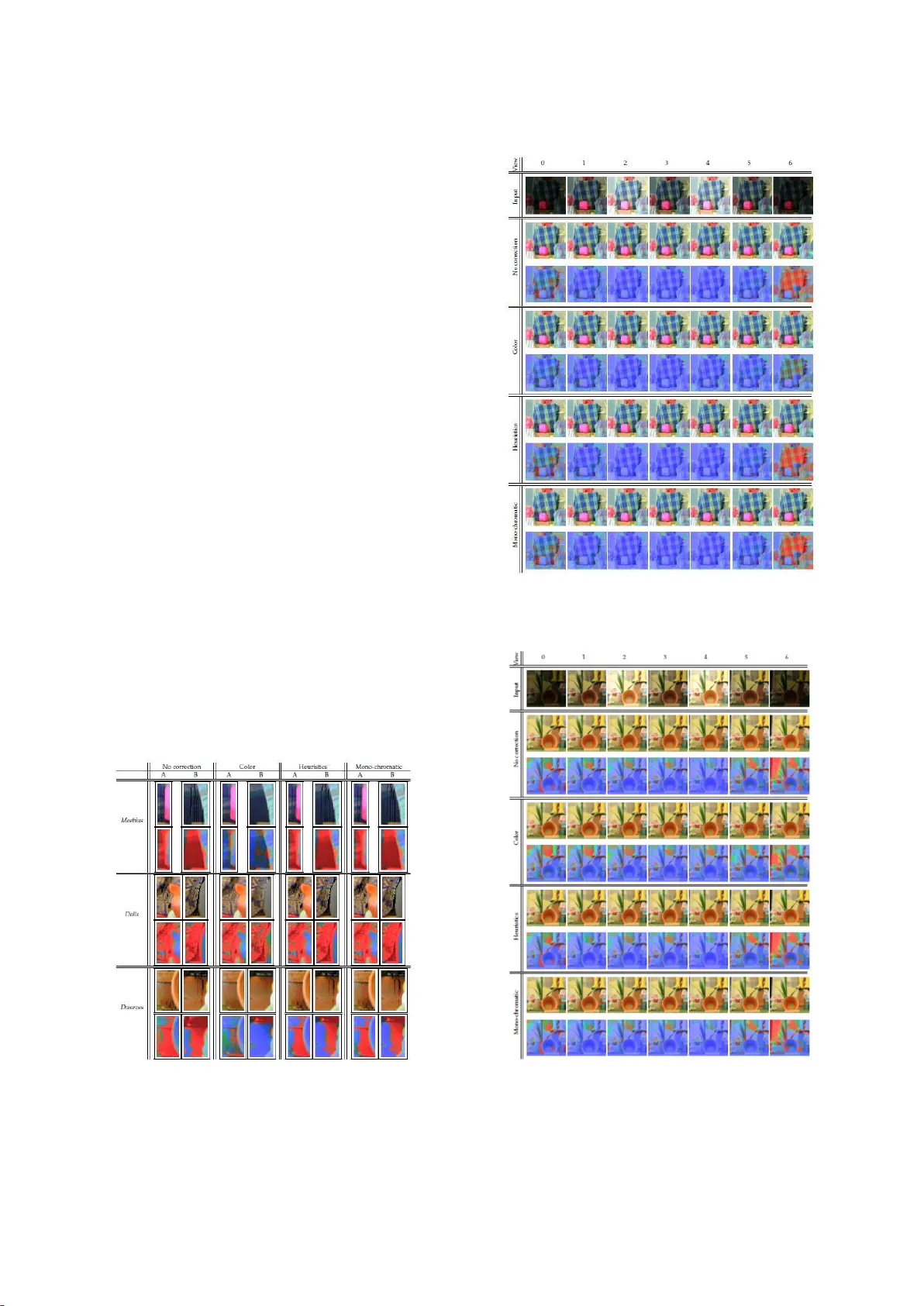

DISP '19, Oxford, United Kingdom
ISBN: 978-1-912532-09-4
DOI: http://dx.doi.org/10.17501........................................

Jennifer Bonnard, Gilles Valette, Céline Loscos
gilles.valette@univ-reims.fr, celine.loscos@univ-reims.fr
CReSTIC laboratory, University of Reims Champagne-Ardenne

ABSTRACT

High-dynamic range imaging extends the dynamic range of intensity values to get close to what the human eye is able to perceive. Although there has been huge progress in the range capacity of digital camera sensors, the need to capture several exposures in order to reconstruct high-dynamic range values persists. In this paper, we present a study on how to acquire high-dynamic range values for multi-stereo images. In many papers, disparity has been used to register pixels of different images and guide the reconstruction. In this paper, we show the limitations of such approaches and propose heuristics as solutions to identified problematic cases.

Keywords

Multiscopic images, high-dynamic range images, image registration.

1. INTRODUCTION

Conventional digital images are called LDR (Low Dynamic Range) images. They correspond to most images acquired so far with SLR and compact cameras, as well as with the cameras built into cell phones. They are usually captured and stored in an 8- to 10-bit precision format. When the dynamic color range is extended to at least 16 bits, the images are called HDR (High Dynamic Range) images [21]. Digital HDR imaging has existed for more than twenty years and is now familiar to the general public, in particular because the feature is available on mobile phones. HDR imaging is intended to overcome the shortcomings of current sensors while approaching solutions that represent the range of intensities perceptible by the human eye.

In parallel with digital imaging, which can be considered two-dimensional, three-dimensional images (3D images) make it possible to perceive the relief of a scene. This technology is based on the geometry defined by stereoscopic vision. The fields of HDR imaging and 3D imaging have both evolved considerably, but independently. The development of methods for acquiring or generating 3D HDR images is booming, but there is still no real solution for the general public. The methods we propose fuse the two domains to obtain 3D HDR images with a wider dynamic range of colors than conventional 3D LDR images.

In a previous approach, we proposed a disparity-based framework for 3D HDR imaging, from acquisition to generation [3]. In this paper, we propose a two-step solution to correct local errors. Our first contribution is an automatic detection of errors. Our second contribution is a set of proposed approaches to generate HDR values for the identified problematic pixels.

After reviewing previous work (section 2) and providing more details on our original approach (section 3), we describe our two contributions: automatic error detection (section 4) and HDR value computation for erroneous pixels (section 5). In section 6, we give detailed objective results on tests run on different datasets.

2. PREVIOUS WORK

3D reconstruction by stereovision. 3D reconstruction by stereovision relates to the automatic extraction of the depth of a 3D scene structure from different viewpoints (2 to n) acquired at the same time. It comes down to matching all homologous pixels, i.e. the projections of the same 3D point on the n images. This paper considers simplified multi-epipolar geometry, reached either by directly using a capture configuration in parallel geometry [17] or by applying a rectification pre-processing step to each image [9], leading to epipolar lines parallel to image columns or rows.
A matching scheme defines data similarity (or dissimilarity) within a given neighborhood between potential homologous pixels. Matching is made difficult by a lack of information in the images (such as occlusions) or by ambiguous information (such as homogeneous or repetitive areas, or luminosity variations). Multiscopic methods usually gain robustness from information redundancy by simultaneously computing the n depth maps [16].

HDR imaging. Traditional HDR image reconstruction methods [21] combine information captured at different exposure times to acquire different intensities of a scene [6][11]. One difficulty with this approach is handling dynamic scenes, where objects can move during the acquisition process. Several methods were proposed [11], but very few of them aim at reconstructing HDR values for dynamic environments [19].

Combining HDR with stereovision enables high-quality reproduction of the depth perception of real-world scenes, but few contributions have been made in this domain. Solutions were proposed for 3D HDR images with stereo cameras [22][10] or multi-stereo cameras [3] following stereovision-based procedures, with remaining inaccuracies in under- or over-exposed areas. This is improved by using a patch-map along the epipolar line [20], but spatial coherence is lost.

3. METHOD OVERVIEW

3.1 Original framework for multiscopic HDR reconstruction

In [3], we presented a framework to obtain, from a set of multi-view and multi-exposure LDR images, a set of HDR images, one image being generated for each point of view. The depth information provided by the multi-view aspect of the images is used to find matching pixels in all images. The information provided by the different exposures is then used to obtain a radiance value for each pixel. An example of acquisition and results is given in Figure 1.
The output of our method can be used as an input for autostereoscopic displays after application of a tone-mapping algorithm. Moreover, since a disparity map is produced for each image, augmented reality applications are also conceivable.

Our approach consists of two main steps. First, we identify the pixels representing the same point of the scene on the different views. For this step, we adapted an approach from Niquin et al. [16] to the multi-exposure context. Second, for each view, we calculate an HDR image based on the list of matching pixels. We reformulated the well-known method of Debevec and Malik [6] to consider the multi-view aspect of the images.

Figure 1. Example of input (top) and output (bottom) of our method (HDR images tone mapped using [7]).

3.2 Stereo matching

Usually, the images acquired to obtain an HDR image are captured from a single viewpoint. In this case, the pixels representing the same point in the scene are at the same position in each image. This is no longer the case when the images are acquired from different points of view. The pixels to which an HDR value is to be assigned are then at different coordinates in each image where they are represented. A matching step is therefore necessary in order to match the pixels representing the same point in each image of the dataset.

We call a match a set of matching pixels, i.e., a set of pixels representing the same point of a scene, which will get a unique HDR value. Using this notion of match, the method proposed by Niquin et al. [16] aims to simultaneously build a disparity map for each image and a match list in order to perform a 3D reconstruction of the scene.
It is worth noting that this stereo matching method is based on color similarity and only processes images with the same exposure. Therefore, we bring the images of different exposures back to a common exposure in order to use the method as is. If the data are linear, a factor based on the percentage of light reaching the sensor is applied to the images. If they are not linear, a first step consists in estimating the response curve associated with the sensor in question. For this, we use the method of Debevec and Malik [6].

3.3 Multi HDR value computation

In our framework, we adapt the commonly used formula of Debevec and Malik [6] for calculating the HDR value (radiance $E$) associated with a pixel. The initial equation is recalled in Eq. (1). This equation considers the set of points of the scene at the same position $(i,j)$ in the set of images considered. The calculation is carried out separately on each color component.

$$E(i,j) = \frac{\sum_{n=1}^{N} \omega\big(I_n(i,j)\big)\,\dfrac{f^{-1}\big(I_n(i,j)\big)}{\Delta t_n}}{\sum_{n=1}^{N} \omega\big(I_n(i,j)\big)} \qquad (1)$$

where $N$ is the total number of images, $I_n(i,j)$ the color value of the pixel at coordinates $(i,j)$ in the image $I_n$ acquired with an exposure time $\Delta t_n$, $f^{-1}$ the inverse function of the camera response and $\omega$ a weight function penalizing under- or over-exposed pixels.

We adapted Eq. (1) to allow the computation of a radiance value $E_c$ on the color component $c$ associated with each match $m$:

$$m = \{\, q_i \mid i \in A \subseteq \{1..N\} \,\}, \qquad E_c(m) = \frac{\sum_{i \in A} \omega(q_{ic})\,\dfrac{f^{-1}(q_{ic})}{\Delta t_i}}{\sum_{i \in A} \omega(q_{ic})} \qquad (2)$$

where $m$ is a match, $q_{ic}$ the color value of the pixel $q_i$ belonging to the match $m$ on the color component $c \in \{R, G, B\}$, $\Delta t_i$ the exposure time of the image $I_i$ to which the pixel $q_i$ belongs, $f^{-1}$ the inverse function of the camera response and $\omega$ a weight function.

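As an illustration of Eq. (2), the following minimal sketch computes the radiance of one match on one color component. It is not the implementation used in [3]: the function names, the 8-bit value range, the triangular ("hat") weight and the default identity response (i.e. linear data) are assumptions made for the example.

```python
import numpy as np

def hat_weight(q, q_min=0.0, q_max=255.0):
    """Triangular weight: zero at q_min and q_max, highest at mid-range.
    Assumed form of the weight function omega (8-bit range assumed)."""
    q = np.asarray(q, dtype=float)
    mid = 0.5 * (q_min + q_max)
    return np.where(q <= mid, q - q_min, q_max - q)

def match_radiance(values, exposure_times, inverse_response=None):
    """Radiance E_c(m) of one match on one color component, as in Eq. (2).

    values           -- color values q_ic of the pixels of the match
    exposure_times   -- exposure time of the image each pixel comes from
    inverse_response -- f^-1; identity by default (linear data assumed)
    """
    q = np.asarray(values, dtype=float)
    dt = np.asarray(exposure_times, dtype=float)
    f_inv = inverse_response if inverse_response is not None else (lambda x: x)
    w = hat_weight(q)
    total = w.sum()
    if total == 0:
        return None  # every pixel of the match has zero weight
    return float((w * f_inv(q) / dt).sum() / total)

# Three pixels of the same scene point seen under three exposures.
radiance = match_radiance([64, 128, 255], [1.0, 2.0, 4.0])
```

In this example the saturated value 255 receives a zero weight, so the radiance is determined by the two other pixels only. A match whose pixels all have zero weight returns `None`, which is the kind of case the correction steps of sections 4 and 5 are meant to handle.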
3.4 Proposed extension

We have shown in previous articles [3][4] that the results of our original framework presented objectively quantifiable imperfections. We propose an automatic algorithm to detect invalid pixels (see details in section 4). We identified several possible methods to compute a corrected HDR value for these detected invalid pixels. They are presented in section 5. These additional steps to our original approach are presented in Figure 2.

Figure 2. Extended pipeline: the green box represents the additional steps proposed to improve the radiance of the pixels of invalid matches.

4. AUTOMATIC DETECTION OF INVALID MATCHES

An invalid match is a match for which the radiance of its pixels is considered incorrect. To automatically detect the invalid matches in a set of HDR images, we based our algorithm on the subjective observation that the pixels belonging to these matches are colored differently from their neighbors, as shown in the examples of Figure 3.

Figure 3. HDR images produced by our method on the first view for the image sets (a) Moebius and (b) Dwarves of the Middlebury database. Black boxes outline pixels inconsistent with their neighborhood.

Whether the radiance of the pixels of a match is rejected depends on the sum of the RGB values of each pixel of this match: this sum must belong to an interval [a, b] for the match not to be considered invalid. The limits of this interval depend on the range of LDR values on which the original images are stored, and correspond to a selection of pixels that are neither under- nor over-exposed. A second criterion considers the number of different exposures represented in the match under consideration. If the complete set of exposures available in the processed images is not represented, we consider that the radiance of the pixels of this match must be corrected.
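The two criteria above can be sketched as follows. This is one possible reading of the text, not the authors' code: the data layout, the function name and the bounds `a` and `b` are hypothetical, and the sketch assumes that a match is rejected as soon as one of its pixels has an RGB sum outside [a, b].

```python
def is_invalid_match(match_pixels, all_exposures, a, b):
    """Return True when a match should be flagged for correction.

    match_pixels  -- list of (exposure_id, (r, g, b)) tuples, one per pixel
    all_exposures -- identifiers of every exposure available in the dataset
    a, b          -- hypothetical bounds on R + G + B (depend on the LDR range)
    """
    # Criterion 1: the RGB sum of every pixel must lie in [a, b].
    sums_in_range = all(a <= sum(rgb) <= b for _, rgb in match_pixels)
    # Criterion 2: every available exposure must be represented in the match.
    exposures_seen = {exposure for exposure, _ in match_pixels}
    all_represented = exposures_seen == set(all_exposures)
    return not (sums_in_range and all_represented)

exposures = [0, 1, 2]
complete = [(0, (10, 20, 30)), (1, (40, 50, 60)), (2, (90, 100, 110))]
partial = complete[:2]                       # exposure 2 is missing
saturated = complete[:2] + [(2, (255, 255, 255))]

flags = [is_invalid_match(m, exposures, a=30, b=700)
         for m in (complete, partial, saturated)]
```

Here only the first match passes both criteria; the second lacks one exposure and the third contains a saturated pixel.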
In this way we can distinguish a dark-colored pixel from an under-exposed pixel, and a light-colored pixel from an over-exposed pixel. The set of invalid matches detected is then placed in a list processed by each of the radiance improvement methods proposed in the following sections.

5. HDR VALUE FOR INVALID MATCHES

We propose three solutions to correct the radiance of the invalid pixels detected by the method described in the previous section. The first method assigns a new radiance to the current pixel by using the radiance of the pixels in its neighborhood (see section 5.1). The two other methods are decomposed into two steps: correction of the initial disparities, then correction of the radiances of the pixels. The first of these two methods modifies the disparity of the pixels by taking into account the disparity attributed to the pixels of the neighborhood, while the second takes into account the disparities calculated on each of the color components R, G and B separately. The new disparity maps lead to new lists of matches: the correction of the radiances of the pixels processed by these two methods is then based on these new lists of matches.

5.1 Correction by interpolation of radiances

The steps for assigning new radiances to the pixels of invalid matches by the color-based method are detailed in Algorithm 1. The method consists in interpolating the HDR values by considering the pixels of the 3 × 3 neighborhood of all the pixels of an invalid match. The radiance of the pixels of a neighborhood is considered with a confidence index in order to distinguish between the pixels of a valid match and the pixels of an invalid match.
This confidence index can take three values: 0 for pixels of invalid matches, 0.5 for pixels of invalid matches whose radiance has been corrected, and 1 for pixels of valid matches. Thus, a pixel of an invalid match can see its confidence index rise from 0 to 0.5 but never to 1, this value being reserved for the pixels of a valid match. The zero confidence index means that as long as the radiance of a pixel from the list of invalid matches has not been corrected, it cannot be considered in the computation of the radiance of another pixel.

To process first the pixels whose neighborhood is of better quality, we impose a constraint on the sum of the confidence indices of the neighboring pixels. This constraint is gradually released so that all pixels can be processed. The processing algorithm is iterative in order to manage the priority constraint in the main loop. This implies that not all invalid matches can be processed in a single iteration. Therefore, the process is repeated until all the radiances of the pixels are replaced. When no change occurs for a given value of this constraint, it is decremented by 0.5. The radiance values calculated during an iteration are allocated to the pixels concerned only at the end of that iteration.

For a set of 8 multiscopic images, the radiance attributed to the pixels of an invalid match $m$ is obtained by the formula:

$$E(p) = \frac{\sum_{i=1}^{Card(m)} \sum_{j=0}^{7} \alpha_{ij}\, E_i(p_j)}{\sum_{i=1}^{Card(m)} \sum_{j=0}^{7} \alpha_{ij}} \qquad (3)$$

where $p$ is the current pixel of the invalid match processed, $p_j$ is a pixel of the neighborhood of the pixel considered, with $j \in \{0, .., 7\}$ its identifier, $E_i(p_j)$ is the radiance of the pixel $p_j$ and $\alpha_{ij}$ corresponds to the confidence index of the pixel $p_j$.

5.2 Correction based on disparities

In this section, we propose a solution to correct the disparities originally attributed to the pixels of invalid matches.

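Going back to Eq. (3) of section 5.1, the weighted interpolation can be sketched as below for one color component. This is only an illustration under stated assumptions: the array layout (one row per pixel of the match, one column per neighbor), the function name and the `min_confidence_sum` parameter standing for the gradually released constraint are hypothetical, and the iterative scheduling of Algorithm 1 is not reproduced.

```python
import numpy as np

def interpolate_match_radiance(neighbor_radiance, confidence,
                               min_confidence_sum=0.0):
    """Radiance assigned to the pixels of an invalid match, as in Eq. (3).

    neighbor_radiance  -- array of shape (Card(m), 8): E_i(p_j) for the 8
                          neighbors of each pixel of the match
    confidence         -- array of the same shape with values in {0, 0.5, 1}
    min_confidence_sum -- hypothetical threshold on the sum of confidences
    """
    E = np.asarray(neighbor_radiance, dtype=float)
    alpha = np.asarray(confidence, dtype=float)
    total = alpha.sum()
    if total == 0 or total < min_confidence_sum:
        return None  # neighborhood not reliable enough yet
    return float((alpha * E).sum() / total)

# A match of two pixels: neighbors of the first belong to valid matches
# (confidence 1), neighbors of the second were already corrected (0.5).
E = [[10.0] * 8, [20.0] * 8]
alpha = [[1.0] * 8, [0.5] * 8]
value = interpolate_match_radiance(E, alpha)
```

Neighbors with a zero confidence index contribute nothing to either sum, which matches the rule that uncorrected invalid pixels cannot influence another pixel.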
According to our observations, the disparity of these pixels is wrong because many of them are on the contours of the objects. A bad disparity leads to the pairing of pixels that do not represent the same point of the scene. Invalid matches cannot be kept as is. The pixels of these matches are separated so that they can be processed separately, and are considered as singleton matches.

The proposed methods are divided into three steps (see Figure 4). Once the disparity maps are computed, a new pairing of the pixels is carried out. We then look in this new list of matches for the pixels that now correspond to those of the invalid matches. We can then calculate an HDR value only for the pixels of the invalid matches. The radiance of the other pixels remains unchanged.

Figure 4. Pipeline for the generation of new HDR values from the list of invalid matches.

Two methods are proposed for correcting disparities. The first one is based on heuristics about the disparities of the neighborhood. The second one performs the computation on monochromatic values.

5.2.1 Method based on heuristics on the disparities of the neighborhood

The choice of a method for modifying disparities by heuristics is guided by the knowledge we have of the disparity of each pixel of the images. We assume that, within a restricted neighborhood, there is little risk of a large difference between the disparities of the pixels. The advantage of disparity estimation methods is their sensitivity to the texture of the objects of the scene. Their calculation is then more precise than the modification of the colors presented in the previous section. It may then be possible to retrieve the texture details in a scene based on the continuity of the disparities. Horizontal continuities are favored over vertical continuities.
If the disparities are equal, the pixels are inside an object; otherwise the pixels are at the edge of an object or on two different objects.

The proposed method is summarized in Algorithm 2. Considering the neighborhood of size 3 × 3, the required number of exploitable pixels decreases from 8 to 1: priority is given to the greatest number of exploitable pixels, then the condition is gradually released. The algorithm is interrupted when the threshold on the number of exploitable pixels becomes zero. This stop criterion implies that the processing loop may stop before all pixels are processed. The use of the neighborhood to determine the new radiance to be allocated to a pixel depends on the disparities of the neighboring pixels, which can potentially propagate errors. The recalculated disparities are considered with the same reliability as the initial disparities.

A distinction is made between a valid match and an invalid match, but a differentiation is also made at the level of the pixels. A pixel can be exploitable or non-exploitable. Its exploitability is based on the LDR value of its color components. If at least one of them is not within a defined range, the pixel is considered to be non-exploitable. Otherwise, it is exploitable and can therefore be used in the proposed methods. Under this definition, a pixel with an under-exposed or over-exposed color component is considered to be non-exploitable.

Figure 5. Pixel and disparity labelling of the 3 × 3 neighborhood of a pixel p of an invalid match.

The disparity d of the current pixel is calculated as a function of the disparities of the exploitable pixels of the neighborhood. It is defined by Algorithm 3.
This choice is guided by the fact that the pairing difficulties essentially occur at the edges of the objects. Horizontal and then vertical consistencies are favored, first in equality and then in increment. If the neighboring disparities do not correspond to these cases, a median value of the disparities is attributed. The method is based on the assumption that the disparity is wrong, so the new disparity needs to be different from the initial one. However, this method does not ensure the modification of all the disparities of the pixels of the invalid matches. The new disparity may sometimes be identical to the original disparity when no other choice is available in the attribution.

5.2.2 Method based on mono-chromatic disparities

The second method proposed to modify the disparities of the pixels of invalid matches is based on disparity maps computed independently on each color component R, G, B. Assuming that a pixel may not be under-exposed or over-exposed on at least one of the color components, we exploit this independence so as to modify the disparity of a pixel by taking into account the disparities calculated on the three color components separately.

¹ http://vision.middlebury.edu/stereo/data/

The method first considers only one component on each of the multi-exposure images. The same component is chosen for the whole set of images. Thus, from the RGB images, we obtain a set of images corresponding to the R component only (and likewise for the G and B components). Each of the three sets of images is therefore used separately to generate the disparity maps associated with each color component. For each pixel, we now have three disparities obtained respectively from the R images, the G images and the B images. The choice of the disparity is based on the LDR values R, G and B of the processed pixel.
A pixel whose color is close to the median of the value range on which the LDR images are stored is neither under-exposed nor over-exposed. Its disparity is therefore likely to have been correctly calculated. We rank the values R, G and B according to their distance from the median. The disparity chosen is the one calculated from the color component whose distance to the median is the lowest. We verify that this disparity is different from the initial disparity. If this is not the case, the choice falls on the disparity calculated on the component whose value is the second closest to the median, and so on. As with the previous method, it is possible that the new disparity cannot be different from the initial disparity. In this case, the correction is not made and the initial disparity is retained.

6. RESULTS AND DISCUSSIONS

6.1 Image dataset

We tested our approaches on three different datasets. The first one is generated using the OctoCam multiview camera [17], equipped with eight horizontally aligned and synchronized lenses and designed to deliver 3D content for auto-stereoscopic displays. This camera is based on a simplified epipolar geometry that permits strong assumptions in 3D stereovision algorithms [18], with horizontally aligned epipolar lines. Each of its sensors allows the acquisition of 10 bits per color channel. A neutral density filter is fixed on each lens; consequently, a different percentage of the light reaches the sensor for each view, hence the acquired images represent different exposures.

The second series of images was generated using the POV-Ray ray-tracer. We reproduced the geometry of the OctoCam to render eight images of a synthetic scene from aligned viewpoints.

The third source of images is the database made available on the Internet by Middlebury University¹, which offers images acquired from different points of view and with different exposures.
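As an illustration of the selection rule of section 5.2.2, the sketch below picks, for one pixel, the disparity computed on the color component closest to the median of the LDR range, and falls back to the next component when the candidate equals the initial disparity. The function name, the argument layout and the default 8-bit range are assumptions made for the example (the OctoCam images, for instance, are stored on 10 bits).

```python
def select_monochromatic_disparity(rgb, channel_disparities,
                                   initial_disparity, ldr_max=255):
    """Choose a new disparity among those computed on R, G and B.

    rgb                 -- LDR values (R, G, B) of the processed pixel
    channel_disparities -- disparities computed on the R, G and B images
    initial_disparity   -- disparity originally attributed to the pixel
    ldr_max             -- upper bound of the LDR range (8 bits assumed)
    """
    median = ldr_max / 2.0
    # Rank the components by the distance of their value to the median.
    order = sorted(range(3), key=lambda c: abs(rgb[c] - median))
    for c in order:
        if channel_disparities[c] != initial_disparity:
            return channel_disparities[c]
    # No candidate differs: the correction is not made.
    return initial_disparity

# G is closest to the median but gives the initial disparity, so the
# disparity of the second closest component (B) is selected.
new_d = select_monochromatic_disparity((250, 120, 10), (5, 7, 9), 7)
```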
Contrary to those of the OctoCam, the images proposed by Middlebury are acquired according to a parallel geometry, so it was necessary to choose a region of interest in the original images in order to satisfy the requirements of the off-centered parallel geometry.

6.2 Objective quality metrics

To judge the quality of an HDR image, the most common method is to compare it with a reference image. The HDR-VDP (High Dynamic Range Visible Difference Predictor), developed by Mantiuk et al. [13], was designed for this type of image. An update of this metric was proposed by Narwaria et al. [14]. One of the major disadvantages of this predictor is the number of parameters to be considered. However, for simplicity, a version is available online². On the same principle, the authors have developed a method for HDR videos [15].

Aydin et al. [2] and Valenzise et al. [23] proposed comparing two HDR images with the measures traditionally used to compare two LDR images, such as PSNR (Peak Signal-to-Noise Ratio) and SSIM (Structural Similarity Index), by adapting the data used. Instead of taking into account the color components Red, Green and Blue, the data is transformed to become perceptually uniform. When the data were not linear, Narwaria et al. [14] demonstrated better HDR-VDP performance. Hanhart et al. [8] conclude that HDR-VDP-2 and PQ2VIFP are the best generic predictors of visual quality, as they show less content dependency than the other metrics.

6.3 Evidence of limitation on objects' edges

Visual errors occur because of the difficulty of aggregating enough pixels in a match to obtain a correct HDR value. Whatever the set of images considered, errors are mostly localized at the contours of the objects, since there are fewer correspondents there.
Figure 6 presents the image acquired on view #0 for the Dolls image set of the Middlebury database, and the number of pixels contained in the match to which the current pixel belongs. In the neighborhood of objects' edges, the number of pixels contained in the matches decreases.

Figure 6. Distribution of the number of pixels per match in the HDR image generated on view #0 of the Dolls image set. The associated color code is: gray: 7 pixels, cyan: 6 pixels, green: 5 pixels, yellow: 4 pixels, orange: 3 pixels, red: 2 pixels and black: 1 pixel.

6.4 Evaluation of the method of automatic detection of invalid matches

A comparison is made between the reference HDR images and the HDR images produced by our uncorrected method. This comparison is made independently on each of the points of view. A threshold, fixed at 6% of the range of pixel radiance values, is considered to be tolerable. The pixels whose Euclidean distance in the RGB space is greater than this threshold are considered incorrect. We thus obtain reference values for the number of correct pixels and the number of incorrect pixels. These values are compared to those obtained by our automatic detection.

Table 1 shows a series of percentages calculated on the images acquired with the OctoCam when the neutral density filters are fixed on some of the camera lenses, and on the image set Dwarves of the Middlebury database. The Correct Pixels column relates the number of pixels correctly classified as correct by our method to the number of correct pixels in the reference method. The Incorrect Pixels column corresponds to the number of pixels correctly classified as incorrect by the proposed detection method relative to the number of incorrect pixels in the reference method.
The False Positives column represents the number of pixels that are incorrectly classified as incorrect by our method relative to the total number of pixels that are classified as incorrect by the same method. The False Negatives column relates the number of pixels that are incorrectly classified as correct by the proposed method to the total number of pixels that are classified as correct by the same method.

² http://driiqm.mpi-inf.mpg.de

Table 1. Distribution of the detection of invalid matches in comparison with the difference between the reference HDR image and the generated HDR image.

Figure 7. Erroneous pixels automatically detected by our presented approach.

These results show that fewer pixels are flagged as incorrect by our method than by the reference method, but they correspond to pixels whose radiance must absolutely be corrected. The reference method needs a threshold whose value is difficult to choose. Our experiments show that increasing this threshold increases the number of false positives and reduces the number of false negatives of the proposed method, while reducing it prevents the detection of pixels that are clearly erroneous in the HDR images produced. An example case is shown in Figure 7.

6.5 Evaluation of the quality of the generated HDR images

For our work, we have chosen to use HDR-VDP-2 (see Figure 8). However, in order to apply this metric, we need HDR reference images to compare to. To obtain the best possible reference images, we independently generate per-viewpoint reference HDR images by combining several exposures of the same viewpoint using the weighted average method of Debevec and Malik [6]. We use three exposures for the Middlebury database sets and four for the sets acquired with the OctoCam and the synthetic sets.

Figure 8. Color scale of the probability of detection of visible differences used by HDR-VDP-2.

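The four ratios reported in Table 1 (section 6.4) can be computed from two boolean masks, as in the following sketch. It is not the evaluation code used for the paper: the function names are hypothetical, and the sketch assumes that the "range of pixel radiance values" is measured on the reference image.

```python
import numpy as np

def reference_incorrect_mask(generated, reference, tolerance=0.06):
    """Pixels whose RGB Euclidean distance to the reference exceeds the
    tolerance (6% of the radiance range, measured here on the reference)."""
    generated = np.asarray(generated, dtype=float)
    reference = np.asarray(reference, dtype=float)
    radiance_range = reference.max() - reference.min()
    distance = np.linalg.norm(generated - reference, axis=-1)
    return distance > tolerance * radiance_range

def detection_scores(detected_incorrect, reference_incorrect):
    """Ratios of the Correct Pixels, Incorrect Pixels, False Positives and
    False Negatives columns, from two boolean masks of the same shape."""
    det = np.asarray(detected_incorrect, dtype=bool)
    ref = np.asarray(reference_incorrect, dtype=bool)

    def ratio(count, total):
        return float(count) / float(total) if total else float("nan")

    return {
        "correct_pixels": ratio(np.sum(~det & ~ref), np.sum(~ref)),
        "incorrect_pixels": ratio(np.sum(det & ref), np.sum(ref)),
        "false_positives": ratio(np.sum(det & ~ref), np.sum(det)),
        "false_negatives": ratio(np.sum(~det & ref), np.sum(~det)),
    }

reference = [[[0.0, 0.0, 0.0], [10.0, 10.0, 10.0]]]
generated = [[[0.0, 0.0, 0.0], [10.0, 10.0, 12.0]]]
mask = reference_incorrect_mask(generated, reference)
scores = detection_scores([True, True, False, False],
                          [True, False, False, True])
```

Note that the first two ratios are normalized by the counts of the reference method, whereas the last two are normalized by the counts of the detection method, as stated in the column definitions.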
Among the three correction methods proposed, the color-based method is the one that produces the highest quality HDR images for the images of the Middlebury database. Figure 9 shows particular areas which clearly highlight the improvements made locally by the color-based method. As shown in Figure 10 and Figure 11, many of the perceptible defects in the HDR images have been corrected. The images produced by HDR-VDP-2 show a larger blue color range with this method on views 0 and 6, which indicates that the human eye does not perceive any difference with the reference image in this area. By considering the neighborhood of a pixel, it is possible to assign an HDR value to each pixel. This is not possible with the original method, because of the saturation threshold applied to the pixels of a match, nor with the two methods based on disparities, since for some pixels the disparity cannot be modified.

For the dataset acquired with the OctoCam (Figure 12) and the synthetic images (Figure 13), we can see that the results of the proposed methods are close. Using HDR-VDP-2, we can see some differences between the images produced by each of the methods and those produced by the method without correction, but the pixels concerned are few in number. These two sets of images have fewer automatically detected pixels, which results in the correction of a small number of pixels in the whole image. Therefore, the differences between the methods are not perceived.

Methods based on the improvement of the disparities do not make it possible to achieve the quality of the HDR images produced by the color-based method.
The choice to change the disparities is based on the desire to reduce the number of invalid matches as much as possible, but these two methods encounter two difficulties. The first is the need to find a disparity different from the one originally attributed, which is not always possible since the algorithms may lead to the choice of the same disparity. The second difficulty is, despite the correction of the disparities, to be able to assign a new correct HDR value.

The changes made by the color-based method are more important on the extreme views because the pixels belonging to invalid matches are on these images. Indeed, the images of lower exposures are placed on these views, which makes their pixels more vulnerable. Few pixels are erroneous on the central views, so these images appear identical to their initial versions.

Figure 9. HDR and HDR-VDP-2 images corresponding to selected areas in the Middlebury database images for the color-based, heuristic-on-disparity and mono-chromatic methods.

Figure 10. HDR and HDR-VDP-2 images on each point of view for the color-based, heuristic-on-disparity and mono-chromatic methods for the Moebius image set of the Middlebury database.

Figure 11. HDR and HDR-VDP-2 images on each point of view for the color-based, heuristic-on-disparity and mono-chromatic methods for the Dwarves image set of the Middlebury database.

Figure 12. HDR and HDR-VDP-2 images on each point of view for the color-based, heuristic-on-disparity and mono-chromatic methods for the image set acquired with the OctoCam mounted with neutral density filters.

Figure 13. HDR and HDR-VDP-2 images on each point of view for the color-based, heuristic-on-disparity and mono-chromatic methods for the image set generated with the POV-Ray raytracing software.

6.6 Highlighting errors due to the pixel mapping method

The studies we carried out on the geometric and colorimetric coherence of the data acquired for the generation of 3D HDR images [4] do not completely explain the presence of all the errors. In order to highlight the role of wrong results from the matching method, new reference images are computed. For these images, the disparity maps and the associated match lists are calculated from images of the same exposure, which gives optimal disparity maps. An HDR image per point of view is then calculated from these new elements. This image becomes a new reference that we compare to the reference image used in previous comparisons (see Figure 14). The central image emphasizes that the errors in the methods come from the disparity method and not from its application to images of multiple exposures.

Figure 14. Comparisons: a) of the initial reference HDR image with the new reference HDR image, b) of the HDR image with the initial reference HDR image, c) of the HDR image with the new reference HDR image.

The results of the color-based method clearly show that corrections have been made in areas where errors are due to multi-exposure (frames B). The HDR-VDP-2 shows that the corrections made are generally better since the zones A are also improved. It is interesting to note, however, that in box C, errors reintroduced by the method appear (see Figure 15). The radiance attributed to them becomes of lower quality than the initial radiance.

Figure 15. Comparisons: a) of the initial reference HDR image with the new reference HDR image, b) of the HDR image with the new reference HDR image, c) of the HDR image after color-based correction with the new reference HDR image.

7. CONCLUSION

In this paper, we address the difficult topic of HDR generation for multiscopic data.
The main difficulties highlighted in this research work come from the fact that HDR generation requires accurate registration of pixels. However, multiscopic images present non-linear displacement, which makes this registration process difficult. Disparity is explored as a registration approach. We provide an in-depth analysis of the limitations of disparity-based HDR generation. One difficulty comes from the fact that disparity solvers rely on color matching whereas the input data in our case are differently exposed. Another difficulty is intrinsic to disparity algorithms, for which object borders and outliers (highlights) have always been known to be difficult to address. In this paper, we propose an automatic method to locally detect wrongly generated HDR values and we propose correction approaches. Our results show that we are able to correctly identify erroneous pixels and to significantly improve the results.

As future work, we would like to explore solutions that solve jointly for disparity and HDR values, thus benefiting from both depth and HDR knowledge. Akhavan et al. [1] demonstrated the benefit of HDR imaging for disparity computation.

8. ACKNOWLEDGMENT

This work was funded by the ANR project ReVeRY ANR-17-CE23-0020.

9. REFERENCES

[1] Akhavan, T.; Kaufmann, H. Backward compatible HDR stereo matching: a hybrid tone-mapping-based framework. EURASIP Journal on Image and Video Processing, 2015 (1), 2015.
[2] Aydın, T.O.; Mantiuk, R.; Seidel, H.P. Extending Quality Metrics to Full Dynamic Range Images. Human Vision and Electronic Imaging XIII; 2008; Proceedings of SPIE, pp. 6806–10.
[3] Bonnard, J.; Loscos, C.; Valette, G.; Nourrit, J.M.; Lucas, L. High-dynamic range video acquisition with a multiview camera. Proceedings SPIE, Optics, Photonics, and Digital Technologies for Multimedia Applications II 2012, 8436, 84360A–0 – 84360A–11.
[4] Bonnard, J.; Valette, G.; Nourrit, J.M.; Loscos, C. Analysis of the Consequences of Data Quality and Calibration on 3D HDR Image Generation. European Signal Processing Conference (EUSIPCO); 2014.
[5] HDR Image Generation. European Signal Processing Conference (EUSIPCO); 2014.
[6] Debevec, P.E.; Malik, J. Recovering High Dynamic Range Radiance Maps from Photographs. Proceedings of SIGGRAPH Computer Graphics, 1997, pp. 369–378.
[7] Drago, F.; Myszkowski, K.; Annen, T.; Chiba, N. Adaptive Logarithmic Mapping For Displaying High Contrast Scenes. Computer Graphics Forum 2003, 22, 419–426.
[8] Hanhart, P.; Rerabek, M.; Ebrahimi, T. Subjective and Objective Evaluation of HDR Video Coding Technologies. Quality of Multimedia Experience (QoMEX), 2016 Eighth International Conference on, 2016, pp. 1–6.
[9] Hartley, R.; Zisserman, A. Multiple view geometry in computer vision. Cambridge University Press, 2003.
[10] Lin, H.-Y.; Chang, W.-Z. High dynamic range imaging for stereoscopic scene representation, in Image Processing (ICIP), 2009 16th IEEE International Conference on, 2009, pp. 4305–4308.
[11] Loscos, C.; Jacobs, K. High-Dynamic Range Imaging for Dynamic Scenes. CRC Press/Taylor & Francis, p. 259.
[12] Mann, S.; Picard, R.W. On being 'undigital' with digital cameras: Extending dynamic range by combining differently exposed pictures, in IS&T, 48th annual conference, Washington, D.C., May 1995, pp. 442–448.
[13] Mantiuk, R.; Daly, S.J.; Myszkowski, K.; Seidel, H.P. Predicting visible differences in high dynamic range images: model and its calibration. Proceedings SPIE 2005, 5666, 204–214.
[14] Narwaria, M.; Mantiuk, R.K.; Da Silva, M.P.; Le Callet, P. HDR-VDP-2.2: a calibrated method for objective quality prediction of high-dynamic range and standard images. Journal of Electronic Imaging 2015, 24, 010501.
[15] Narwaria, M.; Perreira Da Silva, M.; Le Callet, P. HDR-VQM: An objective quality measure for high dynamic range video. Signal Processing: Image Communication 2015, 35, 46–60.
[16] Niquin, C.; Prévost, S.; Remion, Y. An occlusion approach with consistency constraint for multiscopic depth extraction. International Journal of Digital Multimedia Broadcasting (IJDMB), special issue Advances in 3DTV: Theory and Practice 2010, 2010, 1–8.
[17] Prévoteau, J.; Chalençon-Piotin, S.; Debons, D.; Lucas, L.; Remion, Y. Multi-view shooting geometry for multiscopic rendering with controlled distortion. International Journal of Digital Multimedia Broadcasting (IJDMB), special issue Advances in 3DTV: Theory and Practice 2010, 2010, 1–11.
[18] Prévoteau, J.; Lucas, L.; Remion, Y. Shooting and Viewing Geometries in 3DTV. In 3D Video: From Capture to Diffusion; Lucas, L.; Loscos, C.; Remion, Y., Eds.; Wiley-ISTE, 2013; pp. 71–90.
[19] Ramirez Orozco, R.; Martin, I.; Loscos, C.; Vasquez, P.P. Full high-dynamic range images for dynamic scenes, in Optics, Photonics, and Digital Technologies for Multimedia Applications II, 843609, SPIE, Jun. 2012.
[20] Ramirez Orozco, R.; Loscos, C.; Martin, I.; Artusi, A. Multiscopic HDR image sequence generation, in Winter School of Computer Graphics, V. Skala, Ed., Pilsen, Czech Republic, p. 111.
[21] Reinhard, E.; Ward, G.; Pattanaik, S.; Debevec, P.; Heidrich, W.; Myszkowski, K. High Dynamic Range Imaging: Acquisition, Display, and Image-based Lighting, 2nd ed.; The Morgan Kaufmann series in Computer Graphics, Elsevier (Morgan Kaufmann): Burlington, MA, 2010; p. 649.
[22] Sun, N.; Mansour, H.; Ward, R. HDR image construction from multi-exposed stereo LDR images, in Proceedings of the IEEE International Conference on Image Processing (ICIP), Hong Kong, 2010.
[23] Valenzise, G.; De Simone, F.; Lauga, P.; Dufaux, F. Performance evaluation of objective quality metrics for HDR image compression. Proceedings of SPIE 2014, 9217, 92170C–92170C–10.
