Transferring Multiscale Map Styles Using Generative Adversarial Networks

Advances in artificial intelligence (AI) make it possible to learn stylistic design criteria from existing maps or other visual art and to transfer these styles to new digital maps. In this paper, we propose a novel AI-based framework for map style transfer that is applicable across multiple map scales. Specifically, we identify and transfer the stylistic elements from a target group of visual examples, including Google Maps, OpenStreetMap, and artistic paintings, to unstylized GIS vector data through two generative adversarial network (GAN) models. We then train a binary classifier based on a deep convolutional neural network to evaluate whether the style-transferred map images preserve the original map design characteristics. Our experimental results show that GANs have great potential for multiscale map style transfer, but many challenges remain that require future research.

💡 Research Summary

The paper presents a novel framework that leverages Generative Adversarial Networks (GANs) to automate multiscale map style transfer, a task traditionally handled by manual cartographic rule‑based workflows. The authors focus on the challenge of producing a coherent visual identity across zoom levels (1–20) for web‑based maps, where existing tools (CartoCSS, Mapbox Studio, TileMill) require extensive hand‑crafted styling. To address this, they investigate two GAN architectures: Pix2Pix, a paired image‑to‑image translation model, and CycleGAN, an unpaired domain‑translation model.

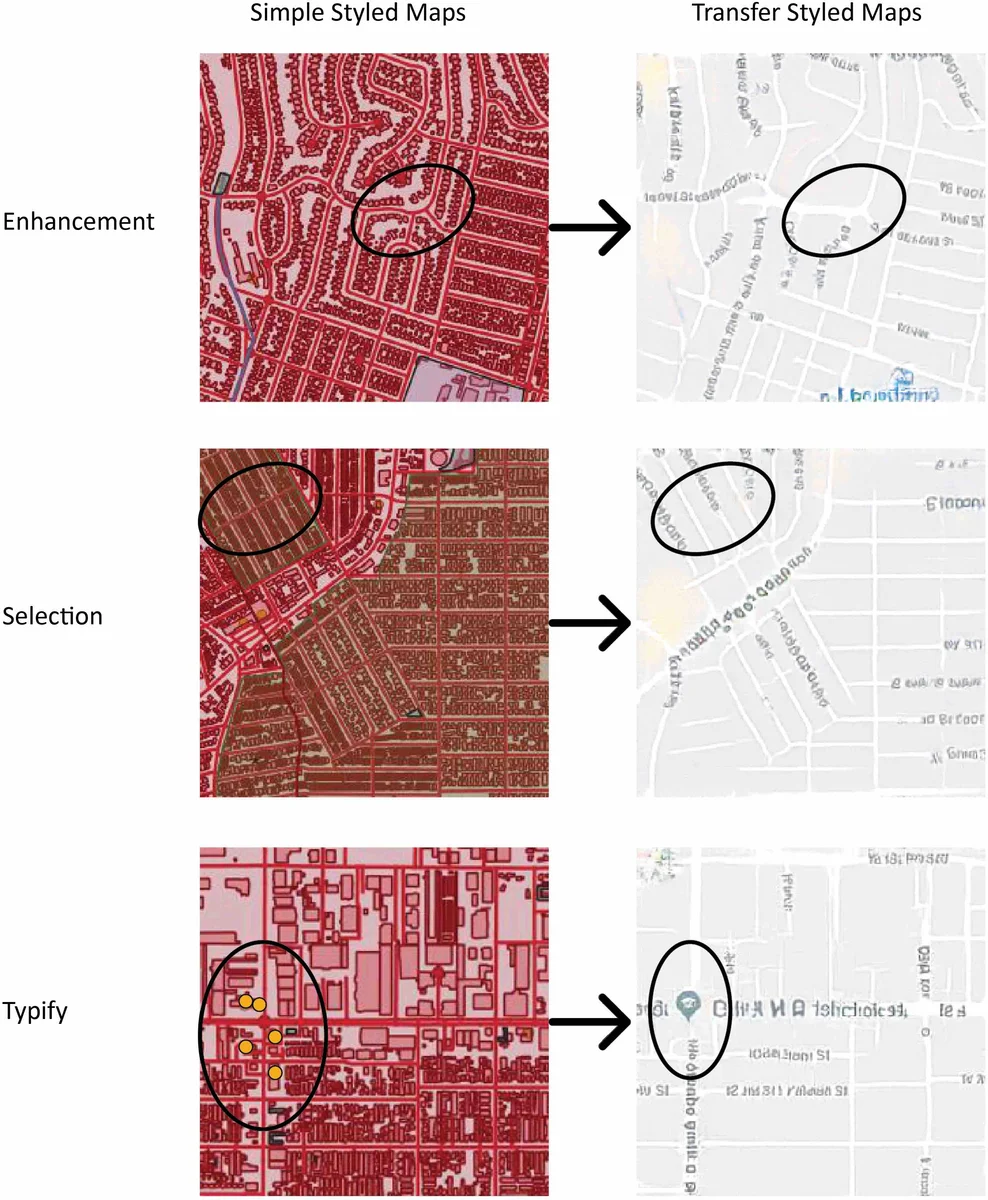

Data preparation begins with raw OpenStreetMap (OSM) vector data, which is rendered with a minimal, uniform color scheme to create “simple styled” map tiles. These tiles serve as the content source. As target styles, the authors collect Google Maps tiles (representing a commercial cartographic style) and a set of artistic paintings (e.g., Monet, Picasso) to explore non‑cartographic aesthetics. All tiles are generated at 256 × 256 pixels, aligned in the Spherical Mercator projection (EPSG:900913, the informal code for the projection now registered as EPSG:3857), and organized into a hierarchical pyramid in which each zoom level corresponds to a distinct map scale.
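The tile pyramid described above follows the usual web-map convention, in which zoom level z divides the world into 2^z × 2^z tiles. As a rough illustration, the standard Spherical Mercator tiling formulas can be sketched in Python as follows. The function names, the sample coordinates, and the WGS84 radius constant are assumptions made for this example and are not taken from the paper.

```python
import math

EARTH_RADIUS_M = 6378137.0  # WGS84 semi-major axis used by Spherical Mercator


def latlon_to_tile(lat_deg, lon_deg, zoom):
    """Return the (x, y) index of the XYZ tile containing a WGS84 point.

    Hypothetical helper: uses the standard web-map tiling scheme with the
    origin at the top-left corner of the world.
    """
    n = 2 ** zoom  # number of tiles along each axis at this zoom level
    lat_rad = math.radians(lat_deg)
    x = int((lon_deg + 180.0) / 360.0 * n)
    y = int((1.0 - math.asinh(math.tan(lat_rad)) / math.pi) / 2.0 * n)
    # Clamp so points on the eastern or southern edge stay inside the grid.
    x = min(max(x, 0), n - 1)
    y = min(max(y, 0), n - 1)
    return x, y


def ground_resolution(lat_deg, zoom, tile_size=256):
    """Approximate metres per pixel at a given latitude and zoom level."""
    circumference = 2.0 * math.pi * EARTH_RADIUS_M
    return circumference * math.cos(math.radians(lat_deg)) / (tile_size * 2 ** zoom)


if __name__ == "__main__":
    # Approximate coordinates for central Los Angeles (illustrative only).
    for zoom in (5, 10, 15):
        tile = latlon_to_tile(34.05, -118.25, zoom)
        print(zoom, tile, round(ground_resolution(34.05, zoom), 2))
```

Because each zoom step halves the ground resolution, the same geographic feature occupies very different pixel footprints across levels, which is one reason a single learned style may not carry over cleanly between scales.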

For Pix2Pix, the authors pair each OSM tile with the corresponding Google Maps tile at the same geographic extent and zoom level, enabling supervised learning of a deterministic mapping from content to style. The loss function combines the standard conditional GAN adversarial loss with an L1 pixel‑wise loss, encouraging the generator to produce outputs that are both realistic and close to the ground‑truth target. CycleGAN, by contrast, does not require paired data; it learns two mappings (X→Y and Y→X) between the OSM domain and the artistic‑painting domain, using adversarial losses for each direction plus a cycle‑consistency loss that forces a round‑trip reconstruction to resemble the original input. This allows the transfer of purely visual styles (color palettes, brushstroke textures) onto map content, even when the source and target images share no geographic correspondence.
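For reference, the two objectives described above can be written out in the notation of the original Pix2Pix and CycleGAN formulations. This is a sketch under stated assumptions: the symbols are chosen for this summary (x is a simple-styled OSM tile, y a target-style image, G and F are generators, D, D_X, and D_Y are discriminators), the generator noise input is omitted for brevity, and the weights λ are hyperparameters whose values this summary does not report.

$$
\begin{aligned}
\mathcal{L}_{\text{cGAN}}(G, D) &= \mathbb{E}_{x,y}\big[\log D(x, y)\big] + \mathbb{E}_{x}\big[\log\big(1 - D(x, G(x))\big)\big] \\
\mathcal{L}_{L1}(G) &= \mathbb{E}_{x,y}\big[\lVert y - G(x) \rVert_1\big] \\
G^{*} &= \arg\min_{G}\max_{D}\; \mathcal{L}_{\text{cGAN}}(G, D) + \lambda\, \mathcal{L}_{L1}(G)
\end{aligned}
$$

$$
\begin{aligned}
\mathcal{L}_{\text{cyc}}(G, F) &= \mathbb{E}_{x}\big[\lVert F(G(x)) - x \rVert_1\big] + \mathbb{E}_{y}\big[\lVert G(F(y)) - y \rVert_1\big] \\
\mathcal{L}(G, F, D_X, D_Y) &= \mathcal{L}_{\text{GAN}}(G, D_Y, X, Y) + \mathcal{L}_{\text{GAN}}(F, D_X, Y, X) + \lambda\, \mathcal{L}_{\text{cyc}}(G, F)
\end{aligned}
$$

In the first objective the L1 term ties each output to its paired Google Maps tile. In the second, no paired target exists, so the cycle term is the only constraint that keeps the map content recognizable after stylization.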

After training, the models are applied to map tiles covering Los Angeles and San Francisco at multiple zoom levels. To assess whether the generated images are perceived as legitimate maps, the authors construct a binary classifier named IsMap, based on the Inception‑v3 architecture. The classifier is trained on two classes: (1) map tiles (both original and style‑transferred) and (2) non‑map photographs harvested from Flickr. Evaluation is based on the confusion-matrix counts: true positives (TP), true negatives (TN), false positives (FP), and false negatives (FN). Pix2Pix achieves a TP rate above 85%, indicating that most of its outputs are recognized as maps, while CycleGAN attains roughly 70%, reflecting the difficulty of preserving fine cartographic symbols when only visual style is transferred.
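To make the evaluation terms concrete, the sketch below shows how TP, TN, FP, and FN counts and the rates derived from them can be computed from binary labels. It is a minimal illustration only: the function names are hypothetical, the labels are toy values invented for the example (1 = map, 0 = non-map), and nothing here reproduces the paper's data or the IsMap classifier itself.

```python
def confusion_counts(y_true, y_pred):
    """Count TP, TN, FP, FN for binary labels where 1 = map and 0 = non-map."""
    counts = {"TP": 0, "TN": 0, "FP": 0, "FN": 0}
    for truth, pred in zip(y_true, y_pred):
        if truth and pred:
            counts["TP"] += 1  # a map correctly recognized as a map
        elif not truth and not pred:
            counts["TN"] += 1  # a photo correctly rejected
        elif not truth and pred:
            counts["FP"] += 1  # a photo mistaken for a map
        else:
            counts["FN"] += 1  # a map mistaken for a photo
    return counts


def rates(counts):
    """Derive the TP rate, FP rate, and accuracy from the four counts."""
    positives = counts["TP"] + counts["FN"]
    negatives = counts["TN"] + counts["FP"]
    total = positives + negatives
    return {
        "tp_rate": counts["TP"] / positives if positives else float("nan"),
        "fp_rate": counts["FP"] / negatives if negatives else float("nan"),
        "accuracy": (counts["TP"] + counts["TN"]) / total if total else float("nan"),
    }


if __name__ == "__main__":
    # Toy labels, not results from the paper.
    y_true = [1, 1, 1, 1, 0, 0]
    y_pred = [1, 1, 1, 0, 0, 1]
    c = confusion_counts(y_true, y_pred)
    print(c, rates(c))
```

A TP rate computed this way only says how often generated tiles are classified as maps. It does not measure label legibility or symbol fidelity.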

The study highlights several strengths and limitations. GANs can indeed learn and reproduce complex visual styles without explicit CartoCSS rules, and the paired Pix2Pix approach preserves detailed symbol attributes (road width, label color) better than the unpaired CycleGAN. However, challenges remain: (a) limited geographic and scale diversity in the training set leads to degradation of small symbols at high zoom levels; (b) mode collapse and training instability occasionally produce artifacts; (c) the binary “map vs. photo” evaluation does not capture nuanced cartographic quality aspects such as label legibility, color contrast, or thematic clarity.

Future work suggested includes (i) incorporating scale‑conditional inputs into the GAN to explicitly model zoom‑level variations, (ii) designing composite loss functions that simultaneously enforce style fidelity and symbol preservation, (iii) expanding the dataset to cover more regions, scales, and thematic layers, and (iv) developing richer evaluation protocols that combine human perceptual studies with quantitative map‑readability metrics. If these directions are pursued, the proposed framework could evolve into a practical, automated styling pipeline for web mapping platforms, reducing the manual burden on cartographers while enabling novel, artistically inspired map designs.
