Utterance-level Aggregation For Speaker Recognition In The Wild

The objective of this paper is speaker recognition "in the wild"-where utterances may be of variable length and also contain irrelevant signals. Crucial elements in the design of deep networks for this task are the type of trunk (frame level) network…

Authors: Weidi Xie, Arsha Nagrani, Joon Son Chung

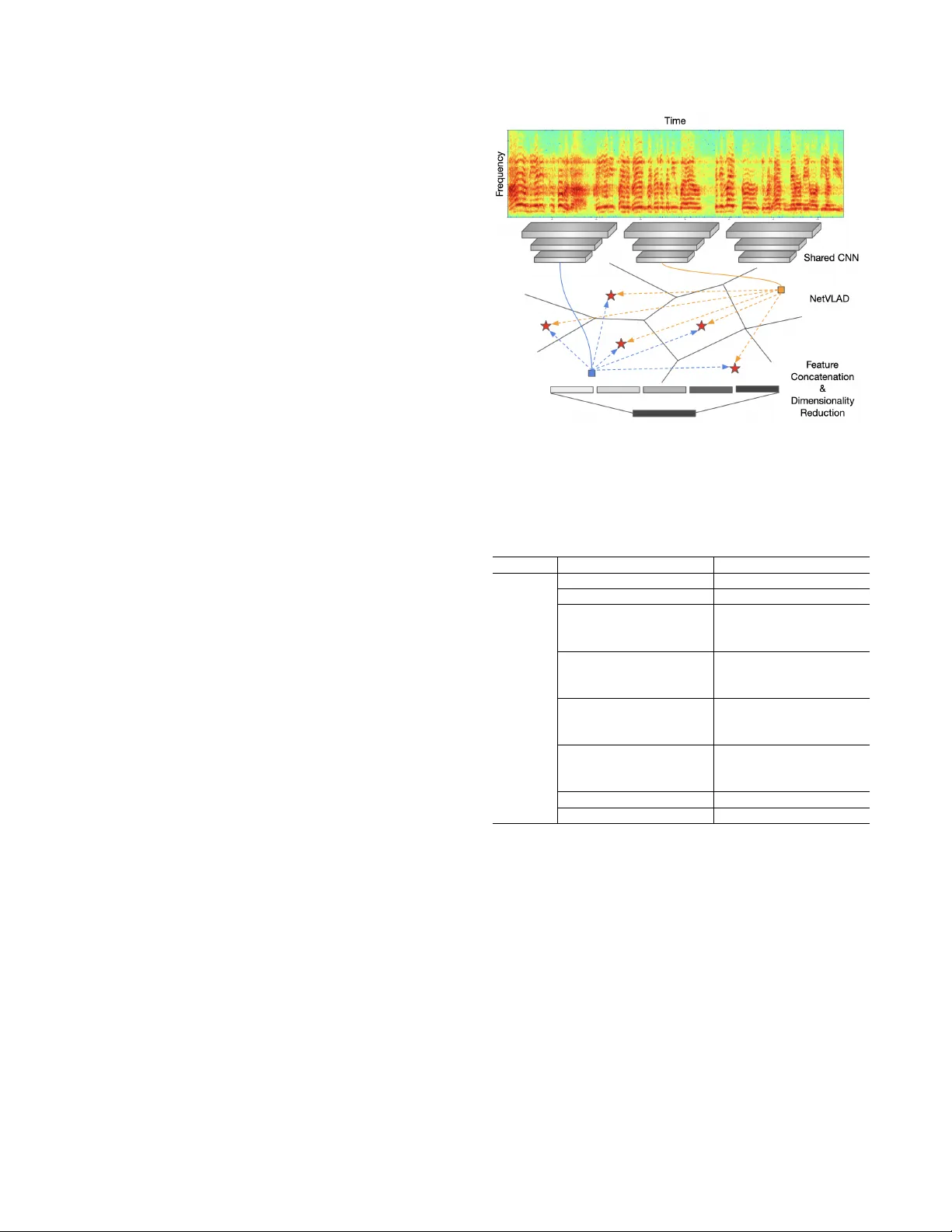

UTTERANCE-LEVEL A GGREGA TION FOR SPEAKER RECOGNITION IN THE WILD W eidi Xie 1 , Arsha Na grani 1 , J oon Son Chung 1 , 2 and Andr ew Zisserman 1 1 V isual Geometry Group, Department of Engineering Science, Uni versity of Oxford, UK 2 Nav er Corporation, South Korea { weidi, arsha, joon, az } @robots.ox.ac.uk ABSTRA CT The objectiv e of this paper is speaker recognition ‘in the wild’ – where utterances may be of v ariable length and also con- tain irrelev ant signals. Crucial elements in the design of deep networks for this task are the type of trunk (frame lev el) net- work, and the method of temporal aggregation. W e propose a powerful speaker recognition deep network, using a ‘thin- ResNet’ trunk architecture, and a dictionary-based NetVLAD or GhostVLAD layer to aggregate features across time, that can be trained end-to-end. W e show that our network achiev es state of the art performance by a significant margin on the VoxCeleb1 test set for speaker recognition, whilst requiring fewer parameters than pre vious methods. W e also in vestigat e the effect of utterance length on performance, and conclude that for ‘in the wild’ data, a longer length is beneficial. Index T erms — speaker recognition, speak er v erification, speech, deep learning, CNNs 1. INTR ODUCTION Speaker recognition ‘in the wild’ has receiv ed an increas- ing amount of interest recently due to the av ailability of free large-scale datasets [1, 2, 3], and the easy accessibility of deep learning framew orks [4, 5, 6]. For speaker recognition, the goal is to condense information into a single utterance-level representation, unlike speech recognition where frame-lev el representations are desired. Obtaining a good utterance lev el representation becomes particularly important for speech ob- tained under noisy and unconstrained conditions, where irrel- ev ant parts of the signal must be filtered out. Therefore, a k ey area of research in deep learning for speaker recognition is to in vestig ate how to effecti vely aggregate frame-lev el charac- teristics into utterance-lev el speaker representations. Earlier deep neural network (DNN) based speaker recog- nition systems ha ve na ¨ ıvely used pooling methods that have been successful for visual recognition tasks, such as av erage pooling [2, 3, 7, 8] or fully connected layers [9, 10] to con- dense frame-level information into utterance-lev el representa- tions. Although such methods serve the purpose of aggregat- ing frame-level information into a single representation whilst http://www.robots.ox.ac.uk/ ˜ vgg/research/speakerID still allowing back-propagation, the aggregation is not content dependent, so they are not able to consider which parts of the input signal contain the most relev ant information. On the other hand, traditional methods for speaker and language identification such as i-vector systems hav e ex- plored the use of statistical or dictionary-based methods for aggregation. A number of recent works have proposed to bring similar methods to deep speaker recognition [11, 12, 13, 14, 15] (described in Sec. 1.1). Based on these works, we propose to marry the best of both Con volutional Neu- ral Networks (henceforth, CNNs) and a dictionary-based NetVLAD [16] layer , where the former is kno wn for cap- turing local patterns, and the latter can be discriminati vely trained for aggregating information into a fixed-sized de- scriptor from an input of arbitrary size, such that the final representation of the utterance is unaffected by irrelev ant information. W e make the following contributions: (i) W e propose a powerful speaker recognition deep network, based on a NetVLAD [16] or GhostVLAD [17] layer that is used to aggregate ‘thin-ResNet’ architecture frame features; (ii) The entire network is trained end-to-end using a large margin softmax loss on the large-scale VoxCeleb2 [3] dataset, and achiev es a significant improvement ov er the current state- of-the-art verification performance on VoxCeleb1 , despite using fewer parameters than the current state-of-the-art ar- chitectures [3, 13]; and, (iii) W e analyse the effect of input segment length on performance, and conclude that for ‘in the wild’ sequences having longer utterances (4s or more) is a significant improv ement ov er shorter segments. 1.1. Related works End-to-end deep learning based systems for speaker recogni- tion usually follo w a similar three-stage pipeline: (i) frame lev el feature extraction using a deep neural network (DNN); (ii) temporal aggre gation of frame le vel features; and (iii) op- timisation of a classification loss. In the following, we re view the three components in turn. The trunk DNN architecture used is often either a 2D CNN with con volutions in both the time and frequency do- main [2, 3, 15, 18, 19, 20], or a 1D CNN with con v olutions applied only to the time domain [11, 12, 13, 21]. A number of papers [8, 22] ha ve also used LSTM-based front-end archi- tectures. The output from the feature extractor is a variable length feature vector , dependant on the length of the input utter - ance. A verage pooling layers have been used in [2, 3, 8] to aggregate frame-lev el feature vectors to obtain a fixed length utterance-lev el embedding. [11] introduces an extension of the method in which the standard deviation is used as well as the mean – this method is termed statistical pooling , and used by [12, 21]. Unlike these methods which ingest infor - mation from all frames with equal weighting, [20, 22] hav e employed attention models to assign weight to the more dis- criminativ e frames. [13] combines the attention models and the statistical model to propose attentive statistics pooling – this method holds the current state-of-the-art performance on the VoxCeleb1 dataset. The final pooling strategy of in- terest is the Learnable Dictionary Encoding (LDE) proposed by [14, 15]. This method is closely based on the NetVLAD layer [16, 23] designed for image retriev al. T ypically , such systems are trained end-to-end for classi- fication with a softmax loss [13] or one of its v ariants, such as the angular softmax [15]. In some cases, the network is fur- ther trained for verification using the contrastiv e loss [2, 3, 24] or other metric learning losses such as the triplet loss [7]. Similarity metrics like the cosine similarity or PLDA are of- ten adopted to generate a final pairwise score. 2. METHODS For speaker recognition, the ideal model should have the fol- lowing properties: (1) It should ingest arbitrary time lengths as input, and produce a fixed-length utterance-level descrip- tor . (2) The output descriptor should be compact (i.e. lo w- dimensional), requiring little memory , to facilitate efficient storage and retriev al. (3) The output descriptor should also be discriminative , such that the distance between descriptors of different speak ers is larger than those of the same speak er . T o satisfy all the aforementioned properties, we use a modified ResNet in a fully con volutional way to encode in- put 2D spectrograms, followed by a NetVLAD/GhostVLAD layer for feature aggregation along the temporal axis. This produces a fixed-length output descriptor . Intuitiv ely , the VLAD layer can be thought of as trainable discriminativ e clustering: ev ery frame-lev el descriptor will be softly as- signed to dif ferent clusters, and residuals are encoded as the output features. T o allow efficient verification (i.e. low memory , fast similarity computation), we further add a fully connected layer for dimensionality reduction. Discriminativ e representations emerge because the entire network is trained end-to-end for speaker identification. The network is shown in Figure 1 and described in more detail in the following paragraphs. Fig. 1 : Network architecture. It consists of two parts: fea- tur e extraction , where a shared CNN is used to encode the spectrogram and extract frame-lev el features, and aggr ega- tion , which aggregates all the local descriptors into a single compact representation of arbitrary length. Module Input Spectrogram ( 257 × T × 1 ) Output Size Thin ResNet con v2d, 7 × 7 , 64 257 × T × 64 max pool, 2 × 2 , stride ( 2 , 2 ) 128 × T / 2 × 64 conv , 1 × 1 , 48 conv , 3 × 3 , 48 conv , 1 × 1 , 96 × 2 128 × T / 2 × 96 conv , 1 × 1 , 96 conv , 3 × 3 , 96 conv , 1 × 1 , 128 × 3 64 × T / 4 × 128 conv , 1 × 1 , 128 conv , 3 × 3 , 128 conv , 1 × 1 , 256 × 3 32 × T / 8 × 256 conv , 1 × 1 , 256 conv , 3 × 3 , 256 conv , 1 × 1 , 512 × 3 16 × T / 16 × 512 max pool, 3 × 1 , stride ( 2 , 2 ) 7 × T / 32 × 512 con v2d, 7 × 1 , 512 1 × T / 32 × 512 T able 1 : The thin-ResNet used for frame lev el feature extrac- tion. ReLU and batch-norm layers are not shown. Each row specifies the # of con volutional filters, their sizes, and the # filters. This architecture has only 3 million parameters com- pared to the standard ResNet-34 (22 million). Featur e Extraction. The first stage in volv es feature extrac- tion from input spectrograms. While any netw ork can be used in our learning framew ork, we opt for a modified ResNet with 34 layers. Compared to the standard ResNet used before by [3], we cut down the number of channels in each residual block, making it a thin ResNet-34 (T able 1). NetVLAD. The second part of the network uses NetVLAD [16] to aggregate frame-le vel descriptors into a single utterance- lev el vector . Here we provide a brief ov erview of NetVLAD (for full details please refer to [16]). The thin ResNet maps the input spectrogram ( R 257 × T × 1 ) to frame-le vel descriptors with size R 1 × T / 32 × 512 . The NetVLAD layer then takes dense descriptors as input and produces a single K × D matrix V , where K refers to the number of chosen cluster , and D refers to the dimensionality of each cluster . Concretely , the matrix of descriptors V is computed using the following equation: V ( k , j ) = T / 32 X t =1 e w k x t + b k P K k 0 =1 e w 0 k x t + b k 0 ( x t ( j ) − c k ( j )) (1) where { w k } , { b k } and { c k } are trainable parameters, with k ∈ [1 , 2 , ..., K ] . The first term corresponds to the soft- assignment weight of the input vector x i for cluster k , while the second term computes the residual between the vector and the cluster centre. The final output is obtained by performing L 2 normalisation and concatenation. T o keep computational and memory requirements lo w , dimensionality reduction is performed via a Fully Connected (FC) layer , where we pick the output dimensionality to be 512 . W e also experiment with the recently proposed GhostVLAD [17] layer , where some of the clusters are not included in the final concatenation, and so do not contribute to the final representation, these are re- ferred to as ‘ghost clusters’ (we used two in our implementa- tion). Therefore, while aggregating the frame-le vel features, the contribution of the noisy and undesirable sections of a speech segment to normal VLAD clusters is effecti vely down- weighted, as most of their weights hav e been assigned to the ‘ghost cluster’. For further details, please see [17]. 3. EXPERIMENTS 3.1. Datasets W e train our model end-to-end on the VoxCeleb2 [3] dataset (only on the ‘dev’ partition, this contains speech from 5,994 speakers) for identification and test on the VoxCeleb1 verification test sets [3]. Note that the de velopment set of VoxCeleb2 is completely disjoint from the VoxCeleb1 dataset ( i.e. no speakers in common). 3.2. T raining Loss Besides the standard softmax loss, we also experiment with the additive margin softmax (AM-Softmax) classification loss [25] during training. This loss is known to improve ver - ification performance by introducing a margin in the angular space. The loss is giv en by the follo wing equation: L i = − log e s (cos θ y i − m ) e s (cos θ y i − m ) + P j 6 = y i e s cos( θ j ) (2) where L i refers to cost of assigning the sample to the correct class, θ y = ar ccos ( w T x ) refers to the angle between sample features ( x ) and the decision hyperplane ( w ), as both vectors hav e been L2 normalised. The goal is therefore to minimise this angle by making cos ( θ y i ) − m as large as possible, where m refers to the angular margin. The hyper -parameter s con- trols the “temperature” of the softmax loss, producing higher gradients to the well-separated samples (and further shrinking the intra-class v ariance). W e used the default values m = 0 . 4 and s = 30 [25]. 3.3. T raining Details During training, we use a fixed size spectrogram correspond- ing to a 2.5 second temporal se gment, extracted randomly from each utterance. Spectrograms are generated in a slid- ing windo w fashion using a hamming window of width 25ms and step 10ms. W e use a 512 point FFT , gi ving us 256 fre- quency components, which together with the DC component of each frame gi ves a short-time Fourier transform (STFT) of size 257 × 250 (frequency × temporal) out of every 2.5 sec- ond crop. The spectrogram is normalised by subtracting the mean and dividing by the standard deviation of all frequency components in a single time step. No v oice activity detection (V AD), or automatic silence remov al is applied. W e use the Adam optimiser with an initial learning rate of 1 e − 3 , and decrease the learning rate by 10 after ev ery 36 epochs until con ver gence. 4. RESUL TS In this section we first compare the performance of our NetVLAD and GhostVLAD architectures trained using dif- ferent losses to the state of the art, and then inv estigate how performance varies with utterance length. 4.1. V erification on V oxCeleb1 The trained network is e v aluated on three different test lists from the VoxCeleb1 dataset: (1) the original VoxCeleb1 test list with 40 speakers; (2) the extended VoxCeleb1-E list that uses the entire VoxCeleb1 (train and test splits) for ev aluation; and (3) the challenging VoxCeleb1-H list where the test pairs are drawn from identities with the same gender and nationality . In addition, we find that there are a small number of errors in the VoxCeleb1-E and VoxCeleb1-H lists, and hence we ev aluate on a cleaned up version of both lists as well, which we release publically . The network is tested on the full length of the test segment. W e do not use any test time augmentation, which could potentially lead to slight performance gains. T able 2 compares the performance of our models to the current state-of-the-art on the original VoxCeleb1 test set. Our model outperforms all previous methods. W ith standard Front-end model Loss Dims Aggregation Training set EER (%) V oxCeleb1 test set Nagrani et al. [2] I-vectors + PLD A – – – V oxCeleb1 8.8 Nagrani et al. [2] VGG-M Softmax 1024 T AP V oxCeleb1 10.2 Cai et al. [15] ResNet-34 A-Softmax + PLD A 128 T AP V oxCeleb1 4.46 Cai et al. [15] ResNet-34 A-Softmax + PLD A 128 SAP V oxCeleb1 4.40 Cai et al. [15] ResNet-34 A-Softmax + PLD A 128 LDE V oxCeleb1 4.48 Okabe et al. [13] TDNN (x-vector) Softmax 1500 T AP V oxCeleb1 4.70 Okabe et al. [13] TDNN (x-vector) Softmax 1500 SAP V oxCeleb1 4.19 Okabe et al. [13] TDNN (x-vector) Softmax 1500 ASP V oxCeleb1 3.85 Hajibabaei et al. [19] ResNet-20 A-Softmax 128 T AP V oxCeleb1 4.40 Hajibabaei et al. [19] ResNet-20 AM-Softmax 128 T AP V oxCeleb1 4.30 Chung et al. [3] ResNet-34 Softmax + Contrastiv e 512 T AP V oxCeleb2 5.04 Chung et al. [3] ResNet-50 Softmax + Contrastiv e 512 T AP V oxCeleb2 4.19 Ours Thin ResNet-34 Softmax 512 T AP V oxCeleb2 10.48 Ours Thin ResNet-34 Softmax 512 NetVLAD V oxCeleb2 3.57 Ours Thin ResNet-34 AM-Softmax 512 NetVLAD V oxCeleb2 3.32 Ours Thin ResNet-34 Softmax 512 GhostVLAD V oxCeleb2 3.22 Ours Thin ResNet-34 AM-Softmax 512 GhostVLAD V oxCeleb2 3.23 Ours (cleaned † ) Thin ResNet-34 Softmax 512 GhostVLAD V oxCeleb2 3.24 V oxCeleb1-E Chung et al. [3] ResNet-50 Softmax + Contrastiv e 512 T AP V oxCeleb2 4.42 Ours Thin ResNet-34 Softmax 512 GhostVLAD V oxCeleb2 3.24 Ours (cleaned † ) Thin ResNet-34 Softmax 512 GhostVLAD V oxCeleb2 3.13 V oxCeleb1-H Chung et al. [3] ResNet-50 Softmax + Contrastiv e 512 T AP V oxCeleb2 7.33 Ours Thin ResNet-34 Softmax 512 GhostVLAD V oxCeleb2 5.17 Ours (cleaned † ) Thin ResNet-34 Softmax 512 GhostVLAD V oxCeleb2 5.06 T able 2 : Results for verification on the original V oxCeleb1 test set [2] and the extended and hard test sets (V oxCeleb-E and V oxCeleb-H) [3]. [15, 13, 19] do not report results on the V oxCeleb-E and V oxCeleb-H) [3] test sets. T AP: T emporal A verage Pooling. SAP: Self-attentive Pooling Layer [15], † Cleaned up versions of the test lists have been released publically . W e encourage other researchers to ev aluate on these lists. softmax loss and a NetVLAD aggregation layer , it outper- forms the original ResNet-based architecture [3] by a sig- nificant margin (EER of 3.57% vs 4.19%) whilst requiring far fewer parameters (10 vs 26 million). By replacing the standard softmax with the additi ve margin softmax (AM- Softmax), a further performance gain is achiev ed (3.32% EER). The GhostVLAD layer , which excludes irrelev ant information from the aggregation, additionally mak es a mod- est contribution to performance (3.22% EER). On the chal- lenging V oxCeleb1-H test set, we outperform the original ResNet-based architecture [3] (EER of 5.17% vs 7.33%), which is by a larger mar gin than on the original V oxCeleb1 test set. The most similar architecture to ours is the dictionary based method of Cai et al. [15], which we also outperform (EER of 3.22% vs 4.48% ). W e note that training a soft- max loss based on features from temporal av erage pooling (T AP) yields extremely poor results (EER of 10.48%). W e conjecture that the features from T AP are typically good at optimizing the inter -class dif ference (i.e., separating dif ferent speakers), but not good at reducing the intra-class variation (i.e. making features of the same speaker compact). There- fore, contrastiv e loss with online hard sample mining leads to a significant performance boost, as demonstrated in [3] for T AP . It is possible that this would also gi ve a performance boost for NetVLAD/GhostVLAD pooling. 4.2. Additional experiment on GhostVLAD In T able 3, we study the effect of the number of clusters in the GhostVLAD layer . Despite small differences in performance, we show that VLAD aggregation is robust to the number of clusters and to two dif ferent loss functions. Loss Aggregation Clusters G Clusters EER (%) Softmax GhostVLAD 8 2 3.22 AMSoftmax GhostVLAD 8 2 3.23 Softmax GhostVLAD 10 2 3.37 AMSoftmax GhostVLAD 10 2 3.34 Softmax GhostVLAD 12 2 3.30 Softmax GhostVLAD 14 2 3.31 T able 3 : Results for verification on the original V oxCeleb1 test set. All models used the same architecture (Thin ResNet- 34), and we vary the number of VLAD clusters and the loss function. 4.3. Probing v erification based on length T able 4 sho ws the effect of the length of the test segment on speaker recognition performance. In order to provide a fair comparison on lengths up to 6 seconds, we restricted the testing dataset (V oxCeleb1) to speech segments that were 6 seconds or longer (87,010 segments or 56.7% of the total dataset). T o generate verification pairs, for each speaker in the V oxCeleb1 dataset (1251 speakers in total), we randomly sample 100 positive pairs and 100 negati ve pairs, resulting in 25,020 verification pairs. During testing, segments of length 2s, 3s, 4s, 5s and 6s are randomly cropped from each verifi- cation pair . W e repeat this process three times, and compute mean and standard deviation. Segment length(s) 2 3 4 5 6 EER 7.97 ± 0.06 5.73 ± 0.04 4.70 ± 0.02 4.10 ± 0.02 3.39 ± 0.02 T able 4 : Utterance length (in seconds) on performance. As shown in T able 4, there is indeed a strong correlation between verification performance and sequence length. For ‘in the wild’ sequences, some of the data may be noise, si- lence, or speech from other speakers, and a single short se- quence may be unlucky and consist of predominantly these irrelev ant signals. As the temporal length increases, there is a higher chance of capturing rele vant speech signals from the actual speaker . 5. CONCLUSION In this paper , we hav e proposed a po werful speaker recogni- tion network, using a ‘thin-ResNet’ trunk architecture, and a dictionary-based NetVLAD and GhostVLAD layers to ag- gregate features across time that can be trained end-to-end. The network achieves state-of-the-art performance on the popular VoxCeleb1 test set for speaker recognition, whilst requiring fewer parameters than previous methods. W e have also shown the ef fect of utterance length on performance, and concluded that for ‘in the wild’ data, a longer length is beneficial. Acknowledgements. Funding for this research is pro vided by the EPSRC Programme Grant Seebibyte EP/M013774/1. AN is supported by a Google PhD Fello wship in Machine Perception, Speech T echnology and Computer V ision. 6. REFERENCES [1] M. McLaren, L. Ferrer , D. Castan, and A. Lawson, “The speakers in the wild (SITW) speaker recognition database, ” in INTERSPEECH , 2016. [2] A. Nagrani, J. S. Chung, and A. Zisserman, “V oxCeleb: a large-scale speaker identification dataset, ” in INTER- SPEECH , 2017. [3] J. S. Chung, A. Nagrani, and A. Zisserman, “V ox- Celeb2: Deep speak er recognition, ” in INTERSPEECH , 2018. [4] M. Abadi, A. Agarwal, P . Barham, E. Brevdo, Z. Chen, C. Citro, G.S. Corrado, A. Davis, J. Dean, M. Devin, et al., “T ensorflo w: Large-scale machine learning on heterogeneous distrib uted systems, ” arXiv preprint arXiv:1603.04467 , 2016. [5] A. Paszk e, S. Gross, S. Chintala, G. Chanan, E. Y ang, Z. DeV ito, Z. Lin, A. Desmaison, L. Antiga, and A. Lerer , “ Automatic dif ferentiation in pytorch, ” 2017. [6] A. V edaldi and K. Lenc, “Matconvnet: Con volutional neural networks for matlab, ” in Pr oc. A CMM , 2015. [7] C. Li, X. Ma, B. Jiang, X. Li, X. Zhang, X. Liu, Y . Cao, A. Kannan, and Z. Zhu, “Deep speaker: an end-to- end neural speaker embedding system, ” arXiv preprint arXiv:1705.02304 , 2017. [8] L. W an, Q. W ang, A. Papir , and I.L. Moreno, “General- ized end-to-end loss for speaker v erification, ” in 2018 IEEE International Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2018, pp. 4879–4883. [9] Y . Lukic, C. V ogt, O. D ¨ urr , and T . Stadelmann, “Speaker identification and clustering using con volutional neural networks, ” in IEEE 26th International W orkshop on Machine Learning for Signal Pr ocessing (MLSP) . IEEE, 2016, pp. 1–6. [10] I. Lopez-Moreno, J. Gonzalez-Dominguez, O. Plchot, D. Martinez, J. Gonzalez-Rodriguez, and P . Moreno, “ Automatic language identification using deep neural networks, ” in Acoustics, Speec h and Signal Pr ocess- ing (ICASSP), 2014 IEEE International Confer ence on . IEEE, 2014, pp. 5337–5341. [11] D. Snyder , D. Garcia-Romero, D. Pov ey , and S. Khu- danpur , “Deep neural network embeddings for text- independent speaker verification, ” Pr oc. Interspeech 2017 , pp. 999–1003, 2017. [12] S. Shon, H. T ang, and J. Glass, “Frame-le vel speaker embeddings for text-independent speaker recognition and analysis of end-to-end model, ” arXiv pr eprint arXiv:1809.04437 , 2018. [13] K. Okabe, T . K oshinaka, and K. Shinoda, “ Attenti ve statistics pooling for deep speaker embedding, ” arXiv pr eprint arXiv:1803.10963 , 2018. [14] W . Cai, Z. Cai, X. Zhang, X. W ang, and M. Li, “ A no vel learnable dictionary encoding layer for end-to-end lan- guage identification, ” arXiv pr eprint arXiv:1804.00385 , 2018. [15] W . Cai, J. Chen, and M. Li, “Exploring the en- coding layer and loss function in end-to-end speaker and language recognition system, ” arXiv pr eprint arXiv:1804.05160 , 2018. [16] R. Arandjelovi ´ c, P . Gronat, A. T orii, T . Pajdla, and J. Sivic, “NetVLAD: CNN architecture for weakly su- pervised place recognition, ” in Pr oc. CVPR , 2016. [17] Y . Zhong, R. Arandjelovi ´ c, and A. Zisserman, “GhostVLAD for set-based face recognition, ” in Asian Confer ence on Computer V ision, ACCV , 2018. [18] W . Cai, J. Chen, and M. Li, “ Analysis of length normal- ization in end-to-end speaker verification system, ” arXiv pr eprint arXiv:1806.03209 , 2018. [19] M. Hajibabaei and D. Dai, “Unified hypersphere embedding for speaker recognition, ” arXiv pr eprint arXiv:1807.08312 , 2018. [20] G. Bhattacharya, J. Alam, and P . Kenn y , “Deep speaker embeddings for short-duration speaker verification, ” in Pr oc. Interspeec h , 2017, pp. 1517–1521. [21] D. Snyder , D. Garcia-Romero, G. Sell, D. Pov ey , and S. Khudanpur, “X-vectors: Rob ust dnn embeddings for speaker recognition, ” ICASSP , Calgary , 2018. [22] F A Cho wdhury , Quan W ang, Ignacio Lopez Moreno, and Li W an, “ Attention-based models for text- dependent speaker verification, ” arXiv pr eprint arXiv:1710.10470 , 2017. [23] Jinkun Chen, W eicheng Cai, Danwei Cai, Zexin Cai, Haibin Zhong, and Ming Li, “End-to-end language identification using netfv and netvlad, ” in Proc. ISC- SLP , 2018. [24] D. Chen, S. Tsai, V . Chandrasekhar , G. T akacs, H. Chen, R. V edantham, R. Grzeszczuk, and B. Girod, “Residual enhanced visual vectors for on-de vice image matching, ” in Asilomar , 2011. [25] F . W ang, W . Liu, H. Liu, and J. Cheng, “ Additiv e margin softmax for face verification, ” arXiv pr eprint arXiv:1801.05599 , 2018.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment