Classifying the Correctness of Generated White-Box Tests: An Exploratory Study

White-box test generator tools rely only on the code under test to select test inputs, and capture the implementation’s output as assertions. If there is a fault in the implementation, it could get encoded in the generated tests. Tool evaluations usually measure fault-detection capability using the number of such fault-encoding tests. However, these faults are only detected, if the developer can recognize that the encoded behavior is faulty. We designed an exploratory study to investigate how developers perform in classifying generated white-box test as faulty or correct. We carried out the study in a laboratory setting with 54 graduate students. The tests were generated for two open-source projects with the help of the IntelliTest tool. The performance of the participants were analyzed using binary classification metrics and by coding their observed activities. The results showed that participants incorrectly classified a large number of both fault-encoding and correct tests (with median misclassification rate 33% and 25% respectively). Thus the real fault-detection capability of test generators could be much lower than typically reported, and we suggest to take this human factor into account when evaluating generated white-box tests.

💡 Research Summary

The paper investigates a largely overlooked aspect of white‑box test generation: the ability of developers to correctly interpret the tests that such tools produce. White‑box generators such as Microsoft’s IntelliTest (formerly Pex) explore a program’s code, generate inputs, and record the observed outputs as assertions. When the underlying implementation contains a fault, the generated test will capture that faulty behavior as a passing test, effectively encoding the bug. Traditional evaluations of these tools therefore measure fault‑detection capability by counting “fault‑encoding” tests or by using mutation scores, assuming that a test that fails on the original version but passes on a faulty version signals a discovered fault. This assumption, however, presumes that a human reviewer can reliably distinguish whether a generated assertion reflects correct or incorrect behavior.

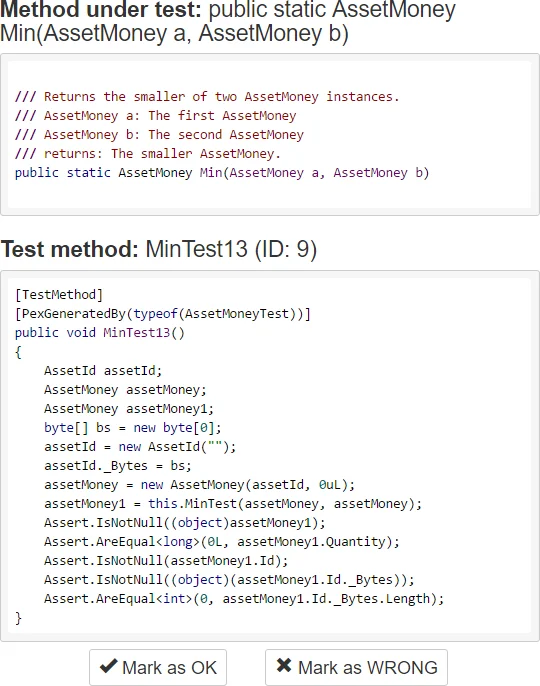

To assess this assumption, the authors conducted an exploratory, interpretivist study with 54 graduate students (MSc level) who had prior exposure to unit testing and test‑generation concepts. The study used two open‑source C# projects—NBitcoin (AssetMoney class) and Math.NET Numerics (Combinatorics class)—selected for moderate size, inter‑class dependencies, and the presence of at least one injectable fault. IntelliTest generated 15 test cases (five methods, three tests each) that all passed on the buggy version; the participants were asked to label each test as “OK” (correct) or “Faulty” (encodes a bug). The participants worked in a lab setting, with screen capture and logging to record their actions (running tests, debugging, code navigation, etc.). The authors then analyzed the data quantitatively (binary classification metrics, misclassification rates, time per test) and qualitatively (coding of observed behaviors).

Key quantitative findings:

- The median misclassification rate for fault‑encoding tests was 33 %; only two participants classified all 15 tests correctly.

- For tests that actually reflected correct behavior, the median misclassification rate was 25 %.

- On average, participants spent about two minutes per test, with considerable variance (some taking up to five minutes).

Qualitative analysis revealed that participants repeatedly performed test execution, debugging, and code inspection, but these activities did not correlate strongly with higher accuracy. Moreover, prior experience with testing or test‑generation tools did not significantly affect performance, suggesting that familiarity alone does not guarantee correct interpretation of generated tests.

The authors argue that these results expose a critical validity threat in many prior evaluations of white‑box test generators, which often ignore the human factor. If developers misclassify a substantial portion of generated tests, the reported fault‑detection capabilities (e.g., mutation scores, number of fault‑encoding tests) may be substantially inflated. Consequently, the practical usefulness of such tools in real development settings could be far lower than suggested by tool‑centric studies.

Based on the findings, the paper offers several recommendations:

- Incorporate human validation steps into the evaluation of test‑generation tools, explicitly accounting for classification error rates.

- Reduce the manual verification burden by employing implicit or derived oracles (e.g., robustness checks, regression comparisons) that can automatically flag suspicious assertions.

- Enhance developer training to include systematic techniques for interpreting generated assertions and distinguishing expected from observed behavior.

- Conduct replication studies with professional developers, multiple programming languages, and a broader set of tools (e.g., EvoSuite, Randoop) to assess the generalizability of the observed misclassification rates.

In summary, the paper provides the first empirical evidence that developers struggle to correctly classify automatically generated white‑box tests, both for fault‑encoding and correct cases. This human limitation calls for a re‑examination of how we measure the effectiveness of test‑generation tools and suggests that future research and practice should integrate human‑centric evaluation methods and supportive tooling to bridge the gap between automated test creation and reliable fault detection.

Comments & Academic Discussion

Loading comments...

Leave a Comment