Towards Unsupervised Single-Channel Blind Source Separation using Adversarial Pair Unmix-and-Remix

Blind single-channel source separation is a long standing signal processing challenge. Many methods were proposed to solve this task utilizing multiple signal priors such as low rank, sparsity, temporal continuity etc. The recent advance of generativ…

Authors: Yedid Hoshen

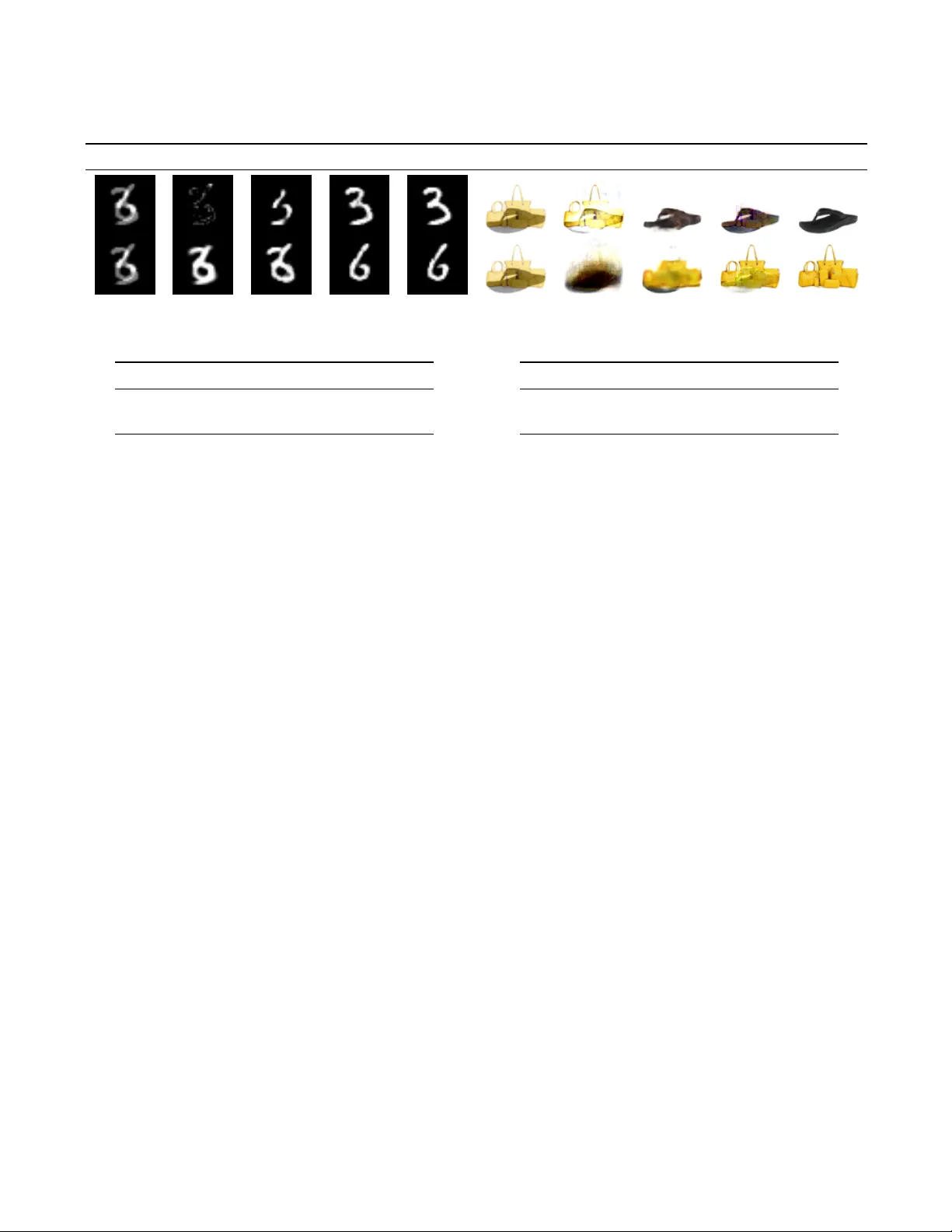

TO W ARDS UNSUPER VISED SINGLE-CHANNEL BLIND SOURCE SEP ARA TION USING AD VERSARIAL P AIR UNMIX-AND-REMIX Y edid Hoshen Hebre w Univ ersity of Jerusalem and Facebook AI Research ABSTRA CT Blind single-channel source separation is a long standing sig- nal processing challenge. Many methods were proposed to solve this task utilizing multiple signal priors such as low rank, sparsity , temporal continuity etc. The recent advance of generativ e adversarial models presented ne w opportunities in signal regression tasks. The power of adversarial training howe ver has not yet been realized for blind source separa- tion tasks. In this work, we propose a novel method for blind source separation (BSS) using adversarial methods. W e rely on the independence of sources for creating adv ersarial con- straints on pairs of approximately separated sources, which ensure good separation. Experiments are carried out on im- age sources validating the good performance of our approac h, and presenting our method as a promising approach for solv- ing BSS for general signals. Index T erms — BSS, GANs, Source Separation, Adver- sarial T raining, Unmixing 1. INTR ODUCTION The task of single-channel blind source separation (BSS) sets to reconstruct each of se veral sources (typically additively) mixed together . The task is poorly determined as more infor - mation needs to be reconstructed than the number of obser- vations. BSS methods therefore need to rely on strong signal priors in order to constrain source reconstruction. Many pri- ors were proposed for this task each gi ving rise to dif ferent optimization criteria. Source priors include: sparsity in time- frequency , non-Gaussian distrib ution of sources and low rank of sources. Recently , deep neural netw ork methods that learn high quality signal representations (a form of prior learning) made much progress on single-channel source separation for cases where clean samples of each of the sources were a vail- able in training. This allowed creating synthetically mix ed datasets, where random clean samples from each source are sampled and additi vely mix ed. A deep neural network is then used to regress each of the components from the synthetic mixture. Such approaches are very effecti ve due to learning source priors, rather than using generic hand-specified pri- ors. Recent work was carried out to reduce the supervision required to having clean samples of only a single source, ho w- ev er when only mixed source samples are available (and no clean samples), classical methods are still used. In this paper , we introduce a machine learning-based ap- proach for the single-channel BSS case i.e. when no clean source samples are available at training time. Our method is based on generati ve adversarial netw orks (GANs) and uses a mixture of distributional, energy and cycle constraints to achiev e high-quality unsupervised source separation. Our method makes the assumption of distrib utional independence between sources. In this w ork, we concentrate on the case where we are gi ven mix ed images (which is similar to ha ving short audio clips) and do not take into account temporal pri- ors (e.g. HMM models), which are left to future work. Our method is experimentally shown to outperform state-of-the- art single-channel BSS methods for image signals. Due to the strong performance on image signal separation, we belie ve that our approach presents a no vel and promising direction for solving the long-standing task of single-channel BSS for general signals. 2. PREVIOUS WORK Single-channel BSS has recei ved much attention. The best results are typically obtained by using strong priors about the signals. Robust-PCA [1] separates instrumental and vocal sources by assuming that one source is low-rank while the vocal source is sparse. Results are improv ed with supervision [2]. For repetitiv e signals, Kernel Additive Models [3] may be used. Using temporal continuity was exploited by Ro weis [4] and V irtanen [5]. W ork was also done on designing priors for image mixture separation e.g. Le vin and W eiss [6] used the presence of corners, although this method required access to clean signals. Machine learning methods take away some of the dif- ficulty in manual prior design. Speaker source separation was achiev ed by deep neural networks by [7]. Permutation- in variant training [8] allows speaker independent separation using deep learning methods. The above methods were used in a supervised source separation context i.e. when clean sam- ples of each source are av ailable. Supervised deep methods were also used for image separation (e.g. in the context of reflection removal [9, 10]). Generativ e adversarial networks (GANs) [11] were used by some researchers in a supervised Fig. 1 . A schematic of our architecture: we select a pair of samples y 1 and y 2 , and separate them into estimated sources. W e then flip the combination of sources between the tw o signals to synthesize z 1 , z 2 . W e optimize T ( y ) (implemented as y · M ( y )) to make the ne w mixtures z 1 , z 2 indistinguishable from the original mixtures y 1 , y 2 . W e then separate and remix the ne w mixtures again. The optimal separation function will recover the original mixtures y 1 , y 2 . setting, for learning a better loss function [12, 13], typically with modest gains. They hav e been similarly used in image separation tasks with more significant gains [9]. There ha ve been fe w attempts to apply deep methods for the unsupervised regime. In a recent w ork [14], we proposed using deep learning methods for semi-supervised separation (when samples of one source are av ailable but not of the other). One of our baselines proposed a GAN-based method for learning the masking function. This method was outper- formed by our main method - Neural Egg Separation which is non-adversarial. In this paper , we deal with the more chal- lenging scenario, where no clean examples are av ailable for any of the sources. The architecture in DRIT [15] used for image style and content disentanglement bears some relation to ours as it uses cycle and pair-adv ersarial constraints . Our approach is dif- ferent in key ways: we operate in the input rather than latent domain, we use masking rather than a set of encoders signif- icantly constraining the network and improving results. W e also introduce the energy equity term which is critical for the success of our approach. 3. AD VERSARIAL UNMIX-AND-REMIX In the following, we denote the set of mixed signals as y 1 , y 2 ..y N . For ease of e xplanation, our formulation will assume two sources (howe ver in Sec. 6 we explain why there is no loss of generality). W e name our sources, X and B such that e very mixed signal y i consists of separate sources x i and b i , but no e xamples of such sources are gi ven in the training set. Our objectiv e is to learn separation function T () which separates a mixed signal y into its sources x and b . W e parametrize the separation function by a multiplicativ e mask- ing operation M () as shown in Eq. 1: T ( y ) = y · M ( y ) (1) The separated sources are therefore gi ven by y · M ( y ) and y · (1 − M ( y )) . The masking function is learned as part of training. Our method be gins by sampling tw o mix ed signals, which we will denote y 1 and y 2 (with no loss of generality). W e operate the masking function on each mixture obtaining: f x 1 = T ( y 1 ) e b 1 = y 1 − T ( y 1 ) f x 2 = T ( y 2 ) e b 2 = y 2 − T ( y 2 ) (2) W e make the assumption of independence between the two sources X and B . This assumption is valid for many in- teresting mixtures of signals such as images and reflections or foreground and background noise. W ith this assumption, we can no w synthesize new mixed signals z 1 and z 2 , which are obtained by flipping the source combinations between the two pairs: z 1 = f x 1 + e b 2 z 2 = f x 2 + e b 1 (3) Although the new mix ed signals will be different from y 1 and y 2 , we make the observ ation that their distrib ution should be the same as that of y 1 and y 2 , if the separation works correctly . Therefore to encourage correct separation, we re- quire the distrib ution of Y and Z to be identical. This can be enforced using an adversarial domain confusion constraint. Specifically this works by training a discriminator D () to at- tempt to identify if a specific signal comes from Y or from Z . The discriminator is trained using the follo wing LS-GAN [16] loss function: ar g min D L D = X y ∈Y ( D ( y ) − 1) 2 + X z ∈Z D ( z ) 2 (4) W e co-currently train the masking function M () so that it acts to fool the discriminator by making the mixed signals Z as similar as possible to Y : ar g min M L M = X z ∈Z ( D ( z ) − 1) 2 (5) Where z iterates ov er all z 1 and z 2 . Although perfect separation is one possible solution of the distrib ution matching equation, another acceptable by un- wanted solution is ˜ x = y and ˜ b = 0 . This tri vial solution satisfies the distributional matching perfectly , b ut obviously achiev es no separation. T o combat this tri vial solution, we add another loss term which fa vors solutions that give non- zero weights to the different sources: L E = X y ∈Y ( y · M ( y )) 2 + ( y · (1 − M ( y ))) 2 (6) A further constraint on the separation can be obtained by another application of the separation function of the synthetic mixture signal pair z ! and z 2 . W e perform the same unmixing and remixing operation as performed in the first stage: x 1 = T ( z 1 ) b 2 = z 1 − T ( z 1 ) x 2 = T ( z 2 ) b 1 = z 2 − T ( z 2 ) (7) In this case, we notice that the result should be identical to the original unmixed signals y 1 and y 2 : y 1 = x 1 + b 1 y 2 = x 2 + b 2 (8) W e therefore introduce a ”c ycle” loss term, ensuring that the double application of unmixing and remix operation of a pair of mixed signals reco vers the original signals: L C = X y ∈Y k y , y k (9) T o summarize, our method optimizes the separation func- tion T () (which is implemented using multiplicativ e masking function M () as described in Eq. 1). The loss function to be optimized is the combination of the domain confusion loss L M , the energy equity loss L E and the cycle reconstruction loss L C : ar g min M L T otal = L C + α · L M + β · L E (10) W e also adversarially optimize the discriminator D () as described in Eq. 4. 4. IMPLEMENT A TION W e implemented the masking function M () by an architec- ture that follows DiscoGAN [17] with 64 channels (at the layer before last, each preceding layer ha ving twice the num- ber of channels). The discriminator followed a standard DC- GAN [18] architecture with 64 channels. W e used a learning rate of 0 . 0001 . Optimization was carried out by SGD with the AD AM update rule. W e carried out 4 mask update steps for ev ery D () update. W e used α = 5 for the adversarial loss L M and β = 5 for the energy equity loss L E . 5. EV ALU A TION In this section, we ev aluate the effecti veness of our method for image separation tasks against other state-of-the-art unsu- pervised single channel source separation methods. Datasets: W e use the following image datasets in our ex- periments: MNIST : The MNIST dataset [19] consists of 50000 train- ing and 10000 validation images of hand written digits 0 − 9 . The images are roughly e venly distributed between the dif- ferent classes. The original image resolution is 28 × 28 . In order to use standard generati ve architectures, we pad the im- ages by 2 pixels from each direction to ha ve a size of 32 × 32 . W e split the dataset into two sources: the images of the digits from 0 − 4 and the images of the digits from 5 − 9 . A ran- dom image is sampled from each source, and then combined with equal weights. W e sampled 25 k training mixture images (from the training sets), and 5 k v alidation images from the validation set. Shoes and Bags: The Shoes dataset [20] first collected by Y u and Grauman consists of color images of different types of shoes. W e rescale the image resolution to 64 × 64 . The Handbags dataset [21] collected by Zhu et al. consists of color images of a v ariety of handbags. W e also rescale these images to a resolution of 64 × 64 . The two datasets are often used in image generative modeling tasks. As masking works better when the background has 0 v alue, we run our experiments on the in verted intensity images (i.e. from image I , we use 255 − I ). Our sampling procedure is to randomly sample a shoe image and a handbag image (without replacement) and mix them with equal weights. This is repeated 10 k times to form our training set. W e similarly sample 5 k mixture test images. No source image is repeated between the train and test sets. Methods: Separating two images from arbitrary image classes does not satisfy the requirements for an y of the typical priors as there are no ob vious temporal, sparsity or lo w-rank constraints. W e compare against RPCA [1] which is repre- sentativ e of methods that use strong priors. T o represent de- compositional methods we compare against GLO, a genera- tiv e model (which in [14] was preferable to NMF). T o ha ve an upper bound for the quantitati ve comparison, we also gi ve the Fig. 2 . A Qualitati ve Comparison of MNIST and Shoes/Bags Separation Mix RPCA GLO Ours GT Mix RPCA GLO Ours GT T able 1 . Separation Accuracy (PSNR) D ataset RPCA GLO Ours Sup MNIST 11.5 13.0 20.4 24.4 Shoes and Bags 7.9 12.0 19.0 22.9 fully supervised performance (using the same masking func- tion architecture that we used). W e stress howe ver that our method is fully unsupervised, and we do not expect to do bet- ter than the fully supervised method. Qualitative Results: A qualitativ e comparison is pre- sented in Fig. 2. W e observe that RPCA completely fails on this task, as the sparse/low-rank prior is not suitable for arbitrary images. GLO tended to result in une ven separation - one generator containing a part of one source, while the other generator containing a mixture of the sources. Our method, generally resulted in clean separation of the sources. In highly textured regions, we sometimes saw some ”dripping” of the texture to the other source. Quantitative Results: W e present a quantitati ve compar - ison on MNIST and Shoes/Bags . The metrics are PSNR (in T ab . 1) and SSIM [22] (in T ab.2), which are standard image reconstruction quality metrics. In both cases we can observe that GLO performed much better than RPCA (due to the pri or in RPCA being unsuitable for this more general task). Our method far outperformed both baseline methods, due to our careful separation design. The performance of our method approaches the supervised separation performance, howe ver there still is a significant performance gap due to supervision, which is unsurprising. In ablation experiments, we found that the adv ersarial loss and the ener gy equity loss were essential for the con vergence of our method to the correct solution. The cycle constraint was found to only slightly increase stability of con vergence and did not increase accuracy . Overall, we can conclude that the results v alidate the strong performance of our method for separating image sources. 6. DISCUSSION W e make se veral comments about our work: Priors: Our method was shown to be ef fectiv e at separat- T able 2 . Separation Accuracy (SSIM) D ataset RPCA GLO Ours Sup MNIST 0.36 0.74 0.90 0.96 Shoes and Bags 0.18 0.51 0.73 0.86 ing mixtures of images, using no image specific priors such as repetition, sparsity or lo w-rank. It is therefore potentially extensible to all 2D signals. W e make the general assumption that the distributions of the tw o signals are independent. Spectrograms: Preliminary experiments on spectro- grams were not able to match the success of the method for image separation. W e think that this is due to GAN modeling of images being more dev eloped than that of spectrograms. W e belie ve that with future progress in adversarial architec- ture for spectrograms, our technique will be able to separate audio clips. Multiple Sources: Although the formulation in this work only dealt with 2 sources, it can be applied to a larger num- ber of sources, by a applying the method in a binary tree-like structure (recursiv ely applying our method on each separated ”source” until reaching the leaves - the clean sources). W e note howe ver that the binary tree-like structure will need to hav e a stopping criterion detecting when a clean source has been found (similar to a leaf in a tree). W e lea ve this to future work. 7. CONCLUSION In this paper, we introduced a novel method for the single- channel separation of sources without seeing any clean ex- amples of the indi vidual sources. Previous methods hav e been able to achie ve this either by learning strong priors from clean data or by carefully hand-crafting priors for particular sources. Our method makes very fe w assumptions on the sources, making it applicable to signals for which strong pri- ors are not kno wn. W e demonstrated that our method works well on separating mixtures of images. Future work on ad- versarial training for spectrograms is needed to extend our approach to audio sources. 8. REFERENCES [1] Po-Sen Huang, Scott Deeann Chen, Paris Smaragdis, and Mark Hasegaw a-Johnson, “Singing-voice separa- tion from monaural recordings using robust principal component analysis, ” in ICASSP , 2012. [2] T ak-Shing Chan, Tzu-Chun Y eh, Zhe-Cheng F an, Hung-W ei Chen, Li Su, Y i-Hsuan Y ang, and Roger Jang, “V ocal activity informed singing voice separation with the ikala dataset, ” in ICASSP , 2015. [3] Antoine Liutkus, Derry Fitzgerald, Zafar Rafii, Bryan Pardo, and Laurent Daudet, “K ernel additi ve models for source separation, ” IEEE T ransactions on Signal Pr o- cessing , vol. 62, no. 16, pp. 4298–4310, 2014. [4] Sam T Ro weis, “One microphone source separation, ” in NIPS , 2001. [5] Tuomas V irtanen, “Monaural sound source separation by nonne gativ e matrix factorization with temporal con- tinuity and sparseness criteria, ” T ASLP , 2007. [6] Anat Levin, Assaf Zomet, and Y air W eiss, “Separating reflections from a single image using local features, ” in CVPR , 2014. [7] Po-Sen Huang, Minje Kim, Mark Hase gawa-Johnson, and Paris Smaragdis, “Deep learning for monaural speech separation, ” in ICASSP , 2014. [8] Dong Y u, Morten Kolbæk, Zheng-Hua T an, and Jesper Jensen, “Permutation inv ariant training of deep mod- els for speaker -independent multi-talker speech separa- tion, ” in ICASSP , 2017. [9] Zhixiang Chi, Xiaolin W u, Xiao Shu, and Jinjin Gu, “Single image reflection remo val using deep encoder - decoder network, ” in arXiv pr eprint arXiv:1802.00094 , 2018. [10] Xuaner Zhang, Ren Ng, and Qifeng Chen, “Single im- age reflection separation with perceptual losses, ” in CVPR , 2018. [11] Ian Goodfellow , Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David W arde-Farle y , Sherjil Ozair, Aaron Courville, and Y oshua Bengio, “Generative adversarial nets, ” in NIPS , 2014. [12] Daniel Stoller , Sebastian Ewert, and Simon Dixon, “ Ad- versarial semi-supervised audio source separation ap- plied to singing voice e xtraction, ” in ICASSP , 2018. [13] Cem Subakan and P aris Smaragdis, “Generati ve adver- sarial source separation, ” in ICASSP , 2018. [14] T avi Halperin, Ariel Ephrat, and Y edid Hoshen, “Neural separation of observ ed and unobserved distrib utions, ” in ICLR Submission , 2018. [15] Hsin-Y ing Lee, Hung-Y u Tseng, Jia-Bin Huang, Ma- neesh Singh, and Ming-Hsuan Y ang, “Div erse image- to-image translation via disentangled representations, ” in ECCV , 2018. [16] Xudong Mao, Qing Li, Haoran Xie, Raymond YK Lau, Zhen W ang, and Stephen Paul Smolley , “Least squares generativ e adversarial networks, ” in ICCV , 2017. [17] T aeksoo Kim, Moonsu Cha, Hyunsoo Kim, Jungkwon Lee, and Jiwon Kim, “Learning to discover cross- domain relations with generati ve adversarial networks, ” in ICML , 2017. [18] Alec Radford, Luke Metz, and Soumith Chintala, “Un- supervised representation learning with deep con volu- tional generativ e adversarial networks, ” arXiv pr eprint arXiv:1511.06434 , 2015. [19] Y ann LeCun and Corinna Cortes, “MNIST handwritten digit database, ” 2010. [20] Aron Y u and Kristen Grauman, “Fine-grained visual comparisons with local learning, ” in CVPR , 2014. [21] Jun-Y an Zhu, Philipp Kr ¨ ahenb ¨ uhl, Eli Shechtman, and Alex ei A Efros, “Generativ e visual manipulation on the natural image manifold, ” in ECCV , 2016. [22] Zhou W ang, Eero P Simoncelli, and Alan C Bovik, “Multiscale structural similarity for image quality as- sessment, ” in ACSSC , 2003.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment